The goal is to be able to start recording by typing a command on the command line while the preview is running.

Update: the environment used is GStreamer 1.20.3.

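The current problem is that when recording with jpeg_enc and avi_mux, the entire pipeline has to be reset (NULL -> PLAYING). Looking at it again, I found that when encoding with x264enc and mp4mux the pipeline state does not need a full reset. I suspect this may be an issue with the jpeg_enc element.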

Replace the gst_element_set_state (pipeline, GST_STATE_NULL); call made when recording starts with:

gst_element_set_state (pipeline, GST_STATE_PAUSED);

gst_element_set_state (pipeline, GST_STATE_PLAYING);

Then remove videorate and replace jpeg_enc and avi_mux with x264enc and mp4mux.

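I originally used jpeg_enc because the previous device could not use the mp4mux element. The recording pipeline example given on the official site also had some problems, so I recorded with jpeg_enc instead.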

The code is as follows:

#include <gst/gst.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h>
#include <linux/input.h>
#include <sys/types.h>
#include <sys/stat.h>
#include <fcntl.h>

/* Bus watch: quit the main loop on end-of-stream or on an error. */
static gboolean
bus_call (GstBus * bus, GstMessage * msg, gpointer data)
{
  GMainLoop *loop = (GMainLoop *) data;

  switch (GST_MESSAGE_TYPE (msg)) {
    case GST_MESSAGE_EOS:
      g_print ("End of stream\n");
      g_main_loop_quit (loop);
      break;
    case GST_MESSAGE_ERROR:{
      gchar *debug = NULL;
      GError *error = NULL;

      gst_message_parse_error (msg, &error, &debug);
      g_printerr ("ERROR from element %s: %s\n",
          GST_OBJECT_NAME (msg->src), error->message);
      if (debug)
        g_printerr ("Error details: %s\n", debug);
      g_free (debug);
      g_error_free (error);
      g_main_loop_quit (loop);
      break;
    }
    default:
      break;
  }
  return TRUE;
}

/* Read one line from stdin into input, which must hold at least 128 bytes. */
static void
parse_input (gchar * input)
{
  fflush (stdout);
  memset (input, '\0', 128 * sizeof (*input));
  if (!fgets (input, 128, stdin)) {
    g_print ("Failed to parse input!\n");
    return;
  }
  // Clear trailing whitespace and newline.
  g_strchomp (input);
}

int main(int argc, char *argv[]) {
  GstElement *pipeline, *v4l2_src, *video_convert, *capsfilter, *tee, *disp_queue, *xvimage_sink;
  GstElement *record_queue = NULL, *video_rate = NULL, *jpeg_enc = NULL, *avi_mux = NULL, *file_sink = NULL;
  GstPad *tee_disp_pad, *tee_record_pad = NULL;
  GstPad *queue_record_pad = NULL, *queue_disp_pad;
  GstCaps *filtercaps;
  GstBus *bus = NULL;
  GMainLoop *loop = NULL;
  guint bus_watch_id;
  gchar *input;
  gboolean active = TRUE;
  gboolean recording = FALSE;   // TRUE while the record branch is attached

  // Initialize GStreamer
  gst_init (&argc, &argv);

  // Create the elements
  v4l2_src = gst_element_factory_make ("v4l2src", "v4l2_src");
  video_convert = gst_element_factory_make ("videoconvert", "video_convert");
  capsfilter = gst_element_factory_make ("capsfilter", "capsfilter");
  tee = gst_element_factory_make ("tee", "tee");
  disp_queue = gst_element_factory_make ("queue", "disp_queue");
  xvimage_sink = gst_element_factory_make ("xvimagesink", "xvimage_sink");

  // Create the empty pipeline
  pipeline = gst_pipeline_new ("test-pipeline");

  if (!pipeline || !v4l2_src || !video_convert || !capsfilter || !tee || !disp_queue || !xvimage_sink) {
    g_printerr ("Not all elements could be created.\n");
    return -1;
  }

  // Link all elements that can be automatically linked because they have "Always" pads
  gst_bin_add_many (GST_BIN (pipeline), v4l2_src, video_convert, capsfilter, tee, disp_queue, xvimage_sink, NULL);
  if (gst_element_link_many (v4l2_src, video_convert, capsfilter, tee, NULL) != TRUE ||
      gst_element_link_many (disp_queue, xvimage_sink, NULL) != TRUE) {
    g_printerr ("Elements could not be linked.\n");
    gst_object_unref (pipeline);
    return -1;
  }

  // Manually link the Tee, which has "Request" pads
  tee_disp_pad = gst_element_request_pad_simple (tee, "src_%u");
  // GST_PAD_NAME does not allocate, unlike gst_pad_get_name
  g_print ("Obtained request pad %s for disp branch.\n", GST_PAD_NAME (tee_disp_pad));
  queue_disp_pad = gst_element_get_static_pad (disp_queue, "sink");
  if (gst_pad_link (tee_disp_pad, queue_disp_pad) != GST_PAD_LINK_OK) {
    g_printerr ("Tee could not be linked.\n");
    gst_object_unref (pipeline);
    return -1;
  }

  // Configure elements
  filtercaps = gst_caps_new_simple ("video/x-raw",
      "format", G_TYPE_STRING, "NV12",
      "width", G_TYPE_INT, 640,
      "height", G_TYPE_INT, 480,
      "framerate", GST_TYPE_FRACTION, 30, 1,
      NULL);
  g_object_set (G_OBJECT (capsfilter), "caps", filtercaps, NULL);
  gst_caps_unref (filtercaps);

  g_object_set (G_OBJECT (xvimage_sink), "async", FALSE, "sync", TRUE, NULL);
  g_object_set (G_OBJECT (disp_queue), "max-size-buffers", 0, NULL);
  g_object_set (G_OBJECT (disp_queue), "max-size-time", 0, NULL);
  g_object_set (G_OBJECT (disp_queue), "max-size-bytes", 512000000, NULL);
  gst_object_unref (queue_disp_pad);

  // Start playing the pipeline
  gst_element_set_state (pipeline, GST_STATE_PLAYING);

  loop = g_main_loop_new (NULL, FALSE);
  bus = gst_pipeline_get_bus (GST_PIPELINE (pipeline));
  bus_watch_id = gst_bus_add_watch (bus, bus_call, loop);
  gst_object_unref (bus);

  g_print ("test start \n");
  input = g_new0 (gchar, 128);

  // Interactive loop: "videos" starts recording, "videoq" stops it,
  // anything else leaves the loop.
  while (active) {
    g_print ("input option videos videoq q : \n");
    parse_input (input);

    if (g_str_equal (input, "videos")) {
      if (recording) {
        g_print ("already recording !\n");
        continue;
      }
      g_print ("video start !\n");

      // Reset the whole pipeline so the recording starts from time zero
      gst_element_set_state (pipeline, GST_STATE_NULL);

      record_queue = gst_element_factory_make ("queue", "record_queue");
      video_rate = gst_element_factory_make ("videorate", "video_rate");
      jpeg_enc = gst_element_factory_make ("jpegenc", "jpeg_enc");
      avi_mux = gst_element_factory_make ("avimux", "avi_mux");
      file_sink = gst_element_factory_make ("filesink", "file_sink");
      if (!record_queue || !video_rate || !jpeg_enc || !avi_mux || !file_sink) {
        g_printerr ("Not all elements could be created.\n");
        return -1;
      }

      gst_bin_add_many (GST_BIN (pipeline), record_queue, video_rate, jpeg_enc, avi_mux, file_sink, NULL);
      if (gst_element_link_many (record_queue, video_rate, jpeg_enc, avi_mux, file_sink, NULL) != TRUE) {
        g_printerr ("Elements could not be linked.\n");
        gst_object_unref (pipeline);
        return -1;
      }
      g_object_set (G_OBJECT (file_sink), "location", "video21.avi", NULL);
      g_object_set (G_OBJECT (file_sink), "async", FALSE, "sync", TRUE, NULL);

      gst_element_sync_state_with_parent (record_queue);
      /*
      gst_element_sync_state_with_parent(video_rate);
      gst_element_sync_state_with_parent(jpeg_enc);
      gst_element_sync_state_with_parent(avi_mux);
      gst_element_sync_state_with_parent(file_sink);*/

      // Attach the record branch to a new request pad on the tee
      tee_record_pad = gst_element_request_pad_simple (tee, "src_%u");
      g_print ("Obtained request pad %s for record branch.\n", GST_PAD_NAME (tee_record_pad));
      queue_record_pad = gst_element_get_static_pad (record_queue, "sink");
      g_print ("video link start !\n");
      if (gst_pad_link (tee_record_pad, queue_record_pad) != GST_PAD_LINK_OK) {
        g_printerr ("Tee could not be linked.\n");
        gst_object_unref (pipeline);
        return -1;
      }

      /*gst_element_set_state (record_queue, GST_STATE_PLAYING);
      gst_element_set_state (video_rate, GST_STATE_PLAYING);
      gst_element_set_state (jpeg_enc, GST_STATE_PLAYING);
      gst_element_set_state (avi_mux, GST_STATE_PLAYING);
      gst_element_set_state (file_sink, GST_STATE_PLAYING);*/
      gst_element_set_state (pipeline, GST_STATE_PLAYING);
      recording = TRUE;
    } else if (g_str_equal (input, "videoq")) {
      if (!recording) {
        g_print ("not recording !\n");
        continue;
      }
      g_print ("video quit !\n");

      // Stop the record branch. Note that no EOS is sent into the branch
      // before it is shut down.
      gst_element_set_state (record_queue, GST_STATE_NULL);
      gst_element_set_state (video_rate, GST_STATE_NULL);
      gst_element_set_state (jpeg_enc, GST_STATE_NULL);
      gst_element_set_state (avi_mux, GST_STATE_NULL);
      gst_element_set_state (file_sink, GST_STATE_NULL);

      g_print ("video unlink !\n");
      //appctx->caps_video_pad = gst_element_get_static_pad (appctx->v_capsfilter, "sink");
      if (gst_pad_unlink (tee_record_pad, queue_record_pad) != TRUE) {
        g_printerr ("video could not be unlinked.\n");
        return -1;
      }
      gst_element_release_request_pad (tee, tee_record_pad);
      gst_object_unref (tee_record_pad);
      gst_object_unref (queue_record_pad);

      // Removing the elements from the bin drops the bin's reference to them
      gst_bin_remove (GST_BIN (pipeline), record_queue);
      gst_bin_remove (GST_BIN (pipeline), video_rate);
      gst_bin_remove (GST_BIN (pipeline), jpeg_enc);
      gst_bin_remove (GST_BIN (pipeline), avi_mux);
      gst_bin_remove (GST_BIN (pipeline), file_sink);
      recording = FALSE;
    } else {
      g_print ("quit\n");
      active = FALSE;
    }
  }
  g_free (input);

  // Bus messages are only dispatched from here on; the loop ends on EOS or error
  g_main_loop_run (loop);

  // Clean up
  gst_element_release_request_pad (tee, tee_disp_pad);
  gst_object_unref (tee_disp_pad);
  gst_element_set_state (pipeline, GST_STATE_NULL);
  gst_object_unref (pipeline);
  g_source_remove (bus_watch_id);
  g_main_loop_unref (loop);
  return 0;
}

Build command: gcc main.c -o main `pkg-config --cflags --libs gstreamer-1.0`

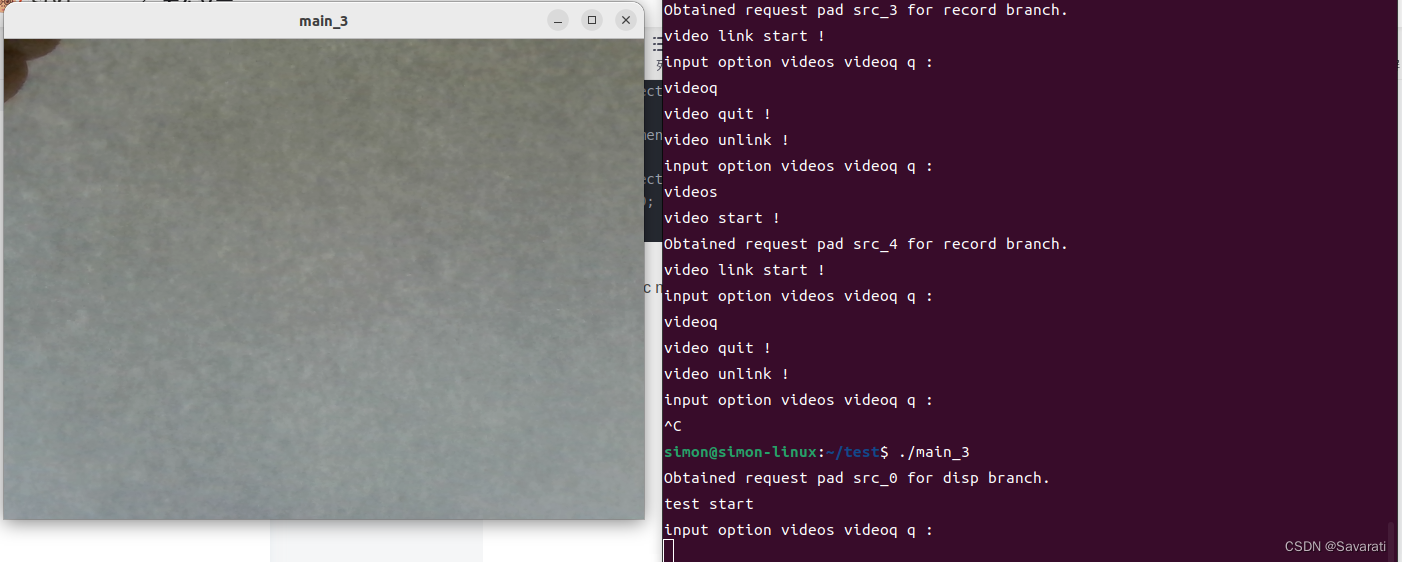

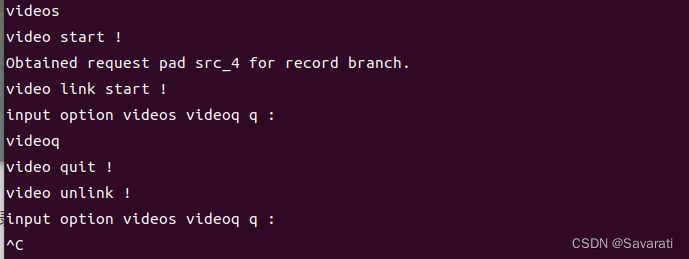

Result:

Run the executable.

Type videos to start recording and videoq to stop recording.
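The two commands can also be mapped onto a small dispatch function, which keeps the string comparisons out of the recording logic. The following is only a minimal sketch in plain C, not the code used in this post: `RecordCommand`, `chomp` and `parse_command` are hypothetical names, and any input other than videos or videoq is treated as a request to quit, matching the loop in main.

```c
#include <string.h>

/* Hypothetical command type; not part of the original program. */
typedef enum {
  CMD_START_RECORD,   /* "videos" */
  CMD_STOP_RECORD,    /* "videoq" */
  CMD_QUIT            /* anything else, as in the original loop */
} RecordCommand;

/* Strip trailing newline, carriage return, space and tab in place. */
void chomp(char *s)
{
  size_t n = strlen(s);
  while (n > 0 && (s[n - 1] == '\n' || s[n - 1] == '\r' ||
                   s[n - 1] == ' '  || s[n - 1] == '\t')) {
    s[--n] = '\0';
  }
}

/* Map one input line to a command. The line is modified in place. */
RecordCommand parse_command(char *line)
{
  chomp(line);
  if (strcmp(line, "videos") == 0)
    return CMD_START_RECORD;
  if (strcmp(line, "videoq") == 0)
    return CMD_STOP_RECORD;
  return CMD_QUIT;
}
```

With a function like this, the loop in main could switch on the returned value instead of chaining g_str_equal calls.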

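When the record command runs, the preview stutters for a moment. This is because the pipeline is restarted so that recording can begin; otherwise the duration of the recording would be the total running time of the pipeline. The recording would then appear to start not from the moment the record command was entered but from the moment the executable was launched, so for now the pipeline is simply restarted.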
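The duration problem comes down to simple timestamp arithmetic. If frames are stamped with the time elapsed since the pipeline started, a branch attached at time t0 sees its first frame at roughly t0 rather than 0. Restarting the pipeline resets that clock. The alternative would be to subtract t0 from every timestamp. The sketch below shows only that arithmetic in plain C. It is an illustration under stated assumptions: `rebase_pts_ns` is a hypothetical helper, timestamps are assumed to be in nanoseconds, and this post does not apply it to the real pipeline.

```c
#include <stdint.h>

#define NS_PER_SEC UINT64_C(1000000000)

/* Shift a timestamp so that the moment recording started becomes zero.
 * Frames captured before the start are clamped to 0. */
uint64_t rebase_pts_ns(uint64_t pts_ns, uint64_t record_start_ns)
{
  return pts_ns > record_start_ns ? pts_ns - record_start_ns : 0;
}
```

For example, if recording starts 10 s after launch, a frame stamped at 12 s would be written at 2 s, so the file would begin at the record command instead of at program start.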
