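Main steps of multi-object tracking

1. Read the raw video frames.

2. Run an object detector on each frame (YOLOv5 in this project).

3. Extract an appearance feature from each detected box, typically with a ReID model.

4. Compute how well the targets in consecutive frames match (using the Hungarian algorithm and cascade matching), and assign an ID to each tracked target.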

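What is ReID

Person re-identification (ReID) uses computer vision to decide whether a specific person appears in an image or a video sequence. It is widely regarded as a sub-problem of image retrieval: given an image of a person from one surveillance camera, retrieve images of the same person captured by other devices. In tracking, this amounts to checking whether a person detected in the next frame is the same person seen in the previous frame. In this project we train a ReID model that extracts a 128-dimensional feature vector from each YOLOv5 detection; these vectors are then compared with the features stored for each track.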

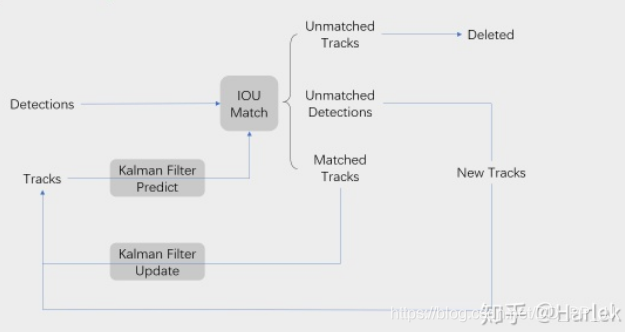

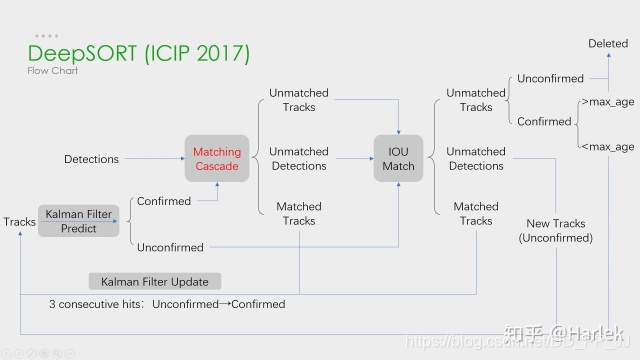

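I. SORT and DeepSORT

DeepSORT builds on SORT and adds the "deep" part, that is, deep learning. The main additions are the matching cascade (tracks with the fewest missed frames are matched first) and the confirmation step for new tracks; the rest is largely the same structure as SORT. Because DeepSORT also matches on appearance features, it is much less likely than SORT to lose a target's identity when the target is occluded.

SORT itself uses no ReID or other deep-learning features.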

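II. Key components

1. Hungarian matching (KM algorithm)

In plain terms: the Hungarian algorithm tells us whether a target in the current frame is the same as a target in the previous frame.

It does this by solving an assignment problem over a cost matrix.

Given a cost matrix, the question is how to reduce it. The reduction relies on one principle: if the same number is added to or subtracted from every element of one row or one column, an optimal assignment of the new cost matrix is still an optimal assignment of the original cost matrix.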

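Algorithm steps (for an N×N cost matrix):

1. For each row, subtract the smallest element of that row.

2. For each column, subtract the smallest element of that column.

3. Cover all zeros in the matrix with the minimum number of horizontal or vertical lines.

4. If the number of lines equals N, an optimal assignment has been found and the algorithm ends; otherwise go to step 5.

5. Find the smallest element that is not covered by any line, subtract it from every uncovered row, add it to every covered column, and return to step 3.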
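To make the assignment problem concrete, here is a minimal sketch that solves a small 3×3 cost matrix with SciPy's `linear_sum_assignment` and checks the row-reduction principle described above. The cost values are made up for illustration, and SciPy's solver is used as a stand-in for a hand-written Hungarian implementation.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment

# Hypothetical cost matrix: rows are tracks, columns are detections.
cost = np.array([
    [4.0, 1.0, 3.0],
    [2.0, 0.0, 5.0],
    [3.0, 2.0, 2.0],
])

# Solve the assignment problem (minimum total cost).
rows, cols = linear_sum_assignment(cost)
pairs = list(zip(rows.tolist(), cols.tolist()))
total = float(cost[rows, cols].sum())

# Step 1 of the algorithm: subtract each row's minimum.
# The optimal assignment must not change.
reduced = cost - cost.min(axis=1, keepdims=True)
rows_reduced, cols_reduced = linear_sum_assignment(reduced)

print(pairs, total)            # [(0, 1), (1, 0), (2, 2)] 5.0
print(cols_reduced.tolist())   # [1, 0, 2]
```

The greedy choice (track 1 takes detection 1 because its cost is 0) would force a more expensive pairing for the other tracks; the solver instead minimizes the total.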

Code:

```python
import numpy as np
# Assumption: `linear_assignment` is SciPy's Hungarian solver, which matches
# the two-array return value used below.
from scipy.optimize import linear_sum_assignment as linear_assignment


def min_cost_matching(
        distance_metric, max_distance, tracks, detections, track_indices=None,
        detection_indices=None):
    """Solve linear assignment problem.

    Parameters
    ----------
    distance_metric : Callable[[List[Track], List[Detection], List[int], List[int]], ndarray]
        The distance metric is given a list of tracks and detections as well as
        a list of N track indices and M detection indices. The metric should
        return the NxM dimensional cost matrix, where element (i, j) is the
        association cost between the i-th track in the given track indices and
        the j-th detection in the given detection_indices.
    max_distance : float
        Gating threshold. Associations with cost larger than this value are
        disregarded.
    tracks : List[track.Track]
        A list of predicted tracks at the current time step.
    detections : List[detection.Detection]
        A list of detections at the current time step.
    track_indices : List[int]
        List of track indices that maps rows in `cost_matrix` to tracks in
        `tracks` (see description above).
    detection_indices : List[int]
        List of detection indices that maps columns in `cost_matrix` to
        detections in `detections` (see description above).

    Returns
    -------
    (List[(int, int)], List[int], List[int])
        Returns a tuple with the following three entries:
        * A list of matched track and detection indices.
        * A list of unmatched track indices.
        * A list of unmatched detection indices.

    """
    if track_indices is None:
        track_indices = np.arange(len(tracks))
    if detection_indices is None:
        detection_indices = np.arange(len(detections))

    if len(detection_indices) == 0 or len(track_indices) == 0:
        return [], track_indices, detection_indices  # Nothing to match.

    # Build the cost matrix.
    cost_matrix = distance_metric(
        tracks, detections, track_indices, detection_indices)
    # Clamp every cost above the gate to just over the gate.
    cost_matrix[cost_matrix > max_distance] = max_distance + 1e-5

    # Run the Hungarian algorithm. Row indices refer to tracks,
    # column indices refer to detections.
    row_indices, col_indices = linear_assignment(cost_matrix)

    matches, unmatched_tracks, unmatched_detections = [], [], []
    # Collect the detections that were not assigned.
    for col, detection_idx in enumerate(detection_indices):
        if col not in col_indices:
            unmatched_detections.append(detection_idx)
    # Collect the tracks that were not assigned.
    for row, track_idx in enumerate(track_indices):
        if row not in row_indices:
            unmatched_tracks.append(track_idx)
    # Walk through the assigned (track, detection) pairs.
    for row, col in zip(row_indices, col_indices):
        track_idx = track_indices[row]
        detection_idx = detection_indices[col]
        # A pair whose cost exceeds max_distance also counts as unmatched.
        if cost_matrix[row, col] > max_distance:
            unmatched_tracks.append(track_idx)
            unmatched_detections.append(detection_idx)
        else:
            matches.append((track_idx, detection_idx))
    return matches, unmatched_tracks, unmatched_detections
```

2. Kalman filter

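In tracking, two quantities describe the state of each track:

- Mean: the target's position information, made up of the bbox center (cx, cy), the aspect ratio r, the height h, and the velocity of each of these. It is the 8-dimensional vector x = [cx, cy, r, h, vx, vy, vr, vh]; the velocities are initialized to 0.

- Covariance: the uncertainty of that state, an 8x8 matrix that is initialized as a diagonal matrix. Larger entries mean larger uncertainty; the initial values are a design choice.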
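As a rough illustration of the two quantities above, the sketch below builds an initial mean and covariance from a `(top, left, width, height)` box. The function name `initial_state` and the standard deviations `pos_std` and `vel_std` are hypothetical values chosen for the example; they are not the values used in the DeepSORT source.

```python
import numpy as np


def initial_state(tlwh, pos_std=1.0, vel_std=10.0):
    """Build an 8-dim mean and an 8x8 diagonal covariance (illustrative only)."""
    x, y, w, h = map(float, tlwh)
    # Measurement in (cx, cy, r, h) form, where r = w / h.
    measurement = np.array([x + w / 2.0, y + h / 2.0, w / h, h])
    # Velocities are unknown at creation time, so they start at 0.
    mean = np.r_[measurement, np.zeros(4)]
    # Hypothetical uncertainties: velocities are less certain than positions.
    std = np.r_[np.full(4, pos_std), np.full(4, vel_std)]
    covariance = np.diag(np.square(std))
    return mean, covariance


mean, covariance = initial_state([10, 20, 50, 100])
print(mean)              # [ 35.   70.    0.5 100.    0.    0.    0.    0. ]
print(covariance.shape)  # (8, 8)
```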

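The Kalman filter works in two phases: (1) predict the position of the track at the next time step, and (2) update the predicted position using the detection.

(1) Prediction

Predict the state of the track at time t from its state at time t-1.

$$x' = F x \tag{1}$$

$$P' = F P F^T + Q \tag{2}$$

In equation 1, x is the mean of the track at time t-1 and F is the state transition matrix; the equation predicts the mean x' at time t.

$$F = \begin{bmatrix} I_4 & dt \cdot I_4 \\ 0 & I_4 \end{bmatrix}$$

dt in F is the time difference between the current frame and the previous frame. Expanding the matrix product on the right-hand side gives cx' = cx + dt*vx, cy' = cy + dt*vy, and so on, so the Kalman filter used here is a constant velocity model.

In equation 2, P is the covariance of the track at time t-1 and Q is the process noise covariance, which expresses how much the motion model is trusted and is usually initialized with small values. The equation predicts the covariance P' at time t.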
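The prediction step can be tried out on small numbers. This is a minimal sketch assuming dt = 1, an identity covariance, and a made-up noise matrix Q; the helper name `make_transition` is hypothetical.

```python
import numpy as np


def make_transition(ndim=4, dt=1.0):
    """Constant-velocity transition matrix F = [[I, dt*I], [0, I]]."""
    F = np.eye(2 * ndim)
    for i in range(ndim):
        F[i, ndim + i] = dt
    return F


F = make_transition()
# State: [cx, cy, r, h, vx, vy, vr, vh]
x = np.array([35.0, 70.0, 0.5, 100.0, 2.0, -1.0, 0.0, 0.5])
P = np.eye(8)            # illustrative covariance
Q = 0.01 * np.eye(8)     # illustrative process noise

x_pred = F @ x                # x' = Fx
P_pred = F @ P @ F.T + Q      # P' = FPF^T + Q

print(x_pred)  # [ 37.   69.    0.5 100.5   2.   -1.    0.    0.5]
```

Each position component moves by its velocity, while the uncertainty of the positions grows because the uncertainty of the velocities is added to it.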

Code:

```python
# Method of the KalmanFilter class.
def predict(self, mean, covariance):
    """Run Kalman filter prediction step.

    Parameters
    ----------
    mean : ndarray
        The 8 dimensional mean vector of the object state at the previous
        time step.
    covariance : ndarray
        The 8x8 dimensional covariance matrix of the object state at the
        previous time step.

    Returns
    -------
    (ndarray, ndarray)
        Returns the mean vector and covariance matrix of the predicted
        state. Unobserved velocities are initialized to 0 mean.

    """
    # The filter predicts from the mean and covariance of the previous step.
    std_pos = [
        self._std_weight_position * mean[3],
        self._std_weight_position * mean[3],
        1e-2,
        self._std_weight_position * mean[3]]
    std_vel = [
        self._std_weight_velocity * mean[3],
        self._std_weight_velocity * mean[3],
        1e-5,
        self._std_weight_velocity * mean[3]]

    # Build the process noise covariance Q. np.r_ concatenates the two
    # lists into one 8-element vector of standard deviations.
    motion_cov = np.diag(np.square(np.r_[std_pos, std_vel]))

    # x' = Fx  (1)
    # x is the mean of the track at time t-1; self._motion_mat is the
    # state transition matrix F applied to it.
    mean = np.dot(self._motion_mat, mean)

    # P' = FPF^T + Q  (2)
    # covariance is P, the posterior error covariance of the previous step,
    # which measures how accurate the estimate is.
    covariance = np.linalg.multi_dot((
        self._motion_mat, covariance, self._motion_mat.T)) + motion_cov

    return mean, covariance
```

(2) Update

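Using the detection observed at time t, correct the state of the track associated with it to get a more accurate estimate.

$$y = z - H x' \tag{3}$$

$$S = H P' H^T + R \tag{4}$$

$$K = P' H^T S^{-1} \tag{5}$$

$$x = x' + K y \tag{6}$$

$$P = (I - K H) P' \tag{7}$$

In equation 3, z is the measurement vector of the detection. It contains no velocities, i.e. z = [cx, cy, r, h]. H is the measurement matrix, which maps the track mean x' into detection space. The equation computes the error (innovation) between the detection and the predicted track mean.

In equation 4, R is the noise matrix of the detector, a 4x4 diagonal matrix whose diagonal holds the noise of the two center coordinates, the aspect ratio, and the height. Its values are a design choice; the size-related noise is usually set larger than the center noise. The equation first maps the covariance P' into detection space and then adds R.

Equation 5 computes the Kalman gain K, which weights how strongly the innovation y corrects the prediction.

Equations 6 and 7 give the updated mean vector x and covariance matrix P.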
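The five update equations can be followed step by step in a short sketch. All numbers below (predicted state, covariance, noise R, measurement z) are made up for illustration, and the explicit inverse is used only for readability.

```python
import numpy as np

# H picks the first four state components (cx, cy, r, h).
H = np.hstack([np.eye(4), np.zeros((4, 4))])

x_pred = np.array([37.0, 69.0, 0.5, 100.5, 2.0, -1.0, 0.0, 0.5])
P_pred = np.eye(8)                       # illustrative predicted covariance
R = 0.25 * np.eye(4)                     # illustrative detector noise
z = np.array([38.0, 68.0, 0.5, 101.0])   # detection in (cx, cy, r, h)

y = z - H @ x_pred                       # (3) innovation
S = H @ P_pred @ H.T + R                 # (4) innovation covariance
K = P_pred @ H.T @ np.linalg.inv(S)      # (5) Kalman gain
x_new = x_pred + K @ y                   # (6) updated mean
P_new = (np.eye(8) - K @ H) @ P_pred     # (7) updated covariance

# Algebraically equivalent form of (7): P' - K S K^T.
P_alt = P_pred - K @ S @ K.T

print(x_new)                       # [ 37.8  68.2   0.5 100.9   2.   -1.    0.    0.5]
print(np.allclose(P_new, P_alt))   # True
```

With these numbers the gain is 0.8 on the observed components, so the estimate moves 80% of the way from the prediction toward the measurement.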

Source code:

```python
import numpy as np
import scipy.linalg


# Methods of the KalmanFilter class.
def project(self, mean, covariance):
    """Project state distribution to measurement space.

    Parameters
    ----------
    mean : ndarray
        The state's mean vector (8 dimensional array).
    covariance : ndarray
        The state's covariance matrix (8x8 dimensional).

    Returns
    -------
    (ndarray, ndarray)
        Returns the projected mean and covariance matrix of the given state
        estimate.

    """
    # R in equation 4 is the noise matrix of the detector, a 4x4 diagonal
    # matrix holding the noise of the center coordinates, the aspect ratio
    # and the height.
    std = [
        self._std_weight_position * mean[3],
        self._std_weight_position * mean[3],
        1e-1,
        self._std_weight_position * mean[3]]
    # R: covariance of the measurement noise.
    innovation_cov = np.diag(np.square(std))

    # Map the mean into detection space: Hx'
    mean = np.dot(self._update_mat, mean)
    # Map the covariance into detection space: HP'H^T
    covariance = np.linalg.multi_dot((
        self._update_mat, covariance, self._update_mat.T))
    return mean, covariance + innovation_cov  # equation (4)


def update(self, mean, covariance, measurement):
    """Run Kalman filter correction step.

    Combines the prediction and the observation into the latest estimate.

    Parameters
    ----------
    mean : ndarray
        The predicted state's mean vector (8 dimensional).
    covariance : ndarray
        The state's covariance matrix (8x8 dimensional).
    measurement : ndarray
        The 4 dimensional measurement vector (x, y, a, h), where (x, y)
        is the center position, a the aspect ratio, and h the height of the
        bounding box.

    Returns
    -------
    (ndarray, ndarray)
        Returns the measurement-corrected state distribution.

    """
    # Map the mean and covariance into detection space to get Hx' and S.
    projected_mean, projected_cov = self.project(mean, covariance)

    # Cholesky factorization of S.
    chol_factor, lower = scipy.linalg.cho_factor(
        projected_cov, lower=True, check_finite=False)
    # Kalman gain K, equation (5). The right-hand side of equation (5)
    # contains the inverse of S. Instead of inverting S explicitly, both
    # sides are multiplied by S, which gives K S = P'H^T, a linear system
    # of the form AX = B that is solved with the Cholesky factor.
    kalman_gain = scipy.linalg.cho_solve(
        (chol_factor, lower), np.dot(covariance, self._update_mat.T).T,
        check_finite=False).T

    # y = z - Hx'  (3)
    # z is the measurement of the detection, without velocities:
    # z = [cx, cy, r, h].
    innovation = measurement - projected_mean

    # Updated mean x = x' + Ky  (6)
    new_mean = mean + np.dot(innovation, kalman_gain.T)
    # Updated covariance P = (I - KH)P'  (7),
    # computed here in the equivalent form P' - K S K^T.
    new_covariance = covariance - np.linalg.multi_dot((
        kalman_gain, projected_cov, kalman_gain.T))
    return new_mean, new_covariance
```

Reference: 目标跟踪初探(DeepSORT) - 知乎 (zhihu.com)

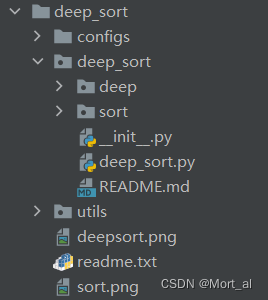

III. Source code walkthrough of the deep_sort module

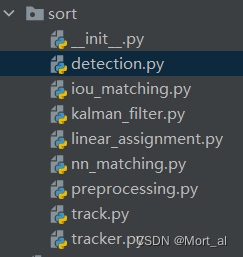

1. SORT source code analysis

(1) detection.py stores the bounding boxes produced by the object detector

```python
import numpy as np


class Detection(object):

    def __init__(self, tlwh, confidence, feature):
        # np.float was removed in NumPy 1.24; the builtin float is equivalent.
        self.tlwh = np.asarray(tlwh, dtype=float)
        self.confidence = float(confidence)
        self.feature = np.asarray(feature, dtype=np.float32)

    def to_tlbr(self):
        """Convert bounding box to format `(min x, min y, max x, max y)`, i.e.,
        `(top left, bottom right)`.
        """
        ret = self.tlwh.copy()
        ret[2:] += ret[:2]
        return ret

    def to_xyah(self):
        """Convert bounding box to format `(center x, center y, aspect ratio,
        height)`, where the aspect ratio is `width / height`.
        """
        ret = self.tlwh.copy()
        ret[:2] += ret[2:] / 2
        ret[2] /= ret[3]
        return ret
```

- tlwh: top-left corner + width and height

- tlbr: top-left corner + bottom-right corner

- xyah: center + aspect ratio + height
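A quick numeric check of the three box formats may help. The sketch below re-implements the two conversions as standalone functions (the function names are chosen for this example) and applies them to one made-up box.

```python
import numpy as np


def tlwh_to_tlbr(tlwh):
    """(left, top, width, height) -> (min x, min y, max x, max y)."""
    box = np.asarray(tlwh, dtype=float).copy()
    box[2:] += box[:2]
    return box


def tlwh_to_xyah(tlwh):
    """(left, top, width, height) -> (center x, center y, width/height, height)."""
    box = np.asarray(tlwh, dtype=float).copy()
    box[:2] += box[2:] / 2
    box[2] /= box[3]
    return box


tlwh = [10, 20, 50, 100]
tlbr = tlwh_to_tlbr(tlwh)
xyah = tlwh_to_xyah(tlwh)
print(tlbr)  # [ 10.  20.  60. 120.]
print(xyah)  # [ 35.   70.    0.5 100. ]
```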

(2) The Track class

```python
# TrackState is the Tentative / Confirmed / Deleted enumeration defined
# in the same module.
class Track:
    """
    A single target track with state space `(x, y, a, h)` and associated
    velocities, where `(x, y)` is the center of the bounding box, `a` is the
    aspect ratio and `h` is the height.

    Parameters
    ----------
    mean : ndarray
        Mean vector of the initial state distribution.
    covariance : ndarray
        Covariance matrix of the initial state distribution.
    track_id : int
        A unique track identifier.
    n_init : int
        Number of consecutive detections before the track is confirmed. The
        track state is set to `Deleted` if a miss occurs within the first
        `n_init` frames.
    max_age : int
        The maximum number of consecutive misses before the track state is
        set to `Deleted`; in other words, the lifetime of a track.
    feature : Optional[ndarray]
        Feature vector of the detection this track originates from. If not None,
        this feature is added to the `features` cache.

    Attributes
    ----------
    mean : ndarray
        Mean vector of the initial state distribution.
    covariance : ndarray
        Covariance matrix of the initial state distribution.
    track_id : int
        A unique track identifier.
    hits : int
        Total number of measurement updates.
    age : int
        Total number of frames since first occurrence.
    time_since_update : int
        Total number of frames since last measurement update.
    state : TrackState
        The current track state.
    features : List[ndarray]
        A cache of features. On each measurement update, the associated feature
        vector is added to this list.

    """

    def __init__(self, mean, covariance, track_id, n_init, max_age,
                 feature=None):
        self.mean = mean
        self.covariance = covariance
        self.track_id = track_id
        # hits counts successful matches; once it reaches n_init the track
        # becomes Confirmed. It is incremented on every call to update().
        self.hits = 1
        # Overlaps with time_since_update: both are incremented in predict().
        self.age = 1
        # Incremented on every predict(), reset to 0 on every update().
        self.time_since_update = 0

        # A new track always starts in the Tentative state.
        self.state = TrackState.Tentative
        # Each track holds several features; every update appends the
        # newest feature to this list.
        self.features = []
        if feature is not None:
            self.features.append(feature)

        self._n_init = n_init
        self._max_age = max_age
```

state is the state of the track. DeepSORT has three states: confirmed, unconfirmed (tentative), and deleted.

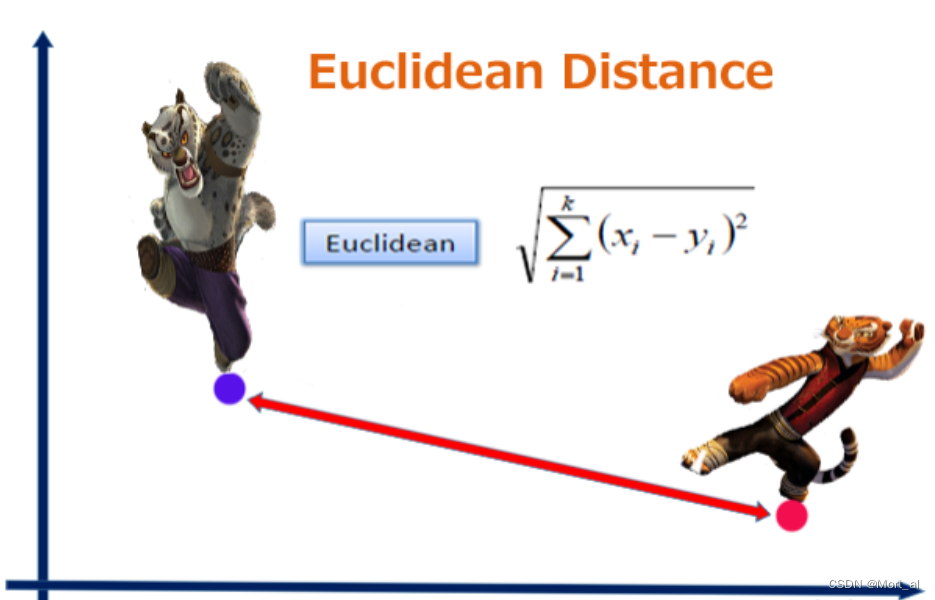
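The state transitions can be summarized in a few lines. This is a simplified sketch of the life cycle, not the full Track class: the class name `MiniTrack` is hypothetical, the Kalman filter and features are left out, and the thresholds are small so the behavior is easy to follow.

```python
from enum import Enum


class TrackState(Enum):
    Tentative = 1
    Confirmed = 2
    Deleted = 3


class MiniTrack:
    """Only the counters and state transitions of a track."""

    def __init__(self, n_init=3, max_age=70):
        self.state = TrackState.Tentative
        self.hits = 1
        self.time_since_update = 0
        self._n_init = n_init
        self._max_age = max_age

    def predict(self):
        # Called once per frame, before matching.
        self.time_since_update += 1

    def update(self):
        # Called when the track was matched to a detection.
        self.hits += 1
        self.time_since_update = 0
        if self.state == TrackState.Tentative and self.hits >= self._n_init:
            self.state = TrackState.Confirmed

    def mark_missed(self):
        # Called when the track was not matched in this frame.
        if self.state == TrackState.Tentative:
            self.state = TrackState.Deleted
        elif self.time_since_update > self._max_age:
            self.state = TrackState.Deleted


track = MiniTrack(n_init=3, max_age=2)
for _ in range(2):              # two more matched frames -> hits == 3
    track.predict()
    track.update()
state_after_hits = track.state  # TrackState.Confirmed

for _ in range(3):              # three missed frames in a row
    track.predict()
    track.mark_missed()
state_after_misses = track.state  # TrackState.Deleted
```

A tentative track is dropped on its first miss, while a confirmed track survives up to `max_age` consecutive misses.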

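(3) Computing the Euclidean distance

What is the Euclidean distance?

It is the ordinary straight-line distance between two points in m-dimensional space.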
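Pairwise squared distances can be computed without an explicit loop by expanding ||a - b||² = ||a||² - 2 a·b + ||b||². The sketch below checks this expansion against a brute-force computation on made-up points.

```python
import numpy as np

a = np.array([[0.0, 0.0], [1.0, 1.0]])               # N = 2 points
b = np.array([[3.0, 4.0], [1.0, 2.0], [0.0, 0.0]])   # L = 3 points

# Vectorized form: ||a||^2 - 2 a.b + ||b||^2, broadcast to an N x L matrix.
a2 = np.square(a).sum(axis=1)
b2 = np.square(b).sum(axis=1)
fast = a2[:, None] - 2.0 * a @ b.T + b2[None, :]

# Brute-force reference: squared distance for every pair.
brute = np.array([[np.sum((p - q) ** 2) for q in b] for p in a])

print(brute)
# [[25.  5.  0.]
#  [13.  1.  2.]]
print(np.allclose(fast, brute))  # True
```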

Code:

```python
import numpy as np


def _pdist(a, b):
    """Compute pair-wise squared distance between points in `a` and `b`.

    Parameters
    ----------
    a : array_like
        An NxM matrix of N samples of dimensionality M.
    b : array_like
        An LxM matrix of L samples of dimensionality M.

    Returns
    -------
    ndarray
        Returns a matrix of size len(a), len(b) such that element (i, j)
        contains the squared distance between `a[i]` and `b[j]`.

    Reference: https://blog.csdn.net/frankzd/article/details/80251042

    """
    a, b = np.asarray(a), np.asarray(b)
    if len(a) == 0 or len(b) == 0:
        return np.zeros((len(a), len(b)))
    a2, b2 = np.square(a).sum(axis=1), np.square(b).sum(axis=1)
    r2 = -2. * np.dot(a, b.T) + a2[:, None] + b2[None, :]
    # Rounding can produce tiny negative values; clip them to 0.
    r2 = np.clip(r2, 0., float(np.inf))
    return r2
```

(4) Computing the cosine distance

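What is the cosine distance?

It uses the cosine of the angle between two vectors as a measure of how different two samples are. To decide whether two vectors point in the same direction, the cosine of their angle is computed from their normalized dot product.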
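A small sketch shows the range of values. The function name `cosine_distance` and the vectors are chosen for this example; the distance is defined as 1 minus the cosine similarity.

```python
import numpy as np


def cosine_distance(u, v):
    """1 - cos(angle between u and v)."""
    u = np.asarray(u, dtype=float)
    v = np.asarray(v, dtype=float)
    return 1.0 - float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))


same_direction = cosine_distance([1, 0], [3, 0])    # 0.0, length does not matter
orthogonal = cosine_distance([1, 0], [0, 2])        # 1.0
opposite = cosine_distance([1, 0], [-2, 0])         # 2.0
```

Only the direction of the vectors matters, which is why feature vectors are normalized before they are compared.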

Code:

```python
import numpy as np


def _cosine_distance(a, b, data_is_normalized=False):
    """Compute pair-wise cosine distance between points in `a` and `b`.

    Parameters
    ----------
    a : array_like
        An NxM matrix of N samples of dimensionality M.
    b : array_like
        An LxM matrix of L samples of dimensionality M.
    data_is_normalized : Optional[bool]
        If True, assumes rows in a and b are unit length vectors.
        Otherwise, a and b are explicitly normalized to length 1.

    Returns
    -------
    ndarray
        Returns a matrix of size len(a), len(b) such that element (i, j)
        contains the cosine distance between `a[i]` and `b[j]`.

    Reference: https://blog.csdn.net/u013749540/article/details/51813922

    """
    if not data_is_normalized:
        # np.linalg.norm computes the vector norm; the default is the L2 norm.
        a = np.asarray(a) / np.linalg.norm(a, axis=1, keepdims=True)
        b = np.asarray(b) / np.linalg.norm(b, axis=1, keepdims=True)
    return 1. - np.dot(a, b.T)  # cosine distance = 1 - cosine similarity
```

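(5) The nearest-neighbor distance metric class

For each target, this metric returns the nearest-neighbor distance, i.e. the smallest distance to any sample of that target observed so far.

It is built on the Euclidean and cosine distances defined above.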
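The idea of a nearest-neighbor distance can be sketched as follows: each query feature is compared with every stored feature of one target, and only the smallest distance is kept. The function name `nn_cosine_distance` and the 2-dimensional features are illustrative assumptions.

```python
import numpy as np


def nn_cosine_distance(gallery, queries):
    """Smallest cosine distance from each query to any gallery sample."""
    g = np.asarray(gallery, dtype=float)
    q = np.asarray(queries, dtype=float)
    g = g / np.linalg.norm(g, axis=1, keepdims=True)
    q = q / np.linalg.norm(q, axis=1, keepdims=True)
    distances = 1.0 - g @ q.T          # shape: (num_gallery, num_queries)
    return distances.min(axis=0)       # best gallery sample per query


# Two stored features of one track, three new detections.
gallery = [[1.0, 0.0], [0.0, 1.0]]
queries = [[2.0, 0.0], [1.0, 1.0], [-1.0, 0.0]]
nearest = nn_cosine_distance(gallery, queries)
print(nearest)  # approximately [0.     0.2929 1.    ]
```

Keeping several features per track makes the match robust to appearance changes: a detection only needs to resemble one of the stored views.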

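```python
import numpy as np


# _nn_euclidean_distance and _nn_cosine_distance are defined in the same
# module, on top of _pdist and _cosine_distance.
class NearestNeighborDistanceMetric(object):
    # For each target, returns the distance to its nearest stored sample.

    def __init__(self, metric, matching_threshold, budget=None):
        # Defaults used in this project: matching_threshold = 0.2, budget = 100
        if metric == "euclidean":
            # Nearest-neighbor Euclidean distance
            self._metric = _nn_euclidean_distance
        elif metric == "cosine":
            # Nearest-neighbor cosine distance
            self._metric = _nn_cosine_distance
        else:
            raise ValueError("Invalid metric; must be either 'euclidean' or 'cosine'")
        # Used as the threshold in the matching cascade.
        self.matching_threshold = matching_threshold
        # budget limits how many features are kept per target.
        self.budget = budget
        # samples is a dict {track id -> list of features}
        self.samples = {}

    def partial_fit(self, features, targets, active_targets):
        # Purpose: partial fit, update the stored samples with new data.
        # Called from tracker.update(), in the feature-set update step.
        for feature, target in zip(features, targets):
            # Append the new feature to the list of its target.
            self.samples.setdefault(target, []).append(feature)
            if self.budget is not None:
                # Keep only the most recent `budget` features per target;
                # older ones are dropped.
                self.samples[target] = self.samples[target][-self.budget:]
        # Keep only the targets that are still active.
        self.samples = {k: self.samples[k] for k in active_targets}

    def distance(self, features, targets):
        # Purpose: compare features with targets and return a cost matrix.
        # Called in the matching stage, where distance is wrapped in
        # gated_metric to combine appearance information (deep ReID
        # features) with motion information (Mahalanobis distance, which
        # measures how far a detection is from the predicted distribution).
        cost_matrix = np.zeros((len(targets), len(features)))
        for i, target in enumerate(targets):
            cost_matrix[i, :] = self._metric(self.samples[target], features)
        return cost_matrix
```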

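(6) The Tracker class

Tracker manages multiple tracks. It handles the Kalman filter prediction and update, the Hungarian matching, the IoU matching, and the other main tasks.

```python
class Tracker:
    # A multi-target tracker that stores many tracks.
    # It calls the Kalman filter to predict the new state of each track,
    # performs the matching, and initializes tracks on the first frame.
    # When Tracker calls update or predict, each track also calls its own
    # update or predict.
    """
    This is the multi-target tracker.
    """

    def __init__(self, metric, max_iou_distance=0.7, max_age=70, n_init=3):
        # In this project all parameters after metric keep their defaults.
        # metric is an object that computes distances (cosine or Euclidean).
        self.metric = metric
        # Maximum IoU distance, used as the gate in IoU matching.
        self.max_iou_distance = max_iou_distance
        # Maximum number of misses before a track is deleted; also passed
        # directly as the cascade_depth of the matching cascade.
        self.max_age = max_age
        # A track needs n_init updates before it is set to confirmed.
        self.n_init = n_init

        self.kf = kalman_filter.KalmanFilter()  # Kalman filter
        self.tracks = []   # list of tracks
        self._next_id = 1  # id assigned to the next new track

    def predict(self):
        # Run one prediction for every track.
        """Propagate track state distributions one time step forward.

        This function should be called once every time step, before `update`.
        """
        for track in self.tracks:
            track.predict(self.kf)
```

Tracker has two more important functions: update and _match.

The code of update: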

```python
# Method of the Tracker class.
def update(self, detections):
    """Perform measurement update and track management.

    Parameters
    ----------
    detections : List[deep_sort.detection.Detection]
        A list of detections at the current time step.

    """
    # Run matching cascade.
    matches, unmatched_tracks, unmatched_detections = \
        self._match(detections)

    # Update track set.
    # 1. Matched pairs: update the track with its detection.
    for track_idx, detection_idx in matches:
        self.tracks[track_idx].update(
            self.kf, detections[detection_idx])
    # 2. Unmatched tracks: call mark_missed.
    # A Tentative track is deleted on a miss; a Confirmed track is deleted
    # once it has not been updated for more than max_age frames
    # (70 by default).
    for track_idx in unmatched_tracks:
        self.tracks[track_idx].mark_missed()
    # 3. Unmatched detections: start a new track for each of them.
    for detection_idx in unmatched_detections:
        self._initiate_track(detections[detection_idx])
    # Keep only the tracks marked Confirmed or Tentative.
    self.tracks = [t for t in self.tracks if not t.is_deleted()]

    # Update distance metric.
    # Ids of all tracks in the Confirmed state.
    active_targets = [t.track_id for t in self.tracks if t.is_confirmed()]
    features, targets = [], []
    for track in self.tracks:
        if not track.is_confirmed():
            continue
        # Collect the features of each Confirmed track.
        features += track.features
        # Record the track id that belongs to each feature.
        targets += [track.track_id for _ in track.features]
        track.features = []
    # Update the feature set inside the distance metric.
    self.metric.partial_fit(
        np.asarray(features), np.asarray(targets), active_targets)
```

The _match function:

```python
# Method of the Tracker class.
def _match(self, detections):
    # Main job: run the matching and return the matched and unmatched parts.

    def gated_metric(tracks, dets, track_indices, detection_indices):
        # Purpose: cost function between tracks and detections.
        # It is evaluated before the KM algorithm runs, via:
        #   cost_matrix = distance_metric(tracks, detections,
        #                                 track_indices, detection_indices)
        features = np.array([dets[i].feature for i in detection_indices])
        targets = np.array([tracks[i].track_id for i in track_indices])

        # 1. Nearest-neighbor cost matrix (cosine distance).
        cost_matrix = self.metric.distance(features, targets)
        # 2. Gate the cost matrix with the Mahalanobis distance.
        cost_matrix = linear_assignment.gate_cost_matrix(
            self.kf, cost_matrix, tracks, dets, track_indices,
            detection_indices)

        return cost_matrix

    # Split track set into confirmed and unconfirmed tracks.
    confirmed_tracks = [
        i for i, t in enumerate(self.tracks) if t.is_confirmed()
    ]
    unconfirmed_tracks = [
        i for i, t in enumerate(self.tracks) if not t.is_confirmed()
    ]

    # ---- Cascade matching ----
    # Returns matched tracks, unmatched tracks and unmatched detections.
    # gated_metric -> cosine distance.
    # Only confirmed tracks take part in cascade matching.
    matches_a, unmatched_tracks_a, unmatched_detections = \
        linear_assignment.matching_cascade(
            gated_metric,
            self.metric.matching_threshold,
            self.max_age,
            self.tracks,
            detections,
            confirmed_tracks)

    # Combine all unconfirmed tracks with the tracks that were missed only
    # in this frame into iou_track_candidates for IoU matching.
    iou_track_candidates = unconfirmed_tracks + [
        k for k in unmatched_tracks_a
        if self.tracks[k].time_since_update == 1  # missed only just now
    ]
    # Tracks that stay unmatched.
    unmatched_tracks_a = [
        k for k in unmatched_tracks_a
        if self.tracks[k].time_since_update != 1  # unmatched for a while
    ]

    # ---- IoU matching ----
    # Targets that cascade matching could not match get a second chance.
    # The core is min_cost_matching again, but the metric here is the
    # IoU cost instead of the gated appearance metric.
    matches_b, unmatched_tracks_b, unmatched_detections = \
        linear_assignment.min_cost_matching(
            iou_matching.iou_cost,
            self.max_iou_distance,
            self.tracks,
            detections,
            iou_track_candidates,
            unmatched_detections)

    matches = matches_a + matches_b  # combine both matching results
    unmatched_tracks = list(set(unmatched_tracks_a + unmatched_tracks_b))
    return matches, unmatched_tracks, unmatched_detections
```

(7) Cascade matching

```python
def matching_cascade(
        distance_metric, max_distance, cascade_depth, tracks, detections,
        track_indices=None, detection_indices=None):
    """Run matching cascade.

    Parameters
    ----------
    distance_metric : Callable[[List[Track], List[Detection], List[int], List[int]], ndarray]
        The distance metric is given a list of tracks and detections as well as
        a list of N track indices and M detection indices. The metric should
        return the NxM dimensional cost matrix, where element (i, j) is the
        association cost between the i-th track in the given track indices and
        the j-th detection in the given detection indices.
    max_distance : float
        Gating threshold. Associations with cost larger than this value are
        disregarded.
    cascade_depth: int
        The cascade depth, should be set to the maximum track age.
    tracks : List[track.Track]
        A list of predicted tracks at the current time step.
    detections : List[detection.Detection]
        A list of detections at the current time step.
    track_indices : Optional[List[int]]
        List of track indices that maps rows in `cost_matrix` to tracks in
        `tracks` (see description above). Defaults to all tracks.
    detection_indices : Optional[List[int]]
        List of detection indices that maps columns in `cost_matrix` to
        detections in `detections` (see description above). Defaults to all
        detections.

    Returns
    -------
    (List[(int, int)], List[int], List[int])
        Returns a tuple with the following three entries:
        * A list of matched track and detection indices.
        * A list of unmatched track indices.
        * A list of unmatched detection indices.

    """
    # Default to all tracks and all detections.
    if track_indices is None:
        track_indices = list(range(len(tracks)))
    if detection_indices is None:
        detection_indices = list(range(len(detections)))

    # Initialize the match set M <- empty set and the set of unmatched
    # detections U <- D.
    unmatched_detections = detection_indices
    matches = []
    # Match the tracks level by level, from the smallest age upward.
    for level in range(cascade_depth):
        # Stop when no detections are left.
        if len(unmatched_detections) == 0:  # No detections left
            break

        # Step 6: select the tracks of the current level by age.
        track_indices_l = [
            k for k in track_indices
            if tracks[k].time_since_update == 1 + level
        ]
        # Skip the level if it has no tracks.
        if len(track_indices_l) == 0:  # Nothing to match at this level
            continue

        # Step 7: match with min_cost_matching.
        matches_l, _, unmatched_detections = \
            min_cost_matching(
                distance_metric, max_distance, tracks, detections,
                track_indices_l, unmatched_detections)
        matches += matches_l  # Step 8
    unmatched_tracks = list(set(track_indices) - set(k for k, _ in matches))  # Step 9
    return matches, unmatched_tracks, unmatched_detections
```
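The effect of matching level by level can be seen on a toy case. The sketch below is a simplified stand-in for the cascade, not the DeepSORT function: `toy_cascade`, the cost values, and the ages are made up, and SciPy's `linear_sum_assignment` solves each level.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment


def toy_cascade(cost, ages, max_cost, depth):
    """cost[i, j]: cost of track i vs detection j; ages[i]: time_since_update."""
    free = list(range(cost.shape[1]))      # detections still available
    matches = []
    for level in range(depth):
        if not free:
            break
        # Tracks missed for exactly `level` frames before this one.
        level_tracks = [i for i, age in enumerate(ages) if age == 1 + level]
        if not level_tracks:
            continue
        sub = cost[np.ix_(level_tracks, free)]
        rows, cols = linear_sum_assignment(sub)
        taken = []
        for r, c in zip(rows, cols):
            if sub[r, c] <= max_cost:      # gate: reject expensive pairs
                matches.append((level_tracks[r], free[c]))
                taken.append(free[c])
        free = [d for d in free if d not in taken]
    return matches, free


# Track 0 was seen in the previous frame, track 1 was last seen 3 frames ago.
cost = np.array([[0.30, 0.90],
                 [0.10, 0.80]])
ages = [1, 3]
matches, free = toy_cascade(cost, ages, max_cost=0.5, depth=5)
print(matches, free)  # [(0, 0)] [1]
```

A single global assignment would give detection 0 to track 1 (cost 0.10). The cascade instead lets the recently seen track 0 claim it first, which is the priority rule described for DeepSORT.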

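(8) Gated cost matrix

The gated cost matrix restricts the cost matrix by the distance between the Kalman filter state distribution and the measurements.

The distances in the cost matrix are the appearance similarities between tracks and detections.

If a track has to choose between two detections with very similar appearance features, a mistake is likely.

Computing the Mahalanobis distance from each detection to the track and applying a threshold, gating_threshold,

separates out the detection that is far away in Mahalanobis distance and so reduces wrong matches.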
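The gating rule can be illustrated with a few numbers. In this sketch the projected track mean, its covariance, and the two measurements are made up; the threshold is the 95% quantile of the chi-square distribution with 4 degrees of freedom, computed with `scipy.stats.chi2.ppf`.

```python
import numpy as np
from scipy.stats import chi2

# 95% gate for a 4-dimensional measurement space, about 9.4877.
threshold = chi2.ppf(0.95, df=4)


def squared_mahalanobis(mean, cov, measurements):
    """Squared Mahalanobis distance of each measurement to N(mean, cov)."""
    d = np.asarray(measurements, dtype=float) - mean
    return np.einsum("ij,jk,ik->i", d, np.linalg.inv(cov), d)


# Hypothetical track projected into (cx, cy, r, h) space.
mean = np.array([35.0, 70.0, 0.5, 100.0])
cov = np.diag([4.0, 4.0, 0.01, 4.0])

measurements = np.array([
    [36.0, 71.0, 0.5, 101.0],   # close to the prediction
    [45.0, 70.0, 0.5, 100.0],   # 10 px away in x
])
distances = squared_mahalanobis(mean, cov, measurements)
gated = distances > threshold

print(distances)  # [ 0.75 25.  ]
print(gated)      # [False  True]
```

Even if both detections looked identical to the ReID model, the second one would be excluded because it is too far from where the Kalman filter expects the target.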

Code:

```python
# INFTY_COST and kalman_filter are defined in / imported by the same module.
def gate_cost_matrix(
        kf, cost_matrix, tracks, detections, track_indices, detection_indices,
        gated_cost=INFTY_COST, only_position=False):
    """Invalidate infeasible entries in cost matrix based on the state
    distributions obtained by Kalman filtering.

    Parameters
    ----------
    kf : The Kalman filter.
    cost_matrix : ndarray
        The NxM dimensional cost matrix, where N is the number of track indices
        and M is the number of detection indices, such that entry (i, j) is the
        association cost between `tracks[track_indices[i]]` and
        `detections[detection_indices[j]]`.
    tracks : List[track.Track]
        A list of predicted tracks at the current time step.
    detections : List[detection.Detection]
        A list of detections at the current time step.
    track_indices : List[int]
        List of track indices that maps rows in `cost_matrix` to tracks in
        `tracks` (see description above).
    detection_indices : List[int]
        List of detection indices that maps columns in `cost_matrix` to
        detections in `detections` (see description above).
    gated_cost : Optional[float]
        Entries in the cost matrix corresponding to infeasible associations are
        set this value. Defaults to a very large value.
    only_position : Optional[bool]
        If True, only the x, y position of the state distribution is considered
        during gating. Defaults to False.

    Returns
    -------
    ndarray
        Returns the modified cost matrix.

    """
    # Dimension of the measurement space used for gating.
    gating_dim = 2 if only_position else 4
    # The Mahalanobis distance takes the uncertainty of the state estimate
    # into account by measuring how many standard deviations a detection
    # lies from the mean track position. Unlikely associations are excluded
    # with the 95% quantile of the chi-square distribution; for a
    # 4-dimensional measurement space this threshold is 9.4877.
    gating_threshold = kalman_filter.chi2inv95[gating_dim]
    measurements = np.asarray(
        [detections[i].to_xyah() for i in detection_indices])
    for row, track_idx in enumerate(track_indices):
        track = tracks[track_idx]
        # KalmanFilter.gating_distance computes the gating distance between
        # the state distribution and the measurements.
        gating_distance = kf.gating_distance(
            track.mean, track.covariance, measurements, only_position)
        cost_matrix[row, gating_distance > gating_threshold] = gated_cost
    return cost_matrix
```

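IV. Summary

That concludes the walkthrough of the Deep SORT code. The class diagram and the flow chart are the core for understanding how Deep SORT is implemented. The remaining parts will be added later. Thanks for reading.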
