Code verification follows.

Single sample

import torch

import torch.nn.functional as F

# this is the same example in wiki

P = F.softmax(torch.randn(1,4),dim=1)

Q = F.softmax(torch.randn(1,4),dim=1)

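print((P * (P / Q).log()).sum())  # manual KL(P || Q) = sum(P * log(P / Q))

# the author's run printed tensor(0.0863) (timing: 10.2 µs ± 508); the value changes with every random draw

print(F.kl_div(Q.log(), P, reduction='sum'))  # note the reversed order: the first argument is log Q, the second is P

# this follows torch.nn.KLDivLoss(...)(input, target), where input = log Q and target = P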
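For a deterministic check, the same identity can be evaluated on fixed numbers. The sketch below is plain Python (no PyTorch) and uses the distributions from the Wikipedia article on KL divergence, P = (9/25, 12/25, 4/25) and Q = (1/3, 1/3, 1/3). The helper name `kl_divergence` is my own, not a library function.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) = sum_i p_i * log(p_i / q_i), in nats (hypothetical helper)."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

P = [9 / 25, 12 / 25, 4 / 25]
Q = [1 / 3, 1 / 3, 1 / 3]

kl_pq = kl_divergence(P, Q)  # corresponds to F.kl_div(Q.log(), P, reduction='sum')
kl_qp = kl_divergence(Q, P)  # swapping the roles gives a different value

print(round(kl_pq, 4))  # 0.0853
print(round(kl_qp, 4))  # 0.0975
```

Because KL divergence is not symmetric, passing the two tensors to `F.kl_div` in the wrong order silently computes a different quantity.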

print("=========KL loss, mean reduction============")

# this is the same example in wiki

P = F.softmax(torch.randn(1,4),dim=1)

Q = F.softmax(torch.randn(1,4),dim=1)

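print((P * (P / Q).log()).mean())  # manual element-wise mean of P * log(P / Q)

print(F.kl_div(Q.log(), P, reduction='mean'))  # again the order is reversed: input = log Q, target = P

# reduction='mean' averages over all elements, so it is not the KL divergence itself; same call pattern as torch.nn.KLDivLoss(...)(input, target)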
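An element-wise mean is the sum divided by the number of elements, not by the number of samples. A small plain-Python sketch (the values of `p` and `q` are illustrative assumptions, not taken from the post) shows that for a single sample the two results differ by a factor equal to the number of classes:

```python
import math

# one sample with 4 classes; illustrative values only
p = [0.1, 0.2, 0.3, 0.4]
q = [0.25, 0.25, 0.25, 0.25]

# pointwise terms p_i * log(p_i / q_i)
terms = [pi * math.log(pi / qi) for pi, qi in zip(p, q)]

kl_sum = sum(terms)                # analogous to reduction='sum' for this sample
kl_mean = sum(terms) / len(terms)  # analogous to reduction='mean': averaged over all 4 elements

print(kl_sum, kl_mean)
```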

# Verifying how the loss is computed

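import numpy as np

import torch.nn as nn

x = F.softmax(torch.randn(2,4),dim=1)

y = F.softmax(torch.randn(2,4),dim=1)

x_np, y_np = x.numpy(), y.numpy()

KL_mat = np.ones_like(x_np)

# pointwise term used by nn.KLDivLoss: target * (log(target) - input), with input = x and target = y

# note: x is passed as-is; KLDivLoss expects log-probabilities as input, so this only checks the formula

for i in range(x.size()[0]):

    for j in range(x.size()[1]):

        KL_mat[i,j] = y_np[i,j] * (np.log(y_np[i,j]) - x_np[i,j])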

print("=======manual computation======")

print(KL_mat)

print("=======PyTorch computation===")

KL_torch_mat_all= nn.KLDivLoss(reduction='none')(x,y)

print(KL_torch_mat_all)

print("=======sum()========")

KL_torch_mat_sum= nn.KLDivLoss(reduction='sum')(x,y)

print(KL_torch_mat_sum)

print(np.sum(KL_mat))

print("=======mean()=======")

KL_torch_mat_mean= nn.KLDivLoss(reduction='mean')(x,y)

print(KL_torch_mat_mean)

print(np.mean(KL_mat))

print(KL_torch_mat_sum/(x.size()[0]*x.size()[1]))

print("=======batchmean()=======")

KL_torch_mat_batch_mean= nn.KLDivLoss(reduction='batchmean')(x,y)

print(KL_torch_mat_batch_mean)  # simply the sum divided by the number of samples (the batch size)

print(KL_torch_mat_sum/x.size()[0])
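The four reductions checked above can be summarised in a plain-Python sketch. This is a reference written for illustration, not PyTorch's implementation: the helper name `kl_div_reference` and the sample values are assumptions, and it assumes every target entry is strictly positive.

```python
import math

def kl_div_reference(inp, target, reduction="mean"):
    """Plain-Python sketch of the reductions checked above (hypothetical helper).

    inp and target are lists of rows; inp is used as-is, so it is expected
    to already hold log-probabilities.
    """
    pointwise = [[t * (math.log(t) - x) for x, t in zip(row_x, row_t)]
                 for row_x, row_t in zip(inp, target)]
    if reduction == "none":
        return pointwise
    total = sum(sum(row) for row in pointwise)
    if reduction == "sum":
        return total
    if reduction == "mean":       # divide by the number of elements
        return total / sum(len(row) for row in pointwise)
    if reduction == "batchmean":  # divide by the number of rows (samples)
        return total / len(pointwise)
    raise ValueError(f"unknown reduction: {reduction}")

# two samples, three classes; illustrative values only
target = [[0.2, 0.3, 0.5], [0.6, 0.3, 0.1]]
pred = [[0.3, 0.3, 0.4], [0.5, 0.25, 0.25]]
log_pred = [[math.log(v) for v in row] for row in pred]

kl_none = kl_div_reference(log_pred, target, "none")
kl_total = kl_div_reference(log_pred, target, "sum")
kl_elem_mean = kl_div_reference(log_pred, target, "mean")
kl_batch_mean = kl_div_reference(log_pred, target, "batchmean")

print(kl_total, kl_elem_mean, kl_batch_mean)
```

With 2 samples and 3 classes, `mean` is the sum divided by 6 while `batchmean` is the sum divided by 2, which mirrors the two divisions printed in the PyTorch check.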

This example shows roughly how PyTorch computes KLDivLoss.

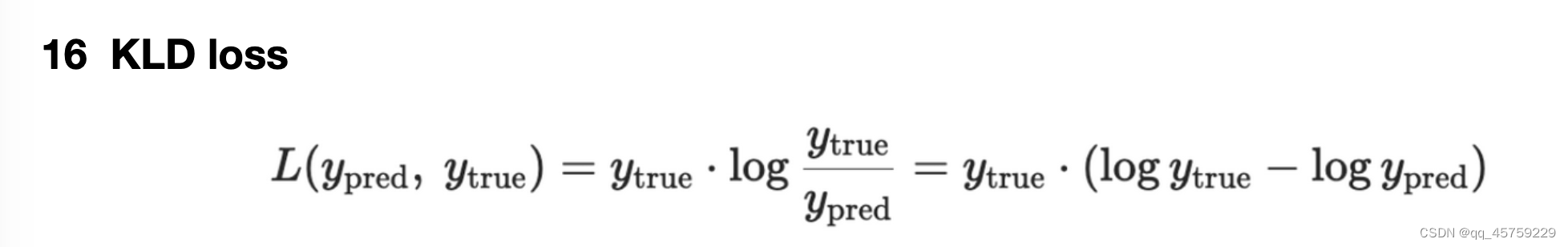

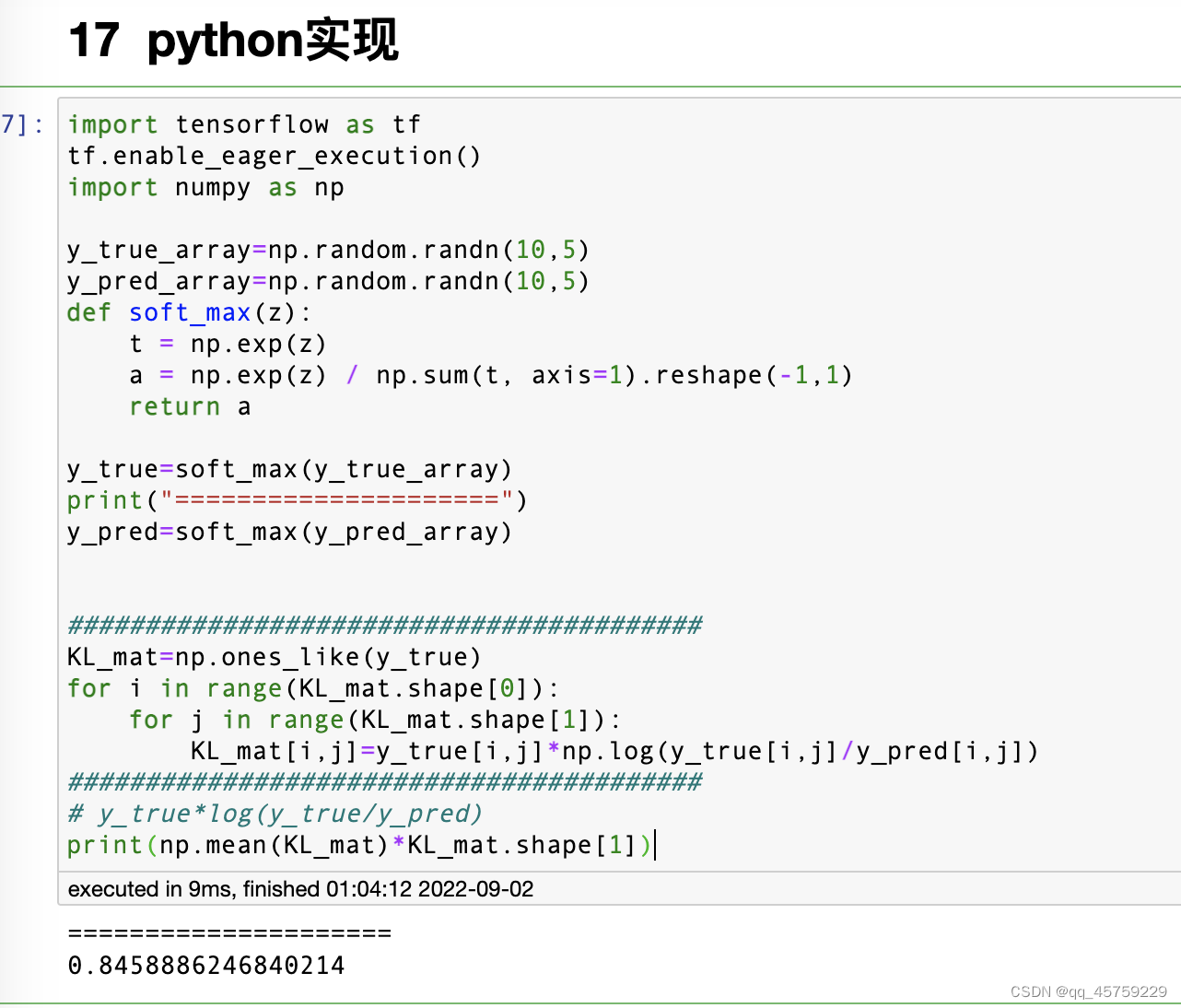

Python implementation

import tensorflow as tf

tf.enable_eager_execution()  # TF 1.x only; in TF 2.x eager execution is on by default and this line should be dropped

import numpy as np

y_true_array=np.random.randn(10,5)

y_pred_array=np.random.randn(10,5)

def soft_max(z):

    t = np.exp(z)  # element-wise exponentials

    a = t / np.sum(t, axis=1).reshape(-1,1)  # normalize each row so it sums to 1

    return a

y_true=soft_max(y_true_array)

print("=====================")

y_pred=soft_max(y_pred_array)

#########################################

KL_mat=np.ones_like(y_true)

for i in range(KL_mat.shape[0]):

    for j in range(KL_mat.shape[1]):

        KL_mat[i,j]=y_true[i,j]*np.log(y_true[i,j]/y_pred[i,j])

#########################################

# y_true*log(y_true/y_pred)

print(np.mean(KL_mat)*KL_mat.shape[1])  # element-wise mean times the number of classes = sum divided by the number of samples
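The `soft_max` helper above exponentiates the raw values, which is fine for standard-normal inputs but can overflow for large ones. A common variant subtracts each row's maximum first, which leaves the result unchanged. The sketch below is plain Python, and `stable_softmax` is a hypothetical name introduced only for this illustration.

```python
import math

def stable_softmax(rows):
    """Row-wise softmax that subtracts each row's maximum before exponentiating."""
    out = []
    for row in rows:
        m = max(row)
        exps = [math.exp(v - m) for v in row]  # largest exponent is exp(0) = 1
        total = sum(exps)
        out.append([e / total for e in exps])
    return out

# the first row would overflow with a naive exp(); the second row is uniform
probs = stable_softmax([[1000.0, 1001.0, 1002.0], [0.0, 0.0, 0.0]])
print(probs)
```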

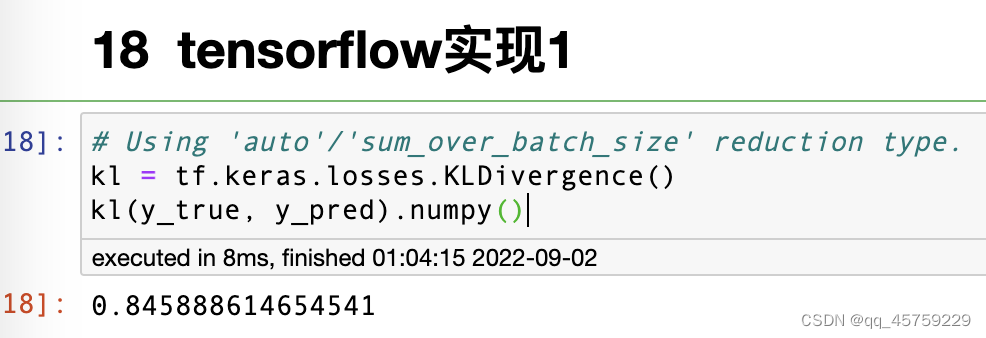

TensorFlow implementation 1

# Using 'auto'/'sum_over_batch_size' reduction type.

kl = tf.keras.losses.KLDivergence()

kl(y_true, y_pred).numpy()

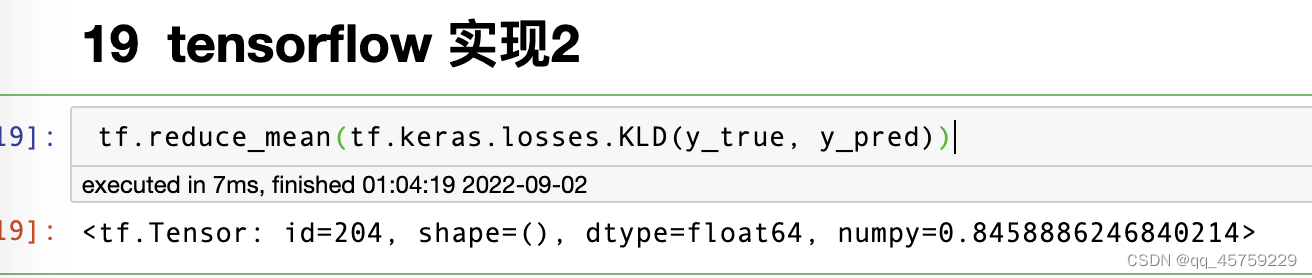

TensorFlow implementation 2

tf.reduce_mean(tf.keras.losses.KLD(y_true, y_pred))
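As far as I know, `tf.keras.losses.KLD` clips both arguments to `[epsilon, 1]` and sums `y_true * log(y_true / y_pred)` over the last axis, returning one value per sample; `tf.reduce_mean` then averages those values over the batch. A plain-Python sketch of that behaviour follows. The helper name `kld_per_sample`, the `eps = 1e-7` default, and the sample values are assumptions on my part.

```python
import math

def kld_per_sample(y_true, y_pred, eps=1e-7):
    """Per-sample KL divergence, summed over the last axis (hypothetical helper)."""
    def clip(v):
        # keep values inside [eps, 1] so the logarithm stays finite
        return min(max(v, eps), 1.0)
    return [
        sum(clip(t) * math.log(clip(t) / clip(p)) for t, p in zip(row_t, row_p))
        for row_t, row_p in zip(y_true, y_pred)
    ]

# two samples, three classes; illustrative values only
y_true_demo = [[0.2, 0.3, 0.5], [0.6, 0.3, 0.1]]
y_pred_demo = [[0.3, 0.3, 0.4], [0.5, 0.25, 0.25]]

per_sample = kld_per_sample(y_true_demo, y_pred_demo)
batch_mean = sum(per_sample) / len(per_sample)  # analogous to tf.reduce_mean over the batch

print(per_sample, batch_mean)
```

Averaging the per-sample sums is the same as dividing the total by the number of samples, which is why this value lines up with the `batchmean` reduction in PyTorch.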

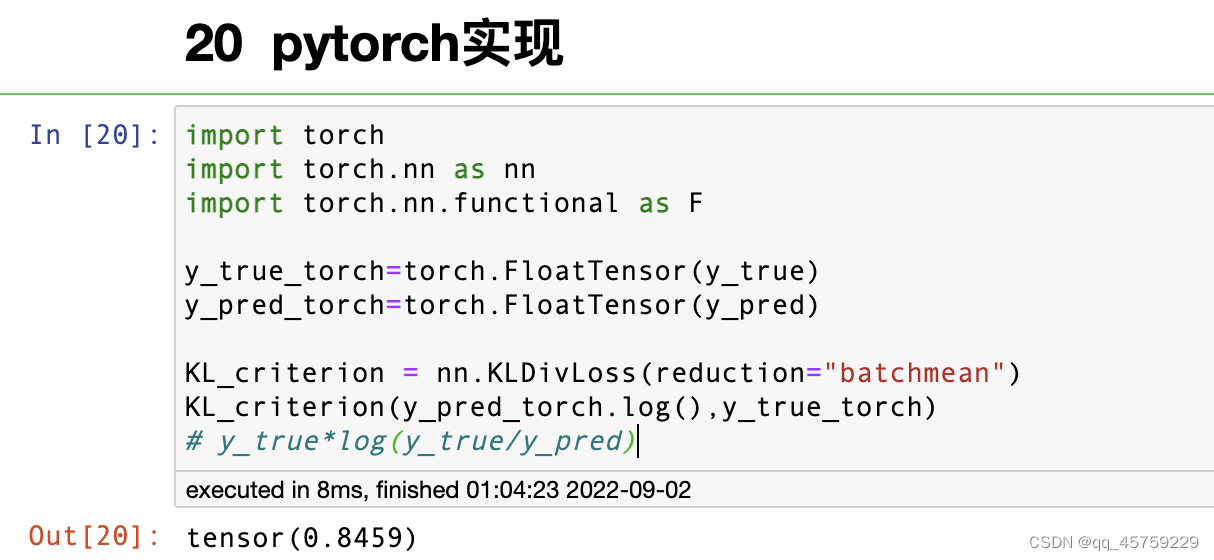

PyTorch implementation

import torch

import torch.nn as nn

import torch.nn.functional as F

y_true_torch=torch.FloatTensor(y_true)

y_pred_torch=torch.FloatTensor(y_pred)

KL_criterion = nn.KLDivLoss(reduction="batchmean")

print(KL_criterion(y_pred_torch.log(), y_true_torch))  # input = log(y_pred), target = y_true

# y_true*log(y_true/y_pred)
