Contents

1. Equipment

2. Implementation approach and wiring

3. YOLOv5 training and UI code

4. Arduino control code and walkthrough

5. Results

6. Ideas for improvement

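1. Equipment

Windows PC: Windows 10, 8 GB RAM, i5-7200U (my machine has no NVIDIA GPU, so everything runs on the CPU)

Microcontroller: Arduino UNO

Servo: SG90

Camera: an ordinary webcam (an industrial camera is preferable)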

2. Implementation approach and wiring

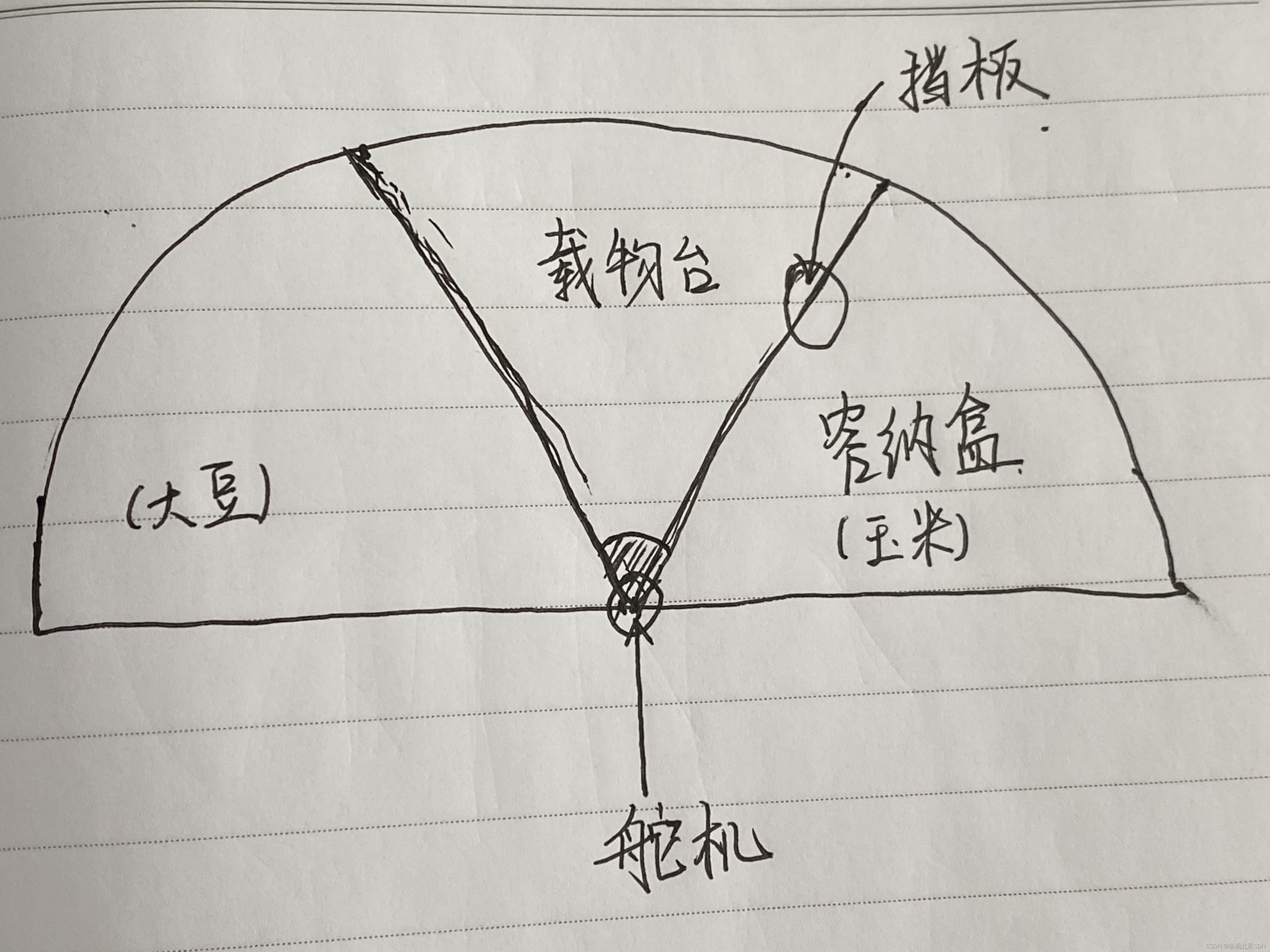

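2.1 Implementation approach

YOLOv5 detects the seed class, and the result drives the servo clockwise or counterclockwise by a fixed angle, using the platform shown in the figure above. When the seed is detected as corn, the servo swings the baffle 120° clockwise and pushes the seed into the corn bin. When the seed is detected as soybean, the servo swings the baffle 120° counterclockwise and pushes the seed into the soybean bin. This sorts the seeds automatically.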
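The mapping described above can be sketched as a small lookup table. The command bytes `b"0"`, `b"1"` and `b"2"` match what the `control` function later in this post sends; the names `COMMANDS`, `HOME` and `command_for` are made up for illustration, and falling back to the home command for any unknown label is an assumption.

```python
# Hypothetical lookup table: detected class label -> one-byte serial command.
COMMANDS = {
    "corn": b"0",     # swing the baffle one way
    "soybean": b"1",  # swing the baffle the other way
}
HOME = b"2"  # keep the baffle at its resting position


def command_for(label: str) -> bytes:
    """Return the serial command for a detected class label.

    Assumption: anything that is not a known seed class maps to HOME.
    """
    return COMMANDS.get(label, HOME)
```

Keeping the mapping in one table means a third seed class only needs one new entry here plus a matching branch in the Arduino sketch.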

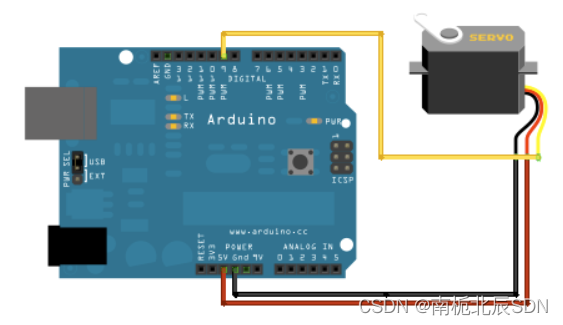

2.2 Wiring

In the wiring diagram, the servo leads are GND (black wire), VCC (red wire) and Signal (yellow wire).

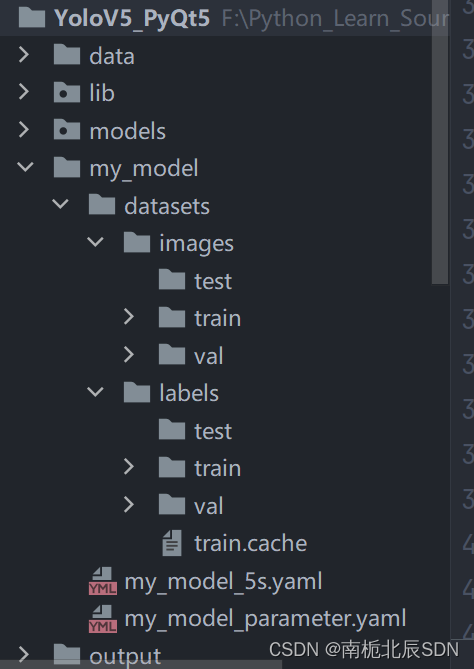

3. YOLOv5 training and UI code

3.1 YOLOv5 training

For a detailed walkthrough of the YOLOv5 part, see my previous post:

https://blog.csdn.net/qq_46173016/article/details/129379962?spm=1001.2014.3001.5501

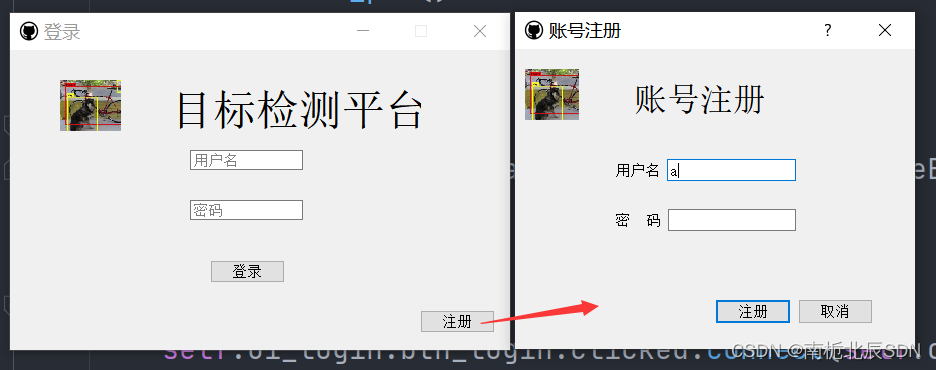

3.2 UI design

Login window code:

# -*- coding: utf-8 -*-
# @Modified by: Ruihao
# @ProjectName:yolov5-pyqt5

import sys
from datetime import datetime

from PyQt5 import QtWidgets
from PyQt5.QtWidgets import *

from utils.id_utils import get_id_info, sava_id_info  # account helper functions
from lib.share import shareInfo  # shared variables

# UI classes generated by Qt Designer
from ui.login_ui import Login_Ui_Form
from ui.registe_ui import Ui_Dialog

# The detection window
from detect_logical import UI_Logic_Window

# Login window
class win_Login(QMainWindow):
    def __init__(self, parent=None):
        super(win_Login, self).__init__(parent)
        self.ui_login = Login_Ui_Form()
        self.ui_login.setupUi(self)
        self.init_slots()
        self.hidden_pwd()

    # Mask the password field
    def hidden_pwd(self):
        self.ui_login.edit_password.setEchoMode(QLineEdit.Password)

    # Connect signals to slots
    def init_slots(self):
        self.ui_login.btn_login.clicked.connect(self.onSignIn)  # log in on button click
        self.ui_login.edit_password.returnPressed.connect(self.onSignIn)  # log in on Enter
        self.ui_login.btn_regeist.clicked.connect(self.create_id)

    # Open the registration dialog
    def create_id(self):
        shareInfo.createWin = win_Register()
        shareInfo.createWin.show()

    # Append an entry to the login log
    def sava_login_log(self, username):
        with open('login_log.txt', 'a', encoding='utf-8') as f:
            f.write(username + '\t log in at ' + datetime.now().strftime('%Y-%m-%d %H:%M:%S') + '\n')

    # Log in
    def onSignIn(self):
        print("You pressed sign in")
        # Read the username and password from the login form
        username = self.ui_login.edit_username.text().strip()
        password = self.ui_login.edit_password.text().strip()

        # Load the stored accounts
        USER_PWD = get_id_info()
        # print(USER_PWD)

        if username not in USER_PWD.keys():
            replay = QMessageBox.warning(self, "Login failed!", "Wrong username or password", QMessageBox.Yes)
        else:
            # On success, switch to the main window
            if USER_PWD.get(username) == password:
                print("Jump to main window")
                # Option 1: keep the new window on self
                # self.ui_new = win_Main()
                # self.ui_new.show()
                # Option 2: the new window does not belong to this one, so storing it
                # on self is poor style; keep it in the shared namespace instead
                shareInfo.mainWin = UI_Logic_Window()
                shareInfo.mainWin.show()
                # Close the login window
                self.close()
            else:
                replay = QMessageBox.warning(self, "!", "Wrong username or password", QMessageBox.Yes)

# Registration dialog
class win_Register(QDialog):
    def __init__(self, parent=None):
        super(win_Register, self).__init__(parent)
        self.ui_register = Ui_Dialog()
        self.ui_register.setupUi(self)
        self.init_slots()

    # Connect signals to slots
    def init_slots(self):
        self.ui_register.pushButton_regiser.clicked.connect(self.new_account)
        self.ui_register.pushButton_cancer.clicked.connect(self.cancel)

    # Create a new account
    def new_account(self):
        print("Create new account")
        USER_PWD = get_id_info()
        # print(USER_PWD)
        new_username = self.ui_register.edit_username.text().strip()
        new_password = self.ui_register.edit_password.text().strip()

        # Reject an empty username
        if new_username == "":
            replay = QMessageBox.warning(self, "!", "Username must not be empty", QMessageBox.Yes)
        else:
            # Reject a username that already exists
            if new_username in USER_PWD.keys():
                replay = QMessageBox.warning(self, "!", "Account already exists", QMessageBox.Yes)
            else:
                # Reject an empty password
                if new_password == "":
                    replay = QMessageBox.warning(self, "!", "Password must not be empty", QMessageBox.Yes)
                else:
                    # Registration succeeded
                    print("Successful!")
                    sava_id_info(new_username, new_password)
                    replay = QMessageBox.warning(self, "!", "Registration successful!", QMessageBox.Yes)
                    # Close the dialog
                    self.close()

    # Cancel registration
    def cancel(self):
        self.close()  # close this dialog

if __name__ == "__main__":
    app = QApplication(sys.argv)
    # Instantiate through the shared namespace
    shareInfo.loginWin = win_Login()  # the login window is the entry window
    shareInfo.loginWin.show()
    sys.exit(app.exec_())
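The helpers imported from `utils.id_utils` are not shown in this post. The following is only a sketch of what they might look like, assuming accounts are kept as `username,password` rows in a CSV file. The file name `userInfo.csv` and the extra `path` parameter are hypothetical; the function names and the dict return value follow how the login code uses them.

```python
import csv
import os

# Hypothetical storage location; the real project's path and format are not shown.
ID_FILE = "userInfo.csv"


def get_id_info(path: str = ID_FILE) -> dict:
    """Return {username: password}; an empty dict if the file does not exist yet."""
    if not os.path.exists(path):
        return {}
    with open(path, newline="", encoding="utf-8") as f:
        # Skip malformed rows that have fewer than two columns.
        return {row[0]: row[1] for row in csv.reader(f) if len(row) >= 2}


def sava_id_info(username: str, password: str, path: str = ID_FILE) -> None:
    """Append one account row to the file (the spelling matches the import above)."""
    with open(path, "a", newline="", encoding="utf-8") as f:
        csv.writer(f).writerow([username, password])
```

Like the comparison in `onSignIn`, this sketch stores passwords in plain text, which is only acceptable for a local demo.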

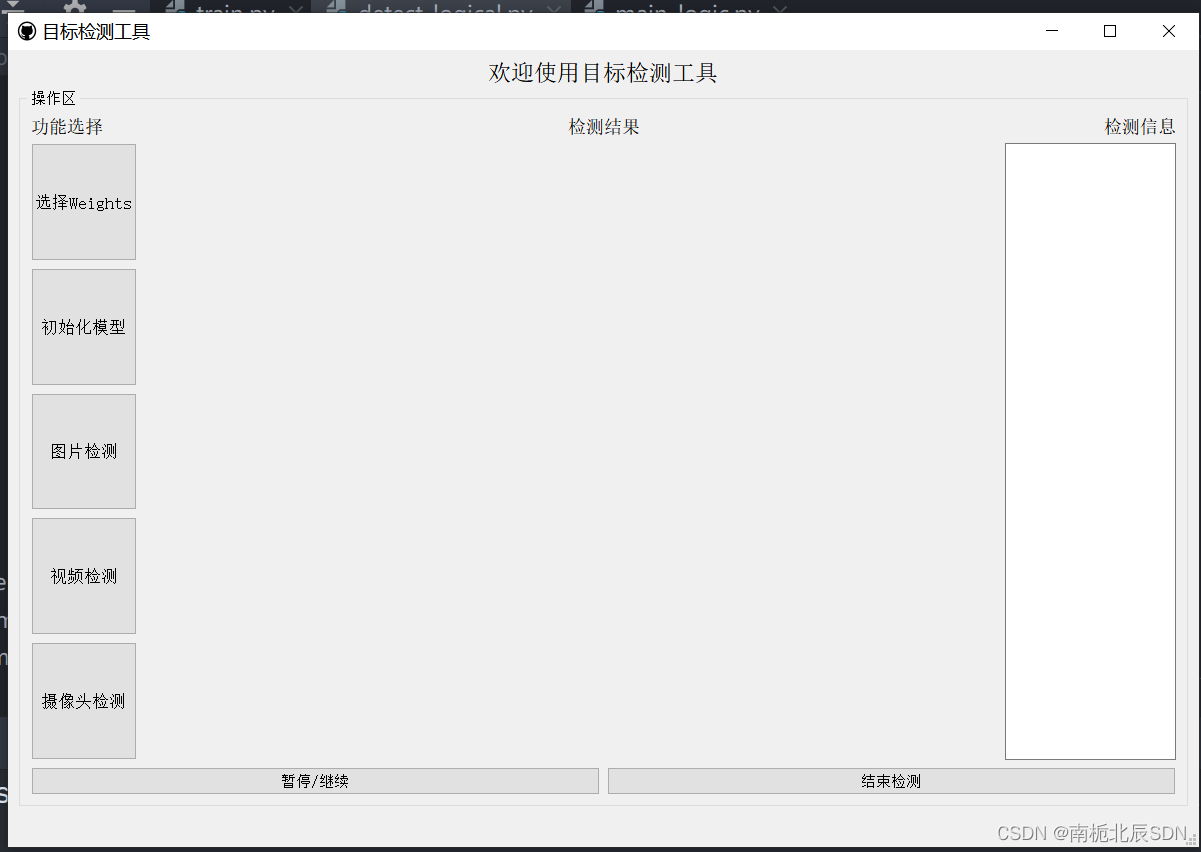

Detection platform window:

Detection platform window code:

# -*- coding: utf-8 -*-
# @Modified by: Ruihao
# @ProjectName:yolov5-pyqt5

import sys
import cv2
import time
import argparse
import random
import torch
import numpy as np
import torch.backends.cudnn as cudnn
import threading

from PyQt5 import QtCore, QtGui, QtWidgets
from PyQt5.QtCore import *
from PyQt5.QtGui import *
from PyQt5.QtWidgets import *

from utils.torch_utils import select_device
from models.experimental import attempt_load
from utils.general import check_img_size, non_max_suppression, scale_coords
from utils.datasets import letterbox
from utils.plots import plot_one_box2
from ui.detect_ui import Ui_MainWindow  # UI class for the detection window

import serial  # pyserial: Arduino <-> Python communication


class UI_Logic_Window(QtWidgets.QMainWindow):
    def __init__(self, parent=None):
        super(UI_Logic_Window, self).__init__(parent)
        self.timer_video = QtCore.QTimer()  # create the timer
        self.ui = Ui_MainWindow()
        self.ui.setupUi(self)
        self.init_slots()
        self.cap = cv2.VideoCapture()
        self.num_stop = 1  # pause/resume helper: odd or even decides the state
        self.output_folder = 'output/'
        self.vid_writer = None
        # Path of the weights file chosen in the UI (None until one is picked)
        self.openfile_name_model = None

    # Connect widgets to their handlers
    def init_slots(self):
        self.ui.pushButton_img.clicked.connect(self.button_image_open)
        self.ui.pushButton_video.clicked.connect(self.button_video_open)
        self.ui.pushButton_camer.clicked.connect(self.button_camera_open)
        self.ui.pushButton_weights.clicked.connect(self.open_model)
        self.ui.pushButton_init.clicked.connect(self.model_init)
        self.ui.pushButton_stop.clicked.connect(self.button_video_stop)
        self.ui.pushButton_finish.clicked.connect(self.finish_detect)
        self.timer_video.timeout.connect(self.show_video_frame)  # each timer tick processes one frame

    # Choose a weights file
    def open_model(self):
        self.openfile_name_model, _ = QFileDialog.getOpenFileName(self.ui.pushButton_weights, 'Select weights file',
                                                                  'weights/')
        if not self.openfile_name_model:
            QtWidgets.QMessageBox.warning(self, u"Warning", u"Failed to open weights", buttons=QtWidgets.QMessageBox.Ok,
                                          defaultButton=QtWidgets.QMessageBox.Ok)
        else:
            print('Loading weights file from: ' + str(self.openfile_name_model))

    # Use the detected label obj_name as the signal that drives the servo
    def control(self, obj_name):
        # The Arduino is on serial port COM3 (Windows naming)
        # board = Arduino('COM3')
        # obj_name = obj_name[0]
        print(obj_name)
        ser = serial.Serial('COM3', 9600, timeout=2)  # open the serial connection
        time.sleep(3)  # opening the port resets the UNO; wait for it to boot
        # while True:
        if obj_name == 'corn':
            val = ser.write('0'.encode('utf-8'))
            print('forward')
            # time.sleep(3)
            val2 = ser.readline().decode('utf-8')  # reply printed by the Arduino
            print(val2)
        if obj_name == 'soybean':
            val = ser.write('1'.encode('utf-8'))
            print('reverse')
            # time.sleep(3)
            val2 = ser.readline().decode('utf-8')
            print(val2)
        if obj_name == '':
            val = ser.write('2'.encode('utf-8'))
            print('home position')
            # time.sleep(3)
            val2 = ser.readline().decode('utf-8')
            print(val2)
        ser.close()  # release COM3 so the next call can reopen it

    # Load the parameters and initialise the model
    def model_init(self):
        # ser = serial.Serial('COM3', 9600, timeout=1)  # open the serial connection
        # Model configuration
        parser = argparse.ArgumentParser()
        parser.add_argument('--weights', nargs='+', type=str, default='weights/yolov5s.pt', help='model.pt path(s)')
        parser.add_argument('--source', type=str, default='data/images', help='source')  # file/folder, 0 for webcam
        parser.add_argument('--img-size', type=int, default=640, help='inference size (pixels)')
        parser.add_argument('--conf-thres', type=float, default=0.1, help='object confidence threshold')
        parser.add_argument('--iou-thres', type=float, default=0.45, help='IOU threshold for NMS')
        parser.add_argument('--device', default='cpu', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
        parser.add_argument('--view-img', action='store_true', help='display results')
        parser.add_argument('--save-txt', action='store_true', help='save results to *.txt')
        parser.add_argument('--save-conf', action='store_true', help='save confidences in --save-txt labels')
        parser.add_argument('--nosave', action='store_true', help='do not save images/videos')
        parser.add_argument('--classes', nargs='+', type=int, help='filter by class: --class 0, or --class 0 2 3')
        parser.add_argument('--agnostic-nms', action='store_true', help='class-agnostic NMS')
        parser.add_argument('--augment', action='store_true', help='augmented inference')
        parser.add_argument('--update', action='store_true', help='update all models')
        parser.add_argument('--project', default='runs/detect', help='save results to project/name')
        parser.add_argument('--name', default='exp', help='save results to project/name')
        parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')
        self.opt = parser.parse_args()
        print(self.opt)

        # By default, initialise the model from the settings in opt (weights etc.)
        source, weights, view_img, save_txt, imgsz = self.opt.source, self.opt.weights, self.opt.view_img, self.opt.save_txt, self.opt.img_size

        # If a weights file was chosen in the UI, use it instead
        if self.openfile_name_model:
            weights = self.openfile_name_model
            print("Using button choose model")

        self.device = select_device(self.opt.device)
        self.half = self.device.type != 'cpu'  # half precision only supported on CUDA
        cudnn.benchmark = True

        # Load model
        self.model = attempt_load(weights, map_location=self.device)  # load FP32 model
        stride = int(self.model.stride.max())  # model stride
        self.imgsz = check_img_size(imgsz, s=stride)  # check img_size
        if self.half:
            self.model.half()  # to FP16

        # Get names and colors
        self.names = self.model.module.names if hasattr(self.model, 'module') else self.model.names
        self.colors = [[random.randint(0, 255) for _ in range(3)] for _ in self.names]
        print("model initial done")

        # Tell the user the model is ready
        QtWidgets.QMessageBox.information(self, u"Notice", u"Model loaded", buttons=QtWidgets.QMessageBox.Ok,
                                          defaultButton=QtWidgets.QMessageBox.Ok)
        # return ser

    # Object detection
    def detect(self, name_list, img):
        '''
        :param name_list: list that collects the detected class names
        :param img: image to run detection on
        :return: info_show: text description of the detections
        '''
        showimg = img
        with torch.no_grad():
            img = letterbox(img, new_shape=self.opt.img_size)[0]
            # Convert
            img = img[:, :, ::-1].transpose(2, 0, 1)  # BGR to RGB, HWC to CHW
            img = np.ascontiguousarray(img)
            img = torch.from_numpy(img).to(self.device)
            img = img.half() if self.half else img.float()  # uint8 to fp16/32
            img /= 255.0  # 0 - 255 to 0.0 - 1.0
            if img.ndimension() == 3:
                img = img.unsqueeze(0)

            # Inference
            pred = self.model(img, augment=self.opt.augment)[0]

            # Apply NMS
            pred = non_max_suppression(pred, self.opt.conf_thres, self.opt.iou_thres, classes=self.opt.classes,
                                       agnostic=self.opt.agnostic_nms)
            info_show = ""
            obj_name = ""

            # Process detections
            for i, det in enumerate(pred):
                if det is not None and len(det):
                    # Rescale boxes from img_size to im0 size
                    det[:, :4] = scale_coords(img.shape[2:], det[:, :4], showimg.shape).round()
                    for *xyxy, conf, cls in reversed(det):
                        label = '%s %.2f' % (self.names[int(cls)], conf)
                        name_list.append(self.names[int(cls)])
                        single_info = plot_one_box2(xyxy, showimg, label=label, color=self.colors[int(cls)], line_thickness=2)
                        # print(single_info)
                        info_show = info_show + single_info + "\n"
                        # Class name of the first reported box; relies on the text format of plot_one_box2
                        obj_name = info_show.split(': ')[2].split(' ')[0]

        # t = threading.Thread(target=self.control, args=(obj_name,))
        # t.start()
        self.control(obj_name)  # blocks until the Arduino replies; an empty name sends the home command
        return info_show

    # Open an image and run detection
    def button_image_open(self):
        # ser = serial.Serial('COM3', 9600, timeout=1)  # open the serial connection
        print('button_image_open')
        name_list = []
        try:
            img_name, _ = QtWidgets.QFileDialog.getOpenFileName(self, "Open image", "data/images", "*.jpg;;*.png;;All Files(*)")
        except OSError as reason:
            print('Failed to open the file, check that the path is correct: ' + str(reason))
        else:
            # Nothing was selected
            if not img_name:
                QtWidgets.QMessageBox.warning(self, u"Warning", u"Failed to open image", buttons=QtWidgets.QMessageBox.Ok,
                                              defaultButton=QtWidgets.QMessageBox.Ok)
            else:
                img = cv2.imread(img_name)
                print("img_name:", img_name)
                info_show = self.detect(name_list, img)
                print(type(info_show))
                print(info_show)
                # Use the current system time as the output file name
                now = time.strftime("%Y-%m-%d-%H-%M-%S", time.localtime(time.time()))
                file_extension = img_name.split('.')[-1]  # file extension of the input image
                new_filename = now + '.' + file_extension
                file_path = self.output_folder + 'img_output/' + new_filename
                cv2.imwrite(file_path, img)
                # Show the detection text in the UI
                self.ui.textBrowser.setText(info_show)
                # Show the annotated image in the UI
                self.result = cv2.cvtColor(img, cv2.COLOR_BGR2BGRA)
                self.result = cv2.resize(self.result, (640, 480), interpolation=cv2.INTER_AREA)
                self.QtImg = QtGui.QImage(self.result.data, self.result.shape[1], self.result.shape[0], QtGui.QImage.Format_RGB32)
                self.ui.label.setPixmap(QtGui.QPixmap.fromImage(self.QtImg))
                self.ui.label.setScaledContents(True)  # scale the image to fit the label

    def set_video_name_and_path(self):
        # Use the current system time as the name of the output video
        now = time.strftime("%Y-%m-%d-%H-%M-%S", time.localtime(time.time()))
        # if vid_cap:  # video
        fps = self.cap.get(cv2.CAP_PROP_FPS)
        w = int(self.cap.get(cv2.CAP_PROP_FRAME_WIDTH))
        h = int(self.cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
        # Where the annotated video is saved
        save_path = self.output_folder + 'video_output/' + now + '.mp4'
        return fps, w, h, save_path

    # Open a video file and run detection
    def button_video_open(self):
        video_name, _ = QtWidgets.QFileDialog.getOpenFileName(self, "Open video", "data/", "*.mp4;;*.avi;;All Files(*)")
        flag = self.cap.open(video_name)
        if not flag:
            QtWidgets.QMessageBox.warning(self, u"Warning", u"Failed to open video", buttons=QtWidgets.QMessageBox.Ok, defaultButton=QtWidgets.QMessageBox.Ok)
        else:
            # ------------------------- video writer ------------------------- #
            fps, w, h, save_path = self.set_video_name_and_path()
            self.vid_writer = cv2.VideoWriter(save_path, cv2.VideoWriter_fourcc(*'mp4v'), fps, (w, h))
            self.timer_video.start(2000)  # start (or restart) the timer with a 2000 ms interval
            # Disable the other buttons while the video is being processed
            self.ui.pushButton_video.setDisabled(True)
            self.ui.pushButton_img.setDisabled(True)
            self.ui.pushButton_camer.setDisabled(True)

    # Open the camera and run detection
    def button_camera_open(self):
        print("Open camera to detect")
        # Camera index; the built-in camera is 0
        camera_num = 0
        # Open the camera
        self.cap = cv2.VideoCapture(camera_num)
        # Check that the camera really opened
        bool_open = self.cap.isOpened()
        if not bool_open:
            QtWidgets.QMessageBox.warning(self, u"Warning", u"Failed to open camera", buttons=QtWidgets.QMessageBox.Ok,
                                          defaultButton=QtWidgets.QMessageBox.Ok)
        else:
            fps, w, h, save_path = self.set_video_name_and_path()
            fps = 30  # FPS of the saved camera video. Note: the saved video plays a bit fast; I simply forced the FPS
            self.vid_writer = cv2.VideoWriter(save_path, cv2.VideoWriter_fourcc(*'mp4v'), fps, (w, h))
            self.timer_video.start(1000)  # 1000 ms interval
            self.ui.pushButton_video.setDisabled(True)
            self.ui.pushButton_img.setDisabled(True)
            self.ui.pushButton_camer.setDisabled(True)

    # Process and display one video frame
    def show_video_frame(self):
        name_list = []
        flag, img = self.cap.read()
        if img is not None:
            info_show = self.detect(name_list, img)  # detections are drawn onto the original img
            self.vid_writer.write(img)  # write the annotated frame to the video
            print(info_show)
            # Show the detection text in the UI
            self.ui.textBrowser.setText(info_show)
            show = cv2.resize(img, (640, 480))  # display the annotated original frame directly
            self.result = cv2.cvtColor(show, cv2.COLOR_BGR2RGB)
            showImage = QtGui.QImage(self.result.data, self.result.shape[1], self.result.shape[0],
                                     QtGui.QImage.Format_RGB888)
            self.ui.label.setPixmap(QtGui.QPixmap.fromImage(showImage))
            self.ui.label.setScaledContents(True)  # scale the image to fit the label
        else:
            self.timer_video.stop()
            # Reading and writing are finished: release resources
            self.cap.release()  # release the video capture
            self.vid_writer.release()  # release the video writer
            self.ui.label.clear()
            # Re-enable the other detection buttons
            self.ui.pushButton_video.setDisabled(False)
            self.ui.pushButton_img.setDisabled(False)
            self.ui.pushButton_camer.setDisabled(False)

    # Pause and resume detection
    def button_video_stop(self):
        self.timer_video.blockSignals(False)
        # Pause: the timer is running and the flag is odd
        if self.timer_video.isActive() == True and self.num_stop % 2 == 1:
            self.ui.pushButton_stop.setText(u'Pause detection')  # now in the paused state
            self.num_stop = self.num_stop + 1  # make the flag even
            self.timer_video.blockSignals(True)
        # Resume
        else:
            self.num_stop = self.num_stop + 1
            self.ui.pushButton_stop.setText(u'Resume detection')

    # Stop video detection
    def finish_detect(self):
        # self.timer_video.stop()
        self.cap.release()  # release the video capture
        self.vid_writer.release()  # release the video writer
        self.ui.label.clear()  # clear the label canvas
        # Re-enable the other detection buttons
        self.ui.pushButton_video.setDisabled(False)
        self.ui.pushButton_img.setDisabled(False)
        self.ui.pushButton_camer.setDisabled(False)

        # When stopping, reset the pause button if it is still in the paused state
        # Note: after clicking pause, num_stop is even
        if self.num_stop % 2 == 0:
            print("Reset stop/begin!")
            self.ui.pushButton_stop.setText(u'Pause/Resume')
            self.num_stop = self.num_stop + 1
            self.timer_video.blockSignals(False)


if __name__ == '__main__':
    app = QtWidgets.QApplication(sys.argv)
    current_ui = UI_Logic_Window()
    current_ui.show()
    sys.exit(app.exec_())

3.3 Python-Arduino communication: the Python side

Install the serial communication package on Windows:

pip install pyserial

Sending messages from Python to the Arduino:

def control(self, obj_name):
    # The Arduino is on serial port COM3 (Windows naming)
    # board = Arduino('COM3')
    # obj_name = obj_name[0]
    print(obj_name)
    ser = serial.Serial('COM3', 9600, timeout=2)  # open the serial connection
    time.sleep(3)  # opening the port resets the UNO; wait for it to boot
    # while True:
    if obj_name == 'corn':
        val = ser.write('0'.encode('utf-8'))
        print('forward')
        # time.sleep(3)
        val2 = ser.readline().decode('utf-8')  # reply printed by the Arduino
        print(val2)
    if obj_name == 'soybean':
        val = ser.write('1'.encode('utf-8'))
        print('reverse')
        # time.sleep(3)
        val2 = ser.readline().decode('utf-8')
        print(val2)
    if obj_name == '':
        val = ser.write('2'.encode('utf-8'))
        print('home position')
        # time.sleep(3)
        val2 = ser.readline().decode('utf-8')
        print(val2)
    ser.close()  # release COM3 so the next call can reopen it
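The function above reopens COM3 and sleeps for 3 s on every call. One possible variation, sketched here under assumptions, is to open the port once and reuse it. `ServoLink`, `open_port` and `boot_delay` are illustrative names that do not appear in the original project; the port object only needs `write`, `readline` and `close`, which `serial.Serial` provides.

```python
import time


class ServoLink:
    """Open the serial port lazily, once, and reuse it for every command."""

    def __init__(self, open_port, boot_delay=3.0):
        # open_port: zero-argument callable returning a port-like object
        # (hypothetical hook so the class can be tried without hardware).
        self._open_port = open_port
        self._boot_delay = boot_delay
        self._ser = None

    def send(self, command: bytes) -> str:
        """Write one command and return the board's one-line reply as text."""
        if self._ser is None:
            self._ser = self._open_port()
            time.sleep(self._boot_delay)  # the boot wait is paid only on the first call
        self._ser.write(command)
        return self._ser.readline().decode("utf-8", errors="replace").strip()

    def close(self):
        """Close the port if it was opened."""
        if self._ser is not None:
            self._ser.close()
            self._ser = None
```

With pyserial installed, this could be created as `ServoLink(lambda: serial.Serial('COM3', 9600, timeout=2))` and `send(b'0')` called for each detection.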

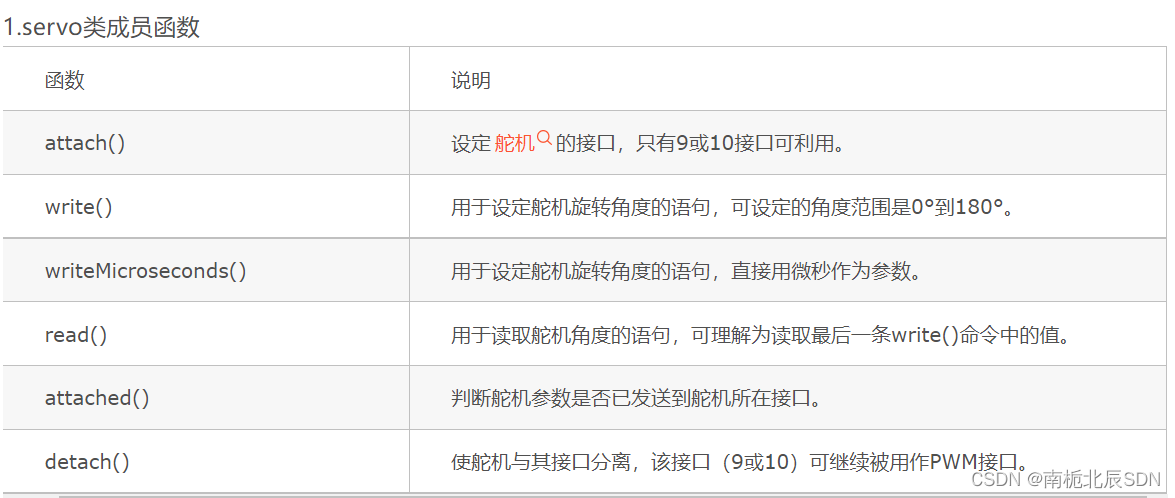

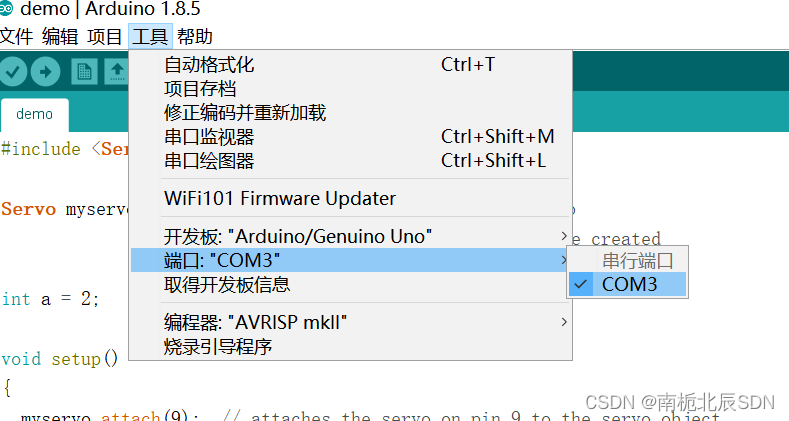

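4. Arduino control code and walkthrough

4.1 Connect the Arduino to the PC and check the port

1. Download and install the CH340 serial driver.

2. Download Arduino IDE 1.8.5. My board shows up on serial port 'COM3'.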
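The port can also be looked up from Python instead of the IDE. The sketch below uses pyserial's `serial.tools.list_ports.comports()`; the helper names `pick_port` and `available_ports` are made up here, and matching on the text "CH340" in the port description is an assumption that depends on the driver and operating system.

```python
def pick_port(ports, keyword="CH340"):
    """Return the device name of the first port whose description contains keyword.

    ports: iterable of (device, description) pairs. Returns None if nothing matches.
    """
    for device, description in ports:
        if keyword.lower() in description.lower():
            return device
    return None


def available_ports():
    """List (device, description) pairs reported by pyserial (needs `pip install pyserial`)."""
    from serial.tools import list_ports  # imported here so pick_port works without pyserial
    return [(p.device, p.description) for p in list_ports.comports()]
```

Calling `pick_port(available_ports())` with the board plugged in would then give the name to pass to `serial.Serial`, or `None` if no matching adapter is found.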

4.2 Arduino receives messages from Python and drives the servo

Arduino sketch that receives the Python messages and drives the servo:

#include <Servo.h>

Servo myservo;  // servo object used to drive the SG90

int a = 2;  // last command received: 0 = corn, 1 = soybean, 2 = home

void setup()
{
  myservo.attach(9);  // servo signal wire on pin 9
  Serial.begin(9600);
  myservo.write(90);  // start at the centre position
}

void loop()
{
  if (Serial.available()) {
    a = Serial.parseInt();  // read the command digit sent by Python
    if (a == 0) {
      Serial.println("Received signal 0 from Python");
      myservo.write(0);   // swing to 0 degrees
      delay(1500);        // wait 1.5 s for the baffle to finish the push
      myservo.write(90);  // return to centre
    }
    if (a == 1) {
      Serial.println("Received signal 1 from Python");
      myservo.write(180);  // swing to 180 degrees
      delay(1500);         // wait 1.5 s for the baffle to finish the push
      myservo.write(90);   // return to centre
    }
    if (a == 2) {
      Serial.println("Received signal 2 from Python");
      myservo.write(90);  // stay at (or return to) centre
      delay(50);          // short 50 ms pause
    }
  }
}

5. Results

Video link:

https://download.csdn.net/download/qq_46173016/87727084

Seed sorting based on YOLOv5 and Arduino

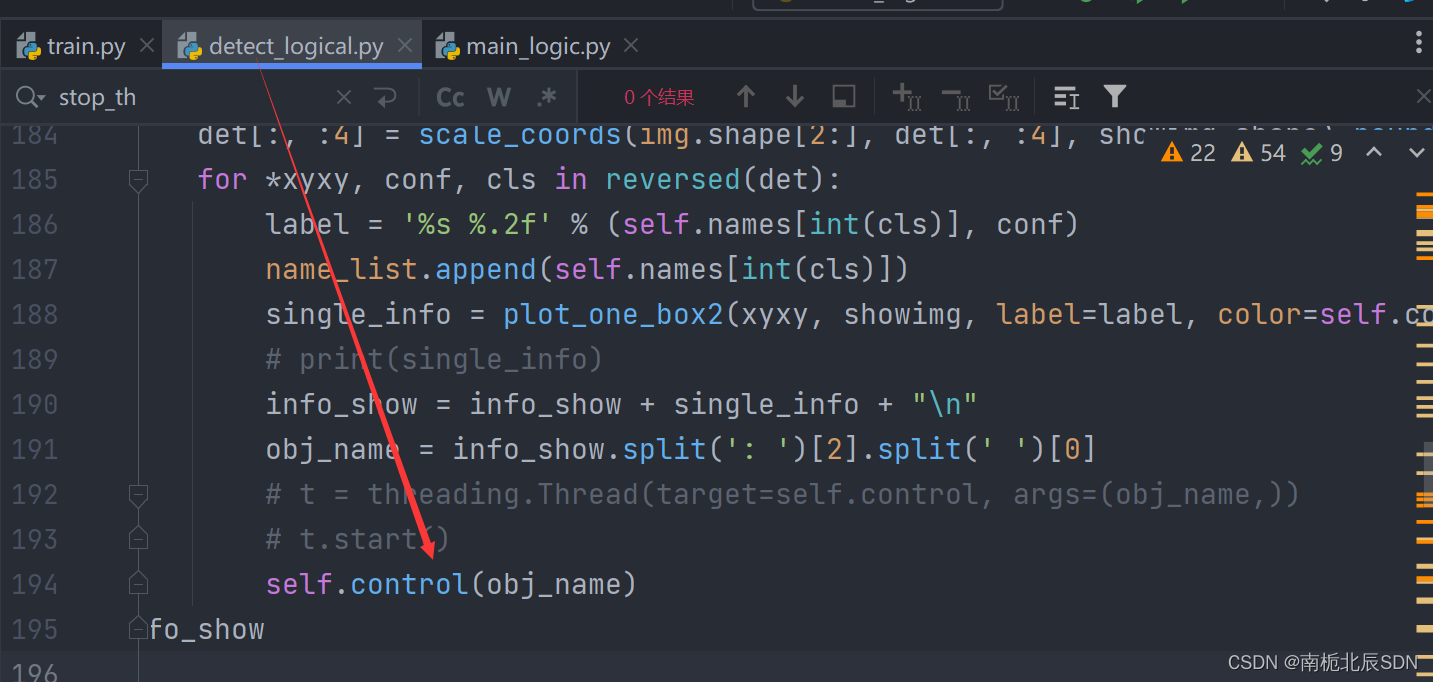

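6. Ideas for improvement

Current problem

The communication function is called at line 195 of the .py file shown in the figure, and this causes a problem. YOLOv5 detection takes on the order of milliseconds, whereas the microcontroller needs about 2 s from receiving a command to finishing the movement. Because the servo action runs inside the YOLO detection loop, this code forces the detection interval up to roughly 2 s, and the run turns into: YOLO detection, send signal, servo action, start the next YOLO detection.

Proposed improvement

Move the time-consuming steps (opening the connection, sending the signal, waiting for the servo) into a separate thread. The detection display then stays smooth, and the stuttering visible in the video goes away.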
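A minimal sketch of that idea, assuming a single long-lived worker thread fed through a queue. `ServoWorker` and its `send` callback are hypothetical names; in the real application `send` would wrap the serial write and read. A one-slot queue is used so that commands do not pile up while the servo is still moving, which is a design choice of this sketch and not something the original code does.

```python
import queue
import threading


class ServoWorker:
    """Run slow serial/servo commands on a background thread."""

    def __init__(self, send):
        # send: callable taking one command (e.g. b"0"); assumed to block until the servo is done.
        self._send = send
        self._jobs = queue.Queue(maxsize=1)  # at most one command may wait
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def submit(self, command) -> bool:
        """Queue a command without blocking; return False if one is already waiting."""
        try:
            self._jobs.put_nowait(command)
            return True
        except queue.Full:
            return False

    def _run(self):
        while True:
            command = self._jobs.get()
            if command is None:  # sentinel: stop the worker
                break
            self._send(command)

    def stop(self):
        """Ask the worker to exit after the pending command and wait for it."""
        self._jobs.put(None)
        self._thread.join()
```

In `detect`, the blocking `self.control(obj_name)` call would then be replaced by something like `self.worker.submit(obj_name)`, so the timer callback returns right away. Compared with starting a new thread per frame, a single worker also avoids several threads trying to open COM3 at the same time.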
