SVM in scikit-learn

import numpy as np

import matplotlib.pyplot as plt


A reusable helper function for plotting decision boundaries

def plot_decision_boundary(model, axis):
    """Plot the (possibly irregular) decision boundary of a fitted classifier.

    axis is [x_min, x_max, y_min, y_max]; the grid uses 100 points per unit.
    """
    from matplotlib.colors import ListedColormap

    # Build a dense grid that covers the plotting range
    x0, x1 = np.meshgrid(
        np.linspace(axis[0], axis[1], int((axis[1] - axis[0]) * 100)),
        np.linspace(axis[2], axis[3], int((axis[3] - axis[2]) * 100)),
    )
    # Predict the class of every grid point
    X_new = np.c_[x0.ravel(), x1.ravel()]
    y_predict = model.predict(X_new)
    zz = y_predict.reshape(x0.shape)

    # Fill each predicted region with its own color
    custom_cmap = ListedColormap(['#EF9A9A', '#FFF59D', '#90CAF9'])
    plt.contourf(x0, x1, zz, cmap=custom_cmap)

def plot_svc_decision_boundary(model, axis):
    """Plot the decision boundary of a linear SVM together with its two margin lines."""
    plot_decision_boundary(model, axis)

    w = model.coef_[0]
    b = model.intercept_[0]

    # Draw the margin lines.
    # Decision boundary: w0 * x0 + w1 * x1 + b = 0 -> x1 = -w0 * x0 / w1 - b / w1
    plot_x = np.linspace(axis[0], axis[1], 200)
    # Margin on the +1 side: w0 * x0 + w1 * x1 + b = 1 -> x1 = -w0 * x0 / w1 - b / w1 + 1 / w1
    up_y = -w[0] / w[1] * plot_x - b / w[1] + 1 / w[1]
    # Margin on the -1 side: w0 * x0 + w1 * x1 + b = -1 -> x1 = -w0 * x0 / w1 - b / w1 - 1 / w1
    down_y = -w[0] / w[1] * plot_x - b / w[1] - 1 / w[1]

    # Keep only the points that fall inside the plotting range
    up_index = (up_y >= axis[2]) & (up_y <= axis[3])
    down_index = (down_y >= axis[2]) & (down_y <= axis[3])
    plt.plot(plot_x[up_index], up_y[up_index], color='black')
    plt.plot(plot_x[down_index], down_y[down_index], color='black')
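The margin lines drawn by this function follow from the boundary equation given in its comments. Writing the weights as $w = (w_0, w_1)$, the boundary and the two margins are the lines $w_0 x_0 + w_1 x_1 + b = k$ for $k = 0, +1, -1$. Solving for $x_1$, under the assumption that $w_1 \neq 0$, gives:

$$
x_1 = -\frac{w_0}{w_1}\,x_0 - \frac{b}{w_1} + \frac{k}{w_1}, \qquad k \in \{0, +1, -1\}
$$

The perpendicular distance between the two margin lines is $2 / \lVert w \rVert$, so a smaller weight vector corresponds to a wider margin.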

from sklearn import datasets

iris = datasets.load_iris()

X = iris.data

y = iris.target

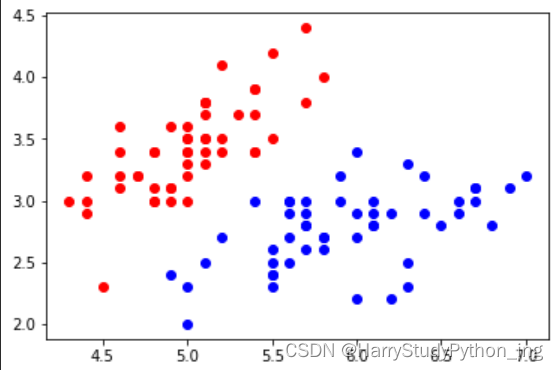

# For easy visualization, keep only the first 2 features;

# handle only binary classification for now, so keep only 2 classes

X = X[y<2, :2]

y = y[y<2]

plt.scatter(X[y==0,0], X[y==0,1], color='red')

plt.scatter(X[y==1,0], X[y==1,1], color='blue')

plt.show()

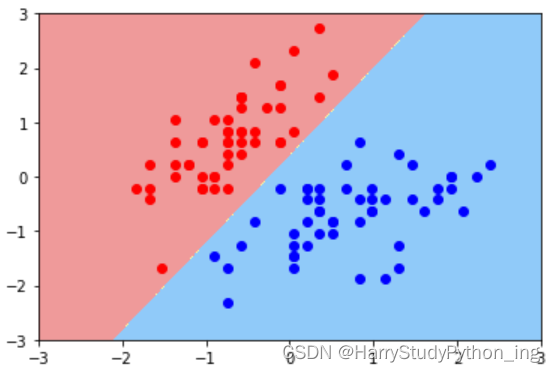

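The data must be standardized before training an SVM: the margin is measured as a distance, so a feature on a larger scale would otherwise dominate it.

We only want to visualize the classification result and do not need to predict unseen data, so we do not split the data into a training set and a test set.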
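As a small illustration of what standardization does, the sketch below applies StandardScaler to a made-up two-feature array (the values in `X_toy` are hypothetical, not taken from iris) and compares the result with the per-column formula (x - mean) / std.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Hypothetical toy data: two features on very different scales
X_toy = np.array([[1.0, 100.0],
                  [2.0, 300.0],
                  [3.0, 500.0]])

scaler = StandardScaler()
X_toy_scaled = scaler.fit_transform(X_toy)

# The same transformation written out by hand, column by column
manual = (X_toy - X_toy.mean(axis=0)) / X_toy.std(axis=0)

print(X_toy_scaled.mean(axis=0))  # approximately 0 for each column
print(X_toy_scaled.std(axis=0))   # approximately 1 for each column
```

After scaling, both columns contribute on the same scale, which is what a distance-based margin needs.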

from sklearn.preprocessing import StandardScaler

standard_scaler = StandardScaler()

standard_scaler.fit(X)

X_standard = standard_scaler.transform(X)

from sklearn.svm import LinearSVC

# The larger C is, the closer the model gets to a hard margin

svc = LinearSVC(C=1e9)

svc.fit(X_standard, y)

Plot the decision boundary (C=1e9)

plot_decision_boundary(svc, axis=[-3,3,-3,3])

plt.scatter(X_standard[y==0,0], X_standard[y==0,1], color='red')

plt.scatter(X_standard[y==1,0], X_standard[y==1,1], color='blue')

plt.show()

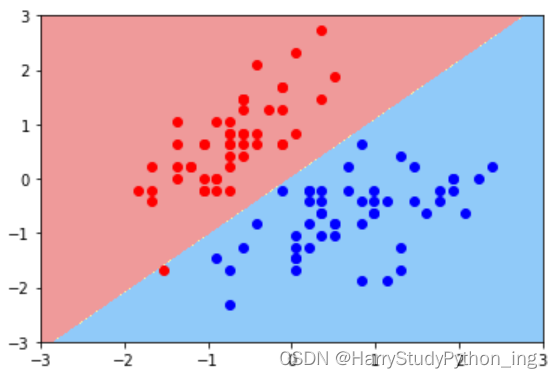

Plot the decision boundary (C=0.01)

svc_2 = LinearSVC(C=0.01)

svc_2.fit(X_standard, y)

plot_decision_boundary(svc_2, axis=[-3,3,-3,3])

plt.scatter(X_standard[y==0,0], X_standard[y==0,1], color='red')

plt.scatter(X_standard[y==1,0], X_standard[y==1,1], color='blue')

plt.show()

A linear SVM has coefficients too

svc.coef_

svc.intercept_

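Both the coefficients and the intercept are arrays because scikit-learn already handles multi-class classification for SVMs: each decision boundary (a line, plane, or hyperplane) contributes one row to coef_ and one element to intercept_. This binary problem has a single boundary, so coef_ has shape (1, 2) and intercept_ has shape (1,).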

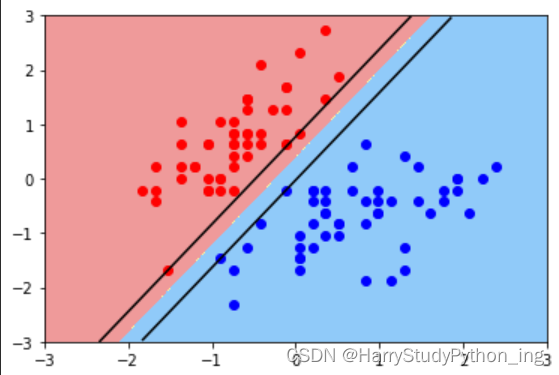

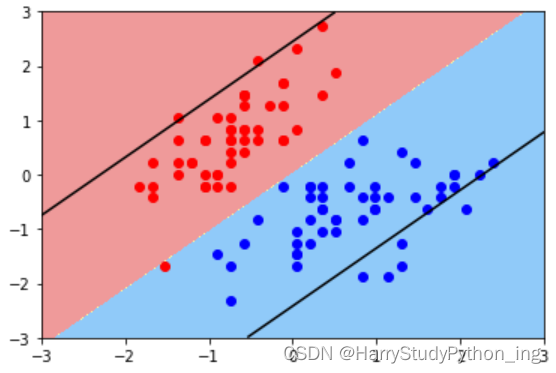
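To make the shapes concrete, here is a minimal sketch that fits LinearSVC once on two iris classes and once on all three, then prints the shapes of coef_ and intercept_. The names `X_all`, `svc_binary`, and `svc_multi` are introduced only for this illustration, and C=1.0 is an arbitrary choice.

```python
from sklearn import datasets
from sklearn.preprocessing import StandardScaler
from sklearn.svm import LinearSVC

iris = datasets.load_iris()
X_all = StandardScaler().fit_transform(iris.data[:, :2])
y_all = iris.target

# Binary problem (classes 0 and 1): a single boundary
binary_mask = y_all < 2
svc_binary = LinearSVC(C=1.0, max_iter=10000)
svc_binary.fit(X_all[binary_mask], y_all[binary_mask])

# Three-class problem: LinearSVC defaults to one-vs-rest,
# which learns one boundary per class
svc_multi = LinearSVC(C=1.0, max_iter=10000)
svc_multi.fit(X_all, y_all)

print(svc_binary.coef_.shape, svc_binary.intercept_.shape)
print(svc_multi.coef_.shape, svc_multi.intercept_.shape)
```

With two features, the binary model stores one row of two weights, while the three-class model stores three rows, one per one-vs-rest boundary.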

Adding the margin lines to the plot

Plot the margins of the hard-margin model

plot_svc_decision_boundary(svc, axis=[-3,3,-3,3])

plt.scatter(X_standard[y==0,0], X_standard[y==0,1], color='red')

plt.scatter(X_standard[y==1,0], X_standard[y==1,1], color='blue')

plt.show()

Plot the margins of the soft-margin model

plot_svc_decision_boundary(svc_2, axis=[-3,3,-3,3])

plt.scatter(X_standard[y==0,0], X_standard[y==0,1], color='red')

plt.scatter(X_standard[y==1,0], X_standard[y==1,1], color='blue')

plt.show()
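The difference between the two plots can also be checked numerically. The sketch below refits both models on the same standardized two-class data and compares the margin width, computed as 2 / ||w||. The helper `margin_width` and the names `X_demo`, `y_demo`, `hard`, and `soft` are hypothetical and introduced only for this example; `max_iter` and `random_state` are set just to make the run more stable.

```python
import numpy as np
from sklearn import datasets
from sklearn.preprocessing import StandardScaler
from sklearn.svm import LinearSVC

iris = datasets.load_iris()
mask = iris.target < 2
X_demo = StandardScaler().fit_transform(iris.data[mask, :2])
y_demo = iris.target[mask]

def margin_width(model):
    """Distance between the lines w.x + b = +1 and w.x + b = -1."""
    return 2.0 / np.linalg.norm(model.coef_[0])

# Large C approximates a hard margin; small C gives a soft margin
hard = LinearSVC(C=1e9, max_iter=10000, random_state=0).fit(X_demo, y_demo)
soft = LinearSVC(C=0.01, max_iter=10000, random_state=0).fit(X_demo, y_demo)

print("hard margin width:", margin_width(hard))
print("soft margin width:", margin_width(soft))
```

The small-C model should report the wider margin, since it tolerates points inside the margin in exchange for smaller weights. With C=1e9 the solver may emit a convergence warning, which does not affect the comparison.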
