Study reference:

10 Multilayer Perceptron + Code Implementation - Dive into Deep Learning v2 (动手学深度学习v2), bilibili

Prerequisite: typical activation functions

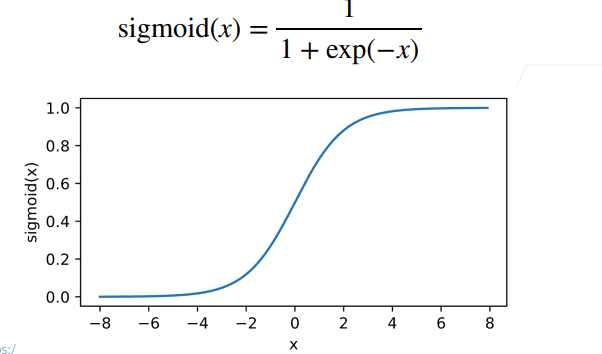

A: Sigmoid activation function

Maps the input into the interval (0, 1); it is a soft (smooth) version of the step function.

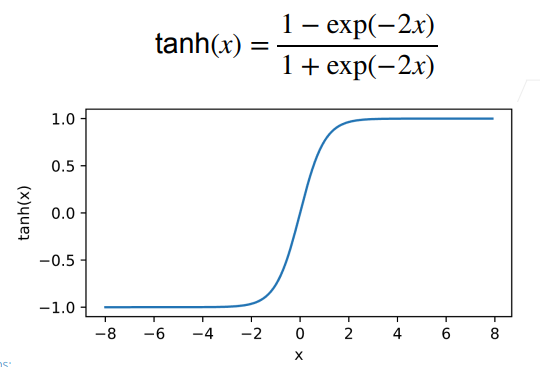

B: Tanh activation function

Maps the input into the interval (-1, 1).

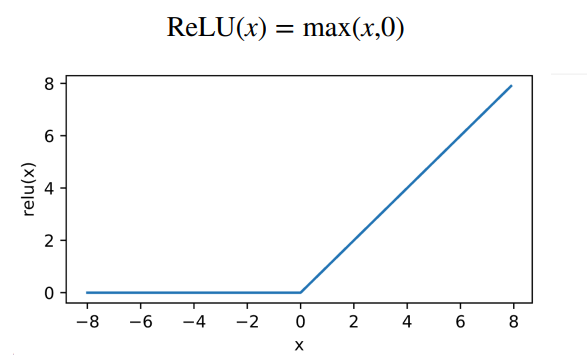

C: ReLU activation function

ReLU stands for rectified linear unit: ReLU(x) = max(x, 0).
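The three activations above can be compared numerically. The following is a minimal sketch in plain Python using only the `math` module; the function names `sigmoid`, `tanh`, and `relu` are defined here for illustration and are not the PyTorch implementations.

```python
import math

def sigmoid(x: float) -> float:
    """Logistic sigmoid: squashes any real x into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def tanh(x: float) -> float:
    """Hyperbolic tangent: squashes any real x into (-1, 1)."""
    return math.tanh(x)

def relu(x: float) -> float:
    """Rectified linear unit: max(x, 0)."""
    return max(x, 0.0)

# Evaluate all three on a few sample inputs.
xs = [-4.0, -1.0, 0.0, 1.0, 4.0]
for x in xs:
    print(f"x={x:+.1f}  sigmoid={sigmoid(x):.4f}  tanh={tanh(x):+.4f}  relu={relu(x):.1f}")
```

Sigmoid and tanh saturate for large |x|, while ReLU passes positive inputs through unchanged and zeroes out negative ones.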

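1. Implementing a multilayer perceptron from scratch

import torch

from torch import nn

from d2l import torch as d2l

The d2l helper functions used in this section:

d2l.load_data_fashion_mnist(batch_size, resize = None):

# Reads the Fashion-MNIST dataset and loads it into memory

# Applies a list of transforms to the data, including ToTensor and an optional resize

# Wraps the data with the built-in loader data.DataLoader()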

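d2l.get_dataloader_workers():

# Sets the number of worker processes used to read the data

d2l.accuracy(y_hat, y):

# Counts the correctly predicted samples

d2l.evaluate_accuracy(net, data_iter):

# Computes the accuracy of the given model on the given dataset

# Correctly predicted samples / total samples, accumulated over all batches

d2l.train_epoch_ch3(net, train_iter, loss, updater):

# Updates the model parameters over one epoch

# Returns the training loss and training accuracy for that epoch

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, updater):

# Runs all epochs of training and updates the weights

# Plots train_loss, train_acc, and test_acc for each epoch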
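To make the two accuracy helpers concrete, here is a minimal sketch of the same idea. It is an illustration written for these notes, not the d2l source: the names `count_correct` and `dataset_accuracy` are hypothetical, and the data iterator is assumed to yield `(X, y)` batches.

```python
import torch

def count_correct(y_hat: torch.Tensor, y: torch.Tensor) -> float:
    """Number of correct predictions in one batch.

    y_hat holds one row of class scores per sample; y holds integer labels.
    """
    if y_hat.dim() > 1 and y_hat.shape[1] > 1:
        y_hat = y_hat.argmax(dim=1)  # highest score gives the predicted class
    return float((y_hat.type(y.dtype) == y).sum())

def dataset_accuracy(net, data_iter) -> float:
    """Correct predictions divided by total samples, summed over all batches."""
    correct, total = 0.0, 0
    with torch.no_grad():  # evaluation only, no gradients needed
        for X, y in data_iter:
            correct += count_correct(net(X), y)
            total += y.numel()
    return correct / total

# Tiny hand-made example: 3 samples, 2 classes.
scores = torch.tensor([[0.1, 0.9], [0.8, 0.2], [0.3, 0.7]])
labels = torch.tensor([1, 0, 0])
batch_correct = count_correct(scores, labels)
# An identity function stands in for the model, so the scores are used as-is.
overall = dataset_accuracy(lambda X: X, [(scores, labels)])
print(batch_correct, overall)
```

The first two samples are classified correctly and the third is not, so the batch count is 2 and the accuracy is 2/3.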

1.1 Creating the training and test/validation data iterators

batch_size = 256

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

1.2 Defining the MLP structure and initializing the model parameters

num_inputs, num_outputs, num_hiddens = 28 * 28, 10, 256

# num_inputs is the input feature dimension; num_outputs is the number of output classes

# num_hiddens is the size of the hidden layer (the feature dimension of the hidden layer)

W1 = nn.Parameter(
    torch.randn(num_inputs, num_hiddens, requires_grad = True) * 0.01)

b1 = nn.Parameter(torch.zeros(num_hiddens), requires_grad = True)

W2 = nn.Parameter(
    torch.randn(num_hiddens, num_outputs, requires_grad = True) * 0.01)

b2 = nn.Parameter(torch.zeros(num_outputs), requires_grad = True)

# Parameter list, handed to the optimizer for updates

params = [W1, b1, W2, b2]
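As a sanity check on the shapes chosen above, the sketch below rebuilds the four parameters and counts their entries. The variable names mirror this section; the seed and the `total` variable are added here only for illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)  # illustrative seed, not part of the original notes
num_inputs, num_outputs, num_hiddens = 28 * 28, 10, 256

W1 = nn.Parameter(torch.randn(num_inputs, num_hiddens) * 0.01)
b1 = nn.Parameter(torch.zeros(num_hiddens))
W2 = nn.Parameter(torch.randn(num_hiddens, num_outputs) * 0.01)
b2 = nn.Parameter(torch.zeros(num_outputs))
params = [W1, b1, W2, b2]

for name, p in zip(["W1", "b1", "W2", "b2"], params):
    print(name, tuple(p.shape), "requires_grad =", p.requires_grad)

# 784*256 + 256 + 256*10 + 10 learnable scalars in total
total = sum(p.numel() for p in params)
print("total parameters:", total)
```

Almost all of the parameters sit in `W1`, because the flattened image has 784 input features.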

1.3 Implementing the ReLU function

def relu(X):

    # An all-zero tensor with the same shape as X
    a = torch.zeros_like(X)

    # Element-wise maximum of X and 0
    return torch.max(X, a)

1.4 Building the model

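def net(X):

    # Flatten each 28 x 28 image into a vector of length num_inputs
    X = X.reshape(-1, num_inputs)

    # Hidden layer: affine transform followed by ReLU
    H = relu(X @ W1 + b1)

    # Output layer: raw class scores, with no softmax applied here
    return (H @ W2 + b2)

1.5 Training the multilayer perceptron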

num_epochs, lr = 10, 0.1

# Cross-entropy loss; it applies softmax internally, so net returns raw scores
loss = nn.CrossEntropyLoss()

updater = torch.optim.SGD(params, lr = lr)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, updater)
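What a single update inside the training loop does can be sketched without the dataset. The example below is an assumption-laden illustration: it uses a random tensor as a stand-in for one Fashion-MNIST batch, `torch.relu` in place of the hand-written `relu`, and an illustrative seed. With weights scaled by 0.01 the class scores start near zero, so the first cross-entropy value should be close to ln(10) ≈ 2.30, the loss of a uniform guess over 10 classes.

```python
import math
import torch
from torch import nn

torch.manual_seed(0)  # illustrative seed
num_inputs, num_outputs, num_hiddens = 28 * 28, 10, 256
W1 = nn.Parameter(torch.randn(num_inputs, num_hiddens) * 0.01)
b1 = nn.Parameter(torch.zeros(num_hiddens))
W2 = nn.Parameter(torch.randn(num_hiddens, num_outputs) * 0.01)
b2 = nn.Parameter(torch.zeros(num_outputs))
params = [W1, b1, W2, b2]

def net(X):
    X = X.reshape(-1, num_inputs)
    H = torch.relu(X @ W1 + b1)  # same result as the hand-written relu
    return H @ W2 + b2

# Synthetic stand-in for one batch (random pixels and labels, not real data).
X = torch.rand(256, 1, 28, 28)
y = torch.randint(0, num_outputs, (256,))

loss = nn.CrossEntropyLoss()
updater = torch.optim.SGD(params, lr=0.1)

logits = net(X)                   # forward pass
initial_loss = loss(logits, y)    # mean loss over the batch
W2_before = W2.detach().clone()

updater.zero_grad()               # clear old gradients
initial_loss.backward()           # backpropagate
updater.step()                    # SGD update of all four parameters

print(f"initial loss {initial_loss.item():.4f} vs ln(10) = {math.log(10):.4f}")
```

If the first reported loss on real data is far from ln(10), the initialization scale or the loss setup is worth rechecking.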

1.6 Applying the model to the test dataset

def predict_ch3(net, test_iter, n = 6):

    # Take only the first batch from the test iterator
    for X, y in test_iter:
        break

    trues = d2l.get_fashion_mnist_labels(y)

    preds = d2l.get_fashion_mnist_labels(net(X).argmax(axis = 1))

    # Title for each image: true label on the first line, prediction on the second
    titles = [true + '\n' + pred for true, pred in zip(trues, preds)]

    d2l.show_images(X[0:n].reshape((n, 28, 28)), 1, n, titles = titles[0: n])
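The label lookup inside `predict_ch3` amounts to an argmax followed by an index-to-name table. Here is a minimal sketch in plain Python, assuming the ten class names in Fashion-MNIST's standard label order; the helper names `argmax` and `labels_for` and the score values are hypothetical, not d2l functions or real model output.

```python
# Class names in Fashion-MNIST label order (index 0 to 9).
TEXT_LABELS = ["t-shirt", "trouser", "pullover", "dress", "coat",
               "sandal", "shirt", "sneaker", "bag", "ankle boot"]

def argmax(scores):
    """Index of the largest score in a list."""
    return max(range(len(scores)), key=lambda i: scores[i])

def labels_for(indices):
    """Map integer class indices to their text labels."""
    return [TEXT_LABELS[int(i)] for i in indices]

# Made-up scores for two samples.
batch_scores = [
    [0.0, 0.1, 0.0, 0.2, 0.0, 0.0, 0.1, 0.3, 0.0, 2.5],  # peaks at class 9
    [0.2, 3.1, 0.0, 0.4, 0.0, 0.0, 0.1, 0.0, 0.3, 0.0],  # peaks at class 1
]
trues = labels_for([9, 3])  # pretend ground truth: ankle boot, dress
preds = labels_for([argmax(row) for row in batch_scores])
titles = [true + "\n" + pred for true, pred in zip(trues, preds)]
print(titles)
```

The second title pairs "dress" with "trouser", which is how a misclassified image shows up in the plotted grid.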

predict_ch3(net, test_iter)

2. Concise implementation of the multilayer perceptron

import torch

from torch import nn

from d2l import torch as d2l

2.1 Defining the model and initializing the parameters

net = nn.Sequential(
    nn.Flatten(), nn.Linear(28*28, 256), nn.ReLU(), nn.Linear(256, 10))

def init_weights(m):

    # Re-initialize only the weights of fully connected layers
    if type(m) == nn.Linear:
        nn.init.normal_(m.weight, std = 0.01)
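`nn.Module.apply` calls the given function on every submodule and then on the container itself, which is why `init_weights` checks the module type. A minimal sketch of that behaviour follows; the `visited` list, the `stds` list, and the seed are added here for illustration only.

```python
import torch
from torch import nn

torch.manual_seed(0)  # illustrative seed
net = nn.Sequential(
    nn.Flatten(), nn.Linear(28 * 28, 256), nn.ReLU(), nn.Linear(256, 10))

visited = []  # records which module types apply() passes to the function

def init_weights(m):
    visited.append(type(m).__name__)
    if type(m) == nn.Linear:
        nn.init.normal_(m.weight, std=0.01)

net.apply(init_weights)
print(visited)

# Empirical standard deviation of each Linear layer's weights after init.
stds = [layer.weight.std().item() for layer in net if isinstance(layer, nn.Linear)]
print(stds)
```

Only the two `nn.Linear` weights are redrawn; the biases are not touched by `init_weights` and keep PyTorch's default initialization.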

net.apply(init_weights)

2.2 Training

batch_size, lr, num_epochs = 256, 0.1, 10

loss = nn.CrossEntropyLoss()

trainer = torch.optim.SGD(net.parameters(), lr = lr)

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)
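For readers without d2l installed, the loop that the training helper runs can be approximated as below. This is a sketch under stated assumptions, not the d2l implementation: `train_epoch_sketch` is a hypothetical name, the batches are random tensors standing in for Fashion-MNIST, and no plotting or test-set evaluation is done.

```python
import torch
from torch import nn

torch.manual_seed(0)  # illustrative seed
net = nn.Sequential(
    nn.Flatten(), nn.Linear(28 * 28, 256), nn.ReLU(), nn.Linear(256, 10))
loss = nn.CrossEntropyLoss()
trainer = torch.optim.SGD(net.parameters(), lr=0.1)

# Synthetic stand-in for a data iterator: 4 batches of random "images" and labels.
batches = [(torch.rand(64, 1, 28, 28), torch.randint(0, 10, (64,)))
           for _ in range(4)]

def train_epoch_sketch(net, data_iter, loss, trainer):
    """One pass over data_iter; returns (mean loss, accuracy) on the training data."""
    net.train()
    loss_sum, correct, n = 0.0, 0, 0
    for X, y in data_iter:
        y_hat = net(X)
        l = loss(y_hat, y)        # mean loss over the batch
        trainer.zero_grad()
        l.backward()
        trainer.step()
        loss_sum += l.item() * y.numel()
        correct += (y_hat.argmax(dim=1) == y).sum().item()
        n += y.numel()
    return loss_sum / n, correct / n

history = [train_epoch_sketch(net, batches, loss, trainer) for _ in range(3)]
for epoch, (train_loss, train_acc) in enumerate(history, start=1):
    print(f"epoch {epoch}: loss {train_loss:.4f}, acc {train_acc:.3f}")
```

Because the labels here are random, the numbers carry no meaning beyond showing that the loop runs; on the real data iterators the same structure yields the per-epoch loss and accuracy that get plotted.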
