Reference article: PyTorch Series (4): Cats vs. Dogs 1 - Training and Testing on Your Own Dataset (Pytorch系列(四):猫狗大战1-训练和测试自己的数据集)

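Dataset:

This post uses the dataset from Kaggle's official Cats vs. Dogs competition, which was released by Microsoft Research. It contains 25,000 images of cats and dogs: 12,500 of cats and 12,500 of dogs. The images vary in size, as well as in color, viewing angle, and lighting.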


1.DataLoader.py

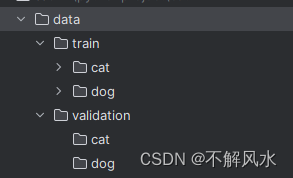

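Place the downloaded dataset in the Data folder. The code below expects a train folder and a validation folder, each containing a cat subfolder and a dog subfolder.

In the training set, the cat and dog folders hold 1,000 cat and dog images, sorted by class.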
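The Kaggle archive ships its training images in one flat folder, with the class encoded in the file name (for example `cat.0.jpg`). A minimal sketch of sorting such files into class subfolders follows. The helper name `sort_into_class_folders` and the folder names in the usage note are assumptions for illustration, not part of the original post.

```python
import shutil
from pathlib import Path


def sort_into_class_folders(src_dir, dst_dir, classes=("cat", "dog")):
    """Copy files named like 'cat.0.jpg' into dst_dir/<class>/ and return per-class counts."""
    src, dst = Path(src_dir), Path(dst_dir)
    counts = {name: 0 for name in classes}
    for img_path in sorted(src.glob("*.jpg")):
        prefix = img_path.name.split(".")[0]  # 'cat.0.jpg' -> 'cat'
        if prefix not in counts:
            continue  # skip files that do not follow the naming pattern
        target_dir = dst / prefix
        target_dir.mkdir(parents=True, exist_ok=True)
        shutil.copy2(img_path, target_dir / img_path.name)
        counts[prefix] += 1
    return counts
```

A call such as `sort_into_class_folders("raw/train", "Data/train")` (both paths hypothetical) would produce `Data/train/cat` and `Data/train/dog`. The files are copied rather than moved, so the original download stays intact.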

The code for DataLoader.py is as follows:

    import glob
    import os
    import random

    import matplotlib.pyplot as plt
    import numpy as np
    import torch.utils.data
    from PIL import Image
    from torchvision import transforms


    # Dataset that reads images from <img_dir>/dog and <img_dir>/cat
    class DogCatDataSet(torch.utils.data.Dataset):
        def __init__(self, img_dir, transform=None):
            self.transform = transform
            dog_dir = os.path.join(img_dir, "dog")
            cat_dir = os.path.join(img_dir, "cat")
            imgsLib = []
            imgsLib.extend(glob.glob(os.path.join(dog_dir, "*.jpg")))
            imgsLib.extend(glob.glob(os.path.join(cat_dir, "*.jpg")))
            random.shuffle(imgsLib)  # shuffle the dataset
            self.imgsLib = imgsLib

        # Required so that a DataLoader can index the dataset
        def __getitem__(self, index):
            img_path = self.imgsLib[index]
            # Take the label from the parent folder name: dog -> 1, cat -> 0.
            # os.path handles whichever separator the OS uses.
            parent = os.path.basename(os.path.dirname(img_path))
            label = 1 if parent == "dog" else 0
            img = Image.open(img_path).convert("RGB")
            if self.transform is not None:
                img = self.transform(img)
            return img, label

        def __len__(self):
            return len(self.imgsLib)


    # Read one batch and display it
    if __name__ == "__main__":
        CLASSES = {0: "cat", 1: "dog"}
        img_dir = "D:/pythonproject/cat/data/train"
        data_transform = transforms.Compose([
            transforms.Resize(256),      # resize the shorter side to 256
            transforms.CenterCrop(224),  # center-crop to 224x224
            # Converts a PIL.Image, or a numpy.ndarray of shape (H, W, C), with
            # values in [0, 255] to a torch.FloatTensor of shape [C, H, W] with
            # values in [0, 1.0] (a division by 255)
            transforms.ToTensor(),
        ])
        dataSet = DogCatDataSet(img_dir=img_dir, transform=data_transform)
        dataLoader = torch.utils.data.DataLoader(dataSet, batch_size=8, shuffle=True, num_workers=4)
        image_batch, label_batch = next(iter(dataLoader))
        for i in range(image_batch.shape[0]):
            label = int(label_batch[i])   # tensor ==> int
            img = image_batch[i].numpy()  # tensor ==> numpy, shape [C, H, W]
            print(CLASSES[label])
            plt.imshow(np.transpose(img, [1, 2, 0]))  # [C, H, W] ==> [H, W, C]
            plt.show()

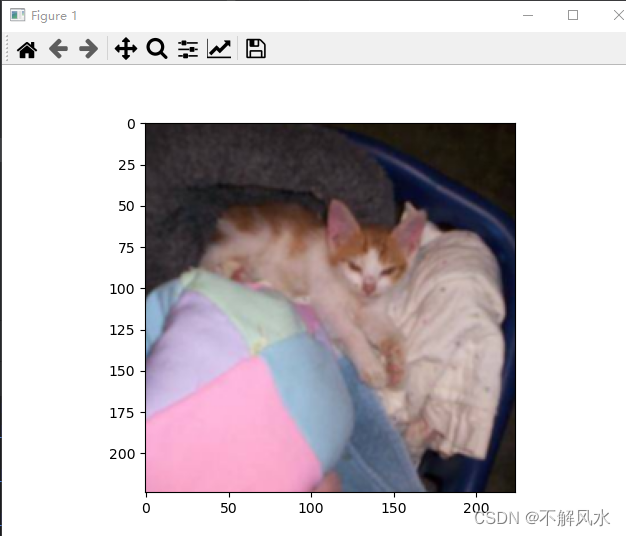

Running the script displays images from the dataset and prints the corresponding class name in the console.
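The dataset class assigns labels from the image path (dog is 1, cat is 0). As a minimal sketch, the helper below reads the label from the parent folder name, which stays correct even when some other part of the path happens to contain "dog". The name `label_from_path` and the example paths are hypothetical; `ntpath` is used only to simulate Windows-style paths on any OS.

```python
import ntpath
import os


def label_from_path(img_path, path_module=os.path):
    """Return 1 for images stored in a 'dog' folder, 0 otherwise."""
    parent = path_module.basename(path_module.dirname(img_path))
    return 1 if parent == "dog" else 0


# A Windows-style path whose project folder contains the substring "dog".
win_path = "D:\\my_dog_project\\train\\cat\\cat.0.jpg"

# Substring search on the last "/"-separated piece: with no "/" in the path,
# the whole string is searched and "my_dog_project" triggers a wrong label.
naive_label = 1 if "dog" in win_path.split("/")[-1] else 0

# Parent-folder lookup: only the folder that holds the image is inspected.
robust_label = label_from_path(win_path, ntpath)

print(naive_label, robust_label)
```

Checking the folder name rather than a substring also keeps the rule independent of how the image files themselves are named.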

2.train.py

With the dataset ready, training can begin.

    import numpy as np
    import torch
    import torch.nn as nn
    import torch.optim as optim
    from torchvision import transforms, models
    from DataLoader import DogCatDataSet

    # Configuration
    random_state = 1
    torch.manual_seed(random_state)  # fix the random seeds so results are repeatable
    torch.cuda.manual_seed(random_state)
    torch.cuda.manual_seed_all(random_state)
    np.random.seed(random_state)
    # random.seed(random_state)
    epochs = 30       # number of training epochs
    batch_size = 16   # batch size
    num_workers = 4   # number of data-loading worker processes
    use_gpu = torch.cuda.is_available()

    # Resize and crop each image to [224x224x3]; ToTensor scales pixel values to [0, 1]
    data_transform = transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
    ])
    train_dataset = DogCatDataSet(img_dir="D:/pythonproject/cat/data/train", transform=data_transform)
    train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True, num_workers=num_workers)
    test_dataset = DogCatDataSet(img_dir="D:/pythonproject/cat/data/validation", transform=data_transform)
    test_loader = torch.utils.data.DataLoader(test_dataset, batch_size=batch_size, shuffle=False, num_workers=num_workers)

    # Load the resnet18 model without pretrained weights
    net = models.resnet18(pretrained=False)
    num_ftrs = net.fc.in_features
    net.fc = nn.Linear(num_ftrs, 2)  # replace the fc layer of resnet18 with a 2-class output
    if use_gpu:
        net = net.cuda()
    print(net)  # prints the resnet18 structure with the new 2-class fc layer

    # Define the loss and the optimizer
    criterion = nn.CrossEntropyLoss()
    optimizer = optim.SGD(net.parameters(), lr=0.0001, momentum=0.9)

    # Training
    for epoch in range(epochs):
        net.train()  # switch back to training mode after each evaluation pass
        running_loss = 0.0
        train_correct = 0
        train_total = 0
        for i, data in enumerate(train_loader, 0):
            inputs, labels = data
            if use_gpu:
                inputs, labels = inputs.cuda(), labels.cuda()
            optimizer.zero_grad()
            outputs = net(inputs)
            _, train_predicted = torch.max(outputs, 1)
            train_correct += (train_predicted == labels).sum().item()
            loss = criterion(outputs, labels)
            loss.backward()
            optimizer.step()
            # loss is the batch mean, so weight it by the batch size
            running_loss += loss.item() * labels.size(0)
            print("epoch: ", epoch, " loss: ", loss.item())
            train_total += labels.size(0)
        print('train %d epoch loss: %.3f acc: %.3f ' % (
            epoch + 1, running_loss / train_total, 100 * train_correct / train_total))

        # Evaluation on the validation set
        correct = 0
        test_loss = 0.0
        test_total = 0
        net.eval()
        with torch.no_grad():  # no gradients are needed for evaluation
            for data in test_loader:
                images, labels = data
                if use_gpu:
                    images, labels = images.cuda(), labels.cuda()
                outputs = net(images)
                _, predicted = torch.max(outputs, 1)
                loss = criterion(outputs, labels)
                test_loss += loss.item() * labels.size(0)
                test_total += labels.size(0)
                correct += (predicted == labels).sum().item()
        print('test %d epoch loss: %.3f acc: %.3f ' % (epoch + 1, test_loss / test_total, 100 * correct / test_total))

    torch.save(net, "my_model3.pth")  # saves the whole model object, not only the weights
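`nn.CrossEntropyLoss` returns the mean loss over a batch by default, so an epoch-level loss is most meaningful as a sample-weighted average of those batch means. The sketch below illustrates the arithmetic in plain Python. The numbers are made up and `epoch_average` is a hypothetical helper, not part of the original script.

```python
def epoch_average(batch_means, batch_sizes):
    """Sample-weighted average of per-batch mean losses."""
    total = sum(mean * size for mean, size in zip(batch_means, batch_sizes))
    return total / sum(batch_sizes)


# Made-up values: two full batches of 16 and a final partial batch of 8.
batch_means = [0.70, 0.50, 0.30]
batch_sizes = [16, 16, 8]

weighted = epoch_average(batch_means, batch_sizes)

# Summing the batch means and dividing by the sample count mixes units
# (per-batch values over a per-sample count) and shrinks with the batch size.
naive = sum(batch_means) / sum(batch_sizes)

print("weighted: %.4f  naive: %.4f" % (weighted, naive))
```

Weighting by batch size also handles a smaller final batch correctly, which a fixed `batch_size` multiplier only approximates.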

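At first, running the training directly in PyCharm raised an error, and

num_workers=4 had to be changed to

num_workers=0

With that setting, however, training performed very poorly, so I trained on a server instead. I trained for 80 epochs on the server; accuracy had in fact already passed 98% shortly after epoch 50.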
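The post does not say which error PyCharm reported. One common cause on Windows is that `DataLoader` worker processes re-import the main script, so a script that uses `num_workers > 0` needs its top-level code behind an `if __name__ == "__main__":` guard. The sketch below shows that pattern under this assumption; `build_loader` and the random stand-in data are hypothetical and replace the real dataset only for illustration.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset


def build_loader(num_workers):
    """Build a small loader over random stand-in data (not real images)."""
    images = torch.rand(8, 3, 8, 8)
    labels = torch.randint(0, 2, (8,))
    return DataLoader(TensorDataset(images, labels), batch_size=4,
                      shuffle=True, num_workers=num_workers)


def main():
    # Worker processes are started here, inside the guarded entry point.
    loader = build_loader(num_workers=2)
    for images, labels in loader:
        print(images.shape, labels.shape)


if __name__ == "__main__":
    # On Windows, child processes import this module again; the guard keeps
    # them from re-running the training code while they start up.
    main()
```

With the guard in place, the same script can keep `num_workers=4` on both Windows and Linux, provided the error was indeed caused by process spawning.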

The trained model has been uploaded and attached to this blog post.

3.test.py

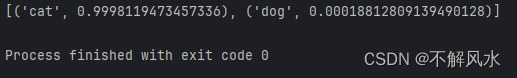

Next, any cat or dog image can be picked to test the model.

    from PIL import Image
    import torch
    from torchvision import transforms

    # Path of the saved model
    save_path = 'D:/pythonproject/cat/acc100.pth'

    # ------------------------ Preprocessing --------------------------- #
    # Same preprocessing as used during training
    preprocess_transform = transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        # transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ])
    class_names = ['cat', 'dog']
    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

    # ------------------------ Load the model and test --------------------------- #
    # map_location places the model on the available device, so a model saved
    # on a GPU can also be loaded on a CPU-only machine. Recent PyTorch releases
    # default to weights_only=True; a fully pickled model then needs weights_only=False.
    model = torch.load(save_path, map_location=device)
    model.eval()
    # print(model)
    image_PIL = Image.open('D:/pythonproject/cat/data/train/cat/cat.0.jpg').convert('RGB')
    image_tensor = preprocess_transform(image_PIL)
    # Add a batch dimension in place; equivalent to image_tensor = torch.unsqueeze(image_tensor, 0)
    image_tensor.unsqueeze_(0)
    # Without this line an error is raised when the model is on the GPU
    image_tensor = image_tensor.to(device)
    with torch.no_grad():
        out = model(image_tensor)
    # Sort the predictions from the highest score to the lowest
    _, indices = torch.sort(out, descending=True)
    # Convert the scores to probabilities in [0, 1]
    percentage = torch.nn.functional.softmax(out, dim=1)[0]
    print([(class_names[idx], percentage[idx].item()) for idx in indices[0][:5]])

The prediction is correct.
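The last step of the test script turns the two raw output scores into probabilities with softmax and lists the classes from most to least likely. A minimal sketch of that computation in plain Python follows; the scores are made up, not taken from the trained model.

```python
import math


def softmax(scores):
    """Convert raw scores to probabilities that sum to 1."""
    shift = max(scores)  # subtracting the maximum avoids overflow in exp
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class_names = ["cat", "dog"]
scores = [2.0, 0.0]  # made-up network outputs for one image

probs = softmax(scores)
# Pair each class with its probability and sort from highest to lowest.
ranked = sorted(zip(class_names, probs), key=lambda pair: pair[1], reverse=True)
print(ranked)
```

Because softmax preserves the order of the scores, sorting the raw outputs (as the script does) and sorting the probabilities give the same ranking.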
