I. Local deployment process:

1. Download the model files

https://huggingface.co/THUDM/chatglm2-6b/tree/main

GitHub - THUDM/ChatGLM2-6B: ChatGLM2-6B: An Open Bilingual Chat LLM
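If you would rather script the download than fetch the files through the browser, something like the following might work. This is only a sketch: it assumes the `huggingface_hub` package is installed (the original steps do not mention it), and the target folder `F:/glm2` is simply the path that shows up in the traceback later in this post, so change it to match your own layout.

```python
from pathlib import Path

REPO_ID = "THUDM/chatglm2-6b"   # repository from the Hugging Face URL above
LOCAL_DIR = Path("F:/glm2")     # assumption: the folder web_demo.py loads from


def download_model(repo_id: str = REPO_ID, local_dir: Path = LOCAL_DIR) -> str:
    """Download every file of the model repo into local_dir and return its path."""
    # Imported lazily so this file can be imported without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=repo_id, local_dir=str(local_dir))
```

Calling `download_model()` starts the transfer; the weight files are large, so expect it to take a while.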

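2. Local deployment:

Activate the virtual environment, change into the project folder (the one that contains requirements.txt), and install the required dependencies: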

pip install -r requirements.txt

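A problem shows up here: xtuner 0.1.21 requires transformers 4.36.0 or later (excluding 4.38.0, 4.38.1, and 4.38.2), which conflicts with the version that requirements.txt installs (4.30.2). pip reports: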

ERROR: pip's dependency resolver does not currently take into account all the packages that are installed. This behaviour is the source of the following dependency conflicts. xtuner 0.1.21 requires transformers!=4.38.0,!=4.38.1,!=4.38.2,>=4.36.0, but you have transformers 4.30.2 which is incompatible.

Solution: manually upgrade transformers to 4.36.0 (for example, pip install transformers==4.36.0).

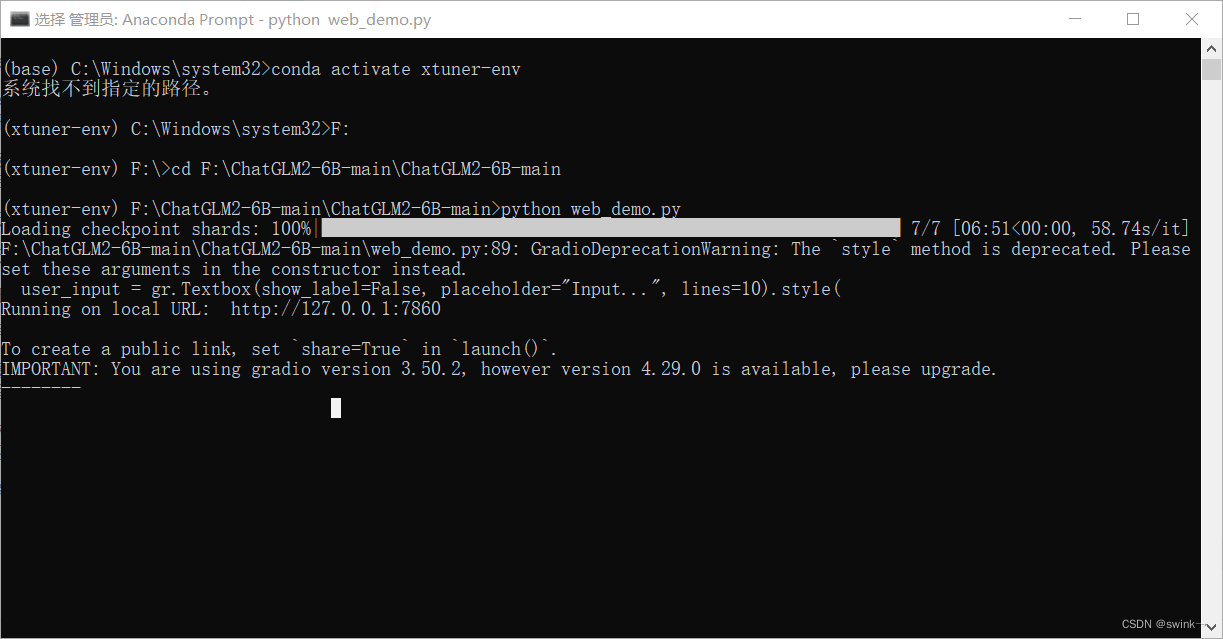
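To check whether the installed transformers version satisfies the constraint quoted in the pip error, a small helper along these lines can be used. It is a sketch that uses only the standard library; the function names are made up for illustration, and the parser only handles plain numeric versions such as 4.36.0 (not release candidates).

```python
from importlib import metadata

# Constraint copied from the pip error: >=4.36.0, !=4.38.0, !=4.38.1, !=4.38.2
MIN_VERSION = (4, 36, 0)
EXCLUDED = {(4, 38, 0), (4, 38, 1), (4, 38, 2)}


def parse_version(text: str) -> tuple:
    """Turn '4.36.0' into (4, 36, 0), padding missing parts with zeros."""
    numbers = [int(part) for part in text.split(".")[:3]]
    numbers += [0] * (3 - len(numbers))
    return tuple(numbers)


def satisfies_xtuner(version: str) -> bool:
    """Return True if the version meets the xtuner 0.1.21 requirement."""
    parsed = parse_version(version)
    return parsed >= MIN_VERSION and parsed not in EXCLUDED


if __name__ == "__main__":
    try:
        installed = metadata.version("transformers")
    except metadata.PackageNotFoundError:
        print("transformers is not installed")
    else:
        verdict = "OK" if satisfies_xtuner(installed) else "conflicts with xtuner 0.1.21"
        print(f"transformers {installed}: {verdict}")
```

With the versions from this post, `satisfies_xtuner("4.30.2")` is False and `satisfies_xtuner("4.36.0")` is True.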

Once the downloads finish, change into the project folder and run:

python web_demo.py

This produced another error:

Traceback (most recent call last):
  File "F:\ChatGLM2-6B-main\ChatGLM2-6B-main\web_demo.py", line 7, in <module>
    model = AutoModel.from_pretrained("F:\\glm2", trust_remote_code=True).cuda()
  File "F:\Python3.0\lib\site-packages\transformers\modeling_utils.py", line 2432, in cuda
    return super().cuda(*args, **kwargs)
  File "F:\Python3.0\lib\site-packages\torch\nn\modules\module.py", line 918, in cuda
    return self._apply(lambda t: t.cuda(device))
  File "F:\Python3.0\lib\site-packages\torch\nn\modules\module.py", line 810, in _apply
    module._apply(fn)
  File "F:\Python3.0\lib\site-packages\torch\nn\modules\module.py", line 810, in _apply
    module._apply(fn)
  File "F:\Python3.0\lib\site-packages\torch\nn\modules\module.py", line 810, in _apply
    module._apply(fn)
  File "F:\Python3.0\lib\site-packages\torch\nn\modules\module.py", line 833, in _apply
    param_applied = fn(param)
  File "F:\Python3.0\lib\site-packages\torch\nn\modules\module.py", line 918, in <lambda>
    return self._apply(lambda t: t.cuda(device))
  File "F:\Python3.0\lib\site-packages\torch\cuda\__init__.py", line 289, in _lazy_init
    raise AssertionError("Torch not compiled with CUDA enabled")
AssertionError: Torch not compiled with CUDA enabled

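The last line is the important part: the installed PyTorch build has no CUDA support, so the call to .cuda() fails. After searching online, I found this described as a mismatch between the CUDA and PyTorch versions.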

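My installed CUDA version was 12.2, so I downgraded to 12.1 (uninstall 12.2 first, then install 12.1).

The reason is that PyTorch officially provides wheels built for CUDA 12.1, but I could not find any for 12.2. (These wheels bundle their own CUDA runtime, so the downgrade may not be strictly necessary as long as the GPU driver supports CUDA 12.1 or newer.) Install the matching build: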

pip3 install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu121
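Before rerunning the demo, it can help to confirm that the new PyTorch build actually sees the GPU. The sketch below relies on `torch.version.cuda` (which is None for CPU-only builds) and `torch.cuda.is_available()`; the helper name `summarize_build` is hypothetical and only there to make the three cases explicit.

```python
def summarize_build(torch_version: str, cuda_build, cuda_available: bool) -> str:
    """Describe the installed PyTorch build in one sentence."""
    if cuda_build is None:
        # This is the situation that raised "Torch not compiled with CUDA enabled".
        return f"torch {torch_version} is a CPU-only build; .cuda() will fail"
    if not cuda_available:
        return (f"torch {torch_version} was built for CUDA {cuda_build}, "
                "but no usable GPU or driver was found")
    return f"torch {torch_version} with CUDA {cuda_build} is ready"


if __name__ == "__main__":
    try:
        import torch  # imported here so the helper above works without torch
    except ImportError:
        print("torch is not installed")
    else:
        print(summarize_build(torch.__version__,
                              torch.version.cuda,
                              torch.cuda.is_available()))
```

If the script reports a CPU-only build, the cu121 install above did not take effect in the active environment.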

After the installation succeeds, run the command again:

python web_demo.py

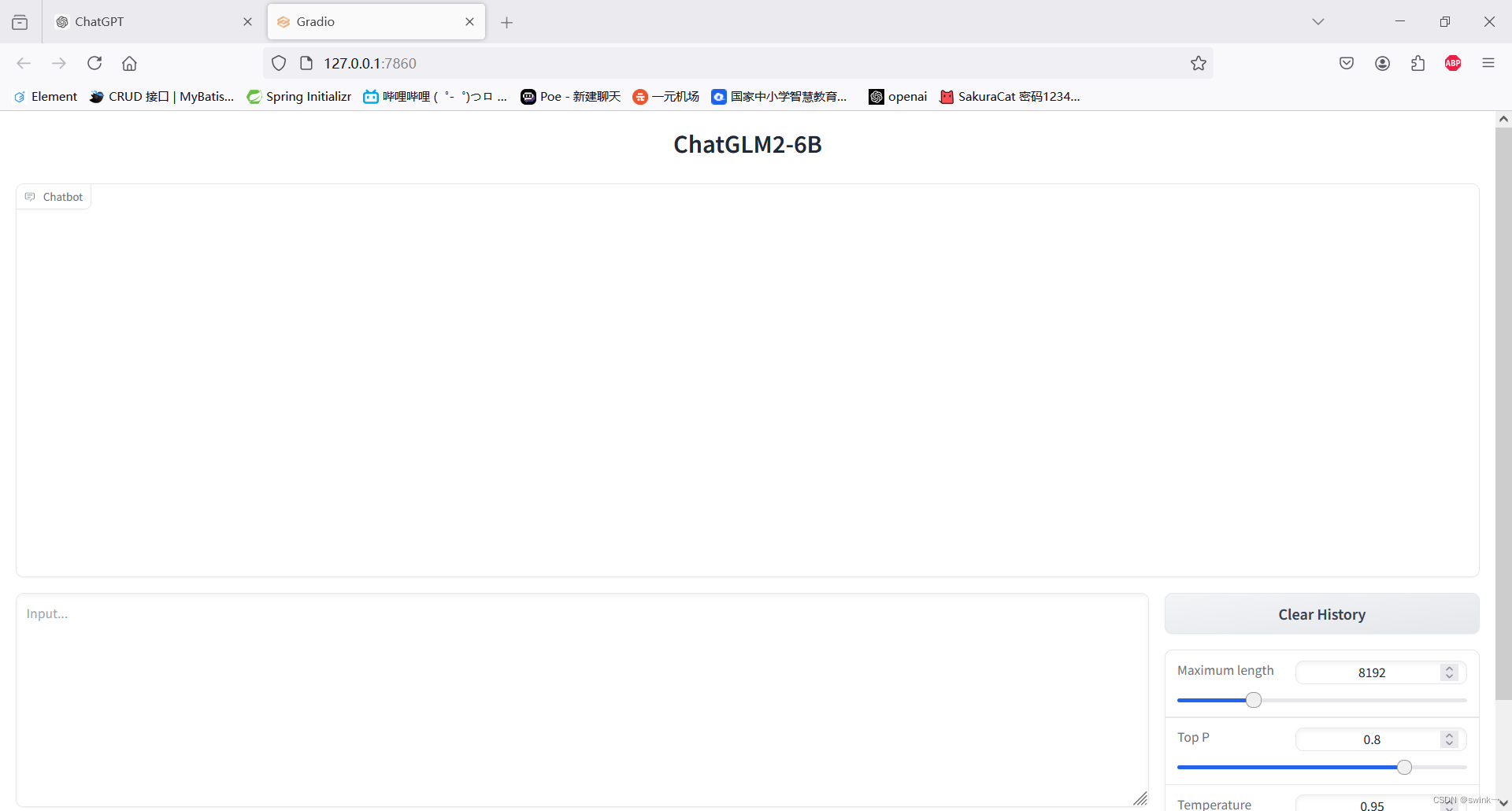

The bundled web front end started up, which means the deployment succeeded.
