前提条件

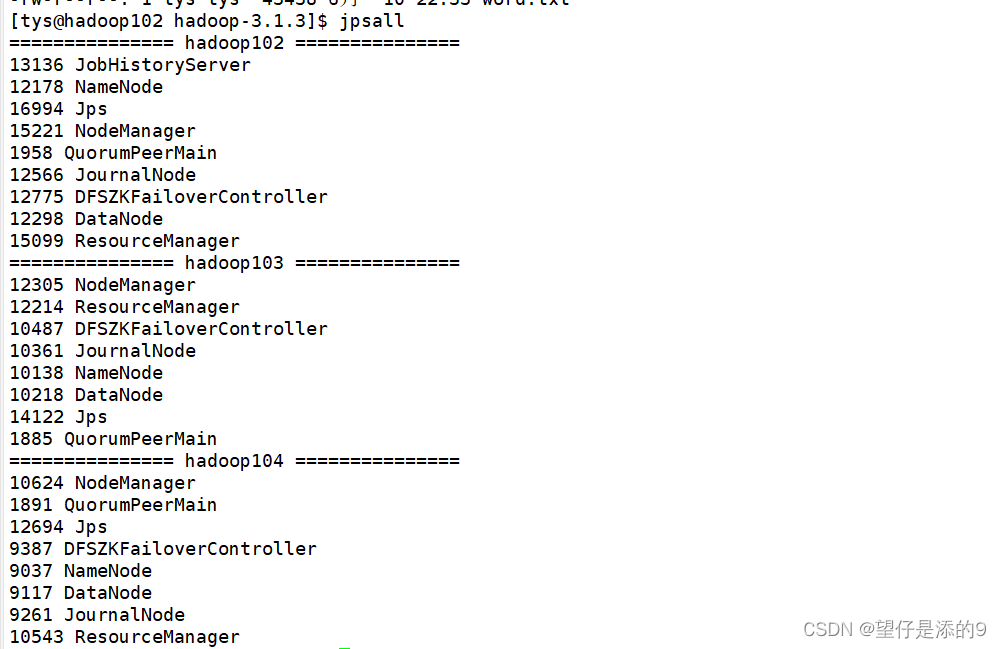

首先确保自己的虚拟机已经部署好了高可用集群.我的高可用集群启动成功后如下所示(启动集群之前还要启动zookeeper)

准备工作

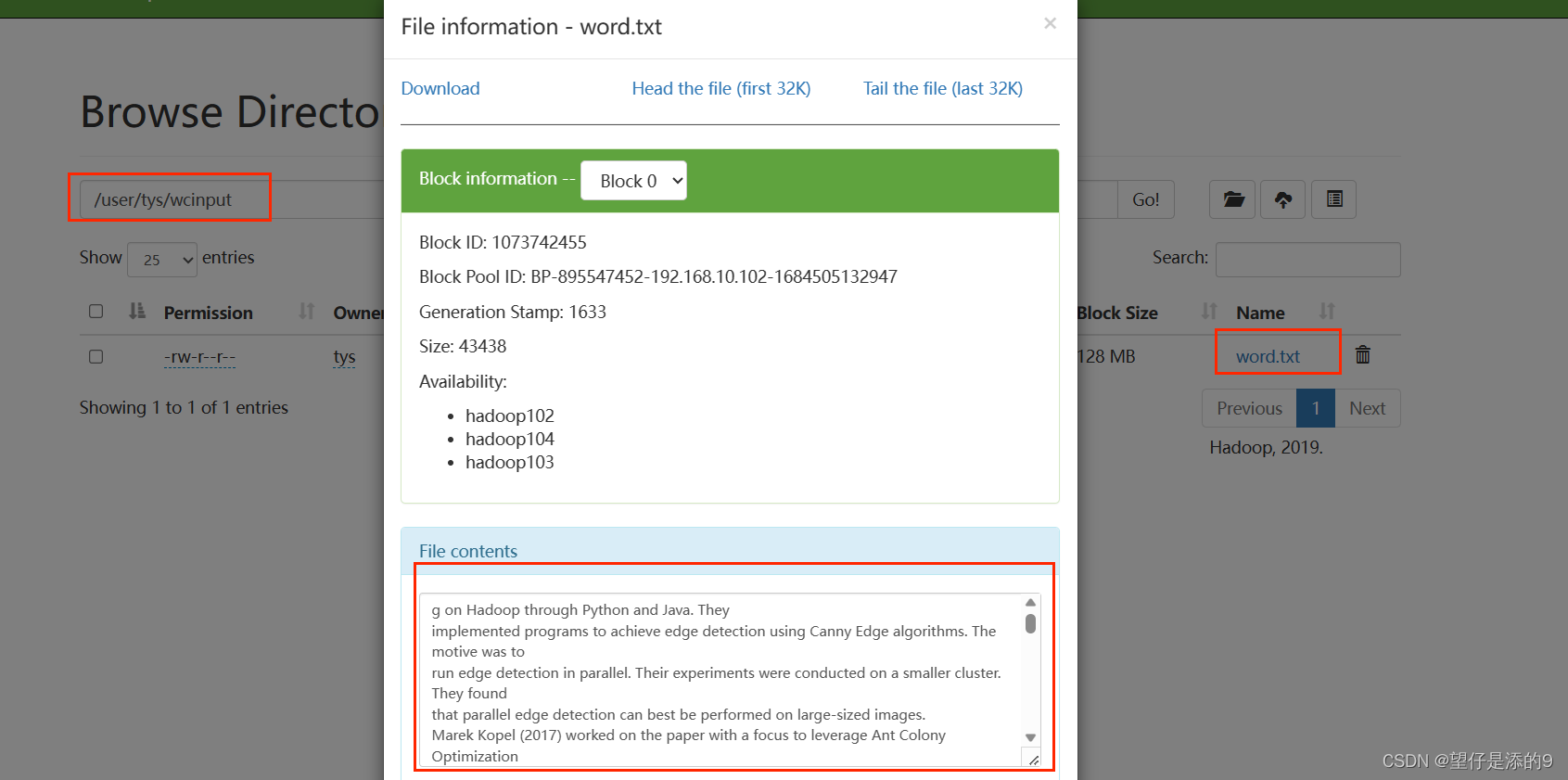

将单词数据上传到hdfs

命令:

[tys@hadoop102 hadoop-3.1.3]$ hadoop fs -put word1.txt /user/tys/wcinput上传的路径自己确定,自己建立

在web端查看是否上传成功,如下就说明成功

编写代码

在idea中的相关代码:

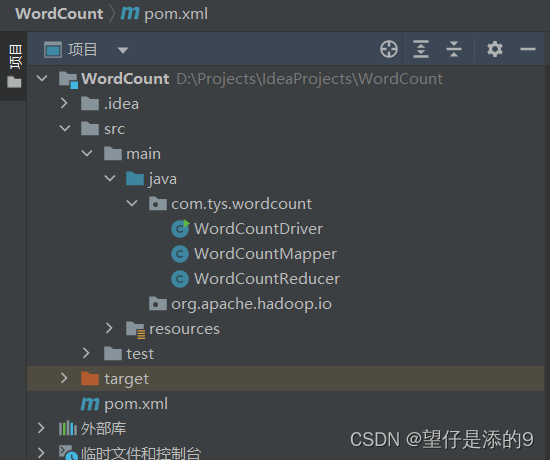

我的文件目录:

pom.xml文件里面添加的内容:

<dependencies>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>3.1.3</version>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.12</version>

</dependency>

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

<version>1.7.30</version>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.6.1</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

</configuration>

</plugin>

<plugin>

<artifactId>maven-assembly-plugin</artifactId>

<configuration>

<descriptorRefs>

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

</configuration>

<executions>

<execution>

<id>make-assembly</id>

<phase>package</phase>

<goals>

<goal>single</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

</build>(也可以不用添加<build>里面的内容,我没有添加,因为一直报错说找不到插件,而且我们后面需要用到的jar包也是那个没有添加依赖的jar包)

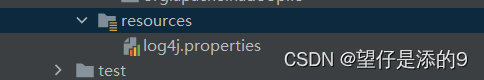

在resources下新建“资源包” log4j,

添加以下内容

log4j.rootLogger=INFO, stdout

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n

log4j.appender.logfile=org.apache.log4j.FileAppender

log4j.appender.logfile.File=target/spring.log

log4j.appender.logfile.layout=org.apache.log4j.PatternLayout

log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%nWordCountReducer代码:

package com.tys.wordcount;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

public class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable>{

int sum;

IntWritable v = new IntWritable();

@Override

protected void reduce(Text key, Iterable<IntWritable> values,Context context) throws IOException, InterruptedException {

// 1 累加求和

sum = 0;

for (IntWritable count : values) {

sum += count.get();

}

// 2 输出

v.set(sum);

context.write(key,v);

}

}WordCountMapper代码

package com.tys.wordcount;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable>{

Text k = new Text();

IntWritable v = new IntWritable(1);

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// 1 获取一行

String line = value.toString();

// 2 切割

String[] words = line.split("\\s+" );

// 3 输出

for (String word : words) {

k.set(word);

context.write(k, v);

}

}

}WordCountDriver代码:

package com.tys.wordcount;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class WordCountDriver {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

// 1 获取配置信息以及获取job对象

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

// 2 关联本Driver程序的jar

job.setJarByClass(WordCountDriver.class);

// 3 关联Mapper和Reducer的jar

job.setMapperClass(WordCountMapper.class);

job.setReducerClass(WordCountReducer.class);

// 4 设置Mapper输出的kv类型

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(IntWritable.class);

// 5 设置最终输出kv类型

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

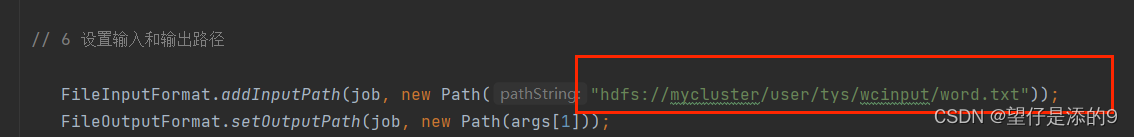

// 6 设置输入和输出路径

FileInputFormat.addInputPath(job, new Path("hdfs://mycluster/user/tys/wcinput/word.txt"));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

// 7 提交job

boolean result = job.waitForCompletion(true);

System.exit(result ? 0 : 1);

}

}注意事项

在WordCountDriver代码中要注意以下路径

这里的路径跟上面”准备工作“那一步的路径保持一致,注意这里的输出路径要写成

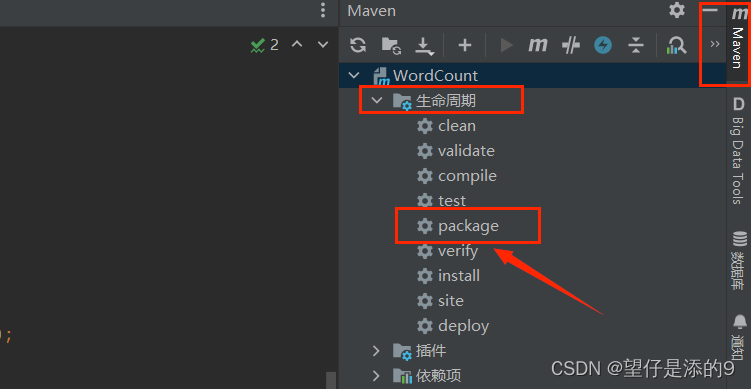

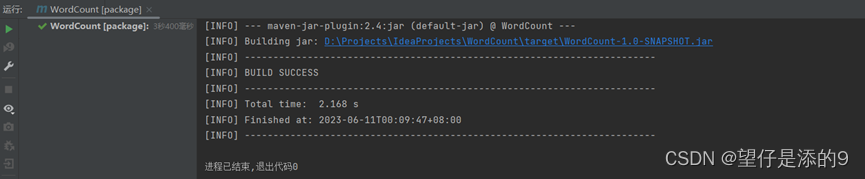

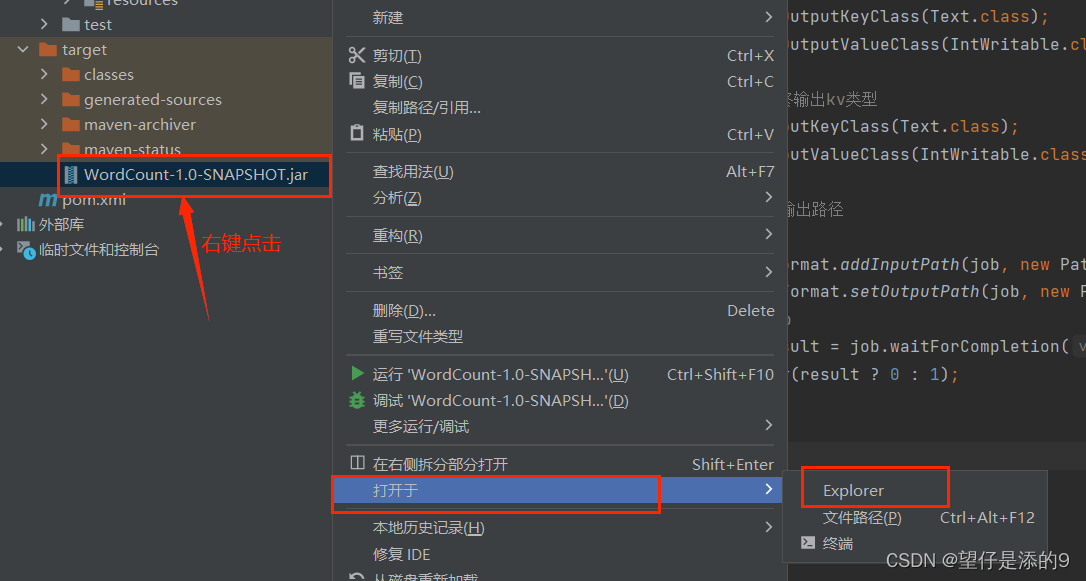

args[1]打包成jar包

点击package

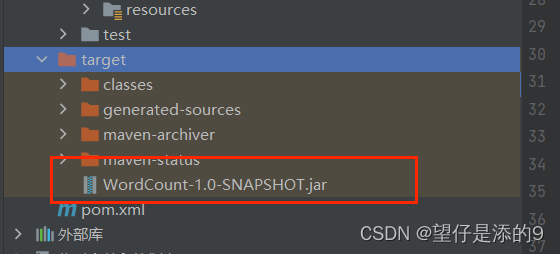

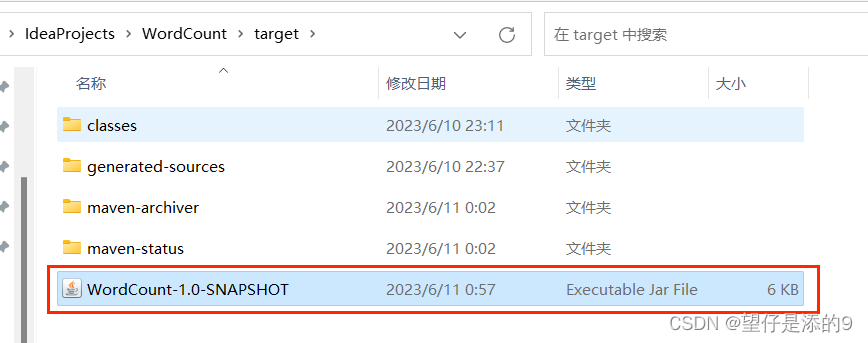

结果:

(因为我没有加上一步的<build>里面的内容,所以只出现一个jar包,加上<build>里面的内容就会出现两个jar包,选我上图中的jar包就可以了)

来到如下界面

将该文件托到桌面并重命名为wc

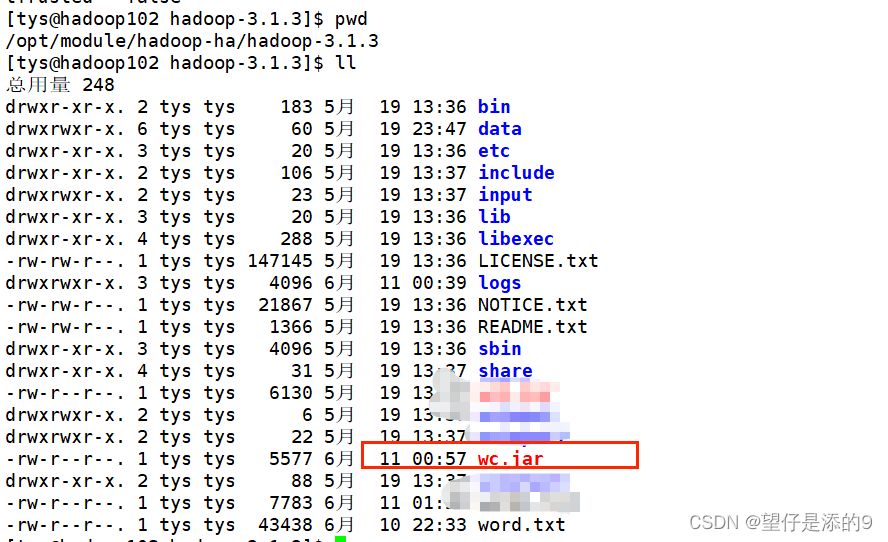

然后将该文件上传到虚拟机高可用集群的目录下

运行jar包:

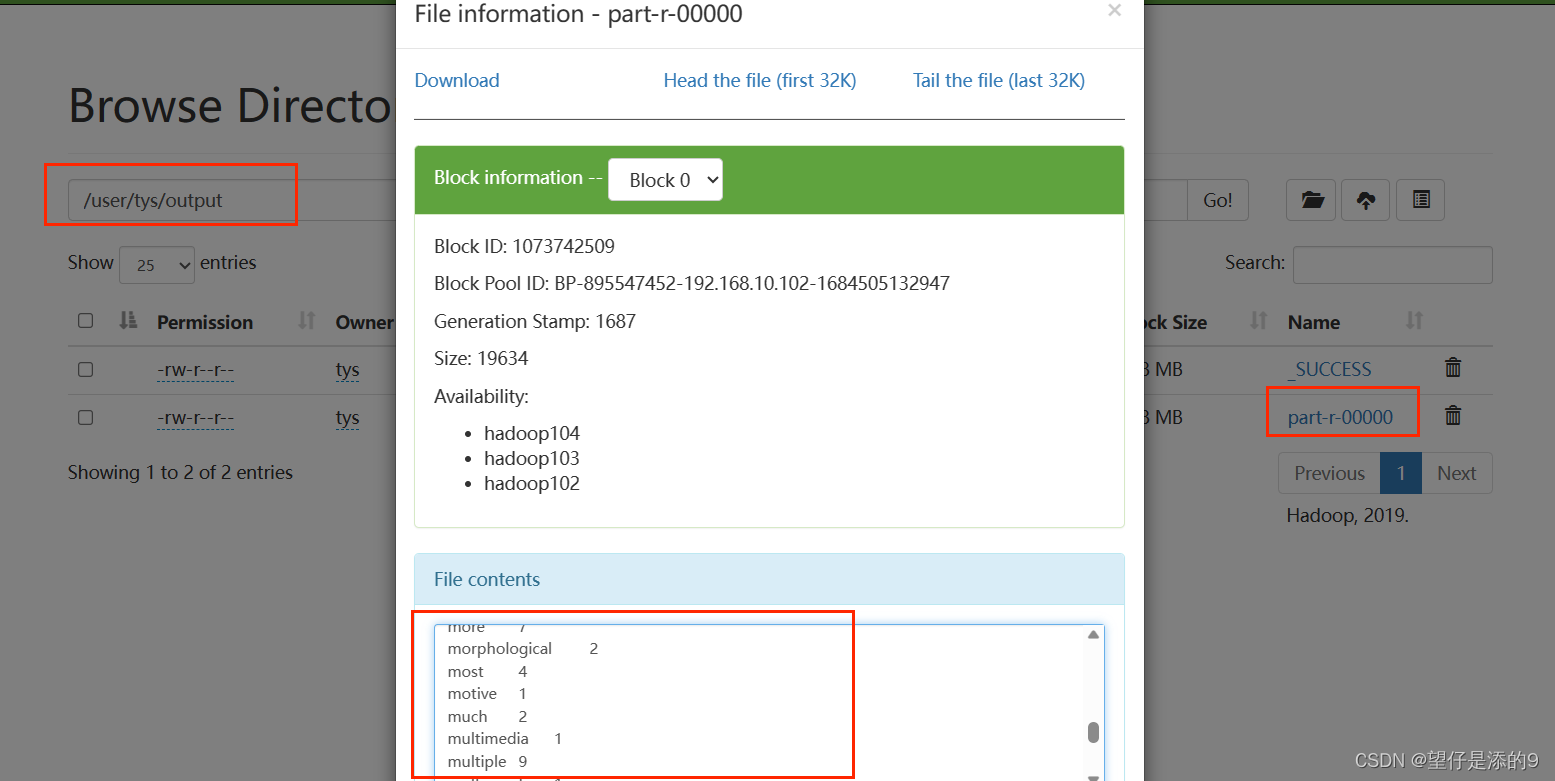

[tys@hadoop102 hadoop-3.1.3]$ hadoop jar wc.jar com.tys.wordcount.WordCountDriver /user/tys/wcinput/word.txt /user/tys/output“注意输出路径

/user/tys/output一定不能自己建立,如果“/user/tys/”下已经有output这个路径了就要先删掉output,或者将output改为output1(或者其他不存在的路径)

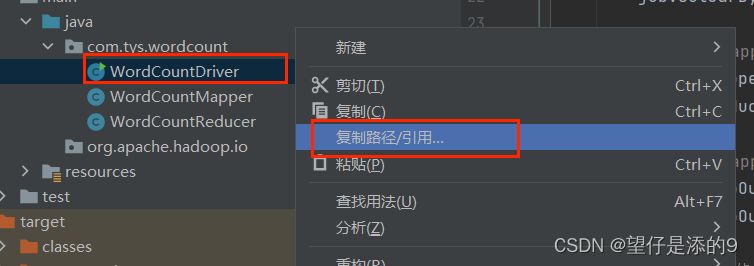

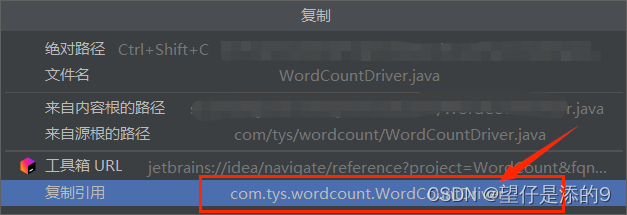

"com.tys.wordcount.WordCountDriver"这个代码的由来如下:

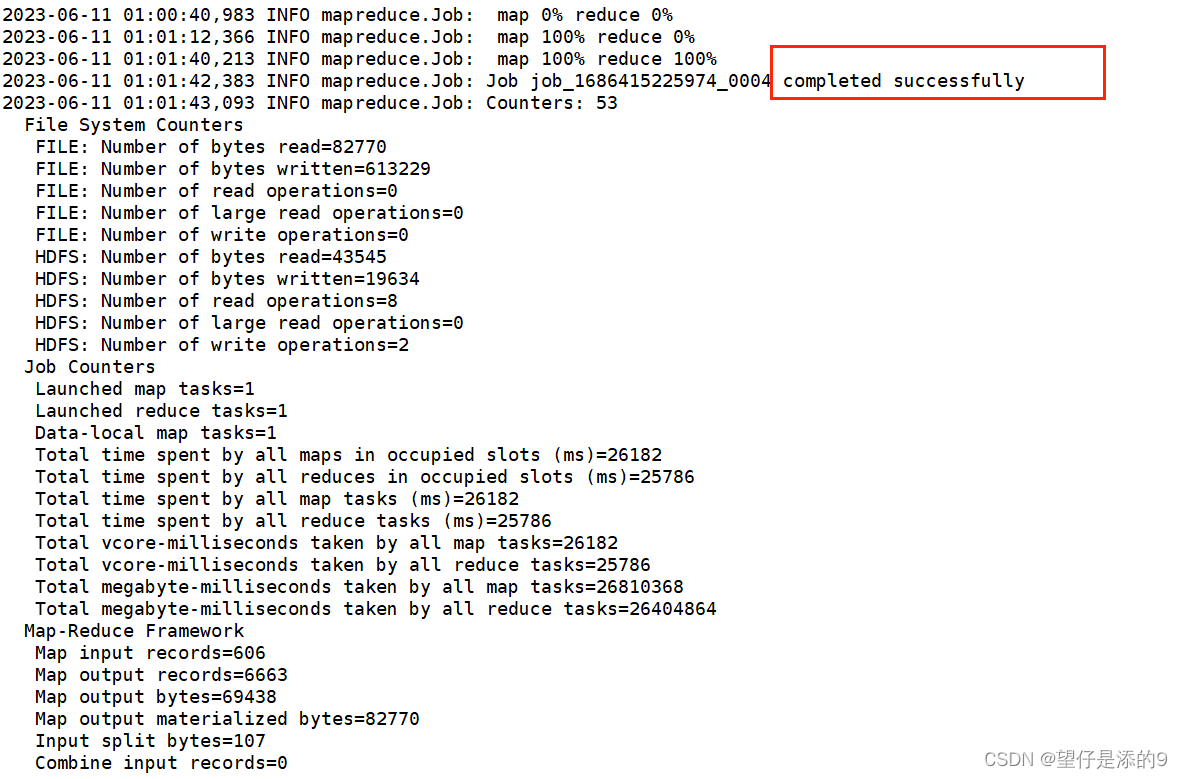

运行成功截图

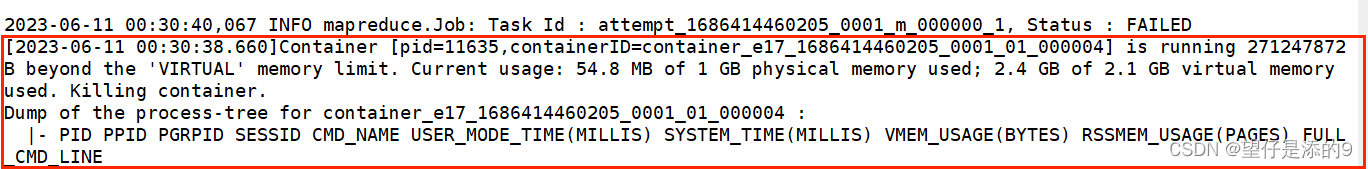

运行过程中出现虚拟内存溢出

如下错误:

Container [pid=11635,containerID=container_e17_1686414460205_0001_01_000004] is running 271247872B beyond the 'VIRTUAL' memory limit. Current usage: 54.8 MB of 1 GB physical memory used; 2.4 GB of 2.1 GB virtual memory used. Killing container.

报错如下图所示:

解决方法;

修改yarn-site.xml配置文件将分配的内存设置大一点

[tys@hadoop102 ~]$ cd /opt/module/hadoop-ha/hadoop-3.1.3/etc/hadoop/

[tys@hadoop102 hadoop]$ vim yarn-site.xml 添加(修改)以下内容

<!-- yarn容器允许分配的最大最小内存 -->

<property>

<name>yarn.scheduler.minimum-allocation-mb</name>

<value>1024</value>

</property>

<property>

<name>yarn.scheduler.maximum-allocation-mb</name>

<value>4096</value>

</property>

<!-- yarn容器允许管理的物理内存大小 -->

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>4096</value>

</property>

<!-- 关闭yarn对物理内存和虚拟内存的限制检查 -->

<property>

<name>yarn.nodemanager.pmem-check-enabled</name>

<value>false</value>

</property>

<property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

</property>修改好之后保存退出,别忘记分发

[tys@hadoop102 hadoop]$ xsync yarn-site.xml然后重启yarn再运行jar包就可以了

1396

1396

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?