I. Project Requirements

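Based on the e-commerce log file, the analysis covers:

- Count page views (each log line is one view)
- Count page views per province (requires parsing the IP)
- ETL the logs (ETL: the process of extracting (Extract), transforming (Transform), and loading (Load) data from a source to a destination)

Why ETL: there is no need to parse every field; only the valuable fields need to be extracted. In this project these are: ip, url, pageId (the page ID corresponding to topicId), country, province, city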
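As an illustration of the "extract only the useful fields" idea, here is a minimal sketch of deriving pageId from a URL. It assumes the ID appears in the URL as `topicId=<digits>`; the class name `PageIdExtractor`, the method name, and the sample URLs are all hypothetical and not taken from the original project.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: pulls the page ID out of a URL,
// assuming it appears as "topicId=<digits>".
class PageIdExtractor {
    private static final Pattern TOPIC_ID = Pattern.compile("topicId=(\\d+)");

    // Returns the digits after "topicId=", or an empty Optional if the URL has none.
    static Optional<String> extractPageId(String url) {
        if (url == null) {
            return Optional.empty();
        }
        Matcher m = TOPIC_ID.matcher(url);
        return m.find() ? Optional.of(m.group(1)) : Optional.empty();
    }

    public static void main(String[] args) {
        // Made-up URLs, used only to show both outcomes.
        System.out.println(extractPageId("http://www.example.com/detail?topicId=1234&from=home").orElse("-")); // prints 1234
        System.out.println(extractPageId("http://www.example.com/index").orElse("-")); // prints -
    }
}
```

Returning an `Optional` keeps records without a topicId from failing the whole ETL step; the caller decides whether to skip them or write a placeholder.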

II. Create a Maven Project and Write the e-commerce-practice Program

- Count page views (each log line is one view)

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

// Driver: configures and submits the page view count job.
public class LogAnalysisDriver {

    public static void main(String[] args) throws Exception {
        if (args.length != 2) {
            System.err.println("Usage: LogAnalysisDriver <input path> <output path>");
            System.exit(-1);
        }

        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf, "Page View Count");
        job.setJarByClass(LogAnalysisDriver.class);

        job.setMapperClass(PageViewMapper.class);
        // Summing is associative, so the reducer can also serve as the combiner.
        job.setCombinerClass(PageViewReducer.class);
        job.setReducerClass(PageViewReducer.class);

        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        FileInputFormat.addInputPath(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}

Write the mapper class, which counts the lines of the file:

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class PageViewMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

    // Reused for every record to avoid allocating new objects per line.
    private final Text outKey = new Text("line");
    private final IntWritable one = new IntWritable(1);

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Each input line is one page view: emit the constant key "line" with a count of 1.
        context.write(outKey, one);
    }
}

Write the reducer class, which sums the map output:

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class PageViewReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

    // Reused output value holding the total for the current key.
    private final IntWritable result = new IntWritable();

    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
        // Add up all counts emitted for this key.
        int sum = 0;
        for (IntWritable val : values) {
            sum += val.get();
        }
        result.set(sum);
        context.write(key, result);
    }
}

This completes the implementation of the first task in Section II.
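To see why the job produces a single total, the map and reduce steps above can be imitated in plain Java with no Hadoop dependency. The class name `LocalPageViewCount` and the sample lines are made up for illustration; this is a sketch of the data flow, not the cluster job itself.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Plain-Java imitation of the job above; no Hadoop classes are involved.
class LocalPageViewCount {

    // "Map" step: every line contributes the pair ("line", 1).
    // "Reduce" step: the values that share a key are summed.
    static Map<String, Integer> count(List<String> lines) {
        Map<String, Integer> totals = new LinkedHashMap<>();
        for (String ignored : lines) {
            totals.merge("line", 1, Integer::sum);
        }
        return totals;
    }

    public static void main(String[] args) {
        // Made-up records standing in for log lines.
        List<String> sample = Arrays.asList("record a", "record b", "record c");
        System.out.println(count(sample)); // prints {line=3}
    }
}
```

Because every record is mapped to the same key, all counts meet in one reduce call, which is why the result is one number for the whole file.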

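III. Package and Upload the MapReduce Program, Start the Cluster's Linux System, and Run It

(1) For packaging the Java program in IntelliJ IDEA, see the earlier posts.

(2) Run the program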

在运行程序之前,需要启动Hadoop,命令如下:

cd /usr/local/hadoop //hadoop目录

./sbin/start-dfs.sh在启动Hadoop之后,需要首先删除HDFS中与当前Linux用户hadoop对应的input和output目录

cd /usr/local/hadoop

./bin/hdfs dfs -rm -r input

./bin/hdfs dfs -rm -r output然后,再在HDFS中新建与当前Linux用户hadoop对应的input目录,即“/user/hadoop/input”目录,具体命令如下:

cd /usr/local/hadoop

./bin/hdfs dfs -mkdir etcinput然后,把之前的文件trackinfo_20130721.txt上传到HDFS中的“/user/hadoop/input”目录下,命令如下:

cd /usr/local/hadoop

./bin/hdfs dfs -put ./trackinfo_20130721.txt input

现在,就可以在Linux系统中,使用hadoop jar命令运行程序,命令如下:

cd /usr/local/hadoop

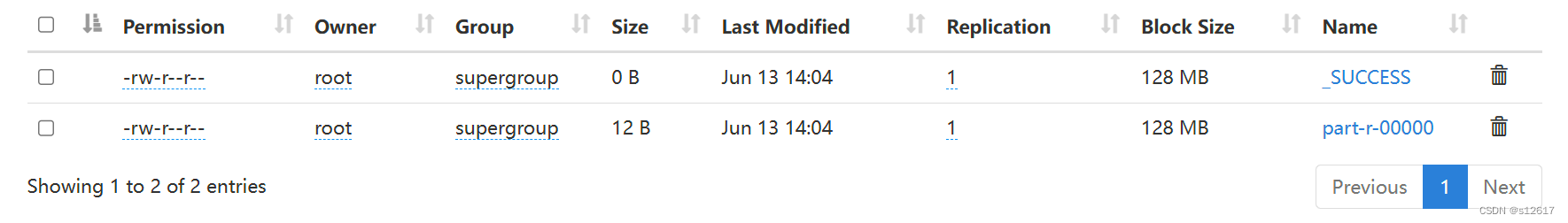

./bin/hadoop jar ./Log1.jar etcinput etcoutput上面命令执行以后,当运行顺利结束时,HDFS中output目录下出现以下文件证明成功:

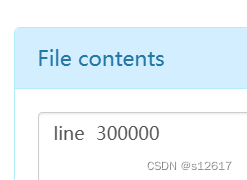

Contents of the result file:

6719
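Because every line counts as one view, the total reported by the job should match the number of lines in the input file. Below is a minimal sketch for cross-checking this against a local copy of the log. The class name `LocalLineCount` is hypothetical, and the file is assumed to sit in the current directory unless a path is passed as the first argument.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical local cross-check: counts the lines of a file without Hadoop.
class LocalLineCount {

    // Counts lines as BufferedReader.readLine defines them.
    // ISO-8859-1 is used only so that any byte sequence decodes without error.
    static long countLines(Path file) throws IOException {
        long count = 0;
        try (BufferedReader reader = Files.newBufferedReader(file, StandardCharsets.ISO_8859_1)) {
            while (reader.readLine() != null) {
                count++;
            }
        }
        return count;
    }

    public static void main(String[] args) throws IOException {
        // Assumed usage: pass the path to a local copy of the log file.
        Path file = Paths.get(args.length > 0 ? args[0] : "trackinfo_20130721.txt");
        System.out.println(countLines(file));
    }
}
```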
