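I. Task Requirements

For each phone number, compute the total upstream traffic, the total downstream traffic, and the overall total (upstream total + downstream total). In addition, split the results by phone-number prefix and write each group to a separate output file, for example: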

13* ==> ..

15* ==> ..

other ==> ..

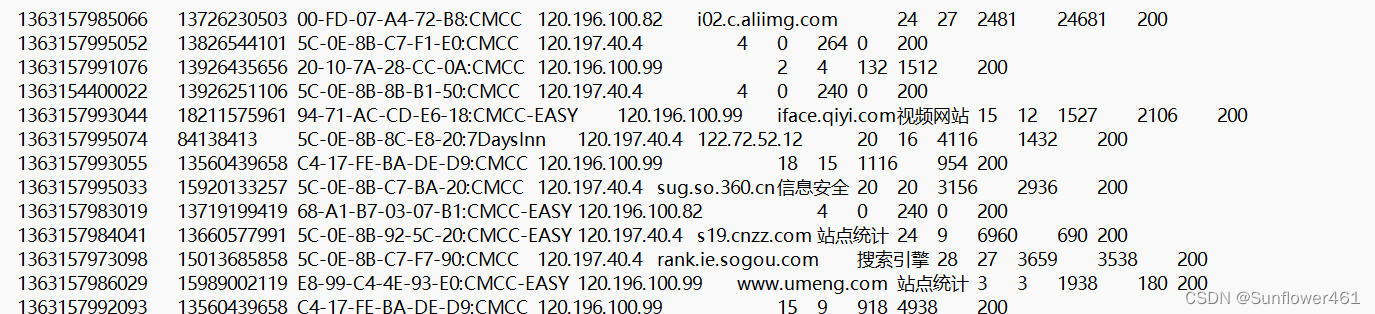

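The fields of interest in the access.log data file are:

Second field: phone number

Third-to-last field: upstream traffic

Second-to-last field: downstream traffic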
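
The field positions above can be sketched in plain Java, without any Hadoop dependency. This is only an illustration: the class name `AccessLineSketch`, the nested `Parsed` type, and the sample line in `main` are made up here, and the tab separator is assumed from the mapper code later in this post.

```java
class AccessLineSketch {

    /** Holds the three values extracted from one log line. */
    static final class Parsed {
        final String phone;
        final long up;
        final long down;

        Parsed(String phone, long up, long down) {
            this.phone = phone;
            this.up = up;
            this.down = down;
        }
    }

    /** Parses one tab-separated line: field 2 is the phone, the 3rd- and 2nd-to-last are the traffic. */
    static Parsed parse(String line) {
        String[] fields = line.split("\t");
        // At least five fields are needed so that the phone and traffic columns do not overlap.
        if (fields.length < 5) {
            throw new IllegalArgumentException("too few fields: " + fields.length);
        }
        String phone = fields[1];
        long up = Long.parseLong(fields[fields.length - 3]);
        long down = Long.parseLong(fields[fields.length - 2]);
        return new Parsed(phone, up, down);
    }

    public static void main(String[] args) {
        // Hypothetical record, not taken from the real access.log.
        Parsed p = parse("1\t13800000000\texample.com\t100\t200\t200");
        System.out.println(p.phone + "\t" + p.up + "\t" + p.down);
    }
}
```

Counting the traffic fields from the end of the line keeps the parsing correct even if the number of columns in the middle of a record varies.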

Excerpt of the data file:

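II. Approach

(1) Define a custom Access class

Attributes: phone number, upstream traffic, downstream traffic, total traffic. (In the code below, the phone number is carried as the map output key, so the Access class itself stores only the three traffic values.)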

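(2) Define a custom Map task class (Map Task)

Split each log line into fields. The Map output is:

phone ==> Access(phone number, upstream traffic of that line, downstream traffic of that line)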

(3) Write the Reduce task class (Reduce Task)

Aggregate the traffic for each phone number. The Reduce output is:

phone ==> Access(phone number, upstream total, downstream total)

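This can also be optimized so that the output key is a NullWritable object and the phone number travels inside the value:

NullWritable ==> Access(phone number, upstream total, downstream total)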
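
As a rough illustration of steps (2) and (3), the sketch below reproduces the "group by phone, then sum" logic in plain Java with no Hadoop dependency. The class name `FlowSumSketch` and the sample rows are invented for this example; a `TreeMap` stands in for the shuffle phase, which delivers keys to the reducer in sorted order.

```java
import java.util.Map;
import java.util.TreeMap;

class FlowSumSketch {

    /**
     * Each input row is {phone, upstream, downstream}, i.e. what a map call would emit.
     * Returns phone -> {upstream total, downstream total, overall total}.
     */
    static Map<String, long[]> sumByPhone(String[][] rows) {
        Map<String, long[]> totals = new TreeMap<>();
        for (String[] row : rows) {
            long up = Long.parseLong(row[1]);
            long down = Long.parseLong(row[2]);
            // One accumulator per phone number, created on first sight of the key.
            long[] t = totals.computeIfAbsent(row[0], k -> new long[3]);
            t[0] += up;
            t[1] += down;
            t[2] += up + down;
        }
        return totals;
    }

    public static void main(String[] args) {
        // Hypothetical records, for illustration only.
        String[][] rows = {
            {"13800000000", "100", "200"},
            {"15900000000", "10", "20"},
            {"13800000000", "1", "2"},
        };
        for (Map.Entry<String, long[]> e : sumByPhone(rows).entrySet()) {
            long[] t = e.getValue();
            System.out.println(e.getKey() + "\t" + t[0] + "\t" + t[1] + "\t" + t[2]);
        }
    }
}
```

In the real job the grouping is done by the framework, so the reducer only has to perform the summation for one phone number at a time.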

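(4) Write the partitioner class

Extend org.apache.hadoop.mapreduce.Partitioner. Phone numbers starting with "13" go to the first ReduceTask and end up in partition 0; phone numbers starting with "15" go to the second ReduceTask and end up in partition 1; all other phone numbers go to the third ReduceTask and end up in partition 2. For this to work, the job must run with three reduce tasks.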
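
The prefix rule can be tried out in isolation with the plain-Java sketch below. The class and method names (`PrefixPartitionSketch`, `partitionFor`, `outputFileFor`) are hypothetical helpers for this post. The file-name helper assumes Hadoop's default reducer output naming, `part-r-` followed by the five-digit partition number.

```java
class PrefixPartitionSketch {

    /** Maps a phone number to partition 0 ("13"), 1 ("15"), or 2 (everything else). */
    static int partitionFor(String phone) {
        if (phone.startsWith("13")) {
            return 0;
        }
        if (phone.startsWith("15")) {
            return 1;
        }
        return 2;
    }

    /** File name a reducer would write for the given partition, assuming default output naming. */
    static String outputFileFor(int partition) {
        return String.format("part-r-%05d", partition);
    }

    public static void main(String[] args) {
        // Hypothetical phone numbers, for illustration only.
        String[] phones = {"13800000000", "15900000000", "18600000000"};
        for (String phone : phones) {
            int p = partitionFor(phone);
            System.out.println(phone + " -> partition " + p + " -> " + outputFileFor(p));
        }
    }
}
```

Using `startsWith` rather than `substring(0, 2)` means a key shorter than two characters simply falls into the "other" partition instead of throwing an exception.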

III. Code Implementation

1. Access class

package org.mapreduce;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

// Implements Hadoop's Writable interface so the class can be used as a MapReduce value type

public class Access implements Writable{

private long upflow;

private long downflow;

private long sumflow;

// A no-argument constructor is required: Hadoop creates instances via reflection when deserializing

public Access() {

super();

}

public Access(long upflow, long downflow) {

super();

this.upflow = upflow;

this.downflow = downflow;

this.sumflow = upflow+downflow;

}

public void set(long upflow, long downflow) {

this.upflow = upflow;

this.downflow = downflow;

this.sumflow = upflow+downflow;

}

// Serialization method

@Override

public void write(DataOutput out) throws IOException {

out.writeLong(upflow);

out.writeLong(downflow);

out.writeLong(sumflow);

}

// Deserialization method

// Note: fields must be read in exactly the same order in which write() wrote them

@Override

public void readFields(DataInput in) throws IOException {

this.upflow=in.readLong();

this.downflow=in.readLong();

this.sumflow=in.readLong();

}

@Override

public String toString() {

return upflow + "\t" + downflow + "\t" + sumflow;

}

public void setUpflow(long upflow) {

this.upflow = upflow;

}

public long getUpflow() {

return upflow;

}

public long getDownflow() {

return downflow;

}

public void setDownflow(long downflow) {

this.downflow = downflow;

}

public long getSumflow() {

return sumflow;

}

public void setSumflow(long sumflow) {

this.sumflow = sumflow;

}

}
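
To see why the read order has to match the write order, the round trip can be reproduced with nothing but `java.io`. The class `FlowRecordSketch` below is a hypothetical stand-in that mirrors the `write`/`readFields` pair of `Access` without implementing Hadoop's `Writable`, so it runs without Hadoop on the classpath.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

class FlowRecordSketch {
    long upflow;
    long downflow;
    long sumflow;

    void set(long upflow, long downflow) {
        this.upflow = upflow;
        this.downflow = downflow;
        this.sumflow = upflow + downflow;
    }

    // Writes the three fields as raw 8-byte longs: up, down, sum.
    void write(DataOutput out) throws IOException {
        out.writeLong(upflow);
        out.writeLong(downflow);
        out.writeLong(sumflow);
    }

    // Reads them back in the same order; swapping two reads would silently swap the values.
    void readFields(DataInput in) throws IOException {
        upflow = in.readLong();
        downflow = in.readLong();
        sumflow = in.readLong();
    }

    /** Serializes src to a byte array and deserializes it into a fresh instance. */
    static FlowRecordSketch roundTrip(FlowRecordSketch src) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (DataOutputStream out = new DataOutputStream(bytes)) {
                src.write(out);
            }
            FlowRecordSketch copy = new FlowRecordSketch();
            try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(bytes.toByteArray()))) {
                copy.readFields(in);
            }
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        FlowRecordSketch src = new FlowRecordSketch();
        src.set(100, 200);
        FlowRecordSketch copy = roundTrip(src);
        System.out.println(copy.upflow + "\t" + copy.downflow + "\t" + copy.sumflow);
    }
}
```

The serialized form carries no field names, only the bytes in sequence, which is why the two methods have to agree on the order.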

2. FlowMapper class

package org.mapreduce;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class FlowMapper extends Mapper<LongWritable, Text, Text, Access>{

Text k=new Text();

Access v=new Access();

@Override

protected void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

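String line=value.toString();

// Fields are tab-separated

String[] fields=line.split("\t");

// Skip malformed lines that are too short to hold the phone number and both traffic fields

if (fields.length < 5) {

return;

}

String phNum=fields[1];

// The traffic fields are addressed from the end of the line

long upFlow=Long.parseLong(fields[fields.length-3]);

long downFlow=Long.parseLong(fields[fields.length-2]);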

k.set(phNum);

v.set(upFlow,downFlow);

context.write(k, v);

}

}

3. FlowReducer class

package org.mapreduce;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class FlowReducer extends Reducer<Text, Access, Text, Access>{

@Override

protected void reduce(Text key, Iterable<Access> values, Context context)

throws IOException, InterruptedException {

// Running totals for the current phone number

long sumUpFlow=0;

long sumDownFlow=0;

for (Access access : values) {

sumUpFlow+= access.getUpflow();

sumDownFlow+= access.getDownflow();

}

Access v=new Access(sumUpFlow,sumDownFlow);

context.write(key, v);

}

}

4. FlowDriver class

package org.mapreduce;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class FlowDriver {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

if (args.length < 2) {

System.err.println("Usage: FlowDriver <inputPath> <outputPath>");

System.exit(1);

}

Configuration configuration = new Configuration();

Job job = Job.getInstance(configuration, "Flow Calculation");

job.setJarByClass(FlowDriver.class);

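job.setMapperClass(FlowMapper.class);

job.setReducerClass(FlowReducer.class);

// Route each phone prefix to its own reduce task; three partitions require three reducers

job.setPartitionerClass(PhonePartitioner.class);

job.setNumReduceTasks(3);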

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(Access.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Access.class);

FileInputFormat.setInputPaths(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

boolean result = job.waitForCompletion(true);

System.exit(result ? 0 : 1);

}

}

5. FlowHDFSApp class

package org.mapreduce;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.net.URI;

public class FlowHDFSApp {

public static void main(String[] args) throws Exception{

System.setProperty("HADOOP_USER_NAME", "root");

Configuration configuration = new Configuration();

configuration.set("fs.defaultFS","hdfs://hadoop102:8020");

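// Create the Job

Job job = Job.getInstance(configuration);

// Job parameter: main class

job.setJarByClass(FlowHDFSApp.class);

// Job parameter: custom Mapper and Reducer classes

job.setMapperClass(FlowMapper.class);

job.setReducerClass(FlowReducer.class);

// Job parameter: partition by phone prefix; three partitions require three reducers

job.setPartitionerClass(PhonePartitioner.class);

job.setNumReduceTasks(3);

// Reuse the reducer as a Combiner: summation can safely be applied to partial groups

job.setCombinerClass(FlowReducer.class);

// Job parameter: Mapper output key and value types (must match FlowMapper, which emits Access values)

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(Access.class);

// Job parameter: Reducer output key and value types (must match FlowReducer)

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Access.class);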

// If the output directory already exists, delete it first

FileSystem fileSystem = FileSystem.get(new URI("hdfs://hadoop102:8020"),configuration, "root");

Path outputPath = new Path("/user/output");

if(fileSystem.exists(outputPath)) {

fileSystem.delete(outputPath,true);

}

// Job parameter: input and output paths of the job

FileInputFormat.setInputPaths(job, new Path("hdfs://hadoop102:8020/user/input.txt"));

FileOutputFormat.setOutputPath(job, outputPath);

// Submit the job and wait for it to finish

boolean result = job.waitForCompletion(true);

System.exit(result ? 0 : -1);

}

}

6. PhonePartitioner class

package org.mapreduce;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Partitioner;

public class PhonePartitioner extends Partitioner<Text, Access> {

@Override

public int getPartition(Text key, Access value, int numPartitions) {

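String phone = key.toString();

// startsWith avoids the StringIndexOutOfBoundsException that substring(0, 2) throws for keys shorter than two characters

// The returned partition numbers assume the job runs with three reduce tasks

if (phone.startsWith("13")) {

return 0;

}

if (phone.startsWith("15")) {

return 1;

}

// All other prefixes

return 2;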

}

}

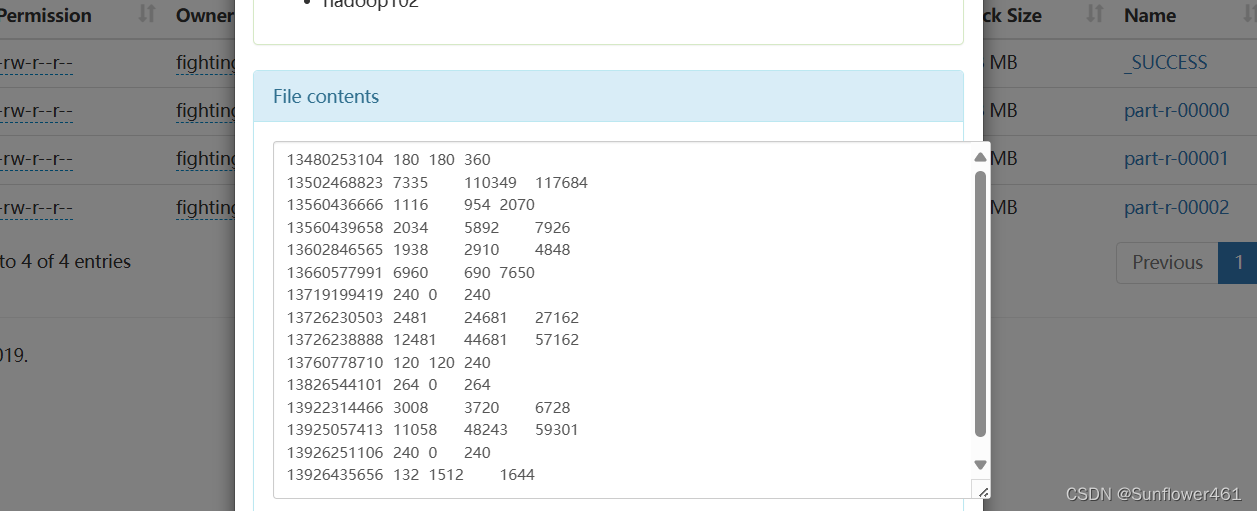

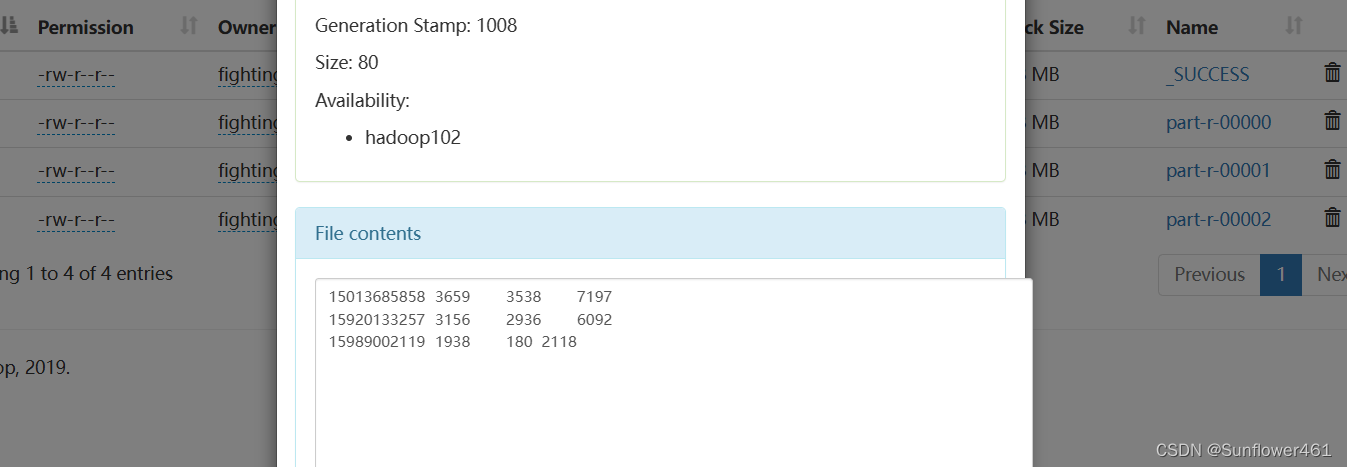

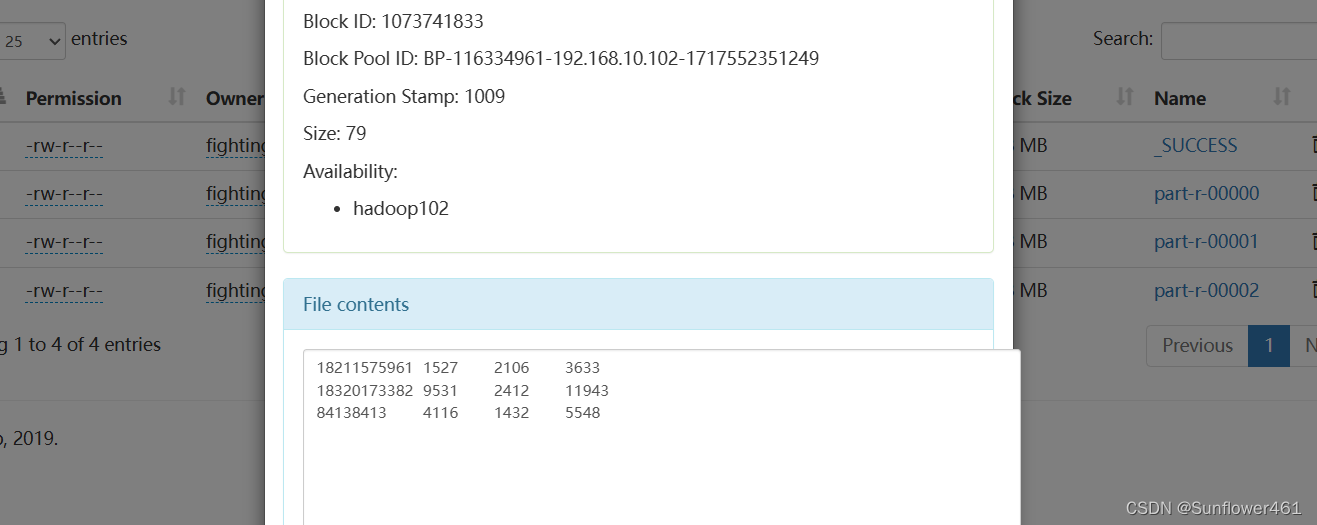

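IV. Results

In this experiment, pay attention to how the data file is uploaded to HDFS and to the Maven configuration in IntelliJ IDEA. Also make sure the output path is correct.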
