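The original paper is "From Point to Space: 3D Moving Human Pose Estimation Using Commodity WiFi".

This post reproduces only the model part of the paper; data loading and model training are not included.

The paper's model is a classic residual network (ResNet).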

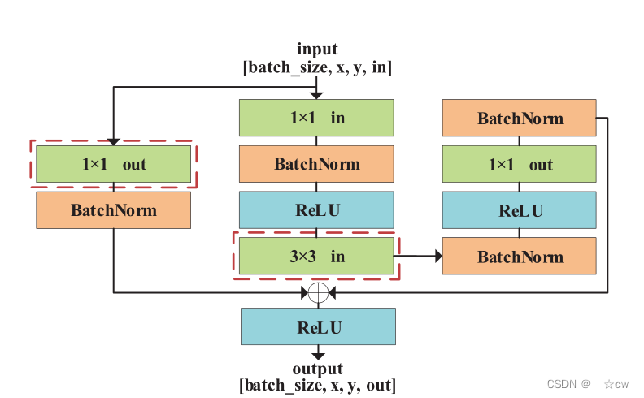

Structure of the basic residual block:

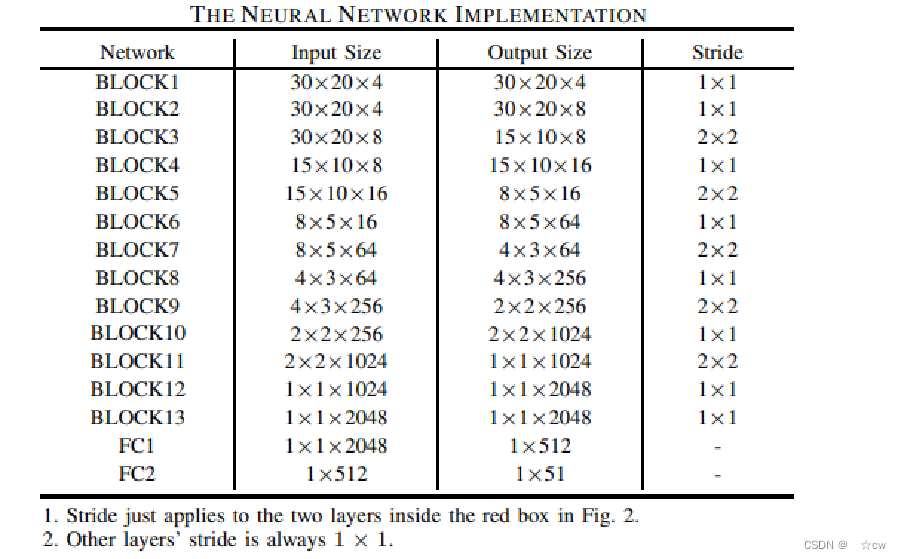
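Read off from the `Block` class in the code further down, each residual block can be summarized by the formula below. The symbols $F$ (main branch), $P$ (projection shortcut), and $s$ (the block's stride) are introduced here for illustration only and do not come from the paper.

$$
\begin{aligned}
\mathbf{y} &= \mathrm{ReLU}\big(F(\mathbf{x}) + P(\mathbf{x})\big) \\
F(\mathbf{x}) &= \mathrm{BN}\Big(\mathrm{Conv}_{1\times 1}\big(\mathrm{ReLU}\big(\mathrm{BN}\big(\mathrm{Conv}_{3\times 3,\,s}\big(\mathrm{ReLU}\big(\mathrm{BN}\big(\mathrm{Conv}_{1\times 1}(\mathbf{x})\big)\big)\big)\big)\big)\big)\Big) \\
P(\mathbf{x}) &= \mathrm{BN}\big(\mathrm{Conv}_{1\times 1,\,s}(\mathbf{x})\big)
\end{aligned}
$$

Because $P$ is always a strided 1x1 convolution, the shortcut matches the main branch in both channel count and spatial size, even when the block changes the number of channels.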

ResNet network structure:

The reproduced model code is as follows:

import torch
from torch import nn
import torch.optim as optim


class Block(nn.Module):
    """Bottleneck-style residual block with a 1x1 projection shortcut."""

    def __init__(self, in_channels, out_channels, stride):
        super().__init__()
        # Main branch: 1x1 -> 3x3 (carries the stride) -> 1x1, each followed by BatchNorm.
        self.conv1 = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=1, padding=0),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=stride, padding=1),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels, kernel_size=1, stride=1, padding=0),
            nn.BatchNorm2d(out_channels),
        )
        # Shortcut branch: 1x1 projection so channels and spatial size match the main branch.
        self.conv2 = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride, padding=0),
            nn.BatchNorm2d(out_channels),
        )
        self.relu = nn.ReLU(inplace=True)

    def forward(self, x):
        # Sum the main branch and the shortcut, then apply the final ReLU.
        out = self.conv1(x) + self.conv2(x)
        return self.relu(out)


class Resnet(nn.Module):
    """Stack of 13 residual blocks followed by two fully connected layers."""

    def __init__(self, block):
        super().__init__()
        # Each entry is block(in_channels, out_channels, stride).
        self.conv1 = nn.Sequential(
            block(4, 4, 1),
            block(4, 8, 1),
            block(8, 8, 2),
            block(8, 16, 1),
            block(16, 16, 2),
            block(16, 64, 1),
            block(64, 64, 2),
            block(64, 256, 1),
            block(256, 256, 2),
            block(256, 1024, 1),
            block(1024, 1024, 2),
            block(1024, 2048, 1),
            block(2048, 2048, 1),
        )
        self.fc1 = nn.Linear(2048, 512)
        self.fc2 = nn.Linear(512, 51)

    def forward(self, x):
        x = self.conv1(x)
        # Assumes the feature map has shrunk to 1x1, so each sample becomes one 2048-vector.
        x = x.reshape(-1, 2048)
        x = self.fc1(x)
        x = self.fc2(x)
        return x
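The `reshape(-1, 2048)` in `Resnet.forward` only yields one row per sample if the blocks have reduced the feature map to 1x1. As a rough sanity check, the sketch below traces the spatial size through the 13 blocks with plain arithmetic, using the standard convolution output-size formula (dilation 1). The helper names `conv_out` and `trace_spatial` are made up for this illustration; the stride list is copied from the model above.

```python
def conv_out(size, kernel, stride, padding):
    """Output length of a convolution along one axis (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


# Strides of the 13 blocks, in the order they appear in Resnet.conv1.
BLOCK_STRIDES = [1, 1, 2, 1, 2, 1, 2, 1, 2, 1, 2, 1, 1]


def trace_spatial(height, width, strides=BLOCK_STRIDES):
    """Return the (height, width) of the feature map after each block.

    Only the 3x3 convolution (padding 1) in a block carries the stride;
    the stride-1 1x1 convolutions leave the spatial size unchanged.
    """
    sizes = []
    for s in strides:
        height = conv_out(height, kernel=3, stride=s, padding=1)
        width = conv_out(width, kernel=3, stride=s, padding=1)
        sizes.append((height, width))
    return sizes


sizes = trace_spatial(30, 20)
for index, (h, w) in enumerate(sizes, start=1):
    print(f"after block {index:2d}: {h} x {w}")
```

For a 30 x 20 input the last entry is `(1, 1)`, which is what the reshape relies on. For other input sizes the final map may be larger (for example, `trace_spatial(60, 40)` ends at 2 x 2), in which case the reshape would no longer produce one row per sample.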

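A quick check of the model's input and output shapes (the loss, optimizer, and scheduler are set up for later training but are not exercised here):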

wi_mose = Resnet(Block)
num_epochs = 20
batch_size = 16
learning_rate = 0.0001
loss_fc = nn.MSELoss()
optimizer = optim.Adam(wi_mose.parameters(), lr=learning_rate)
scheduler = torch.optim.lr_scheduler.MultiStepLR(optimizer, milestones=[3, 6, 9, 12, 15, 18], gamma=0.5)
x = torch.randn(batch_size, 4, 30, 20)  # random input: (batch, channels, height, width)
y = wi_mose(x)
print(f'size of x is {x.shape}, size of y is {y.shape}')

Try it yourself to see the final result. If you have data on hand, training the model would be even better. Discussion is welcome in the comments.
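The `MultiStepLR` scheduler configured above multiplies the learning rate by `gamma` each time a milestone epoch is reached. The post does not include a training loop, so the following is only a sketch of the resulting schedule in plain Python, assuming `scheduler.step()` would be called once per epoch. The helper `multistep_lr` is hypothetical and written for this illustration; it is not part of PyTorch.

```python
from bisect import bisect_right


def multistep_lr(base_lr, milestones, gamma, epoch):
    """Learning rate in effect during `epoch` (0-based).

    The rate is multiplied by `gamma` once for every milestone that is
    less than or equal to the current epoch.
    """
    return base_lr * gamma ** bisect_right(milestones, epoch)


MILESTONES = [3, 6, 9, 12, 15, 18]
BASE_LR = 1e-4
GAMMA = 0.5

for epoch in range(20):
    print(epoch, multistep_lr(BASE_LR, MILESTONES, GAMMA, epoch))
```

Under these assumptions the rate stays at 1e-4 for epochs 0 to 2, is halved at every third epoch, and has been halved six times by epoch 18.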
