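Background

With technology advancing rapidly, the virtual reality industry has, since 2020, been moving from its exploratory phase into a period of fast growth. The arrival of 5G brings high-bandwidth, highly reliable, low-latency networks that give VR a solid network foundation, and VR now has practical value in a growing number of scenarios, typically the industrial internet, virtual simulation, cultural tourism and museums, smart transportation, smart energy, smart healthcare, smart campuses, and smart agriculture. At the same time, the industry is demanding higher clarity, smoothness, and interactivity. Taking capture and publishing on the Android platform as an example, this article introduces a low-latency solution for head-mounted displays and similar terminals.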

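Implementation

Most head-mounted displays run on Android. This article uses capture of the Unity window, the microphone, and Unity's internal audio in a Unity environment to walk through the implementation. Audio capture falls into four cases: microphone only, Unity audio only, microphone mixed with Unity audio, and two Unity audio streams mixed together. The captured raw audio and video data is handed to the module wrapped by the Android native layer, which encodes and packages it and sends it to the server over RTMP, giving an RTMP live-streaming solution with millisecond-level latency.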

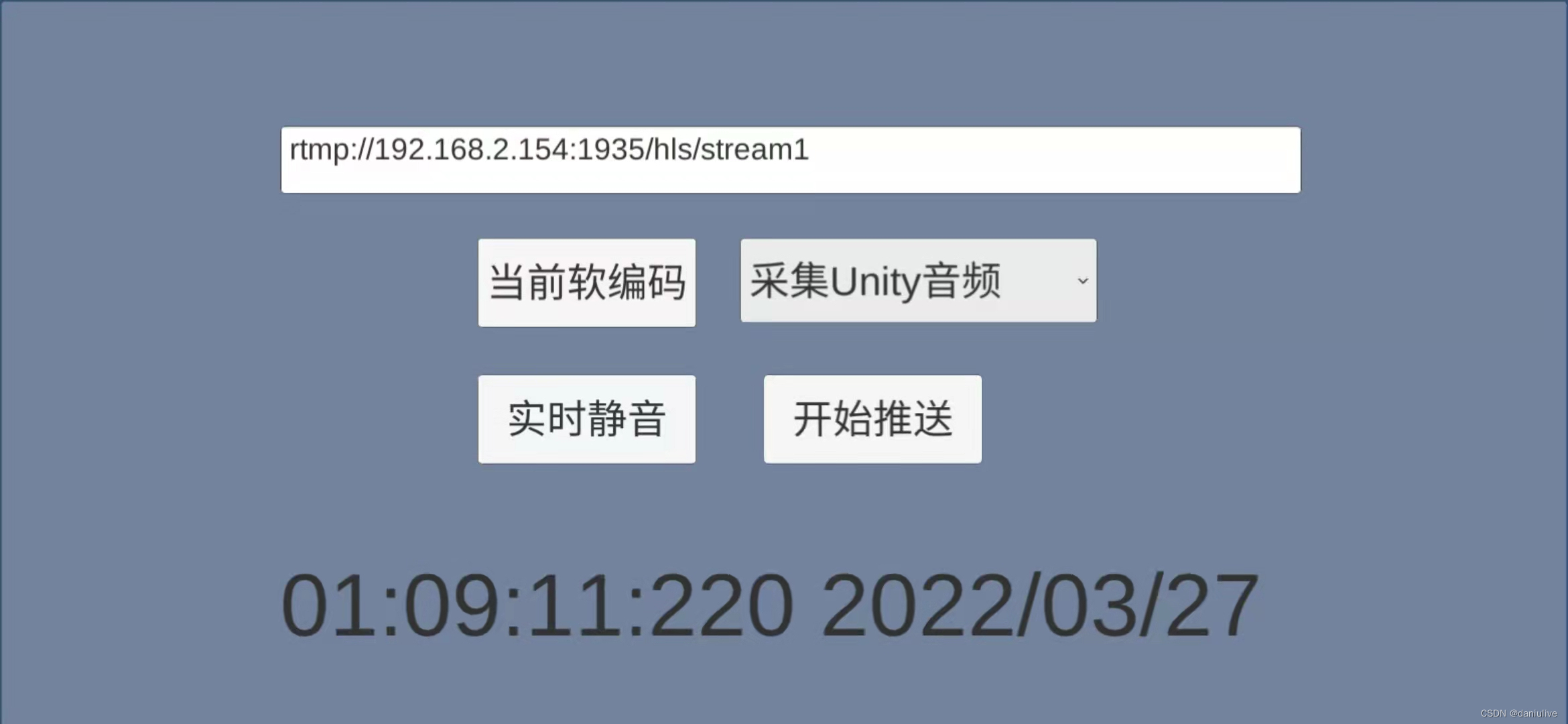

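Audio can be captured from a single source or mixed. If you capture the microphone, remember to request the microphone permission at runtime. Since the demo only showcases functionality, its UI is fairly rough.

First comes the definition of the audio capture types. We divide audio into the following categories: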

/* Audio source options */

public enum PB_AUDIO_OPTION : uint

{

AUDIO_OPTION_CAPTURE_MIC = 0x0, /* Capture microphone audio */

AUDIO_OPTION_EXTERNAL_PCM_DATA = 0x1, /* External PCM data */

AUDIO_OPTION_MIC_EXTERNAL_PCM_MIXER = 0x2, /* Mix microphone with external PCM data */

AUDIO_OPTION_TWO_EXTERNAL_PCM_MIXER = 0x3, /* Mix two external PCM streams */

}

1. Basic initialization

Basic initialization mainly sets up the link to the Android wrapper layer and checks the audio permission at runtime.

private void Start()

{

game_object_ = this.gameObject.name; // Get the GameObject name

AndroidJavaClass android_class = new AndroidJavaClass("com.unity3d.player.UnityPlayer");

java_obj_cur_activity_ = android_class.GetStatic<AndroidJavaObject>("currentActivity");

pusher_obj_ = new AndroidJavaObject("com.daniulive.smartpublisher.SmartPublisherUnity3d");

NT_PB_U3D_Init();

//NT_U3D_SetSDKClientKey("", "", 0);

audioOptionSel.onValueChanged.AddListener(SelAudioPushType);

btn_encode_mode_.onClick.AddListener(OnEncodeModeBtnClicked);

btn_pusher_.onClick.AddListener(OnPusherBtnClicked);

btn_mute_.onClick.AddListener(OnMuteBtnClicked);

#if PLATFORM_ANDROID

if (!Permission.HasUserAuthorizedPermission(Permission.Microphone))

{

Permission.RequestUserPermission(Permission.Microphone);

permission_dialog_ = new GameObject();

}

#endif

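}

2. OpenPusher implementation

OpenPusher mainly calls the Open interface of the underlying module to create a publisher instance and return its handle. If you only need to publish audio or video alone, that can also be configured through NT_PB_U3D_Open().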

private void OpenPusher()

{

if ( java_obj_cur_activity_ == null )

{

Debug.LogError("getApplicationContext is null");

return;

}

int audio_opt = 1;

int video_opt = 1;

pusher_handle_ = NT_PB_U3D_Open(audio_opt, video_opt, video_width_, video_height_);

if (pusher_handle_ != 0)

Debug.Log("NT_PB_U3D_Open success");

else

Debug.LogError("NT_PB_U3D_Open fail");

}

3. InitAndSetConfig implementation

InitAndSetConfig sets the SDK parameters, such as software or hardware encoding, the audio capture type, and the video frame rate and bitrate.

private void InitAndSetConfig()

{

if (is_hw_encode_)

{

int h264HWKbps = setHardwareEncoderKbps(true, video_width_, video_height_);

Debug.Log("h264HWKbps: " + h264HWKbps);

int isSupportH264HWEncoder = NT_PB_U3D_SetVideoHWEncoder(pusher_handle_, h264HWKbps);

if (isSupportH264HWEncoder == 0)

{

Debug.Log("Great, it supports h.264 hardware encoder!");

}

}

else {

if (is_sw_vbr_mode_) //H.264 software encoder

{

int is_enable_vbr = 1;

int video_quality = CalVideoQuality(video_width_, video_height_, true);

int vbr_max_bitrate = CalVbrMaxKBitRate(video_width_, video_height_);

NT_PB_U3D_SetSwVBRMode(pusher_handle_, is_enable_vbr, video_quality, vbr_max_bitrate);

//NT_PB_U3D_SetSWVideoEncoderSpeed(pusher_handle_, 2);

}

}

NT_PB_U3D_SetAudioCodecType(pusher_handle_, 1);

NT_PB_U3D_SetFPS(pusher_handle_, 25);

NT_PB_U3D_SetGopInterval(pusher_handle_, 25*2);

//NT_PB_U3D_SetSWVideoBitRate(pusher_handle_, 600, 1200);

if (audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_MIC_EXTERNAL_PCM_MIXER

|| audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_TWO_EXTERNAL_PCM_MIXER)

{

NT_PB_U3D_SetAudioMix(pusher_handle_, 1);

}

else

{

NT_PB_U3D_SetAudioMix(pusher_handle_, 0);

}

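}

4. Push() wrapper

Once the publisher handle is obtained, set the publishing parameters and the RTMP URL, then capture audio and video and push them to the RTMP server. To push microphone audio, start the microphone and set its sample rate and channel count; when mixing is needed, the Unity audio is posted as well: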

public void Push()

{

if (is_running_)

{

Debug.Log("Already publishing..");

return;

}

video_width_ = Screen.width;

video_height_ = Screen.height;

// Get the URL from the input field

string url = input_url_.text.Trim();

if (!url.StartsWith("rtmp://"))

{

push_url_ = "rtmp://192.168.2.154:1935/hls/stream1";

}

else

{

push_url_ = url;

}

OpenPusher();

if (pusher_handle_ == 0)

return;

NT_PB_U3D_Set_Game_Object(pusher_handle_, game_object_);

/* ++ Pre-publish configuration can be added here ++ */

InitAndSetConfig();

NT_PB_U3D_SetPushUrl(pusher_handle_, push_url_);

/* -- Pre-publish configuration can be added here -- */

int flag = NT_PB_U3D_StartPublisher(pusher_handle_);

if (flag == DANIULIVE_RETURN_OK)

{

Debug.Log("Publishing started..");

is_running_ = true;

if (audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_EXTERNAL_PCM_DATA

|| audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_MIC_EXTERNAL_PCM_MIXER

|| audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_TWO_EXTERNAL_PCM_MIXER)

{

PostUnityAudioClipData();

}

if (audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_CAPTURE_MIC

|| audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_MIC_EXTERNAL_PCM_MIXER)

{

// Take care when there are multiple instances or other StartXXX calls have been made

NT_PB_U3D_StartAudioRecord(44100, 1);

NT_PB_U3D_EnableAudioRecordCapture(pusher_handle_, true);

}

}

else

{

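Debug.LogError("Publishing failed..");

}

}

5. Stop publishing

Before stopping, if AudioSource or microphone data is being captured, stop that first and then call NT_PB_U3D_StopPublisher(). If no other operation such as recording is in progress, follow up with NT_PB_U3D_Close() and NT_PB_U3D_UnInit(), and reset the publisher handle.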
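The checks on audio_push_type_ that decide which sources to mix, start, or stop recur in InitAndSetConfig(), Push(), and ClosePusher(). As a minimal sketch, they could be gathered into three predicates. The class AudioOptionSketch and its method names are hypothetical and not part of the SDK; the enum is repeated here only so that the block compiles on its own.

```csharp
using System;

// Local copy of the article's enum so the sketch is self-contained.
public enum PB_AUDIO_OPTION : uint
{
    AUDIO_OPTION_CAPTURE_MIC = 0x0,
    AUDIO_OPTION_EXTERNAL_PCM_DATA = 0x1,
    AUDIO_OPTION_MIC_EXTERNAL_PCM_MIXER = 0x2,
    AUDIO_OPTION_TWO_EXTERNAL_PCM_MIXER = 0x3,
}

// Hypothetical helper, not an SDK type.
public static class AudioOptionSketch
{
    // True when Unity (external PCM) audio is part of the stream.
    public static bool UsesUnityAudio(PB_AUDIO_OPTION option)
    {
        return option == PB_AUDIO_OPTION.AUDIO_OPTION_EXTERNAL_PCM_DATA
            || option == PB_AUDIO_OPTION.AUDIO_OPTION_MIC_EXTERNAL_PCM_MIXER
            || option == PB_AUDIO_OPTION.AUDIO_OPTION_TWO_EXTERNAL_PCM_MIXER;
    }

    // True when the microphone has to be started and stopped.
    public static bool UsesMicrophone(PB_AUDIO_OPTION option)
    {
        return option == PB_AUDIO_OPTION.AUDIO_OPTION_CAPTURE_MIC
            || option == PB_AUDIO_OPTION.AUDIO_OPTION_MIC_EXTERNAL_PCM_MIXER;
    }

    // True when two audio inputs are mixed.
    public static bool NeedsMix(PB_AUDIO_OPTION option)
    {
        return option == PB_AUDIO_OPTION.AUDIO_OPTION_MIC_EXTERNAL_PCM_MIXER
            || option == PB_AUDIO_OPTION.AUDIO_OPTION_TWO_EXTERNAL_PCM_MIXER;
    }
}
```

With these in place, the value passed to NT_PB_U3D_SetAudioMix would be `NeedsMix(option) ? 1 : 0`, and the start and stop paths would test the same two capture predicates instead of repeating the comparisons.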

private void ClosePusher()

{

if (audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_EXTERNAL_PCM_DATA

|| audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_MIC_EXTERNAL_PCM_MIXER

|| audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_TWO_EXTERNAL_PCM_MIXER)

{

StopAudioSource();

}

if (audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_CAPTURE_MIC

|| audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_MIC_EXTERNAL_PCM_MIXER)

{

NT_PB_U3D_EnableAudioRecordCapture(pusher_handle_, false);

// Take care when there are multiple instances or other StartXXX calls have been made

NT_PB_U3D_StopAudioRecord();

}

if (texture_ != null)

{

UnityEngine.Object.Destroy(texture_);

texture_ = null;

}

int flag = NT_PB_U3D_StopPublisher(pusher_handle_);

if (flag == DANIULIVE_RETURN_OK)

{

Debug.Log("Stopped successfully..");

}

else

{

Debug.LogError("Failed to stop..");

}

flag = NT_PB_U3D_Close(pusher_handle_);

if (flag == DANIULIVE_RETURN_OK)

{

Debug.Log("Closed successfully..");

}

else

{

Debug.LogError("Failed to close..");

}

pusher_handle_ = 0;

NT_PB_U3D_UnInit();

is_running_ = false;

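}

6. Data capture

Camera and screen capture still go through the interfaces wrapped by the Android native layer and are not covered again here. To capture the Unity window, the following code can serve as a reference: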

if (texture_ == null || video_width_ != Screen.width || video_height_ != Screen.height)

{

if (texture_ != null)

{

UnityEngine.Object.Destroy(texture_);

texture_ = null;

}

video_width_ = Screen.width;

video_height_ = Screen.height;

scale_width_ = (video_width_ + 1) / 2;

scale_height_ = (video_height_ + 1) / 2;

if (scale_width_ % 2 != 0)

{

scale_width_ = scale_width_ + 1;

}

if (scale_height_ % 2 != 0)

{

scale_height_ = scale_height_ + 1;

}

texture_ = new Texture2D(video_width_, video_height_, TextureFormat.RGBA32, false);

Debug.Log("OnPostRender screen changed--");

return;

}

texture_.ReadPixels(new Rect(0, 0, video_width_, video_height_), 0, 0, false);

//texture_.ReadPixels(new Rect(0, Screen.height * 0.5f, video_width_, video_height_), 0, 0, false); // half-screen test

texture_.Apply();

The raw data is then read from the texture.
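The fragment above derives scale_width_ and scale_height_ by halving the screen size (rounding up) and then bumping any odd result to the next even number, presumably because video encoders commonly expect even dimensions. That arithmetic can be isolated in a small function. This is only a sketch; ScaleSketch and HalfEven are hypothetical names, not SDK calls.

```csharp
using System;

// Hypothetical helper mirroring the scale_width_/scale_height_ arithmetic.
public static class ScaleSketch
{
    // Halve a dimension, rounding up, then round up to the next even number.
    public static int HalfEven(int size)
    {
        if (size <= 0)
        {
            throw new ArgumentOutOfRangeException(nameof(size));
        }

        int half = (size + 1) / 2;        // integer division, rounds odd sizes up
        return (half % 2 != 0) ? half + 1 : half;
    }
}
```

For a 1920x1080 screen this gives 960x540; a width of 1082 would first halve to 541 and then be raised to 542.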

To capture Unity AudioClip data:

/// <summary>

/// Get AudioClip data

/// </summary>

private void PostUnityAudioClipData()

{

// Re-initialize

TimerManager.UnRegister(this);

if (audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_EXTERNAL_PCM_DATA

|| audio_push_type_ == (int)PB_AUDIO_OPTION.AUDIO_OPTION_TWO_EXTERNAL_PCM_MIXER)

{

audio_clip_info_ = new AudioClipInfo();

audio_clip_info_.audio_source_ = GameObject.Find("Canvas/Panel/AudioSource").GetComponent<AudioSource>();

if (audio_clip_info_.audio_source_ == null)

{

Debug.LogError("audio source is null..");

return;

}

if (audio_clip_info_.audio_source_.clip == null)

{

Debug.LogError("audio clip is null..");

return;

}

audio_clip_info_.audio_clip_ = audio_clip_info_.audio_source_.clip;

audio_clip_info_.audio_clip_offset_ = 0;

audio_clip_info_.audio_clip_length_ = audio_clip_info_.audio_clip_.samples * audio_clip_info_.audio_clip_.channels;

}

TimerManager.Register(this, 0.039999f, (PostPCMData), false);

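}

7. Data handoff

For Unity video, once the Texture data is obtained, pass it to the SDK layer by calling NT_PB_U3D_OnCaptureVideoRGBA32Data().

For data captured from a Unity AudioClip, call NT_PB_U3D_OnPCMFloatArray() to pass it to the wrapper module.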

NT_PB_U3D_OnPCMFloatArray(pusher_handle_, pcm_data.data_,

0, pcm_data.size_,

pcm_data.sample_rate_,

pcm_data.channels_,

pcm_data.per_channel_sample_number_);

8. Event callback handling
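The callback in this section receives a single comma-separated string from the Android layer. Judging from how the listing indexes the split result, it carries six fields: the handle, the event code, and param1 through param4. A defensive parse might look like the following sketch. PublisherEvent and EventParserSketch are hypothetical names introduced for illustration, not SDK types.

```csharp
using System;

// Hypothetical container for one event; field names are illustrative.
public sealed class PublisherEvent
{
    public string Handle;
    public int Code;
    public string[] Params; // param1..param4, in order
}

// Hypothetical parser, not part of the SDK.
public static class EventParserSketch
{
    // Returns false instead of throwing when the string is malformed.
    public static bool TryParse(string raw, out PublisherEvent evt)
    {
        evt = null;
        if (string.IsNullOrEmpty(raw))
        {
            return false;
        }

        string[] parts = raw.Split(',');
        if (parts.Length < 6)
        {
            return false; // handle, code, and four params are all required
        }

        int code;
        if (!int.TryParse(parts[1], out code))
        {
            return false; // event code must be a decimal integer
        }

        evt = new PublisherEvent
        {
            Handle = parts[0],
            Code = code,
            Params = new[] { parts[2], parts[3], parts[4], parts[5] },
        };
        return true;
    }
}
```

Like the original listing, this splits on every comma, so a parameter that itself contains a comma (a file path, for instance) would be cut short; whether the SDK can emit such values is not stated in the article.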

/// <summary>

/// Event string passed from Android

/// </summary>

/// <param name="param">Comma-separated fields: handle, event code, param1 to param4</param>

public void onNTSmartEvent(string param)

{

string[] strs = param.Split(',');

// Six fields are read below (handle, code, param1 to param4), so require all of them

if (strs.Length < 6)

{

Debug.Log("[onNTSmartEvent] invalid parameters from Android");

return;

}

string player_handle =strs[0];

string code = strs[1];

string param1 = strs[2];

string param2 = strs[3];

string param3 = strs[4];

string param4 = strs[5];

Debug.Log("[onNTSmartEvent] code: 0x" + Convert.ToString(Convert.ToInt32(code), 16));

String publisher_event = "";

switch (Convert.ToInt32(code))

{

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_STARTED:

publisher_event = "Started..";

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_CONNECTING:

publisher_event = "Connecting..";

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_CONNECTION_FAILED:

publisher_event = "Connection failed..";

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_CONNECTED:

publisher_event = "Connected..";

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_DISCONNECTED:

publisher_event = "Disconnected..";

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_STOP:

publisher_event = "Stopped..";

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_RECORDER_START_NEW_FILE:

publisher_event = "Started a new recording file: " + param3;

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_ONE_RECORDER_FILE_FINISHED:

publisher_event = "Finished a recording file: " + param3;

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_SEND_DELAY:

publisher_event = "Send delay: " + param1 + ", frames: " + param2;

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_CAPTURE_IMAGE:

publisher_event = "Snapshot: " + param1 + ", path: " + param3;

if (Convert.ToInt32(param1) == 0)

{

publisher_event = publisher_event + ", snapshot succeeded..";

}

else

{

publisher_event = publisher_event + ", snapshot failed..";

}

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUBLISHER_RTSP_URL:

publisher_event = "RTSP service URL: " + param3;

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUSH_RTSP_SERVER_RESPONSE_STATUS_CODE:

publisher_event = "RTSP status code received, codeID: " + param1 + ", RTSP URL: " + param3;

break;

case EVENTID.EVENT_DANIULIVE_ERC_PUSH_RTSP_SERVER_NOT_SUPPORT:

publisher_event = "Server does not support RTSP publishing, RTSP URL: " + param3;

break;

}

Debug.Log(publisher_event);

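}

Summary

If you need to capture real-time data on a head-mounted display, the approach above can serve as a reference: obtain the Texture and AudioClip data and hand it directly to the underlying module wrapped by the Android layer, which encodes and packages the data and sends it according to the protocol specification. Interested developers can also refer to our second-level interface wrapper around the Android module, listed below, and experiment on their own.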

/*

* WebSite: daniusdk.com

* SmartPublisherAndroidMono

* Interface wrapper for interaction between the Android native module and Unity

*/

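1. [Call first] NT_PB_U3D_Init: initializes the publisher instance; currently reserved.

2. [Call last] NT_PB_U3D_UnInit: uninitializes the publishing SDK; call it last.

3. [Start microphone] NT_PB_U3D_StartAudioRecord: make sure sample_rate is valid; only {44100, 8000, 16000, 24000, 32000, 48000} are currently supported, and 44100 is recommended. channels currently supports mono (1) and stereo (2), and mono (1) is recommended. If you only need Unity audio, there is no need to enable microphone capture; if you need microphone audio, request the microphone permission at runtime in Unity.

4. [Stop microphone] NT_PB_U3D_StopAudioRecord: if the microphone was started, stop microphone capture before calling the stop-publishing operations.

5. [Get publisher handle] NT_PB_U3D_Open: sets the context information and returns the publisher instance handle.

6. [Enable microphone capture] NT_PB_U3D_EnableAudioRecordCapture: sets whether audio captured by the microphone is used; enabled when is_enable_audio_record_capture is true.

7. [Set GameObject] NT_PB_U3D_Set_Game_Object: registers the GameObject used for message passing.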

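8. [Set H.264 hardware encoding] NT_PB_U3D_SetVideoHWEncoder: enables H.264 hardware encoding on specific device models.

9. [Set H.265 hardware encoding] NT_PB_U3D_SetVideoHevcHWEncoder: enables H.265 hardware encoding on specific device models.

10. [Set software VBR encoding] NT_PB_U3D_SetSwVBRMode: sets the variable-bitrate mode for software encoding.

11. [Set key frame interval] NT_PB_U3D_SetGopInterval: sets the key frame interval; in general it can be set to 2 to 4 times the frame rate.

12. [Software encoding bitrate] NT_PB_U3D_SetSWVideoBitRate: sets the bitrate for software encoding.

13. [Set frame rate] NT_PB_U3D_SetFPS: sets the video frame rate.

14. [Set encoding profile] NT_PB_U3D_SetSWVideoEncoderProfile: sets the profile for software encoding.

15. [Set software encoding speed] NT_PB_U3D_SetSWVideoEncoderSpeed: sets the speed for software encoding.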

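16. [Set mixing] NT_PB_U3D_SetAudioMix: enables or disables mixing; is_mix is 1 to mix and 0 not to mix, and mixing is off by default. Mixing of two audio streams is currently supported.

17. [Real-time mute] NT_PB_U3D_SetMute: mutes audio in real time during publishing.

18. [Input volume] NT_PB_U3D_SetInputAudioVolume: sets the input volume. Calling this interface is generally not recommended. index is normally 0 or 1: without mixing only 0 is used; with mixing, 0 and 1 set the volume of each input. It may be useful in some special cases, and amplifying the volume is generally not recommended.

19. [Set audio codec type] NT_PB_U3D_SetAudioCodecType: sets the audio codec type; AAC by default.

20. [Set audio bitrate] NT_PB_U3D_SetAudioBitRate: sets the audio encoding bitrate; currently effective only for AAC.

21. [Set Speex encoding quality] NT_PB_U3D_SetSpeexEncoderQuality: sets the Speex audio encoding quality.

22. [Set noise suppression] NT_PB_U3D_SetNoiseSuppression: sets audio noise suppression.

23. [Set automatic gain control] NT_PB_U3D_SetAGC: sets audio automatic gain control.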

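24. [Set snapshot flag] NT_PB_U3D_SetSaveImageFlag: sets whether snapshots are needed during publishing or recording.

25. [Real-time snapshot] NT_PB_U3D_SaveCurImage: takes a snapshot during publishing or recording.

26. [Recording audio control] NT_PB_U3D_SetRecorderAudio: audio recording switch, intended for finer-grained control over recording. It normally does not need to be called; a typical use is publishing both audio and video while recording only video, in which case this interface turns audio recording off.

27. [Recording video control] NT_PB_U3D_SetRecorderVideo: video recording switch, intended for finer-grained control over recording. It normally does not need to be called; a typical use is publishing both audio and video while recording only audio, in which case this interface turns video recording off.

28. [Recording] NT_PB_U3D_CreateFileDirectory: creates the recording storage directory.

29. [Recording] NT_PB_U3D_SetRecorderDirectory: sets the recording storage directory.

30. [Recording] NT_PB_U3D_SetRecorderFileMaxSize: sets the maximum size of a single recording file.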

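31. [Data delivery] NT_PB_U3D_OnCaptureVideoI420Data: delivers YUV data in real time.

32. [Data delivery] NT_PB_U3D_OnCaptureVideoRGB24Data: delivers RGB24 data in real time.

33. [Data delivery] NT_PB_U3D_OnCaptureVideoRGBA32Data: delivers RGBA data in real time.

34. [Data delivery] NT_PB_U3D_OnPCMData: delivers PCM data in real time as a byte array.

35. [Data delivery] NT_PB_U3D_OnPCMShortArray: delivers PCM data in real time as a short array.

36. [Data delivery] NT_PB_U3D_OnPCMFloatArray: delivers PCM data in real time as a float array.

37. [Mix data delivery] NT_PB_U3D_OnMixPCMShortArray: passes PCM audio for mixing to the SDK, one call per 10 ms of audio, as a short array.

38. [Mix data delivery] NT_PB_U3D_OnMixPCMFloatArray: passes PCM audio for mixing to the SDK, one call per 10 ms of audio, as a float array.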

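39. [Push URL] NT_PB_U3D_SetPushUrl: sets the RTMP URL to publish to.

40. [Start RTMP publishing] NT_PB_U3D_StartPublisher: starts RTMP publishing.

41. [Stop RTMP publishing] NT_PB_U3D_StopPublisher: stops RTMP publishing.

42. [Start recording] NT_PB_U3D_StartRecorder: starts recording.

43. [Pause recording] NT_PB_U3D_PauseRecorder: pauses recording.

44. [Stop recording] NT_PB_U3D_StopRecorder: stops recording.

45. [Close] NT_PB_U3D_Close: closes the publisher instance.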
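Two constraints in the list lend themselves to a small check: the sample rates and channel counts accepted by NT_PB_U3D_StartAudioRecord, and the 10 ms cadence of the mix delivery interfaces. The sketch below validates a format and computes how many samples 10 ms of audio holds. PcmChunkSketch and its methods are hypothetical names; the rate list is the one given for microphone capture, and applying it to mix input, as well as counting samples in interleaved form, are assumptions rather than documented SDK behavior.

```csharp
using System;

// Hypothetical helper, not part of the SDK.
public static class PcmChunkSketch
{
    // Rates the article lists for NT_PB_U3D_StartAudioRecord.
    private static readonly int[] SupportedRates =
        { 8000, 16000, 24000, 32000, 44100, 48000 };

    // True for a listed sample rate with 1 (mono) or 2 (stereo) channels.
    public static bool IsSupported(int sampleRate, int channels)
    {
        return Array.IndexOf(SupportedRates, sampleRate) >= 0
            && (channels == 1 || channels == 2);
    }

    // Samples per channel in 10 ms of audio.
    public static int SamplesPerChannelPer10Ms(int sampleRate)
    {
        return sampleRate / 100; // every listed rate is divisible by 100
    }

    // Total interleaved samples in 10 ms of audio.
    public static int SamplesPer10Ms(int sampleRate, int channels)
    {
        if (!IsSupported(sampleRate, channels))
        {
            throw new ArgumentException("unsupported sample rate or channel count");
        }

        return SamplesPerChannelPer10Ms(sampleRate) * channels;
    }
}
```

At 44100 Hz mono, for instance, one 10 ms chunk holds 441 samples; at 48000 Hz stereo it holds 960 interleaved samples.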
