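Deep learning is essentially representation learning: a multi-layer nonlinear neural network learns, from low-level features, high-level abstract features that are more useful for the task at hand. In practice we often need to take a model that has already been trained for one task, use it to compute a feature representation, and feed that representation into another model. This requires obtaining the output of an intermediate layer of the model; two concrete methods are presented in code below.

Deep learning has strong feature-representation power. Sometimes, after training a classification model, we do not want to use it for classification but to extract features for another task, such as computing image similarity. First, here is how to extract features with TensorFlow.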

1. Give the layer you want to extract an explicit name when defining the model, for example:

self.h_pool_flat = tf.reshape(self.h_pool, [-1, num_filters_total], name='h_pool_flat')

2. Fetch the features of the h_pool_flat layer by calling sess.run():

feature = graph.get_operation_by_name("h_pool_flat").outputs[0]

batch_predictions, batch_feature = sess.run([predictions, feature], {input_x: x_test_batch, dropout_keep_prob: 1.0})
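Once features have been extracted, they can be compared directly, which is the basis of the image-similarity use case mentioned above. The following is a minimal NumPy sketch, not part of the original code: it assumes the extracted features form a 2-D array with one row per sample, and the function name `cosine_similarity_matrix` and the toy values are hypothetical.

```python
import numpy as np


def cosine_similarity_matrix(features):
    """Return the pairwise cosine similarity of the rows of `features`."""
    features = np.asarray(features, dtype=np.float64)
    norms = np.linalg.norm(features, axis=1, keepdims=True)
    # Guard against division by zero for all-zero feature vectors.
    unit = features / np.maximum(norms, 1e-12)
    return unit @ unit.T


# Toy stand-in for `batch_feature`: 3 samples with 4-dimensional features.
batch_feature = np.array([
    [1.0, 0.0, 2.0, 0.0],
    [2.0, 0.0, 4.0, 0.0],   # same direction as row 0
    [0.0, 3.0, 0.0, 1.0],   # orthogonal to row 0
])
similarity = cosine_similarity_matrix(batch_feature)

# For each sample, the index of its most similar *other* sample.
masked = similarity.copy()
np.fill_diagonal(masked, -np.inf)
nearest = masked.argmax(axis=1)

print(np.round(similarity, 3))
print(nearest)
```

Cosine similarity ignores the magnitude of the feature vectors, which is why rows 0 and 1 count as identical here; whether that is desirable depends on the task.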

Two ways to output intermediate-layer results in Keras

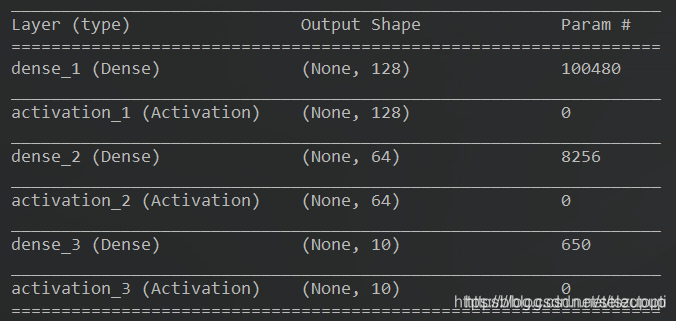

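The task here is to predict the labels of the MNIST image dataset with a multi-layer fully connected neural network. The model is relatively simple; its structure is shown in the diagram.

As the diagram shows, the model is a three-layer fully connected neural network. The code for this task is given in full further below; its training results are as follows.

Taking the last epoch as an example: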

Epoch 20/20

48000/48000 [================] - 1s 15us/step - loss: 0.1928 - acc: 0.9455 - val_loss: 0.1900 - val_acc: 0.9473

10000/10000 [==================] - 0s 15us/step

Test score: 0.1925726256787777

Test accuracy: 0.9442
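To make concrete what "the output of an intermediate layer" means for this network, here is a rough NumPy sketch of a 784-128-64-10 forward pass that keeps every intermediate result. It is not Keras code: the weights are random rather than trained, the `activation_N` names are assumptions, and `dense_1` to `dense_3` simply mirror the layer naming used in this article.

```python
import numpy as np


def relu(x):
    return np.maximum(x, 0.0)


def softmax(x):
    # Subtract the row-wise maximum for numerical stability.
    shifted = x - x.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def forward(x, params):
    """Run a 784-128-64-10 MLP and return every intermediate output by name."""
    outputs = {}
    outputs["dense_1"] = x @ params["W1"] + params["b1"]
    outputs["activation_1"] = relu(outputs["dense_1"])
    outputs["dense_2"] = outputs["activation_1"] @ params["W2"] + params["b2"]
    outputs["activation_2"] = relu(outputs["dense_2"])
    outputs["dense_3"] = outputs["activation_2"] @ params["W3"] + params["b3"]
    outputs["activation_3"] = softmax(outputs["dense_3"])
    return outputs


rng = np.random.default_rng(0)
sizes = [784, 128, 64, 10]
params = {}
for i, (n_in, n_out) in enumerate(zip(sizes[:-1], sizes[1:]), start=1):
    # Random weights stand in for trained ones.
    params[f"W{i}"] = rng.normal(0.0, 0.05, size=(n_in, n_out))
    params[f"b{i}"] = np.zeros(n_out)

x = rng.random((5, 784))  # 5 fake "images" with pixel values in [0, 1)
outputs = forward(x, params)
for name, value in outputs.items():
    print(name, value.shape)
```

In this layout `dense_3` holds the raw scores before the softmax, so extracting it yields 10 values per sample that do not necessarily sum to 1.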

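Now we want to obtain the output of the third fully connected layer, dense_3 (the 10-unit layer that precedes the softmax activation). There are two common ways to do this: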

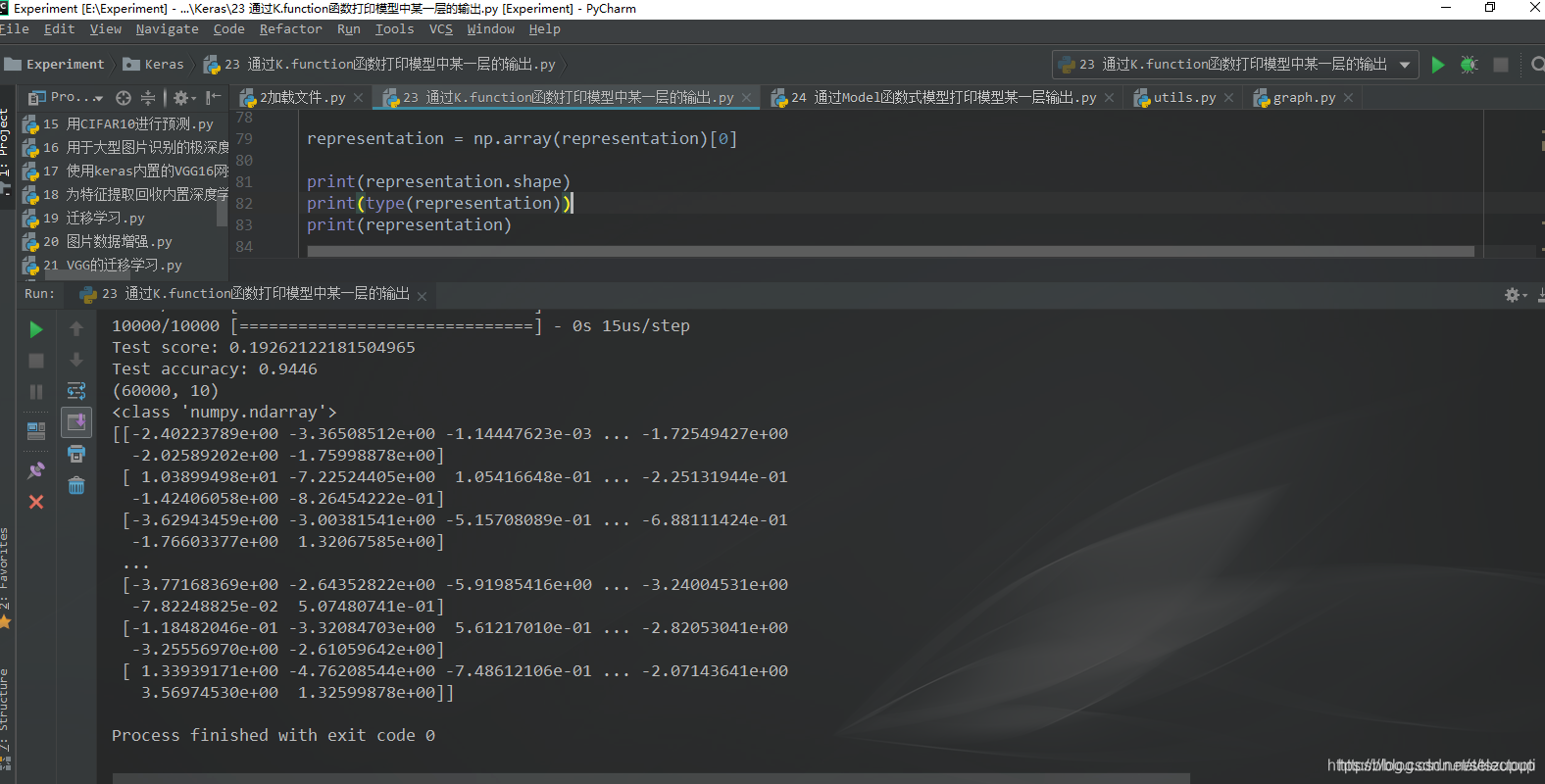

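1 Printing an intermediate layer's output with K.function()

The idea is to create a Keras function with K.function() and then feed the data into that function.

Instantiate the Keras function:

representation_layer = K.function(inputs=[model.layers[0].input], outputs=[model.get_layer('dense_3').output])

Call the instantiated Keras function to get the learned features:

representation = representation_layer([X_train])

The complete code and its output are as follows: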

# -*- coding: utf-8 -*-

"""

@author:taoshouzheng

@time:2019/4/10 19:24

@email:tsz1216@sina.com

"""

import numpy as np

from keras.datasets import mnist

from keras.models import Sequential

from keras.layers.core import Dense, Activation

from keras.optimizers import SGD

from keras.utils import np_utils

from keras import backend as K

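# Set the random seed

np.random.seed(1)

# Set the hyperparameters

# Number of epochs

NB_EPOCH = 20

# Batch size

BATCH_SIZE = 128

# Logging mode

VERBOSE = 1

# Number of classes

NB_CLASSES = 10

# Optimizer

OPTIMIZER = SGD()

# Fraction of the training set held out for validation

VALIDATION_SPLIT = 0.2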

# Data: shuffled and split into training and test sets

(X_train, Y_train), (X_test, Y_test) = mnist.load_data() # X_train is 60000 x 28 x 28; it is reshaped to 60000 x 784

RESHAPE = 784

X_train = X_train.reshape(60000, RESHAPE) # reshape

X_test = X_test.reshape(10000, RESHAPE)

X_train = X_train.astype('float32') # convert the dtype

X_test = X_test.astype('float32')

X_train /= 255 # normalize to [0, 1]

X_test /= 255

print(X_train.shape[0], 'train samples')

print(X_test.shape[0], 'test samples')

# Convert the class vectors to binary (one-hot) class matrices

Y_train = np_utils.to_categorical(Y_train, NB_CLASSES)

Y_test = np_utils.to_categorical(Y_test, NB_CLASSES)

# Build the model

model = Sequential() # create a Sequential model instance

model.add(Dense(units=128, input_shape=(RESHAPE, ))) # the input is a 784-dimensional array

model.add(Activation('relu'))

model.add(Dense(units=64))

model.add(Activation('relu'))

model.add(Dense(units=NB_CLASSES))

model.add(Activation('softmax'))

# Print the model summary

model.summary()

# Compile the model

model.compile(loss='categorical_crossentropy', optimizer=OPTIMIZER, metrics=['accuracy'])

# Train the model

history = model.fit(X_train, Y_train, batch_size=BATCH_SIZE, epochs=NB_EPOCH, verbose=VERBOSE, validation_split=VALIDATION_SPLIT)

score = model.evaluate(X_test, Y_test, verbose=VERBOSE)

print('Test score:', score[0])

print('Test accuracy:', score[1])

# Instantiate the Keras function

# Note: inputs and outputs here should be list or tuple objects

representation_layer = K.function(inputs=[model.layers[0].input], outputs=[model.get_layer('dense_3').output])

# Call the instantiated Keras function to get the learned features

representation = representation_layer([X_train])

representation = np.array(representation)[0]

print(representation.shape)

print(type(representation))

print(representation)
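The `np.array(representation)[0]` step above is needed because, with `outputs` passed as a one-element list, the call returns a list holding one array per requested output. The sketch below illustrates only that unpacking, using a dummy array as a stand-in; the shape (6, 10) is an arbitrary assumption, not the real output.

```python
import numpy as np

# Stand-in for what `representation_layer([X_train])` returns: a list with one
# array per entry in `outputs`. The values here are dummy zeros.
representation = [np.zeros((6, 10), dtype="float32")]

stacked = np.array(representation)  # adds a leading axis: shape (1, 6, 10)
features = stacked[0]               # back to shape (6, 10)

# Indexing the list directly gives the same array without the extra copy.
features_direct = representation[0]

print(stacked.shape, features.shape, features_direct.shape)
```

When only one output is requested, indexing the list with `[0]` is probably the simpler choice; converting to an array first only makes sense when several outputs of identical shape are stacked.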

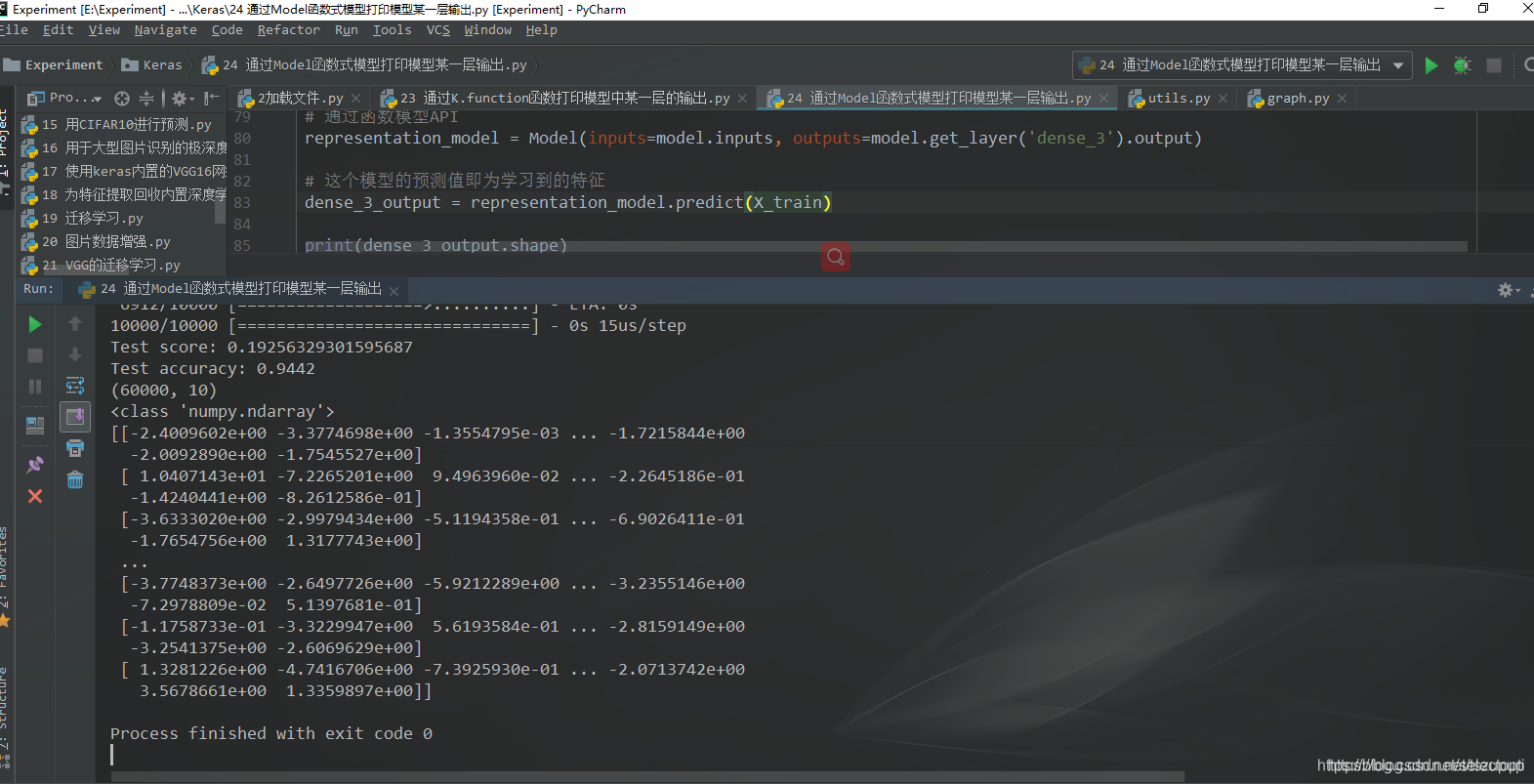

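2 Printing an intermediate layer's output with the functional API

Similar to K.function(), this approach uses Model() to build a new model from the desired input-output relationship and then runs prediction on the input data with the new model.

Build the model with the functional API:

representation_model = Model(inputs=model.inputs, outputs=model.get_layer('dense_3').output)

The predictions of this model are the learned features:

dense_3_output = representation_model.predict(X_train)

The complete code for this second method and its output are as follows: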

# -*- coding: utf-8 -*-

"""

@author:taoshouzheng

@time:2019/4/10 19:25

@email:tsz1216@sina.com

"""

import numpy as np

from keras.datasets import mnist

from keras.models import Sequential, Model

from keras.layers.core import Dense, Activation

from keras.optimizers import SGD

from keras.utils import np_utils

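# Set the random seed

np.random.seed(1)

# Set the hyperparameters

# Number of epochs

NB_EPOCH = 20

# Batch size

BATCH_SIZE = 128

# Logging mode

VERBOSE = 1

# Number of classes

NB_CLASSES = 10

# Optimizer

OPTIMIZER = SGD()

# Fraction of the training set held out for validation

VALIDATION_SPLIT = 0.2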

# Data: shuffled and split into training and test sets

(X_train, Y_train_1), (X_test, Y_test) = mnist.load_data() # X_train is 60000 x 28 x 28; it is reshaped to 60000 x 784

RESHAPE = 784

X_train = X_train.reshape(60000, RESHAPE) # reshape

X_test = X_test.reshape(10000, RESHAPE)

X_train = X_train.astype('float32') # convert the dtype

X_test = X_test.astype('float32')

X_train /= 255 # normalize to [0, 1]

X_test /= 255

print(X_train.shape[0], 'train samples')

print(X_test.shape[0], 'test samples')

# Convert the class vectors to binary (one-hot) class matrices

Y_train = np_utils.to_categorical(Y_train_1, NB_CLASSES)

Y_test = np_utils.to_categorical(Y_test, NB_CLASSES)

# Build the model

model = Sequential() # create a Sequential model instance

model.add(Dense(units=128, input_shape=(RESHAPE, ))) # the input is a 784-dimensional array

model.add(Activation('relu'))

model.add(Dense(units=64))

model.add(Activation('relu'))

model.add(Dense(units=NB_CLASSES))

model.add(Activation('softmax'))

# Print the model summary

model.summary()

# Compile the model

model.compile(loss='categorical_crossentropy', optimizer=OPTIMIZER, metrics=['accuracy'])

# Train the model

history = model.fit(X_train, Y_train, batch_size=BATCH_SIZE, epochs=NB_EPOCH, verbose=VERBOSE, validation_split=VALIDATION_SPLIT)

score = model.evaluate(X_test, Y_test, verbose=VERBOSE)

print('Test score:', score[0])

print('Test accuracy:', score[1])

# Build the model with the functional API

representation_model = Model(inputs=model.inputs, outputs=model.get_layer('dense_3').output)

# The predictions of this model are the learned features

dense_3_output = representation_model.predict(X_train)

print(dense_3_output.shape)

print(type(dense_3_output))

print(dense_3_output)
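As noted at the start of the article, the point of extracting such features is usually to feed them into another model. The sketch below shows one very simple possibility, a nearest-centroid classifier in NumPy. It is only an illustration under stated assumptions: the features are synthetic stand-ins for `dense_3_output`, and the helper names `fit_centroids` and `predict_nearest_centroid` are hypothetical.

```python
import numpy as np


def fit_centroids(features, labels, num_classes):
    """Compute the mean feature vector of each class."""
    return np.stack([features[labels == c].mean(axis=0) for c in range(num_classes)])


def predict_nearest_centroid(features, centroids):
    """Assign each row of `features` to the class with the closest centroid."""
    # Pairwise squared Euclidean distances, shape (num_samples, num_classes).
    diffs = features[:, None, :] - centroids[None, :, :]
    distances = (diffs ** 2).sum(axis=2)
    return distances.argmin(axis=1)


# Synthetic stand-in for `dense_3_output`: two well-separated classes
# in a 10-dimensional feature space.
rng = np.random.default_rng(0)
labels = np.repeat([0, 1], 50)
features = rng.normal(0.0, 0.1, size=(100, 10))
features[labels == 1] += 1.0

centroids = fit_centroids(features, labels, num_classes=2)
predicted = predict_nearest_centroid(features, centroids)
accuracy = (predicted == labels).mean()
print(accuracy)
```

With real extracted features, the integer labels (for example `Y_train_1` in the script above) would take the place of the synthetic `labels`, and any downstream model could replace the centroid classifier.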

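3. Because my backend is Theano, a Theano function is another option:

import seaborn as sbn

import pylab as plt

import theano

from keras.models import Sequential

from keras.layers import Dense, Activation

from keras.models import Model

# This is a Theano function; model is assumed to be an already trained Keras model (it is not defined in this snippet)

dense1 = theano.function([model.layers[0].input], model.layers[1].output, allow_input_downcast=True)

# data is assumed to be the input array of images; the result holds their FC-layer features, e.g. for visualization

dense1_output = dense1(data)

print(dense1_output[0])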

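This article described how to extract features from a trained deep learning model for use in other tasks, with two main methods: the K.function() function and building a new model through the functional API. Both methods apply to the Keras framework.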
