Bag-of-Words Model

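```python
from sklearn.datasets import fetch_20newsgroups  # the 20 Newsgroups news dataset
from sklearn.model_selection import train_test_split  # for splitting the data

# subset='all' loads the whole dataset, subset='train' only the training set,
# and subset='test' only the test set
news = fetch_20newsgroups(subset='test')

print(news.target_names)
print(len(news.data))    # number of documents in the dataset
print(len(news.target))  # number of labels
```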

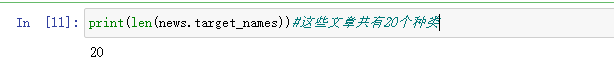

```python
print(len(news.target_names))  # the documents fall into 20 categories
news.data[0]  # inspect one document
```

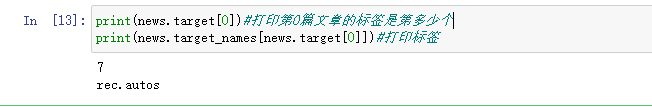

```python
print(news.target[0])                     # label index of document 0
print(news.target_names[news.target[0]])  # the corresponding category name

# Split the data into training and test sets
x_train, x_test, y_train, y_test = train_test_split(news.data, news.target)

# Alternatively, use the dataset's predefined train/test split:
# train = fetch_20newsgroups(subset='train')
# x_train = train.data
# y_train = train.target
# test = fetch_20newsgroups(subset='test')
# x_test = test.data
# y_test = test.target
```
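When neither `test_size` nor `train_size` is given, as in the call above, `train_test_split` shuffles the data and holds out 25% of it for testing. The sketch below shows this on a hypothetical list of 100 items; `random_state` and `stratify` are optional parameters that the original call does not use, added here only to illustrate a reproducible split that keeps the class proportions equal in both parts.

```python
from sklearn.model_selection import train_test_split

# Hypothetical stand-ins for news.data and news.target
docs = [f"doc {i}" for i in range(100)]
labels = [i % 4 for i in range(100)]  # 4 classes, 25 documents each

# Defaults: 25% of the samples go to the test set, data is shuffled first
x_train, x_test, y_train, y_test = train_test_split(docs, labels)
print(len(x_train), len(x_test))  # 75 25

# Reproducible split that keeps the class proportions in both parts
x_tr, x_te, y_tr, y_te = train_test_split(
    docs, labels, test_size=0.25, random_state=0, stratify=labels
)
print(len(x_tr), len(x_te))  # 75 25
```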

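```python
from sklearn.feature_extraction.text import CountVectorizer

texts = ["dog cat fish", "dog cat cat", "fish bird", "bird"]

cv = CountVectorizer()            # instantiate the vectorizer
cv_fit = cv.fit_transform(texts)  # learn the vocabulary and count the words

# The features (vocabulary); get_feature_names() was removed in
# scikit-learn 1.2, so use get_feature_names_out()
print(cv.get_feature_names_out())
print(cv_fit.toarray())              # convert the sparse matrix to a dense array
print(cv_fit.toarray().sum(axis=0))  # sum each column: total count of each word over all texts
```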
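To make explicit what `CountVectorizer` computes in the toy example above, this pure-Python sketch rebuilds the same vocabulary, count matrix, and column sums. It is a simplification: it lowercases and splits on whitespace, whereas `CountVectorizer`'s default token pattern `(?u)\b\w\w+\b` also drops one-character tokens and punctuation. For these four texts both give the same result.

```python
from collections import Counter

texts = ["dog cat fish", "dog cat cat", "fish bird", "bird"]

# Simplified tokenizer: lowercase and split on whitespace
tokenized = [t.lower().split() for t in texts]

# Vocabulary: every distinct word, sorted alphabetically (CountVectorizer sorts it too)
vocab = sorted({w for doc in tokenized for w in doc})

# One row per document, one column per vocabulary word, holding raw counts
matrix = []
for doc in tokenized:
    counts = Counter(doc)
    matrix.append([counts[w] for w in vocab])

# Column sums: how often each word occurs across all documents
totals = [sum(col) for col in zip(*matrix)]

print(vocab)   # ['bird', 'cat', 'dog', 'fish']
print(matrix)  # [[0, 1, 1, 1], [0, 2, 1, 0], [1, 0, 0, 1], [1, 0, 0, 0]]
print(totals)  # [2, 3, 2, 2]
```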

```python
from sklearn import model_selection
from sklearn.naive_bayes import MultinomialNB

cv = CountVectorizer()
cv_data = cv.fit_transform(x_train)  # word-count matrix of the training texts

mul_nb = MultinomialNB()  # multinomial naive Bayes classifier

# 3-fold cross-validation, then average the accuracy over the folds
scores = model_selection.cross_val_score(mul_nb, cv_data, y_train, cv=3, scoring='accuracy')
print("Accuracy: %0.3f" % (scores.mean()))
```
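In the code above the vectorizer is fitted on all of `x_train` before `cross_val_score` splits it into folds, so the words of each validation fold are already in the vocabulary. A common alternative is to wrap the vectorizer and the classifier in a scikit-learn `Pipeline`, so the vocabulary is re-learned on the training folds only. The sketch below assumes a tiny made-up two-class corpus in place of the newsgroup texts; with the real data you would pass `x_train` and `y_train` instead.

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.model_selection import cross_val_score
from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import make_pipeline

# Hypothetical toy corpus: 6 documents per class
docs = [
    "the team won the game", "great goal in the match", "the player scored twice",
    "the coach praised the team", "fans cheered the win", "the match ended in a draw",
    "the new gpu is fast", "install the latest driver", "the cpu runs hot",
    "update the graphics card driver", "the laptop has a fast cpu", "the gpu needs more memory",
]
labels = [0] * 6 + [1] * 6  # 0 = sport, 1 = hardware

# Inside each fold, the vectorizer is fitted on the training part only,
# then the classifier is trained on its output
model = make_pipeline(CountVectorizer(), MultinomialNB())
scores = cross_val_score(model, docs, labels, cv=3, scoring="accuracy")
print("Accuracy: %0.3f" % scores.mean())
```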

TF-IDF

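```python
from sklearn.feature_extraction.text import TfidfVectorizer

# A list of text documents
text = ["The quick brown fox jumped over the lazy dog.",
        "The dog.",
        "The fox"]

vectorizer = TfidfVectorizer()  # create the transformer
vectorizer.fit(text)            # tokenize, build the vocabulary, and learn the IDF weights

# Summarize
print(vectorizer.vocabulary_)   # word -> column index
print(vectorizer.idf_)          # IDF weight of each column

# Encode one document
vector = vectorizer.transform([text[0]])

# Summarize the encoded document
print(vector.shape)
print(vector.toarray())
```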
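With its default settings (`smooth_idf=True`, `sublinear_tf=False`, `norm='l2'`), `TfidfVectorizer` computes `idf(t) = ln((1 + n) / (1 + df(t))) + 1`, where `n` is the number of documents and `df(t)` the number of documents containing term `t`. It multiplies each raw count by that weight and scales every row to unit Euclidean length. The NumPy sketch below recomputes `idf_` and the encoded documents for the three example sentences and compares them with the vectorizer; it borrows `CountVectorizer` only to get the same tokenization and column order.

```python
import numpy as np
from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer

text = ["The quick brown fox jumped over the lazy dog.",
        "The dog.",
        "The fox"]

# Raw term counts, same tokenization and vocabulary order as TfidfVectorizer
counts = CountVectorizer().fit_transform(text).toarray().astype(float)
n_docs = counts.shape[0]

# Document frequency: number of documents in which each term appears
df = (counts > 0).sum(axis=0)

# Smoothed IDF (default smooth_idf=True): ln((1 + n) / (1 + df)) + 1
idf = np.log((1 + n_docs) / (1 + df)) + 1

# TF-IDF = count * idf, then L2-normalize each row (default norm='l2')
tfidf = counts * idf
tfidf /= np.linalg.norm(tfidf, axis=1, keepdims=True)

vectorizer = TfidfVectorizer().fit(text)
print(np.allclose(idf, vectorizer.idf_))                               # True
print(np.allclose(tfidf, vectorizer.transform(text).toarray()))        # True
```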

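```python
vectorizer = TfidfVectorizer()                   # create the transformer
tfidf_train = vectorizer.fit_transform(x_train)  # tokenize, build the vocabulary, compute TF-IDF

# 3-fold cross-validation of the naive Bayes model on TF-IDF features
scores = model_selection.cross_val_score(mul_nb, tfidf_train, y_train, cv=3,
                                         scoring='accuracy')
print("Accuracy: %0.3f" % (scores.mean()))
```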

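```python
def get_stop_words():
    result = set()
    # stopwords_en.txt holds common English stop words, one per line
    with open('stopwords_en.txt', 'r', encoding='utf-8') as f:
        for line in f:
            result.add(line.strip())  # strip the trailing newline
    return result

# Load the stop words
stop_words = get_stop_words()
```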
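If no `stopwords_en.txt` file is at hand, scikit-learn ships a built-in English stop-word list (exposed as `ENGLISH_STOP_WORDS`), which `TfidfVectorizer` uses when given `stop_words='english'`. The small example below, on two made-up sentences, shows that stop words never enter the vocabulary. The built-in list is generic and will not necessarily match a hand-made file.

```python
from sklearn.feature_extraction.text import ENGLISH_STOP_WORDS, TfidfVectorizer

docs = ["The fox jumped over the dog.", "The dog is lazy"]  # hypothetical documents

plain = TfidfVectorizer().fit(docs)
filtered = TfidfVectorizer(stop_words="english").fit(docs)

print(sorted(plain.vocabulary_))     # contains 'the'
print(sorted(filtered.vocabulary_))  # built-in stop words such as 'the' are gone
print("the" in ENGLISH_STOP_WORDS)   # True
```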

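```python
# Create the transformer; the documented stop_words types are 'english', a list,
# or None (recent scikit-learn versions reject a set), so convert the set to a list
vectorizer = TfidfVectorizer(stop_words=list(stop_words))

# alpha is the additive smoothing parameter; the default is 1.0
mul_nb = MultinomialNB(alpha=0.01)

# Tokenize, build the vocabulary, and compute TF-IDF
tfidf_train = vectorizer.fit_transform(x_train)

scores = model_selection.cross_val_score(mul_nb, tfidf_train, y_train, cv=3,
                                         scoring='accuracy')
print("Accuracy: %0.3f" % (scores.mean()))
```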
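`alpha` is additive (Laplace/Lidstone) smoothing. For each class c, `MultinomialNB` estimates the probability of feature i as `(N_ci + alpha) / (N_c + alpha * n_features)`, where `N_ci` is the summed value of feature i (a count or a TF-IDF weight) over the training documents of class c and `N_c` is the sum over all features. It then predicts the class that maximizes the log prior plus `X @ log_prob.T`. The NumPy sketch below recomputes these estimates on a tiny made-up count matrix, checks them against the fitted model, and shows how a small `alpha` such as 0.01 gives a word never seen in a class a much lower probability than the default 1.0 does.

```python
import numpy as np
from sklearn.naive_bayes import MultinomialNB

# Hypothetical word-count matrix: 4 documents x 3 words, two classes
X = np.array([[2, 1, 0],
              [3, 0, 0],
              [0, 1, 2],
              [0, 0, 3]], dtype=float)
y = np.array([0, 0, 1, 1])

def smoothed_log_probs(X, y, alpha):
    """Per-class log P(word | class) with additive smoothing."""
    rows = []
    for c in np.unique(y):
        smoothed = X[y == c].sum(axis=0) + alpha    # N_ci + alpha
        rows.append(np.log(smoothed / smoothed.sum()))  # divide by N_c + alpha * n_features
    return np.array(rows)

for alpha in (1.0, 0.01):
    model = MultinomialNB(alpha=alpha).fit(X, y)
    manual = smoothed_log_probs(X, y, alpha)
    print(alpha, np.allclose(manual, model.feature_log_prob_))  # True for both
    # Word 2 never occurs in class 0; smoothing keeps its probability above zero
    print("  P(word 2 | class 0) =", np.exp(manual[0, 2]))

# Prediction = argmax over classes of log prior + X @ log P(word | class)^T
model = MultinomialNB(alpha=0.01).fit(X, y)
joint = np.log(np.bincount(y) / len(y)) + X @ smoothed_log_probs(X, y, 0.01).T
print(np.array_equal(joint.argmax(axis=1), model.predict(X)))  # True
```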

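```python
# Split the raw documents first, then fit the vectorizer on the training part only,
# so the vocabulary and IDF weights are not learned from the test documents
x_train, x_test, y_train, y_test = train_test_split(news.data, news.target)
tfidf_train = vectorizer.fit_transform(x_train)
tfidf_test = vectorizer.transform(x_test)  # reuse the training vocabulary and IDF

mul_nb.fit(tfidf_train, y_train)
print(mul_nb.score(tfidf_train, y_train))  # accuracy on the training set
print(mul_nb.score(tfidf_test, y_test))    # accuracy on the test set
```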
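Once the vectorizer and the classifier are fitted, a new document is classified by passing it through `transform` (not `fit_transform`, which would rebuild the vocabulary) and then `predict`. Because the 20 Newsgroups data needs a download, the sketch below assumes a hypothetical four-document corpus with made-up category names; with the real data, `news.target_names` plays the role of `target_names`.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.naive_bayes import MultinomialNB

# Hypothetical training data and category names
train_docs = ["the team won the game", "the player scored a goal",
              "install the new graphics driver", "the cpu and gpu are fast"]
train_labels = [0, 0, 1, 1]
target_names = ["sport", "hardware"]

vectorizer = TfidfVectorizer()
mul_nb = MultinomialNB(alpha=0.01)
mul_nb.fit(vectorizer.fit_transform(train_docs), train_labels)

# New documents: transform with the already fitted vocabulary, then predict
new_docs = ["who scored the winning goal", "my gpu driver crashed"]
predicted = mul_nb.predict(vectorizer.transform(new_docs))
for doc, label in zip(new_docs, predicted):
    print(doc, "->", target_names[label])
```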
