Computing Dice and mDice: the methods in detail

So far I have found three ways to implement this, and the three give different results.

-

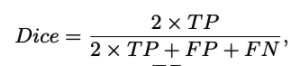

通过计算tp,fp,fn等根据公式

计算,但我觉得使用概率计算fp等值是不准确的,保留意见

计算,但我觉得使用概率计算fp等值是不准确的,保留意见def mDiceLoss(inputs, target, beta=1, smooth=1e-6): n, c, h, w = inputs.size() nt,ct, ht, wt = target.size() if h != ht and w != wt: inputs = F.interpolate(inputs, size=(ht, wt), mode="bilinear", align_corners=True) temp_inputs = torch.softmax(inputs.transpose(1, 2).transpose(2, 3).contiguous().view(n, -1, c), -1) temp_target = target.view(n, -1, ct) # tp = torch.sum(temp_target[..., :-1] * temp_inputs, axis=[0, 1]) #和下面等效 tp = torch.sum(temp_target * temp_inputs, axis=[0, 1]) fp = torch.sum(temp_inputs, axis=[0, 1]) - tp fn = torch.sum(temp_target[..., :-1], axis=[0, 1]) - tp score = ((1 + beta ** 2) * tp + smooth) / ((1 + beta ** 2) * tp + beta ** 2 * fn + fp + smooth) dice_loss = 1 - torch.mean(score) return dice_loss -
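To make the reservation about probability-based counts concrete, here is a small dependency-free sketch. It uses plain Python lists instead of tensors and made-up probabilities for a single class, and compares the "soft" tp/fp/fn (from probabilities) with the "hard" counts (after thresholding at 0.5). The helper names `soft_counts`, `hard_counts` and `f_beta` are illustrative only and do not come from the original code.

```python
def soft_counts(probs, target):
    """tp/fp/fn computed directly from probabilities, as in the loss above."""
    tp = sum(p * t for p, t in zip(probs, target))
    fp = sum(probs) - tp
    fn = sum(target) - tp
    return tp, fp, fn


def hard_counts(probs, target, threshold=0.5):
    """tp/fp/fn computed from thresholded 0/1 predictions."""
    preds = [1 if p > threshold else 0 for p in probs]
    tp = sum(p * t for p, t in zip(preds, target))
    fp = sum(preds) - tp
    fn = sum(target) - tp
    return tp, fp, fn


def f_beta(tp, fp, fn, beta=1.0, smooth=1e-6):
    """Same score expression as in mDiceLoss (beta=1 gives Dice)."""
    b2 = beta ** 2
    return ((1 + b2) * tp + smooth) / ((1 + b2) * tp + b2 * fn + fp + smooth)


# Made-up probabilities for one class over four pixels, with the ground truth.
probs = [0.9, 0.6, 0.4, 0.1]
target = [1, 1, 0, 0]

soft = soft_counts(probs, target)
hard = hard_counts(probs, target)
print("soft tp/fp/fn:", soft, "score:", f_beta(*soft))
print("hard tp/fp/fn:", hard, "score:", f_beta(*hard))
```

In this toy case the thresholded prediction is perfect (hard score close to 1), while the soft score is about 0.75. The soft counts are fractional, which keeps the loss differentiable, but the resulting value is not the Dice you would report as a metric on hard predictions.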

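- Compute it from the formula Dice = 2 * sum(p * y) / (sum(p) + sum(y)), where p is the predicted probability and y the one-hot label. Note that in this approach sum() is not taken per channel; it runs over all values at once.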

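This approach is very common online, and it is how most experienced practitioners compute it, so I am using it for now. There are two ways to implement it: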

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    def SoftDiceLoss(y_pred, y_true, epsilon=1e-6):
        # softmax over the class dimension (the original tested an undefined `inputs` here)
        if len(y_pred.shape) == 4:    # (n, c, h, w)
            y_pred = torch.softmax(y_pred, 1)
        if len(y_pred.shape) == 3:    # (c, h, w)
            y_pred = torch.softmax(y_pred, 0)
        # both sums run over every element, so a single global Dice is produced
        numerator = 2. * torch.sum(y_pred * y_true)
        # denominator = np.sum(np.square(y_pred) + np.square(y_true), axes)
        denominator = torch.sum(y_pred + y_true)
        return 1 - torch.mean((numerator + epsilon) / (denominator + epsilon))

    class DiceLoss(nn.Module):
        def __init__(self, weight=None, size_average=True):
            super(DiceLoss, self).__init__()

        def forward(self, inputs, targets, smooth=1e-6):
            # comment out if your model contains a sigmoid or equivalent activation layer
            if len(inputs.shape) == 4:
                inputs = F.softmax(inputs, dim=1)
            if len(inputs.shape) == 3:
                inputs = F.softmax(inputs, dim=0)
            # flatten label and prediction tensors
            inputs1 = inputs.reshape(-1)
            targets1 = targets.reshape(-1)
            intersection1 = (inputs1 * targets1).sum()
            dice = (2. * intersection1 + smooth) / (inputs1.sum() + targets1.sum() + smooth)
            return 1 - torch.mean(dice)

- Building on the second method, I think the Dice should be computed per channel and then averaged. The implementation is as follows:

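    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    from einops import rearrange

    def SoftDiceLoss(y_pred, y_true, epsilon=1e-6):
        # (the original tested an undefined `inputs` and always reduced over [0, 2, 3])
        if len(y_pred.shape) == 4:      # (n, c, h, w): reduce over batch and space
            y_pred = torch.softmax(y_pred, 1)
            dims = [0, 2, 3]
        elif len(y_pred.shape) == 3:    # (c, h, w): reduce over space only
            y_pred = torch.softmax(y_pred, 0)
            dims = [1, 2]
        # per-channel sums, each of shape (c,)
        numerator = 2. * torch.sum(y_pred * y_true, axis=dims)
        # denominator = np.sum(np.square(y_pred) + np.square(y_true), axes)
        denominator = torch.sum(y_pred + y_true, axis=dims)
        # one Dice per channel, then the mean over channels
        return 1 - torch.mean((numerator + epsilon) / (denominator + epsilon))

    class DiceLoss(nn.Module):
        def __init__(self, weight=None, size_average=True):
            super(DiceLoss, self).__init__()

        def forward(self, inputs, targets, smooth=1e-6):
            # a single (c, h, w) sample gets a batch dimension so the pattern below applies
            if len(inputs.shape) == 3:
                inputs, targets = inputs.unsqueeze(0), targets.unsqueeze(0)
            # comment out if your model contains a sigmoid or equivalent activation layer
            inputs = F.softmax(inputs, dim=1)
            # one row per pixel, one column per channel
            temp_inputs = rearrange(inputs, "n c h w -> (n h w) c")
            temp_target = rearrange(targets, "n c h w -> (n h w) c")
            intersection = (temp_inputs * temp_target).sum(0)
            dice = (2. * intersection + smooth) / (temp_inputs.sum(0) + temp_target.sum(0) + smooth)
            # the original returned an undefined `dice` and printed the per-channel values
            return 1 - torch.mean(dice)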

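In this method the numerator and denominator hold the same quantities as in the second method, except that each is kept as a vector of length c (one entry per channel). The results differ because the division is done per channel and the ratios are averaged afterwards, instead of dividing the two global sums.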
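The difference between dividing the global sums and averaging per-channel ratios can be checked by hand. The sketch below uses hypothetical per-channel sums for two classes (a large background and a small foreground); the numbers and the helper name `dice` are made up for illustration and are not taken from any real model output.

```python
def dice(intersection, pred_sum, target_sum, smooth=1e-6):
    """Dice from an intersection sum and the two marginal sums."""
    return (2.0 * intersection + smooth) / (pred_sum + target_sum + smooth)


# Hypothetical per-channel sums: index 0 = background, index 1 = foreground.
intersection = [90.0, 1.0]
pred_sum = [95.0, 5.0]
target_sum = [92.0, 8.0]

# Second method: add everything up first, then divide once.
global_dice = dice(sum(intersection), sum(pred_sum), sum(target_sum))

# Third method: divide per channel, then average the ratios.
per_channel = [dice(i, p, t) for i, p, t in zip(intersection, pred_sum, target_sum)]
mean_dice = sum(per_channel) / len(per_channel)

print("global Dice:      ", round(global_dice, 4))
print("per-channel Dice: ", [round(d, 4) for d in per_channel])
print("mean Dice (mDice):", round(mean_dice, 4))
```

With these numbers the global value is dominated by the background channel, while the per-channel mean is pulled down by the poorly segmented foreground. That is the practical reason to prefer the per-channel average when classes are imbalanced.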

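To use this method, though, you need to understand how the dimension argument of torch.sum(t, axis=[0, 1]) works: the listed dimensions are summed away and the remaining ones are kept. sum(0) means summing along dimension 0.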
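As a dependency-free illustration of what these reductions compute, the sketch below spells them out with nested lists and plain loops. The helpers `sum_over_axes_0_and_1` and `sum_over_axis_0` are hypothetical stand-ins for `torch.sum(t, axis=[0, 1])` on a tensor of shape (n, p, c) and for `m.sum(0)` on a tensor of shape (n*p, c); they only mimic the arithmetic and are not PyTorch functions.

```python
def sum_over_axes_0_and_1(t):
    """Reduce a nested list of shape (n, p, c) over axes 0 and 1, leaving length c."""
    channels = len(t[0][0])
    return [sum(pixel[k] for sample in t for pixel in sample) for k in range(channels)]


def sum_over_axis_0(m):
    """Reduce a nested list of shape (rows, c) over axis 0, leaving length c."""
    return [sum(column) for column in zip(*m)]


# Shape (n=2, p=3, c=2): two samples, three pixels each, two channels.
t = [
    [[1, 10], [2, 20], [3, 30]],
    [[4, 40], [5, 50], [6, 60]],
]
# Flattening the first two axes gives shape (n*p, c) = (6, 2),
# which is the layout produced by rearranging to "(n h w) c".
m = [pixel for sample in t for pixel in sample]

print(sum_over_axes_0_and_1(t))  # one total per channel
print(sum_over_axis_0(m))        # the same totals from the flattened layout
```

Both calls give one total per channel, which is why summing over axes [0, 1] of an (n, h*w, c) tensor and calling sum(0) on the flattened (n*h*w, c) tensor lead to the same per-channel sums.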
