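A project on a dataset of roughly 145,000 rows. Based on the literature and on the characteristics of each model, I chose Naive Bayes and XGBoost for classification, and tried a logistic regression model at the end as well.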

Data overview

<class 'pandas.core.frame.DataFrame'>

RangeIndex: 145460 entries, 0 to 145459

Data columns (total 23 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 Date 145460 non-null object

1 Location 145460 non-null object

2 MinTemp 143975 non-null float64

3 MaxTemp 144199 non-null float64

4 Rainfall 142199 non-null float64

5 Evaporation 82670 non-null float64

6 Sunshine 75625 non-null float64

7 WindGustDir 135134 non-null object

8 WindGustSpeed 135197 non-null float64

9 WindDir9am 134894 non-null object

10 WindDir3pm 141232 non-null object

11 WindSpeed9am 143693 non-null float64

12 WindSpeed3pm 142398 non-null float64

13 Humidity9am 142806 non-null float64

14 Humidity3pm 140953 non-null float64

15 Pressure9am 130395 non-null float64

16 Pressure3pm 130432 non-null float64

17 Cloud9am 89572 non-null float64

18 Cloud3pm 86102 non-null float64

19 Temp9am 143693 non-null float64

20 Temp3pm 141851 non-null float64

21 RainToday 142199 non-null object

22 RainTomorrow 142193 non-null object

dtypes: float64(16), object(7)


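Define two helper functions:

# frequency of each distinct value in a Series
def vcounts(a):
    return a.value_counts()

# mean of column c within each group of column b
def group_mean(a, b, c):
    return a.groupby(b)[c].mean()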
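As a quick illustration of how the two helpers behave, here is a minimal sketch on a made-up three-column table. The `toy` frame and its values are invented for this example and are not taken from the real dataset.

```python
import pandas as pd

def vcounts(a):
    return a.value_counts()

def group_mean(a, b, c):
    return a.groupby(b)[c].mean()

# Hypothetical toy data, only to show the shape of the results
toy = pd.DataFrame({
    "Location": ["Sydney", "Sydney", "Perth", "Perth", "Perth"],
    "RainToday": ["No", "Yes", "No", "No", "Yes"],
    "Rainfall": [0.0, 5.0, 0.0, 0.0, 3.0],
})

counts = vcounts(toy["RainToday"])                 # how often each label occurs
means = group_mean(toy, "Location", "Rainfall")    # average rainfall per location
print(counts)
print(means)
```

Both helpers return a pandas Series, so the results can be indexed by label, for example `counts["No"]` or `means["Perth"]`.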

I. Data cleaning

1. Handling missing values

df.isnull().sum()

Date 0

Location 0

MinTemp 1485

MaxTemp 1261

Rainfall 3261

Evaporation 62790

Sunshine 69835

WindGustDir 10326

WindGustSpeed 10263

WindDir9am 10566

WindDir3pm 4228

WindSpeed9am 1767

WindSpeed3pm 3062

Humidity9am 2654

Humidity3pm 4507

Pressure9am 15065

Pressure3pm 15028

Cloud9am 55888

Cloud3pm 59358

Temp9am 1767

Temp3pm 3609

RainToday 3261

RainTomorrow 3267

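Rain is a natural phenomenon, and filling the gaps with summary statistics could mislead the model. So although a lot of values are missing, the rows containing them are dropped.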
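The later code works on a frame called `df_drop`, but the call that creates it is not shown. A minimal sketch of that step, assuming it is a plain `dropna()` over all columns (the toy data below is invented):

```python
import numpy as np
import pandas as pd

# Hypothetical toy frame with a few gaps
toy = pd.DataFrame({
    "MaxTemp": [25.0, np.nan, 30.1, 22.4],
    "Sunshine": [8.2, 7.5, np.nan, 3.1],
    "RainTomorrow": ["No", "Yes", "No", np.nan],
})

missing_per_column = toy.isnull().sum()   # same check as df.isnull().sum()
df_drop = toy.dropna().copy()             # keep only rows with no missing value
kept_fraction = len(df_drop) / len(toy)   # how much data survives
print(missing_per_column)
print(f"rows kept: {len(df_drop)} ({kept_fraction:.0%})")
```

Taking a `.copy()` is a small design choice: new columns can then be added to `df_drop` without pandas warning about writing to a slice.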

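2. Handling outliers

Use descriptive statistics to spot suspicious values, then plot them for a closer look.

There was no sufficient reason to exclude them, so they are kept.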
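The post does not say which criterion was used to flag suspicious values. One common option is the 1.5 x IQR rule, sketched below on invented numbers; treat it as an assumption, not as the check actually used here.

```python
import pandas as pd

# Hypothetical rainfall readings with one extreme value
toy = pd.DataFrame({"Rainfall": [0.0, 0.2, 0.0, 1.4, 0.6, 0.0, 2.0, 95.0]})

summary = toy["Rainfall"].describe()               # count, mean, std, min, quartiles, max
q1, q3 = toy["Rainfall"].quantile([0.25, 0.75])
iqr = q3 - q1
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr      # fences of the 1.5 x IQR rule

# Rows outside the fences are candidates for inspection, not automatic deletions
suspicious = toy[(toy["Rainfall"] < lower) | (toy["Rainfall"] > upper)]
print(summary)
print(suspicious)
```

A flagged value such as a very heavy rainfall day can be entirely genuine, which fits the decision to keep these rows.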

3. Encoding non-numeric columns

(1) Encoding and decoding with LabelEncoder

import numpy as np
import pandas as pd
from sklearn.preprocessing import LabelEncoder

label_1 = LabelEncoder()
df_drop['loca'] = label_1.fit_transform(df_drop.Location)

# Decode and export the code-to-name lookup table (26 locations here)
a = label_1.inverse_transform(np.arange(0, 26))
duizhaobiao = pd.DataFrame(a)
duizhaobiao.to_csv('对照表.csv', index=True)

label_2 = LabelEncoder()
df_drop['direction'] = label_2.fit_transform(df_drop.WindGustDir)
# df_drop['direction'].describe()  # used to check how many codes there are
ma = np.arange(0, 16)
jiema = label_2.inverse_transform(ma)
duizhaobiao = pd.DataFrame(jiema)
# duizhaobiao.to_csv('GUST对照表.csv', index=True)

label_3 = LabelEncoder()
df_drop['9am'] = label_3.fit_transform(df_drop.WindDir9am)

label_4 = LabelEncoder()
# Note: the column is named '3am' throughout, but it holds the encoded WindDir3pm
df_drop['3am'] = label_4.fit_transform(df_drop.WindDir3pm)
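To make the encode/decode round trip concrete, here is a small sketch on an invented wind-direction column. It also builds the lookup table from `classes_` instead of a hard-coded range, which is one way to avoid having to know the number of categories in advance.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import LabelEncoder

# Hypothetical sample of wind directions
wind = pd.Series(["W", "NNE", "E", "W", "SSW", "E"])

encoder = LabelEncoder()
codes = encoder.fit_transform(wind)          # integer codes, assigned in sorted label order
decoded = encoder.inverse_transform(codes)   # back to the original strings

# Lookup table: one row per code, derived from the fitted classes
lookup = pd.DataFrame({
    "code": np.arange(len(encoder.classes_)),
    "label": encoder.classes_,
})
print(lookup)
```

Because the codes follow sorted label order, they carry no physical meaning: neighbouring codes are not neighbouring compass directions.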

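(2) apply + lambda

LabelEncoder would also work here, since it assigns codes in alphabetical order, but I got tired of it, and apply feels more under control =.=

One caveat: it is best to copy the values straight from the table. Typing “No” as “N0” is really embarrassing =_=|||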

df_drop['today'] = df_drop.RainToday.apply(lambda x: 0 if x == 'No' else 1)
df_drop['tmrow'] = df_drop.RainTomorrow.apply(lambda x: 0 if x == 'No' else 1)
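The “N0” typo is easy to miss because the lambda silently sends every unmatched value to 1. A possible alternative, shown here only as a sketch on invented data, is `Series.map` with an explicit dictionary: values missing from the dictionary become NaN, so a typo shows up immediately.

```python
import pandas as pd

rain = pd.Series(["No", "Yes", "No", "Yes"])

# With the typo, apply + lambda quietly labels every row as 1
with_apply_typo = rain.apply(lambda x: 0 if x == "N0" else 1)

# map with a dict: correct keys give 0/1 ...
with_map = rain.map({"No": 0, "Yes": 1})

# ... while a mistyped key leaves NaN behind, which is easy to detect
with_map_typo = rain.map({"N0": 0, "Yes": 1})
n_unmapped = with_map_typo.isna().sum()
print(with_apply_typo.tolist(), with_map.tolist(), n_unmapped)
```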

4. Correlation analysis

First drop the original columns that have already been encoded:

todrop = ['Date', 'Location', 'WindGustDir', 'WindDir9am', 'WindDir3pm', 'RainToday', 'RainTomorrow']
df_num = df_drop.drop(columns=todrop)

import matplotlib.pyplot as plt
import seaborn as sns

plt.figure(figsize=(15, 15))
matrix = df_num[['MinTemp', 'MaxTemp', 'Rainfall', 'Evaporation', 'Sunshine',
                 'WindGustSpeed', 'WindSpeed9am', 'WindSpeed3pm', 'Humidity9am',
                 'Humidity3pm', 'Pressure9am', 'Pressure3pm', 'Temp9am', 'Temp3pm', 'Cloud9am', 'Cloud3pm',
                 'loca', 'direction', '9am', '3am',
                 'today', 'tmrow']].corr()
sns.heatmap(matrix, annot=True, cmap=sns.color_palette("mako", as_cmap=True))  # switch to a blue palette
plt.savefig('heatmap')

Drop features involved in correlations with an absolute value of 0.7 or higher:

df_num = df_num.drop(columns=['MinTemp', 'Temp9am', 'Temp3pm', 'Cloud9am', 'Cloud3pm', 'WindSpeed9am', 'WindSpeed3pm'])
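Reading the pairs off a 22 x 22 heatmap by eye is error-prone. The sketch below lists every pair at or above the 0.7 threshold directly from the correlation matrix. The data is synthetic and the column names are borrowed only for readability, so the output says nothing about the real dataset.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
base = rng.normal(size=200)

# Synthetic data: Temp3pm is built to track MaxTemp closely, Humidity3pm is independent
toy = pd.DataFrame({
    "MaxTemp": base,
    "Temp3pm": base + rng.normal(scale=0.1, size=200),
    "Humidity3pm": rng.normal(size=200),
})

corr = toy.corr().abs()

# Keep only the strict upper triangle so each pair appears once and the diagonal is ignored
upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))
high_pairs = upper.stack()
high_pairs = high_pairs[high_pairs >= 0.7]   # NaN entries fail the comparison and drop out
print(high_pairs)
```

For each listed pair, one member can then be dropped; which one to keep remains a judgment call.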

5. Standardizing the non-label columns

# Standardize the continuous features
shu = ['MaxTemp', 'Rainfall', 'Evaporation', 'Sunshine',
       'WindGustSpeed', 'Humidity9am',
       'Humidity3pm', 'Pressure9am', 'Pressure3pm',
       ]

from sklearn.preprocessing import StandardScaler

std = StandardScaler()
std_num = std.fit_transform(df_num[shu])
std_dta = pd.DataFrame(std_num, columns=shu)  # convert the array back into a table

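Concatenate with the label-encoded columns:

# 'date_' is a date-derived column; the step that creates it is not shown in this post
dfm = df_num[['date_', 'loca', 'direction', '9am', '3am', 'today', 'tmrow']]
dfm = dfm.reset_index(drop=True)  # reset the index so rows line up with std_dta
dta = pd.concat([dfm, std_dta], axis=1)
dta.tail()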
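The index reset matters because `pd.concat(..., axis=1)` aligns rows by index label, not by position. After dropping rows the original index has gaps, while the standardized table starts again from 0. A minimal sketch with invented values:

```python
import pandas as pd

# Index with gaps, as left behind after dropping rows
labels = pd.DataFrame({"today": [0, 1, 0]}, index=[2, 5, 9])
# Fresh 0..2 index, as produced when wrapping a NumPy array in a DataFrame
scaled = pd.DataFrame({"MaxTemp": [-1.2, 0.1, 1.1]})

misaligned = pd.concat([labels, scaled], axis=1)                        # rows matched by label
aligned = pd.concat([labels.reset_index(drop=True), scaled], axis=1)    # rows matched by position
print(misaligned)
print(aligned)
```

Without the reset, the result has extra rows filled with NaN, and any labels that happen to coincide pair up values from different observations.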

II. Training and evaluation

Train/test split:

from sklearn.model_selection import train_test_split

x = dta[['MaxTemp', 'Rainfall', 'Evaporation', 'Sunshine', 'WindGustSpeed',
         'Humidity9am', 'Humidity3pm', 'Pressure9am', 'Pressure3pm',
         'loca', 'direction', '9am', '3am', 'today']]
y = dta['tmrow']
x_train, x_test, y_train, y_test = train_test_split(x, y, train_size=0.7, random_state=200)

Define a reusable evaluation function:

def evaluate_list(true_name, pred):
    from sklearn.metrics import accuracy_score, precision_score, recall_score, f1_score, roc_auc_score
    a = accuracy_score(true_name, pred)
    b = precision_score(true_name, pred)
    c = recall_score(true_name, pred)
    d = f1_score(true_name, pred)
    e = roc_auc_score(true_name, pred)  # computed from hard 0/1 predictions, not probabilities
    list_ = [a, b, c, d, e]
    # one-row table with one column per metric, rounded to 2 decimals
    svm_evalu = pd.DataFrame(list_,
                             index=['accuracy_score', 'precision_score', 'recall_score', 'f1_score', 'roc_auc_score']
                             ).round(2).T
    return svm_evalu

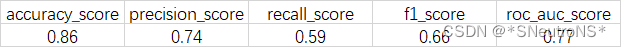
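To check what the evaluation table contains, here is a compact variant of the function run on eight invented labels where the counts are easy to verify by hand (3 true positives, 3 true negatives, 1 false positive, 1 false negative). The dictionary-based construction is a restyling for this sketch; the metrics are the same five.

```python
import pandas as pd
from sklearn.metrics import (accuracy_score, precision_score, recall_score,
                             f1_score, roc_auc_score)

def evaluate_list(true_name, pred):
    # One-row table with one column per metric, rounded to 2 decimals
    scores = {
        "accuracy_score": accuracy_score(true_name, pred),
        "precision_score": precision_score(true_name, pred),
        "recall_score": recall_score(true_name, pred),
        "f1_score": f1_score(true_name, pred),
        "roc_auc_score": roc_auc_score(true_name, pred),
    }
    return pd.DataFrame(scores, index=[0]).round(2)

# Hypothetical labels: TP=3, TN=3, FP=1, FN=1
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
toy_result = evaluate_list(y_true, y_pred)
print(toy_result)
```

With these symmetric counts every metric comes out at 0.75, which makes the table easy to sanity-check before trusting it on real predictions.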

1. XGBoost

Note: Anaconda3 does not include XGBoost by default, so it has to be installed separately.

Training

import xgboost as xgb

xgb_model2 = xgb.XGBClassifier()
xgb_fit2 = xgb_model2.fit(x_train, y_train)
xgb_pred2 = xgb_model2.predict(x_test)

Evaluation

ROC

from sklearn.metrics import RocCurveDisplay

predname = xgb_pred2  # hard 0/1 predictions, so the curve has a single operating point
RocCurveDisplay.from_predictions(y_test, predname)
plt.title('RocCurve of XGBoost')
plt.savefig('XGBoost_roc', bbox_inches='tight')
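A ROC curve drawn from hard 0/1 predictions has only one interior point, and its area equals the balanced accuracy. Feeding continuous scores instead (with a fitted classifier these would typically come from `predict_proba(x_test)[:, 1]`) uses every threshold. The sketch below shows the difference on invented scores; it is an illustration of the metric, not a re-run of the model above.

```python
import numpy as np
from sklearn.metrics import roc_auc_score, balanced_accuracy_score

# Hypothetical true labels and predicted probabilities of class 1
y_true = np.array([0, 0, 0, 0, 1, 1, 1, 1])
scores = np.array([0.1, 0.2, 0.6, 0.3, 0.4, 0.7, 0.8, 0.9])

hard = (scores >= 0.5).astype(int)               # what predict() would return at threshold 0.5

auc_hard = roc_auc_score(y_true, hard)           # area under the single-threshold curve
auc_scores = roc_auc_score(y_true, scores)       # area using all thresholds
bal_acc = balanced_accuracy_score(y_true, hard)  # mean of recall on each class
print(auc_hard, auc_scores, bal_acc)
```

Here the hard-label AUC is 0.75, identical to the balanced accuracy, while the score-based AUC is 0.9375. `RocCurveDisplay.from_predictions` accepts such scores in the same position as the hard labels.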

Confusion matrix (as proportions of all samples):

from sklearn.metrics import confusion_matrix

xgb_cfm = confusion_matrix(y_test, xgb_pred2)
# divide by the total so each cell is a share of all test samples
sns.heatmap(xgb_cfm / np.sum(xgb_cfm), annot=True,
            cmap=sns.cubehelix_palette(start=.1, rot=-.3, reverse=True, as_cmap=True))
plt.xlabel('predictions')
plt.ylabel('true')
plt.title('XGBoost')
plt.savefig('xgboost_cfm', bbox_inches='tight')

xgb_jieguo = evaluate_list(y_test, xgb_pred2)
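Dividing by the grand total gives each cell as a share of all samples, which is the same as `confusion_matrix(..., normalize='all')`. When classes are imbalanced, row-wise normalization (`normalize='true'`) is often easier to read, since each row then shows the recall for that class. A small sketch on invented labels:

```python
import numpy as np
from sklearn.metrics import confusion_matrix

# Hypothetical imbalanced labels: 6 negatives, 4 positives
y_true = [0, 0, 0, 0, 0, 0, 1, 1, 1, 1]
y_pred = [0, 0, 0, 0, 0, 1, 1, 1, 0, 0]

cm = confusion_matrix(y_true, y_pred)                          # raw counts, rows = true class
share_manual = cm / np.sum(cm)                                 # share of all samples
share_all = confusion_matrix(y_true, y_pred, normalize="all")  # same thing via sklearn
per_class = confusion_matrix(y_true, y_pred, normalize="true") # each row sums to 1
print(cm)
print(share_all)
print(per_class)
```

In this toy case the positive class occupies 0.2 of the overall table in each of its cells, while its row-normalized recall is 0.5, a distinction that the overall shares hide.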

The remaining models are evaluated the same way, so only the results are shown.

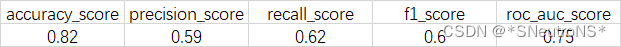

2. Naive Bayes

Training

from sklearn.naive_bayes import GaussianNB

bayes_model = GaussianNB()
bayes_fit = bayes_model.fit(x_train, y_train)
bayes_pred = bayes_model.predict(x_test)

Evaluation

3. Logistic regression

from sklearn.linear_model import LogisticRegression

logist_model = LogisticRegression(solver='liblinear')
logist_fit = logist_model.fit(x_train, y_train)
logist_pred = logist_model.predict(x_test)

from sklearn.metrics import accuracy_score, r2_score

logist_r2 = r2_score(y_test, logist_pred)
logist_accuracy = accuracy_score(y_test, logist_pred)
print(f'accuracy_score:{logist_accuracy:.4f}')
print(f'r2_score:{logist_r2:.4f}')  # goodness of fit is too poor, so the model is dropped

Result:

accuracy_score:0.8508

r2_score:0.1335

Accuracy is high, but the goodness of fit (R²) is very poor, so I did not consider this a good model.
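One thing worth keeping in mind when reading these two numbers together: when R² is computed on hard 0/1 predictions, it is fully determined by the accuracy and the share of positives `p` in the test labels, via R² = 1 - (1 - accuracy) / (p(1 - p)). The sketch below verifies this identity on invented labels. It is an observation about the metric, not part of the original analysis.

```python
import numpy as np
from sklearn.metrics import accuracy_score, r2_score

# Hypothetical labels and hard predictions
y_true = np.array([1, 0, 1, 1, 0, 0, 1, 0])
y_pred = np.array([1, 0, 0, 1, 0, 1, 1, 0])

acc = accuracy_score(y_true, y_pred)
p = y_true.mean()                                 # share of positives in the true labels

r2_sklearn = r2_score(y_true, y_pred)
r2_identity = 1 - (1 - acc) / (p * (1 - p))       # squared error on 0/1 labels = error count
print(acc, r2_sklearn, r2_identity)
```

In this toy case an accuracy of 0.75 on balanced classes gives an R² of exactly 0, so a low R² on 0/1 predictions can coexist with a reasonable classifier, especially when one class is rare.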

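I also wondered whether having too many variables was interfering with the model, so I used PCA to reduce the features to 5 components. The R² then went negative, so I did not adopt that either.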
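The PCA code is not shown in the post. As a rough sketch of what the reduction step might look like with scikit-learn, assuming 14 input features as in `x` and using random numbers in place of the real data:

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)
x_toy = rng.normal(size=(300, 14))     # stand-in for the 14 feature columns

pca = PCA(n_components=5)              # keep the 5 directions with the most variance
x_reduced = pca.fit_transform(x_toy)
explained = pca.explained_variance_ratio_.sum()   # share of total variance retained
print(x_reduced.shape, round(explained, 3))
```

Checking `explained_variance_ratio_` is a quick way to see how much information 5 components retain. If that share is low, a drop in model quality after the reduction is not surprising.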

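III. Discussion of results

1. XGBoost vs NB

- Comparing the confusion matrices, XGB is more accurate when predicting “no rain”, while NB is more accurate when predicting “rain”.

- Judged by recall, that is, the share of actual “yes” cases that are predicted correctly, NB comes out ahead.

- Which model to choose depends on which metric matters more to you.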

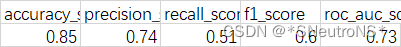

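2. A blessing in disguise: a failed SVM experiment

Some people also use SVM to predict rain. SVM does have nice properties, but it is not well suited to large datasets.

So what happens if you insist on running it on a large dataset?

It is slow, but it does finish. My dataset here was (50000+) x 7. The results are as follows:

Oddly enough, though, it hangs when run on the dimension-reduced data =_=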
