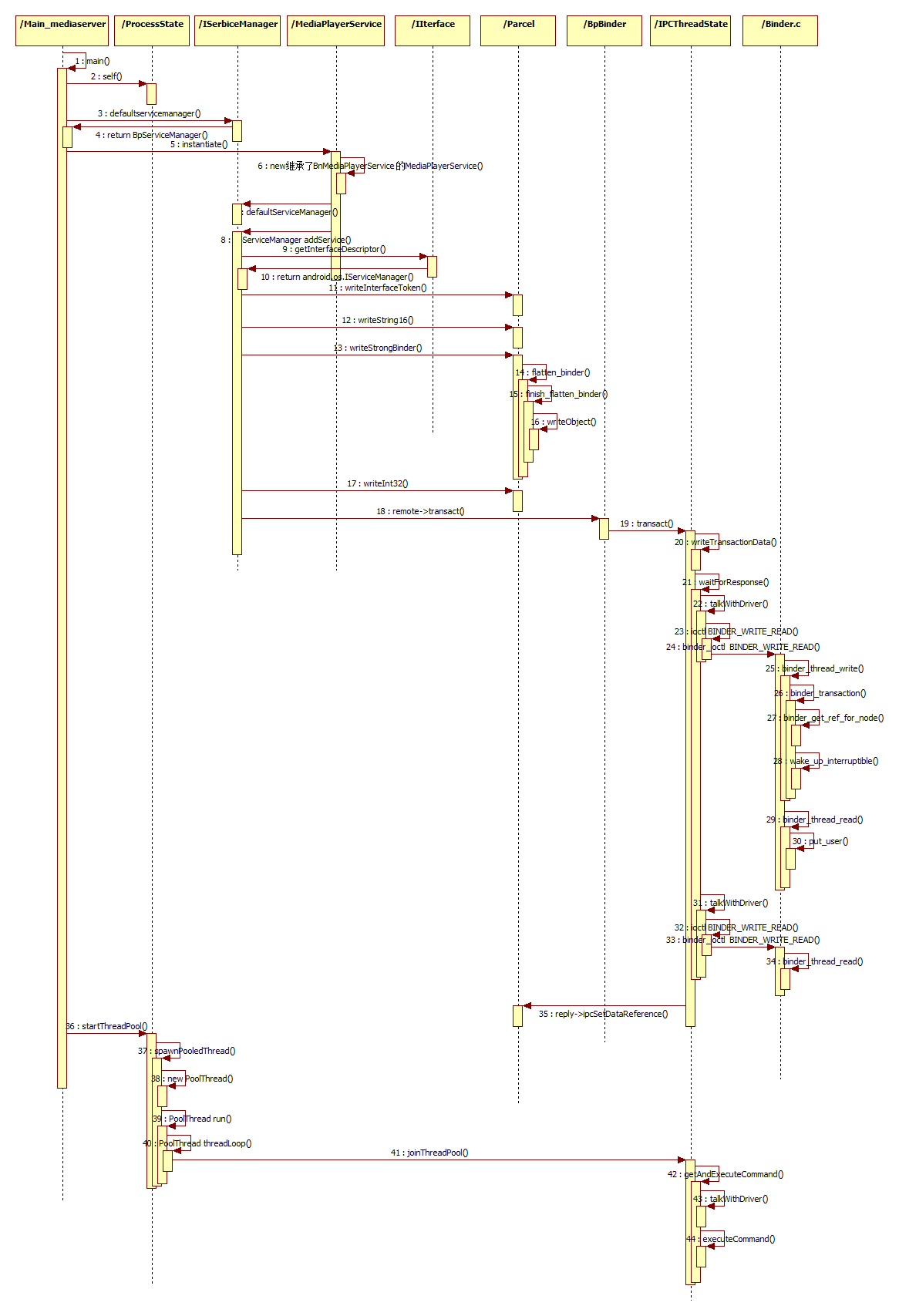

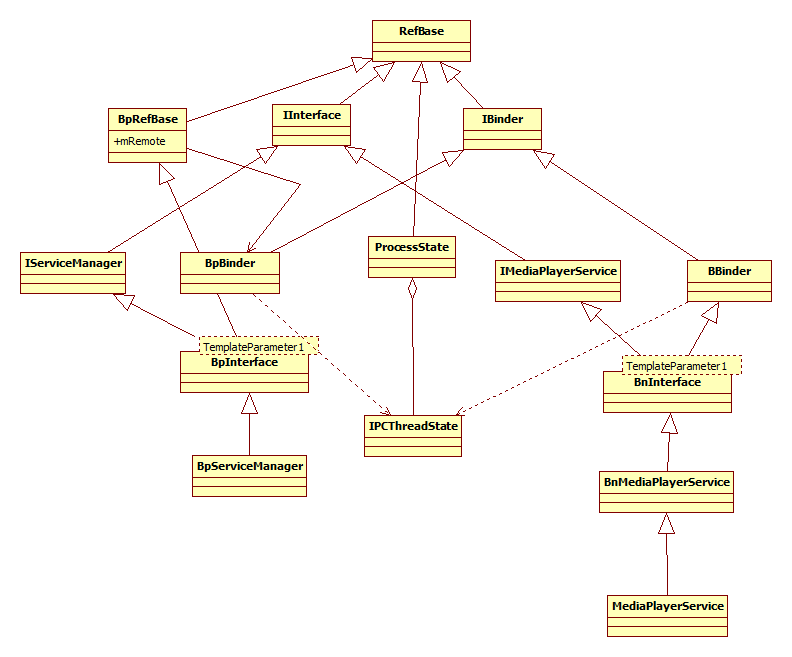

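In Android's Binder inter-process communication (IPC) mechanism, a Service component runs inside a Server process. When the Server process starts, it first registers the Service component with Service Manager, and then starts a Binder thread pool to wait for and handle communication requests from Client components, that is, from Client processes. The rest of this post follows the source code to see how the MediaPlayerService component starts up.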
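Before reading the real source, the "register first, then serve" ordering can be sketched with a toy model. Everything below (`ToyServiceManager`, `startToyMediaServer`, the handler signature) is hypothetical and only illustrates the ordering; it does not use the Binder driver or any Android API.

```cpp
#include <functional>
#include <map>
#include <string>
#include <utility>

// Hypothetical stand-in for Service Manager: a name -> handler registry.
class ToyServiceManager {
public:
    using Handler = std::function<std::string(const std::string&)>;

    // Plays the role of addService: returns false if the name is already taken.
    bool addService(const std::string& name, Handler handler) {
        return services_.emplace(name, std::move(handler)).second;
    }

    // Plays the role of a client looking a service up and calling it.
    bool call(const std::string& name, const std::string& request,
              std::string* reply) const {
        auto it = services_.find(name);
        if (it == services_.end()) return false;  // not registered yet
        *reply = it->second(request);
        return true;
    }

private:
    std::map<std::string, Handler> services_;
};

// First step of server startup: register before serving any request.
inline bool startToyMediaServer(ToyServiceManager& sm) {
    return sm.addService("media.player", [](const std::string& req) {
        return "played:" + req;
    });
}
```

A client call made before `startToyMediaServer` runs fails because the name cannot be resolved, which is why registration comes first in the real startup sequence as well.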

int main(int argc __unused, char** argv)

{

//Obtain a ProcessState instance

sp<ProcessState> proc(ProcessState::self());

//Obtain the Service Manager proxy object

sp<IServiceManager> sm = defaultServiceManager();

ALOGI("ServiceManager: %p", sm.get());

AudioFlinger::instantiate();

}

}

void MediaPlayerService::instantiate() {

//defaultServiceManager returns the Service Manager proxy object, a BpServiceManager, so the call below invokes BpServiceManager::addService

defaultServiceManager()->addService(

String16("media.player"), new MediaPlayerService());

}

MediaPlayerService::MediaPlayerService()

{

ALOGV("MediaPlayerService created");

mNextConnId = 1;

mBatteryAudio.refCount = 0;

for (int i = 0; i < NUM_AUDIO_DEVICES; i++) {

mBatteryAudio.deviceOn[i] = 0;

mBatteryAudio.lastTime[i] = 0;

mBatteryAudio.totalTime[i] = 0;

}

// speaker is on by default

mBatteryAudio.deviceOn[SPEAKER] = 1;

// reset battery stats

// if the mediaserver has crashed, battery stats could be left

// in bad state, reset the state upon service start.

const sp<IServiceManager> sm(defaultServiceManager());

if (sm != NULL) {

const String16 name("batterystats");

sp<IBatteryStats> batteryStats =

interface_cast<IBatteryStats>(sm->getService(name));

if (batteryStats != NULL) {

batteryStats->noteResetVideo();

batteryStats->noteResetAudio();

}

}

MediaPlayerFactory::registerBuiltinFactories();

}

void MediaPlayerService::instantiate() {

//defaultServiceManager returns the Service Manager proxy object, a BpServiceManager, so the call below invokes BpServiceManager::addService

defaultServiceManager()->addService(

String16("media.player"), new MediaPlayerService());

}

virtual status_t addService(const String16& name, const sp<IBinder>& service,

bool allowIsolated)

{

//Parcels that carry the IPC request data and the reply

Parcel data, reply;

//Write the Binder IPC request header

data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor());

//Write the name of the Service component being registered

data.writeString16(name);

//Wrap the Service component in a flat_binder_object struct

data.writeStrongBinder(service);

}

// Write RPC headers. (previously just the interface token)

status_t Parcel::writeInterfaceToken(const String16& interface)

{

writeInt32(IPCThreadState::self()->getStrictModePolicy() |

STRICT_MODE_PENALTY_GATHER);

// currently the interface identification token is just its name as a string

return writeString16(interface);

}

status_t Parcel::writeStrongBinder(const sp<IBinder>& val)

{

return flatten_binder(ProcessState::self(), val, this);

}

status_t flatten_binder(const sp<ProcessState>& /*proc*/,

const wp<IBinder>& binder, Parcel* out)

{

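//Note: this listing is the wp<IBinder> overload; writeStrongBinder passes an sp<IBinder>, so the sp<IBinder> overload is the one actually invoked, and it uses BINDER_TYPE_BINDER and BINDER_TYPE_HANDLE instead of the WEAK variants

//The flat_binder_object struct obj can describe either a Binder entity object or a Binder reference object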

flat_binder_object obj;

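//0x7f means that the Server thread handling an IPC request for the Service component being registered must run at a priority no lower than 0x7f

//FLAT_BINDER_FLAG_ACCEPTS_FDS means that IPC data containing file descriptors may be passed to the Service component being registered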

obj.flags = 0x7f | FLAT_BINDER_FLAG_ACCEPTS_FDS;

if (binder != NULL) {

sp<IBinder> real = binder.promote();

if (real != NULL) {

//binder points to the Service component, which inherits from BBinder; BBinder describes a Binder local object, and localBinder returns the Binder local object interface

IBinder *local = real->localBinder();

if (!local) {

BpBinder *proxy = real->remoteBinder();

if (proxy == NULL) {

ALOGE("null proxy");

}

const int32_t handle = proxy ? proxy->handle() : 0;

//obj describes a Binder reference object

obj.type = BINDER_TYPE_WEAK_HANDLE;

obj.binder = 0; /* Don't pass uninitialized stack data to a remote process */

obj.handle = handle;

obj.cookie = 0;

} else {

//obj describes a Binder entity object

obj.type = BINDER_TYPE_WEAK_BINDER;

obj.binder = reinterpret_cast<uintptr_t>(binder.get_refs());

obj.cookie = reinterpret_cast<uintptr_t>(binder.unsafe_get());

}

return finish_flatten_binder(real, obj, out);

}

// XXX How to deal? In order to flatten the given binder,

// we need to probe it for information, which requires a primary

// reference... but we don't have one.

//

// The OpenBinder implementation uses a dynamic_cast<> here,

// but we can't do that with the different reference counting

// implementation we are using.

ALOGE("Unable to unflatten Binder weak reference!");

obj.type = BINDER_TYPE_BINDER;

obj.binder = 0;

obj.cookie = 0;

return finish_flatten_binder(NULL, obj, out);

} else {

obj.type = BINDER_TYPE_BINDER;

obj.binder = 0;

obj.cookie = 0;

return finish_flatten_binder(NULL, obj, out);

}

}
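The local-versus-proxy decision made while flattening can be sketched in isolation. This is a simplified model, not the libbinder code: `ToyBinder`, `ToyFlatObject`, `ToyType` and `toyFlatten` are hypothetical names, the sketch uses the strong type tags rather than the weak ones shown in the listing above, and the flag value `0x100` is an assumption made for illustration.

```cpp
#include <cstdint>

// Hypothetical tags standing in for BINDER_TYPE_BINDER / BINDER_TYPE_HANDLE.
enum class ToyType { Binder, Handle };

// Hypothetical, simplified stand-in for flat_binder_object.
struct ToyFlatObject {
    ToyType type;
    uint32_t flags;
    uintptr_t binder;  // meaningful for local objects only
    uint32_t handle;   // meaningful for proxies only
    uintptr_t cookie;  // meaningful for local objects only
};

// Hypothetical stand-in for IBinder: a local object or a proxy with a handle.
struct ToyBinder {
    bool isLocal;
    uint32_t handle;  // used only when isLocal is false
};

constexpr uint32_t kToyMinPriority = 0x7f;  // same value as in the listing
constexpr uint32_t kToyAcceptsFds = 0x100;  // assumed value for illustration

inline ToyFlatObject toyFlatten(const ToyBinder* b) {
    ToyFlatObject obj{};  // zero-initialize so no stale stack data leaks out
    obj.flags = kToyMinPriority | kToyAcceptsFds;
    if (b == nullptr) {
        obj.type = ToyType::Binder;  // null binder: entity tag, zeroed fields
        return obj;
    }
    if (b->isLocal) {
        // Local object: the sender's own addresses identify the entity.
        obj.type = ToyType::Binder;
        obj.binder = reinterpret_cast<uintptr_t>(b);
        obj.cookie = reinterpret_cast<uintptr_t>(b);
    } else {
        // Proxy: only the driver-assigned handle is meaningful.
        obj.type = ToyType::Handle;
        obj.handle = b->handle;
    }
    return obj;
}
```

For MediaPlayerService the object is local to the mediaserver process, so the entity branch is the one that applies during registration.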

inline static status_t finish_flatten_binder(

const sp<IBinder>& /*binder*/, const flat_binder_object& flat, Parcel* out)

{

//Write the flat_binder_object into the Parcel and record its offset

return out->writeObject(flat, false);

}

virtual status_t addService(const String16& name, const sp<IBinder>& service,

bool allowIsolated)

{

//Parcels that carry the IPC request data and the reply

Parcel data, reply;

//Write the Binder IPC request header

data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor());

//Write the name of the Service component being registered

data.writeString16(name);

//Wrap the Service component in a flat_binder_object struct

data.writeStrongBinder(service);

data.writeInt32(allowIsolated ? 1 : 0);

status_t err = remote()->transact(ADD_SERVICE_TRANSACTION, data, &reply);

return err == NO_ERROR ? reply.readExceptionCode() : err;

}
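The write order used by addService (header, name, binder object, flag) and the separate bookkeeping of object offsets can be sketched with a toy parcel. `ToyParcel` and `toyMarshalAddService` are hypothetical; the real Parcel writes UTF-16 strings with padding and a full flat_binder_object, which this sketch replaces with a length-prefixed byte string and an opaque 8-byte value.

```cpp
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical, heavily simplified stand-in for Parcel.
class ToyParcel {
public:
    void writeInt32(int32_t v) { append(&v, sizeof(v)); }

    // Length-prefixed string; the real Parcel writes padded UTF-16.
    void writeString(const std::string& s) {
        writeInt32(static_cast<int32_t>(s.size()));
        append(s.data(), s.size());
    }

    // Objects are written inline and their offsets recorded separately,
    // so the receiver can locate them without parsing the whole payload.
    void writeObject(uint64_t opaque) {
        objectOffsets_.push_back(data_.size());
        append(&opaque, sizeof(opaque));
    }

    const std::vector<uint8_t>& data() const { return data_; }
    const std::vector<size_t>& objectOffsets() const { return objectOffsets_; }

private:
    void append(const void* p, size_t n) {
        const uint8_t* b = static_cast<const uint8_t*>(p);
        data_.insert(data_.end(), b, b + n);
    }

    std::vector<uint8_t> data_;
    std::vector<size_t> objectOffsets_;
};

// Same write order as the addService listing above.
inline ToyParcel toyMarshalAddService(const std::string& name,
                                      uint64_t binderObject,
                                      bool allowIsolated) {
    ToyParcel p;
    p.writeInt32(0);                              // strict-mode policy placeholder
    p.writeString("android.os.IServiceManager");  // interface token
    p.writeString(name);                          // service name
    p.writeObject(binderObject);                  // the flattened binder
    p.writeInt32(allowIsolated ? 1 : 0);
    return p;
}
```

The offsets array is what later lets the driver find the embedded binder object inside the payload and translate it for the receiving process.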

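//The first parameter, code, is ADD_SERVICE_TRANSACTION

//The second parameter, data, holds the IPC data to pass to the Binder driver

//The third parameter, reply, is an output parameter that receives the IPC result

//The fourth parameter, flags, says whether the IPC request is synchronous or asynchronous; it defaults to 0, meaning synchronous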

status_t BpBinder::transact(

uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)

{

// Once a binder has died, it will never come back to life.

if (mAlive) {

status_t status = IPCThreadState::self()->transact(

mHandle, code, data, reply, flags);

if (status == DEAD_OBJECT) mAlive = 0;

return status;

}

return DEAD_OBJECT;

}

status_t IPCThreadState::transact(int32_t handle,

uint32_t code, const Parcel& data,

Parcel* reply, uint32_t flags)

{

status_t err = data.errorCheck();

flags |= TF_ACCEPT_FDS;

IF_LOG_TRANSACTIONS() {

TextOutput::Bundle _b(alog);

alog << "BC_TRANSACTION thr " << (void*)pthread_self() << " / hand "

<< handle << " / code " << TypeCode(code) << ": "

<< indent << data << dedent << endl;

}

if (err == NO_ERROR) {

LOG_ONEWAY(">>>> SEND from pid %d uid %d %s", getpid(), getuid(),

(flags & TF_ONE_WAY) == 0 ? "READ REPLY" : "ONE WAY");

//Pack data into a binder_transaction_data struct

err = writeTransactionData(BC_TRANSACTION, flags, handle, code, data, NULL);

}

status_t IPCThreadState::writeTransactionData(int32_t cmd, uint32_t binderFlags,

int32_t handle, uint32_t code, const Parcel& data, status_t* statusBuffer)

{

binder_transaction_data tr;

//Initialize the binder_transaction_data struct declared above

tr.target.ptr = 0; /* Don't pass uninitialized stack data to a remote process */

tr.target.handle = handle;

tr.code = code;

tr.flags = binderFlags;

tr.cookie = 0;

tr.sender_pid = 0;

tr.sender_euid = 0;

const status_t err = data.errorCheck();

if (err == NO_ERROR) {

tr.data_size = data.ipcDataSize();

tr.data.ptr.buffer = data.ipcData();

tr.offsets_size = data.ipcObjectsCount()*sizeof(binder_size_t);

tr.data.ptr.offsets = data.ipcObjects();

} else if (statusBuffer) {

tr.flags |= TF_STATUS_CODE;

*statusBuffer = err;

tr.data_size = sizeof(status_t);

tr.data.ptr.buffer = reinterpret_cast<uintptr_t>(statusBuffer);

tr.offsets_size = 0;

tr.data.ptr.offsets = 0;

} else {

return (mLastError = err);

}

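//Write the command protocol number cmd (BC_TRANSACTION here) and the binder_transaction_data struct tr into mOut, the command protocol buffer held by IPCThreadState

//This indicates that a BC_TRANSACTION command protocol is waiting to be sent to the Binder driver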

mOut.writeInt32(cmd);

mOut.write(&tr, sizeof(tr));

return NO_ERROR;

}
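The "command word followed by a raw struct" layout that writeTransactionData places in mOut can be sketched with a plain byte vector. The names below (`ToyTransactionData`, `kToyBcTransaction`, `toyWriteTransaction`, `toyReadTransaction`) are hypothetical; the real command code and struct layout come from the kernel's Binder header.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical command code; the real one is BC_TRANSACTION.
constexpr int32_t kToyBcTransaction = 1;

// Hypothetical, reduced stand-in for binder_transaction_data.
struct ToyTransactionData {
    uint32_t handle;
    uint32_t code;
    uint32_t flags;
    uint64_t dataSize;
};

// Sender side: append the command word, then the raw struct bytes.
inline void toyWriteTransaction(std::vector<uint8_t>& out,
                                const ToyTransactionData& tr) {
    const int32_t cmd = kToyBcTransaction;
    const uint8_t* c = reinterpret_cast<const uint8_t*>(&cmd);
    out.insert(out.end(), c, c + sizeof(cmd));
    const uint8_t* t = reinterpret_cast<const uint8_t*>(&tr);
    out.insert(out.end(), t, t + sizeof(tr));
}

// Receiver side: read one command word, then the struct that follows it.
// Returns false if the buffer is too short or the command is unknown.
inline bool toyReadTransaction(const std::vector<uint8_t>& in, size_t* pos,
                               ToyTransactionData* tr) {
    int32_t cmd;
    if (*pos > in.size() || in.size() - *pos < sizeof(cmd) + sizeof(*tr)) {
        return false;
    }
    std::memcpy(&cmd, in.data() + *pos, sizeof(cmd));
    if (cmd != kToyBcTransaction) return false;
    std::memcpy(tr, in.data() + *pos + sizeof(cmd), sizeof(*tr));
    *pos += sizeof(cmd) + sizeof(*tr);
    return true;
}
```

Note that nothing is sent at this point: the command only sits in the output buffer until a later call hands the buffer to the driver.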

status_t IPCThreadState::transact(int32_t handle,

uint32_t code, const Parcel& data,

Parcel* reply, uint32_t flags)

{

status_t err = data.errorCheck();

flags |= TF_ACCEPT_FDS;

IF_LOG_TRANSACTIONS() {

TextOutput::Bundle _b(alog);

alog << "BC_TRANSACTION thr " << (void*)pthread_self() << " / hand "

<< handle << " / code " << TypeCode(code) << ": "

<< indent << data << dedent << endl;

}

if (err == NO_ERROR) {

LOG_ONEWAY(">>>> SEND from pid %d uid %d %s", getpid(), getuid(),

(flags & TF_ONE_WAY) == 0 ? "READ REPLY" : "ONE WAY");

//Pack data into a binder_transaction_data struct

err = writeTransactionData(BC_TRANSACTION, flags, handle, code, data, NULL);

}

if (err != NO_ERROR) {

if (reply) reply->setError(err);

return (mLastError = err);

}

if ((flags & TF_ONE_WAY) == 0) {

#if 0

if (code == 4) { // relayout

ALOGI(">>>>>> CALLING transaction 4");

} else {

ALOGI(">>>>>> CALLING transaction %d", code);

}

#endif

if (reply) {

//Send the BC_TRANSACTION command protocol to the Binder driver

err = waitForResponse(reply);

} else {

Parcel fakeReply;

err = waitForResponse(&fakeReply);

}

}

status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)

{

int32_t cmd;

int32_t err;

while (1) {

//Call talkWithDriver in a loop to interact with the Binder driver: send it the command protocol and wait for the IPC result

if ((err=talkWithDriver()) < NO_ERROR) break;

}

status_t IPCThreadState::talkWithDriver(bool doReceive)

{

if (mProcess->mDriverFD <= 0) {

return -EBADF;

}

binder_write_read bwr;

// Is the read buffer empty?

const bool needRead = mIn.dataPosition() >= mIn.dataSize();

// We don't want to write anything if we are still reading

// from data left in the input buffer and the caller

// has requested to read the next data.

const size_t outAvail = (!doReceive || needRead) ? mOut.dataSize() : 0;

bwr.write_size = outAvail;

//Bind the write side of binder_write_read to mOut, IPCThreadState's output buffer (data going to the Binder driver)

bwr.write_buffer = (uintptr_t)mOut.data();

// This is what we'll read.

if (doReceive && needRead) {

bwr.read_size = mIn.dataCapacity();

//Bind the read side of binder_write_read to mIn, IPCThreadState's input buffer (data coming from the Binder driver)

bwr.read_buffer = (uintptr_t)mIn.data();

} else {

bwr.read_size = 0;

bwr.read_buffer = 0;

}

IF_LOG_COMMANDS() {

TextOutput::Bundle _b(alog);

if (outAvail != 0) {

alog << "Sending commands to driver: " << indent;

const void* cmds = (const void*)bwr.write_buffer;

const void* end = ((const uint8_t*)cmds)+bwr.write_size;

alog << HexDump(cmds, bwr.write_size) << endl;

while (cmds < end) cmds = printCommand(alog, cmds);

alog << dedent;

}

alog << "Size of receive buffer: " << bwr.read_size

<< ", needRead: " << needRead << ", doReceive: " << doReceive << endl;

}

// Return immediately if there is nothing to do.

if ((bwr.write_size == 0) && (bwr.read_size == 0)) return NO_ERROR;

bwr.write_consumed = 0;

bwr.read_consumed = 0;

status_t err;

do {

IF_LOG_COMMANDS() {

alog << "About to read/write, write size = " << mOut.dataSize() << endl;

}

#if defined(HAVE_ANDROID_OS)

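//Interact with the Binder driver in a loop through the BINDER_WRITE_READ ioctl command

//The driver first calls binder_thread_write to handle the command protocols sent by the process, then calls binder_thread_read to fetch the return protocols that the driver sends back to the process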

if (ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) >= 0)

}

switch (cmd) {

case BINDER_WRITE_READ: {

struct binder_write_read bwr;

if (size != sizeof(struct binder_write_read)) {

ret = -EINVAL;

goto err;

}

if (copy_from_user(&bwr, ubuf, sizeof(bwr))) {

ret = -EFAULT;

goto err;

}

if (binder_debug_mask & BINDER_DEBUG_READ_WRITE)

printk(KERN_INFO "binder: %d:%d write %ld at %08lx, read %ld at %08lx\n",

proc->pid, thread->pid, bwr.write_size, bwr.write_buffer, bwr.read_size, bwr.read_buffer);

if (bwr.write_size > 0) {

ret = binder_thread_write(proc, thread, (void __user *)bwr.write_buffer, bwr.write_size, &bwr.write_consumed);

if (ret < 0) {

bwr.read_consumed = 0;

if (copy_to_user(ubuf, &bwr, sizeof(bwr)))

ret = -EFAULT;

goto err;

}

}

if (bwr.read_size > 0) {

ret = binder_thread_read(proc, thread, (void __user *)bwr.read_buffer, bwr.read_size, &bwr.read_consumed, filp->f_flags & O_NONBLOCK);

if (!list_empty(&proc->todo))

wake_up_interruptible(&proc->wait);

if (ret < 0) {

if (copy_to_user(ubuf, &bwr, sizeof(bwr)))

ret = -EFAULT;

goto err;

}

}

if (binder_debug_mask & BINDER_DEBUG_READ_WRITE)

printk(KERN_INFO "binder: %d:%d wrote %ld of %ld, read return %ld of %ld\n",

proc->pid, thread->pid, bwr.write_consumed, bwr.write_size, bwr.read_consumed, bwr.read_size);

if (copy_to_user(ubuf, &bwr, sizeof(bwr))) {

ret = -EFAULT;

goto err;

}

break;

}

int

binder_thread_write(struct binder_proc *proc, struct binder_thread *thread,

void __user *buffer, int size, signed long *consumed)

{

case BC_TRANSACTION:

case BC_REPLY: {

struct binder_transaction_data tr;

if (copy_from_user(&tr, ptr, sizeof(tr)))

return -EFAULT;

ptr += sizeof(tr);

binder_transaction(proc, thread, &tr, cmd == BC_REPLY);

break;

}

static void

binder_transaction(struct binder_proc *proc, struct binder_thread *thread,

struct binder_transaction_data *tr, int reply)

{

struct binder_transaction *t;

struct binder_work *tcomplete;

size_t *offp, *off_end;

struct binder_proc *target_proc;

struct binder_thread *target_thread = NULL;

struct binder_node *target_node = NULL;

struct list_head *target_list;

wait_queue_head_t *target_wait;

struct binder_transaction *in_reply_to = NULL;

struct binder_transaction_log_entry *e;

uint32_t return_error;

e = binder_transaction_log_add(&binder_transaction_log);

e->call_type = reply ? 2 : !!(tr->flags & TF_ONE_WAY);

e->from_proc = proc->pid;

e->from_thread = thread->pid;

e->target_handle = tr->target.handle;

e->data_size = tr->data_size;

e->offsets_size = tr->offsets_size;

if (reply) {

//Handle the BC_REPLY command protocol

in_reply_to = thread->transaction_stack;

if (in_reply_to == NULL) {

binder_user_error("binder: %d:%d got reply transaction "

"with no transaction stack\n",

proc->pid, thread->pid);

return_error = BR_FAILED_REPLY;

goto err_empty_call_stack;

}

binder_set_nice(in_reply_to->saved_priority);

if (in_reply_to->to_thread != thread) {

binder_user_error("binder: %d:%d got reply transaction "

"with bad transaction stack,"

" transaction %d has target %d:%d\n",

proc->pid, thread->pid, in_reply_to->debug_id,

in_reply_to->to_proc ?

in_reply_to->to_proc->pid : 0,

in_reply_to->to_thread ?

in_reply_to->to_thread->pid : 0);

return_error = BR_FAILED_REPLY;

in_reply_to = NULL;

goto err_bad_call_stack;

}

thread->transaction_stack = in_reply_to->to_parent;

target_thread = in_reply_to->from;

if (target_thread == NULL) {

return_error = BR_DEAD_REPLY;

goto err_dead_binder;

}

if (target_thread->transaction_stack != in_reply_to) {

binder_user_error("binder: %d:%d got reply transaction "

"with bad target transaction stack %d, "

"expected %d\n",

proc->pid, thread->pid,

target_thread->transaction_stack ?

target_thread->transaction_stack->debug_id : 0,

in_reply_to->debug_id);

return_error = BR_FAILED_REPLY;

in_reply_to = NULL;

target_thread = NULL;

goto err_dead_binder;

}

target_proc = target_thread->proc;

} else {

//Handle the BC_TRANSACTION command protocol

if (tr->target.handle) {

struct binder_ref *ref;

ref = binder_get_ref(proc, tr->target.handle);

if (ref == NULL) {

binder_user_error("binder: %d:%d got "

"transaction to invalid handle\n",

proc->pid, thread->pid);

return_error = BR_FAILED_REPLY;

goto err_invalid_target_handle;

}

target_node = ref->node;

} else {

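//Main_mediaserver sends the BC_TRANSACTION command protocol to the driver in order to register the MediaPlayerService component with Service Manager,

//so in the binder_transaction_data struct tr the target Binder object is a Binder reference object whose handle value is 0.

//The statement below therefore points target_node at binder_context_mgr_node, the Binder entity object that represents Service Manager.

//binder_context_mgr_node is created when Service Manager starts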

target_node = binder_context_mgr_node;

if (target_node == NULL) {

return_error = BR_DEAD_REPLY;

goto err_no_context_mgr_node;

}

}

e->to_node = target_node->debug_id;

//Find the target process

target_proc = target_node->proc;

if (target_proc == NULL) {

return_error = BR_DEAD_REPLY;

goto err_dead_binder;

}

if (!(tr->flags & TF_ONE_WAY) && thread->transaction_stack) {

struct binder_transaction *tmp;

tmp = thread->transaction_stack;

if (tmp->to_thread != thread) {

binder_user_error("binder: %d:%d got new "

"transaction with bad transaction stack"

", transaction %d has target %d:%d\n",

proc->pid, thread->pid, tmp->debug_id,

tmp->to_proc ? tmp->to_proc->pid : 0,

tmp->to_thread ?

tmp->to_thread->pid : 0);

return_error = BR_FAILED_REPLY;

goto err_bad_call_stack;

}

while (tmp) {

if (tmp->from && tmp->from->proc == target_proc)

//Find the target thread

target_thread = tmp->from;

tmp = tmp->from_parent;

}

}

}

if (target_thread) {

e->to_thread = target_thread->pid;

target_list = &target_thread->todo;

target_wait = &target_thread->wait;

} else {

target_list = &target_proc->todo;

target_wait = &target_proc->wait;

}

e->to_proc = target_proc->pid;

/* TODO: reuse incoming transaction for reply */

//Allocate a binder_transaction struct t

t = kzalloc(sizeof(*t), GFP_KERNEL);

if (t == NULL) {

return_error = BR_FAILED_REPLY;

goto err_alloc_t_failed;

}

binder_stats.obj_created[BINDER_STAT_TRANSACTION]++;

//Allocate a binder_work struct tcomplete

tcomplete = kzalloc(sizeof(*tcomplete), GFP_KERNEL);

if (tcomplete == NULL) {

return_error = BR_FAILED_REPLY;

goto err_alloc_tcomplete_failed;

}

binder_stats.obj_created[BINDER_STAT_TRANSACTION_COMPLETE]++;

t->debug_id = ++binder_last_id;

e->debug_id = t->debug_id;

if (binder_debug_mask & BINDER_DEBUG_TRANSACTION) {

if (reply)

printk(KERN_INFO "binder: %d:%d BC_REPLY %d -> %d:%d, "

"data %p-%p size %zd-%zd\n",

proc->pid, thread->pid, t->debug_id,

target_proc->pid, target_thread->pid,

tr->data.ptr.buffer, tr->data.ptr.offsets,

tr->data_size, tr->offsets_size);

else

printk(KERN_INFO "binder: %d:%d BC_TRANSACTION %d -> "

"%d - node %d, data %p-%p size %zd-%zd\n",

proc->pid, thread->pid, t->debug_id,

target_proc->pid, target_node->debug_id,

tr->data.ptr.buffer, tr->data.ptr.offsets,

tr->data_size, tr->offsets_size);

}

if (!reply && !(tr->flags & TF_ONE_WAY))

t->from = thread;

else

t->from = NULL;

t->sender_euid = proc->tsk->cred->euid;

t->to_proc = target_proc;

t->to_thread = target_thread;

t->code = tr->code;

t->flags = tr->flags;

t->priority = task_nice(current);

t->buffer = binder_alloc_buf(target_proc, tr->data_size,

tr->offsets_size, !reply && (t->flags & TF_ONE_WAY));

if (t->buffer == NULL) {

return_error = BR_FAILED_REPLY;

goto err_binder_alloc_buf_failed;

}

t->buffer->allow_user_free = 0;

t->buffer->debug_id = t->debug_id;

t->buffer->transaction = t;

t->buffer->target_node = target_node;

if (target_node)

binder_inc_node(target_node, 1, 0, NULL);

offp = (size_t *)(t->buffer->data + ALIGN(tr->data_size, sizeof(void *)));

if (copy_from_user(t->buffer->data, tr->data.ptr.buffer, tr->data_size)) {

binder_user_error("binder: %d:%d got transaction with invalid "

"data ptr\n", proc->pid, thread->pid);

return_error = BR_FAILED_REPLY;

goto err_copy_data_failed;

}

if (copy_from_user(offp, tr->data.ptr.offsets, tr->offsets_size)) {

binder_user_error("binder: %d:%d got transaction with invalid "

"offsets ptr\n", proc->pid, thread->pid);

return_error = BR_FAILED_REPLY;

goto err_copy_data_failed;

}

if (!IS_ALIGNED(tr->offsets_size, sizeof(size_t))) {

binder_user_error("binder: %d:%d got transaction with "

"invalid offsets size, %zd\n",

proc->pid, thread->pid, tr->offsets_size);

return_error = BR_FAILED_REPLY;

goto err_bad_offset;

}

off_end = (void *)offp + tr->offsets_size;

for (; offp < off_end; offp++) {

struct flat_binder_object *fp;

if (*offp > t->buffer->data_size - sizeof(*fp) ||

t->buffer->data_size < sizeof(*fp) ||

!IS_ALIGNED(*offp, sizeof(void *))) {

binder_user_error("binder: %d:%d got transaction with "

"invalid offset, %zd\n",

proc->pid, thread->pid, *offp);

return_error = BR_FAILED_REPLY;

goto err_bad_offset;

}

fp = (struct flat_binder_object *)(t->buffer->data + *offp);

switch (fp->type) {

case BINDER_TYPE_BINDER:

case BINDER_TYPE_WEAK_BINDER: {

struct binder_ref *ref;

struct binder_node *node = binder_get_node(proc, fp->binder);

if (node == NULL) {

//Create a new Binder entity object node

node = binder_new_node(proc, fp->binder, fp->cookie);

if (node == NULL) {

return_error = BR_FAILED_REPLY;

goto err_binder_new_node_failed;

}

node->min_priority = fp->flags & FLAT_BINDER_FLAG_PRIORITY_MASK;

node->accept_fds = !!(fp->flags & FLAT_BINDER_FLAG_ACCEPTS_FDS);

}

if (fp->cookie != node->cookie) {

binder_user_error("binder: %d:%d sending u%p "

"node %d, cookie mismatch %p != %p\n",

proc->pid, thread->pid,

fp->binder, node->debug_id,

fp->cookie, node->cookie);

goto err_binder_get_ref_for_node_failed;

}

//Create a Binder reference object in the target process (the Service Manager process) that refers to the Service component (MediaPlayerService)

ref = binder_get_ref_for_node(target_proc, node);

if (ref == NULL) {

return_error = BR_FAILED_REPLY;

goto err_binder_get_ref_for_node_failed;

}

if (fp->type == BINDER_TYPE_BINDER)

fp->type = BINDER_TYPE_HANDLE;

else

fp->type = BINDER_TYPE_WEAK_HANDLE;

fp->handle = ref->desc;

binder_inc_ref(ref, fp->type == BINDER_TYPE_HANDLE, &thread->todo);

if (binder_debug_mask & BINDER_DEBUG_TRANSACTION)

printk(KERN_INFO " node %d u%p -> ref %d desc %d\n",

node->debug_id, node->ptr, ref->debug_id, ref->desc);

} break;

default:

binder_user_error("binder: %d:%d got transactio"

"n with invalid object type, %lx\n",

proc->pid, thread->pid, fp->type);

return_error = BR_FAILED_REPLY;

goto err_bad_object_type;

}

}

if (reply) {

BUG_ON(t->buffer->async_transaction != 0);

binder_pop_transaction(target_thread, in_reply_to);

} else if (!(t->flags & TF_ONE_WAY)) {

BUG_ON(t->buffer->async_transaction != 0);

t->need_reply = 1;

t->from_parent = thread->transaction_stack;

thread->transaction_stack = t;

} else {

BUG_ON(target_node == NULL);

BUG_ON(t->buffer->async_transaction != 1);

if (target_node->has_async_transaction) {

target_list = &target_node->async_todo;

target_wait = NULL;

} else

target_node->has_async_transaction = 1;

}

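//Wrap the binder_transaction struct t as a work item of type BINDER_WORK_TRANSACTION and append it to the target's todo list (the target thread's if one was found, otherwise the target process's)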

t->work.type = BINDER_WORK_TRANSACTION;

list_add_tail(&t->work.entry, target_list);

//Wrap the binder_work struct tcomplete as a work item of type BINDER_WORK_TRANSACTION_COMPLETE and append it to the source thread's todo list

tcomplete->type = BINDER_WORK_TRANSACTION_COMPLETE;

list_add_tail(&tcomplete->entry, &thread->todo);

if (target_wait)

//Wake up the target to handle the work item

wake_up_interruptible(target_wait);

//From here on, the source thread and the target thread process the work items in their own todo lists concurrently

return;
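The routing performed by binder_transaction, where handle 0 resolves to Service Manager and two work items are queued, can be sketched with a toy driver. All names here (`ToyDriver`, `ToyProc`, `ToyThread`, `ToyWork`) are hypothetical, and the single handle table is a simplification: the real driver keeps a separate reference table per process.

```cpp
#include <cstdint>
#include <deque>
#include <map>

enum class ToyWork { Transaction, TransactionComplete };

struct ToyProc {
    std::deque<ToyWork> todo;  // process-wide work queue
};

struct ToyThread {
    ToyProc* proc;
    std::deque<ToyWork> todo;  // per-thread work queue
};

struct ToyDriver {
    ToyProc* contextManager = nullptr;     // owner of the context manager node
    std::map<uint32_t, ToyProc*> refs;     // handle -> target process (simplified)

    // Returns false when the target cannot be resolved.
    bool transact(ToyThread& sender, uint32_t handle) {
        ToyProc* target = nullptr;
        if (handle == 0) {
            target = contextManager;       // handle 0 always means Service Manager
        } else {
            auto it = refs.find(handle);
            if (it != refs.end()) target = it->second;
        }
        if (target == nullptr) return false;
        // Work for the receiver: the transaction itself.
        target->todo.push_back(ToyWork::Transaction);
        // Work for the sender: an acknowledgement that the request was queued.
        sender.todo.push_back(ToyWork::TransactionComplete);
        return true;
    }
};
```

Queuing the acknowledgement on the sender's own list is what lets the sender learn that the request was accepted before the reply arrives.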

static int

binder_thread_read(struct binder_proc *proc, struct binder_thread *thread,

void __user *buffer, int size, signed long *consumed, int non_block)

{

void __user *ptr = buffer + *consumed;

void __user *end = buffer + size;

int ret = 0;

int wait_for_proc_work;

if (*consumed == 0) {

if (put_user(BR_NOOP, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

}

retry:

/*wait_for_proc_work is set to 1 only when this thread is not waiting for another thread to finish a transaction

and has no pending work items of its own*/

wait_for_proc_work = thread->transaction_stack == NULL && list_empty(&thread->todo);

if (thread->return_error != BR_OK && ptr < end) {

if (thread->return_error2 != BR_OK) {

if (put_user(thread->return_error2, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

if (ptr == end)

goto done;

thread->return_error2 = BR_OK;

}

if (put_user(thread->return_error, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

thread->return_error = BR_OK;

goto done;

}

//The thread is now idle

thread->looper |= BINDER_LOOPER_STATE_WAITING;

if (wait_for_proc_work)

//The owning process has one more idle Binder thread

proc->ready_threads++;

mutex_unlock(&binder_lock);

if (wait_for_proc_work) {

//The current thread checks the todo list of its owning process for unprocessed work items

if (!(thread->looper & (BINDER_LOOPER_STATE_REGISTERED |

BINDER_LOOPER_STATE_ENTERED))) {

binder_user_error("binder: %d:%d ERROR: Thread waiting "

"for process work before calling BC_REGISTER_"

"LOOPER or BC_ENTER_LOOPER (state %x)\n",

proc->pid, thread->pid, thread->looper);

wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);

}

//Set the current thread's priority to the default priority of its owning process

binder_set_nice(proc->default_priority);

if (non_block) {

if (!binder_has_proc_work(proc, thread))

ret = -EAGAIN;

} else

ret = wait_event_interruptible_exclusive(proc->wait, binder_has_proc_work(proc, thread));

} else {

if (non_block) {

if (!binder_has_thread_work(thread))

ret = -EAGAIN;

} else

ret = wait_event_interruptible(thread->wait, binder_has_thread_work(thread));

}

mutex_lock(&binder_lock);

if (wait_for_proc_work)

//The owning process has one fewer idle Binder thread

proc->ready_threads--;

thread->looper &= ~BINDER_LOOPER_STATE_WAITING;

if (ret)

return ret;

while (1) {

//Process work items in a loop

uint32_t cmd;

struct binder_transaction_data tr;

struct binder_work *w;

struct binder_transaction *t = NULL;

if (!list_empty(&thread->todo))

//Fetch a work item

w = list_first_entry(&thread->todo, struct binder_work, entry);

else if (!list_empty(&proc->todo) && wait_for_proc_work)

w = list_first_entry(&proc->todo, struct binder_work, entry);

else {

if (ptr - buffer == 4 && !(thread->looper & BINDER_LOOPER_STATE_NEED_RETURN)) /* no data added */

goto retry;

break;

}

if (end - ptr < sizeof(tr) + 4)

break;

switch (w->type) {

case BINDER_WORK_TRANSACTION: {

t = container_of(w, struct binder_transaction, work);

} break;

case BINDER_WORK_TRANSACTION_COMPLETE: {

cmd = BR_TRANSACTION_COMPLETE;

//Write a BR_TRANSACTION_COMPLETE return protocol into the buffer supplied by user space

if (put_user(cmd, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

binder_stat_br(proc, thread, cmd);

if (binder_debug_mask & BINDER_DEBUG_TRANSACTION_COMPLETE)

printk(KERN_INFO "binder: %d:%d BR_TRANSACTION_COMPLETE\n",

proc->pid, thread->pid);

list_del(&w->entry);

kfree(w);

binder_stats.obj_deleted[BINDER_STAT_TRANSACTION_COMPLETE]++;

} break;

case BINDER_WORK_NODE: {

struct binder_node *node = container_of(w, struct binder_node, work);

uint32_t cmd = BR_NOOP;

const char *cmd_name;

int strong = node->internal_strong_refs || node->local_strong_refs;

int weak = !hlist_empty(&node->refs) || node->local_weak_refs || strong;

if (weak && !node->has_weak_ref) {

cmd = BR_INCREFS;

cmd_name = "BR_INCREFS";

node->has_weak_ref = 1;

node->pending_weak_ref = 1;

node->local_weak_refs++;

} else if (strong && !node->has_strong_ref) {

cmd = BR_ACQUIRE;

cmd_name = "BR_ACQUIRE";

node->has_strong_ref = 1;

node->pending_strong_ref = 1;

node->local_strong_refs++;

} else if (!strong && node->has_strong_ref) {

cmd = BR_RELEASE;

cmd_name = "BR_RELEASE";

node->has_strong_ref = 0;

} else if (!weak && node->has_weak_ref) {

cmd = BR_DECREFS;

cmd_name = "BR_DECREFS";

node->has_weak_ref = 0;

}

if (cmd != BR_NOOP) {

if (put_user(cmd, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

if (put_user(node->ptr, (void * __user *)ptr))

return -EFAULT;

ptr += sizeof(void *);

if (put_user(node->cookie, (void * __user *)ptr))

return -EFAULT;

ptr += sizeof(void *);

binder_stat_br(proc, thread, cmd);

if (binder_debug_mask & BINDER_DEBUG_USER_REFS)

printk(KERN_INFO "binder: %d:%d %s %d u%p c%p\n",

proc->pid, thread->pid, cmd_name, node->debug_id, node->ptr, node->cookie);

} else {

list_del_init(&w->entry);

if (!weak && !strong) {

if (binder_debug_mask & BINDER_DEBUG_INTERNAL_REFS)

printk(KERN_INFO "binder: %d:%d node %d u%p c%p deleted\n",

proc->pid, thread->pid, node->debug_id, node->ptr, node->cookie);

rb_erase(&node->rb_node, &proc->nodes);

kfree(node);

binder_stats.obj_deleted[BINDER_STAT_NODE]++;

} else {

if (binder_debug_mask & BINDER_DEBUG_INTERNAL_REFS)

printk(KERN_INFO "binder: %d:%d node %d u%p c%p state unchanged\n",

proc->pid, thread->pid, node->debug_id, node->ptr, node->cookie);

}

}

} break;

case BINDER_WORK_DEAD_BINDER:

case BINDER_WORK_DEAD_BINDER_AND_CLEAR:

case BINDER_WORK_CLEAR_DEATH_NOTIFICATION: {

struct binder_ref_death *death = container_of(w, struct binder_ref_death, work);

uint32_t cmd;

if (w->type == BINDER_WORK_CLEAR_DEATH_NOTIFICATION)

cmd = BR_CLEAR_DEATH_NOTIFICATION_DONE;

else

cmd = BR_DEAD_BINDER;

if (put_user(cmd, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

if (put_user(death->cookie, (void * __user *)ptr))

return -EFAULT;

ptr += sizeof(void *);

if (binder_debug_mask & BINDER_DEBUG_DEATH_NOTIFICATION)

printk(KERN_INFO "binder: %d:%d %s %p\n",

proc->pid, thread->pid,

cmd == BR_DEAD_BINDER ?

"BR_DEAD_BINDER" :

"BR_CLEAR_DEATH_NOTIFICATION_DONE",

death->cookie);

if (w->type == BINDER_WORK_CLEAR_DEATH_NOTIFICATION) {

list_del(&w->entry);

kfree(death);

binder_stats.obj_deleted[BINDER_STAT_DEATH]++;

} else

list_move(&w->entry, &proc->delivered_death);

if (cmd == BR_DEAD_BINDER)

goto done; /* DEAD_BINDER notifications can cause transactions */

} break;

}

if (!t)

continue;

BUG_ON(t->buffer == NULL);

if (t->buffer->target_node) {

struct binder_node *target_node = t->buffer->target_node;

tr.target.ptr = target_node->ptr;

tr.cookie = target_node->cookie;

t->saved_priority = task_nice(current);

if (t->priority < target_node->min_priority &&

!(t->flags & TF_ONE_WAY))

binder_set_nice(t->priority);

else if (!(t->flags & TF_ONE_WAY) ||

t->saved_priority > target_node->min_priority)

binder_set_nice(target_node->min_priority);

cmd = BR_TRANSACTION;

} else {

tr.target.ptr = NULL;

tr.cookie = NULL;

cmd = BR_REPLY;

}

tr.code = t->code;

tr.flags = t->flags;

tr.sender_euid = t->sender_euid;

if (t->from) {

struct task_struct *sender = t->from->proc->tsk;

tr.sender_pid = task_tgid_nr_ns(sender, current->nsproxy->pid_ns);

} else {

tr.sender_pid = 0;

}

tr.data_size = t->buffer->data_size;

tr.offsets_size = t->buffer->offsets_size;

tr.data.ptr.buffer = (void *)t->buffer->data + proc->user_buffer_offset;

tr.data.ptr.offsets = tr.data.ptr.buffer + ALIGN(t->buffer->data_size, sizeof(void *));

if (put_user(cmd, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

if (copy_to_user(ptr, &tr, sizeof(tr)))

return -EFAULT;

ptr += sizeof(tr);

binder_stat_br(proc, thread, cmd);

if (binder_debug_mask & BINDER_DEBUG_TRANSACTION)

printk(KERN_INFO "binder: %d:%d %s %d %d:%d, cmd %d"

"size %zd-%zd ptr %p-%p\n",

proc->pid, thread->pid,

(cmd == BR_TRANSACTION) ? "BR_TRANSACTION" : "BR_REPLY",

t->debug_id, t->from ? t->from->proc->pid : 0,

t->from ? t->from->pid : 0, cmd,

t->buffer->data_size, t->buffer->offsets_size,

tr.data.ptr.buffer, tr.data.ptr.offsets);

list_del(&t->work.entry);

t->buffer->allow_user_free = 1;

if (cmd == BR_TRANSACTION && !(t->flags & TF_ONE_WAY)) {

t->to_parent = thread->transaction_stack;

t->to_thread = thread;

thread->transaction_stack = t;

} else {

t->buffer->transaction = NULL;

kfree(t);

binder_stats.obj_deleted[BINDER_STAT_TRANSACTION]++;

}

break;

}

done:

*consumed = ptr - buffer;

if (proc->requested_threads + proc->ready_threads == 0 &&

proc->requested_threads_started < proc->max_threads &&

(thread->looper & (BINDER_LOOPER_STATE_REGISTERED |

BINDER_LOOPER_STATE_ENTERED)) /* the user-space code fails to */

/*spawn a new thread if we leave this out */) {

//Ask the owning process to spawn a new Binder thread to handle IPC requests

proc->requested_threads++;

if (binder_debug_mask & BINDER_DEBUG_THREADS)

printk(KERN_INFO "binder: %d:%d BR_SPAWN_LOOPER\n",

proc->pid, thread->pid);

if (put_user(BR_SPAWN_LOOPER, (uint32_t __user *)buffer))

return -EFAULT;

}

return 0;

}
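The order in which binder_thread_read picks work (the thread's own queue first, the process queue only when the thread is otherwise free) can be sketched as two small functions. `ToyQueues`, `toyWaitForProcWork` and `toyNextWork` are hypothetical names, and work items are reduced to plain integers.

```cpp
#include <deque>

// Hypothetical pair of queues: one owned by the thread, one shared by the process.
struct ToyQueues {
    std::deque<int> threadTodo;
    std::deque<int> procTodo;
};

// Mirrors the wait_for_proc_work condition: the thread may take process-wide
// work only if it has no transaction in flight and no work of its own.
inline bool toyWaitForProcWork(bool hasPendingTransaction, const ToyQueues& q) {
    return !hasPendingTransaction && q.threadTodo.empty();
}

// Picks the next work item, preferring the thread's own queue.
// Returns false when there is nothing this thread is allowed to take.
inline bool toyNextWork(ToyQueues& q, bool waitForProcWork, int* out) {
    std::deque<int>* src = nullptr;
    if (!q.threadTodo.empty()) {
        src = &q.threadTodo;
    } else if (!q.procTodo.empty() && waitForProcWork) {
        src = &q.procTodo;
    }
    if (src == nullptr) return false;
    *out = src->front();
    src->pop_front();
    return true;
}
```

A thread that is still waiting for a reply therefore never picks up unrelated process-wide work, which keeps its transaction stack consistent.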

status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)

{

int32_t cmd;

int32_t err;

while (1) {

//Call talkWithDriver in a loop to interact with the Binder driver: send it the command protocol and wait for the IPC result

if ((err=talkWithDriver()) < NO_ERROR) break;

err = mIn.errorCheck();

if (err < NO_ERROR) break;

if (mIn.dataAvail() == 0) continue;

cmd = mIn.readInt32();

IF_LOG_COMMANDS() {

alog << "Processing waitForResponse Command: "

<< getReturnString(cmd) << endl;

}

switch (cmd) {

case BR_TRANSACTION_COMPLETE:

if (!reply && !acquireResult) goto finish;

break;

case BR_DEAD_REPLY:

err = DEAD_OBJECT;

goto finish;

case BR_FAILED_REPLY:

err = FAILED_TRANSACTION;

goto finish;

case BR_ACQUIRE_RESULT:

{

ALOG_ASSERT(acquireResult != NULL, "Unexpected brACQUIRE_RESULT");

const int32_t result = mIn.readInt32();

if (!acquireResult) continue;

*acquireResult = result ? NO_ERROR : INVALID_OPERATION;

}

goto finish;

case BR_REPLY:

{

binder_transaction_data tr;

err = mIn.read(&tr, sizeof(tr));

ALOG_ASSERT(err == NO_ERROR, "Not enough command data for brREPLY");

if (err != NO_ERROR) goto finish;

if (reply) {

if ((tr.flags & TF_STATUS_CODE) == 0) {

// Store the result of the IPC request in reply

reply->ipcSetDataReference(

void Parcel::ipcSetDataReference(const uint8_t* data, size_t dataSize,

const binder_size_t* objects, size_t objectsCount, release_func relFunc, void* relCookie)

{

binder_size_t minOffset = 0;

freeDataNoInit();

mError = NO_ERROR;

mData = const_cast<uint8_t*>(data);

mDataSize = mDataCapacity = dataSize;

//ALOGI("setDataReference Setting data size of %p to %lu (pid=%d)", this, mDataSize, getpid());

mDataPos = 0;

ALOGV("setDataReference Setting data pos of %p to %zu", this, mDataPos);

mObjects = const_cast<binder_size_t*>(objects);

mObjectsSize = mObjectsCapacity = objectsCount;

mNextObjectHint = 0;

mOwner = relFunc;

mOwnerCookie = relCookie;

for (size_t i = 0; i < mObjectsSize; i++) {

binder_size_t offset = mObjects[i];

if (offset < minOffset) {

ALOGE("%s: bad object offset %"PRIu64" < %"PRIu64"\n",

__func__, (uint64_t)offset, (uint64_t)minOffset);

mObjectsSize = 0;

break;

}

minOffset = offset + sizeof(flat_binder_object);

}

scanForFds();

}
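
The loop in Parcel::ipcSetDataReference checks that the object offsets received from the driver are ordered and do not overlap: each offset must start at or after the end of the previous object, otherwise the object table is discarded. A minimal sketch of that check follows. The function name offsetsAreOrdered is hypothetical, and the objectSize parameter stands in for sizeof(flat_binder_object) so that the example compiles without Binder headers.

```cpp
#include <cstdint>
#include <vector>

// Returns true when every offset begins at or after the end of the
// previous object, which is the invariant the loop above enforces.
// objectSize is an assumption standing in for sizeof(flat_binder_object).
bool offsetsAreOrdered(const std::vector<uint64_t>& offsets, uint64_t objectSize) {
    uint64_t minOffset = 0;
    for (uint64_t offset : offsets) {
        if (offset < minOffset) {
            return false;  // overlaps the previous object or goes backwards
        }
        minOffset = offset + objectSize;
    }
    return true;
}
```

When the real code finds a bad offset it sets mObjectsSize to 0 and stops scanning; the sketch reports the same situation by returning false.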

int main(int argc __unused, char** argv)

{

// Obtain the ProcessState instance

sp<ProcessState> proc(ProcessState::self());

// Obtain the Service Manager proxy object

sp<IServiceManager> sm = defaultServiceManager();

ALOGI("ServiceManager: %p", sm.get());

AudioFlinger::instantiate();

// Initialize the multimedia system's MediaPlayerService

MediaPlayerService::instantiate();

// Start the Binder thread pool

ProcessState::self()->startThreadPool();

// Add the current thread to the thread pool so that it waits for and handles IPC requests

IPCThreadState::self()->joinThreadPool();

}

void ProcessState::startThreadPool()

{

AutoMutex _l(mLock);

if (!mThreadPoolStarted) {

mThreadPoolStarted = true;

spawnPooledThread(true);

}

}

void ProcessState::spawnPooledThread(bool isMain)

{

if (mThreadPoolStarted) {

String8 name = makeBinderThreadName();

ALOGV("Spawning new pooled thread, name=%s\n", name.string());

// Create a PoolThread object

sp<Thread> t = new PoolThread(isMain);

// Start the new thread

t->run(name.string());

}

}

class PoolThread : public Thread

{

public:

PoolThread(bool isMain)

: mIsMain(isMain)

{

}

protected:

virtual bool threadLoop()

{

IPCThreadState::self()->joinThreadPool(mIsMain);

return false;

}

const bool mIsMain;

};

void IPCThreadState::joinThreadPool(bool isMain)

{

LOG_THREADPOOL("**** THREAD %p (PID %d) IS JOINING THE THREAD POOL\n", (void*)pthread_self(), getpid());

mOut.writeInt32(isMain ? BC_ENTER_LOOPER : BC_REGISTER_LOOPER);

// This thread may have been spawned by a thread that was in the background

// scheduling group, so first we will make sure it is in the foreground

// one to avoid performing an initial transaction in the background.

set_sched_policy(mMyThreadId, SP_FOREGROUND);

status_t result;

do {

processPendingDerefs();

// now get the next command to be processed, waiting if necessary

result = getAndExecuteCommand();

if (result < NO_ERROR && result != TIMED_OUT && result != -ECONNREFUSED && result != -EBADF) {

ALOGE("getAndExecuteCommand(fd=%d) returned unexpected error %d, aborting",

mProcess->mDriverFD, result);

abort();

}

// Let this thread exit the thread pool if it is no longer

// needed and it is not the main process thread.

if(result == TIMED_OUT && !isMain) {

break;

}

} while (result != -ECONNREFUSED && result != -EBADF);

LOG_THREADPOOL("**** THREAD %p (PID %d) IS LEAVING THE THREAD POOL err=%p\n",

(void*)pthread_self(), getpid(), (void*)result);

mOut.writeInt32(BC_EXIT_LOOPER);

talkWithDriver(false);

}
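
The do/while loop in joinThreadPool reacts to the result of getAndExecuteCommand in three ways: it aborts on an unexpected error, it leaves the pool when a non-main thread times out or when the driver connection is gone, and otherwise it keeps looping. The sketch below isolates that decision so it can be read on its own. LoopAction, classifyResult, kNoError and kTimedOut are hypothetical names; kTimedOut is assumed to equal -ETIMEDOUT, as Android's TIMED_OUT does.

```cpp
#include <cerrno>

// Possible outcomes of one loop iteration (illustrative names).
enum class LoopAction { Continue, Leave, Abort };

constexpr int kNoError = 0;
constexpr int kTimedOut = -ETIMEDOUT;  // assumption: mirrors Android's TIMED_OUT

// Applies the checks in the same order as the loop body above.
LoopAction classifyResult(int result, bool isMain) {
    // Any error other than a timeout or a closed driver is treated as fatal.
    if (result < kNoError && result != kTimedOut &&
        result != -ECONNREFUSED && result != -EBADF) {
        return LoopAction::Abort;
    }
    // An idle non-main thread may exit; the main thread never does.
    if (result == kTimedOut && !isMain) {
        return LoopAction::Leave;
    }
    // The while condition ends the loop once the driver connection is gone.
    if (result == -ECONNREFUSED || result == -EBADF) {
        return LoopAction::Leave;
    }
    return LoopAction::Continue;
}
```

For example, classifyResult(kTimedOut, true) yields LoopAction::Continue, which matches the rule that the main thread stays in the pool even after a timeout.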

status_t IPCThreadState::getAndExecuteCommand()

{

status_t result;

int32_t cmd;

result = talkWithDriver();

if (result >= NO_ERROR) {

size_t IN = mIn.dataAvail();

if (IN < sizeof(int32_t)) return result;

cmd = mIn.readInt32();

IF_LOG_COMMANDS() {

alog << "Processing top-level Command: "

<< getReturnString(cmd) << endl;

}

result = executeCommand(cmd);

// After executing the command, ensure that the thread is returned to the

// foreground cgroup before rejoining the pool. The driver takes care of

// restoring the priority, but doesn't do anything with cgroups so we

// need to take care of that here in userspace. Note that we do make

// sure to go in the foreground after executing a transaction, but

// there are other callbacks into user code that could have changed

// our group so we want to make absolutely sure it is put back.

set_sched_policy(mMyThreadId, SP_FOREGROUND);

}

return result;

}
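
getAndExecuteCommand follows a simple pattern: after talking to the driver, it reads one command code from the input buffer if one is available and hands it to executeCommand. A minimal sketch of that read-then-dispatch step is shown below, assuming a std::deque as a stand-in for the mIn parcel and a callback as a stand-in for executeCommand. The function name getAndExecute is hypothetical, and the driver interaction and scheduling-policy reset are left out.

```cpp
#include <cstdint>
#include <deque>
#include <functional>

// Reads at most one command from the queue and dispatches it.
// Returns 0 when no command is available, otherwise the handler's result.
// The deque and the handler are stand-ins for mIn and executeCommand.
int getAndExecute(std::deque<int32_t>& in,
                  const std::function<int(int32_t)>& execute) {
    if (in.empty()) {
        return 0;  // nothing to process yet
    }
    const int32_t cmd = in.front();
    in.pop_front();
    return execute(cmd);
}
```

Each call consumes exactly one command, so the surrounding loop in joinThreadPool decides after every command whether the thread should stay in the pool.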
