Original title: Mathematical Derivation of Linear Regression and Its Python Implementation

This post covers the mathematical derivation of linear regression and its implementation in Python.

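Suppose we have a set of data points through which we can draw a trend line.

The line should minimize the sum of the squared vertical distances (the residuals) from each point to the line. How do we find this line?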

In essence, this means solving for the weight vector W.
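The sketch below makes the objective concrete. It computes the sum of squared vertical residuals between each y and a candidate line's prediction. The three points, the two candidate lines, and the helper name `sum_squared_residuals` are hypothetical illustrative choices and do not come from this post:

```python
import numpy as np

def sum_squared_residuals(w0, w1, x, y):
    """Sum of squared vertical distances from the points to the line y = w0 + w1 * x."""
    residuals = y - (w0 + w1 * x)
    return float(np.sum(residuals ** 2))

# Hypothetical toy data lying exactly on y = 1 + 2x.
x = np.array([0.0, 1.0, 2.0])
y = np.array([1.0, 3.0, 5.0])

print(sum_squared_residuals(1.0, 2.0, x, y))  # the true line: 0.0
print(sum_squared_residuals(0.0, 2.0, x, y))  # shifted down by 1: 3.0
```

Finding W means finding the parameters that make this number as small as possible.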

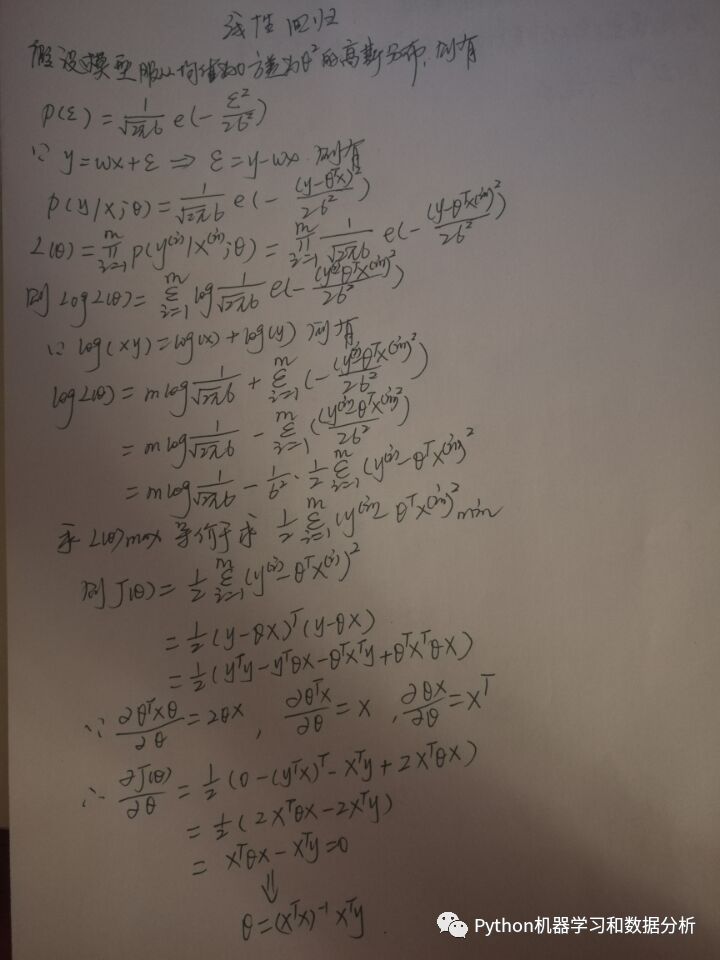

a. The detailed derivation is shown in the figure below:
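Below is a standard form of the least-squares derivation. It assumes that X is the m × (n+1) design matrix whose first column is all ones, so the intercept is included in W, and that y is the vector of m targets. Expand the squared error, set its gradient with respect to W to zero, and solve:

```latex
J(W) = \sum_{i=1}^{m} \left( y_i - \mathbf{x}_i^{\top} W \right)^2 = (y - XW)^{\top} (y - XW)
     = y^{\top} y - 2\, W^{\top} X^{\top} y + W^{\top} X^{\top} X W

\nabla_W J = -2\, X^{\top} y + 2\, X^{\top} X W = 0
\;\Longrightarrow\; X^{\top} X W = X^{\top} y
\;\Longrightarrow\; W = \left( X^{\top} X \right)^{-1} X^{\top} y
```

The last step holds only when X^T X is invertible.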

How do we express these mathematical formulas in code?

With NumPy, of course, the go-to tool for the job.
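Each symbol in W = (X^T X)^(-1) X^T y maps onto a NumPy operation: `.T` gives the transpose, `@` gives matrix multiplication, and `np.linalg.inv` gives the inverse. The minimal sketch below uses made-up data. Handling the intercept with a column of ones is an assumption of this example:

```python
import numpy as np

# Hypothetical toy data on y = 1 + 2x; the column of ones carries the intercept.
x = np.array([0.0, 1.0, 2.0])
y = np.array([1.0, 3.0, 5.0])
X = np.column_stack([np.ones_like(x), x])   # design matrix, shape (3, 2)

# W = (X^T X)^{-1} X^T y, written almost symbol for symbol.
W = np.linalg.inv(X.T @ X) @ X.T @ y
print(W)  # close to [1, 2]: intercept 1, slope 2
```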

b. Python implementation

~Matrix (closed-form) solution
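The matrix-solution code is not included in this text. As a stand-in, here is a minimal sketch that fits the same two-feature model as the gradient-descent code later in this post (h = theta0 + theta1·x1 + theta2·x2). The function name `fit_normal_equation` and the data are hypothetical. The sketch solves X^T X W = X^T y with `np.linalg.solve` instead of forming the inverse explicitly. This is numerically safer, but X^T X must still be nonsingular:

```python
import numpy as np

def fit_normal_equation(x, y):
    """Closed-form least squares for h = theta0 + theta1*x1 + theta2*x2.

    x: (m, 2) feature matrix, y: (m,) targets. Returns (theta0, theta1, theta2).
    """
    X = np.column_stack([np.ones(x.shape[0]), x])  # prepend the intercept column
    # Solve (X^T X) W = X^T y instead of computing the inverse explicitly.
    W = np.linalg.solve(X.T @ X, X.T @ y)
    return W[0], W[1], W[2]

# Hypothetical noise-free data generated from y = 3 + 2*x1 - x2.
x = np.array([[1.0, 2.0], [2.0, 0.0], [3.0, 1.0], [4.0, 3.0]])
y = 3 + 2 * x[:, 0] - x[:, 1]

theta0, theta1, theta2 = fit_normal_equation(x, y)
print(theta0, theta1, theta2)  # close to 3, 2, -1
```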

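Solving for W with the formula above takes only about 20 lines of code. In practice, however, not every matrix can be inverted. For example, X^T X is singular when some features are linearly dependent. For this reason, most machine learning algorithms instead use an iterative method, gradient descent, to find the optimal W.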
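The minimal sketch below illustrates the invertibility problem with made-up data. When one feature duplicates another, X^T X is singular and has no inverse. NumPy's `np.linalg.lstsq` still returns a least-squares solution in that case, namely the minimum-norm one. It is shown here as an alternative remedy alongside the gradient-descent approach the post adopts:

```python
import numpy as np

# Hypothetical data where the third column is an exact copy of the second.
x1 = np.array([1.0, 2.0, 3.0])
X = np.column_stack([np.ones(3), x1, x1])   # columns: intercept, x1, duplicate of x1
y = 1 + 2 * x1

XtX = X.T @ X
print(np.linalg.matrix_rank(XtX))  # rank 2 < 3 columns, so XtX has no inverse

# lstsq minimizes ||XW - y||^2 without inverting XtX; when the rank is deficient
# it returns the minimum-norm solution among all minimizers.
W, residuals, rank, singular_values = np.linalg.lstsq(X, y, rcond=None)
print(W)                      # close to [1, 1, 1]: the slope of 2 is split across both copies
print(np.allclose(X @ W, y))  # the fitted values still match y
```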

c. Solving the linear model with gradient descent; the code is as follows:
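The gradient-descent code in this section implements batch gradient descent on the cost J(θ0, θ1, θ2). The formulas below write out the cost, its partial derivatives (what `part_theta0`, `part_theta1`, and `part_theta2` compute), and the update rule with learning rate α (the `aph` argument):

```latex
h_\theta(x^{(i)}) = \theta_0 + \theta_1 x_1^{(i)} + \theta_2 x_2^{(i)}

J(\theta_0, \theta_1, \theta_2) = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2

\frac{\partial J}{\partial \theta_j} = \frac{1}{m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right) x_j^{(i)}, \qquad x_0^{(i)} = 1

\theta_j \leftarrow \theta_j - \alpha \, \frac{\partial J}{\partial \theta_j}, \qquad j = 0, 1, 2
```

All three partial derivatives are evaluated at the current parameters before any θj is changed, which makes this a simultaneous update.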

~Gradient descent solution

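```python
import numpy as np

# data_x: (m, 2) feature matrix, data_y: (m,) targets.
# Hypothetical stand-in data (the dataset used in this post is not shown),
# generated from y = 3 + 2*x1 - x2 plus a little noise.
rng = np.random.default_rng(0)
data_x = rng.uniform(0, 1, size=(100, 2))
data_y = 3 + 2 * data_x[:, 0] - data_x[:, 1] + rng.normal(0, 0.1, size=100)


def cost(theta0, theta1, theta2, x, y):
    """Cost J = (1 / 2m) * sum of squared errors over all m samples."""
    m = len(x)
    h = theta0 + theta1 * x[:, 0] + theta2 * x[:, 1]  # predictions for every sample
    return ((h - y) ** 2).sum() / (2 * m)


def part_theta0(theta0, theta1, theta2, x, y):
    """Partial derivative of J with respect to theta0."""
    h = theta0 + theta1 * x[:, 0] + theta2 * x[:, 1]
    diff = h - y
    return diff.sum() / x.shape[0]


def part_theta1(theta0, theta1, theta2, x, y):
    """Partial derivative of J with respect to theta1."""
    h = theta0 + theta1 * x[:, 0] + theta2 * x[:, 1]
    diff = (h - y) * x[:, 0]
    return diff.sum() / x.shape[0]


def part_theta2(theta0, theta1, theta2, x, y):
    """Partial derivative of J with respect to theta2."""
    h = theta0 + theta1 * x[:, 0] + theta2 * x[:, 1]
    diff = (h - y) * x[:, 1]
    return diff.sum() / x.shape[0]


def gradient(x, y, aph=0.01, theta0=0, theta1=0, theta2=0):
    """Batch gradient descent; aph is the learning rate."""
    maxer = 50000        # maximum number of iterations
    counter = 0
    c = cost(theta0, theta1, theta2, x, y)
    costs = [c]
    c1 = c + 10          # guarantees the loop runs at least once
    theta0s = [theta0]
    theta1s = [theta1]
    theta2s = [theta2]
    wucha = 0.0000001    # tolerance on the change in cost between iterations
    # Stop when the cost change falls below wucha or after maxer iterations.
    while np.abs(c - c1) > wucha and counter < maxer:
        c1 = c
        # Compute every update from the current parameters before applying any.
        update_theta0 = aph * part_theta0(theta0, theta1, theta2, x, y)
        update_theta1 = aph * part_theta1(theta0, theta1, theta2, x, y)
        update_theta2 = aph * part_theta2(theta0, theta1, theta2, x, y)
        theta0 -= update_theta0
        theta1 -= update_theta1
        theta2 -= update_theta2
        theta0s.append(theta0)
        theta1s.append(theta1)
        theta2s.append(theta2)
        c = cost(theta0, theta1, theta2, x, y)
        costs.append(c)
        counter += 1
    return theta0, theta1, theta2, counter


def predict(X):
    """Train on data_x / data_y, then predict a value for each row of X."""
    theta0, theta1, theta2, counter = gradient(data_x, data_y)
    print(counter)  # number of iterations used
    y_pred = theta0 + theta1 * X[:, 0] + theta2 * X[:, 1]
    return y_pred


print(predict(np.array([[20, 5]])))
```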
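The three `part_theta*` functions are the components of one matrix expression, ∇J = X^T(XW − y)/m, where X carries a leading column of ones. Here is a more compact sketch of the same batch gradient descent that updates all parameters at once and compares the result with the closed-form least-squares solution. The function name `gradient_descent_vectorized`, the synthetic data, and the settings are illustrative assumptions. The larger learning rate (0.5) is chosen only because these features lie in [0, 1]:

```python
import numpy as np

def gradient_descent_vectorized(x, y, alpha=0.01, tol=1e-7, max_iter=50000):
    """Batch gradient descent for h = X @ W, with X = [1, x1, x2]."""
    X = np.column_stack([np.ones(x.shape[0]), x])
    m = X.shape[0]
    W = np.zeros(X.shape[1])
    prev_cost = np.inf
    for _ in range(max_iter):
        residual = X @ W - y
        cost = residual @ residual / (2 * m)   # J = (1/2m) * sum(residual^2)
        if abs(prev_cost - cost) <= tol:       # stop once the cost stops changing
            break
        prev_cost = cost
        W -= alpha * (X.T @ residual) / m      # simultaneous update of all theta_j
    return W

# Hypothetical noise-free data from y = 3 + 2*x1 - x2.
rng = np.random.default_rng(0)
x = rng.uniform(0, 1, size=(200, 2))
y = 3 + 2 * x[:, 0] - x[:, 1]

W_gd = gradient_descent_vectorized(x, y, alpha=0.5)
X = np.column_stack([np.ones(len(x)), x])
W_ls, *_ = np.linalg.lstsq(X, y, rcond=None)
print(W_gd, W_ls)  # the two estimates should be close
```

Because the stopping rule looks only at the change in cost, the gradient-descent result approximates the exact solution rather than matching it to machine precision.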

Feel free to join our group to discuss and learn together~

www.vbafans.com
