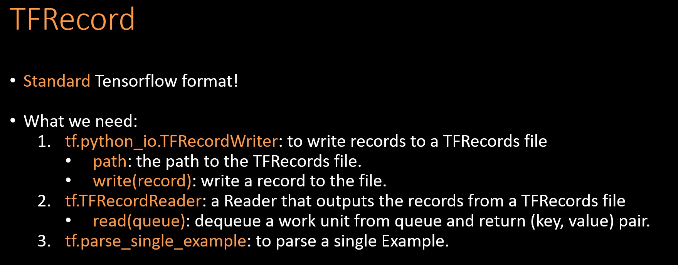

How to transform our data into Tensorflow TFRecord?

- Transform into TFRecord

- Read and decode

接下来,将not_mnist数据集作为自己的数据集,生成TFRecord文件。

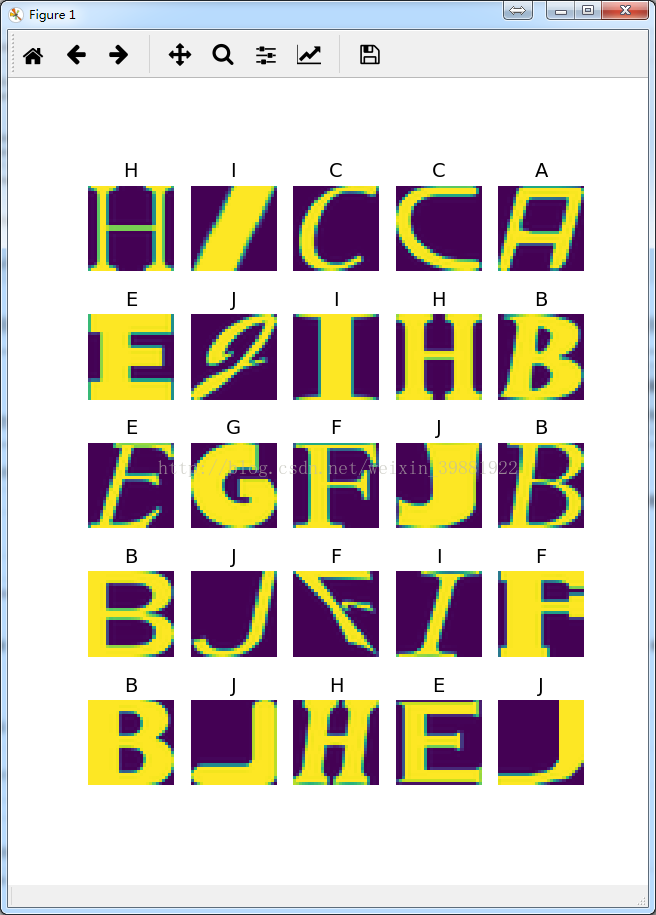

import tensorflow as tf import numpy as np import os import matplotlib.pyplot as plt import skimage.io as io os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2' # %% def get_file(file_dir): '''Get full image directory and corresponding labels Args: file_dir: file directory Returns: images: image directories, list, string labels: label, list, int ''' images = [] #存放每张图片的路径[‘./notMNIST_small/A/MDEtMDEtMDAudHRm.png’,...,'./notMNIST_small/G/R2FyYW1vbmRQcmVtclByby1NZWRJdERpc3Aub3Rm.png] temp = [] #存放数据集下每一个子文件的路径['./notMNIST_small/A','./notMNIST_small/B',...,'/notMNIST_small/J'] for root, sub_folders, files in os.walk(file_dir): # image directories for name in files: images.append(os.path.join(root, name)) # get 10 sub-folder names for name in sub_folders: temp.append(os.path.join(root, name)) # assign 10 labels based on the folder names labels = [] #循环数据集下每一个子文件夹 for one_folder in temp: #获得子文件夹中图片的个数,os.listdir() 方法用于返回指定的文件夹包含的文件或文件夹的名字的列表 n_img = len(os.listdir(one_folder)) #用‘/'来划分子文件夹路径,如./notMNIST_small/A,取最后一个元素,其实就是获得ABCDEFGHIJ letter = one_folder.split('/')[-1] #按照子文件夹名字的不同,来划分类,贴上标签,共10类 if letter == 'A': labels = np.append(labels, n_img * [1]) elif letter == 'B': labels = np.append(labels, n_img * [2]) elif letter == 'C': labels = np.append(labels, n_img * [3]) elif letter == 'D': labels = np.append(labels, n_img * [4]) elif letter == 'E': labels = np.append(labels, n_img * [5]) elif letter == 'F': labels = np.append(labels, n_img * [6]) elif letter == 'G': labels = np.append(labels, n_img * [7]) elif letter == 'H': labels = np.append(labels, n_img * [8]) elif letter == 'I': labels = np.append(labels, n_img * [9]) else: labels = np.append(labels, n_img * [10]) # shuffle temp = np.array([images, labels])#[['/notMNIST_small/A/MDEtMDEtMDAudHRm.png',...,'/notMNIST_small/J/SWNvbmUgTFQgUmVndWxhciBJdGFsaWMgT3NGLnR0Zg==.png'],[1,1,1...10,10,10]] temp = temp.transpose()#[['/notMNIST_small/A/MDEtMDEtMDAudHRm.png',1],...,['/notMNIST_small/J/SWNvbmUgTFQgUmVndWxhciBJdGFsaWMgT3NGLnR0Zg==.png',10] np.random.shuffle(temp)#打乱顺序 image_list = list(temp[:, 0])#读取temp中第0列,即images label_list = list(temp[:, 1])#读取temp中第1列,即labels label_list = [int(float(i)) for i in label_list] return image_list, label_list # %% #输入的图片跟标签都是特征,因为其类型不同,故将labels转换成int64,将图片转换成bytes #生成整数型的属性 def int64_feature(value): """Wrapper for inserting int64 features into Example proto.""" if not isinstance(value, list): value = [value] return tf.train.Feature(int64_list=tf.train.Int64List(value=value)) #生成字符串型整数 def bytes_feature(value): return tf.train.Feature(bytes_list=tf.train.BytesList(value=[value])) # %% def convert_to_tfrecord(images, labels, save_dir, name): '''convert all images and labels to one tfrecord file. Args: images: list of image directories, string type labels: list of labels, int type save_dir: the directory to save tfrecord file, e.g.: '/home/folder1/' name: the name of tfrecord file, string type, e.g.: 'train' Return: no return Note: converting needs some time, be patient... ''' #生成tfrecords文件保存路径 filename = os.path.join(save_dir, name + '.tfrecords') n_samples = len(labels) if np.shape(images)[0] != n_samples: raise ValueError('Images size %d does not match label size %d.' % (images.shape[0], n_samples)) # wait some time here, transforming need some time based on the size of your data. #向这个文件中写入 writer = tf.python_io.TFRecordWriter(filename) print('\nTransform start......') #循环所有图片 for i in np.arange(0, n_samples): try: #读图,需转换成array image = io.imread(images[i]) # type(image) must be array! #将图像矩阵转化成一个字符串 image_raw = image.tostring() label = int(labels[i]) #将每个图片和其对应的label写入每一个example example = tf.train.Example(features=tf.train.Features(feature={ 'label': int64_feature(label), 'image_raw': bytes_feature(image_raw)})) writer.write(example.SerializeToString()) #如果图片损坏,将错误信息打印出来 except IOError as e: print('Could not read:', images[i]) print('error: %s' % e) print('Skip it!\n') writer.close() print('Transform done!') # %% #读取tfrecord文件,并生成批次 def read_and_decode(tfrecords_file, batch_size): '''read and decode tfrecord file, generate (image, label) batches Args: tfrecords_file: the directory of tfrecord file batch_size: number of images in each batch Returns: image: 4D tensor - [batch_size, width, height, channel] label: 1D tensor - [batch_size] ''' # make an input queue from the tfrecord file #将文件生成一个队列 filename_queue = tf.train.string_input_producer([tfrecords_file]) #创建一个reader来读取TFRecord文件 reader = tf.TFRecordReader() #从文件中独处一个样例。也可以使用read_up_to函数一次性读取多个样例 _, serialized_example = reader.read(filename_queue) #解析每一个元素。如果需要解析多个样例,可以用parse_example函数 img_features = tf.parse_single_example( serialized_example, features={ 'label': tf.FixedLenFeature([], tf.int64), 'image_raw': tf.FixedLenFeature([], tf.string), }) #tf.decode_raw可以将字符串解析成图像对应的像素数组 image = tf.decode_raw(img_features['image_raw'], tf.uint8) ########################################################## # you can put data augmentation here, I didn't use it ########################################################## # all the images of notMNIST are 28*28, you need to change the image size if you use other dataset. image = tf.reshape(image, [28, 28]) label = tf.cast(img_features['label'], tf.int32) image_batch, label_batch = tf.train.batch([image, label], batch_size=batch_size, num_threads=64, capacity=2000) return image_batch, tf.reshape(label_batch, [batch_size]) # %% Convert data to TFRecord test_dir = 'F://TensorFlow--middleteach//TFRecord_notmnist//notMNIST_small//' save_dir = 'F://TensorFlow--middleteach//TFRecord_notmnist//' BATCH_SIZE = 25 # Convert test data: you just need to run it ONCE ! name_test = 'test' images, labels = get_file(test_dir) tfrecords_file_dir='F://TensorFlow--middleteach//TFRecord_notmnist//test.tfrecords' if not os.path.exists(tfrecords_file_dir): convert_to_tfrecord(images, labels, save_dir, name_test) # %% TO test train.tfrecord file #一个batchsize读取25张图,展示成5行5列 def plot_images(images, labels): '''plot one batch size ''' for i in np.arange(0, BATCH_SIZE): plt.subplot(5, 5, i + 1) #关闭坐标轴显示 plt.axis('off') '''ord()函数主要用来返回对应字符的ascii码,chr()主要用来表示ascii码对应的字符他的输入时数字 print ord('a) #97 print chr(97) #a ''' plt.title(chr(ord('A') + labels[i] - 1), fontsize=14) # plt.subplots_adjust(top=0.5) plt.imshow(images[i]) plt.show() tfrecords_file = 'F://TensorFlow--middleteach//TFRecord_notmnist//test.tfrecords' image_batch, label_batch = read_and_decode(tfrecords_file, batch_size=BATCH_SIZE) with tf.Session() as sess: i = 0 #启用多线程处理数据 coord = tf.train.Coordinator() threads = tf.train.start_queue_runners(coord=coord) try: while not coord.should_stop() and i < 1: # just plot one batch size image, label = sess.run([image_batch, label_batch]) plot_images(image, label) i += 1 except tf.errors.OutOfRangeError: print('done!') finally: coord.request_stop() coord.join(threads)

677

677

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?