First, let's look at what the following script does.

#!/bin/bash

# Initialize the variables as empty

NN1_HOSTNAME=""

NN2_HOSTNAME=""

NN1_SERVICEID=""

NN2_SERVICEID=""

NN1_SERVICESTATE=""

NN2_SERVICESTATE=""

# Email address that receives the alert

EMAIL=254059606@qq.com

# Directory that contains the hadoop commands

CDH_BIN_HOME=/home/hadoop/app/hadoop/bin

# Look up the cluster's nameservice and its NameNode service IDs

ha_name=$(${CDH_BIN_HOME}/hdfs getconf -confKey dfs.nameservices)

namenode_serviceids=$(${CDH_BIN_HOME}/hdfs getconf -confKey dfs.ha.namenodes.${ha_name})

# Loop over the service IDs and assign each node to the matching variables

for node in $(echo ${namenode_serviceids//,/ }); do

state=$(${CDH_BIN_HOME}/hdfs haadmin -getServiceState $node)

# If the node is active, store its service ID in NN1_SERVICEID, its state in NN1_SERVICESTATE, and its hostname in NN1_HOSTNAME

if [ "$state" == "active" ]; then

NN1_SERVICEID="${node}"

NN1_SERVICESTATE="${state}"

NN1_HOSTNAME=`echo $(${CDH_BIN_HOME}/hdfs getconf -confKey dfs.namenode.rpc-address.${ha_name}.${node}) | awk -F ':' '{print $1}'`

#echo "${NN1_HOSTNAME} : ${NN1_SERVICEID} : ${NN1_SERVICESTATE}"

elif [ "$state" == "standby" ]; then

NN2_SERVICEID="${node}"

NN2_SERVICESTATE="${state}"

NN2_HOSTNAME=`echo $(${CDH_BIN_HOME}/hdfs getconf -confKey dfs.namenode.rpc-address.${ha_name}.${node}) | awk -F ':' '{print $1}'`

#echo "${NN2_HOSTNAME} : ${NN2_SERVICEID} : ${NN2_SERVICESTATE}"

else

echo "hdfs haadmin -getServiceState $node: unknown"

fi

done

echo " "

echo "Hostname Namenode_Serviceid Namenode_State"

echo "${NN1_HOSTNAME} ${NN1_SERVICEID} ${NN1_SERVICESTATE}"

echo "${NN2_HOSTNAME} ${NN2_SERVICEID} ${NN2_SERVICESTATE}"

# Save the current state of both NameNodes to HDFS_HA.log

echo "${NN1_HOSTNAME} ${NN1_SERVICEID} ${NN1_SERVICESTATE}" > HDFS_HA.log

echo "${NN2_HOSTNAME} ${NN2_SERVICEID} ${NN2_SERVICESTATE}" >> HDFS_HA.log

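# If HDFS_HA_LAST.log does not exist, the if block is skipped and only the last step runs.

# If the file exists, the hostname of the current active node is compared with the active hostname recorded in HDFS_HA_LAST.log.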

if [ -f HDFS_HA_LAST.log ];then

HISTORYHOSTNAME=`cat HDFS_HA_LAST.log| awk '{print $1}' | head -n 1`

# If the hostnames differ, send an email to our mailbox

if [ "$HISTORYHOSTNAME" != "${NN1_HOSTNAME}" ];then

echo "send a mail"

echo -e "$(date '+%Y-%m-%d %H:%M:%S') : Please check the NameNode log." | mail \
-r "From: alertAdmin <254059606@qq.com>" \
-s "Warn: CDH HDFS HA Failover!" "${EMAIL}"

fi

fi

cat HDFS_HA.log > HDFS_HA_LAST.log
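
The script extracts each hostname by piping the rpc-address value through awk and splits the service IDs with `${namenode_serviceids//,/ }`. As a minimal sketch, the same two parsing steps can be written with bash built-ins only. The function names `host_from_rpc_address` and `split_serviceids` are hypothetical and do not appear in the original script, and the sample values are taken from the output shown in this post.

```shell
#!/bin/bash

# Hypothetical helper: strip the port from an rpc-address such as
# "ruozedata001:8020" and print only the hostname.
host_from_rpc_address() {
    local addr="$1"
    # Remove everything from the first ':' to the end of the string.
    printf '%s\n' "${addr%%:*}"
}

# Hypothetical helper: turn a comma-separated list such as "nn1,nn2"
# into one service ID per line.
split_serviceids() {
    local IFS=','
    local -a ids
    # Split the argument on commas into the ids array.
    read -r -a ids <<< "$1"
    printf '%s\n' "${ids[@]}"
}
```

For example, `host_from_rpc_address "ruozedata001:8020"` prints `ruozedata001`, and `split_serviceids "nn1,nn2"` prints `nn1` and `nn2` on separate lines. Avoiding the extra `echo` and `awk` processes is a small design choice; the original approach works as well.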

Run the get_hdfs_ha_state.sh script. It prints the state of each NameNode, which is also saved to the log files:

[hadoop@ruozedata001 shell]$ ./get_hdfs_ha_state.sh

Hostname Namenode_Serviceid Namenode_State

ruozedata001 nn1 active

ruozedata002 nn2 standby

Next, we simulate a failover by switching the nodes manually:

[hadoop@ruozedata001 shell]$ hdfs haadmin -failover nn1 nn2

Failover to NameNode at ruozedata002/172.16.69.174:8020 successful

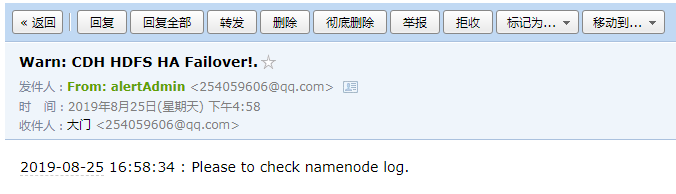
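
To confirm the switch from the command line, the state of each NameNode can be queried with `hdfs haadmin -getServiceState`, the same command the script uses. The sketch below wraps it in a small function; the name `print_ha_states` is hypothetical, and it assumes `hdfs` is on the PATH and that the service IDs are `nn1` and `nn2` as in this cluster.

```shell
#!/bin/bash

# Hypothetical helper: print "<serviceid>: <state>" for every NameNode
# service ID passed as an argument. Assumes the hdfs command is on PATH.
print_ha_states() {
    local id
    for id in "$@"; do
        echo "${id}: $(hdfs haadmin -getServiceState "$id")"
    done
}
```

Calling `print_ha_states nn1 nn2` after the failover should report nn2 as active and nn1 as standby on this cluster.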

Run the script again. This time it detects the change and sends an email to our mailbox:

[hadoop@ruozedata001 shell]$ ./get_hdfs_ha_state.sh

Hostname Namenode_Serviceid Namenode_State

ruozedata002 nn2 active

ruozedata001 nn1 standby

send a mail

[hadoop@ruozedata001 shell]$ cat HDFS_HA.log

ruozedata002 nn2 active

ruozedata001 nn1 standby

[hadoop@ruozedata001 shell]$ cat HDFS_HA_LAST.log

ruozedata002 nn2 active

ruozedata001 nn1 standby
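
The check against HDFS_HA_LAST.log is the core of the alert, so it can be useful to isolate it. The following is a minimal sketch under the assumption that the previous-state file has the same layout as above, with the active node on the first line and the hostname in the first field. The function name `active_changed` is hypothetical and not part of the original script.

```shell
#!/bin/bash

# Hypothetical helper: succeed (exit status 0) when the active hostname
# recorded in the previous-state file differs from the current one.
#   $1 = path to the previous-state file (e.g. HDFS_HA_LAST.log)
#   $2 = hostname of the currently active NameNode
active_changed() {
    local last_file="$1" current="$2" previous
    # No previous state yet: nothing to compare, so report "unchanged".
    [ -f "$last_file" ] || return 1
    # The first field of the first line is the previously active hostname.
    previous=$(awk 'NR==1 {print $1}' "$last_file")
    [ "$previous" != "$current" ]
}
```

It could be used as `active_changed HDFS_HA_LAST.log "${NN1_HOSTNAME}" && echo "send a mail"`. Note that, like the original comparison, it also reports a change when no active node was found and the current hostname is empty.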

References:

https://blog.csdn.net/qq_40337206/article/details/100051934

https://blog.csdn.net/weixin_43975538/article/details/100051828
