Downloading the data

import tensorflow as tf

from tensorflow import keras

(X_train_full, y_train_full), (X_test, y_test) = keras.datasets.mnist.load_data()

Preprocessing the data

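# Hold out the first 5,000 images for validation and scale pixel values to [0, 1]
X_valid, X_train = X_train_full[:5000] / 255., X_train_full[5000:] / 255.
y_valid, y_train = y_train_full[:5000], y_train_full[5000:]
X_test = X_test / 255.  # the test set must be scaled the same way before evaluation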
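To make the split concrete, here is a minimal sketch on a scaled-down synthetic array (600 random images instead of 60,000, with the first 50 held out instead of the first 5,000). The random data and the `_demo` names are stand-ins, not the real dataset. It shows that slicing keeps the image shape and that dividing `uint8` pixels by 255 gives floats in [0, 1].

```python
import numpy as np

# Stand-in for the MNIST training images: 28x28 grayscale, uint8 pixels in 0..255.
rng = np.random.default_rng(42)
X_full_demo = rng.integers(0, 256, size=(600, 28, 28), dtype=np.uint8)

# Same slicing and scaling pattern as in the post, on the smaller array.
n_valid_demo = 50
X_valid_demo = X_full_demo[:n_valid_demo] / 255.
X_train_demo = X_full_demo[n_valid_demo:] / 255.

print(X_valid_demo.shape, X_train_demo.shape)
print(X_train_demo.dtype, X_train_demo.min(), X_train_demo.max())
```

Any data fed to the model later needs the same scaling, otherwise the network sees inputs 255 times larger than those it was trained on.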

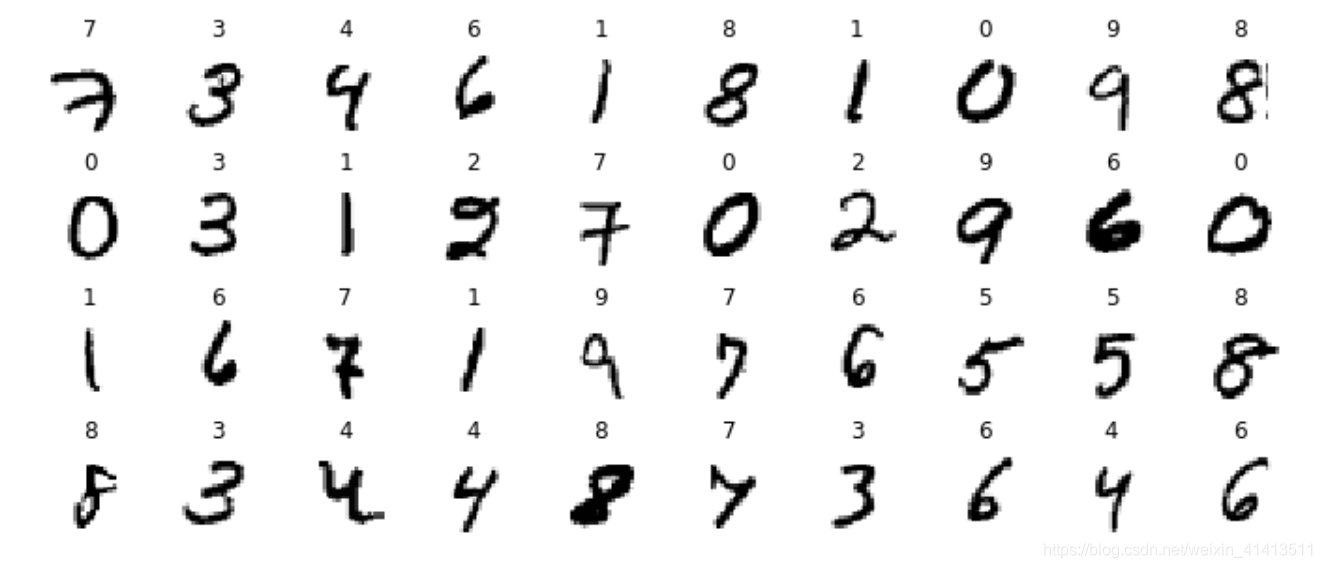

A quick look at the data

import matplotlib.pyplot as plt

n_rows = 4
n_cols = 10
plt.figure(figsize=(n_cols * 1.2, n_rows * 1.2))
for row in range(n_rows):
    for col in range(n_cols):
        index = n_cols * row + col
        plt.subplot(n_rows, n_cols, index + 1)  # 4 x 10 grid; this is subplot number index + 1
        plt.imshow(X_train[index], cmap="binary", interpolation="nearest")
        plt.axis('off')
        plt.title(y_train[index], fontsize=12)
plt.subplots_adjust(wspace=0.2, hspace=0.5)  # adjust the spacing between subplots
plt.show()

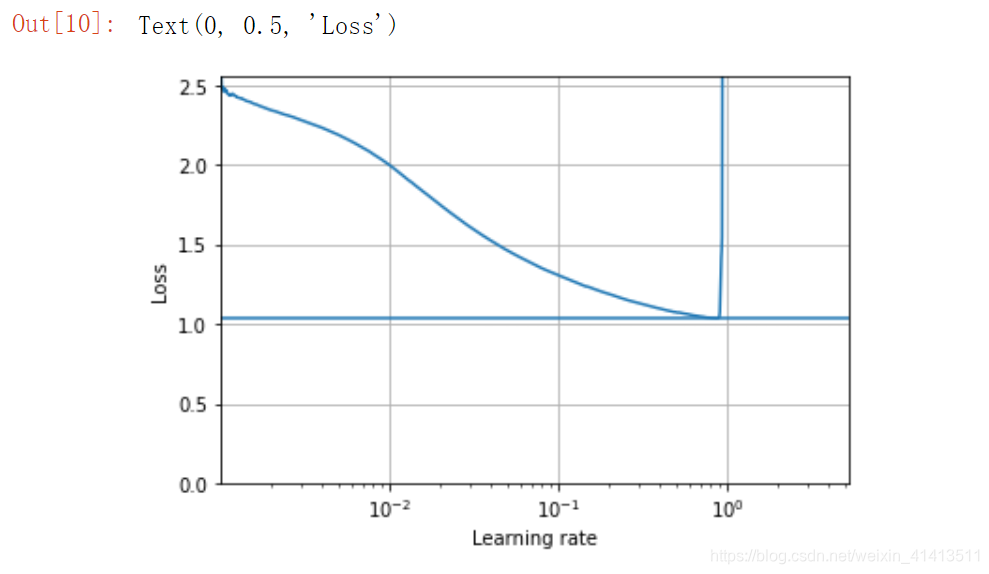

Finding a suitable learning rate

# Callback that records the loss and multiplies the learning rate by `factor` after every batch
K = keras.backend

class ExponentialLearningRate(keras.callbacks.Callback):
    def __init__(self, factor):
        super().__init__()
        self.factor = factor
        self.rates = []
        self.losses = []

    def on_batch_end(self, batch, logs):
        lr = K.get_value(self.model.optimizer.learning_rate)
        self.rates.append(lr)
        self.losses.append(logs["loss"])
        K.set_value(self.model.optimizer.learning_rate, lr * self.factor)

import numpy as np

keras.backend.clear_session()
np.random.seed(42)
tf.random.set_seed(42)

# Define the network and compile it
model = keras.models.Sequential([
    keras.layers.Flatten(input_shape=[28, 28]),
    keras.layers.Dense(300, activation="relu"),
    keras.layers.Dense(100, activation="relu"),
    keras.layers.Dense(10, activation="softmax")
])
model.compile(loss="sparse_categorical_crossentropy",
              optimizer=keras.optimizers.SGD(learning_rate=1e-3),
              metrics=["accuracy"])
expon_lr = ExponentialLearningRate(factor=1.005)
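It helps to know what range of learning rates one epoch will sweep through. The sketch below is plain arithmetic, not Keras code. It assumes the 55,000 training images left after the split and the Keras default batch size of 32, since `batch_size` is not passed to `fit`.

```python
import math

n_train = 55000      # training images left after the 5,000-image validation split
batch_size = 32      # assumption: Keras default, since fit() is called without batch_size
initial_lr = 1e-3    # starting learning rate passed to SGD
factor = 1.005       # per-batch multiplier used by the callback

# The callback multiplies the learning rate once per batch.
steps_per_epoch = math.ceil(n_train / batch_size)
final_lr = initial_lr * factor ** steps_per_epoch

print(steps_per_epoch)
print(final_lr)
```

Under these assumptions a single epoch covers several orders of magnitude, from 1e-3 up to a little above 5, which is wide enough to see both the useful range and the point where training diverges.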

Training for one epoch to find the best learning rate

history = model.fit(X_train, y_train, epochs=1,
                    validation_data=(X_valid, y_valid),
                    callbacks=[expon_lr])

plt.plot(expon_lr.rates, expon_lr.losses)
plt.gca().set_xscale('log')  # logarithmic x axis
plt.hlines(min(expon_lr.losses), min(expon_lr.rates), max(expon_lr.rates))  # horizontal line at the minimum loss
plt.axis([min(expon_lr.rates), max(expon_lr.rates), 0, expon_lr.losses[0]])  # set the x and y axis ranges
plt.grid()  # show grid lines
plt.xlabel("Learning rate")
plt.ylabel("Loss")

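In the plot, the loss starts to climb sharply once the learning rate passes about 0.6, so we use half of that value, 0.3, as the learning rate.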
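The value above is read off the plot by eye. As a rough sketch of the same rule of thumb in code, the hypothetical helper `suggest_learning_rate` below takes the rate at which the recorded loss is lowest as the turning point and halves it. That proxy is an assumption, and on real, noisy batch losses the curve may need smoothing first. The data here is synthetic, built so that the loss falls until the rate reaches 0.6 and then blows up.

```python
def suggest_learning_rate(rates, losses, shrink=0.5):
    """Hypothetical helper: return `shrink` times the rate at which the loss is lowest."""
    best_index = min(range(len(losses)), key=losses.__getitem__)
    return rates[best_index] * shrink

# Synthetic stand-in for expon_lr.rates and expon_lr.losses.
rates_demo = [1e-3 * 1.005 ** i for i in range(1719)]
losses_demo = [2.3 / (1 + 5 * min(r, 0.6)) + 10 * max(0.0, r - 0.6)
               for r in rates_demo]

suggested = suggest_learning_rate(rates_demo, losses_demo)
print(suggested)
```

With the real callback, the call would be `suggest_learning_rate(expon_lr.rates, expon_lr.losses)`. The result is best treated as a starting point to check against the plot.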

Training the neural network

keras.backend.clear_session()
np.random.seed(42)
tf.random.set_seed(42)

model = keras.models.Sequential([
    keras.layers.Flatten(input_shape=[28, 28]),
    keras.layers.Dense(300, activation="relu"),
    keras.layers.Dense(100, activation="relu"),
    keras.layers.Dense(10, activation="softmax")
])
model.compile(loss="sparse_categorical_crossentropy",
              optimizer=keras.optimizers.SGD(learning_rate=3e-1),  # `lr` is a deprecated alias
              metrics=["accuracy"])
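For a sense of the model's size, the number of trainable parameters can be worked out by hand from the layer widths. The sketch below is plain arithmetic and uses only the sizes that appear in the model definition. Each `Dense` layer has one weight per input-unit pair plus one bias per unit, and `Flatten` has no parameters.

```python
# Flattened 28x28 input, two hidden layers, 10-class output.
layer_sizes = [28 * 28, 300, 100, 10]

# Weights (n_in * n_out) plus biases (n_out) for each Dense layer.
params_per_layer = [n_in * n_out + n_out
                    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])]
total_params = sum(params_per_layer)

print(params_per_layer)  # [235500, 30100, 1010]
print(total_params)      # 266610
```

Almost 90% of the parameters sit in the first hidden layer, because it connects to all 784 input pixels.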

# Set the directory where the logs for this run are saved
import os

run_index = 1  # increment this at every run
run_logdir = os.path.join(os.curdir, "my_mnist_logs", "run_{:03d}".format(run_index))
run_logdir

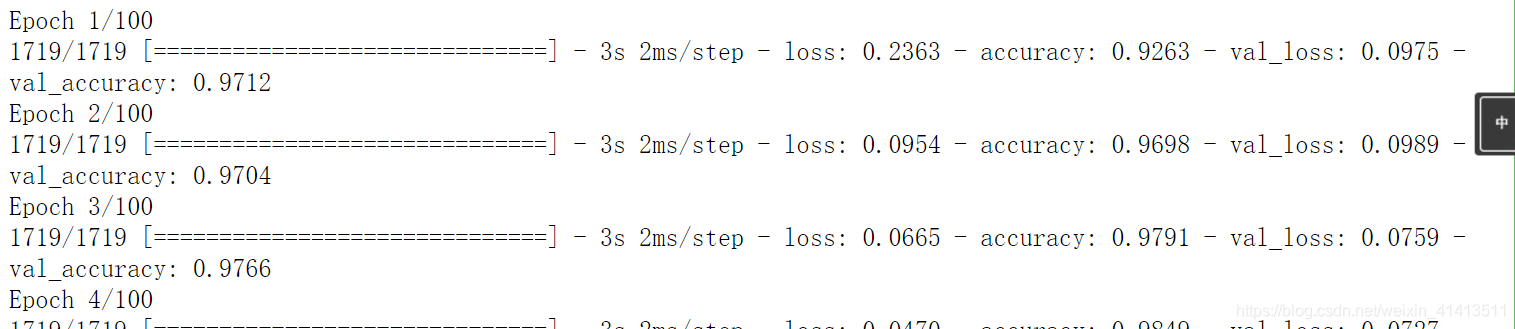

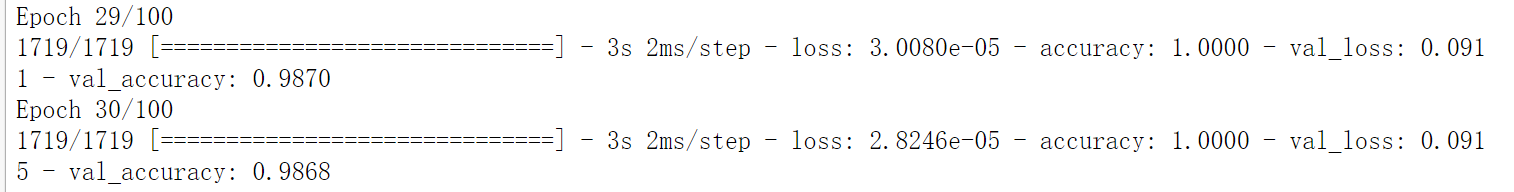
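Incrementing `run_index` by hand is easy to forget. One possible alternative is sketched below: a hypothetical helper, `next_run_logdir`, that scans the log root for existing `run_NNN` folders and returns the next free one. It is not part of Keras or TensorBoard, and the demo uses a temporary directory so no real logs are touched.

```python
import os
import tempfile

def next_run_logdir(root_logdir):
    """Hypothetical helper: return root_logdir/run_NNN with NNN one above the highest existing index."""
    os.makedirs(root_logdir, exist_ok=True)
    indices = []
    for name in os.listdir(root_logdir):
        prefix, _, suffix = name.partition("_")
        if prefix == "run" and suffix.isdigit():
            indices.append(int(suffix))
    next_index = max(indices, default=0) + 1
    return os.path.join(root_logdir, "run_{:03d}".format(next_index))

# Demo in a throwaway directory.
demo_root = os.path.join(tempfile.mkdtemp(), "my_mnist_logs")
first = next_run_logdir(demo_root)
os.makedirs(first)  # simulate the first run having written its logs
second = next_run_logdir(demo_root)
print(os.path.basename(first), os.path.basename(second))
```

In the post's code this would replace the manual index with `run_logdir = next_run_logdir(os.path.join(os.curdir, "my_mnist_logs"))`.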

Start training

# patience: number of epochs without improvement after which training is stopped
early_stopping_cb = keras.callbacks.EarlyStopping(patience=20)
# Checked after every epoch; with save_best_only=True the file is only overwritten when val_loss improves
checkpoint_cb = keras.callbacks.ModelCheckpoint("my_mnist_model.h5", save_best_only=True)
# Writes logs that TensorBoard can display
tensorboard_cb = keras.callbacks.TensorBoard(run_logdir)

history = model.fit(X_train, y_train, epochs=100,
                    validation_data=(X_valid, y_valid),
                    callbacks=[checkpoint_cb, early_stopping_cb, tensorboard_cb])

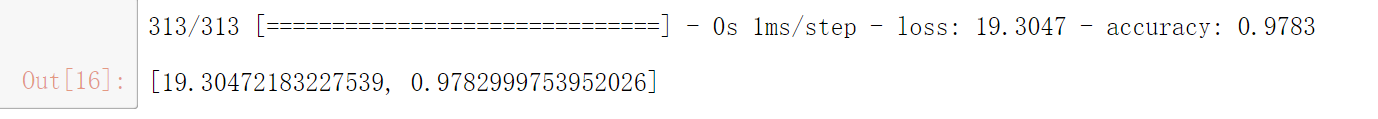
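The `patience` rule can be illustrated without Keras. The function below is a simplified model of how early stopping behaves with default settings (monitoring validation loss, no minimum delta), not the library's source code. The loss values are made up for the example.

```python
def early_stopping_epoch(val_losses, patience):
    """Return the 1-based epoch at which training would stop, or None if it runs to the end."""
    best = float("inf")
    wait = 0  # epochs since the last improvement
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            best = loss
            wait = 0
        else:
            wait += 1
            if wait >= patience:
                return epoch
    return None

# Made-up validation losses: best value so far at epoch 3, then a new best at epoch 6.
val_losses_demo = [0.30, 0.20, 0.15, 0.16, 0.17, 0.14, 0.15, 0.16, 0.17]
print(early_stopping_epoch(val_losses_demo, patience=3))  # 9
print(early_stopping_epoch(val_losses_demo, patience=2))  # 5
```

With `patience=2` training stops at epoch 5 and never reaches the better epoch 6, which is one reason to choose a generous value such as the 20 used in the post. Because the checkpoint callback saves only the best model, the extra epochs cost time but not model quality.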

Evaluating on the test set

model = keras.models.load_model("my_mnist_model.h5") # rollback to best model

model.evaluate(X_test, y_test)
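`model.evaluate` returns the loss followed by the compiled metrics, here accuracy. As a small sketch of what that accuracy means, the example below computes it by hand from softmax outputs. The probabilities and labels are invented, with 3 classes instead of 10; with the real model they would come from `model.predict`.

```python
import numpy as np

# Invented softmax outputs for 4 images over 3 classes.
y_proba_demo = np.array([[0.1, 0.7, 0.2],
                         [0.8, 0.1, 0.1],
                         [0.3, 0.3, 0.4],
                         [0.6, 0.3, 0.1]])
y_true_demo = np.array([1, 0, 2, 1])

# Predicted class = index of the highest probability in each row.
y_pred_demo = np.argmax(y_proba_demo, axis=1)
accuracy_demo = np.mean(y_pred_demo == y_true_demo)

print(y_pred_demo)    # [1 0 2 0]
print(accuracy_demo)  # 0.75
```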
