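Competition task:

The competition is set against a medical data mining background: participants train a model on the provided heartbeat-signal sensor data and use it to classify the different types of heartbeat signal.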

Sample data:

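Introduction to the LightGBM model:

The base learner is a decision tree, and the final prediction combines the outputs of many trees. LightGBM (lgb) grows trees leaf-wise: at each step it splits the leaf that yields the largest loss reduction, which typically reaches a given loss with fewer splits than level-wise growth. Leaf-wise growth can produce deep trees, so the tree depth and the minimum number of samples per leaf need to be limited to reduce overfitting. For finding split points, XGBoost's exact algorithm pre-sorts the feature values, whereas LightGBM uses a histogram algorithm: feature values are bucketed into a fixed number of bins and split points are searched over those bins only, which lowers both the memory cost and the computation cost. The change of data structure also affects low-level efficiency; for example, LightGBM makes better use of the cache. Together these give it good training speed, and its native handling of categorical features can bring a large improvement on some datasets.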

————————————————

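Copyright notice: this article is an original work by CSDN blogger "Sisyphus_369" and is licensed under CC 4.0 BY-SA. When reposting, please include the link to the original source and this notice.

Original link: https://blog.csdn.net/weixin_40677836/article/details/111472972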
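To make the histogram idea from the LightGBM introduction more concrete, here is a minimal NumPy sketch of a histogram-based split search on a single feature. It is an illustration only, not LightGBM's implementation: the helper name `best_split_histogram`, the equal-width binning, and the simplified gain formula (no regularization, no Hessians) are all assumptions made for this example.

```python
import numpy as np


def best_split_histogram(feature, gradients, n_bins=16):
    """Toy histogram-based split search for one feature.

    Hypothetical helper for illustration; not LightGBM's actual code.
    The gain is a simplified score: (sum of gradients)^2 / count per side.
    """
    # 1. Bucket the continuous values into n_bins equal-width bins.
    edges = np.linspace(feature.min(), feature.max(), n_bins + 1)
    bin_idx = np.clip(np.digitize(feature, edges[1:-1]), 0, n_bins - 1)

    # 2. Accumulate per-bin statistics in one pass over the data.
    grad_hist = np.bincount(bin_idx, weights=gradients, minlength=n_bins)
    count_hist = np.bincount(bin_idx, minlength=n_bins)

    # 3. Scan the bin boundaries instead of every distinct feature value.
    total_grad, total_count = grad_hist.sum(), count_hist.sum()
    best_gain, best_threshold = -np.inf, None
    left_grad = left_count = 0.0
    for b in range(n_bins - 1):
        left_grad += grad_hist[b]
        left_count += count_hist[b]
        right_count = total_count - left_count
        if left_count == 0 or right_count == 0:
            continue
        right_grad = total_grad - left_grad
        gain = left_grad**2 / left_count + right_grad**2 / right_count
        if gain > best_gain:
            best_gain, best_threshold = gain, edges[b + 1]
    return best_threshold, best_gain


rng = np.random.default_rng(0)
x = rng.uniform(0.0, 1.0, size=1000)
# The gradient sign flips at x = 0.5, so the best boundary should be near 0.5.
g = np.where(x < 0.5, -1.0, 1.0)
threshold, gain = best_split_histogram(x, g)
print(threshold, gain)
```

The search loop visits only `n_bins - 1` candidate boundaries regardless of how many rows there are, which is where the savings over scanning every sorted value come from.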

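Workflow

- Data preprocessing
  - Extract the features so that each one becomes its own column
  - Optimize memory usage (to reduce memory and speed up later model runs)
- Model building
  - Build the LightGBM model
- Cross-validation
  - Evaluate model performance with cross-validation
- Prediction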

This part reads the data and preprocesses it.

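import os

import numpy as np  # used by reduce_mem_usage and by the evaluation code further down
import pandas as pd
import matplotlib.pyplot as plt
from tqdm import tqdm

# Directory that holds train.csv and testA.csv; adjust it to your own path.
os.chdir(r'G:\天池\心电图挖掘')

df_train = pd.read_csv('train.csv', encoding='utf-8')
df_test = pd.read_csv('testA.csv', encoding='utf-8')

# Use the sample id as the index of both frames.
df_train.set_index('id', inplace=True)
df_test.set_index('id', inplace=True)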

def reduce_mem_usage(df):
    """Downcast each numeric column to the smallest dtype that can hold its range."""
    start_mem = df.memory_usage().sum() / 1024**2
    print('Memory usage of dataframe is {:.2f} MB'.format(start_mem))

    for col in df.columns:
        col_type = df[col].dtype
        if col_type != object:
            c_min = df[col].min()
            c_max = df[col].max()
            if str(col_type)[:3] == 'int':
                # Integer columns: pick the narrowest signed integer type that fits.
                if c_min >= np.iinfo(np.int8).min and c_max <= np.iinfo(np.int8).max:
                    df[col] = df[col].astype(np.int8)
                elif c_min >= np.iinfo(np.int16).min and c_max <= np.iinfo(np.int16).max:
                    df[col] = df[col].astype(np.int16)
                elif c_min >= np.iinfo(np.int32).min and c_max <= np.iinfo(np.int32).max:
                    df[col] = df[col].astype(np.int32)
                else:
                    df[col] = df[col].astype(np.int64)
            else:
                # Float columns: only the value range is checked, not the precision.
                # float16 keeps roughly 3 significant decimal digits, so values get rounded.
                if c_min >= np.finfo(np.float16).min and c_max <= np.finfo(np.float16).max:
                    df[col] = df[col].astype(np.float16)
                elif c_min >= np.finfo(np.float32).min and c_max <= np.finfo(np.float32).max:
                    df[col] = df[col].astype(np.float32)
                else:
                    df[col] = df[col].astype(np.float64)
        else:
            # Object (string) columns are stored as categoricals.
            df[col] = df[col].astype('category')

    end_mem = df.memory_usage().sum() / 1024**2
    print('Memory usage after optimization is: {:.2f} MB'.format(end_mem))
    print('Decreased by {:.1f}%'.format(100 * (start_mem - end_mem) / start_mem))
    return df

# Each row stores the whole signal as one comma-separated string.
# Expand it into one float column per sample point (id_0, id_1, ...).
n_points = len(df_train['heartbeat_signals'].iloc[0].split(','))  # 205 in this dataset
signal_columns = ['id_%s' % i for i in range(n_points)]

def expand_signals(df):
    # Split every string once instead of re-splitting it for each column.
    out = df['heartbeat_signals'].str.split(',', expand=True).astype(float)
    out.columns = signal_columns
    out.insert(0, 'id', df.index.tolist())
    return out

df_train_new = expand_signals(df_train)
df_train_new['label'] = df_train['label']
df_test_new = expand_signals(df_test)

# Apply the memory optimization described in the workflow.
df_train_new = reduce_mem_usage(df_train_new)
df_test_new = reduce_mem_usage(df_test_new)

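The code above expands each heartbeat signal into one column per sample point and reduces the memory footprint of the data, which makes the later steps easier.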
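As a small self-contained check of these two preprocessing ideas, the sketch below expands a toy frame of comma-separated signals into columns and then compares the memory used by `float64` and `float32` storage. The three rows and their values are made up for illustration; only the column naming (`heartbeat_signals`, `id_0`, ...) follows the data used here.

```python
import numpy as np
import pandas as pd

# Toy stand-in for train.csv: each signal is one comma-separated string.
raw = pd.DataFrame(
    {
        "id": [0, 1, 2],
        "heartbeat_signals": ["0.1,0.5,0.9", "0.2,0.4,0.6", "1.0,0.0,0.3"],
        "label": [0.0, 2.0, 3.0],
    }
).set_index("id")

# One split per row, expanded into one float column per sample point.
wide = raw["heartbeat_signals"].str.split(",", expand=True).astype(np.float64)
wide.columns = ["id_%s" % i for i in range(wide.shape[1])]

# Downcasting to float32 halves the storage of the signal columns.
wide32 = wide.astype(np.float32)

bytes64 = wide.memory_usage(index=False).sum()
bytes32 = wide32.memory_usage(index=False).sum()
print(wide32)
print("float64 bytes:", bytes64, "float32 bytes:", bytes32)
```

Going further down to `float16` halves the storage again but rounds values to about three significant digits, so whether that is acceptable depends on how sensitive the model is to small changes in the signal.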

Next, build the model.

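from sklearn.model_selection import train_test_split
import lightgbm as lgb
from sklearn.metrics import f1_score

X_train = df_train_new.drop(['id', 'label'], axis=1)
y_train = df_train_new['label']

# Hold out 20% of the training data for validation.
X_train_split, X_val, y_train_split, y_val = train_test_split(X_train, y_train, test_size=0.2)

train_matrix = lgb.Dataset(X_train_split, label=y_train_split)
valid_matrix = lgb.Dataset(X_val, label=y_val)

params = {
    "num_leaves": 128,
    "metric": None,
    "objective": "multiclass",
    "num_class": 4,
    "nthread": 10,
    "verbose": -1,
}

# Custom evaluation metric: macro F1-score
def f1_score_vali(preds, data_vali):
    labels = data_vali.get_label()
    # Older LightGBM releases pass multiclass scores as a flat 1-D array grouped by class;
    # LightGBM 4.x passes a 2-D array of shape (n_samples, n_classes). Handle both layouts.
    if preds.ndim == 1:
        preds = preds.reshape(4, -1).T
    preds = np.argmax(preds, axis=1)
    score_vali = f1_score(y_true=labels, y_pred=preds, average='macro')
    # The third element tells LightGBM that a higher score is better.
    return 'f1_score', score_vali, True

# Train the model on the training split.
# Logging and early stopping are passed as callbacks (available since LightGBM 3.3);
# the verbose_eval and early_stopping_rounds arguments were removed in LightGBM 4.0.
model = lgb.train(params,
                  train_set=train_matrix,
                  valid_sets=[valid_matrix],
                  num_boost_round=2000,
                  callbacks=[lgb.log_evaluation(period=50),
                             lgb.early_stopping(stopping_rounds=200)],
                  feval=f1_score_vali)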
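The reshape inside `f1_score_vali` is easy to get wrong, so here is a small NumPy-only sketch of the layout it assumes. It relies on the documented behavior of LightGBM releases before 4.0, where the multiclass scores handed to a custom metric are a flat array grouped by class first (the score of row `i` for class `j` sits at `preds[j * num_data + i]`). The probabilities below are toy values.

```python
import numpy as np

n_classes = 4
# Toy class probabilities, shape (n_samples, n_classes).
probs = np.array([
    [0.7, 0.1, 0.1, 0.1],
    [0.1, 0.1, 0.6, 0.2],
    [0.2, 0.5, 0.2, 0.1],
])

# Class-major flattening: all class-0 scores first, then class 1, and so on.
flat = probs.T.ravel()

# Reshaping to (n_classes, n_samples) restores one column per sample,
# so argmax over axis 0 picks the predicted class of each sample.
pred_from_flat = np.argmax(flat.reshape(n_classes, -1), axis=0)

# The same result comes from a row-wise argmax on the 2-D layout.
pred_from_2d = np.argmax(probs, axis=1)

print(pred_from_flat)  # [0 2 1]
print(pred_from_2d)    # [0 2 1]
```

If the scores already arrive as a 2-D `(n_samples, n_classes)` array, applying `reshape(n_classes, -1)` to them would silently mix samples and classes, so it is worth checking which layout the installed LightGBM version uses.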

Evaluating model performance with cross-validation

from sklearn.model_selection import KFold

x_train = df_train_new.drop(['id', 'label'], axis=1)
y_train = df_train_new['label']

# 5-fold cross-validation
folds = 5
seed = 2021

# shuffle=True shuffles the rows before they are split into folds
kf = KFold(n_splits=folds, shuffle=True, random_state=seed)

cv_scores = []
for i, (train_index, valid_index) in enumerate(kf.split(x_train, y_train)):
    print('************************************ {} ************************************'.format(str(i+1)))
    x_train_split, y_train_split = x_train.iloc[train_index], y_train.iloc[train_index]
    x_val, y_val = x_train.iloc[valid_index], y_train.iloc[valid_index]

    train_matrix = lgb.Dataset(x_train_split, label=y_train_split)
    valid_matrix = lgb.Dataset(x_val, label=y_val)

    params = {
        "num_leaves": 128,
        "metric": None,
        "objective": "multiclass",
        "num_class": 4,
        "nthread": 10,
        "verbose": -1,
    }

    # Callbacks replace verbose_eval / early_stopping_rounds, which LightGBM 4.0 removed.
    model = lgb.train(params,
                      train_set=train_matrix,
                      valid_sets=[valid_matrix],
                      num_boost_round=2000,
                      callbacks=[lgb.log_evaluation(period=100),
                                 lgb.early_stopping(stopping_rounds=200)],
                      feval=f1_score_vali)

    # predict returns an (n_samples, n_classes) probability matrix.
    val_pred = model.predict(x_val, num_iteration=model.best_iteration)
    val_pred = np.argmax(val_pred, axis=1)
    cv_scores.append(f1_score(y_true=y_val, y_pred=val_pred, average='macro'))

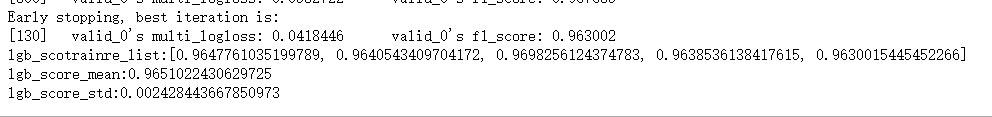

print('lgb_score_list:{}'.format(cv_scores))
print('lgb_score_mean:{}'.format(np.mean(cv_scores)))
print('lgb_score_std:{}'.format(np.std(cv_scores)))

Five-fold cross-validation gives an F1-score of 0.96, which is reasonably good, so we move on to prediction.
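For readers unfamiliar with the macro-averaged F1-score used in the cross-validation, the following sketch computes it by hand with NumPy. The helper `macro_f1` and the toy labels are illustrative assumptions; `f1_score(..., average='macro')` from scikit-learn should agree with it when every class appears in the data, though its handling of absent classes may differ.

```python
import numpy as np


def macro_f1(y_true, y_pred, n_classes):
    """Unweighted mean of the per-class F1 scores (illustrative helper)."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    scores = []
    for c in range(n_classes):
        tp = np.sum((y_pred == c) & (y_true == c))  # correctly predicted as c
        fp = np.sum((y_pred == c) & (y_true != c))  # wrongly predicted as c
        fn = np.sum((y_pred != c) & (y_true == c))  # missed samples of class c
        denom = 2 * tp + fp + fn
        # A class with no true and no predicted samples contributes 0 here.
        scores.append(2 * tp / denom if denom else 0.0)
    return float(np.mean(scores))


y_true = [0, 0, 1, 1, 2, 3]
y_pred = [0, 1, 1, 1, 2, 3]
score = macro_f1(y_true, y_pred, n_classes=4)
print(score)
```

Because every class counts equally, a rare class that is classified poorly pulls the macro score down more than it would affect plain accuracy.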

Prediction

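X_test = df_test_new.drop(['id'], axis=1)

# Note: `model` here is the booster trained in the last cross-validation fold.
test_pred_lgb = model.predict(X_test, num_iteration=model.best_iteration)

# One probability column per class, indexed by the test sample id.
df_1 = pd.DataFrame(test_pred_lgb)
df_1.index = df_test_new['id']
df_1.rename(columns={0: 'label_0', 1: 'label_1', 2: 'label_2', 3: 'label_3'}, inplace=True)
df_1.to_csv('final_result.csv')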
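Before uploading, it can help to sanity-check the shape of the result file. The sketch below builds the same kind of table from a toy probability matrix and inspects the CSV text; the ids and probabilities are made up, and only the `label_0` to `label_3` column names follow the code above.

```python
import numpy as np
import pandas as pd

# Toy stand-in for the model.predict output: shape (n_samples, 4), rows sum to 1.
test_pred = np.array([
    [0.90, 0.05, 0.03, 0.02],
    [0.10, 0.20, 0.60, 0.10],
])
ids = pd.Index([100000, 100001], name="id")  # hypothetical test ids

submission = pd.DataFrame(
    test_pred, index=ids, columns=["label_%s" % k for k in range(4)]
)

# Basic checks: four class columns, and each row is a probability distribution.
rows_sum_to_one = bool(np.allclose(submission.sum(axis=1), 1.0))

csv_text = submission.to_csv()  # returns the CSV as a string when no path is given
print(rows_sum_to_one)
print(csv_text)
```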

Finally, submit the results to Tianchi:
