This article sets up a Hudi data lake on Flink 1.13.6 as an entry-level experiment. A basic Hadoop environment is assumed to be installed already.

1. Component versions

| Component | Version |
| --- | --- |
| Flink | 1.13.6_scala-2.12 |
| Hudi | 0.12.0 |
| Hadoop | 3.1.0 |
| MySQL | 5.7.33 |
| Kafka | 2.12-2.7.0 |

2. Flink environment configuration

1) Extract the Flink package

tar -zxvf flink-1.13.6-bin-scala_2.12.tgz -C /home/opt/

2) Edit the flink-conf.yaml configuration

vim /home/opt/flink-1.13.6/conf/flink-conf.yaml

classloader.check-leaked-classloader: false

taskmanager.numberOfTaskSlots: 4

state.backend: rocksdb

execution.checkpointing.interval: 30000

state.checkpoints.dir: hdfs://node01:9000/ckps

state.backend.incremental: true

3) Copy the compiled Hudi bundle into Flink's lib directory

cp /opt/software/hudi-0.12.0/packaging/hudi-flink-bundle/target/hudi-flink1.13-bundle_2.12-0.12.0.jar /home/opt/flink-1.13.6/lib/

# Copy the guava jar to resolve the dependency conflict

cp /opt/module/hadoop-3.1.3/share/hadoop/common/lib/guava-27.0-jre.jar /home/opt/flink-1.13.6/lib/

3. Using sql-client
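Before starting the SQL client, it can help to confirm that the settings and jars from section 2 are actually in place. The following is a minimal sketch, not part of the original setup: the `FLINK_HOME` variable and the helper names `has_conf_key`, `check_flink_conf`, and `list_lib_jars` are assumptions introduced here for illustration, and the default path simply mirrors the one used in this article.

```shell
#!/usr/bin/env bash
# Sanity check for the Flink settings and jars configured in section 2.
# FLINK_HOME is an assumed variable; the default mirrors this article's path.
FLINK_HOME="${FLINK_HOME:-/home/opt/flink-1.13.6}"

# Succeeds if KEY appears as an uncommented top-level key in the YAML file.
has_conf_key() {
  awk -F: -v k="$2" '
    { key = $1; gsub(/^[ \t]+|[ \t]+$/, "", key) }
    key == k { found = 1 }
    END { exit !found }
  ' "$1"
}

# Prints one status line per expected key; returns non-zero if any is missing.
check_flink_conf() {
  local file="$1" missing=0 key
  for key in \
    classloader.check-leaked-classloader \
    taskmanager.numberOfTaskSlots \
    state.backend \
    execution.checkpointing.interval \
    state.checkpoints.dir \
    state.backend.incremental; do
    if has_conf_key "$file" "$key"; then
      echo "ok      $key"
    else
      echo "missing $key"
      missing=1
    fi
  done
  return "$missing"
}

# Lists the Hudi bundle and guava jars found in the lib directory, if any.
list_lib_jars() {
  ls "$1/lib" 2>/dev/null | grep -E '^(hudi-flink.*bundle.*|guava-.*)\.jar$'
}

# Only run against the real installation when it exists on this machine.
if [ -f "$FLINK_HOME/conf/flink-conf.yaml" ]; then
  check_flink_conf "$FLINK_HOME/conf/flink-conf.yaml"
  list_lib_jars "$FLINK_HOME" || echo "no Hudi bundle or guava jar in $FLINK_HOME/lib"
fi
```

The check only looks at key names, not values, so a wrong checkpoint directory or NameNode port would still pass.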

3.1 Local mode: start Flink

/home/opt/flink-1.13.6/bin/start-cluster.sh

/home/opt/flink-1.13.6/bin/sql-client.sh embedded

3.2 yarn-session mode

# Start yarn-session

/home/opt/flink-1.13.6/bin/yarn-session.sh -d

# Start sql-client

/home/opt/flink-1.13.6/bin/sql-client.sh embedded -s yarn-session

Note: the hadoop-mapreduce-client-core-3.1.3.jar file must be copied into Flink's lib directory here to resolve a dependency problem.

cp /opt/module/hadoop-3.1.3/share/hadoop/mapreduce/hadoop-mapreduce-client-core-3.1.3.jar /home/opt/flink-1.13.6/lib/

4. Example

Insert data

set sql-client.execution.result-mode=tableau;

-- Create the Hudi table

CREATE TABLE t1(

uuid VARCHAR(20) PRIMARY KEY NOT ENFORCED,

name VARCHAR(10),

age INT,

ts TIMESTAMP(3),

`partition` VARCHAR(20)

)

PARTITIONED BY (`partition`)

WITH (

'connector' = 'hudi',

'path' = 'hdfs://node01:8020/tmp/hudi_flink/t1',

'table.type' = 'MERGE_ON_READ' -- the default is COPY_ON_WRITE

);

-- Insert data

INSERT INTO t1 VALUES

('id1','Danny',23,TIMESTAMP '1970-01-01 00:00:01','par1'),

('id2','Stephen',33,TIMESTAMP '1970-01-01 00:00:02','par1'),

('id3','Julian',53,TIMESTAMP '1970-01-01 00:00:03','par2'),

('id4','Fabian',31,TIMESTAMP '1970-01-01 00:00:04','par2'),

('id5','Sophia',18,TIMESTAMP '1970-01-01 00:00:05','par3'),

('id6','Emma',20,TIMESTAMP '1970-01-01 00:00:06','par3'),

('id7','Bob',44,TIMESTAMP '1970-01-01 00:00:07','par4'),

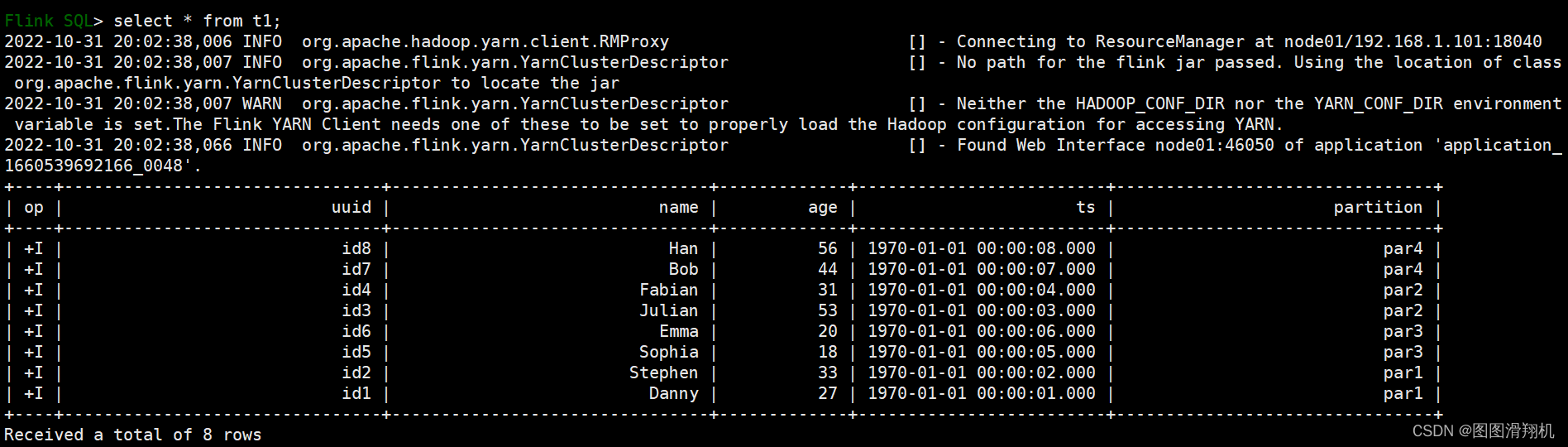

('id8','Han',56,TIMESTAMP '1970-01-01 00:00:08','par4');查询数据
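The query itself appears here only as a screenshot, so the following is a hedged sketch of how the table could be read back non-interactively. It assumes a plain `SELECT * FROM t1;` as the query, and it relies on the SQL client's `-f` script option. Because each SQL client session starts with an empty in-memory catalog, the script repeats the table definition from above. `FLINK_HOME`, `SQL_FILE`, and `write_query_sql` are names introduced for illustration.

```shell
#!/usr/bin/env bash
# Write a query script for table t1 and run it with the SQL client if available.
# FLINK_HOME and SQL_FILE are assumed variables, not part of the original setup.
FLINK_HOME="${FLINK_HOME:-/home/opt/flink-1.13.6}"
SQL_FILE="${SQL_FILE:-$(mktemp /tmp/hudi_t1_query.XXXXXX)}"

# Emits the DDL from this article followed by a full-table query.
write_query_sql() {
  cat > "$1" <<'EOF'
SET sql-client.execution.result-mode=tableau;

CREATE TABLE t1(
  uuid VARCHAR(20) PRIMARY KEY NOT ENFORCED,
  name VARCHAR(10),
  age INT,
  ts TIMESTAMP(3),
  `partition` VARCHAR(20)
)
PARTITIONED BY (`partition`)
WITH (
  'connector' = 'hudi',
  'path' = 'hdfs://node01:8020/tmp/hudi_flink/t1',
  'table.type' = 'MERGE_ON_READ'
);

SELECT * FROM t1;
EOF
}

write_query_sql "$SQL_FILE"

# Run the script only when the SQL client exists on this machine.
if [ -x "$FLINK_HOME/bin/sql-client.sh" ]; then
  "$FLINK_HOME/bin/sql-client.sh" embedded -f "$SQL_FILE"
else
  echo "sql-client.sh not found under $FLINK_HOME; wrote $SQL_FILE only"
fi
```

For the yarn-session setup from section 3.2, the same call would presumably need `-s yarn-session` added.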

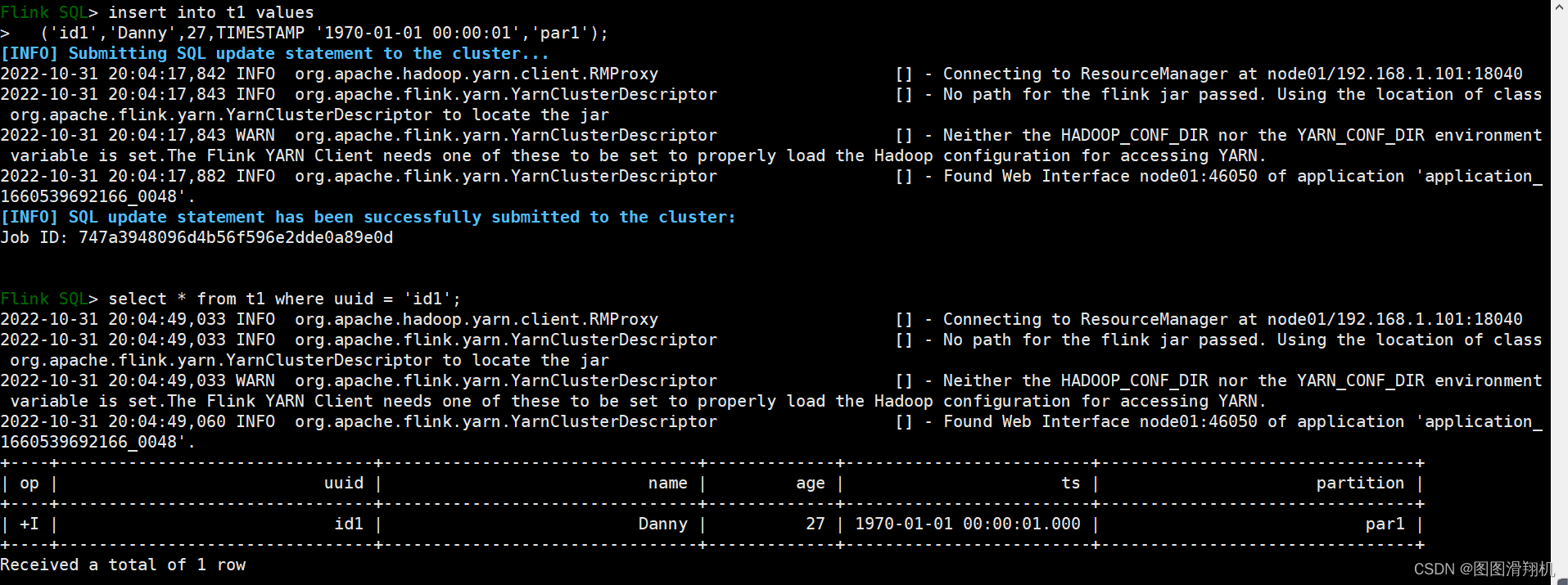

Update data
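The original statements for this step are not available, so what follows is only a sketch of one common way to update a row. With Hudi's default upsert write behavior, inserting a row whose primary key (`uuid`) already exists replaces the stored record. The new age value `27` is made up for illustration, and `FLINK_HOME`, `UPSERT_SQL`, and `write_upsert_sql` are assumed names.

```shell
#!/usr/bin/env bash
# Write an upsert script for table t1 and run it with the SQL client if available.
# FLINK_HOME and UPSERT_SQL are assumed variables, not part of the original setup.
FLINK_HOME="${FLINK_HOME:-/home/opt/flink-1.13.6}"
UPSERT_SQL="${UPSERT_SQL:-$(mktemp /tmp/hudi_t1_upsert.XXXXXX)}"

# Emits the DDL from this article plus one INSERT that reuses the key 'id1'.
# Only the age column differs from the row inserted earlier (23 becomes 27).
write_upsert_sql() {
  cat > "$1" <<'EOF'
CREATE TABLE t1(
  uuid VARCHAR(20) PRIMARY KEY NOT ENFORCED,
  name VARCHAR(10),
  age INT,
  ts TIMESTAMP(3),
  `partition` VARCHAR(20)
)
PARTITIONED BY (`partition`)
WITH (
  'connector' = 'hudi',
  'path' = 'hdfs://node01:8020/tmp/hudi_flink/t1',
  'table.type' = 'MERGE_ON_READ'
);

INSERT INTO t1 VALUES
  ('id1','Danny',27,TIMESTAMP '1970-01-01 00:00:01','par1');
EOF
}

write_upsert_sql "$UPSERT_SQL"

# Submit the script only when the SQL client exists on this machine.
if [ -x "$FLINK_HOME/bin/sql-client.sh" ]; then
  "$FLINK_HOME/bin/sql-client.sh" embedded -f "$UPSERT_SQL"
else
  echo "sql-client.sh not found under $FLINK_HOME; wrote $UPSERT_SQL only"
fi
```

Once the job has finished, querying the table again should show a single `id1` row with the new age rather than two rows.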
