使用神经网络完成对手写数字识别,主要步骤包括

1. 使用Pytorch内置函数下载MNIST数据集

2. 利用torchvision对数据进行预处理,调用torch.utils建立一个数据迭代器

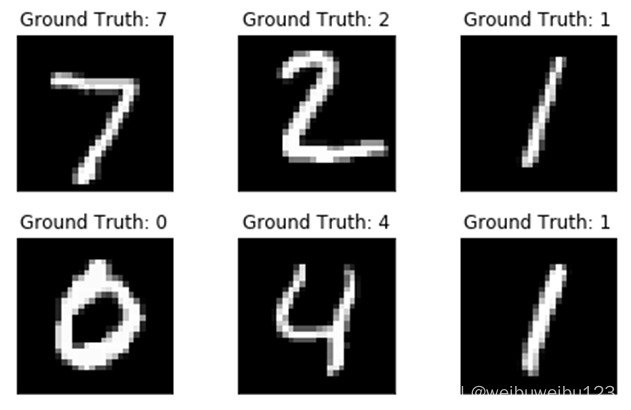

3. 可视化数据源

4. 使用nn工具箱构建神经网络模型

5. 实例化模型,并定义损失函数

6. 训练模型

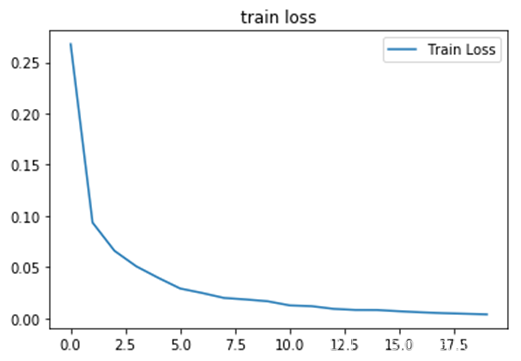

7. 可视化结果

过程:

1. Import the required modules

import numpy as np
import torch
# Import the MNIST dataset that ships with torchvision
from torchvision.datasets import mnist
# Import the preprocessing module
import torchvision.transforms as transforms
from torch.utils.data import DataLoader
# Import nn and the optimizers
import torch.nn.functional as F
import torch.optim as optim
from torch import nn

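2. Define the hyperparameters

# Hyperparameters
train_batch_size = 64
test_batch_size = 128
lr = 0.01        # learning rate, under the name the optimizer call in step 6 expects
momentum = 0.5   # SGD momentum, also used in step 6 (value taken from the full listing)
num_epoches = 20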
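To make the batch sizes concrete, the short sketch below works out how many batches each loader yields per epoch. It assumes the standard MNIST split (60,000 training and 10,000 test images) and the DataLoader default of keeping the last, smaller batch; the variable names are only illustrative.

```python
import math

# Standard MNIST split sizes (assumed here, not read from disk).
n_train, n_test = 60_000, 10_000
train_batch_size, test_batch_size = 64, 128

# DataLoader keeps the final partial batch by default (drop_last=False),
# so the batch count is the ceiling of the division.
train_batches = math.ceil(n_train / train_batch_size)
test_batches = math.ceil(n_test / test_batch_size)

# Size of the last training batch.
last_train_batch = n_train - (train_batches - 1) * train_batch_size

print(train_batches, test_batches, last_train_batch)  # -> 938 79 32
```

These counts are what `len(train_loader)` and `len(test_loader)` return, which is why the training loop divides the accumulated loss by them.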

3. Download the dataset and preprocess the data

# Preprocessing: convert to a tensor in [0, 1], then normalize with mean 0.5 and std 0.5
transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize([0.5], [0.5])])
# Download the data and apply the preprocessing
train_dataset = mnist.MNIST('./data', train=True, transform=transform, download=True)
test_dataset = mnist.MNIST('./data', train=False, transform=transform)
# Wrap each dataset in a loader that yields one batch at a time
train_loader = DataLoader(train_dataset, batch_size=train_batch_size, shuffle=True)
test_loader = DataLoader(test_dataset, batch_size=test_batch_size, shuffle=False)
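As a quick illustration of what the normalization step does, the sketch below applies the same arithmetic by hand. The `pixels` tensor is a made-up stand-in for the output of `ToTensor()`, which scales pixel values to [0, 1]; `Normalize([0.5], [0.5])` then computes `(x - mean) / std` per channel.

```python
import torch

# Hypothetical pixel values, already scaled to [0, 1] as ToTensor() would do.
pixels = torch.tensor([0.0, 0.25, 0.5, 1.0])

mean, std = 0.5, 0.5
# The per-channel formula used by Normalize: subtract the mean, divide by the std.
normalized = (pixels - mean) / std

print(normalized.tolist())  # -> [-1.0, -0.5, 0.0, 1.0]
```

With these two values the inputs end up in [-1, 1]. Note that 0.5 is a convenient choice rather than the measured mean and standard deviation of MNIST.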

4. Visualize the source data

import matplotlib.pyplot as plt

# Take the first batch from the test loader
examples = enumerate(test_loader)
batch_idx, (example_data, example_targets) = next(examples)

# Show the first six images with their labels
fig = plt.figure()
for i in range(6):
    plt.subplot(2, 3, i + 1)
    plt.tight_layout()
    plt.imshow(example_data[i][0], cmap='gray', interpolation='none')
    plt.title("Ground Truth: {}".format(example_targets[i]))
    plt.xticks([])
    plt.yticks([])
plt.show()
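The `enumerate(...)` plus `next(...)` idiom used to grab the first batch can be tried without downloading anything. In the sketch below a plain list named `fake_loader` (a hypothetical stand-in, not a real DataLoader) plays the role of `test_loader`.

```python
# A plain list stands in for test_loader: each item is a (data, targets) pair.
fake_loader = [
    ("batch0_images", "batch0_labels"),
    ("batch1_images", "batch1_labels"),
]

# enumerate() returns a lazy iterator of (index, item) pairs.
examples = enumerate(fake_loader)

# next() pulls the first pair; the nested tuple is unpacked in the same statement.
batch_idx, (example_data, example_targets) = next(examples)

print(batch_idx, example_data, example_targets)  # -> 0 batch0_images batch0_labels
```

With the real loader and the settings above, `example_data` has shape `[128, 1, 28, 28]`, so `example_data[i][0]` is the single 28x28 channel of image `i`.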

5. Build the model

class Net(nn.Module):
    """
    Build the network with nn.Sequential, which chains layers into a single module.
    """
    def __init__(self, in_dim, n_hidden_1, n_hidden_2, out_dim):
        super(Net, self).__init__()
        # Two hidden layers, each a linear layer followed by batch normalization
        self.layer1 = nn.Sequential(nn.Linear(in_dim, n_hidden_1), nn.BatchNorm1d(n_hidden_1))
        self.layer2 = nn.Sequential(nn.Linear(n_hidden_1, n_hidden_2), nn.BatchNorm1d(n_hidden_2))
        # Output layer: one raw score (logit) per class
        self.layer3 = nn.Sequential(nn.Linear(n_hidden_2, out_dim))

    def forward(self, x):
        x = F.relu(self.layer1(x))
        x = F.relu(self.layer2(x))
        x = self.layer3(x)
        return x
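A quick way to sanity-check the architecture is to push a dummy batch through it and count the parameters. The sketch below repeats the `Net` definition so it runs on its own; the random `dummy` input and the batch size of 4 are assumptions made only for the check.

```python
import torch
import torch.nn.functional as F
from torch import nn

class Net(nn.Module):
    def __init__(self, in_dim, n_hidden_1, n_hidden_2, out_dim):
        super().__init__()
        self.layer1 = nn.Sequential(nn.Linear(in_dim, n_hidden_1), nn.BatchNorm1d(n_hidden_1))
        self.layer2 = nn.Sequential(nn.Linear(n_hidden_1, n_hidden_2), nn.BatchNorm1d(n_hidden_2))
        self.layer3 = nn.Sequential(nn.Linear(n_hidden_2, out_dim))

    def forward(self, x):
        x = F.relu(self.layer1(x))
        x = F.relu(self.layer2(x))
        return self.layer3(x)

model = Net(28 * 28, 300, 100, 10)
model.eval()  # BatchNorm uses its running statistics instead of batch statistics

# Dummy batch shaped like MNIST images: 4 samples, 1 channel, 28x28 pixels.
dummy = torch.randn(4, 1, 28, 28)
with torch.no_grad():
    logits = model(dummy.view(dummy.size(0), -1))  # flatten to [4, 784]

# Learnable parameters only; BatchNorm running statistics are buffers and not counted.
n_params = sum(p.numel() for p in model.parameters())

print(tuple(logits.shape), n_params)  # -> (4, 10) 267410
```

The count breaks down as 235,500 (first linear layer) + 600 (first BatchNorm) + 30,100 (second linear layer) + 200 (second BatchNorm) + 1,010 (output layer).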

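6. Instantiate the network

# Use the GPU when one is available, otherwise fall back to the CPU
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
# Instantiate the model
model = Net(28 * 28, 300, 100, 10)
# Optional multi-GPU setup (DataParallel splits each batch along dim 0)
# if torch.cuda.device_count() > 1:
#     print("Let's use", torch.cuda.device_count(), "GPUs")
#     # dim = 0 [20, xxx] -> [10, ...], [10, ...] on 2GPUs
#     model = nn.DataParallel(model)
model.to(device)

# Define the loss function and the optimizer
# (lr and momentum are hyperparameters; the full listing sets them to 0.01 and 0.5)
criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=lr, momentum=momentum)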
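The model's last layer outputs raw scores with no softmax because `nn.CrossEntropyLoss` applies log-softmax internally. The sketch below checks this equivalence on random data; the logits and labels are made up for the illustration.

```python
import torch
import torch.nn.functional as F
from torch import nn

torch.manual_seed(0)
logits = torch.randn(5, 10)              # raw scores: 5 samples, 10 classes
labels = torch.tensor([3, 0, 9, 1, 7])   # class indices, not one-hot vectors

# CrossEntropyLoss takes raw logits and integer class labels.
loss_ce = nn.CrossEntropyLoss()(logits, labels)

# Equivalent two-step form: log-softmax followed by negative log-likelihood.
loss_manual = F.nll_loss(F.log_softmax(logits, dim=1), labels)

print(torch.allclose(loss_ce, loss_manual))  # -> True
```

Adding an explicit softmax before this loss would therefore apply the normalization twice.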

7. Train the network

# TensorBoard logging (needs the tensorboard package installed)
from torch.utils.tensorboard import SummaryWriter

# Start training
losses = []
acces = []
eval_losses = []
eval_acces = []
writer = SummaryWriter(log_dir='logs', comment='train-loss')

for epoch in range(num_epoches):
    train_loss = 0
    train_acc = 0
    model.train()
    # Adjust the learning rate on the fly: scale it by 0.9 every 5 epochs (epoch 0 included)
    if epoch % 5 == 0:
        optimizer.param_groups[0]['lr'] *= 0.9
        print(optimizer.param_groups[0]['lr'])
    for img, label in train_loader:
        img = img.to(device)
        label = label.to(device)
        img = img.view(img.size(0), -1)  # flatten each image to a 784-vector
        # Forward pass
        out = model(img)
        loss = criterion(out, label)
        # Backward pass
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        # Accumulate the loss
        train_loss += loss.item()
        # Compute the classification accuracy for this batch
        _, pred = out.max(1)
        num_correct = (pred == label).sum().item()
        acc = num_correct / img.shape[0]
        train_acc += acc
    # Log the mean training loss once per epoch
    writer.add_scalar('Train', train_loss / len(train_loader), epoch)
    losses.append(train_loss / len(train_loader))
    acces.append(train_acc / len(train_loader))

    # Evaluate on the test set
    eval_loss = 0
    eval_acc = 0
    model.eval()  # switch the model to evaluation mode
    with torch.no_grad():  # gradients are not needed for evaluation
        for img, label in test_loader:
            img = img.to(device)
            label = label.to(device)
            img = img.view(img.size(0), -1)
            out = model(img)
            loss = criterion(out, label)
            # Accumulate the loss
            eval_loss += loss.item()
            # Accumulate the accuracy
            _, pred = out.max(1)
            num_correct = (pred == label).sum().item()
            acc = num_correct / img.shape[0]
            eval_acc += acc
    eval_losses.append(eval_loss / len(test_loader))
    eval_acces.append(eval_acc / len(test_loader))
    print('epoch: {}, Train Loss: {:.4f}, Train Acc: {:.4f}, Test Loss: {:.4f}, Test Acc: {:.4f}'
          .format(epoch, train_loss / len(train_loader), train_acc / len(train_loader),
                  eval_loss / len(test_loader), eval_acc / len(test_loader)))

writer.close()
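The manual learning-rate adjustment in the loop is easier to reason about when traced on its own. The sketch below replays only the `epoch % 5 == 0` rule from the walkthrough (factor 0.9, starting value 0.01) without any model or optimizer; `schedule` is an illustrative name.

```python
lr = 0.01          # starting learning rate from the hyperparameters
num_epoches = 20
schedule = []      # learning rate in effect during each epoch

for epoch in range(num_epoches):
    # Same rule as the training loop: the condition is also true at epoch 0,
    # so the very first epoch already runs with a reduced rate.
    if epoch % 5 == 0:
        lr *= 0.9
    schedule.append(lr)

print([round(schedule[e], 6) for e in (0, 5, 10, 15)])
# -> [0.009, 0.0081, 0.00729, 0.006561]
```

PyTorch also provides `torch.optim.lr_scheduler.StepLR(optimizer, step_size, gamma)` for this kind of step decay. It is not a drop-in equivalent of the rule above, since it leaves the initial epochs at the starting rate and applies the first decay only after `step_size` scheduler steps.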

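8. Plot the training loss

plt.title('train loss')
plt.plot(np.arange(len(losses)), losses)
# To overlay the test loss, uncomment the next two lines and drop the single-entry legend
# plt.plot(np.arange(len(eval_losses)), eval_losses)
# plt.legend(['Train Loss', 'Test Loss'], loc='upper right')
plt.legend(['Train Loss'], loc='upper right')
plt.show()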

Full code:

import os
# Work around the duplicate OpenMP runtime error that some setups raise when importing torch and matplotlib together
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

# 1. Import the required modules
import torch
import numpy as np
# Import the built-in MNIST dataset
from torchvision.datasets import mnist
# Import the preprocessing module
import torchvision.transforms as transforms
from torch.utils.data import DataLoader
# Import nn and the optimizers
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
# Visualization
import matplotlib.pyplot as plt

# 2. Define the hyperparameters
train_batch_size = 64
test_batch_size = 128
num_epoches = 20
lr = 0.01
momentum = 0.5

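# 3. Download and preprocess the data
# Define the preprocessing functions and pass them, in order, to Compose
'''
1. transforms.Compose chains several transforms together.
2. Normalize([0.5], [0.5]) normalizes the tensor; the two 0.5 values are the mean and the standard deviation used for the normalization.
   The images are grayscale with a single channel; with several channels, one value per channel is needed, e.g. Normalize([m1, m2, m3], [n1, n2, n3]) for 3 channels.
3. The download argument controls whether the data is downloaded; if ./data already contains MNIST, it can be set to False.
4. DataLoader returns an iterable that yields one batch at a time, which saves memory.
5. torchvision supplies the dataset and transforms; torch.utils.data supplies DataLoader.
'''
transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize([0.5], [0.5])])
train_dataset = mnist.MNIST('./data', train=True, transform=transform, download=True)
test_dataset = mnist.MNIST('./data', train=False, transform=transform)
train_loader = DataLoader(train_dataset, batch_size=train_batch_size, shuffle=True)
test_loader = DataLoader(test_dataset, batch_size=test_batch_size, shuffle=False)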

# 4. Visualize the source data
# enumerate() wraps an iterable (list, string, ...) and yields (index, item) pairs,
# e.g. [(0, 'apple'), (1, 'babab')]
# next() returns the next item from the iterator
examples = enumerate(test_loader)
batch_idx, (example_data, example_target) = next(examples)

fig = plt.figure()
for i in range(6):
    plt.subplot(2, 3, i + 1)
    plt.tight_layout()
    plt.imshow(example_data[i][0], cmap='gray', interpolation='none')
    plt.title("Ground Truth: {}".format(example_target[i]))
    plt.xticks([])
    plt.yticks([])
plt.show()

# 5. Build the model
class Net(nn.Module):
    def __init__(self, in_dim, n_hidden_1, n_hidden_2, out_dim):
        super(Net, self).__init__()
        self.layer1 = nn.Sequential(nn.Linear(in_dim, n_hidden_1), nn.BatchNorm1d(n_hidden_1))
        self.layer2 = nn.Sequential(nn.Linear(n_hidden_1, n_hidden_2), nn.BatchNorm1d(n_hidden_2))
        self.layer3 = nn.Sequential(nn.Linear(n_hidden_2, out_dim))

    def forward(self, x):
        x = F.relu(self.layer1(x))
        # Pass self.training so dropout is switched off by model.eval();
        # F.dropout defaults to training=True and would otherwise stay active at test time
        x = F.dropout(x, training=self.training)
        x = F.relu(self.layer2(x))
        x = self.layer3(x)
        return x

# 6. Instantiate the network; use the GPU if one is available
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
model = Net(28 * 28, 300, 100, 10)
model.to(device)

# 7. Define the loss function and the optimizer
criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=lr, momentum=momentum)

# 8. Train the model
losses = []
acces = []
eval_losses = []
eval_acces = []

for epoch in range(num_epoches):
    train_loss = 0
    train_acc = 0
    model.train()
    # Scale the learning rate by 0.1 every 5 epochs, epoch 0 included
    # (the walkthrough above uses a factor of 0.9 instead)
    if epoch % 5 == 0:
        optimizer.param_groups[0]['lr'] *= 0.1
    for img, label in train_loader:
        img = img.to(device)
        label = label.to(device)
        img = img.view(img.size(0), -1)
        # Forward pass
        out = model(img)
        loss = criterion(out, label)
        # Backward pass
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        # Accumulate loss and accuracy
        train_loss += loss.item()
        _, pred = out.max(1)
        num_correct = (pred == label).sum().item()
        acc = num_correct / img.shape[0]
        train_acc += acc
    losses.append(train_loss / len(train_loader))
    acces.append(train_acc / len(train_loader))

    # Evaluate on the test set
    eval_loss = 0
    eval_acc = 0
    model.eval()
    with torch.no_grad():  # gradients are not needed for evaluation
        for img, label in test_loader:
            img = img.to(device)
            label = label.to(device)
            img = img.view(img.size(0), -1)
            out = model(img)
            loss = criterion(out, label)
            eval_loss += loss.item()
            _, pred = out.max(1)
            num_correct = (pred == label).sum().item()
            acc = num_correct / img.shape[0]
            eval_acc += acc
    eval_losses.append(eval_loss / len(test_loader))
    eval_acces.append(eval_acc / len(test_loader))
    print('epoch:{}, Train loss:{:.4f},Train acc:{:.4f},Test loss:{:.4f}, Test acc:{:.4f}'
          .format(epoch, train_loss / len(train_loader), train_acc / len(train_loader),
                  eval_loss / len(test_loader), eval_acc / len(test_loader)))

# Plot the training loss
plt.title('train loss')
plt.plot(np.arange(len(losses)), losses)
plt.legend(['Train loss'], loc='upper right')
plt.show()
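One detail of the full listing deserves a closer look: it calls `F.dropout` inside `forward`. The functional form is controlled by its own `training` argument, which defaults to `True`, and it has no knowledge of `model.train()` or `model.eval()`. The sketch below shows the two behaviors on a tensor of ones; the tensor size is arbitrary.

```python
import torch
import torch.nn.functional as F

x = torch.ones(1000)

# Default call: training=True, so about half the entries are zeroed (p=0.5)
# and the survivors are scaled by 1 / (1 - p) = 2.
always_on = F.dropout(x)

# With training=False, dropout is the identity.
off = F.dropout(x, training=False)

print(sorted(always_on.unique().tolist()), torch.equal(off, x))
# -> [0.0, 2.0] True
```

A common pattern is to pass `training=self.training` from inside the module, or to register an `nn.Dropout` layer in `__init__`, so that `model.eval()` disables dropout during testing.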
