
The data will inevitably be long-tailed.

For example, if we aim to increase the number of images containing tail-class instances such as “remote controller”, every newly added image will also bring in more head-class instances such as “sofa” and “TV”.

The paradoxical effects of the long tail:

“Bad” bias: it is bad because classification is severely biased towards the data-rich head classes.

“Good” bias: it is good because the long-tailed distribution essentially encodes the natural inter-dependencies of classes; for example, “TV” is indeed a good context for “remote controller”. Any method that disregards this distribution hurts feature representation learning: re-weighting or re-sampling, for instance, inevitably causes under-fitting to the head or over-fitting to the tail.
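To make the re-weighting idea concrete, here is a minimal sketch of generic inverse-frequency class weighting. It is not the method discussed in these notes; the function name `inverse_frequency_weights` and the toy counts (90 / 9 / 1) are assumptions chosen only for illustration.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Return a per-class loss weight proportional to 1 / class count.

    Weights are normalized so that they average to 1 over all samples.
    (Hypothetical helper for illustration only.)
    """
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * cnt) for cls, cnt in counts.items()}


# Toy long-tailed label set (made-up counts): one head class, one tail class.
labels = ["sofa"] * 90 + ["TV"] * 9 + ["remote controller"] * 1
weights = inverse_frequency_weights(labels)

for cls, w in weights.items():
    print(f"{cls}: {w:.2f}")
```

In this toy setting a single “remote controller” sample is weighted 90 times more heavily than a “sofa” sample. That is the mechanism behind the trade-off described above: the one tail example dominates the loss and is easily over-fitted, while each head example contributes very little.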

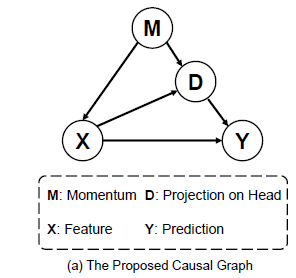

Causal graph

Revisiting the “bad” bias from a causal view:

- the backdoor p
