I have done this several times before, but always on Windows. This time I switched to Linux, and to my surprise it worked on the first try.

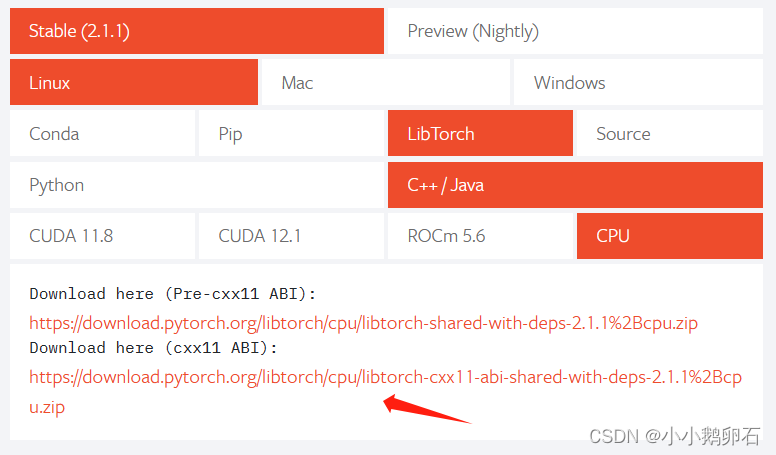

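First, download the LibTorch package from the official website.

At first I did not know how to use it. In short: it is a CMake package.

Next, extract it into your CMake working directory (here `~/cmake/libtorch`). The official tutorial to follow is "Loading a TorchScript Model in C++".

I followed it step by step, and it just worked.

I am posting the code here so I do not lose it.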

Directory structure after the build:

    .
    ├── build
    │   ├── CMakeCache.txt
    │   ├── CMakeFiles
    │   │   ├── 3.22.1
    │   │   │   ├── CMakeCCompiler.cmake
    │   │   │   ├── CMakeCXXCompiler.cmake
    │   │   │   ├── CMakeDetermineCompilerABI_C.bin
    │   │   │   ├── CMakeDetermineCompilerABI_CXX.bin
    │   │   │   ├── CMakeSystem.cmake
    │   │   │   ├── CompilerIdC
    │   │   │   │   ├── a.out
    │   │   │   │   ├── CMakeCCompilerId.c
    │   │   │   │   └── tmp
    │   │   │   └── CompilerIdCXX
    │   │   │       ├── a.out
    │   │   │       ├── CMakeCXXCompilerId.cpp
    │   │   │       └── tmp
    │   │   ├── cmake.check_cache
    │   │   ├── CMakeDirectoryInformation.cmake
    │   │   ├── CMakeOutput.log
    │   │   ├── CMakeTmp
    │   │   ├── hcn.dir
    │   │   │   ├── build.make
    │   │   │   ├── cmake_clean.cmake
    │   │   │   ├── compiler_depend.internal
    │   │   │   ├── compiler_depend.make
    │   │   │   ├── compiler_depend.ts
    │   │   │   ├── DependInfo.cmake
    │   │   │   ├── depend.make
    │   │   │   ├── flags.make
    │   │   │   ├── hcn.cpp.o
    │   │   │   ├── hcn.cpp.o.d
    │   │   │   ├── link.txt
    │   │   │   ├── main.cpp.o
    │   │   │   ├── main.cpp.o.d
    │   │   │   └── progress.make
    │   │   ├── Makefile2
    │   │   ├── Makefile.cmake
    │   │   ├── progress.marks
    │   │   └── TargetDirectories.txt
    │   ├── cmake_install.cmake
    │   ├── compile_commands.json
    │   ├── hcn
    │   └── Makefile
    ├── CMakeLists.txt
    ├── hcn2.pt
    ├── hcn.cpp
    ├── hcn.h
    ├── hcn.pt
    └── main.cpp

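CMakeLists.txt

    # target_link_options() requires CMake 3.13 or newer.
    cmake_minimum_required(VERSION 3.13 FATAL_ERROR)
    project(hcn)

    # Location of the extracted libtorch package. $ENV{HOME} is used because
    # CMake is not guaranteed to expand "~".
    set(CMAKE_PREFIX_PATH "$ENV{HOME}/cmake/libtorch")
    # CMAKE_CXX_STANDARD is the variable CMake reads; a plain CXX_STANDARD
    # variable has no effect.
    set(CMAKE_CXX_STANDARD 17)
    set(CMAKE_CXX_STANDARD_REQUIRED ON)
    set(CMAKE_BUILD_TYPE Debug)

    # include_directories("./")
    # LINK_DIRECTORIES("~/cmake/libtorch")

    find_package(Torch REQUIRED)
    # message(TORCH_LIBRARIES: "${TORCH_LIBRARIES}")

    add_executable(hcn main.cpp hcn.cpp)
    target_link_options(hcn PRIVATE -static-libgcc -static-libstdc++)
    target_link_libraries(hcn "${TORCH_LIBRARIES}")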

hcn.h

    #pragma once

    #include <iostream>
    #include <string>
    #include <vector>
    #include <torch/script.h>

    namespace myHcn {

    class Hcn
    {
    public:
        std::vector<torch::jit::IValue> inputs;  // arguments passed to forward()
        c10::IValue output;                      // raw tuple returned by forward()

        Hcn(const std::string &modelFile);
        ~Hcn() = default;

        torch::Tensor getHc();
        torch::Tensor run(float tfValue);
        int shotOverCallback();

    private:
        torch::jit::script::Module model;
        torch::Tensor hc;  // extra argument passed to the model (hidden state)
        bool setHc();
        bool isRunning = false;  // true once run() has been called in the current shot
    };

    } // namespace myHcn

hcn.cpp

    #include <iostream>
    #include <torch/script.h>
    #include "hcn.h"

    namespace myHcn {

    using namespace std;
    using namespace torch;

    // Load the TorchScript model from disk.
    Hcn::Hcn(const string &modelFile)
    {
        model = jit::load(modelFile);
        isRunning = false;
    }

    // Choose the hidden state for the next forward pass.
    bool Hcn::setHc()
    {
        if (isRunning) {
            // Reuse the state returned by the previous call (second tuple element).
            hc = output.toTuple()->elements()[1].toTensor();
        } else {
            // First call of a shot: start from zeros.
            hc = torch::zeros({4, 1, 1, 96});
        }
        return true;
    }

    Tensor Hcn::getHc()
    {
        return hc;
    }

    // Run one inference step and return the first tuple element.
    Tensor Hcn::run(float tfValue)
    {
        setHc();
        isRunning = true;
        // Tensor input1 = tensor({tfValue});
        // inputs.clear();
        // inputs.push_back(input1);
        // inputs.push_back(hc);
        inputs = {tensor({tfValue}), hc};
        output = model.forward(inputs);
        return output.toTuple()->elements()[0].toTensor();
    }

    // Mark the end of a shot so the next run() resets the hidden state.
    int Hcn::shotOverCallback()
    {
        isRunning = false;
        return 0;
    }

    } // namespace myHcn
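The `isRunning` flag in `Hcn` decides whether a call starts from a zero hidden state or reuses the state returned by the previous call. The libtorch-free sketch below illustrates only that control flow. `StatefulRunner`, `StepResult`, and `accumulateStep` are hypothetical stand-ins made up for illustration; they are not part of libtorch or of the model used here.

```cpp
#include <cstddef>
#include <functional>
#include <utility>
#include <vector>

// Hypothetical stand-in for the model's (output, next hidden state) tuple.
struct StepResult {
    float output;
    std::vector<float> nextState;
};

// Mirrors the control flow of Hcn::run / Hcn::shotOverCallback without libtorch.
class StatefulRunner {
public:
    using StepFn = std::function<StepResult(float, const std::vector<float>&)>;

    StatefulRunner(StepFn step, std::size_t stateSize)
        : step_(std::move(step)), stateSize_(stateSize) {}

    float run(float input) {
        if (!running_) {
            // First call of a shot: start from an all-zero state.
            state_.assign(stateSize_, 0.0f);
            running_ = true;
        }
        StepResult result = step_(input, state_);
        state_ = std::move(result.nextState);  // carry the state into the next call
        return result.output;
    }

    // End of a shot: the next run() starts from zeros again.
    void reset() { running_ = false; }

private:
    StepFn step_;
    std::size_t stateSize_;
    std::vector<float> state_;
    bool running_ = false;
};

// Toy step function (an assumption for illustration): the output is the input
// plus the single state value, and the new state stores that output.
inline StepResult accumulateStep(float input, const std::vector<float>& state) {
    const float out = input + state[0];
    return StepResult{out, std::vector<float>{out}};
}
```

With `StatefulRunner r(accumulateStep, 1);`, calling `r.run(1.0f)` and then `r.run(2.0f)` returns 1 and 3, because the second call sees the state left by the first. After `r.reset()`, `r.run(5.0f)` returns 5, since the state is zeroed again.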

main.cpp

#include <iostream>

#include <chrono>

#include <torch/script.h>

#include "hcn.h"

using namespace std;

using namespace myHcn;

int main(int argc, const char *argv[])

{

string modelFile("../hcn2.pt");

Hcn pt = Hcn(modelFile);

// 接收数据,运行部分

torch::Tensor hcnValue = pt.run(9);

cout << "==================" << endl;

cout << hcnValue << endl;

hcnValue = pt.run(10);

cout << "==================" << endl;

cout << hcnValue << endl;

// 一炮停止标志位

pt.shotOverCallback();

float itValue = 0.1;

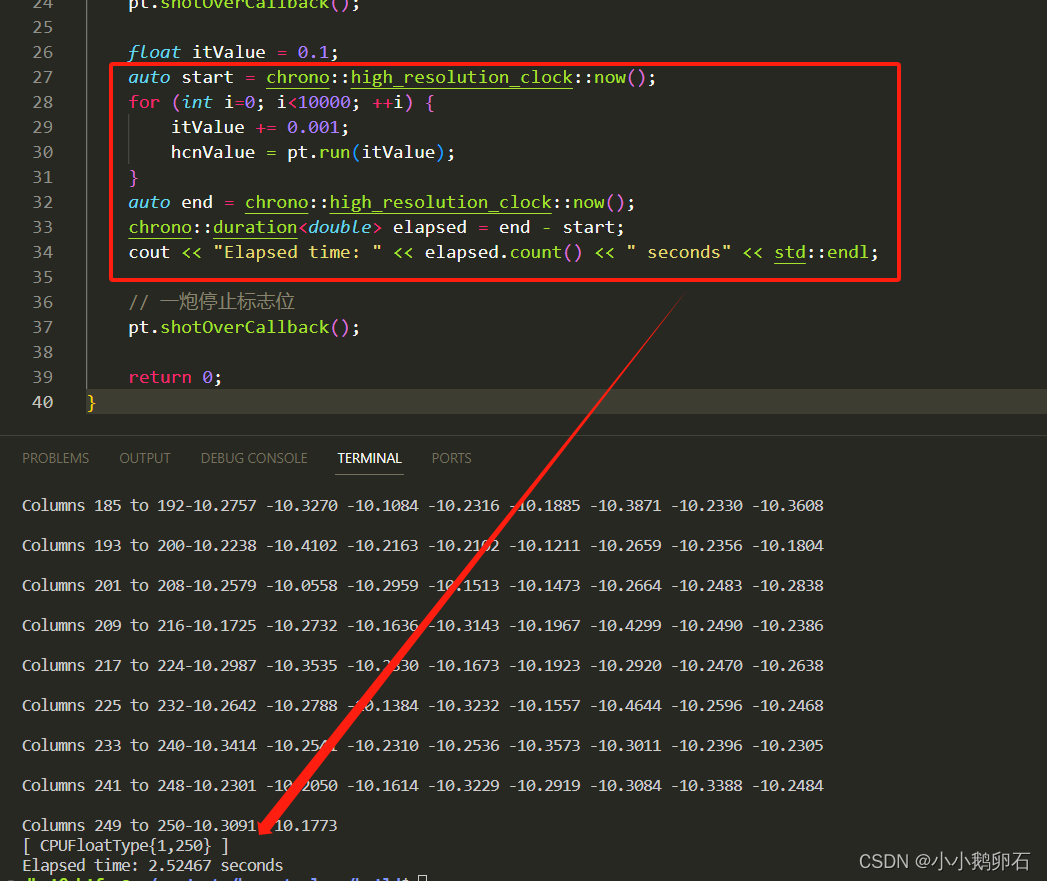

auto start = chrono::high_resolution_clock::now();

for (int i=0; i<10000; ++i) {

itValue += 0.001;

hcnValue = pt.run(itValue);

}

auto end = chrono::high_resolution_clock::now();

chrono::duration<double> elapsed = end - start;

cout << "Elapsed time: " << elapsed.count() << " seconds" << std::endl;

// 一炮停止标志位

pt.shotOverCallback();

return 0;

}
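`main` accepts `argc` and `argv` but always loads `../hcn2.pt`. One possible way to make the model path configurable is sketched below. `modelPathFromArgs` is a hypothetical helper name, and the fallback path is whatever the caller passes in.

```cpp
#include <string>

// Hypothetical helper: returns argv[1] when a non-empty path was passed on the
// command line, otherwise the fallback (for example "../hcn2.pt").
inline std::string modelPathFromArgs(int argc, const char* argv[],
                                     const std::string& fallback) {
    if (argc > 1 && argv[1] != nullptr && argv[1][0] != '\0') {
        return argv[1];
    }
    return fallback;
}
```

In `main` this could replace the hard-coded string, for example `string modelFile = modelPathFromArgs(argc, argv, "../hcn2.pt");`, so that `./hcn ../hcn.pt` loads the other exported model without recompiling.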

10,000 inferences took 2.5 seconds (about 0.25 ms per call), which is much faster than Python. Not bad at all.
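To report the per-call figure directly, the timing loop can be wrapped in a small helper. This is a minimal sketch, not the code that produced the number above: `averageSeconds` is a hypothetical name, and it uses `std::chrono::steady_clock` (a monotonic clock) rather than `high_resolution_clock`, which is my substitution.

```cpp
#include <chrono>

// Hypothetical helper: calls fn(i) for i in [0, iterations) and returns the
// average wall-clock time per call in seconds (0 when iterations <= 0).
template <typename Fn>
double averageSeconds(int iterations, Fn&& fn) {
    if (iterations <= 0) {
        return 0.0;
    }
    const auto start = std::chrono::steady_clock::now();
    for (int i = 0; i < iterations; ++i) {
        fn(i);
    }
    const std::chrono::duration<double> elapsed =
        std::chrono::steady_clock::now() - start;
    return elapsed.count() / iterations;
}
```

A call such as `averageSeconds(10000, [&](int i) { pt.run(0.1f + 0.001f * (i + 1)); })` would time the same loop as `main.cpp`. Note that `CMakeLists.txt` builds in `Debug` mode, so the application code in this measurement is compiled without optimization.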

Next, I plan to try converting the model to ONNX format.
