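Tidying up some earlier work. I am new to Tianchi, and this code comes from the forum baseline; I will optimize on top of it later!

Let me try to standardize the steps for this kind of competition problem:

Regression problem

Step 1: Import packages

A few typical packages, plus PCA and parameter-search tools such as GridSearchCV. It is best to memorize some of these!

That is, the algorithms used for dimensionality reduction, for parameter optimization, and for classification and regression models. I should find time to organize them!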

## Basic tools

import numpy as np

import pandas as pd

import warnings

import matplotlib

import matplotlib.pyplot as plt

import seaborn as sns

from scipy.special import jn

from IPython.display import display, clear_output

import time

warnings.filterwarnings('ignore')

%matplotlib inline

## Model prediction

from sklearn import linear_model

from sklearn import preprocessing

from sklearn.svm import SVR

from sklearn.ensemble import RandomForestRegressor,GradientBoostingRegressor

## Dimensionality reduction

from sklearn.decomposition import PCA,FastICA,FactorAnalysis,SparsePCA

%pip install xgboost

import lightgbm as lgb

import xgboost as xgb

## Parameter search and evaluation

from sklearn.model_selection import GridSearchCV,cross_val_score,StratifiedKFold,train_test_split

from sklearn.metrics import mean_squared_error, mean_absolute_error

Step 2: Read the data

Use pandas for this, no exceptions!

## Read the data with pandas (pandas is a very friendly data-loading library)

Train_data = pd.read_csv('used_car_train_20200313.csv', sep=' ')

TestA_data = pd.read_csv('used_car_testA_20200313.csv', sep=' ')

## Print the shape of each dataset

print('Train data shape:',Train_data.shape)

print('TestA data shape:',TestA_data.shape)

Out:

Honestly, I wonder whether the test set is a bit large: the train-to-test ratio is 3:1. But that is what the competition provides, so never mind.

Step 3: Look at the data

Remember a few typical calls: head(), info(), describe(), columns…

Try each of them to see what the data looks like.
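As a quick illustration of what these calls return, here is a minimal sketch on a tiny made-up DataFrame. The column names and values are my own assumptions for the example, not the competition data.

```python
import pandas as pd

# Hypothetical toy data standing in for the competition file.
toy = pd.DataFrame({
    "power": [60, 0, 163, 193],
    "kilometer": [12.5, 15.0, 12.5, 15.0],
    "notRepairedDamage": ["0.0", "-", "0.0", "1.0"],
})

print(toy.head(2))        # first two rows
toy.info()                # dtype and non-null count of each column
print(list(toy.columns))  # column names

# describe() summarizes numeric columns only by default.
summary = toy.describe()
print(summary)
```

Note that the text column is silently left out of `describe()`, which is one reason to check `info()` as well.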

Train_data.head()  # an essential function

A look at the data:

Train_data.info()

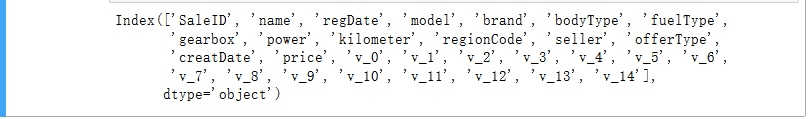

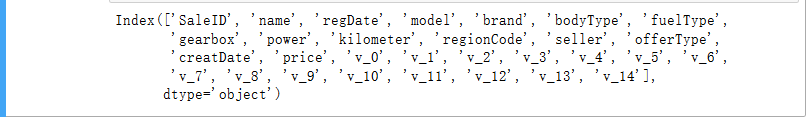

Train_data.columns

TestA_data.info()

Train_data.describe()

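This output is mainly for checking variance. Two examples:

- If a feature's variance is very small (in the extreme case, every value is identical), that feature is of no use for training the model.

- Scale matters too: when features differ greatly in scale, consider normalization or a similar operation, for example when one feature ranges over 0-10 and another over 10000-1000000000.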
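The two checks above can be sketched with scikit-learn. This is only an illustration on a made-up matrix; `VarianceThreshold` and `MinMaxScaler` are my choice of tools here, not something the baseline uses.

```python
import numpy as np
from sklearn.feature_selection import VarianceThreshold
from sklearn.preprocessing import MinMaxScaler

# Hypothetical toy matrix: column 0 is constant, column 1 lies in 0-10,
# column 2 spans several orders of magnitude.
X = np.array([
    [1.0, 0.0, 1e4],
    [1.0, 5.0, 1e6],
    [1.0, 10.0, 1e9],
])

# Drop features whose variance is zero (the default threshold).
selector = VarianceThreshold()
X_kept = selector.fit_transform(X)
print(selector.get_support())  # boolean mask of the columns that were kept

# Rescale the remaining features to [0, 1] so their ranges are comparable.
X_scaled = MinMaxScaler().fit_transform(X_kept)
print(X_scaled.min(axis=0), X_scaled.max(axis=0))
```

Tree-based models such as the ones used later are largely insensitive to feature scale, so the rescaling matters mostly for linear models, SVR, or PCA.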

TestA_data.describe()

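Step 4: Feature selection

This is a craft. On the one hand it depends on the practical meaning of the project; on the other you have to look at what the data under each feature looks like and decide whether to one-hot encode it or drop it. In both deep learning and classical machine learning, feature engineering largely determines how accurate your model is.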

numerical_cols = Train_data.select_dtypes(exclude = 'object').columns

print(numerical_cols)

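For object-type data, the most common case is date/time handling; whether you need it depends on your actual project. There are also many anonymous features here. How should those be handled?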

categorical_cols = Train_data.select_dtypes(include = 'object').columns

print(categorical_cols)

out:

Index(['notRepairedDamage'], dtype='object')
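One possible way to make an object column like this usable, and to handle integer-coded dates, is sketched below. The `'-'` placeholder and the yyyymmdd date encoding are assumptions I made for the toy data; check the real columns before relying on them.

```python
import pandas as pd

# Hypothetical toy frame; the '-' placeholder and yyyymmdd integers are assumptions.
df = pd.DataFrame({
    "notRepairedDamage": ["0.0", "-", "1.0"],
    "regDate": [20040402, 20030301, 20120103],
})

# Turn the text column into floats; anything unparseable (here '-') becomes NaN.
df["notRepairedDamage"] = pd.to_numeric(df["notRepairedDamage"], errors="coerce")

# Parse integer dates; invalid dates become NaT instead of raising an error.
reg = pd.to_datetime(df["regDate"].astype(str), format="%Y%m%d", errors="coerce")
df["reg_year"] = reg.dt.year

print(df)
```

After the conversion the column is numeric, so `select_dtypes(exclude='object')` would pick it up along with the other numerical features.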

## Select the feature columns

feature_cols = [col for col in numerical_cols if col not in ['SaleID','name','regDate','creatDate','price','model','brand','regionCode','seller']]

feature_cols = [col for col in feature_cols if 'Type' not in col]

print(feature_cols)

## Extract the feature columns and the label column to build the training and test samples

X_data = Train_data[feature_cols]

Y_data = Train_data['price']

X_test = TestA_data[feature_cols]

print('X train shape:',X_data.shape)

print('X test shape:',X_test.shape)

The feature selection above was done purely by intuition and can still be optimized.
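One simple, less intuition-driven starting point is to rank features by their correlation with the label. The sketch below uses synthetic data with made-up column names (`v_a`, `v_noise`); it only captures linear relationships, so treat it as a first filter rather than a final answer.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
n = 500

# Hypothetical synthetic data: 'v_a' drives the target, 'v_noise' does not.
toy = pd.DataFrame({
    "v_a": rng.normal(size=n),
    "v_noise": rng.normal(size=n),
})
toy["price"] = 3.0 * toy["v_a"] + rng.normal(scale=0.5, size=n)

# Absolute Pearson correlation of every feature with the label, strongest first.
corr = toy.corr()["price"].drop("price").abs().sort_values(ascending=False)
print(corr)
```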

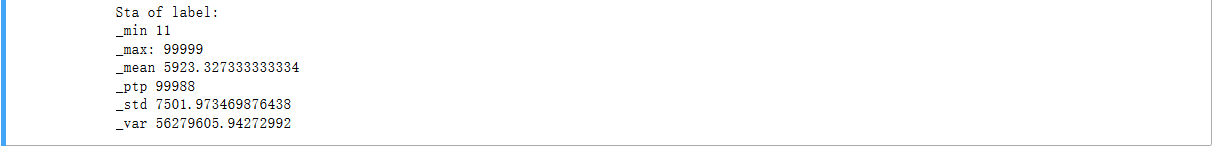

Check the distribution of the label values

def Sta_inf(data):

    print('_min', np.min(data))

    print('_max', np.max(data))

    print('_mean', np.mean(data))

    print('_ptp', np.ptp(data))

    print('_std', np.std(data))

    print('_var', np.var(data))

print('Sta of label:')

Sta_inf(Y_data)

## Plot a histogram of the label to inspect its distribution

plt.hist(Y_data)

plt.show()

plt.close()
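If the histogram turns out to be strongly right-skewed (common for prices, though I am only assuming that here), a log transform of the label is a frequently used remedy. The sketch below is not part of the baseline; it uses synthetic log-normal "prices" to show the effect and the inverse transform needed at prediction time.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical right-skewed "prices" drawn from a log-normal distribution.
price = rng.lognormal(mean=8.0, sigma=1.0, size=10_000)

def skewness(x):
    """Sample skewness: the third standardized moment."""
    x = np.asarray(x, dtype=float)
    return np.mean((x - x.mean()) ** 3) / x.std() ** 3

log_price = np.log1p(price)     # compress the long right tail
restored = np.expm1(log_price)  # inverse transform, needed for predictions

print("skew before:", skewness(price))
print("skew after: ", skewness(log_price))
```

A model trained on `log_price` predicts in log space, so its outputs have to go through `np.expm1` before computing MAE or writing a submission.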

Step 5: Model selection

## xgb-Model

xgr = xgb.XGBRegressor(n_estimators=120, learning_rate=0.1, gamma=0, subsample=0.8,
                       colsample_bytree=0.9, max_depth=7) #,objective ='reg:squarederror'

scores_train = []

scores = []

## 5-fold cross-validation

## Note: StratifiedKFold treats each distinct price as a class; KFold is the usual splitter for regression targets

sk=StratifiedKFold(n_splits=5,shuffle=True,random_state=0)

for train_ind,val_ind in sk.split(X_data,Y_data):

    train_x=X_data.iloc[train_ind].values

    train_y=Y_data.iloc[train_ind]

    val_x=X_data.iloc[val_ind].values

    val_y=Y_data.iloc[val_ind]

    xgr.fit(train_x,train_y)

    pred_train_xgb=xgr.predict(train_x)

    pred_xgb=xgr.predict(val_x)

    score_train = mean_absolute_error(train_y,pred_train_xgb)

    scores_train.append(score_train)

    score = mean_absolute_error(val_y,pred_xgb)

    scores.append(score)

print('Train mae:',np.mean(scores_train))

print('Val mae',np.mean(scores))

out:

Train mae: 621.8123289580285

Val mae 714.7313738286391
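The same train-versus-validation comparison can be written more compactly with `cross_validate`. This is a sketch under assumptions: it uses synthetic data and scikit-learn's `GradientBoostingRegressor` as a stand-in for the XGBoost model, so the numbers it prints are unrelated to the ones above.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import KFold, cross_validate

# Synthetic stand-in for X_data / Y_data.
X, y = make_regression(n_samples=300, n_features=8, noise=10.0, random_state=0)

model = GradientBoostingRegressor(n_estimators=50, random_state=0)
cv = KFold(n_splits=5, shuffle=True, random_state=0)

# scikit-learn scorers follow "higher is better", so MAE comes back negated.
res = cross_validate(model, X, y, cv=cv,
                     scoring="neg_mean_absolute_error",
                     return_train_score=True)

train_mae = -res["train_score"].mean()
val_mae = -res["test_score"].mean()
print("Train mae:", train_mae)
print("Val mae:", val_mae)
```

A gap between the two numbers, like the one in the output above, is the usual sign that the model fits the training folds better than unseen data.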

def build_model_xgb(x_train,y_train):

    model = xgb.XGBRegressor(n_estimators=150, learning_rate=0.1, gamma=0, subsample=0.8,
                             colsample_bytree=0.9, max_depth=7) #, objective ='reg:squarederror'

    model.fit(x_train, y_train)

    return model

def build_model_lgb(x_train,y_train):

    estimator = lgb.LGBMRegressor(num_leaves=127,n_estimators = 150)

    param_grid = {

        'learning_rate': [0.01, 0.05, 0.1, 0.2],

    }

    gbm = GridSearchCV(estimator, param_grid)

    gbm.fit(x_train, y_train)

    return gbm
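With no `scoring` argument, `GridSearchCV` ranks candidates by the estimator's own `score` method, which is R² for regressors, while this competition is judged by MAE. The sketch below shows one way to search on MAE directly and to read back the winning setting. It is an illustration only: synthetic data, and `GradientBoostingRegressor` stands in for the LightGBM model.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import GridSearchCV

# Synthetic stand-in for the training data.
X, y = make_regression(n_samples=200, n_features=6, noise=5.0, random_state=0)

param_grid = {"learning_rate": [0.01, 0.05, 0.1, 0.2]}
search = GridSearchCV(
    GradientBoostingRegressor(n_estimators=30, random_state=0),
    param_grid,
    scoring="neg_mean_absolute_error",  # select by MAE instead of the default R^2
    cv=3,
)
search.fit(X, y)

print(search.best_params_)   # the learning rate that won
print(-search.best_score_)   # its mean cross-validated MAE
```

Because `refit=True` is the default, calling `search.predict(...)` afterwards uses the best estimator retrained on all the data passed to `fit`.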

## Split data with val

x_train,x_val,y_train,y_val = train_test_split(X_data,Y_data,test_size=0.3)

print('Train lgb...')

model_lgb = build_model_lgb(x_train,y_train)

val_lgb = model_lgb.predict(x_val)

MAE_lgb = mean_absolute_error(y_val,val_lgb)

print('MAE of val with lgb:',MAE_lgb)

print('Predict lgb...')

model_lgb_pre = build_model_lgb(X_data,Y_data)

subA_lgb = model_lgb_pre.predict(X_test)

print('Sta of Predict lgb:')

Sta_inf(subA_lgb)

print('Train xgb...')

model_xgb = build_model_xgb(x_train,y_train)

val_xgb = model_xgb.predict(x_val)

MAE_xgb = mean_absolute_error(y_val,val_xgb)

print('MAE of val with xgb:',MAE_xgb)

print('Predict xgb...')

model_xgb_pre = build_model_xgb(X_data,Y_data)

subA_xgb = model_xgb_pre.predict(X_test)

print('Sta of Predict xgb:')

Sta_inf(subA_xgb)

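## Here we use a simple weighted blend of the two models

val_Weighted = (1-MAE_lgb/(MAE_xgb+MAE_lgb))*val_lgb+(1-MAE_xgb/(MAE_xgb+MAE_lgb))*val_xgb

val_Weighted[val_Weighted<0]=10 # some predictions come out negative, but a negative price cannot occur in reality, so we apply a post-hoc correction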

print('MAE of val with Weighted ensemble:',mean_absolute_error(y_val,val_Weighted))

sub_Weighted = (1-MAE_lgb/(MAE_xgb+MAE_lgb))*subA_lgb+(1-MAE_xgb/(MAE_xgb+MAE_lgb))*subA_xgb
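To make the weighting formula concrete, here is a small worked example with made-up MAE values and predictions. The helper name `mae_weights` is hypothetical; it just wraps the same arithmetic as the expression above.

```python
import numpy as np

def mae_weights(mae_a, mae_b):
    """Each model's weight is 1 minus its share of the total MAE."""
    total = mae_a + mae_b
    return 1 - mae_a / total, 1 - mae_b / total

# Hypothetical validation errors for two models: model A is the better one.
w_a, w_b = mae_weights(600.0, 900.0)
print(w_a, w_b)  # the two weights sum to 1, and the lower-MAE model gets more

# Hypothetical predictions, including negative ones.
pred_a = np.array([1000.0, -50.0, 300.0])
pred_b = np.array([1200.0, -20.0, 500.0])

blend = w_a * pred_a + w_b * pred_b
blend[blend < 0] = 10  # same post-hoc correction for negative prices
print(blend)
```

With two models, each weight equals the other model's share of the total MAE, so the model with the smaller error always dominates the blend.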

## Inspect the distribution of the predicted values

plt.hist(sub_Weighted)

plt.show()

plt.close()

sub = pd.DataFrame()

sub['SaleID'] = TestA_data.SaleID

sub['price'] = sub_Weighted

sub.to_csv('./sub_Weighted.csv',index=False)

sub.head()

The final score was a little over 600. There is plenty of room for optimization, which the next post will cover!
