These are my reading notes on Part 2 of OSTEP; the earlier notes are in a previous post.

This part explains how the OS gives each process the illusion of having its own private memory, moving from base and bounds to segmentation and finally to paging.

Memory Virtualization

The Abstraction: Address Space

Address Space: abstraction of physical memory, running program’s view of memory in the system.

Goal

- transparency: the virtualization is invisible to the running program -> the process behaves as if it had its own private physical memory

- efficiency: in terms of both time and space, with features like TLB

- protection: process cannot touch memory outside its address space

Memory API

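- Library calls: malloc(), free()

- Memory that a program forgets to free is reclaimed by the OS when the process exits, so a leak does not outlive the process.

- Tools: valgrind, purify

- System calls:

- brk(addr): change the end of the heap (the program break) to the new address addr

- sbrk(increment): move the end of the heap by increment bytes

- mmap(): allocate anonymous memory, which is not associated with any file but with swap space

- Other library calls:

- calloc(): allocates memory and also zeros it

- realloc(): allocates a larger region, copies the old contents into it, and returns the new pointer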
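The library calls above can be combined as in the following minimal sketch. The helper name `grow_and_sum` is hypothetical and exists only for illustration; it shows `calloc()` zeroing memory, `realloc()` possibly moving the block, and one `free()` per allocation.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical helper: builds the array 0..n-1, grows it to 2n elements,
 * and returns the sum of all elements (or -1 on allocation failure). */
long grow_and_sum(size_t n) {
    assert(n > 0);
    int *a = calloc(n, sizeof *a);               /* zero-initialized */
    if (a == NULL) return -1;
    for (size_t i = 0; i < n; i++) a[i] = (int)i;

    int *bigger = realloc(a, 2 * n * sizeof *a); /* may move the block */
    if (bigger == NULL) {                        /* old block is still valid */
        free(a);
        return -1;
    }
    a = bigger;
    for (size_t i = n; i < 2 * n; i++) a[i] = (int)i; /* new part is uninitialized */

    long sum = 0;
    for (size_t i = 0; i < 2 * n; i++) sum += a[i];
    free(a);                                     /* exactly one free per allocation */
    return sum;
}
```

Assigning the result of `realloc()` to a temporary first avoids losing the original pointer when the call fails.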

Mechanism: Address Translation

Efficiency: hardware support (TLB, page table)

Control: protection

Flexibility: process can use the address space at will

Dynamic Relocation (Base and Bounds)

physical address = virtual address + base

- Make sure virtual address within bound

memory management unit (MMU) helps with address translation

Hardware Support

- privileged mode and user mode

- Set base/ bound register

- base and bounds registers - part of MMU (All translation is done by MMU)

- translate (add base, check within bound)

- raise exception when outside of bound

- Instruction for modifying base and bound register - in kernel mode

OS Support

- Track free physical memory (free list)

- Base/bounds management

- Set base and bound register correctly during context switch (Store it in PCB)

- Exception handling

- Terminate offending process

Problem

- Internal fragmentation: the space between the heap and the stack lies inside the allocated unit but goes unused, since both are small.

- Besides, address space cannot be larger than the physical memory.

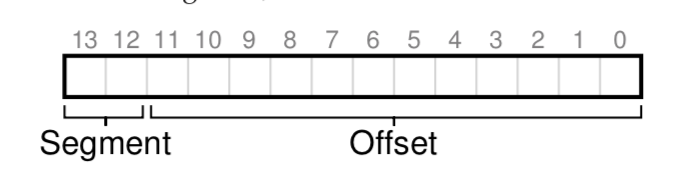

Segmentation: Generalized Base/ Bounds

base + bound pair

top 2 bits: which segment the virtual address refers to
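The split into segment and offset can be sketched as follows. The 14-bit virtual address (2 segment bits, 12 offset bits) and all names are assumptions for illustration, and every segment is assumed to grow upward, so a backward-growing stack is not handled.

```c
#include <assert.h>
#include <stdint.h>

#define SEG_VA_BITS     14u      /* assumed virtual address width */
#define SEG_SHIFT       12u      /* offset bits below the segment bits */
#define SEG_OFFSET_MASK 0x0FFFu

typedef struct {
    uint32_t base;   /* physical start of the segment */
    uint32_t bounds; /* size of the segment in bytes */
} segment_t;

/* Top 2 bits select the segment, the remaining 12 bits are the offset. */
unsigned seg_index(uint16_t va)  { return (va >> SEG_SHIFT) & 0x3u; }
unsigned seg_offset(uint16_t va) { return va & SEG_OFFSET_MASK; }

/* Returns 0 and fills *pa on success, -1 on an out-of-bounds access. */
int seg_translate(const segment_t segs[4], uint16_t va, uint32_t *pa) {
    assert(va < (1u << SEG_VA_BITS));
    const segment_t *s = &segs[seg_index(va)];
    unsigned off = seg_offset(va);
    if (off >= s->bounds) return -1; /* would be a segmentation fault */
    *pa = s->base + off;
    return 0;
}
```

For example, virtual address 4200 has segment bits 01 and offset 104; with that segment based at 34816 it translates to 34920.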

Support for Sharing

Read only segment can be shared by several processes

OS Support

- Set up base and bound register

- Allocate more space when segments grow, update bound register

- Free list?

Problem

- External fragmentation: segments have different sizes, so holes of free space appear between them.

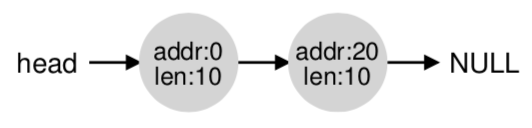

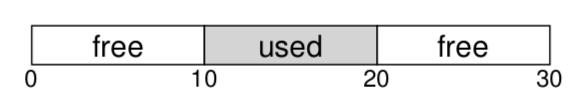

Detour: Free-Space Management

Important for managing memory with variable-sized units.

(heap/ segmentation)

Problem: how to minimize external fragmentation?

Low-level Mechanism

Splitting and Coalescing

- Represented with list

- Split: the node is split into two and one is returned

- Coalescing: when a chunk is freed, check whether its left and right neighbors are free and, if so, merge them into one larger chunk

Tracking the size of Allocated Region

- header block for tracking the size

- Magic number is for sanity check

- When user asks for size n, we have to provide n+sizeof(header)
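A minimal sketch of the header idea, layered on top of `malloc()` purely for illustration (the names `hdr_alloc`, `hdr_size`, `hdr_free` and the magic value are hypothetical; a real allocator would carve the chunk out of its own free list):

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

#define HDR_MAGIC 1234567 /* arbitrary value, used only as a sanity check */

typedef struct {
    size_t size; /* size of the user region in bytes */
    int magic;   /* must equal HDR_MAGIC for a live allocation */
} header_t;

/* Allocates n user bytes plus room for the header in front of them. */
void *hdr_alloc(size_t n) {
    header_t *h = malloc(sizeof *h + n); /* n + sizeof(header) */
    if (h == NULL) return NULL;
    h->size = n;
    h->magic = HDR_MAGIC;
    return h + 1; /* the user pointer starts right after the header */
}

/* Recovers the size from the header that sits just before ptr. */
size_t hdr_size(void *ptr) {
    header_t *h = (header_t *)ptr - 1;
    assert(h->magic == HDR_MAGIC); /* sanity check */
    return h->size;
}

void hdr_free(void *ptr) {
    if (ptr == NULL) return;
    header_t *h = (header_t *)ptr - 1;
    assert(h->magic == HDR_MAGIC);
    h->magic = 0; /* makes a later double free fail the check */
    free(h);
}
```

This is why `free()` needs no size argument: the size travels with the allocation.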

Embedding a Free List

Growing the Heap

Calling sbrk() might be needed to grow the heap

Basic Strategies

Best Fit

Smallest chunk that is larger than the required size

- +Reduce wasted space

- -performance penalty for exhaustive search

Worst Fit

Always divide the largest chunk

- +leave several big chunks

- -high overhead

- -research shows the performance is bad

First Fit

Return the first fitted one

- +Speed (no need to search exhaustively)

- Order is important!

- address-based ordering

- +simple coalescing

- +reduced fragmentation

Next Fit

Record the last returned pointer, start search from the next chunk

- +spreads the searches for free space throughout the list more uniformly
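The difference between the strategies can be sketched over a plain array of free-chunk sizes. This is a simplification: the array stands in for the free list, the function names are hypothetical, and a chunk fits when its size is at least the request.

```c
#include <assert.h>
#include <stddef.h>

/* Each function returns the index of the chosen chunk, or -1 if none fits. */

int first_fit(const size_t *sizes, int n, size_t req) {
    for (int i = 0; i < n; i++)
        if (sizes[i] >= req) return i; /* stop at the first chunk that fits */
    return -1;
}

int best_fit(const size_t *sizes, int n, size_t req) {
    int best = -1;
    for (int i = 0; i < n; i++) /* exhaustive search for the smallest fit */
        if (sizes[i] >= req && (best < 0 || sizes[i] < sizes[best]))
            best = i;
    return best;
}

int worst_fit(const size_t *sizes, int n, size_t req) {
    int worst = -1;
    for (int i = 0; i < n; i++) /* exhaustive search for the largest chunk */
        if (sizes[i] >= req && (worst < 0 || sizes[i] > sizes[worst]))
            worst = i;
    return worst;
}
```

With free chunks of sizes 10, 30, 20 and a request of 15, first fit and worst fit both pick the 30-byte chunk, while best fit picks the 20-byte chunk.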

Other Approaches

Segregated Lists

- Assumption: the application makes many requests of one popular size

Method:

- A chunk of memory is dedicated to requests of that particular size.

- All other requests are served from a separate, general chunk of memory.

Buddy Allocation

Makes coalescing simple!

When a chunk is freed, check whether its buddy is free; if so, coalesce the two.

Beauty: the addresses of a block and its buddy differ by exactly 1 bit
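The one-bit difference means the buddy can be found with a single XOR. A minimal sketch, assuming block offsets are measured from the start of the heap and block sizes are powers of two (the function name is hypothetical):

```c
#include <assert.h>
#include <stdint.h>

/* offset: position of the block relative to the heap start (a multiple of size)
 * size:   size of the block, a power of two
 * Flipping the bit that corresponds to size yields the buddy's offset. */
uint32_t buddy_of(uint32_t offset, uint32_t size) {
    assert(size != 0 && (size & (size - 1)) == 0); /* power of two */
    assert(offset % size == 0);                    /* block is size-aligned */
    return offset ^ size;
}
```

For 8-byte blocks, the blocks at offsets 0 and 8 are buddies, as are those at 16 and 24.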

Problem

- scaling: more complex data structure (balanced binary tree, splay tree, partially-ordered tree)

- multithread: allocator should be thread-safe

Paging

Divide memory into fixed-sized unit (page)

Advantage

- flexibility: no need to consider how the process uses the pages (e.g., in which direction the heap and stack grow)

- simplicity: free list for handling free page

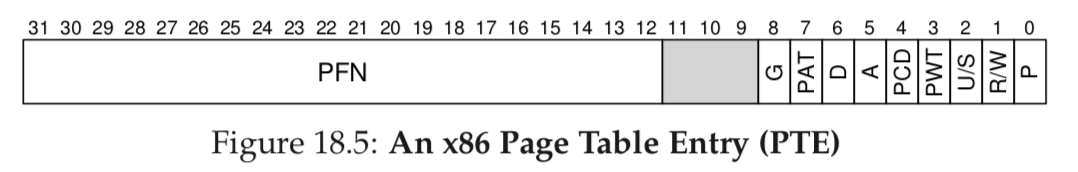

Page Table

Size

- We need 1 page table per process

- On a 32-bit machine with 4 KB pages, 20 bits go to the VPN; with 4-byte PTEs the page table takes 2^20 * 4 B = 4 MB

- Very large! -> stored in memory (actually in OS virtual memory, so it can be swapped to disk)

What stored?

- valid bit: whether the translation is valid. e.g., the space between stack and heap is invalid.

- pages marked invalid need no physical frames, which avoids the internal fragmentation of base and bounds

- protection bit: readable/ writable/ executable

- present bit: in physical memory or on disk

- allow OS to hold memory larger than physical memory

- dirty bit

- referenced bit

- for replacement

Problem

- Slow!

- Suppose a page-table base register holds the location of the page table

- Every memory access then needs one extra memory reference to fetch the PTE
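The size calculation and the lookup through a linear page table can be sketched as follows. The PTE layout is a simplification holding only a valid bit and a PFN, 4 KB pages are assumed, and all names are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define PG_SHIFT       12u                     /* assumed 4 KB pages */
#define PG_OFFSET_MASK ((1u << PG_SHIFT) - 1u)

typedef struct {
    bool valid;
    uint32_t pfn;
} pte_t;

/* Bytes needed by a linear page table: one PTE per virtual page. */
uint64_t linear_pt_bytes(unsigned va_bits, unsigned page_shift, unsigned pte_bytes) {
    assert(va_bits > page_shift && va_bits <= 63);
    return ((uint64_t)1 << (va_bits - page_shift)) * pte_bytes;
}

/* The PTE load below is the extra memory reference paid on every access. */
bool pt_translate(const pte_t *pt, size_t n_entries, uint32_t va, uint32_t *pa) {
    uint32_t vpn = va >> PG_SHIFT;
    if (vpn >= n_entries || !pt[vpn].valid) return false;
    *pa = (pt[vpn].pfn << PG_SHIFT) | (va & PG_OFFSET_MASK);
    return true;
}
```

`linear_pt_bytes(32, 12, 4)` reproduces the 4 MB figure from the size discussion above.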

Faster Translations (TLBs, translation-lookaside buffer)

address-translation cache in MMU

Put PTE(page table entry) inside TLB for referenced VPN.

TLB Miss?

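- hardware-managed

- The hardware finds the PTE through the page-table base register

- software-managed

- The hardware raises an exception; the OS trap handler brings the PTE into the TLB and the instruction is retried

- Be careful! The miss handler itself must not cause an endless chain of TLB misses: keep the handler in unmapped physical memory, or reserve permanently valid TLB entries for it

- +simplicity +flexibility

- The TLB is modified with privileged instructions!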

Aside: CISC vs RISC

CISC: high-level instructions, compact code

RISC: compilers only need a few simple primitives -> instructions are simple, uniform, and fast

What stored?

valid|VPN(tag) | PFN | other bits(similar to what’s in PTE)

- valid bit indicate whether TLB entry is valid

TLB issue: Context Switch

After a context switch, the TLB entries of the previous process are not valid for the new one

Solution:

- flush the TLB (set all valid bits to 0, performed either by hardware or by the OS)

- share the TLB across processes (no flush on context switch, faster)

- use an ASID (address space identifier, usually 8 bits, fewer than the 32 bits of a PID) to check whether a TLB entry belongs to the current process

- It is possible for 2 processes to share 1 PFN: two entries with different ASIDs then point to the same physical page
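Both solutions can be sketched with a tiny software model of the TLB. The 4-entry size, the field layout, and the names are assumptions for illustration; real TLBs search all entries in parallel in hardware.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define TLB_ENTRIES 4 /* assumed size, real TLBs are larger */

typedef struct {
    bool valid;
    uint8_t asid; /* address space identifier of the owning process */
    uint32_t vpn;
    uint32_t pfn;
} tlb_entry_t;

typedef struct {
    tlb_entry_t e[TLB_ENTRIES];
} tlb_t;

/* Hit only if the entry is valid AND belongs to the current address space. */
bool tlb_lookup(const tlb_t *tlb, uint8_t asid, uint32_t vpn, uint32_t *pfn) {
    for (int i = 0; i < TLB_ENTRIES; i++) {
        const tlb_entry_t *t = &tlb->e[i];
        if (t->valid && t->asid == asid && t->vpn == vpn) {
            *pfn = t->pfn;
            return true;
        }
    }
    return false; /* TLB miss */
}

/* The simple alternative: invalidate everything on a context switch. */
void tlb_flush(tlb_t *tlb) {
    for (int i = 0; i < TLB_ENTRIES; i++) tlb->e[i].valid = false;
}
```

With the ASID in the tag, two processes can both have VPN 10 cached, mapping to different PFNs, without a flush in between.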

Paging: Smaller Tables

How to make the page table smaller?

Bigger Pages

Making the pages larger makes the page table smaller.

-internal fragmentation

+increases TLB efficiency

Multi-level Page Tables

page directory: one entry (PDE) per page of the page table

PDE: valid bit + PFN

valid bit = 0 means that page of the page table holds no valid PTEs and is not allocated at all.

+benefit sparse address space

+page directory also fits in one page

-extra memory reference to get page directory on TLB miss
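A two-level lookup can be sketched as follows, assuming a 32-bit address split into 10 directory bits, 10 table bits, and 12 offset bits. In this simulation a PDE is modeled as a C pointer (NULL standing for valid bit = 0) rather than a PFN, and all names are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define ML_PAGE_SHIFT 12u /* assumed 4 KB pages */
#define ML_INDEX_BITS 10u /* assumed bits per level */
#define ML_ENTRIES    (1u << ML_INDEX_BITS)

typedef struct { bool valid; uint32_t pfn; } ml_pte_t;

/* One page of the page table. */
typedef struct { ml_pte_t pte[ML_ENTRIES]; } ml_page_table_t;

/* Page directory: NULL models a PDE whose valid bit is 0. */
typedef struct { ml_page_table_t *tables[ML_ENTRIES]; } ml_page_dir_t;

bool ml_translate(const ml_page_dir_t *pd, uint32_t va, uint32_t *pa) {
    uint32_t pd_index = va >> (ML_PAGE_SHIFT + ML_INDEX_BITS);
    uint32_t pt_index = (va >> ML_PAGE_SHIFT) & (ML_ENTRIES - 1u);
    uint32_t offset   = va & ((1u << ML_PAGE_SHIFT) - 1u);

    const ml_page_table_t *pt = pd->tables[pd_index]; /* first reference */
    if (pt == NULL) return false;         /* this page of the table was never allocated */
    const ml_pte_t *pte = &pt->pte[pt_index];         /* second reference */
    if (!pte->valid) return false;
    *pa = (pte->pfn << ML_PAGE_SHIFT) | offset;
    return true;
}
```

Only the pages of the page table that hold valid PTEs need to exist, which is where the saving for sparse address spaces comes from.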

Hashing: Inverted Page Tables

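An inverted page table keeps one entry per physical page, recording which process and VPN use it; a hash table speeds up the lookup from VPN to PFN.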

Physical Memory

What if physical memory is not large enough?

Mechanism: Swap Space

Reserved space on the disk for moving pages back and forth

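- present bit: tracks whether a page is in physical memory

- page not present -> page fault

- The disk location of the page can be stored in the PTE

- Page faults are usually handled by the OS rather than by hardware, for simplicity (the handler's overhead does not matter because the disk access is far slower)

- Full control flow: on a TLB miss the PTE is checked. Invalid -> segmentation fault; valid and present -> install the translation in the TLB and retry; valid but not present -> page fault, the OS reads the page from disk, updates the PTE and retries the instruction.

- When does page replacement happen?

- When fewer than low watermark (LW) pages are available in physical memory

- Evict until more than high watermark (HW) pages are available

- Many pages are written to disk at once, which is more efficient!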
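The checks made on a TLB miss in this section can be sketched as a small decision function. The PTE fields, the enum, and the function names are hypothetical; the disk read is only simulated by filling in a frame number.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

typedef struct {
    bool valid;    /* page is part of the address space */
    bool writable; /* protection bit (only write permission is modeled) */
    bool present;  /* page is in physical memory */
    uint32_t pfn;  /* frame number, meaningful only when present */
} vm_pte_t;

typedef enum {
    ACCESS_OK,         /* translation can be installed in the TLB, then retry */
    ACCESS_SEGFAULT,   /* invalid page */
    ACCESS_PROT_FAULT, /* protection bits forbid the access */
    ACCESS_PAGE_FAULT  /* valid but swapped out: the OS must fetch it */
} access_t;

/* Order of checks: valid, then protection, then present. */
access_t vm_check(const vm_pte_t *pte, bool is_write) {
    if (!pte->valid) return ACCESS_SEGFAULT;
    if (is_write && !pte->writable) return ACCESS_PROT_FAULT;
    if (!pte->present) return ACCESS_PAGE_FAULT;
    return ACCESS_OK;
}

/* OS side: pretend the page was read from swap into frame free_pfn. */
void vm_handle_page_fault(vm_pte_t *pte, uint32_t free_pfn) {
    assert(pte->valid && !pte->present);
    pte->pfn = free_pfn;
    pte->present = true; /* the faulting instruction is retried afterwards */
}
```

After `vm_handle_page_fault()` runs, repeating the same access goes through `vm_check()` again and now succeeds.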

Policy

Decides which page to evict when physical memory is full.

Metrics

Average memory access Time (AMAT)

$$AMAT = T_M + (P_{Miss} \cdot T_D)$$
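A worked example, where $T_M$ is the cost of a memory access, $T_D$ the cost of a disk access, and $P_{Miss}$ the probability of a miss. The concrete values below ($T_M = 100\,\text{ns}$, $T_D = 10\,\text{ms}$) are illustrative assumptions, not measurements.

$$P_{Miss} = 0.1: \quad AMAT = 100\,\text{ns} + 0.1 \cdot 10\,\text{ms} = 100\,\text{ns} + 1\,\text{ms} = 1.0001\,\text{ms}$$

$$P_{Miss} = 0.001: \quad AMAT = 100\,\text{ns} + 0.001 \cdot 10\,\text{ms} = 100\,\text{ns} + 10\,\mu\text{s} = 10.1\,\mu\text{s}$$

Because the disk is so much slower than memory, even a small miss rate dominates the average access time.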

The Optimal Replacement Policy

Replace the page that will be accessed furthest in the future

- Impossible to implement, since the future is unknown; it serves as a baseline for other policies

Three types of cache miss:

Compulsory, Capacity, Conflict

FIFO

+simple to implement

-cannot determine the importance of a page

Random

Evict a random page; how well it does depends on luck!

+simple

LRU & LFU(Least frequently used)

swapping out the least recently used or least frequently used

-perfect LRU is very expensive (every eviction has to go through all pages in physical memory to find the least recently used one)
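FIFO and LRU can be compared with a small miss-counting simulator. This is a sketch with hypothetical names that uses a linear scan over at most 16 frames; the scan is exactly the cost that makes perfect LRU expensive at scale.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_FRAMES 16

typedef enum { POLICY_FIFO, POLICY_LRU } policy_t;

/* Counts the misses of a page reference trace with `frames` physical frames. */
int count_misses(const int *trace, size_t len, size_t frames, policy_t policy) {
    assert(frames > 0 && frames <= MAX_FRAMES);
    int page[MAX_FRAMES];
    size_t stamp[MAX_FRAMES]; /* FIFO: load time, LRU: time of last use */
    size_t used = 0;
    int misses = 0;

    for (size_t t = 0; t < len; t++) {
        size_t i;
        for (i = 0; i < used; i++)
            if (page[i] == trace[t]) break;
        if (i < used) {                           /* hit */
            if (policy == POLICY_LRU) stamp[i] = t; /* only LRU tracks use */
            continue;
        }
        misses++;
        if (used < frames) {
            i = used++;                           /* a free frame is left */
        } else {
            i = 0;                                /* evict the smallest stamp */
            for (size_t j = 1; j < used; j++)
                if (stamp[j] < stamp[i]) i = j;
        }
        page[i] = trace[t];
        stamp[i] = t;
    }
    return misses;
}
```

On the trace 0, 1, 2, 0, 1, 3, 0, 3, 1, 2, 1 with 3 frames, FIFO takes 7 misses and LRU takes 5.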

Approximate LRU

Clock algorithm

A ring of pages with one reference bit each. When an eviction is needed, the clock hand walks the ring: a page whose reference bit is 1 has the bit cleared and is skipped; the first page whose reference bit is 0 is evicted.
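A minimal sketch of the clock walk, assuming the reference bits live in a plain array that the hardware would set on each access (the struct and function names are hypothetical):

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

typedef struct {
    bool *ref;   /* one reference bit per frame, set on access */
    size_t n;    /* number of frames in the ring */
    size_t hand; /* current position of the clock hand */
} clock_state_t;

/* Walks the ring until a frame with reference bit 0 is found.
 * Terminates within two rounds, since every visited bit is cleared. */
size_t clock_pick_victim(clock_state_t *c) {
    assert(c != NULL && c->n > 0);
    for (;;) {
        if (c->ref[c->hand]) {
            c->ref[c->hand] = false;        /* recently used: second chance */
            c->hand = (c->hand + 1) % c->n;
        } else {
            size_t victim = c->hand;        /* not used since the last pass */
            c->hand = (c->hand + 1) % c->n;
            return victim;
        }
    }
}
```

Starting at frame 0 with reference bits 1, 1, 0, 1, the hand clears frames 0 and 1 and evicts frame 2.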

Considering Dirty Bit for Replacement Policy

Intuition: Prefer to evict clean page! Otherwise a write to disk is necessary.

Modified clock algorithm

First try to find a clean page to evict.

Question

Q1. When to bring page into physical memory?

A: demand paging + prefetching

Q2. What if the memory is too full?

A: Some processes are chosen to be suspended or killed so that the working sets of the rest fit in memory.

Put everything together: Complete Virtual Memory Systems

VAX/ VMS Virtual Memory

VMS is the OS for the VAX architecture from Digital Equipment Corporation (DEC)

- Page size: 512 B

- Segmented user address space

- Saves space for the page table

- Kernel virtual address space

- One more page table lookup in kernel address space

- Process page tables can be swapped to disk

- More classic VM!

A Real Address Space

- page 0 is set to be invalid

- kernel virtual address space in process virtual address space

- When context switch, the PTBR for P0 and P1 points to that of new process, however, PTBR for S stays the same (same kernel).

- +kernel memory can be swapped to disk

- +moving data from user address space will be simple (in same address space)

- Need to dereference a pointer in user address space

Page Replacement

valid bit, protection bit, dirty bit, but no reference bit

segmented FIFO replacement policy: each process has a fixed maximum number of pages it can keep in memory, its resident set size (RSS).

second-chance lists: If the page is evicted from RSS, it will be put into the second-chance lists

- dirty and clean pages are in separate lists

- also in FIFO order, and has chance to be reclaimed

clustering: dirty pages are grouped and written to disk together, which makes swapping more efficient

Other Tricks

- demand zeroing: defer zeroing a page until it is first read or written

- When a page is added to the address space, its entry is only marked inaccessible; only when the page is actually used is a free page taken and zeroed

- copy-on-write: instead of copying a page for another process, map the same page into both address spaces and mark it read-only

- When a process writes to that page, it traps and gets its own private copy

The Linux Virtual Memory System

Focus on Linux on x86

The Linux Address Space

- Kernel address space

- Two types: logical and virtual

- Kernel logical address

- Allocated with kmalloc

- Cannot be swapped to disk

- Mapped directly onto physical memory (0xc0000000 -> 0x00000000), so it suits operations that need contiguous physical memory, such as direct memory access (DMA)

- Kernel virtual address

- Allocated with vmalloc

- Lets the kernel address more than 1 GB of memory (some can be swapped to disk)
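The direct mapping of kernel logical addresses amounts to a constant offset. A minimal sketch for the 32-bit layout in these notes (the macro and function names are hypothetical, not the kernel's own API):

```c
#include <assert.h>
#include <stdint.h>

/* From the notes: kernel logical 0xc0000000 maps to physical 0x00000000. */
#define KERNEL_LOGICAL_BASE 0xC0000000u

/* Contiguous logical addresses therefore stay contiguous in physical memory. */
uint32_t logical_to_physical(uint32_t kva) {
    assert(kva >= KERNEL_LOGICAL_BASE);
    return kva - KERNEL_LOGICAL_BASE;
}
```

Kernel virtual addresses have no such fixed offset, which is why memory obtained through them is usually not physically contiguous.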

Page Table Structure

for x86: hardware-managed, multi-level page table, one page table per process

- 32-bit addresses cover only 4 GB; as memory sizes increased this was not enough, so 64-bit addressing is needed

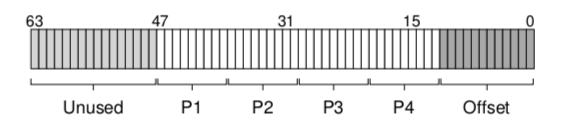

4 level page table, 4KB per page

- Large Page Support: process can choose to use huge pages(2MB or 1GB)

- +better TLB efficiency

- -internal fragmentation

The Page Cache

Keeps recently used pages cached in otherwise unused memory.

- sources:

- memory-mapped files

- Anonymous memory (process heap/stack)

- Keeps track of dirty page in cache

- Write back to swap space or file

- 2Q replacement policy

- One active list and another inactive list, the page is put in inactive list first, and sent to the active queue when re-referenced

- bottom of the active list will be sent to the inactive queue

Security Issue

Buffer Overflow

Insert code into user address space with buffer overflow.

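```c
#include <string.h>

int some_function(char *input) {
    char dest_buffer[100];
    strcpy(dest_buffer, input); // oops, unbounded copy! overflows if input holds 100+ chars
    return 0;                   // added so the int function returns a value
}
```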

Solution: mark the stack as non-executable (NX bit), so code injected there cannot run
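Independently of that hardware defense, the unbounded copy itself can be avoided. The following is a sketch of a bounded variant; the helper name `copy_bounded` is hypothetical, and it relies on the standard `snprintf()`, which never writes more than the given size and always terminates the string.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Copies input into dest, writing at most dest_size bytes including the
 * terminating NUL. Returns 0 if the input fit, -1 if it was truncated. */
int copy_bounded(char *dest, size_t dest_size, const char *input) {
    assert(dest != NULL && input != NULL && dest_size > 0);
    int n = snprintf(dest, dest_size, "%s", input); /* n = length wanted */
    return (n >= 0 && (size_t)n < dest_size) ? 0 : -1;
}
```

The caller can then reject or handle oversized input instead of letting it run past the end of the buffer.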

Return-Oriented Programming

Overwrite the return address of a function so that it points into existing code instead of injected code.

Solution: address space layout randomization (ASLR) - randomize the placement of the stack, heap and code in the address space.

Meltdown and Spectre

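CPUs execute code speculatively; Meltdown and Spectre exploit the traces that speculative execution leaves behind (e.g., in caches) to leak protected memory.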
