Linear Regression

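My understanding: the data are assumed to be distributed along a line y = wx + b, and the loss function is the squared error (wx + b - y)^2, averaged over all data points.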
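Written out, the averaged squared-error loss looks as follows. This is a restatement of what the code in this post computes; the symbols N (number of points) and the indexed pairs (x_i, y_i) are notation introduced here for illustration.

```latex
\mathcal{L}(w, b) = \frac{1}{N} \sum_{i=1}^{N} \bigl( y_i - (w x_i + b) \bigr)^2
```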

The dataset used, data.csv (only part of it is shown):

import numpy as np  # import NumPy first; the code below refers to it as np

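We start by reading the values from the table. The values are passed in as points, and x and y are two of its columns. We accumulate the squared errors in totalError and return its average over all points.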

def compute_error_for_line_given_points(b, w, points):
    totalError = 0
    for i in range(0, len(points)):  # loop over all points
        x = points[i, 0]
        y = points[i, 1]
        # squared difference between the true y and the prediction w * x + b
        totalError += (y - (w * x + b)) ** 2
    return totalError / float(len(points))
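The same average can also be computed without an explicit loop. The following is a minimal sketch, assuming points is an (N, 2) NumPy array as in the function above; the name mse_vectorized and the toy data are hypothetical and chosen only for illustration.

```python
import numpy as np


def mse_vectorized(b, w, points):
    """Mean squared error of the line y = w * x + b over an (N, 2) array."""
    x = points[:, 0]
    y = points[:, 1]
    residuals = y - (w * x + b)
    return float(np.mean(residuals ** 2))


# Hypothetical toy data lying exactly on y = 2x + 1 (not the post's data.csv)
toy_points = np.array([[0.0, 1.0], [1.0, 3.0], [2.0, 5.0]])

perfect_fit_error = mse_vectorized(1.0, 2.0, toy_points)  # line passes through every point
zero_guess_error = mse_vectorized(0.0, 0.0, toy_points)   # all-zero initial guess
print(perfect_fit_error, zero_guess_error)
```

On data that lies exactly on the line, the error at the true parameters is zero, which makes this a convenient quick check.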

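Next we compute the gradients. The function takes the current b, the current w, the same points array, and the learning rate learningRate used for the update. We first initialize the gradients of b and w to zero and let N be the total number of points. We then loop over all points, read x and y from the two columns, and accumulate each point's contribution to the gradients of b and w according to the formula. Finally we update the parameters (not the gradients themselves): each new value is the previous value minus the learning rate times the gradient.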
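For reference, the gradients of the mean squared error with respect to b and w, and the resulting update rule, can be written as below. The symbol \eta stands for the learning rate and \mathcal{L} for the loss; both are notation introduced here. Note that the gradient with respect to w carries an extra factor x_i compared with the gradient with respect to b.

```latex
\mathcal{L}(w, b) = \frac{1}{N} \sum_{i=1}^{N} \bigl( y_i - (w x_i + b) \bigr)^2

\frac{\partial \mathcal{L}}{\partial b} = -\frac{2}{N} \sum_{i=1}^{N} \bigl( y_i - (w x_i + b) \bigr)

\frac{\partial \mathcal{L}}{\partial w} = -\frac{2}{N} \sum_{i=1}^{N} x_i \bigl( y_i - (w x_i + b) \bigr)

b \leftarrow b - \eta \, \frac{\partial \mathcal{L}}{\partial b}, \qquad
w \leftarrow w - \eta \, \frac{\partial \mathcal{L}}{\partial w}
```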

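def step_gradient(b_current, w_current, points, learningRate):
    b_gradient = 0
    w_gradient = 0
    N = float(len(points))  # total number of points
    for i in range(0, len(points)):  # loop over all points
        x = points[i, 0]
        y = points[i, 1]  # read the x and y coordinates
        # dLoss/db = -(2/N) * sum(y - (w * x + b))
        b_gradient += -(2 / N) * (y - ((w_current * x) + b_current))
        # dLoss/dw = -(2/N) * sum(x * (y - (w * x + b))); note the extra factor x
        w_gradient += -(2 / N) * x * (y - ((w_current * x) + b_current))
    # move each parameter against its gradient, scaled by the learning rate
    new_b = b_current - (learningRate * b_gradient)
    new_w = w_current - (learningRate * w_gradient)
    return [new_b, new_w]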
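A single update step can also be expressed with NumPy array operations. This is a sketch under the assumption that points is an (N, 2) array; step_gradient_vectorized and the toy data are hypothetical names and values, not part of the original code.

```python
import numpy as np


def step_gradient_vectorized(b_current, w_current, points, learning_rate):
    """One gradient-descent step on the mean squared error of y = w * x + b."""
    x = points[:, 0]
    y = points[:, 1]
    residuals = y - (w_current * x + b_current)
    b_gradient = -2.0 * np.mean(residuals)       # dLoss/db
    w_gradient = -2.0 * np.mean(x * residuals)   # dLoss/dw, with the factor x
    new_b = b_current - learning_rate * b_gradient
    new_w = w_current - learning_rate * w_gradient
    return new_b, new_w


# Hypothetical toy data lying exactly on y = 2x + 1
toy_points = np.array([[0.0, 1.0], [1.0, 3.0], [2.0, 5.0]])

# One step from the all-zero guess moves both parameters toward the true line
b_after, w_after = step_gradient_vectorized(0.0, 0.0, toy_points, 0.1)

# At the true parameters both gradients vanish, so the step changes nothing
b_fixed, w_fixed = step_gradient_vectorized(1.0, 2.0, toy_points, 0.1)
print(b_after, w_after, b_fixed, w_fixed)
```

Checking that the true parameters are a fixed point of the update is a cheap way to catch a gradient mistake such as a missing factor x.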

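Iterating step by step: each small step computes the gradients and updates the intercept and the slope.

Parameters: the data points, the starting value of b, the starting value of m (the slope, called w above), the learning rate, and the number of iterations.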

def gradient_descent_runner(points, starting_b, starting_m, learning_rate, num_iterations):
    b = starting_b
    m = starting_m
    for i in range(num_iterations):
        # perform one gradient step and keep the updated parameters
        b, m = step_gradient(b, m, np.array(points), learning_rate)
    return [b, m]
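One way to sanity-check the whole loop is to run it on synthetic data and compare the result with a closed-form least-squares fit. The sketch below is a compact re-implementation, not the post's exact code: the synthetic data, the seed, the learning rate of 0.1, and the iteration count are all assumptions chosen so that the example converges quickly on inputs in [0, 1].

```python
import numpy as np


def step_gradient(b, w, points, learning_rate):
    """One gradient-descent step on the mean squared error of y = w * x + b."""
    x, y = points[:, 0], points[:, 1]
    residuals = y - (w * x + b)
    b_gradient = -2.0 * np.mean(residuals)
    w_gradient = -2.0 * np.mean(x * residuals)
    return b - learning_rate * b_gradient, w - learning_rate * w_gradient


def gradient_descent_runner(points, starting_b, starting_w, learning_rate, num_iterations):
    """Repeat the gradient step num_iterations times."""
    b, w = starting_b, starting_w
    for _ in range(num_iterations):
        b, w = step_gradient(b, w, points, learning_rate)
    return b, w


# Synthetic data (an assumption for illustration): y = 3x + 2 plus small noise
rng = np.random.default_rng(0)
x = np.linspace(0.0, 1.0, 50)
y = 3.0 * x + 2.0 + rng.normal(0.0, 0.1, size=50)
points = np.column_stack([x, y])

fitted_b, fitted_w = gradient_descent_runner(points, 0.0, 0.0, 0.1, 5000)

# Closed-form least-squares line; np.polyfit returns [slope, intercept] for degree 1
slope, intercept = np.polyfit(x, y, 1)

print("gradient descent:", fitted_w, fitted_b)
print("closed form:     ", slope, intercept)
```

If the two lines of output agree closely, the gradient and the update rule are very likely implemented correctly. On unscaled data a much smaller learning rate may be needed to keep the iteration stable.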

Finally, the main function

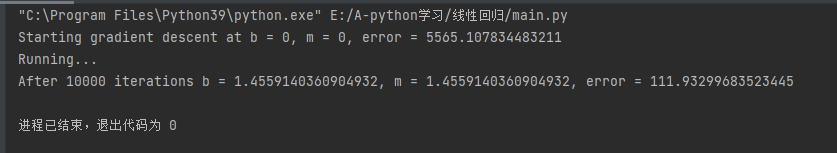

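The training flow:

1. Read the data

2. Set the variables: the learning rate, the initial values of b and m, and the number of training iterations

3. Print the initial values of the variables

4. Call the iterative training code and train for num_iterations steps

5. Print the final loss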

if __name__ == '__main__':
    points = np.genfromtxt("data.csv", delimiter=",")
    learning_rate = 0.0001
    initial_b = 0  # initial y-intercept guess (this is the initialization step)
    initial_m = 0  # initial slope guess
    num_iterations = 10000  # number of training iterations
    print("Starting gradient descent at b = {0}, m = {1}, error = {2}"
          .format(initial_b, initial_m,
                  compute_error_for_line_given_points(initial_b, initial_m, points)))
    print("Running...")
    [b, m] = gradient_descent_runner(points, initial_b, initial_m, learning_rate, num_iterations)
    print("After {0} iterations b = {1}, m = {2}, error = {3}"
          .format(num_iterations, b, m,
                  compute_error_for_line_given_points(b, m, points)))

Result
