
I. Implementing simple linear regression

1. The one-variable linear regression algorithm

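Formulas:

$$y = ax + b$$

$$a = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2}$$

$$b = \bar{y} - a\bar{x}$$

Here $\bar{x}$ and $\bar{y}$ are the means of the x and y samples, a is the regression coefficient (slope), and b is the intercept.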
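For readers wondering where these expressions come from, here is a brief sketch of the standard least-squares derivation. The loss symbol $J$ and the bar notation ($\bar{x}$, $\bar{y}$ for the sample means) are notation introduced for this sketch, not taken from the original.

```latex
\begin{aligned}
J(a, b) &= \sum_{i=1}^{n} (y_i - a x_i - b)^2 \\
\frac{\partial J}{\partial b} &= -2 \sum_{i=1}^{n} (y_i - a x_i - b) = 0
  \;\Longrightarrow\; b = \bar{y} - a\bar{x} \\
\frac{\partial J}{\partial a} &= -2 \sum_{i=1}^{n} x_i \,(y_i - a x_i - b) = 0
  \;\Longrightarrow\; \sum_{i=1}^{n} x_i \bigl[(y_i - \bar{y}) - a (x_i - \bar{x})\bigr] = 0 \\
a &= \frac{\sum_{i=1}^{n} x_i (y_i - \bar{y})}{\sum_{i=1}^{n} x_i (x_i - \bar{x})}
   = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2}
\end{aligned}
```

The last equality holds because $\sum_i \bar{x}(y_i - \bar{y}) = 0$ and $\sum_i \bar{x}(x_i - \bar{x}) = 0$, so subtracting these terms from the numerator and denominator changes nothing.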

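    import numpy as np

    # x and y are 1-D NumPy arrays of equal length (the training data).
    x_mean = np.mean(x)
    y_mean = np.mean(y)

    num = 0.0  # numerator: sum of (x_i - x_mean) * (y_i - y_mean)
    d = 0.0    # denominator: sum of (x_i - x_mean) ** 2
    for x_i, y_i in zip(x, y):
        num += (x_i - x_mean) * (y_i - y_mean)
        d += (x_i - x_mean) ** 2

    # Regression coefficient (slope) and intercept
    a = num / d
    b = y_mean - a * x_mean

    # Prediction for a single value x_predict
    y_hat = a * x_predict + b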
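As a sanity check, the loop above can be compared against NumPy's built-in least-squares fit. The sketch below uses a small made-up data set (the arrays `x` and `y` are assumptions for illustration) and `np.polyfit` with degree 1, which should give nearly the same slope and intercept.

```python
import numpy as np

# Hypothetical sample data, chosen only for illustration.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([1.0, 3.0, 2.0, 3.0, 5.0])

x_mean = np.mean(x)
y_mean = np.mean(y)

num = 0.0
d = 0.0
for x_i, y_i in zip(x, y):
    num += (x_i - x_mean) * (y_i - y_mean)
    d += (x_i - x_mean) ** 2

a = num / d
b = y_mean - a * x_mean

# np.polyfit returns coefficients from highest degree to lowest: [slope, intercept].
a_ref, b_ref = np.polyfit(x, y, deg=1)

print(a, b)
print(np.isclose(a, a_ref), np.isclose(b, b_ref))
```

Agreement between the two results suggests the hand-written sums implement the formulas correctly.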

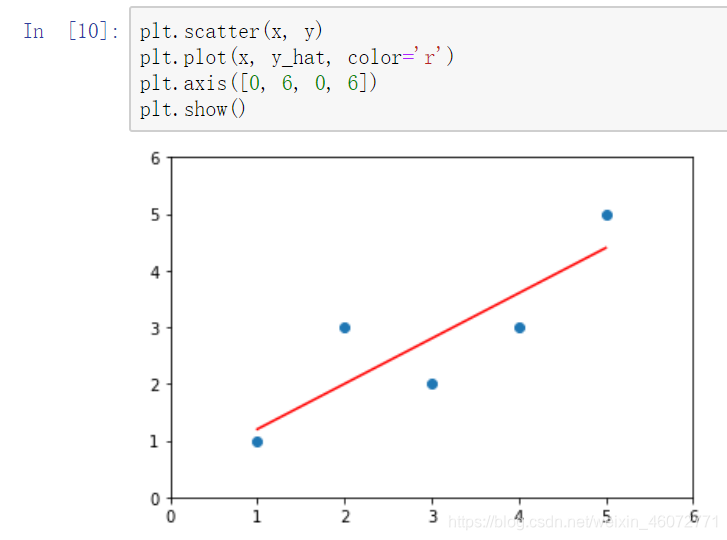

Plot of the data together with the fitted regression line.
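A plot like this can be produced along the following lines. This is a minimal sketch assuming Matplotlib is installed; the sample data is made up, and `np.polyfit` stands in for the hand-written fit to keep the example short.

```python
import io

import matplotlib
matplotlib.use("Agg")  # non-interactive backend, so the script runs without a display
import matplotlib.pyplot as plt
import numpy as np

# Hypothetical sample data and a degree-1 least-squares fit.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([1.0, 3.0, 2.0, 3.0, 5.0])
a, b = np.polyfit(x, y, deg=1)

fig, ax = plt.subplots()
ax.scatter(x, y, label="data")                        # raw data points
ax.plot(x, a * x + b, color="r", label="y = ax + b")  # fitted line
ax.set_xlabel("x")
ax.set_ylabel("y")
ax.legend()

# Render to an in-memory PNG; in an interactive session plt.show() could be used instead.
buffer = io.BytesIO()
fig.savefig(buffer, format="png")
plt.close(fig)
```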

2. Wrapping it in our own simple linear regression class

    import numpy as np


    class SimpleLinearRegression:
        def __init__(self):
            self.a_ = None  # regression coefficient (slope)
            self.b_ = None  # intercept

        def fit(self, x_train, y_train):
            """
            Fit the parameters.
            :param x_train: feature data (1-D array)
            :param y_train: label data
            :return: self, with a_ (regression coefficient) and b_ (intercept) set
            """
            assert x_train.ndim == 1, "simple linear regression takes exactly one feature"
            assert len(x_train) == len(y_train), "features and labels must match one to one"
            x_mean = np.mean(x_train)
            y_mean = np.mean(y_train)
            num = 0.0
            d = 0.0
            for x_i, y_i in zip(x_train, y_train):
                num += (x_i - x_mean) * (y_i - y_mean)
                d += (x_i - x_mean) ** 2
            self.a_ = num / d
            self.b_ = y_mean - self.a_ * x_mean
            return self

        def predict(self, x_predict):
            """
            Predict for a set of values.
            :param x_predict: data to predict on (1-D array)
            :return: array of predictions
            """
            assert x_predict.ndim == 1, "exactly one feature is supported"
            assert self.a_ is not None and self.b_ is not None, "call .fit() before .predict()"
            return np.array([self._predict(x) for x in x_predict])

        def _predict(self, x_single):
            """
            Apply the fitted line to a single value.
            :param x_single: a single value to predict on
            :return: the prediction
            """
            return self.a_ * x_single + self.b_

        def __repr__(self):
            return "SimpleLinearRegression()"

Usage

Create an object and fit it on the training data:

    reg1 = SimpleLinearRegression()
    reg1.fit(x, y)

Make a prediction:

    reg1.predict(np.array([x_predict]))
    # Output: array([5.2])

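3. Computing the regression coefficient with vector operations

Vectorized operations are more efficient: NumPy evaluates a dot product in compiled code instead of iterating element by element in Python.

Only the fit() method changes; everything else stays the same.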

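    def fit(self, x_train, y_train):
        """
        Fit the parameters, using dot products instead of a loop.
        :param x_train: feature data (1-D array)
        :param y_train: label data
        :return: self, with a_ (regression coefficient) and b_ (intercept) set
        """
        assert x_train.ndim == 1, "simple linear regression takes exactly one feature"
        assert len(x_train) == len(y_train), "features and labels must match one to one"
        # Dot products replace the explicit loop
        x_mean = np.mean(x_train)
        y_mean = np.mean(y_train)
        num = (x_train - x_mean).dot(y_train - y_mean)
        d = (x_train - x_mean).dot(x_train - x_mean)
        # Same final steps as the loop-based fit()
        self.a_ = num / d
        self.b_ = y_mean - self.a_ * x_mean
        return self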
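The efficiency claim can be checked with a rough timing sketch. The two helpers below (`slope_loop` and `slope_dot`, names chosen here for illustration) compute the same coefficient with a Python loop and with dot products on randomly generated data. Absolute timings depend on the machine, so no figures are quoted.

```python
import timeit

import numpy as np


def slope_loop(x, y):
    """Slope via an explicit Python loop (hypothetical helper)."""
    x_mean, y_mean = np.mean(x), np.mean(y)
    num = 0.0
    d = 0.0
    for x_i, y_i in zip(x, y):
        num += (x_i - x_mean) * (y_i - y_mean)
        d += (x_i - x_mean) ** 2
    return num / d


def slope_dot(x, y):
    """Slope via dot products (hypothetical helper)."""
    x_centered = x - np.mean(x)
    y_centered = y - np.mean(y)
    return x_centered.dot(y_centered) / x_centered.dot(x_centered)


# Synthetic data: y = 2x + 3 plus Gaussian noise (values are arbitrary).
rng = np.random.default_rng(0)
x = rng.random(100_000)
y = 2.0 * x + 3.0 + rng.normal(size=x.size)

# Time three calls of each version.
t_loop = timeit.timeit(lambda: slope_loop(x, y), number=3)
t_dot = timeit.timeit(lambda: slope_dot(x, y), number=3)

print(f"loop: {t_loop:.4f} s, dot: {t_dot:.4f} s")
print("same result:", np.isclose(slope_loop(x, y), slope_dot(x, y)))
```

The dot-product version would be expected to run considerably faster, since the loop pays Python interpreter overhead for every element.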
