第十二讲 RNN

直接使用pytorch自带的rnn单元实现

输入为“hello”,期望得到输出“ohlol”

hello为一串字符,首先要进行向量化才能输入到网络,将helo四个字符编码组成一个字典,每个字符在使用one-hot编码,则得到五个向量,每个向量代表hello中的一个字符,每个向量长度为4,即input_size = 4, 以此作为输入。

代码:

import torch

input_size = 4 # 每个字符使用一个4维向量来表示

hidden_size = 4 # 期望输出是4维

batch_size = 1 # 1个词

num_layers = 1 # 1层RNN

seq_len = 5 # 每个词有5个字符

# prepare data

idx2char = ['e', 'h', 'l', 'o'] # 字符表

x_data = [1,0,2,2,3] # 输入hello

y_data = [3,1,2,3,2] # 期望输出ohlol

one_hoe_lookup = [[1,0,0,0], [0,1,0,0], [0,0,1,0], [0,0,0,1]] # one-hot编码

x_one_hot = [one_hoe_lookup[x] for x in x_data]

inputs = torch.Tensor(x_one_hot).view(seq_len, batch_size, input_size) # input:[seq_len, batch_size, input_size]

labels = torch.LongTensor(y_data) # label: [seq_len, batch_size]

# design model

class Model(torch.nn.Module):

def __init__(self, input_size, hidden_size, batch_size, num_layers=1):

super(Model, self).__init__()

self.batch_size = batch_size

self.input_size = input_size

self.hidden_size = hidden_size

self.num_layers = num_layers

self.rnn = torch.nn.RNN(input_size=self.input_size, hidden_size=self.hidden_size, num_layers=num_layers)

def forward(self, input):

hidden = torch.zeros(self.num_layers, self.batch_size, self.hidden_size) # 初始化h0

out, _ = self.rnn(input, hidden) # 输出的是out和hidden, out:[seq_len, batch_size, hidden_size]

return out.view(-1, self.hidden_size)

net = Model(input_size, hidden_size, batch_size, num_layers)

# construct loss and optimizer

criterion = torch.nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(net.parameters(), lr=0.01)

# train

for epoch in range(50):

optimizer.zero_grad()

outputs = net(inputs)

loss = criterion(outputs, labels)

loss.backward()

optimizer.step()

_, idx = outputs.max(dim=1)

idx = idx.data.numpy()

print("Predicted: ", "".join([idx2char[x] for x in idx]), end='')

print(", Epoch [%d/15] loss=%.4f" % (epoch+1, loss.item()))

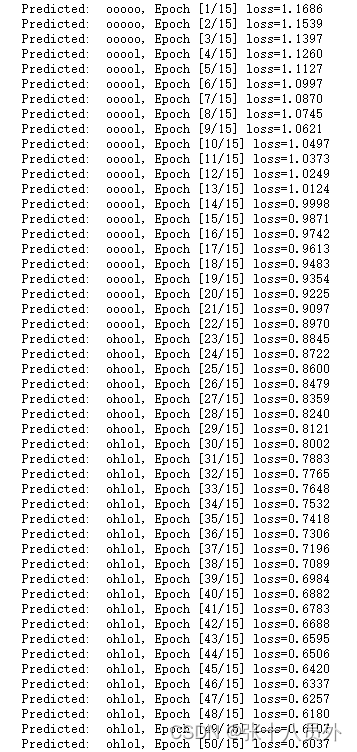

结果:

5万+

5万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?