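Method

- Open a CMD command prompt and change to the directory that contains the tar.gz.part files.
- Use the following command to merge the split volumes XXXX.tar.gz.part* into one complete archive, XXXX.tar.gz:

copy /b XXXX.tar.gz.part* XXXX.tar.gz

- Then extract the merged archive with an archive tool (for example, WinRAR).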
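For readers who prefer a script, the same binary concatenation can be sketched in Python. This is not the method used in this post, only a rough equivalent of `copy /b`. The function name `merge_parts` is made up for illustration, and the sketch assumes the part suffixes sort correctly by file name (for example .part00, .part01 or .partaa, .partab).

```python
import shutil
from pathlib import Path


def merge_parts(directory, archive_name):
    """Concatenate <archive_name>.part* files found in `directory` into <archive_name>."""
    directory = Path(directory)
    # Assumption: sorting the part names alphabetically gives the right order.
    parts = sorted(directory.glob(archive_name + ".part*"))
    if not parts:
        raise FileNotFoundError(f"no {archive_name}.part* files in {directory}")
    target = directory / archive_name
    with open(target, "wb") as out:
        for part in parts:
            with open(part, "rb") as src:
                # Append the raw bytes of each part, similar to copy /b.
                shutil.copyfileobj(src, out)
    return target
```

Calling `merge_parts(".", "XXXX.tar.gz")` from the directory that holds the parts would write XXXX.tar.gz next to them.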

举例

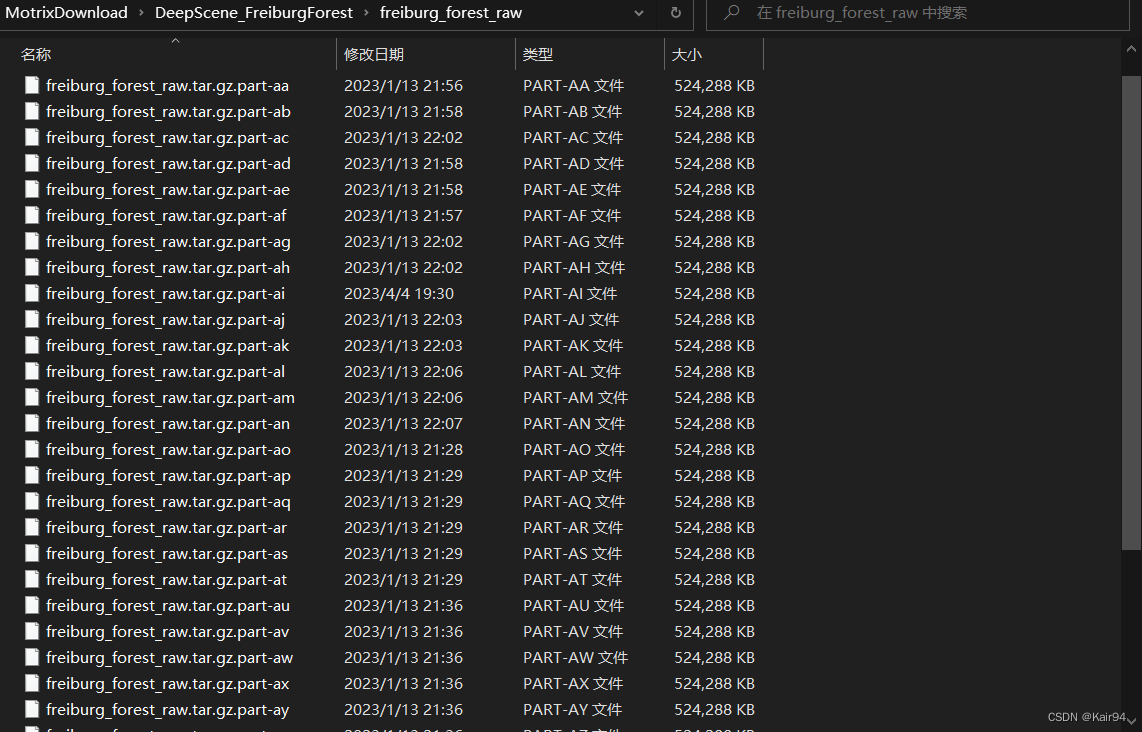

- 进入

DeepScene_FreiburgForest\freiburg_forest_raw文件夹

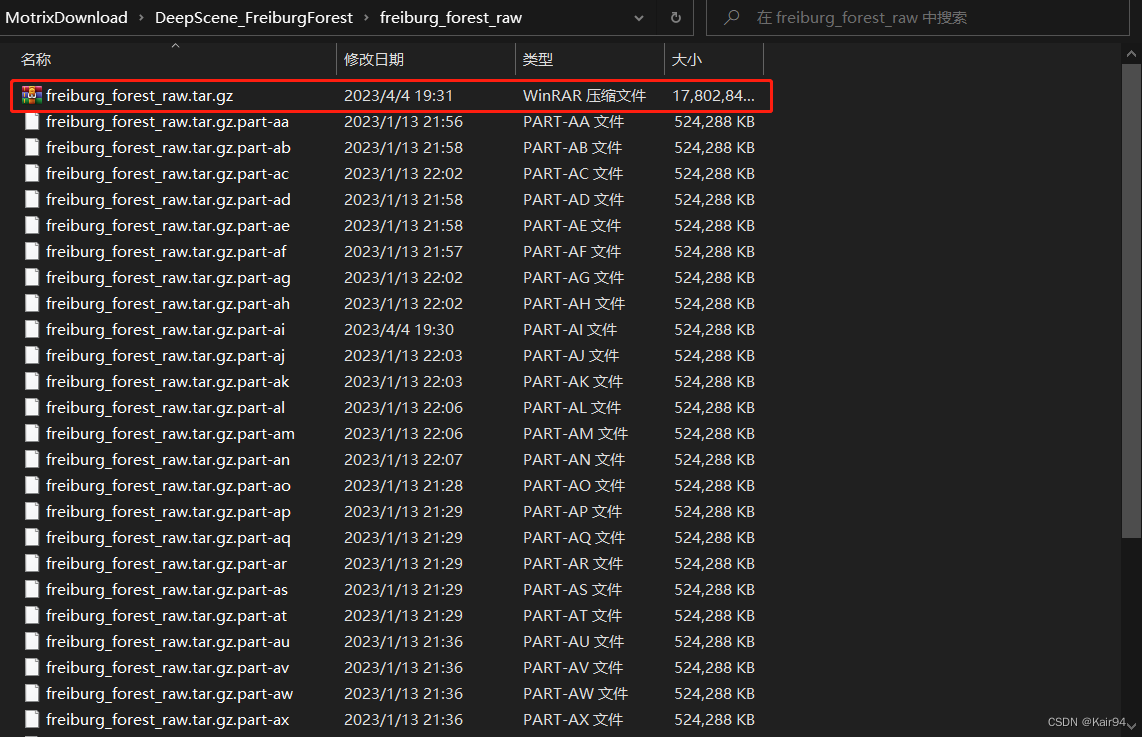

- 输入如下命令

copy /b freiburg_forest_raw.tar.gz.part* freiburg_forest_raw.tar.gz

- 在同一个文件夹下会生合并后的压缩包

freiburg_forest_raw.tar.gz

- 然后再用WinRAR解压
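If WinRAR is not available, the merged archive can probably also be unpacked with Python's standard `tarfile` module. The sketch below is an alternative to the WinRAR step, not part of the original procedure. The helper name `extract_archive` is hypothetical, and it assumes the merged file is a valid gzip-compressed tar archive from a trusted source.

```python
import tarfile
from pathlib import Path


def extract_archive(archive_path, dest_dir=None):
    """Extract a .tar.gz archive into dest_dir (default: the archive's own folder)."""
    archive_path = Path(archive_path)
    dest = Path(dest_dir) if dest_dir else archive_path.parent
    dest.mkdir(parents=True, exist_ok=True)
    # "r:gz" opens the file as a gzip-compressed tar archive.
    with tarfile.open(archive_path, "r:gz") as tar:
        # Only do this for archives you trust; members are written under dest.
        tar.extractall(dest)
    return dest


# Example call for the archive from this post (path taken from the steps above):
# extract_archive(r"DeepScene_FreiburgForest\freiburg_forest_raw\freiburg_forest_raw.tar.gz")
```

If opening the archive fails with a read error, one likely cause is that a part is missing or the parts were joined in the wrong order.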
