源码:

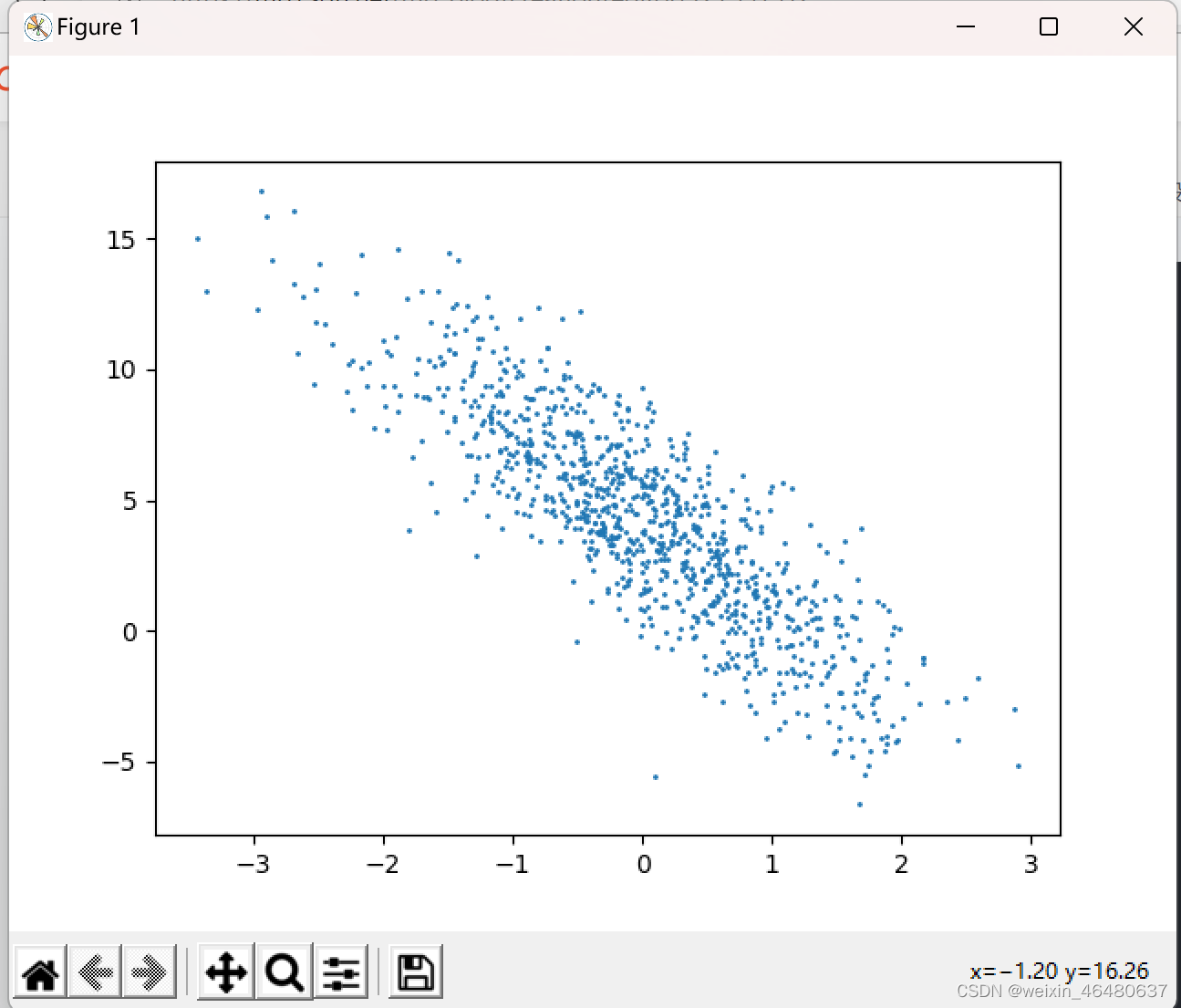

import numpy as np import torch from matplotlib import pyplot as plt # 建立数据集 def synthetic_data(w, b, num_examples): w = torch.tensor(w) X = torch.normal(0, 1, (num_examples, len(w))) y = torch.matmul(X, w)+b y += torch.normal(0, 0.01, y.shape) return X, y.reshape(-1, 1) # 代入真实值 true_w = [2, -3.4] true_b = 4.2 features, labels = synthetic_data(true_w, true_b, 1000) plt.scatter(features[:, 1], labels, 1) plt.show()

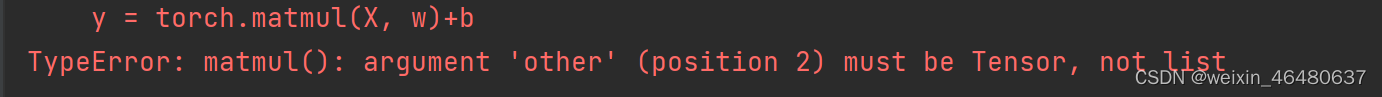

注意:

当出现这个错误时,需要添加 w = torch.tensor(w),将w转变为tensor,不能为list形式

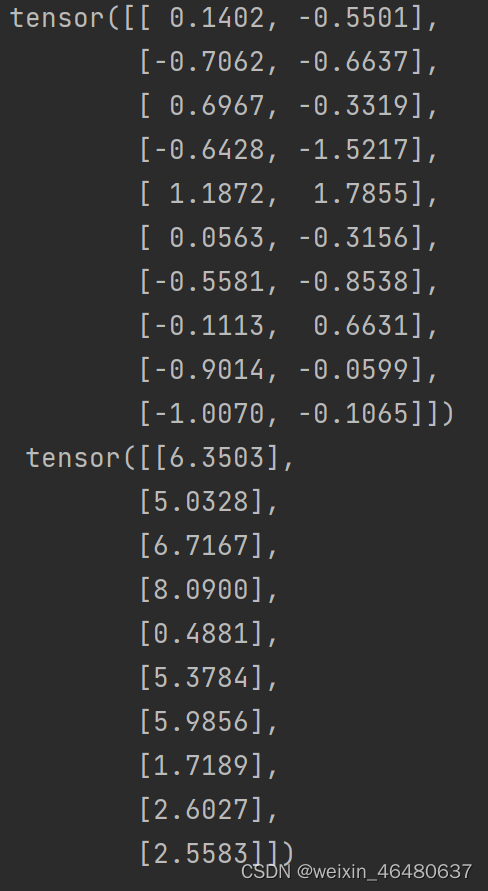

# 读取数据 建立一个函数,实现小批量读取 def data_iter(batch_size, features, labels): num_examples = len(features) indices = list(range(num_examples)) random.shuffle(indices) for i in range(0, num_examples, batch_size): batch_indices = torch.tensor(indices[i: min(i + batch_size, num_examples)]) yield features[batch_indices], labels[batch_indices] batch_size = 10 for X, y in data_iter(batch_size, features, labels): print(X, '\n', y) break

# 初始化模型参数 w = torch.normal(0, 0.01, size=(2, 1), requires_grad=True) b = torch.zeros(1, requires_grad=True) # 定义模型 def linreg(X, w, b): return torch.matmul(X, w)+b # 定义损失函数 def squared_loss(y_hat, y): return (y_hat - y.reshape(y_hat.shape)) ** 2 / 2 # 定义优化算法 def sgd(params, lr, batch_size): with torch.no_grad(): for param in params: param -= lr * param.grad / batch_size param.grad.zero_()

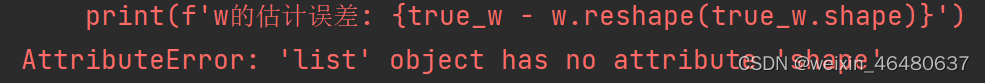

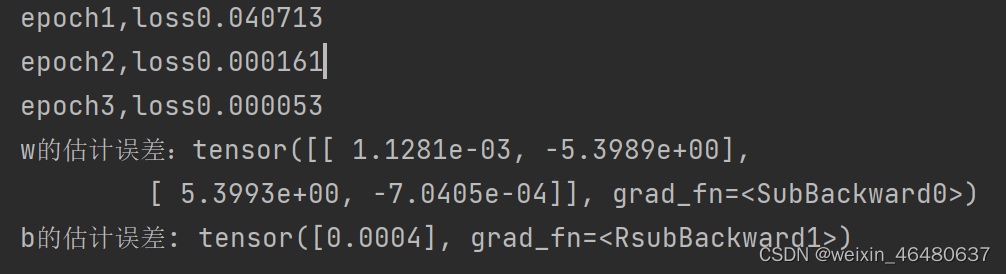

# 训练 lr = 0.03 num_epochs = 3 net = linreg loss = squared_loss for epoch in range(num_epochs): for X, y in data_iter(batch_size, features, labels): l = loss(net(X, w, b), y) l.sum().backward() sgd([w, b], lr, batch_size) with torch.no_grad(): train_l = loss(net(features, w, b), labels) print(f'epoch{epoch+1},loss{float(train_l.mean()):f}') print(f'w的估计误差: {true_w - w.reshape(true_w.shape)}') print(f'b的估计误差: {true_b - b}')

所以进行代码修改:

print(f'w的估计误差:{torch.tensor(true_w)-w}')

可以发现三次循环的结果,一次比一次误差小。

172

172

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?