Task02: Advanced PyTorch Training Techniques

0. Tutorial URL

https://github.com/datawhalechina/thorough-pytorch

1. Custom Loss Functions

1.1 Function style

Compute the loss directly from the output and the target, then return it:

>>> import torch
>>> def my_loss(output, target):
...     loss = torch.mean((output - target)**2)
...     return loss
...
>>>
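As a quick check, a function-style loss can be used like any built-in loss. The sketch below assumes random tensors as stand-ins for the model output and labels (they are not part of the original tutorial) and compares the result with `torch.nn.functional.mse_loss`.

```python
import torch
import torch.nn.functional as F

def my_loss(output, target):
    # Mean squared error, same form as the function-style loss above
    return torch.mean((output - target) ** 2)

torch.manual_seed(0)
output = torch.randn(4, 3, requires_grad=True)  # stand-in for model output
target = torch.randn(4, 3)                      # stand-in for labels

loss = my_loss(output, target)
loss.backward()  # autograd derives the backward pass automatically

# The hand-written loss should agree with the built-in MSE
matches_builtin = torch.allclose(loss, F.mse_loss(output, target))
```

Because the loss is built from differentiable tensor operations, no manual gradient code is needed.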

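1.2 Class style

In PyTorch, some loss classes inherit from _Loss and others from _WeightedLoss.

_WeightedLoss itself inherits from _Loss, and _Loss inherits from nn.Module.

A custom loss class should therefore inherit from nn.Module.

The example below uses Dice Loss, which is common in segmentation: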

$$DSC = \frac{2|X \cap Y|}{|X| + |Y|}$$

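>>> import torch.nn as nn
>>> import torch.nn.functional as F  # used by the BCE-based losses further down
>>> class DiceLoss(nn.Module):
...     def __init__(self, weight=None, size_average=True):
...         super(DiceLoss, self).__init__()
...     def forward(self, inputs, targets, smooth=1):
...         # Map raw logits to probabilities (F.sigmoid is deprecated)
...         inputs = torch.sigmoid(inputs)
...         # Flatten predictions and labels
...         inputs = inputs.view(-1)
...         targets = targets.view(-1)
...         intersection = (inputs * targets).sum()
...         # smooth avoids division by zero when both masks are empty
...         dice = (2.*intersection + smooth)/(inputs.sum() + targets.sum() + smooth)
...         return 1 - dice
...
>>>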
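To make the arithmetic concrete, here is a small worked example. `dice_loss_from_probs` is a hypothetical helper (not from the tutorial) that repeats the computation in `DiceLoss.forward` but skips the sigmoid, so the inputs can be read directly as probabilities.

```python
import torch

def dice_loss_from_probs(probs, targets, smooth=1.0):
    # Hypothetical helper: same arithmetic as DiceLoss.forward, without the sigmoid
    probs = probs.reshape(-1)
    targets = targets.reshape(-1)
    intersection = (probs * targets).sum()
    dice = (2.0 * intersection + smooth) / (probs.sum() + targets.sum() + smooth)
    return 1 - dice

probs = torch.tensor([1.0, 0.0, 1.0, 0.0])
targets = torch.tensor([1.0, 0.0, 0.0, 0.0])

# intersection = 1, probs.sum() = 2, targets.sum() = 1
# dice = (2*1 + 1) / (2 + 1 + 1) = 0.75, so the loss is 0.25
partial = dice_loss_from_probs(probs, targets)

# A perfect prediction gives dice = (2*1 + 1) / (1 + 1 + 1) = 1, so the loss is 0
perfect = dice_loss_from_probs(targets, targets)
```

The loss shrinks toward 0 as the overlap between prediction and label grows.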

Other commonly used losses include BCE-Dice Loss:

>>> class DiceBCELoss(nn.Module):
...     def __init__(self, weight=None, size_average=True):
...         super(DiceBCELoss, self).__init__()
...     def forward(self, inputs, targets, smooth=1):
...         # Map raw logits to probabilities (F.sigmoid is deprecated)
...         inputs = torch.sigmoid(inputs)
...         inputs = inputs.view(-1)
...         targets = targets.view(-1)
...         intersection = (inputs * targets).sum()
...         dice_loss = 1 - (2.*intersection + smooth)/(inputs.sum() + targets.sum() + smooth)
...         BCE = F.binary_cross_entropy(inputs, targets, reduction='mean')
...         # Sum of the two terms
...         Dice_BCE = BCE + dice_loss
...         return Dice_BCE
...
>>>

Jaccard/Intersection over Union (IoU) Loss:

>>> class IoULoss(nn.Module):
...     def __init__(self, weight=None, size_average=True):
...         super(IoULoss, self).__init__()
...     def forward(self, inputs, targets, smooth=1):
...         # Map raw logits to probabilities (F.sigmoid is deprecated)
...         inputs = torch.sigmoid(inputs)
...         inputs = inputs.view(-1)
...         targets = targets.view(-1)
...         intersection = (inputs * targets).sum()
...         total = (inputs + targets).sum()
...         # |X ∪ Y| = |X| + |Y| - |X ∩ Y|
...         union = total - intersection
...         IoU = (intersection + smooth)/(union + smooth)
...         return 1 - IoU
...
>>>

Focal Loss:

>>> ALPHA = 0.8
>>> GAMMA = 2
>>> class FocalLoss(nn.Module):
...     def __init__(self, weight=None, size_average=True):
...         super(FocalLoss, self).__init__()
...     def forward(self, inputs, targets, alpha=ALPHA, gamma=GAMMA, smooth=1):
...         # Map raw logits to probabilities (F.sigmoid is deprecated)
...         inputs = torch.sigmoid(inputs)
...         inputs = inputs.view(-1)
...         targets = targets.view(-1)
...         # BCE is averaged here, so the focal factor below acts on the mean loss
...         BCE = F.binary_cross_entropy(inputs, targets, reduction='mean')
...         BCE_EXP = torch.exp(-BCE)
...         focal_loss = alpha * (1-BCE_EXP)**gamma * BCE
...         return focal_loss
...
>>>
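The FocalLoss class above applies the modulating factor to the batch-averaged BCE. A per-element variant, where each sample gets its own factor before averaging, can be sketched as follows. This is an illustrative alternative rather than the tutorial's implementation: the function name `focal_loss_per_element` is hypothetical, it takes raw logits, and it keeps the single scalar `alpha` used above.

```python
import torch
import torch.nn.functional as F

def focal_loss_per_element(logits, targets, alpha=0.8, gamma=2.0):
    # Per-element BCE computed from raw logits, with no reduction yet
    bce = F.binary_cross_entropy_with_logits(logits, targets, reduction="none")
    p_t = torch.exp(-bce)  # probability the model assigns to the true class
    # Down-weight easy examples (p_t close to 1), then average
    return (alpha * (1 - p_t) ** gamma * bce).mean()

torch.manual_seed(0)
logits = torch.randn(8)                      # stand-in for model outputs
targets = torch.randint(0, 2, (8,)).float()  # stand-in for binary labels

loss = focal_loss_per_element(logits, targets)

# With gamma=0 and alpha=1 the modulating factor is 1 and plain BCE remains
plain = focal_loss_per_element(logits, targets, alpha=1.0, gamma=0.0)
reference = F.binary_cross_entropy_with_logits(logits, targets)
```

Setting `gamma=0` is a convenient sanity check, since the result should then match ordinary binary cross-entropy.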

2. Adjusting the Learning Rate Dynamically

2.1 Official API

- lr_scheduler.LambdaLR
- lr_scheduler.MultiplicativeLR
- lr_scheduler.StepLR
- lr_scheduler.MultiStepLR
- lr_scheduler.ExponentialLR
- lr_scheduler.CosineAnnealingLR
- lr_scheduler.ReduceLROnPlateau
- lr_scheduler.CyclicLR
- lr_scheduler.OneCycleLR
- lr_scheduler.CosineAnnealingWarmRestarts

When using the official torch.optim.lr_scheduler classes, call scheduler.step() after optimizer.step().
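The call order can be sketched with StepLR. The toy model, random data, and hyperparameters (`step_size=2`, `gamma=0.5`) are assumptions chosen only to make the schedule easy to read.

```python
import torch
import torch.nn as nn
from torch.optim.lr_scheduler import StepLR

model = nn.Linear(4, 1)  # toy model, for illustration only
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
scheduler = StepLR(optimizer, step_size=2, gamma=0.5)  # halve the lr every 2 epochs

lrs = []
for epoch in range(6):
    lrs.append(optimizer.param_groups[0]["lr"])  # lr in effect during this epoch
    loss = model(torch.randn(8, 4)).pow(2).mean()
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()   # update the parameters first
    scheduler.step()   # then advance the schedule

# lrs is approximately [0.1, 0.1, 0.05, 0.05, 0.025, 0.025]
```

Here the scheduler is stepped once per epoch; some schedulers, such as OneCycleLR, are designed to be stepped once per batch instead.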

2.2 Custom scheduler

A custom function such as adjust_learning_rate can implement a schedule by directly changing the lr value in each param_group:

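>>> def adjust_learning_rate(optimizer, epoch):
...     # args.lr is the initial learning rate (args is assumed to be defined elsewhere)
...     # Decay the learning rate by 10x every 30 epochs
...     lr = args.lr * (0.1 ** (epoch // 30))
...     for param_group in optimizer.param_groups:
...         param_group['lr'] = lr
...
>>>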

It is called as follows:

def adjust_learning_rate(optimizer,...):
    ...

optimizer = torch.optim.SGD(model.parameters(), lr=args.lr, momentum=0.9)

for epoch in range(10):
    train(...)
    validate(...)
    adjust_learning_rate(optimizer, epoch)
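A runnable version of this pattern might look like the sketch below. `BASE_LR` is a hypothetical stand-in for `args.lr`, the model is a toy, and the training and validation steps are left as a comment. The sketch sets the learning rate at the start of each epoch, which is one possible placement; it also runs for 90 epochs so that the 30-epoch decay is actually visible.

```python
import torch
import torch.nn as nn

BASE_LR = 0.1  # hypothetical stand-in for args.lr

def adjust_learning_rate(optimizer, epoch, base_lr=BASE_LR):
    # Decay the learning rate by 10x every 30 epochs
    lr = base_lr * (0.1 ** (epoch // 30))
    for param_group in optimizer.param_groups:
        param_group["lr"] = lr

model = nn.Linear(4, 1)  # toy model, for illustration only
optimizer = torch.optim.SGD(model.parameters(), lr=BASE_LR, momentum=0.9)

history = {}
for epoch in range(90):
    adjust_learning_rate(optimizer, epoch)  # set the lr for this epoch
    # train(...) and validate(...) would run here
    history[epoch] = optimizer.param_groups[0]["lr"]
```

With these settings the learning rate is 0.1 for epochs 0 to 29, 0.01 for epochs 30 to 59, and 0.001 for epochs 60 to 89.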

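3. Model Fine-Tuning

3.1 Concept

Fine-tuning adjusts the parameters of an already trained model so that it can be trained on a new dataset.

3.2 Workflow

- Obtain a source model, either by downloading one or by training it yourself on the source dataset
- Copy all of the source model's structure and parameters, except the output layer, to the target model
- Rebuild the target model's output layer and randomly initialize its parameters
- Train the target model on the target dataset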

3.3 Diagram

3.4 Practice

>>> # Freeze layers with requires_grad=False so gradients are computed only for newly initialized layers
>>> def set_parameter_requires_grad(model, feature_extracting):
...     if feature_extracting:
...         for param in model.parameters():
...             param.requires_grad = False
...
>>> import torchvision.models as models
>>> # Freeze the parameters' gradients
>>> feature_extract = True
>>> # The pretrained argument controls whether pretrained weights are loaded
>>> # (torchvision >= 0.13 replaces it with the weights argument)
>>> model = models.resnet50(pretrained=True)
Downloading: "https://download.pytorch.org/models/resnet50-19c8e357.pth" to C:\Users\Hunter-G/.cache\torch\hub\checkpoints\resnet50-19c8e357.pth
100%|██████████████████████████████████████████████████████████████████████████████████████████| 97.8M/97.8M [01:23<00:00, 1.23MB/s]
>>> set_parameter_requires_grad(model, feature_extract)
>>> # Replace the output layer; resnet50's fc layer has 2048 input features, not 512
>>> num_ftrs = model.fc.in_features
>>> model.fc = nn.Linear(in_features=num_ftrs, out_features=4, bias=True)
>>> model.fc
Linear(in_features=2048, out_features=4, bias=True)
>>>

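During training the frozen layers receive no updates. Only the newly created fc layer, whose parameters have requires_grad=True by default, is updated.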
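The freezing behaviour can be checked without downloading any weights. In the sketch below, `TinyNet` is a hypothetical stand-in for a pretrained network with a final `fc` layer; passing only the trainable parameters to the optimizer is a common convention, assumed here rather than taken from the tutorial.

```python
import torch
import torch.nn as nn

def set_parameter_requires_grad(model, feature_extracting):
    if feature_extracting:
        for param in model.parameters():
            param.requires_grad = False

class TinyNet(nn.Module):
    # Hypothetical stand-in for a pretrained backbone with an `fc` head
    def __init__(self):
        super().__init__()
        self.features = nn.Sequential(nn.Linear(8, 16), nn.ReLU())
        self.fc = nn.Linear(16, 10)

    def forward(self, x):
        return self.fc(self.features(x))

model = TinyNet()
set_parameter_requires_grad(model, feature_extracting=True)  # freeze every layer
model.fc = nn.Linear(model.fc.in_features, 4)  # new head, trainable by default

trainable = [name for name, p in model.named_parameters() if p.requires_grad]
optimizer = torch.optim.SGD([p for p in model.parameters() if p.requires_grad], lr=0.01)

frozen_before = model.features[0].weight.clone()
loss = model(torch.randn(5, 8)).sum()
loss.backward()
optimizer.step()
# The frozen weights are unchanged and never received a gradient
```

Replacing `fc` after the freeze matters: a layer created before the call would be frozen along with the rest.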

4. 半精度训练

4.1 概念

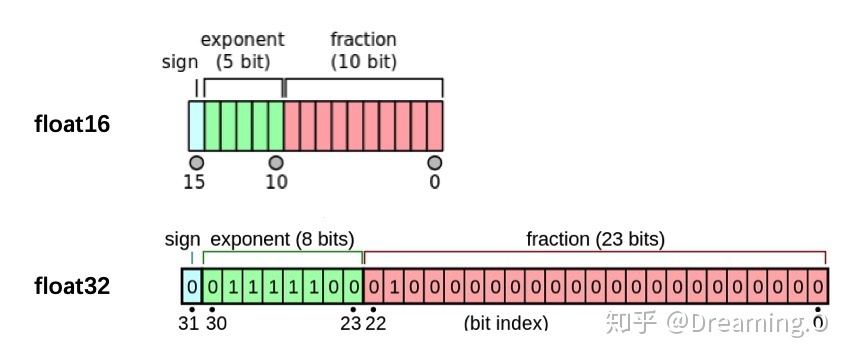

PyTorch默认的浮点数存储方式用的是torch.float32

但使用torch.float16格式的信息也不会影响结果

由于数位减了一半,因此被称为“半精度”,具体如下图:

4.2 Practice

- Import the required package

from torch.cuda.amp import autocast

- Decorate the model

When defining the model, decorate its forward function with autocast:

@autocast()
def forward(self, x):
    ...
    return x

- Training loop

for x in train_loader:
    x = x.cuda()
    with autocast():
        output = model(x)
    ...

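Half-precision training is mainly useful when individual samples are large (for example, 3D images or video). When samples are small (for example, MNIST images are only 28×28), it may not bring a noticeable improvement.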
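A single mixed-precision training step can be sketched as below. Several parts are assumptions beyond the tutorial: the toy model and random data, the CPU fallback with bfloat16 (so the sketch also runs without a GPU), and the gradient scaler, which is commonly paired with float16 autocast to avoid gradient underflow. Recent PyTorch releases expose these utilities under `torch.amp` and may emit a deprecation warning for the `torch.cuda.amp` names.

```python
import torch
import torch.nn as nn

use_cuda = torch.cuda.is_available()
device_type = "cuda" if use_cuda else "cpu"
# float16 on GPU; on CPU this sketch falls back to bfloat16 autocast
amp_dtype = torch.float16 if use_cuda else torch.bfloat16

model = nn.Linear(16, 4).to(device_type)  # toy model, for illustration only
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)
scaler = torch.cuda.amp.GradScaler(enabled=use_cuda)  # acts as a no-op when disabled

x = torch.randn(8, 16, device=device_type)
y = torch.randn(8, 4, device=device_type)

with torch.autocast(device_type=device_type, dtype=amp_dtype):
    output = model(x)  # the matrix multiply runs in reduced precision
    loss = nn.functional.mse_loss(output.float(), y)

optimizer.zero_grad()
scaler.scale(loss).backward()  # scale the loss before backpropagation
scaler.step(optimizer)         # unscale gradients, then update the parameters
scaler.update()
```

The parameters themselves stay in float32; only the operations inside the autocast region run in the lower-precision type.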
