Llama.cpp运行流程

参考;在低配Windows上部署原版llama.cpp_git llama csdn-CSDN博客

需求:本机上运行大模型语言的算法等;

实现方式:Llama.cpp使用int4这种数值模式,显著降低内存需求;使用原始C++的项目来重写Llama代码,使得其可以在各种硬件上本地运行;

主要途径:使用优化和量化技术来量化权重的情况下,Llama.cpp使得大型语言模型可以在本地的多种硬件上运行,而无需GPU;内存带宽往往是推理的瓶颈,通过量化使用更少的精度可以减少存储模型所需的内存。

1.下载Llama.cpp

https://github.com/ggerganov/llama.cpp

2.准备编译工具

Llama.cpp是跨平台的,在Windows平台下,需要准备MinGW和Cmake

MinGW

Set-ExecutionPolicy RemoteSigned -Scope CurrentUser

iex "& {

$(irm get.scoop.sh)} -RunAsAdmin"

scoop bucket add extras

scoop bucket add main

scoop install mingw

Cmake

https://cmake.org/download/

安装相应版本

3.编译LLaMa.cpp

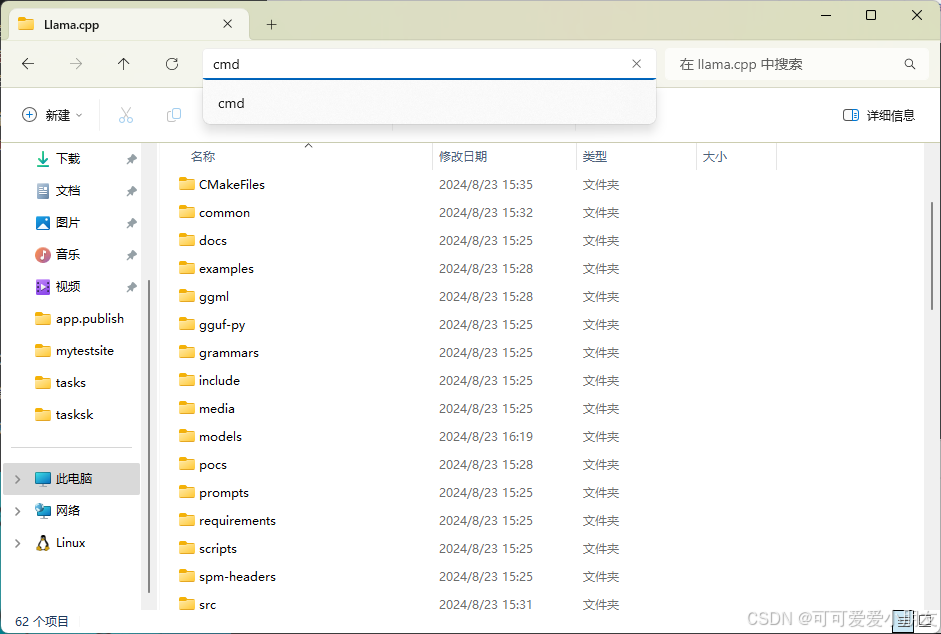

进入下载好的文件夹

编译

cmake . -G "MinGW Makefiles"

cmake --build . --config Release

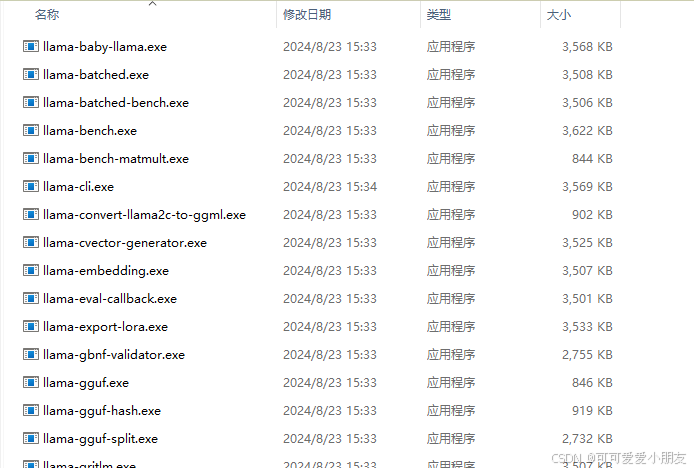

在bin/生成exe文件

现在llama更新很快,对于之前所提及的 quantize.exe 和 main.exe 都有相应的名称变化

main.exe : llama-cli.exe

quantize.exe : llama-quantize.exe

因为本地运行的gguf模型文件已经从hugging face下载好了,所以无需使用 llama-quantize.exe文件

部署上也参考了以下:

llama.cpp/examples/main at master · ggerganov/llama.cpp (github.com)

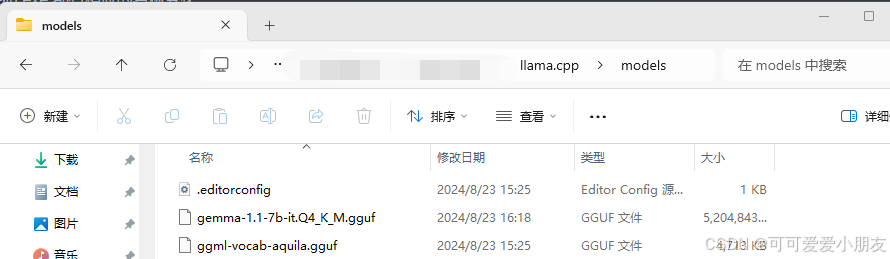

有了程序之后,我们需要下载模型

就是以上提及的GGUF文件

直接采用了

https://huggingface.co/ggml-org/gemma-1.1-7b-it-Q4_K_M-GGUF/resolve/main/gemma-1.1-7b-it.Q4_K_M.gguf?download=true

Gemma模型

下载好的gguf模型文件默认放到models/文件夹中

切换到bin目录下,运行如下代码:

llama-cli.exe -m ..\models\gemma-1.1-7b-it.Q4_K_M.gguf --prompt "Once upon a time"

上述 …\ 表示切换到上一层目录之后再寻找models文件

运行效果:

C:\……\llama.cpp\bin>llama-cli.exe -m models\gemma-1.1-7b-it.Q4_K_M.gguf --prompt "Once upon a time"

Log start

main: build = 3616 (11b84eb4)

main: built with for x86_64-w64-mingw32

main: seed = 1724916830

gguf_init_from_file: failed to open 'models\gemma-1.1-7b-it.Q4_K_M.gguf': 'No such file or directory'

llama_model_load: error loading model: llama_model_loader: failed to load model from models\gemma-1.1-7b-it.Q4_K_M.gguf

llama_load_model_from_file: failed to load model

llama_init_from_gpt_params: error: failed to load model 'models\gemma-1.1-7b-it.Q4_K_M.gguf'

main: error: unable to load model

C:\Users\v-yangxinyi\Llama.cpp\llama.cpp\bin>llama-cli.exe -m ..\models\gemma-1.1-7b-it.Q4_K_M.gguf --prompt "Once upon a time"

Log start

main: build = 3616 (11b84eb4)

main: built with for x86_64-w64-mingw32

main: seed = 1724916851

llama_model_loader: loaded meta data with 24 key-value pairs and 254 tensors from ..\models\gemma-1.1-7b-it.Q4_K_M.gguf (version GGUF V3 (latest))

llama_model_loader: Dumping metadata keys/values. Note: KV overrides do not apply in this output.

llama_model_loader: - kv 0: general.architecture str = gemma

llama_model_loader: - kv 1: general.name str = gemma-1.1-7b-it

llama_model_loader: - kv 2: gemma.context_length u32 = 8192

llama_model_loader: - kv 3: gemma.embedding_length u32 = 3072

llama_model_loader: - kv 4: gemma.block_count u32 = 28

llama_model_loader: - kv 5: gemma.feed_forward_length u32 = 24576

llama_model_loader: - kv 6: gemma.attention.head_count u32 = 16

llama_model_loader: - kv 7: gemma.attention.head_count_kv u32 = 16

llama_model_loader: - kv 8: gemma.attention.layer_norm_rms_epsilon f32 = 0.000001

llama_model_loader: - kv 9: gemma.attention.key_length u32 = 256

llama_model_loader: - kv 10: gemma.attention.value_length u32 = 256

llama_model_loader: - kv 11: general.file_type u32 = 15

llama_model_loader: - kv 12: tokenizer.ggml.model str = llama

llama_model_loader: - kv 13: tokenizer.ggml.tokens arr[str,256000] = ["<pad>", "<eos>", "<bos>", "<unk>", ...

llama_model_loader: - kv 14: tokenizer.ggml.scores arr[f32,256000] = [0.000000, 0.000000, 0.000000, 0.0000...

llama_model_loader: - kv 15: tokenizer.ggml.token_type arr[i32,256000] = [3, 3, 3, 2, 1, 1, 1, 1, 1, 1, 1, 1, ...

llama_model_loader: - kv 16: tokenizer.ggml.bos_token_id u32 = 2

llama_model_loader: - kv 17: tokenizer.ggml.eos_token_id u32 = 1

llama_model_loader: - kv 18: tokenizer.ggml.unknown_token_id u32 = 3

llama_model_loader: - kv 19: tokenizer.ggml.padding_token_id u32 = 0

llama_model_loader: - kv 20: tokenizer.ggml.add_bos_token bool = true

llama_model_loader: - kv 21: tokenizer.ggml.add_eos_token bool = false

llama_model_loader: - kv 22: tokenizer.chat_template str = {

{ bos_token }}{% if messages[0]['rol...

llama_model_loader: - kv 23: general.quantization_version u32 = 2

llama_model_loader: - type f32: 57 tensors

llama_model_loader: - type q4_K: 168 tensors

llama_model_loader: - type q6_K: 29 tensors

llm_load_vocab: special tokens cache size = 4

llm_load_vocab: token to piece cache size = 1.6014 MB

llm_load_print_meta: format = GGUF V3 (latest)

llm_load_print_meta: arch = gemma

llm_load_print_meta: vocab type = SPM

llm_load_print_meta: n_vocab = 256000

llm_load_print_meta: n_merges = 0

llm_load_print_meta: vocab_only = 0

llm_load_print_meta: n_ctx_train = 8192

llm_load_print_meta: n_embd = 3072

llm_load_print_meta: n_layer = 28

llm_load_print_meta: n_head = 16

llm_load_print_meta: n_head_kv = 16

llm_load_print_meta: n_rot = 256

llm_load_print_meta: n_swa = 0

llm_load_print_meta: n_embd_head_k = 256

llm_load_print_meta: n_embd_head_v = 256

llm_load_print_meta: n_gqa = 1

llm_load_print_meta: n_embd_k_gqa = 4096

llm_load_print_meta: n_embd_v_gqa = 4096

llm_load_print_meta: f_norm_eps = 0.0e+00

llm_load_print_meta: f_norm_rms_eps = 1.0e-06

llm_load_print_meta: f_clamp_kqv = 0.0e+00

llm_load_print_meta: f_max_alibi_bias = 0.0e+00

llm_load_print_meta: f_logit_scale = 0.0e+00

llm_load_print_meta: n_ff = 24576

llm_load_print_meta: n_expert = 0

llm_load_print_meta: n_expert_used = 0

llm_load_print_meta: causal attn = 1

llm_load_print_meta: pooling type = 0

llm_load_print_meta: rope type = 2

llm_load_print_meta: rope scaling = linear

llm_load_print_meta: freq_base_train = 10000.0

llm_load_print_meta: freq_scale_train = 1

llm_load_print_meta: n_ctx_orig_yarn = 8192

llm_load_print_meta: rope_finetuned = unknown

llm_load_print_meta: ssm_d_conv = 0

llm_load_print_meta: ssm_d_inner = 0

llm_load_print_meta: ssm_d_state = 0

llm_load_print_meta: ssm_dt_rank = 0

llm_load_print_meta: ssm_dt_b_c_rms = 0

llm_load_print_meta: model type = 7B

llm_load_print_meta: model ftype = Q4_K - Medium

llm_load_print_meta: model params = 8.54 B

llm_load_print_meta: model size = 4.96 GiB (4.99 BPW)

llm_load_print_meta: general.name = gemma-1.1-7b-it

llm_load_print_meta: BOS token = 2 '<bos>'

llm_load_print_meta: EOS token = 1 '<eos>'

llm_load_print_meta: UNK token = 3 '<unk>'

llm_load_print_meta: PAD token &

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

2万+

2万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?