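This article is hands-on backtrader material aimed at real-world pain points.

For the full contents of this column, visit: https://blog.csdn.net/windanchaos/category_12384821.html

This article was published at: https://blog.csdn.net/windanchaos/article/details/131882046

It targets the problems backtrader users hit while debugging: runs that are slow and laborious, very low coding productivity, and slow backtests. It is an alternative to the previous approach, and it also provides code that decouples data loading from strategy execution.

This approach can be mixed with the previous one; choose whichever fits your situation.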

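This article is for readers in the following situations:

- You have already sorted out basic backtrader installation and usage, and you can write strategies.
- Your strategy works on a large amount of data with heavy indicator computation and needs the data fully loaded before it runs, but backtests are very slow: for example, a single debugging run spends ten-plus or even tens of minutes loading and preprocessing data.
- This article cannot fix the execution speed of backtrader's own runtime mechanics: for example Python's pseudo-multithreading (the GIL), the fact that the strategy's next method has to loop bar by bar, or the case where you are not tuning parameters and the strategy only needs to run once. The approach mainly targets the time lost in repeated debug-and-develop cycles.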

| Metric | Default time (s) | Time with this approach (s) |
|---|---|---|
| Data load time | 195.71 | 5.60 |
| Program execution time | 229.88 | 40.21 |

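My experiment: the data is the CSI 300 (沪深300), 556 stocks in total, daily bars, from '2015-03-17' to '2023-04-21'. The strategy's next method is empty, so it costs no time.

Note: the time for the first data load cannot be saved.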
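To put the table in perspective, here is a small arithmetic sketch that turns the four timings into speedup factors and seconds saved. The numbers are copied from the table; the helper name `speedup` is only illustrative.

```python
# Timings in seconds, copied from the table above.
default = {"data_load": 195.71, "program_run": 229.88}
cached = {"data_load": 5.60, "program_run": 40.21}


def speedup(before: float, after: float) -> float:
    """Return how many times faster the `after` timing is than `before`."""
    return before / after


for key in default:
    saved = default[key] - cached[key]
    factor = speedup(default[key], cached[key])
    print(f"{key}: {factor:.1f}x faster, {saved:.2f}s saved")
```

Both rows save roughly 190 seconds, which suggests that almost all of the gain in total run time comes from the data load step.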

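Core of the approach

The core of this approach:

Compared with the previous approach, this one can be used directly in an IDE and no longer depends on jupyter lab. It has the edge when the cached data is fairly small (around 5 GB). When the cache grows too large, the I/O time for reading the cache file goes up as well, and because none of the approaches so far decouples indicator initialization, that initialization time also becomes significant on large datasets. In that case the previous approach, or a mix of the two, will be more efficient.

Dear patrons, pick whichever suits your needs.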

Add the dependency

pip install joblib

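Rewrite the Cerebro class

Building on the previous approach, this version adds the logic for serializing and deserializing the cache.

Data loading and strategy execution are therefore decoupled, so it can also be used together with jupyter lab.

That is, using this approach inside jupyter lab saves both the cache loading time and the indicator computation time.

The new Cerebro is shown in the code below.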

import itertools
import os
from os.path import exists

import joblib
import backtrader as bt

smas = {}


class myCerebro2(bt.Cerebro):

    def __init__(self):
        super().__init__()

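    def addstrategy(self, strategy, *args, **kwargs):
        '''
        Add a strategy. To keep repeated runs safe, strategies added earlier are cleared first.
        If you add more than one strategy, this method needs a condition that decides when to clear.
        '''
        # if len(self.strats) == XX:
        #     self.strats.clear()
        self.strats.clear()
        self.strats.append([(strategy, args, kwargs)])
        return len(self.strats) - 1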

    def optstrategy(self, strategy, *args, **kwargs):
        '''
        Search for the best parameters. To keep repeated runs safe, strategy
        combinations added earlier are cleared first.
        '''
        self.strats.clear()
        self._dooptimize = True
        args = self.iterize(args)
        optargs = itertools.product(*args)

        optkeys = list(kwargs)

        vals = self.iterize(kwargs.values())
        optvals = itertools.product(*vals)

        okwargs1 = map(zip, itertools.repeat(optkeys), optvals)

        optkwargs = map(dict, okwargs1)

        it = itertools.product([strategy], optargs, optkwargs)
        self.strats.append(it)

    def _preloaddata(self):
        self._exactbars = int(self.p.exactbars)
        self._dopreload = self.p.preload
        for data in self.datas:
            data.reset()
            if self._exactbars < 1:  # datas can be full length
                data.extend(size=self.params.lookahead)
            data._start()
            if self._dopreload:
                data.preload()

    # preload by default
    def dopreloaddata(self, cachedata=True, replacecache=False, cachefile='backtrader_tmp.joblib'):
        """
        cachedata: whether to cache the data
        replacecache: whether to replace the existing cache
        cachefile: path of the cache file
        """
        # Check for the cache file
        fileexist = exists(cachefile)
        # If the cache exists and a reload is requested, delete the file
        if replacecache and fileexist:
            os.remove(cachefile)
        # Refresh the file status
        fileexist = exists(cachefile)
        # No cache file: load the data
        if not fileexist:
            self._preloaddata()
            # Write the cache to file
            if cachedata:
                joblib.dump(self, cachefile)
        else:
            if cachedata:
                # Restore the preloaded state from the cache file
                load = joblib.load(cachefile)
                self.analyzers = load.analyzers
                self.datas = load.datas
                self.broker = load.broker
                self.params = load.params
                self.p = load.p
                self.datasbyname = load.datasbyname
            else:
                self._preloaddata()

    def run(self, **kwargs):
        self._event_stop = False  # Stop is requested

        if not self.datas:
            return []  # nothing can be run

        pkeys = self.params._getkeys()
        for key, val in kwargs.items():
            if key in pkeys:
                setattr(self.params, key, val)

        # Manage activate/deactivate object cache
        bt.linebuffer.LineActions.cleancache()  # clean cache
        bt.indicator.Indicator.cleancache()  # clean cache

        bt.linebuffer.LineActions.usecache(self.p.objcache)
        bt.indicator.Indicator.usecache(self.p.objcache)

        self._dorunonce = self.p.runonce
        self._dopreload = self.p.preload
        self._exactbars = int(self.p.exactbars)

        if self._exactbars:
            self._dorunonce = False  # something is saving memory, no runonce
            self._dopreload = self._dopreload and self._exactbars < 1

        self._doreplay = self._doreplay or any(x.replaying for x in self.datas)
        if self._doreplay:
            # preloading is not supported with replay. full timeframe bars
            # are constructed in realtime
            self._dopreload = False

        if self._dolive or self.p.live:
            # in this case both preload and runonce must be off
            self._dorunonce = False
            self._dopreload = False

        self.runwriters = list()

        # Add the system default writer if requested
        if self.p.writer is True:
            wr = bt.WriterFile()
            self.runwriters.append(wr)

        # Instantiate any other writers
        for wrcls, wrargs, wrkwargs in self.writers:
            wr = wrcls(*wrargs, **wrkwargs)
            self.runwriters.append(wr)

        # Write down if any writer wants the full csv output
        self.writers_csv = any(map(lambda x: x.p.csv, self.runwriters))

        self.runstrats = list()

        if self.signals:  # allow processing of signals
            signalst, sargs, skwargs = self._signal_strat
            if signalst is None:
                # Try to see if the 1st regular strategy is a signal strategy
                try:
                    signalst, sargs, skwargs = self.strats.pop(0)
                except IndexError:
                    pass  # Nothing there
                else:
                    if not isinstance(signalst, bt.SignalStrategy):
                        # no signal ... reinsert at the beginning
                        self.strats.insert(0, (signalst, sargs, skwargs))
                        signalst = None  # flag as not present

            if signalst is None:  # recheck
                # Still None, create a default one
                signalst, sargs, skwargs = bt.SignalStrategy, tuple(), dict()

            # Add the signal strategy
            self.addstrategy(signalst,
                             _accumulate=self._signal_accumulate,
                             _concurrent=self._signal_concurrent,
                             signals=self.signals,
                             *sargs,
                             **skwargs)

        if not self.strats:  # Datas are present, add a strategy
            self.addstrategy(bt.Strategy)

        iterstrats = itertools.product(*self.strats)
        if not self._dooptimize or self.p.maxcpus == 1:
            # If no optimization is wished ... or 1 core is to be used
            # let's skip process "spawning"
            for iterstrat in iterstrats:
                runstrat = self.runstrategies(iterstrat)
                self.runstrats.append(runstrat)
                if self._dooptimize:
                    for cb in self.optcbs:
                        cb(runstrat)  # callback receives finished strategy
        else:
            # Moved to dopreloaddata
            # if self.p.optdatas and self._dopreload and self._dorunonce:
            #     for data in self.datas:
            #         data.reset()
            #         if self._exactbars < 1:  # datas can be full length
            #             data.extend(size=self.params.lookahead)
            #         data._start()
            #         if self._dopreload:
            #             data.preload()
            if self.p.optdatas and self._dopreload and self._dorunonce:
                for data in self.datas:
                    data.reset()
                    data._start()

            pool = bt.multiprocessing.Pool(self.p.maxcpus or None)
            for r in pool.imap(self, iterstrats):
                self.runstrats.append(r)
                for cb in self.optcbs:
                    cb(r)  # callback receives finished strategy

            pool.close()

            if self.p.optdatas and self._dopreload and self._dorunonce:
                for data in self.datas:
                    data.reset()
                    data._start()

        if not self._dooptimize:
            # avoid a list of list for regular cases
            return self.runstrats[0]

        return self.runstrats

    def runstrategies(self, iterstrat, predata=False):
        '''
        Internal method invoked by ``run`` to run a set of strategies
        '''
        self._init_stcount()

        self.runningstrats = runstrats = list()
        for store in self.stores:
            store.start()

        if self.p.cheat_on_open and self.p.broker_coo:
            # try to activate in broker
            if hasattr(self._broker, 'set_coo'):
                self._broker.set_coo(True)

        if self._fhistory is not None:
            self._broker.set_fund_history(self._fhistory)

        for orders, onotify in self._ohistory:
            self._broker.add_order_history(orders, onotify)

        self._broker.start()

        for feed in self.feeds:
            feed.start()

        if self.writers_csv:
            wheaders = list()
            for data in self.datas:
                if data.csv:
                    wheaders.extend(data.getwriterheaders())

            for writer in self.runwriters:
                if writer.p.csv:
                    writer.addheaders(wheaders)

        # self._plotfillers = [list() for d in self.datas]
        # self._plotfillers2 = [list() for d in self.datas]

        for stratcls, sargs, skwargs in iterstrat:
            sargs = self.datas + list(sargs)
            try:
                strat = stratcls(*sargs, **skwargs)
            except bt.errors.StrategySkipError:
                continue  # do not add strategy to the mix

            if self.p.oldsync:
                strat._oldsync = True  # tell strategy to use old clock update
            if self.p.tradehistory:
                strat.set_tradehistory()
            runstrats.append(strat)

        tz = self.p.tz
        if isinstance(tz, bt.integer_types):
            tz = self.datas[tz]._tz
        else:
            tz = bt.tzparse(tz)

        if runstrats:
            # loop separated for clarity
            defaultsizer = self.sizers.get(None, (None, None, None))
            for idx, strat in enumerate(runstrats):
                if self.p.stdstats:
                    strat._addobserver(False, bt.observers.Broker)
                    if self.p.oldbuysell:
                        strat._addobserver(True, bt.observers.BuySell)
                    else:
                        strat._addobserver(True, bt.observers.BuySell,
                                           barplot=True)

                    if self.p.oldtrades or len(self.datas) == 1:
                        strat._addobserver(False, bt.observers.Trades)
                    else:
                        strat._addobserver(False, bt.observers.DataTrades)

                for multi, obscls, obsargs, obskwargs in self.observers:
                    strat._addobserver(multi, obscls, *obsargs, **obskwargs)

                for indcls, indargs, indkwargs in self.indicators:
                    strat._addindicator(indcls, *indargs, **indkwargs)

                for ancls, anargs, ankwargs in self.analyzers:
                    strat._addanalyzer(ancls, *anargs, **ankwargs)

                sizer, sargs, skwargs = self.sizers.get(idx, defaultsizer)
                if sizer is not None:
                    strat._addsizer(sizer, *sargs, **skwargs)

                strat._settz(tz)
                strat._start()

                for writer in self.runwriters:
                    if writer.p.csv:
                        writer.addheaders(strat.getwriterheaders())

            if not predata:
                for strat in runstrats:
                    strat.qbuffer(self._exactbars, replaying=self._doreplay)

            for writer in self.runwriters:
                writer.start()

            # Prepare timers
            self._timers = []
            self._timerscheat = []
            for timer in self._pretimers:
                # preprocess tzdata if needed
                timer.start(self.datas[0])

                if timer.params.cheat:
                    self._timerscheat.append(timer)
                else:
                    self._timers.append(timer)

            if self._dopreload and self._dorunonce:
                if self.p.oldsync:
                    self._runonce_old(runstrats)
                else:
                    self._runonce(runstrats)
            else:
                if self.p.oldsync:
                    self._runnext_old(runstrats)
                else:
                    self._runnext(runstrats)

            for strat in runstrats:
                strat._stop()

        self._broker.stop()

        if not predata:
            for data in self.datas:
                data.stop()

        for feed in self.feeds:
            feed.stop()

        for store in self.stores:
            store.stop()

        self.stop_writers(runstrats)

        if self._dooptimize and self.p.optreturn:
            # Results can be optimized
            results = list()
            for strat in runstrats:
                for a in strat.analyzers:
                    a.strategy = None
                    a._parent = None
                    for attrname in dir(a):
                        if attrname.startswith('data'):
                            setattr(a, attrname, None)

                oreturn = bt.OptReturn(strat.params, analyzers=strat.analyzers, strategycls=type(strat))
                results.append(oreturn)

            return results

        return runstrats

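The dopreloaddata method gains three parameters. If the data changes, set replacecache to True, or delete the backtrader_tmp.joblib file by hand.

def dopreloaddata(self, cachedata=True, replacecache=False, cachefile='backtrader_tmp.joblib'):
    """
    cachedata: whether to cache the data
    replacecache: whether to replace the existing cache
    cachefile: path of the cache file
    """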
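To make the three flags concrete, here is a minimal sketch of the same build-or-restore branching, written as a standalone function. It is an illustration under assumptions, not the article's implementation: it uses the standard library's `pickle` so that it runs without extra packages (the article's code calls `joblib.dump` and `joblib.load` at the same points), and `load_or_build`, `slow_build` and the cache file name are hypothetical.

```python
import os
import pickle
import tempfile


def load_or_build(build, cachefile, cachedata=True, replacecache=False):
    """Return the result of build(), reusing cachefile when allowed.

    Follows the branch order of dopreloaddata; `build` is a hypothetical
    stand-in for the expensive preload step.
    """
    if replacecache and os.path.exists(cachefile):
        os.remove(cachefile)           # force a rebuild on this call
    if not os.path.exists(cachefile):
        result = build()               # slow path: first load
        if cachedata:
            with open(cachefile, "wb") as fh:
                pickle.dump(result, fh)
        return result
    if cachedata:
        with open(cachefile, "rb") as fh:
            return pickle.load(fh)     # fast path: restore from the cache
    return build()                     # cache exists but caching is disabled


calls = []


def slow_build():
    """Pretend to be a slow data load and record each invocation."""
    calls.append(1)
    return {"bars": [1, 2, 3]}


path = os.path.join(tempfile.mkdtemp(), "demo_cache.pkl")
first = load_or_build(slow_build, path)                      # builds and caches
second = load_or_build(slow_build, path)                     # restores from cache
third = load_or_build(slow_build, path, replacecache=True)   # rebuilds
print(len(calls))
```

The builder runs on the first call and again on the `replacecache=True` call; the middle call is served from the file.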

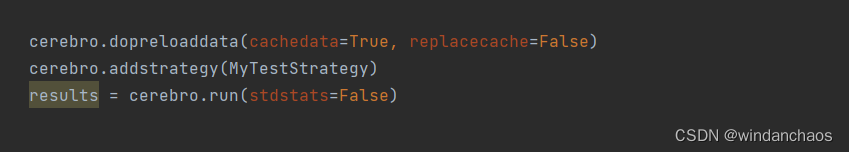

A typical run script:
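As a minimal sketch of the call order such a script would follow, assuming the myCerebro2 class above: add the feeds, call dopreloaddata, add the strategy, then run. The helper name `run_backtest` and the shape of `feeds` (a dict of name to ready-made data feed) are assumptions for illustration, not taken from the original listing.

```python
def run_backtest(cerebro, strategy_cls, feeds,
                 cachefile="backtrader_tmp.joblib", replacecache=False):
    """Wire up feeds, preload (or restore from cache), then run the strategy.

    `cerebro` is expected to be a myCerebro2 instance and `feeds` maps a name
    to an already-built backtrader data feed; both come from the caller.
    """
    for name, feed in feeds.items():
        cerebro.adddata(feed, name=name)
    # Loads and caches on the first call, restores from cachefile afterwards.
    cerebro.dopreloaddata(cachedata=True, replacecache=replacecache,
                          cachefile=cachefile)
    # myCerebro2.addstrategy clears earlier strategies, so reruns stay clean.
    cerebro.addstrategy(strategy_cls)
    return cerebro.run()
```

A call would look like `run_backtest(myCerebro2(), MyStrategy, {"000001": feed})`, where `MyStrategy` and `feed` are your own strategy class and data feed. Pass `replacecache=True` once after the underlying data changes.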

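Swap the Python interpreter for PyPy

PyPy brings in just-in-time (JIT) compilation similar to the JVM's: it compiles Python code to machine code at run time, which speeds up execution. It uses incremental garbage collection, which shortens GC pauses and improves responsiveness. PyPy also optimizes memory management, which reduces the memory footprint.

Everything has trade-offs, though. PyPy is not fully compatible with all Python code: some third-party libraries may not fully support it, or may need extra configuration and tweaking before they run properly on it. Sorting that out costs you time.

The official backtrader blog has a test of PyPy (see the link), and my column has a Chinese translation of that post (paid, click at your discretion). Judging by the results, the memory and performance gains are quite noticeable.

Rough figures: about 30% less memory and about 40% less execution time. The price is that you can no longer plot.

In that post the backtrader author encourages using pypy wherever possible:

Use pypy where possible

I have verified that the core idea of this article can be combined with PyPy. You can switch to PyPy first and then do the rest, or skip this section.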
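Since plotting is the price of running under PyPy, one script can serve both interpreters if it checks which one is active before plotting. The sketch below uses the standard library's `platform.python_implementation()`; the helper names `running_on_pypy` and `maybe_plot` are hypothetical.

```python
import platform


def running_on_pypy() -> bool:
    """True when the current interpreter is PyPy rather than CPython."""
    return platform.python_implementation() == "PyPy"


def maybe_plot(cerebro) -> bool:
    """Call cerebro.plot() only when not on PyPy; return whether it ran."""
    if running_on_pypy():
        print("PyPy detected: skipping plot")
        return False
    cerebro.plot()
    return True


print(platform.python_implementation())
```

After `cerebro.run()`, calling `maybe_plot(cerebro)` draws the chart under CPython and does nothing but print a note under PyPy.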

1. Speed up installation:

If you have not installed anaconda or do not know how to use it, look it up yourself; it is not difficult.

# speed up anaconda

conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/free/

conda config --set show_channel_urls yes

# speed up pip

pip config set global.index-url https://pypi.tuna.tsinghua.edu.cn/simple

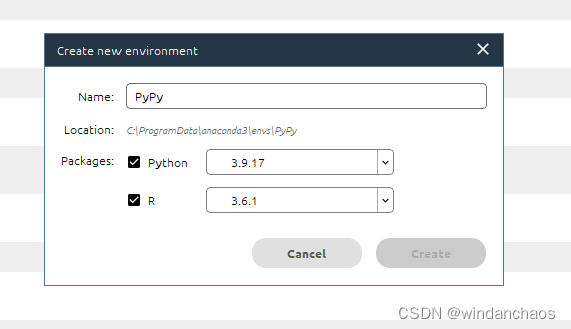

2、创建PyPy的执行环境

使用anaconda新建一个enviroment。如下图:

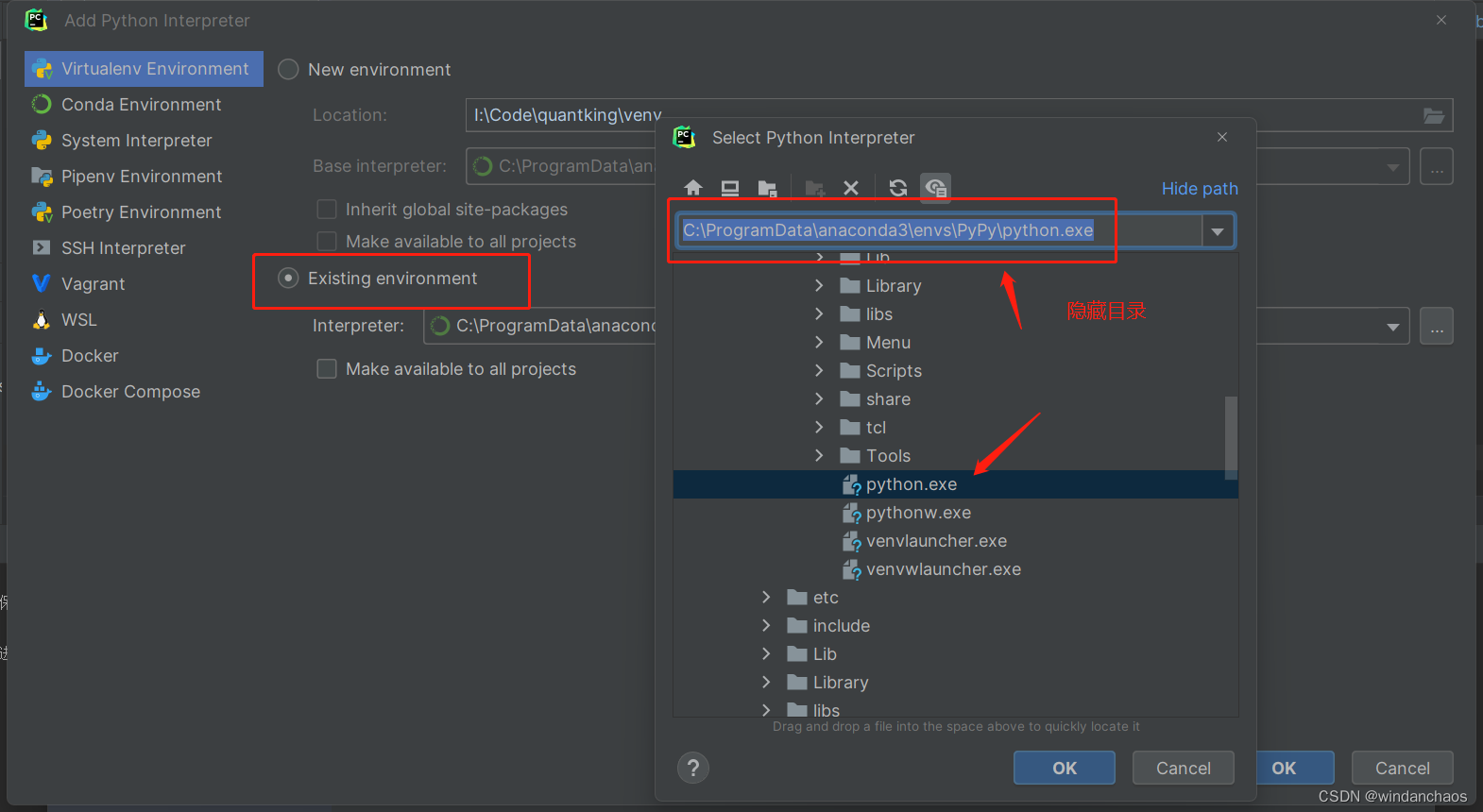

在pycharm中使用该enviroment即可,新建环境目录地址:C:\ProgramData\anaconda3\envs\PyPy\python.exe

3. Export your project's dependencies

In the pycharm terminal, run pip freeze > requirements.txt

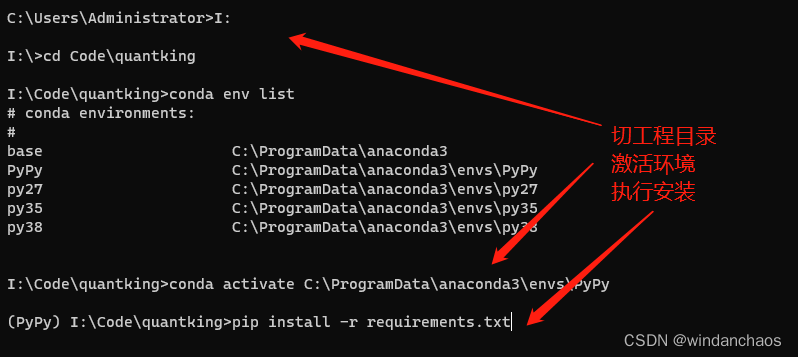

4. What remains is to activate the environment and install the dependencies

Switch to the PyPy environment; do this in conda (not in pycharm)

conda activate C:\ProgramData\anaconda3\envs\PyPy

pip install -r requirements.txt

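Other notes

- Indicator initialization time can be optimized; see the "Rework your Strategy class" section of the previous approach.

- This approach can be extended with a check for the cache file: if the file exists, skip the DataFeed loading step, which saves a few more seconds.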
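The second note can be sketched as follows, assuming feeds are supplied as zero-argument factories so that nothing is built on a cache hit. The helper name `add_feeds_unless_cached` and the `feed_factories` dict are hypothetical, and the sketch only makes sense when dopreloaddata is later called with cachedata=True, since that is the branch that restores the data from the file.

```python
import os


def add_feeds_unless_cached(cerebro, feed_factories,
                            cachefile="backtrader_tmp.joblib"):
    """Build and add data feeds only when no cache file is present.

    `feed_factories` maps a name to a zero-argument callable that builds the
    feed, so the expensive construction is skipped entirely on a cache hit.
    Returns the number of feeds that were built.
    """
    if os.path.exists(cachefile):
        return 0  # dopreloaddata will restore the data from the cache
    for name, make_feed in feed_factories.items():
        cerebro.adddata(make_feed(), name=name)
    return len(feed_factories)
```

On the first run every factory is called and the feeds are added as usual; on later runs the function returns 0 and the cached data is used instead.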
