Last updated: 2017.5.5

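I have gained a better understanding of some functions and classes, so the important parts have been revised.

A lot of material has been trimmed to keep the focus on the essentials. Only commonly used functions are covered here; consult the documentation for anything else you need.

The previous posts covered operations on basic quantities such as variables and constants, which means the most fundamental building blocks are in place. The next important question is how those quantities and operations are assembled into a larger whole, and how that whole is executed. That is the role of the computation graph (Graph) and the Session.

Because of space and formatting constraints, the test code shown here is incomplete. The full code is available on my GitHub: LearningTensorFlow/3.Graph_and_Session/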

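1. Graph

Official documentation: tf.Graph

Only the most basic and frequently used functions are listed here; refer to the documentation for more specific needs.

Ⅰ. Introduction

A TensorFlow computation is represented as a dataflow graph.

A graph contains a set of Operation objects, which are the computation nodes. The Tensor objects discussed earlier represent the units of data that flow between operations.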
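To make the relationship between operations and tensors concrete, here is a minimal sketch that lists the nodes of a small graph. It builds a fresh tf.Graph instead of using the default one (the as_default() context manager is explained further down) so that the result does not depend on anything defined earlier. The names "a", "b" and "total" are chosen purely for illustration.

```python
import tensorflow as tf

# Build the ops in a fresh graph so the node list is predictable.
g = tf.Graph()
with g.as_default():
    a = tf.constant(1, name="a")        # illustrative name
    b = tf.constant(2, name="b")        # illustrative name
    total = tf.add(a, b, name="total")  # illustrative name

# Operations are the computation nodes of the graph.
op_names = [op.name for op in g.get_operations()]
print(op_names)

# Each Tensor is produced by an Operation and consumed by others.
print(total.op.name)
input_names = [t.name for t in total.op.inputs]
print(input_names)
```

Each call such as tf.constant or tf.add returns a Tensor; the Operation that produces it is reachable through the tensor's op attribute.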

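A default graph is already created for you as soon as you start working, and you can access it by calling tf.get_default_graph().

To add an operation to the default graph, simply call one of the functions that defines a new operation, as in the following example: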

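    import tensorflow as tf
    import numpy as np

    c = tf.constant(value=1)
    # c was created without any context manager, so it lives in the default graph.
    assert c.graph is tf.get_default_graph()
    print(c.graph)
    print(tf.get_default_graph())

Another typical usage is the Graph.as_default() context manager, which overrides the current default graph for the lifetime of the context, as in the following example: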

import tensorflow as tf

import numpy as np

c=tf.constant(value=1)

#print(assert c.graph is tf.get_default_graph())

print(c.graph)

print(tf.get_default_graph())

g=tf.Graph()

print("g:",g)

with g.as_default():

d=tf.constant(value=2)

print(d.graph)

#print(g)

g2=tf.Graph()

print("g2:",g2)

g2.as_default()

e=tf.constant(value=15)

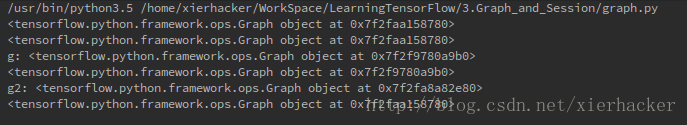

print(e.graph)结果:

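The example above creates a new graph g and makes it the default inside the with block, so the operations defined there are added to g rather than to the original default graph. Within that block you can think of g as the new default graph.

Note that the last constant, e, is not defined inside a with statement. Calling g2.as_default() on its own only returns a context manager and does not switch the default graph, so e ends up in the original default graph. In other words, to define something in a particular graph, define it inside that graph's with block.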

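A Graph instance supports an arbitrary number of "collections", each identified by name.

For convenience when building a large graph, a collection can store a group of related objects. For example, tf.Variable uses a collection (tf.GraphKeys.GLOBAL_VARIABLES) that holds all the variables created while the graph is being built.

You can also define additional collections by specifying a new name.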
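As a rough illustration of user-defined collections, the sketch below stores a tensor under a custom key and reads it back. The key "my_tensors" and the tensor name "w" are hypothetical names invented for this example, not anything TensorFlow defines.

```python
import tensorflow as tf

g = tf.Graph()
with g.as_default():
    w = tf.constant(3, name="w")  # illustrative tensor

    # "my_tensors" is a hypothetical collection name made up for this sketch.
    g.add_to_collection("my_tensors", w)
    # Collections behave like lists, not sets: adding w again keeps both entries.
    g.add_to_collection("my_tensors", w)

items = g.get_collection("my_tensors")
print(len(items))

keys = g.get_all_collection_keys()
print(keys)
```

Because duplicates are kept, code that iterates over a collection may need to deduplicate the entries itself if it added the same object more than once.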

Ⅱ. Properties

building_function: Returns True iff this graph represents a function.

finalized: True if this graph has been finalized.

graph_def_versions: The GraphDef version information of this graph.

seed: The graph-level random seed of this graph.

version: Returns a version number that increases as ops are added to the graph.
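The properties above can be inspected directly on a Graph instance. The following is a small sketch, assuming a freshly created graph with no seed set; it also calls Graph.finalize() to show how the finalized flag changes and that a finalized graph rejects new operations.

```python
import tensorflow as tf

g = tf.Graph()
version_before = g.version

with g.as_default():
    tf.constant(1)

# version grows as operations are added to the graph.
version_after = g.version
print(version_before, version_after)

print(g.building_function)  # False for an ordinary graph
print(g.seed)               # None when no graph-level seed has been set

print(g.finalized)          # False: the graph can still be modified
g.finalize()
print(g.finalized)          # True: the graph is now read-only

# Adding an op to a finalized graph raises RuntimeError.
rejected = False
try:
    with g.as_default():
        tf.constant(2)
except RuntimeError:
    rejected = True
print(rejected)
```

Finalizing a graph is a convenient way to make sure that no operations are added to it by accident once construction is complete.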

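Ⅲ. Functions

__init__()

Purpose: creates a new, empty graph.

add_to_collection(name,value)

Purpose: stores value in the collection with the given name (collections are not sets, so the same value may be added more than once).

Parameters: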

name:</

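This article introduces the concepts of Graph and Session in TensorFlow and what they are for. A Graph is a dataflow graph that contains operation objects and data nodes and is used to organize a computation. A Session is responsible for running the operations in a Graph. The article covers the introduction, properties, and common functions of Graph, as well as the properties and important functions of Session, with code examples that show how to create and use both.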
