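DMNet: "Dynamic Multi-Scale Filters for Semantic Segmentation"

Published at ICCV 2019.

Interestingly, DMNet and APCNet come from the same author, and the two architectures are very similar. In other words, the same author produced two designs in the same year and published one at ICCV and the other at CVPR.

My earlier article on APCNet: Semantic Segmentation Series 12: APCNet (PyTorch implementation).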

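Introduction

The DMNet paper targets a long-standing problem in segmentation: handling objects at multiple scales.

Earlier work approached it as follows:

- The DeepLab series uses dilated (atrous) convolution to enlarge the receptive field and capture multi-scale information. However, this is computationally expensive and tends to lose information in the local neighborhood. Dilated convolution also has a fairly serious weakness, the choice of dilation rate: if the rate is too large, small objects lose information, which leads to gridding artifacts and boundary effects.

- Inception runs several convolution kernels of different sizes in parallel to handle multiple scales. This also adds a fair amount of computation, and the extra parameters make overfitting more likely.

- The Pyramid Pooling Module (PPM) proposed in PSPNet is a fairly effective approach and the main source of ideas for both APCNet and this paper's model; both clearly carry traces of PPM. PPM captures multi-scale context by pooling at different sizes. This also loses some information (which goes without saying: pooling a feature map always discards information).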
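To make the dilation-rate trade-off from the DeepLab item concrete, here is a small sketch in plain Python. It relies on the standard relation that a kernel of size k with dilation d spans k + (k - 1)(d - 1) positions; the helper names `effective_kernel_size` and `tap_offsets` and the example rates are mine, not taken from the paper.

```python
def effective_kernel_size(kernel_size: int, dilation: int) -> int:
    """Window width covered by a dilated kernel along one axis."""
    return kernel_size + (kernel_size - 1) * (dilation - 1)


def tap_offsets(kernel_size: int, dilation: int) -> list:
    """Offsets (relative to the center) that the kernel actually samples."""
    half = (kernel_size - 1) // 2
    return [dilation * (i - half) for i in range(kernel_size)]


for d in (1, 2, 6, 12):
    span = effective_kernel_size(3, d)
    # Only 3 of the `span` positions along each axis are sampled;
    # everything in between is skipped, which is where gridding comes from.
    print(f"dilation={d:2d}  span={span:2d}  taps={tap_offsets(3, d)}")
```

The number of sampled positions stays at 3 per axis while the covered window grows, so an object smaller than the gap between taps can fall between them entirely.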

Having listed everything that is wrong with other people's methods, the authors then move on to how good their own is ↓

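Highlights of the paper:

- It proposes DMNet, an end-to-end model that performs semantic segmentation with dynamic multi-scale filters. Unlike earlier models whose filter parameters are fixed, DMNet's filters adapt to the content of each image.

- It proposes the Dynamic Convolutional Module (DCM) to capture multi-scale semantic information; each DCM handles the scale variation with respect to the input size.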

模型

(吐槽:与 APCNet一模一样的架构图)

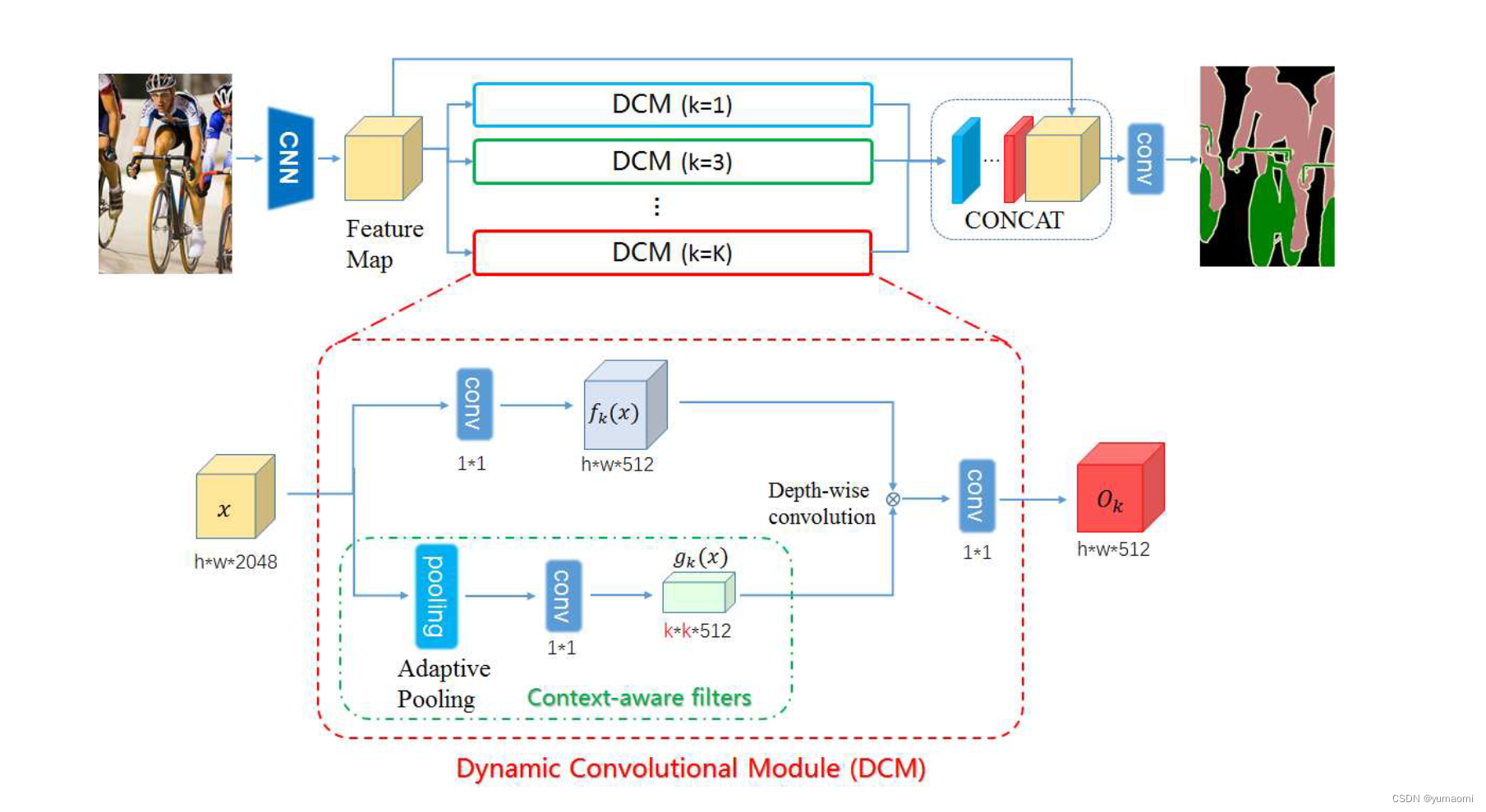

和PSPNet、APCNet的架构一样,只是设计了几个拥有不同k值的DCM模块。

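DCM module

So the key content of the paper is the design of the DCM module.

The paper puts it this way:

The goal of DCM is to capture a specific scale representation for the input image adaptively.

The DCM module is structured as follows; the main highlight is the context-aware filters in the green box.

The authors call this structure context-aware filters. These filters embed rich content and high-level semantic information, and they adapt to the input image so that they capture information at different scales within it.

The DCM structure is fairly simple and has two branches. In one branch, the input feature map x first passes through a convolution layer that reduces the number of channels. In the other branch, x goes through AdaptiveAvgPooling(k), where k is a user-chosen value, followed by a convolution that produces g_k(x) of size k×k×512. Finally, a depth-wise convolution fuses the two branches by using g_k(x) as the kernel applied to the reduced feature map, which gives the output of one DCM module. As in APCNet, the outputs of all modules are then fused, and that completes the network.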
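As a rough illustration of the two branches described above, the sketch below runs one DCM-style step on a single sample with PyTorch. The tensor sizes are deliberately tiny and hypothetical (the post uses 2048 input channels and 512 reduced channels), the layer names `reduce_conv` and `filter_gen` are mine, and only an odd k is handled here so that symmetric padding keeps the spatial size.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

k, in_channels, channels = 3, 16, 8           # illustrative sizes only
x = torch.randn(1, in_channels, 10, 10)       # stand-in for a backbone feature map

reduce_conv = nn.Conv2d(in_channels, channels, kernel_size=1)  # feature branch
filter_gen = nn.Conv2d(in_channels, channels, kernel_size=1)   # filter branch

feat = reduce_conv(x)                          # [1, C, H, W]
g_k = filter_gen(F.adaptive_avg_pool2d(x, k))  # [1, C, k, k], depends on the input

# Depth-wise convolution: channel i of `feat` is filtered by its own k x k kernel.
weight = g_k.view(channels, 1, k, k)
out = F.conv2d(feat, weight, padding=k // 2, groups=channels)

print(feat.shape, g_k.shape, out.shape)
```

Because `g_k` is computed from `x`, a different image yields different kernels, which is the "dynamic" part; an ordinary convolution would use the same learned weights for every image.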

Reproduction

backbone-ResNet50

    import torch
    import torch.nn as nn


    class BasicBlock(nn.Module):
        # Two 3x3 convs; the block outputs `planes` channels, so expansion is 1.
        # Not used by resnet50/resnet101 below, which are built from Bottleneck.
        expansion: int = 1

        def __init__(self, inplanes, planes, stride=1, downsample=None, groups=1,
                     base_width=64, dilation=1, norm_layer=None):
            super(BasicBlock, self).__init__()
            if norm_layer is None:
                norm_layer = nn.BatchNorm2d
            if groups != 1 or base_width != 64:
                raise ValueError("BasicBlock only supports groups=1 and base_width=64")
            if dilation > 1:
                raise NotImplementedError("Dilation > 1 not supported in BasicBlock")
            # Both self.conv1 and self.downsample layers downsample the input when stride != 1
            self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=3, stride=stride,
                                   padding=dilation, groups=groups, bias=False, dilation=dilation)
            self.bn1 = norm_layer(planes)
            self.relu = nn.ReLU(inplace=True)
            # conv2 keeps stride 1, otherwise the residual shapes would not match
            self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=1,
                                   padding=dilation, groups=groups, bias=False, dilation=dilation)
            self.bn2 = norm_layer(planes)
            self.downsample = downsample
            self.stride = stride

        def forward(self, x):
            identity = x

            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)

            out = self.conv2(out)
            out = self.bn2(out)

            if self.downsample is not None:
                identity = self.downsample(x)

            out += identity
            out = self.relu(out)
            return out

    class Bottleneck(nn.Module):
        # 1x1 -> 3x3 -> 1x1 convs; the last 1x1 expands the channels by 4
        expansion = 4

        def __init__(self, inplanes, planes, stride=1, downsample=None,
                     groups=1, base_width=64, dilation=1, norm_layer=None):
            super(Bottleneck, self).__init__()
            if norm_layer is None:
                norm_layer = nn.BatchNorm2d
            width = int(planes * (base_width / 64.0)) * groups
            # Both self.conv2 and self.downsample layers downsample the input when stride != 1
            self.conv1 = nn.Conv2d(inplanes, width, kernel_size=1, stride=1, bias=False)
            self.bn1 = norm_layer(width)
            self.conv2 = nn.Conv2d(width, width, kernel_size=3, stride=stride, bias=False,
                                   padding=dilation, dilation=dilation)
            self.bn2 = norm_layer(width)
            self.conv3 = nn.Conv2d(width, planes * self.expansion, kernel_size=1, stride=1, bias=False)
            self.bn3 = norm_layer(planes * self.expansion)
            self.relu = nn.ReLU(inplace=True)
            self.downsample = downsample
            self.stride = stride

        def forward(self, x):
            identity = x

            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)

            out = self.conv2(out)
            out = self.bn2(out)
            out = self.relu(out)

            out = self.conv3(out)
            out = self.bn3(out)

            if self.downsample is not None:
                identity = self.downsample(x)

            out += identity
            out = self.relu(out)
            return out

    class ResNet(nn.Module):

        def __init__(
                self, block, layers, num_classes=1000, zero_init_residual=False, groups=1,
                width_per_group=64, replace_stride_with_dilation=None, norm_layer=None):
            super(ResNet, self).__init__()
            if norm_layer is None:
                norm_layer = nn.BatchNorm2d
            self._norm_layer = norm_layer

            self.inplanes = 64
            # Starts at 2 here (torchvision starts at 1), so the 3x3 convs
            # in every stage are dilated.
            self.dilation = 2
            if replace_stride_with_dilation is None:
                # each element in the tuple indicates if we should replace
                # the 2x2 stride with a dilated convolution instead
                replace_stride_with_dilation = [False, False, False]
            if len(replace_stride_with_dilation) != 3:
                raise ValueError(
                    "replace_stride_with_dilation should be None "
                    f"or a 3-element tuple, got {replace_stride_with_dilation}"
                )
            self.groups = groups
            self.base_width = width_per_group
            self.conv1 = nn.Conv2d(3, self.inplanes, kernel_size=7, stride=2, padding=3, bias=False)
            self.bn1 = norm_layer(self.inplanes)
            self.relu = nn.ReLU(inplace=True)
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            # Stage strides are 1, 1, 2, 1, so the overall output stride is 8
            self.layer1 = self._make_layer(block, 64, layers[0])
            self.layer2 = self._make_layer(block, 128, layers[1], stride=1, dilate=replace_stride_with_dilation[0])
            self.layer3 = self._make_layer(block, 256, layers[2], stride=2, dilate=replace_stride_with_dilation[1])
            self.layer4 = self._make_layer(block, 512, layers[3], stride=1, dilate=replace_stride_with_dilation[2])
            # avgpool and fc only exist so ImageNet checkpoints load; forward() skips them
            self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
            self.fc = nn.Linear(512 * block.expansion, num_classes)

            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    nn.init.kaiming_normal_(m.weight, mode="fan_out", nonlinearity="relu")
                elif isinstance(m, (nn.BatchNorm2d, nn.GroupNorm)):
                    nn.init.constant_(m.weight, 1)
                    nn.init.constant_(m.bias, 0)

            # Zero-initialize the last BN in each residual branch,
            # so that the residual branch starts with zeros, and each residual block behaves like an identity.
            # This improves the model by 0.2~0.3% according to https://arxiv.org/abs/1706.02677
            if zero_init_residual:
                for m in self.modules():
                    if isinstance(m, Bottleneck):
                        nn.init.constant_(m.bn3.weight, 0)  # type: ignore[arg-type]
                    elif isinstance(m, BasicBlock):
                        nn.init.constant_(m.bn2.weight, 0)  # type: ignore[arg-type]

        def _make_layer(
            self,
            block,
            planes,
            blocks,
            stride=1,
            dilate=False,
        ):
            norm_layer = self._norm_layer
            downsample = None
            previous_dilation = self.dilation
            if dilate:
                # Unlike torchvision, the stride is kept as passed in;
                # only the dilation grows.
                self.dilation *= stride
            if stride != 1 or self.inplanes != planes * block.expansion:
                # Project the shortcut so its shape matches the block output
                downsample = nn.Sequential(
                    nn.Conv2d(self.inplanes, planes * block.expansion, kernel_size=1, stride=stride, bias=False),
                    norm_layer(planes * block.expansion))

            layers = []
            # The first block of a stage may change stride and channel count
            layers.append(
                block(
                    self.inplanes, planes, stride, downsample, self.groups, self.base_width, previous_dilation, norm_layer
                )
            )
            self.inplanes = planes * block.expansion
            for _ in range(1, blocks):
                layers.append(
                    block(
                        self.inplanes,
                        planes,
                        groups=self.groups,
                        base_width=self.base_width,
                        dilation=self.dilation,
                        norm_layer=norm_layer,
                    )
                )

            return nn.Sequential(*layers)

        def _forward_impl(self, x):
            x = self.conv1(x)
            x = self.bn1(x)
            x = self.relu(x)
            x = self.maxpool(x)

            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            x = self.layer4(x)

            # Return the layer4 feature map; the classification head is skipped
            return x

        def forward(self, x):
            return self._forward_impl(x)

        # The factory functions live on the class and are called as ResNet.resnet50(...)
        @staticmethod
        def _resnet(block, layers, pretrained_path=None, **kwargs):
            model = ResNet(block, layers, **kwargs)
            if pretrained_path is not None:
                # strict=False tolerates keys that do not match the checkpoint
                model.load_state_dict(torch.load(pretrained_path, map_location="cpu"), strict=False)
            return model

        @staticmethod
        def resnet50(pretrained_path=None, **kwargs):
            return ResNet._resnet(Bottleneck, [3, 4, 6, 3], pretrained_path, **kwargs)

        @staticmethod
        def resnet101(pretrained_path=None, **kwargs):
            return ResNet._resnet(Bottleneck, [3, 4, 23, 3], pretrained_path, **kwargs)

DMNet
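Before the DMNet code, a quick arithmetic check connects the backbone to the segmentation head: multiplying the strides of the stem and the four stages gives the overall output stride, which is the factor the head has to upsample by. The sketch below uses the stride values from the ResNet code in this post (they differ from the torchvision defaults); the names `STAGE_STRIDES`, `output_stride` and `feature_map_size` are illustrative only.

```python
# Strides as set in the ResNet definition in this post.
STAGE_STRIDES = {
    "conv1": 2,
    "maxpool": 2,
    "layer1": 1,
    "layer2": 1,
    "layer3": 2,
    "layer4": 1,
}


def output_stride(strides: dict) -> int:
    """Product of all stage strides."""
    total = 1
    for s in strides.values():
        total *= s
    return total


def feature_map_size(input_size: int, strides: dict) -> int:
    """Spatial size after the backbone, assuming input_size is divisible by the output stride."""
    return input_size // output_stride(strides)


print(output_stride(STAGE_STRIDES))          # 8
print(feature_map_size(224, STAGE_STRIDES))  # 28
```

With the 224×224 crops used by the dataset code, the backbone therefore yields a 28×28 map, and an 8× upsampling brings the prediction back to the input resolution.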

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    # ResNet is the backbone class defined in the previous section.


    class DCMModule(nn.Module):
        """Dynamic Convolutional Module for a single filter size k."""

        def __init__(self, in_channels=2048, channels=512, filter_size=1, fusion=True):
            super(DCMModule, self).__init__()
            self.filter_size = filter_size
            self.in_channels = in_channels
            self.channels = channels
            self.fusion = fusion

            # Feature branch: 1x1 conv that reduces the channel count
            self.reduce_Conv = nn.Conv2d(self.in_channels, self.channels, 1)
            # Filter branch: pool to k x k, then a 1x1 conv generates the dynamic filter
            self.filter = nn.AdaptiveAvgPool2d(self.filter_size)
            self.filter_gen_conv = nn.Conv2d(self.in_channels, self.channels, 1, 1, 0)

            # The next three layers are not used in forward(); they are kept
            # so that parameter names of existing checkpoints still match.
            self.residual_conv = nn.Conv2d(self.channels, self.channels, 1)
            self.global_info = nn.Conv2d(self.channels, self.channels, 1)
            self.gla = nn.Conv2d(self.channels, self.filter_size**2, 1, 1, 0)

            self.activate = nn.Sequential(nn.BatchNorm2d(self.channels),
                                          nn.ReLU()
                                          )
            if self.fusion:
                self.fusion_conv = nn.Conv2d(self.channels, self.channels, 1)

        def forward(self, x):
            b, c, h, w = x.shape
            # [b, channels, filter_size, filter_size]: one filter per sample and channel
            generated_filter = self.filter_gen_conv(self.filter(x)).view(
                b, self.channels, self.filter_size, self.filter_size)
            x = self.reduce_Conv(x)

            c = self.channels
            # Fold the batch into the channel axis so a single grouped conv
            # can apply a different filter to every (sample, channel) pair.
            # [1, b * c, h, w], c = self.channels
            x = x.view(1, b * c, h, w)
            # [b * c, 1, filter_size, filter_size]
            generated_filter = generated_filter.view(b * c, 1, self.filter_size,
                                                     self.filter_size)

            # Pad by filter_size - 1 in total so the output keeps size h x w;
            # for even filter sizes the extra pixel goes to the left/top.
            pad = (self.filter_size - 1) // 2
            if (self.filter_size - 1) % 2 == 0:
                p2d = (pad, pad, pad, pad)
            else:
                p2d = (pad + 1, pad, pad + 1, pad)
            x = F.pad(input=x, pad=p2d, mode='constant', value=0)

            # Depth-wise conv with the generated filters: [1, b * c, h, w]
            output = F.conv2d(input=x, weight=generated_filter, groups=b * c)
            # [b, c, h, w]
            output = output.view(b, c, h, w)
            output = self.activate(output)
            if self.fusion:
                output = self.fusion_conv(output)

            return output


    class DCMModuleList(nn.ModuleList):
        """Parallel DCM modules, one per filter size."""

        def __init__(self, filter_sizes=(1, 2, 3, 6), in_channels=2048, channels=512):
            super(DCMModuleList, self).__init__()
            self.filter_sizes = filter_sizes
            self.in_channels = in_channels
            self.channels = channels

            for filter_size in self.filter_sizes:
                self.append(
                    DCMModule(in_channels, channels, filter_size)
                )

        def forward(self, x):
            out = []
            for DCM in self:
                DCM_out = DCM(x)
                out.append(DCM_out)
            return out


    class DMNet(nn.Module):
        def __init__(self, num_classes):
            super(DMNet, self).__init__()
            self.num_classes = num_classes
            self.backbone = ResNet.resnet50(replace_stride_with_dilation=[1, 2, 4])
            self.in_channels = 2048
            self.channels = 512
            self.DMNet_pyramid = DCMModuleList(filter_sizes=[1, 2, 3, 6], in_channels=self.in_channels, channels=self.channels)
            # Fuse the four DCM outputs with the backbone feature map
            self.conv1 = nn.Sequential(
                nn.Conv2d(4 * self.channels + self.in_channels, self.channels, 3, padding=1),
                nn.BatchNorm2d(self.channels),
                nn.ReLU()
            )
            self.cls_conv = nn.Conv2d(self.channels, self.num_classes, 3, padding=1)

        def forward(self, x):
            x = self.backbone(x)
            DM_out = self.DMNet_pyramid(x)
            DM_out.append(x)
            x = torch.cat(DM_out, dim=1)
            x = self.conv1(x)
            # The backbone output stride is 8, so upsample by 8 back to the input size
            x = F.interpolate(x, size=(8 * x.shape[-2], 8 * x.shape[-1]),
                              mode='bilinear', align_corners=False)
            x = self.cls_conv(x)
            return x
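The padding branch in the DCM forward pass is easy to misread, so here is a small plain-Python check of what it does. The rule is copied from the code above; the helper names `dcm_padding` and `conv_output_size` are mine. It confirms that both odd and even filter sizes leave the spatial size unchanged, with even sizes padded asymmetrically.

```python
def dcm_padding(filter_size: int) -> tuple:
    """(left, right, top, bottom) zero padding, following the DCM forward pass."""
    pad = (filter_size - 1) // 2
    if (filter_size - 1) % 2 == 0:
        return (pad, pad, pad, pad)          # odd filter: symmetric padding
    return (pad + 1, pad, pad + 1, pad)      # even filter: one extra pixel left/top


def conv_output_size(size: int, filter_size: int, pad_before: int, pad_after: int) -> int:
    """Output length of a stride-1 convolution along one axis."""
    return size + pad_before + pad_after - filter_size + 1


for k in (1, 2, 3, 6):
    left, right, top, bottom = dcm_padding(k)
    out_w = conv_output_size(28, k, left, right)
    out_h = conv_output_size(28, k, top, bottom)
    print(f"k={k}: padding={dcm_padding(k)} -> output {out_h}x{out_w}")
```

The total padding per axis is always k - 1, which is exactly what a stride-1 convolution with a k×k kernel removes.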

Dataset-CamVid

    # Import libraries
    import os
    os.environ['CUDA_VISIBLE_DEVICES'] = '0'
    import torch
    import torch.nn as nn
    import torch.optim as optim
    import torch.nn.functional as F
    from torch.utils.data import Dataset, DataLoader, random_split
    from tqdm import tqdm
    import warnings
    warnings.filterwarnings("ignore")
    import os.path as osp
    import matplotlib.pyplot as plt
    from PIL import Image
    import numpy as np
    import albumentations as A
    from albumentations.pytorch.transforms import ToTensorV2

    # Fix the random seed for reproducibility
    torch.manual_seed(17)

    # Custom dataset: CamVidDataset
    class CamVidDataset(torch.utils.data.Dataset):
        """CamVid Dataset. Read images and masks and apply the transform pipeline.

        Args:
            images_dir (str): path to images folder
            masks_dir (str): path to segmentation masks folder
                (each mask must have the same file name as its image)
        """

        def __init__(self, images_dir, masks_dir):
            # Resize, random flips, normalization, conversion to tensors
            self.transform = A.Compose([
                A.Resize(224, 224),
                A.HorizontalFlip(),
                A.VerticalFlip(),
                A.Normalize(),
                ToTensorV2(),
            ])
            self.ids = os.listdir(images_dir)
            self.images_fps = [os.path.join(images_dir, image_id) for image_id in self.ids]
            self.masks_fps = [os.path.join(masks_dir, image_id) for image_id in self.ids]

        def __getitem__(self, i):
            # read data
            image = np.array(Image.open(self.images_fps[i]).convert('RGB'))
            mask = np.array(Image.open(self.masks_fps[i]).convert('RGB'))
            # The same spatial transforms are applied to the image and the mask
            transformed = self.transform(image=image, mask=mask)
            # The mask is still [H, W, 3] here; keep one channel as the label map
            return transformed['image'], transformed['mask'][:, :, 0]

        def __len__(self):
            return len(self.ids)
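`__getitem__` above keeps only channel 0 of the mask, which works when the label PNGs are single-channel index images (converting such an image to RGB simply replicates the index into all three channels). That is my assumption about the data layout, not something stated in the post. Under that assumption, a small NumPy check like the one below can catch label values that would make the loss fail: everything must be either a valid class index or the ignore value. The helper name `check_mask_labels` and the toy mask are hypothetical.

```python
import numpy as np


def check_mask_labels(label_map: np.ndarray, num_classes: int, ignore_index: int = 255) -> list:
    """Return label values that are neither a valid class index nor ignore_index."""
    values = np.unique(label_map)
    return [int(v) for v in values if v >= num_classes and v != ignore_index]


# Toy 4x4 index mask, replicated to three channels like a grayscale image converted to RGB.
index_mask = np.array([[0, 1, 1, 2],
                       [0, 255, 2, 2],
                       [5, 5, 32, 0],
                       [0, 0, 0, 31]], dtype=np.uint8)
rgb_mask = np.repeat(index_mask[:, :, None], 3, axis=2)

label_map = rgb_mask[:, :, 0]                         # what __getitem__ keeps
print(check_mask_labels(label_map, num_classes=33))   # []

bad_map = label_map.copy()
bad_map[0, 0] = 200                                   # neither a class nor 255
print(check_mask_labels(bad_map, num_classes=33))     # [200]
```

If the masks were color-coded instead, channel 0 would hold red intensities rather than class indices, and a check like this would flag it immediately.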

    # Dataset paths; adjust DATA_DIR to your own layout
    DATA_DIR = os.path.join('dataset', 'camvid')
    x_train_dir = os.path.join(DATA_DIR, 'train_images')
    y_train_dir = os.path.join(DATA_DIR, 'train_labels')
    x_valid_dir = os.path.join(DATA_DIR, 'valid_images')
    y_valid_dir = os.path.join(DATA_DIR, 'valid_labels')

    train_dataset = CamVidDataset(
        x_train_dir,
        y_train_dir,
    )
    val_dataset = CamVidDataset(
        x_valid_dir,
        y_valid_dir,
    )

    train_loader = DataLoader(train_dataset, batch_size=24, shuffle=True, drop_last=True)
    # Validation: no shuffling, and keep the last partial batch so every sample is scored
    val_loader = DataLoader(val_dataset, batch_size=24, shuffle=False, drop_last=False)

Training

    model = DMNet(num_classes=33).cuda()
    # model.load_state_dict(torch.load(r"checkpoints/resnet101-5d3b4d8f.pth"), strict=False)

    from d2l import torch as d2l
    from tqdm import tqdm
    import pandas as pd

    # Loss: multi-class cross-entropy; pixels labeled 255 are ignored
    lossf = nn.CrossEntropyLoss(ignore_index=255)
    # Optimizer: SGD
    optimizer = optim.SGD(model.parameters(), lr=0.1)
    # Halve the learning rate every 50 epochs
    scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=50, gamma=0.5, last_epoch=-1)

    # Train for 100 epochs
    epochs_num = 100
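With `step_size=50` and `gamma=0.5`, and one `scheduler.step()` per epoch as in the training loop that follows, the schedule above amounts to a single halving at the midpoint of the 100 epochs. The plain-Python sketch below spells out that arithmetic; `step_lr` is my own helper mirroring the closed form of a step schedule, not a PyTorch function.

```python
def step_lr(base_lr: float, epoch: int, step_size: int = 50, gamma: float = 0.5) -> float:
    """Learning rate used during `epoch` (0-based) under a step schedule."""
    return base_lr * gamma ** (epoch // step_size)


for epoch in (0, 49, 50, 99):
    print(f"epoch {epoch + 1:3d}: lr = {step_lr(0.1, epoch):.3f}")
```

So epochs 1 to 50 run at 0.1 and epochs 51 to 100 at 0.05.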

def train_ch13(net, train_iter, test_iter, loss, trainer, num_epochs,scheduler,

devices=d2l.try_all_gpus()):

timer, num_batches = d2l.Timer(), len(train_iter)

animator = d2l.Animator(xlabel='epoch', xlim=[1, num_epochs], ylim=[0, 1],

legend=['train loss', 'train acc', 'test acc'])

net = nn.DataParallel(net, device_ids=devices).to(devices[0])

loss_list = []

train_acc_list = []

test_acc_list = []

epochs_list = []

time_list = []

for epoch in range(num_epochs):

# Sum of training loss, sum of training accuracy, no. of examples,

# no. of predictions

metric = d2l.Accumulator(4)

for i, (features, labels) in enumerate(train_iter):

timer.start()

l, acc = d2l.train_batch_ch13(

net, features, labels.long(), loss, trainer, devices)

metric.add(l, acc, labels.shape[0], labels.numel())

timer.stop()

if (i + 1) % (num_batches // 5) == 0 or i == num_batches - 1:

animator.add(epoch + (i + 1) / num_batches,

(metric[0] / metric[2], metric[1] / metric[3],

None))

test_acc = d2l.evaluate_accuracy_gpu(net, test_iter)

animator.add(epoch + 1, (None, None, test_acc))

scheduler.step()

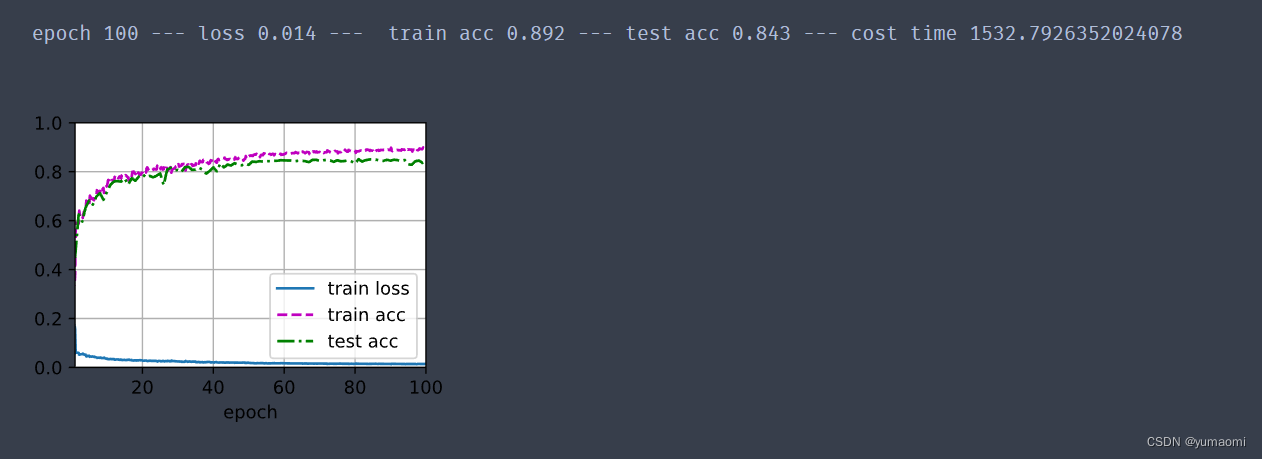

print(f"epoch {epoch+1} --- loss {metric[0] / metric[2]:.3f} --- train acc {metric[1] / metric[3]:.3f} --- test acc {test_acc:.3f} --- cost time {timer.sum()}")

#---------保存训练数据---------------

df = pd.DataFrame()

loss_list.append(metric[0] / metric[2])

train_acc_list.append(metric[1] / metric[3])

test_acc_list.append(test_acc)

epochs_list.append(epoch+1)

time_list.append(timer.sum())

df['epoch'] = epochs_list

df['loss'] = loss_list

df['train_acc'] = train_acc_list

df['test_acc'] = test_acc_list

df['time'] = time_list

df.to_excel("savefile/DMNet_camvid.xlsx")

#----------------保存模型-------------------

if np.mod(epoch+1, 5) == 0:

torch.save(model.state_dict(), f'checkpoints/DMNet_{epoch+1}.pth')

train_ch13(model, train_loader, val_loader, lossf, optimizer, epochs_num,scheduler)训练结果
