1. Basic Principles

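NCC (normalized cross-correlation) is a statistical measure of the correlation between two sets of sample data, with values in the range [-1, 1]. In an image, each pixel can be treated as an RGB value, so the whole image can be treated as a set of sample data. If a subset of it matches another set of samples exactly, the NCC value is 1, indicating a very high correlation. A value of -1 indicates a perfect negative correlation (for example, a gray-level inverted copy), and a value near 0 indicates no linear correlation. Template-matching image recognition is built on this principle.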
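To make the idea of "correlation between two sets of samples" concrete, here is a minimal sketch in standard C++ that computes the coefficient for two equal-length sample vectors. The function name `nccOfSamples` and the choice to return 0.0 for degenerate input are assumptions made for this illustration, not part of the original post.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Normalized cross-correlation (Pearson coefficient) of two sample sets.
// Returns 0.0 when the input is empty, the lengths differ, or either set
// has zero variance. The coefficient is undefined in those cases; 0.0 is
// only a convention chosen for this sketch.
double nccOfSamples(const std::vector<double>& a, const std::vector<double>& b) {
    if (a.empty() || a.size() != b.size()) return 0.0;
    const double n = static_cast<double>(a.size());

    // Means of both sample sets.
    double meanA = 0.0, meanB = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        meanA += a[i];
        meanB += b[i];
    }
    meanA /= n;
    meanB /= n;

    // Sum of cross products and sums of squared deviations.
    double cross = 0.0, sqA = 0.0, sqB = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        const double da = a[i] - meanA;
        const double db = b[i] - meanB;
        cross += da * db;
        sqA += da * da;
        sqB += db * db;
    }

    const double denom = std::sqrt(sqA * sqB);
    return denom > 0.0 ? cross / denom : 0.0;
}
```

Under these assumptions, samples that rise together (such as {1, 2, 3, 4} and {2, 4, 6, 8}) give a coefficient of 1, while samples that move in opposite directions give -1.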

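Image matching is the technique of searching a set of sub-images of a known reference image for the sub-image most similar to the real-time image, in order to recognize and locate a target. The main approaches are: methods based on image gray-level correlation, methods based on image features, and artificial-intelligence methods based on neural networks (still maturing).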

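Gray-level matching algorithms are simple and accurate. They mainly slide a one- or two-dimensional template over the image in the spatial domain, and the different algorithms differ chiefly in the choice of template and of correlation criterion. However, they are computationally expensive, which makes real-time processing difficult, and they are sensitive to gray-level changes, rotation, deformation, and occlusion.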

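Feature-based methods need comparatively little computation and tolerate gray-level changes, deformation, and occlusion well. They extract salient features such as points, lines, and regions from the original image as matching primitives and then match those features, but their matching accuracy is lower.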

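Gray-level matching algorithms are also commonly called correlation matching algorithms. They fall into two groups. One group emphasizes how different the scenes are, for example the squared difference method (SD) and the mean absolute difference method (MAD). The other group emphasizes how similar the scenes are, and is in turn divided into the product correlation method and the correlation coefficient method.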
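The two difference-based criteria can be sketched in a few lines of standard C++. This is only an illustration: the function names are hypothetical, the patches are assumed to be flattened into equal-length vectors, and returning -1.0 for invalid input is a convention chosen here.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Squared difference (SD): sum of squared pixel differences between two
// equal-size patches. 0 means identical; larger means more different.
// Returns -1.0 for empty or mismatched input (convention of this sketch).
double squaredDifference(const std::vector<double>& a, const std::vector<double>& b) {
    if (a.empty() || a.size() != b.size()) return -1.0;
    double sum = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        const double d = a[i] - b[i];
        sum += d * d;
    }
    return sum;
}

// Mean absolute difference (MAD): average of absolute pixel differences.
// Returns -1.0 for empty or mismatched input (convention of this sketch).
double meanAbsoluteDifference(const std::vector<double>& a, const std::vector<double>& b) {
    if (a.empty() || a.size() != b.size()) return -1.0;
    double sum = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        sum += std::fabs(a[i] - b[i]);
    }
    return sum / static_cast<double>(a.size());
}
```

Unlike a correlation coefficient, both scores grow with any brightness offset between the patches, which is one reason the similarity-based criteria are often preferred when lighting varies.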

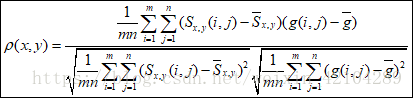

Today we look at the normalized cross-correlation coefficient method (NCC).

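For a template $g$ of size $m \times n$ and the equally sized sub-image $S_{x,y}$ of the searched image at position $(x, y)$, the correlation coefficient is

$$\rho(x,y) = \frac{\sum_{i=1}^{m}\sum_{j=1}^{n}\bigl(S_{x,y}(i,j)-\bar{S}_{x,y}\bigr)\bigl(g(i,j)-\bar{g}\bigr)}{\sqrt{\sum_{i=1}^{m}\sum_{j=1}^{n}\bigl(S_{x,y}(i,j)-\bar{S}_{x,y}\bigr)^{2}}\;\sqrt{\sum_{i=1}^{m}\sum_{j=1}^{n}\bigl(g(i,j)-\bar{g}\bigr)^{2}}}$$

where $\bar{g}$ is the gray-level mean of $g$ and $\bar{S}_{x,y}$ is the gray-level mean of the sub-image $S_{x,y}$:

$$\bar{g} = \frac{1}{mn}\sum_{i=1}^{m}\sum_{j=1}^{n} g(i,j), \qquad \bar{S}_{x,y} = \frac{1}{mn}\sum_{i=1}^{m}\sum_{j=1}^{n} S_{x,y}(i,j)$$

The correlation coefficient satisfies:

$$|\rho(x,y)| \le 1$$

It measures the similarity of the two on an absolute scale of [-1, 1]. The correlation coefficient describes how closely the two are linearly related: in general, the closer it is to 1, the closer the two are to a positive linear relationship.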
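A short worked derivation shows why the coefficient is described as measuring a linear relationship. Assume, for illustration only, that the window $S$ is an exact linear function of a non-constant template $g$, with gain $a \neq 0$ and offset $b$ (these symbols are introduced here and do not appear in the original text):

```latex
\begin{aligned}
S(i,j) &= a\,g(i,j) + b \\
\bar{S} &= a\,\bar{g} + b \\
S(i,j) - \bar{S} &= a\,\bigl(g(i,j) - \bar{g}\bigr) \\
\rho &= \frac{a \sum_{i,j} \bigl(g(i,j)-\bar{g}\bigr)^{2}}
             {\sqrt{a^{2} \sum_{i,j} \bigl(g(i,j)-\bar{g}\bigr)^{2}}\;
              \sqrt{\sum_{i,j} \bigl(g(i,j)-\bar{g}\bigr)^{2}}}
      = \frac{a}{|a|} = \operatorname{sgn}(a)
\end{aligned}
```

So a uniform brightness offset or a positive contrast gain leaves the coefficient at 1, while a negative gain (an inverted image) gives -1.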

2. C++ Implementation

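(1) Read the template pixels and compute their mean and standard deviation, along with the per-pixel differences from the mean.

(2) Using the template size, slide a window over the target image from left to right and top to bottom. After each one-pixel move, compute the NCC value between the pixels in the window and the template pixels and compare it with a threshold; if it exceeds the threshold, record the position.

(3) Using the recorded positions, mark the template matches with red rectangles.

(4) Display the result in the UI.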
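Steps (1) and (2) above can be sketched without any image library, which makes the sliding-window logic easier to follow. This sketch assumes 8-bit grayscale images stored row-major in a `std::vector<unsigned char>`; the names `NccMatch` and `findNccMatches` are hypothetical, and drawing the rectangles and showing the result (steps 3 and 4) are left out.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// One recorded match: top-left corner of the window and its NCC score.
struct NccMatch {
    int x;
    int y;
    double score;
};

// Slides the template over the image and records every window position
// whose NCC score exceeds `threshold`. Returns an empty list for invalid
// sizes or a flat (zero-variance) template.
std::vector<NccMatch> findNccMatches(const std::vector<unsigned char>& image, int imgW, int imgH,
                                     const std::vector<unsigned char>& templ, int tplW, int tplH,
                                     double threshold) {
    std::vector<NccMatch> matches;
    if (tplW <= 0 || tplH <= 0 || tplW > imgW || tplH > imgH) return matches;
    if (image.size() != static_cast<std::size_t>(imgW) * imgH) return matches;
    if (templ.size() != static_cast<std::size_t>(tplW) * tplH) return matches;
    const double n = static_cast<double>(templ.size());

    // Step 1: template mean, per-pixel differences, and sum of squares.
    double tplMean = 0.0;
    for (unsigned char v : templ) tplMean += v;
    tplMean /= n;
    std::vector<double> tplDiff(templ.size());
    double tplSq = 0.0;
    for (std::size_t i = 0; i < templ.size(); ++i) {
        tplDiff[i] = templ[i] - tplMean;
        tplSq += tplDiff[i] * tplDiff[i];
    }
    if (tplSq == 0.0) return matches;

    // Step 2: move the window one pixel at a time, left to right, top to bottom.
    for (int y = 0; y + tplH <= imgH; ++y) {
        for (int x = 0; x + tplW <= imgW; ++x) {
            double winMean = 0.0;
            for (int r = 0; r < tplH; ++r)
                for (int c = 0; c < tplW; ++c)
                    winMean += image[static_cast<std::size_t>(y + r) * imgW + (x + c)];
            winMean /= n;

            double cross = 0.0, winSq = 0.0;
            for (int r = 0; r < tplH; ++r) {
                for (int c = 0; c < tplW; ++c) {
                    const double d = image[static_cast<std::size_t>(y + r) * imgW + (x + c)] - winMean;
                    cross += d * tplDiff[static_cast<std::size_t>(r) * tplW + c];
                    winSq += d * d;
                }
            }
            if (winSq == 0.0) continue;  // flat window: NCC undefined, skip it

            const double score = cross / std::sqrt(winSq * tplSq);
            if (score > threshold) matches.push_back({x, y, score});
        }
    }
    return matches;
}
```

The window statistics are recomputed from scratch at every position, which matches the description above but costs time proportional to the image area times the template area. Faster variants typically reuse running sums, which is beyond this sketch.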

    #include <cmath>
    #include <iostream>
    #include <opencv2/opencv.hpp>

    using namespace cv;
    using namespace std;

    int main() {
        // Both paths point to the same file, as in the original listing;
        // change one of them to compare two different images.
        Mat image2 = imread("C:\\Users\\Administrator\\Desktop\\t2\\temp.png", IMREAD_GRAYSCALE);
        Mat image1 = imread("C:\\Users\\Administrator\\Desktop\\t2\\temp.png", IMREAD_GRAYSCALE);
        if (image1.empty() || image2.empty() ||
            image1.rows != image2.rows || image2.cols > image1.cols) {
            cerr << "Images failed to load or have incompatible sizes" << endl;
            return -1;
        }

        int overlap = image2.cols;
        double pearsonCorrelationCoefficientMax = -1.0;  // lowest possible coefficient
        int overlapMaxCorrelationCoefficient = 0;

        //for (int overlap = 350; overlap < image2.cols; overlap += 10)
        {
            // Mean gray level of the template region
            Mat imageTemp = image2;//(Rect(0, 0, overlap, image1.rows));
            long double tempTotalcount = 0;
            long double tempTotalPixel = 0;
            for (int i = 0; i < overlap; i++) {
                for (int j = 0; j < image1.rows; j++) {
                    tempTotalcount += 1;
                    tempTotalPixel += imageTemp.at<uchar>(j, i);
                }
            }
            long double tempAvg = tempTotalPixel / tempTotalcount;

            // Gray-level standard deviation of the template region
            long double tempSubstract = 0;
            for (int i = 0; i < overlap; i++) {
                for (int j = 0; j < image1.rows; j++) {
                    long double d = imageTemp.at<uchar>(j, i) - tempAvg;
                    tempSubstract += d * d;
                }
            }
            long double tempVariance = std::sqrt(tempSubstract / tempTotalcount);

            // Mean gray level of the base region (right-most `overlap` columns of image1)
            Mat imageBase = image1(Rect(image1.cols - overlap, 0, overlap, image1.rows));
            long double baseTotalcount = 0;
            long double baseTotalPixel = 0;
            for (int i = 0; i < overlap; i++) {
                for (int j = 0; j < image1.rows; j++) {
                    baseTotalcount += 1;
                    baseTotalPixel += imageBase.at<uchar>(j, i);
                }
            }
            long double baseAvg = baseTotalPixel / baseTotalcount;

            // Gray-level standard deviation of the base region
            long double baseSubstract = 0;
            for (int i = 0; i < overlap; i++) {
                for (int j = 0; j < image1.rows; j++) {
                    long double d = imageBase.at<uchar>(j, i) - baseAvg;
                    baseSubstract += d * d;
                }
            }
            long double baseVariance = std::sqrt(baseSubstract / baseTotalcount);

            // Covariance: average of the signed products of the two deviations
            long double dotMul = 0;
            for (int i = 0; i < overlap; i++) {
                for (int j = 0; j < image1.rows; j++) {
                    dotMul += (imageBase.at<uchar>(j, i) - baseAvg) * (imageTemp.at<uchar>(j, i) - tempAvg);
                }
            }
            long double dotMulAvg = dotMul / baseTotalcount;

            // The coefficient is undefined when either region is completely flat
            if (baseVariance > 0 && tempVariance > 0) {
                double pearsonCorrelationCoefficient = dotMulAvg / (baseVariance * tempVariance);
                if (pearsonCorrelationCoefficientMax < pearsonCorrelationCoefficient) {
                    pearsonCorrelationCoefficientMax = pearsonCorrelationCoefficient;
                    overlapMaxCorrelationCoefficient = overlap;
                }
            }
        }

        cout << "Maximum correlation coefficient: " << pearsonCorrelationCoefficientMax << endl;
        cout << "Overlap width at maximum correlation coefficient: " << overlapMaxCorrelationCoefficient << endl;
        imshow("image1", image1);
        waitKey(0);
        return 0;
    }
