Code

Remember to adjust the file paths before running.
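The script refers to three locations: `../datasets`, `logs`, and `./savedModel/AlexNet`. `torch.save` in particular does not create missing parent directories, so one way to prepare them up front is sketched below. The helper name `ensure_dirs` and its `root` parameter are assumptions made for illustration; they are not part of the original script.

```python
from pathlib import Path

# Paths used by the training script; change them to match your own layout.
DEFAULT_DIRS = ("../datasets", "logs", "./savedModel/AlexNet")


def ensure_dirs(dirs=DEFAULT_DIRS, root="."):
    """Create each directory (relative to root) if it does not exist yet.

    Hypothetical helper: returns the list of Path objects it ensured.
    """
    ensured = []
    for d in dirs:
        path = Path(root) / d
        # parents=True builds intermediate folders; exist_ok=True makes reruns safe
        path.mkdir(parents=True, exist_ok=True)
        ensured.append(path)
    return ensured
```

Calling `ensure_dirs()` once from the folder where the script is run should be enough; the call is safe to repeat.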

import torch
from torch import nn
from torch.nn import Sequential, Conv2d, ReLU, MaxPool2d, Linear, Dropout, Flatten
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter
from torchvision import datasets, transforms

# Prepare the datasets (images are resized to 224x224, then converted to tensors)
transform = transforms.Compose([transforms.Resize(size=224), transforms.ToTensor()])
train_data = datasets.FashionMNIST("../datasets", train=True, transform=transform, download=True)
test_data = datasets.FashionMNIST("../datasets", train=False, transform=transform, download=True)
# print(test_data.data.shape)  # raw images are 28x28; Resize upsamples them to 224x224

# Dataset sizes
train_data_size = len(train_data)
test_data_size = len(test_data)
# print(train_data_size)  # 60000
# print(test_data_size)  # 10000

# Load the datasets
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)

# TensorBoard
writer = SummaryWriter("logs")

# Select the device
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Build the neural network
class AlexNet(nn.Module):
    def __init__(self):
        super(AlexNet, self).__init__()
        self.conv1 = Sequential(
            Conv2d(1, 96, 11, 4, 3),  # in_channels=1 because FashionMNIST is grayscale
            ReLU(),
            MaxPool2d(3, 2)
        )
        self.conv2 = Sequential(
            Conv2d(96, 256, 5, 1, 2),
            ReLU(),
            MaxPool2d(3, 2)
        )
        self.conv3 = Sequential(
            Conv2d(256, 384, 3, 1, 1),
            ReLU(),
            Conv2d(384, 384, 3, 1, 1),
            ReLU(),
            Conv2d(384, 256, 3, 1, 1),
            ReLU(),
            MaxPool2d(3, 2)
        )
        self.fc = Sequential(
            Flatten(),  # flatten to one dimension per sample
            Linear(256*6*6, 4096),
            ReLU(),
            Dropout(0.5),
            Linear(4096, 4096),
            ReLU(),
            Dropout(0.5),
            Linear(4096, 10)  # FashionMNIST has 10 classes, so out_features differs from the original AlexNet
        )

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        x = self.fc(x)
        return x
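The `256*6*6` input size of the first `Linear` layer follows from the layer arguments and the 224x224 input. As a rough check, the sketch below traces the spatial size through the network using the standard output-size formula `floor((size + 2*padding - kernel) / stride) + 1`. The helper names `conv_out` and `alexnet_feature_size` are illustrative assumptions, not part of the original script.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a Conv2d or MaxPool2d layer (floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


def alexnet_feature_size(size=224):
    """Trace the spatial side length through the conv/pool stack defined above."""
    size = conv_out(size, 11, 4, 3)     # conv1:   224 -> 55
    size = conv_out(size, 3, 2)         # maxpool:  55 -> 27
    size = conv_out(size, 5, 1, 2)      # conv2:    27 -> 27
    size = conv_out(size, 3, 2)         # maxpool:  27 -> 13
    for _ in range(3):
        size = conv_out(size, 3, 1, 1)  # three 3x3 convs keep 13
    size = conv_out(size, 3, 2)         # maxpool:  13 -> 6
    return size


side = alexnet_feature_size(224)
flattened = 256 * side * side  # 256 channels leave the last conv block
```

With a 224x224 input, `side` comes out as 6 and `flattened` as 9216, which matches `Linear(256*6*6, 4096)`. A different input size would require changing that layer accordingly.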

# Create the model
model = AlexNet()
model.to(device)

# Loss function
loss_fn = nn.CrossEntropyLoss()
loss_fn.to(device)

# Optimizer
learning_rate = 0.001  # in my own test, a learning rate of 0.01 gave only 9.9% accuracy
optim = torch.optim.Adam(model.parameters(), lr=learning_rate)

# Training settings
train_step = 0
test_step = 0
epoch = 10

for i in range(epoch):

print("————————第{}轮训练开始————————".format(i + 1))

# 训练

model.train()

for data in train_dataloader:

imgs, targets = data

imgs = imgs.to(device)

targets = targets.to(device)

output = model(imgs)

loss = loss_fn(output, targets)

# 优化器优化模型参数

optim.zero_grad()

loss.backward()

optim.step()

train_step += 1

if train_step % 100 == 0:

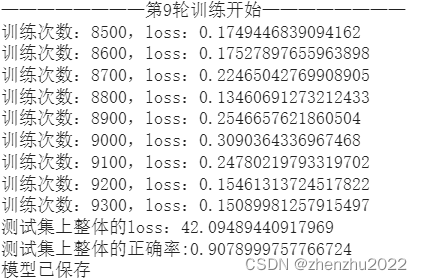

print("训练次数:{},loss:{}".format(train_step, loss))

writer.add_scalar("train_loss", loss, train_step)

# 测试

model.eval()

total_test_loss = 0

total_test_accuracy = 0

with torch.no_grad():

for data in test_dataloader:

imgs, targets = data

imgs = imgs.to(device)

targets = targets.to(device)

output = model(imgs)

loss = loss_fn(output, targets)

total_test_loss += loss

total_test_accuracy += (output.argmax(1) == targets).sum()

print("测试集上整体的loss:{}".format(total_test_loss))

print("测试集上整体的正确率:{}".format(total_test_accuracy/test_data_size))

writer.add_scalar("test_loss", total_test_loss, test_step)

writer.add_scalar("test_accuracy", total_test_accuracy/test_data_size, test_step)

test_step += 1

torch.save(model, "./savedModel/AlexNet/AlexNet_{}.pth".format(i))

print("模型已保存")

writer.close()训练和测试结果
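When reading the logged values, it may help to know how many batches feed into them. The script sums the per-batch loss over the whole test set, and `nn.CrossEntropyLoss` averages within each batch by default, so the reported test loss grows with the number of batches. The sketch below works out the batch counts for the dataset sizes and batch size used above; the helper names `batches_per_epoch` and `mean_test_loss` are hypothetical and only illustrate the arithmetic.

```python
import math


def batches_per_epoch(num_samples, batch_size):
    """Batches a DataLoader yields per epoch when drop_last is False (the default)."""
    return math.ceil(num_samples / batch_size)


def mean_test_loss(total_test_loss, num_batches):
    """Turn the summed per-batch loss into an average per batch."""
    return total_test_loss / num_batches


train_batches = batches_per_epoch(60000, 64)  # training steps per epoch
test_batches = batches_per_epoch(10000, 64)   # loss terms summed per evaluation
total_train_steps = train_batches * 10        # over the 10 epochs in the script
```

Dividing the printed test loss by `test_batches` gives a per-batch average that is easier to compare with the training loss, which is logged for a single batch at a time.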
