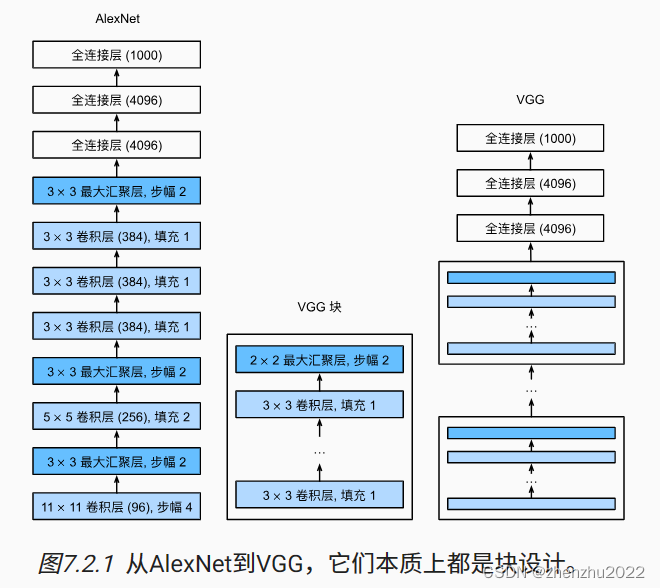

目录

模型

代码

import torch

from torch import nn

from torchvision import datasets, transforms

from torch.utils.data import DataLoader

from tqdm import tqdm

# 加载数据

train_dataset = datasets.FashionMNIST(root="../datasets/", transform=transforms.Compose([transforms.ToTensor(), transforms.Resize(224)]), train=True, download=True)

test_dataset = datasets.FashionMNIST(root="../datasets/", transform=transforms.Compose([transforms.ToTensor(), transforms.Resize(224)]), train=False, download=True)

train_dataloader = DataLoader(train_dataset, batch_size=128, shuffle=True)

test_dataloader = DataLoader(test_dataset, batch_size=128, shuffle=False)

# 定义 VGG 网络结构

class VGG11(nn.Module):

def __init__(self, conv_arch):

super().__init__()

self.conv_blks = []

self.conv_arch = conv_arch

self.conv_blocks()

self.convs = nn.Sequential(*self.conv_blks)

self.linears = nn.Sequential(nn.Flatten(),

nn.Linear(128 * 7 * 7, 4096), nn.ReLU(), nn.Dropout(0.5),

nn.Linear(4096, 4096), nn.ReLU(), nn.Dropout(0.5),

nn.Linear(4096, 10))

# 定义 vgg 卷积块函数

def vgg_block(self, num_convs, in_channels, out_channels):

layers = []

for _ in range(num_convs):

layers.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1))

layers.append(nn.ReLU())

in_channels = out_channels

layers.append(nn.MaxPool2d(kernel_size=2, stride=2))

return nn.Sequential(*layers)

def conv_blocks(self):

in_channels = 1

for num_convs, out_channels in self.conv_arch:

self.conv_blks.append(self.vgg_block(num_convs, in_channels, out_channels))

in_channels = out_channels

def forward(self, x):

x = self.convs(x)

x = self.linears(x)

return x

conv_arch = ((1, 16), (1, 32), (2, 64), (2, 128), (2, 128))

device = "cuda:0" if torch.cuda.is_available() else "cpu"

vgg11 = VGG11(conv_arch).to(device)

# 定义超参数

epochs = 10

lr = 1e-4

# 定义优化器

optimizer = torch.optim.Adam(vgg11.parameters(), lr = lr)

# 定义损失函数

loss_fn = nn.CrossEntropyLoss()

# 训练

for epoch in range(epochs):

train_loss_epoch = []

for train_data, labels in tqdm(train_dataloader):

train_data = train_data.to(device)

labels = labels.to(device)

y_hat = vgg11(train_data)

loss = loss_fn(y_hat, labels)

optimizer.zero_grad()

loss.backward()

optimizer.step()

train_loss_epoch.append(loss.cpu().detach().numpy())

print(f'epoch:{epoch}, train_loss:{sum(train_loss_epoch) / len(train_loss_epoch)}')

with torch.no_grad():

test_loss_epoch = []

right = 0

for test_data, labels in tqdm(test_dataloader):

test_data = test_data.to(device)

labels = labels.to(device)

y_hat = vgg11(test_data)

loss = loss_fn(y_hat, labels)

test_loss_epoch.append(loss.cpu().detach().numpy())

right += (torch.argmax(y_hat, 1) == labels).sum()

acc = right / len(test_dataset)

print(f'test_loss:{sum(test_loss_epoch) / len(test_loss_epoch)}, acc:{acc}')

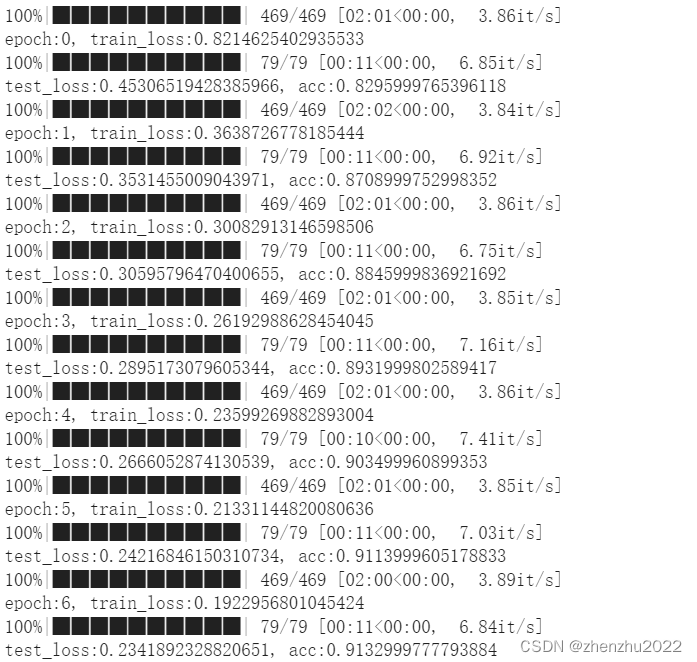

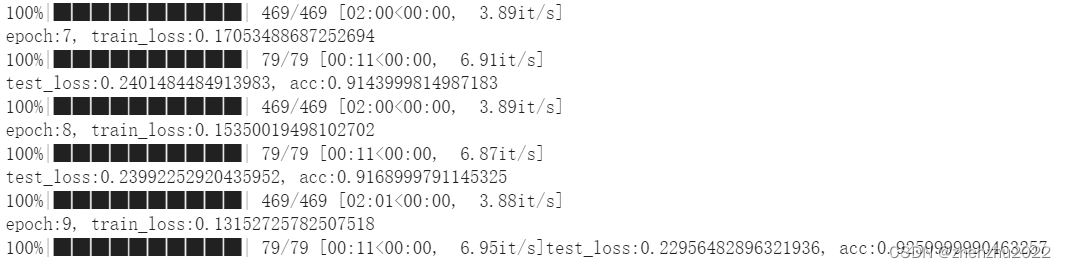

训练结果

总结

考虑到原VGG11网络的卷积通道数太大,导致训练时间过长的问题,因此我将通道数做了除4处理。

本文介绍了使用PyTorch实现的VGG11模型在FashionMNIST数据集上的训练过程,针对原始模型通道数过多导致训练时间过长的问题,对网络结构进行了调整。作者详细展示了数据加载、模型定义、训练步骤及性能评估结果。

本文介绍了使用PyTorch实现的VGG11模型在FashionMNIST数据集上的训练过程,针对原始模型通道数过多导致训练时间过长的问题,对网络结构进行了调整。作者详细展示了数据加载、模型定义、训练步骤及性能评估结果。

4668

4668

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?