Description

Background

- Early 90’s

- developed at University of Pennsylvania

- Most cited paper in NLP!!!

Size

- 40000 training sentences

- 2400 test sentences

Gerne

- Mostly Wall Street journal news stories and some spoken conversations

Importance

- Helped launch modern automatic methods

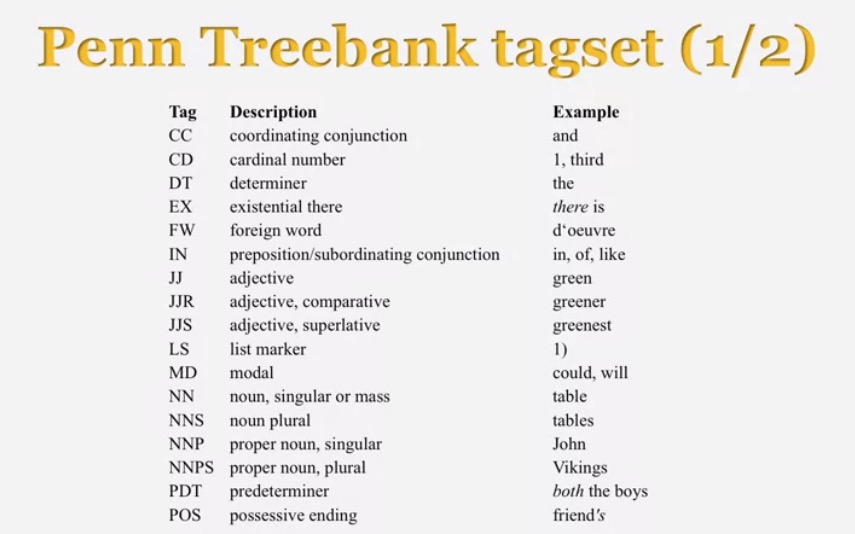

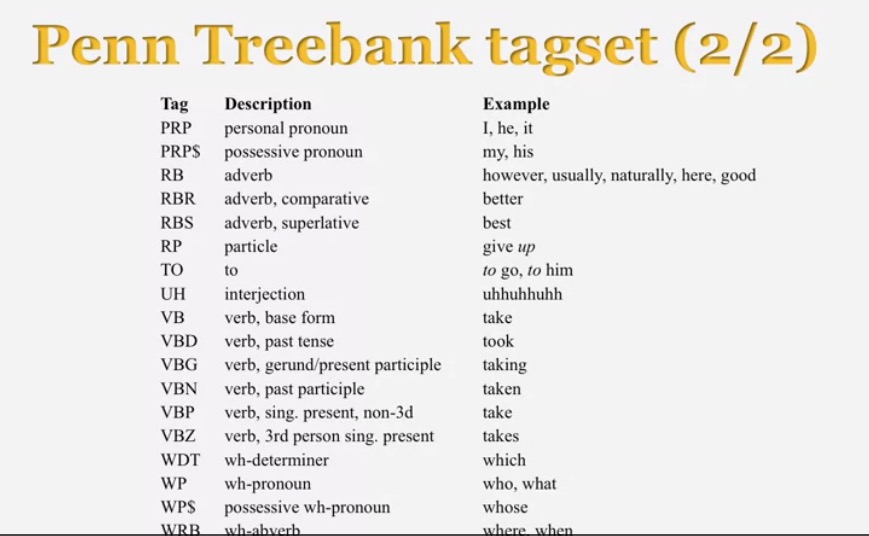

Penn Treebank tagsets

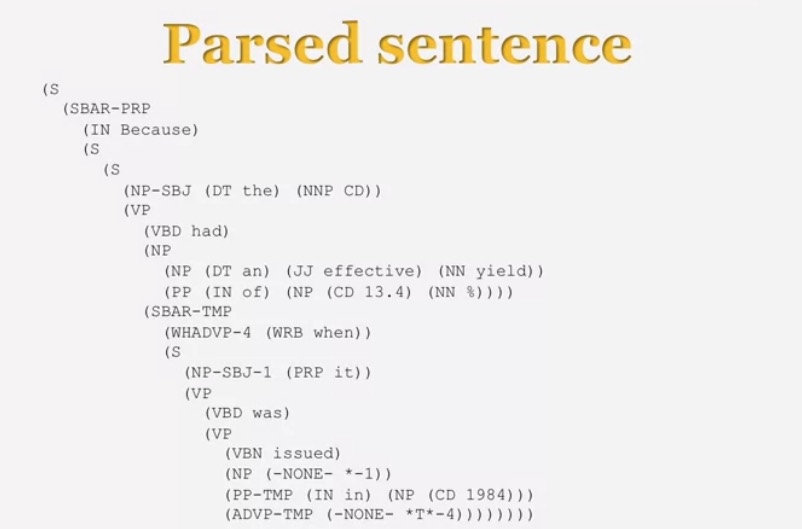

Peculiarities

- Complementizers

- e.g. “that”

- Gaps

- NONE

- SBAR

The use of Treebank

Disadvantages

- A lot more work to annotate 40+ sentences than to write a grammar

Advantages

-Statistics about different constituents and phenomena

- training and evaluating systems

- multilingual version

Evaluation methodology

Classification tasks

- Document retrieval

- POS tagging

- Parsing

Data split

- Training

- Dev-test

- Test

Baseline

- dumb baseline

- intelligent baseline

- human performance(oracle)

New methods

Evaluation methods

- Accuracy

- Precision and recall

Multiple references

- Interjudge agreement

Kappa

κ=P(A)−P(E)1−P(E)

- Agreement vs. expected agreement

-P(A) is the level of agreements of the judges

- P(E) is the expected probability of agreement by chance

Parsing evaluations

- Precision and recall

- Labeled precision and recall

- F1 score

- Crossing brackets

2941

2941

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?