Probabilistic language model

- Assign a probability to a sentence

- P(S)=P(w1,w2,...,wn)

- Different from deterministic methods using CFG

- The sum of the probabilities of all possible sentences must add up to 1

Predicting the next word

P(wn∣w1,w2,...,wn−1)

Uses of LM

- Speech recognition

- P(recognize speech) > P(wreck a nice beach)

- Text gerenation

- P(three houses) > P(three house)

- Spelling correction

- P(my cat eats fish) > P(my xat eats fish)

- Machine translation

- P(the blue house) > P(the house blue)

- OCR

- …

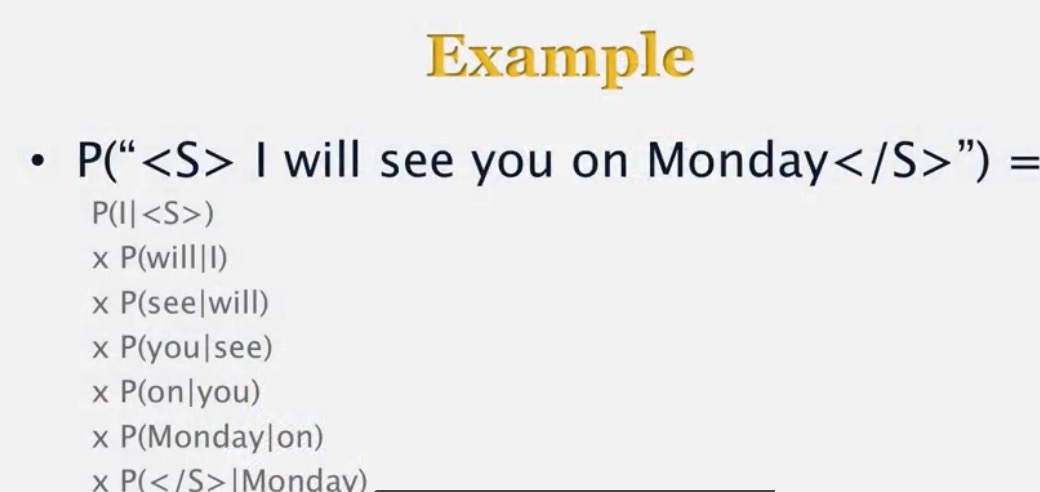

Probability of a sentence

P(S)=P(w1,w2,...,wn)=P(w1)P(w2∣w1)...P(wn∣w1,w2,...,wn−1)

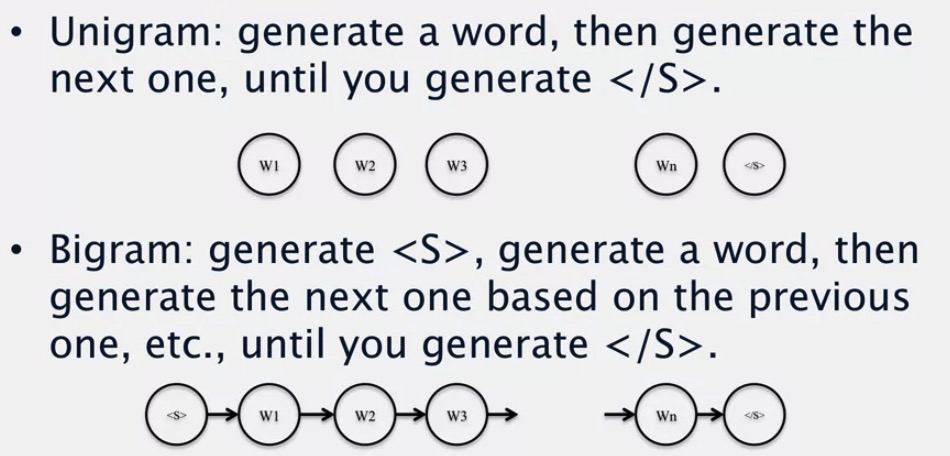

N-gram model

- Markov assumption: only look at limited history

- Unigram

- Bigram

- Trigram

- It is possible to go to 3, 4, 5 grams

N-grams

- Shakespeare unigrams

- 29524 types, approx 900k tokens

- Bigrams

- 346097 types

- Sparse data!!

Estimation

We cannot compute the conditional probability directly due to the data sparseness, so we have to use Markov Assumption.

MLE

Using training data

Unigram Example

- The word pizza appears 700 times in a corpus of

1×107

words

PML(pizza)=7001×107=7×10−5

Bigram Example

- The word with appears 1000 times in the corpus

- the phrase with spinach appears 6 times

PML(spinach∣with)=count(with spinach)count(with)=61000=0.006

The estimation is domain-based, and it may be not good for other gerenes

N-grams and regular languages

- N-grams are just one way to represent the weighted regular languages

Generative models

Engineering trick

- The MLE values are often on the order of

10−6

or less

- multiplying 20 such values gives a number on the order of 10−120

- this leads to underflow

- Use (base 10) logarithms instead

- 10−6 becomes -6

- Use sums instead of products

592

592

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?