def Conv1(in_planes, places, stride=2):
    # Stem: 7x7 convolution, batch norm, Swish activation, then 3x3 max pooling.
    return nn.Sequential(
        nn.Conv2d(in_channels=in_planes, out_channels=places, kernel_size=7, stride=stride, padding=3, bias=False),
        nn.BatchNorm2d(places),
        Swish(),
        nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
    )

class Flatten(nn.Module):
    def forward(self, x):
        # Collapse every dimension after the batch dimension: (N, C, H, W) -> (N, C*H*W).
        return x.view(x.shape[0], -1)

class SEBlock(nn.Module):
    def __init__(self, channels, ratio=16):
        super().__init__()
        # Squeeze to channels // ratio, then expand back to channels.
        mid_channels = channels // ratio
        self.se = nn.Sequential(
            nn.AdaptiveAvgPool2d(1),
            nn.Conv2d(channels, mid_channels, kernel_size=1, stride=1, padding=0, bias=True),
            Swish(),
            nn.Conv2d(mid_channels, channels, kernel_size=1, stride=1, padding=0, bias=True),
        )

    def forward(self, x):
        # Rescale each channel of x by a gate in (0, 1).
        return x * torch.sigmoid(self.se(x))
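To see what the gating in `SEBlock` does to a tensor, here is a minimal self-contained sketch. It assumes PyTorch is installed and uses `nn.SiLU` as a stand-in for the `Swish` module, which is not defined in this excerpt. `SEBlockDemo` is a hypothetical name used only for illustration.

```python
import torch
import torch.nn as nn


class SEBlockDemo(nn.Module):
    """Illustrative squeeze-and-excitation block (nn.SiLU stands in for Swish)."""

    def __init__(self, channels, ratio=16):
        super().__init__()
        mid_channels = channels // ratio
        self.se = nn.Sequential(
            nn.AdaptiveAvgPool2d(1),                           # squeeze: (N, C, H, W) -> (N, C, 1, 1)
            nn.Conv2d(channels, mid_channels, kernel_size=1),  # reduce channels
            nn.SiLU(),
            nn.Conv2d(mid_channels, channels, kernel_size=1),  # restore channels
        )

    def forward(self, x):
        gate = torch.sigmoid(self.se(x))  # one value in (0, 1) per sample and channel
        return x * gate                   # broadcast over H and W


torch.manual_seed(0)
x = torch.randn(2, 64, 8, 8)
block = SEBlockDemo(64)
y = block(x)
gate = torch.sigmoid(block.se(x))
print(y.shape)     # same shape as the input
print(gate.shape)  # one gate per sample and channel
```

The block never changes the tensor shape, which is why it can sit between the depthwise convolution and the projection without any extra bookkeeping.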

class MBConvBlock(nn.Module):
    def __init__(self, in_channels, out_channels, kernel_size=3, stride=1, expansion_factor=6):
        super(MBConvBlock, self).__init__()
        self.stride = stride
        self.expansion_factor = expansion_factor
        mid_channels = in_channels * expansion_factor

        self.bottleneck = nn.Sequential(
            Conv1x1BNAct(in_channels, mid_channels),  # 1x1 expansion
            ConvBNAct(mid_channels, mid_channels, kernel_size, stride, groups=mid_channels),  # depthwise convolution
            SEBlock(mid_channels),  # channel attention
            Conv1x1BN(mid_channels, out_channels)  # 1x1 projection
        )

        # The shortcut is only built for stride-1 blocks, where input and output share a spatial size.
        if self.stride == 1:
            self.shortcut = Conv1x1BN(in_channels, out_channels)

    def forward(self, x):
        out = self.bottleneck(x)
        out = (out + self.shortcut(x)) if self.stride == 1 else out
        return out
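The residual addition in `forward` only works when the bottleneck output and the shortcut output have the same shape, which is why it is limited to `stride == 1`. The arithmetic can be checked with the standard convolution output-size formula. This sketch assumes the depthwise convolution pads by `kernel_size // 2`; `ConvBNAct` is not shown in this excerpt, so that padding is an assumption, and `conv_out_size` is a hypothetical helper.

```python
def conv_out_size(size, kernel_size, stride, padding=None):
    """Spatial output size of a convolution: floor((n + 2p - k) / s) + 1."""
    if padding is None:
        padding = kernel_size // 2  # assumed "same"-style padding for odd kernels
    return (size + 2 * padding - kernel_size) // stride + 1


for k in (3, 5):
    same = conv_out_size(56, k, stride=1)
    halved = conv_out_size(56, k, stride=2)
    print(f"kernel {k}: stride 1 -> {same}, stride 2 -> {halved}")
```

Under that padding assumption, a stride-2 block halves the feature map, so a shortcut computed at the input resolution could not be added to it without downsampling as well.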

class EfficientNet(nn.Module):
    # subtype: (width coefficient, depth coefficient, input resolution, dropout rate)
    params = {
        'efficientnet_b0': (1.0, 1.0, 224, 0.2),
        'efficientnet_b1': (1.0, 1.1, 240, 0.2),
        'efficientnet_b2': (1.1, 1.2, 260, 0.3),
        'efficientnet_b3': (1.2, 1.4, 300, 0.3),
        'efficientnet_b4': (1.4, 1.8, 380, 0.4),
        'efficientnet_b5': (1.6, 2.2, 456, 0.4),
        'efficientnet_b6': (1.8, 2.6, 528, 0.5),
        'efficientnet_b7': (2.0, 3.1, 600, 0.5),
    }

    def __init__(self, subtype='efficientnet_b0', num_classes=1000):
        super(EfficientNet, self).__init__()
        self.width_coeff = self.params[subtype][0]
        self.depth_coeff = self.params[subtype][1]
        self.dropout_rate = self.params[subtype][3]
        self.depth_div = 8

        # Stem convolution followed by seven MBConv stages; channel counts and
        # block counts are the B0 baseline, scaled by the width and depth coefficients.
        self.stage1 = ConvBNAct(3, self._calculate_width(32), kernel_size=3, stride=2)
        self.stage2 = self.make_layer(self._calculate_width(32), self._calculate_width(16), kernel_size=3, stride=1, block=self._calculate_depth(1))
        self.stage3 = self.make_layer(self._calculate_width(16), self._calculate_width(24), kernel_size=3, stride=2, block=self._calculate_depth(2))
        self.stage4 = self.make_layer(self._calculate_width(24), self._calculate_width(40), kernel_size=5, stride=2, block=self._calculate_depth(2))
        self.stage5 = self.make_layer(self._calculate_width(40), self._calculate_width(80), kernel_size=3, stride=2, block=self._calculate_depth(3))
        self.stage6 = self.make_layer(self._calculate_width(80), self._calculate_width(112), kernel_size=5, stride=1, block=self._calculate_depth(3))
        self.stage7 = self.make_layer(self._calculate_width(112), self._calculate_width(192), kernel_size=5, stride=2, block=self._calculate_depth(4))
        self.stage8 = self.make_layer(self._calculate_width(192), self._calculate_width(320), kernel_size=3, stride=1, block=self._calculate_depth(1))

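        self.classifier = nn.Sequential(
            # Input width must match the scaled output of stage8 (equal to 320 only for B0).
            Conv1x1BNAct(self._calculate_width(320), 1280),
            nn.AdaptiveAvgPool2d(1),
            # Use the per-variant dropout rate rather than a hard-coded 0.2.
            nn.Dropout2d(self.dropout_rate),
            Flatten(),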
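The listing calls `_calculate_width` and `_calculate_depth`, but their bodies are not shown in this excerpt. As a rough guide, the sketch below uses the rounding rule commonly found in EfficientNet implementations: channels are scaled and rounded to a multiple of `depth_div` without dropping more than 10% below the scaled value, and block counts are scaled and rounded up. The standalone functions `calculate_width` and `calculate_depth` are hypothetical stand-ins, and the author's own helpers may differ.

```python
import math


def calculate_width(channels, width_coeff, depth_div=8):
    """Scale a channel count and round it to a multiple of depth_div (assumed rule)."""
    scaled = channels * width_coeff
    new_channels = max(depth_div, int(scaled + depth_div / 2) // depth_div * depth_div)
    if new_channels < 0.9 * scaled:  # do not round down by more than 10%
        new_channels += depth_div
    return int(new_channels)


def calculate_depth(repeats, depth_coeff):
    """Scale the number of blocks in a stage and round up (assumed rule)."""
    return int(math.ceil(repeats * depth_coeff))


# (width coefficient, depth coefficient) taken from the params table for B0 and B4
for name, (width_coeff, depth_coeff) in {"b0": (1.0, 1.0), "b4": (1.4, 1.8)}.items():
    widths = [calculate_width(c, width_coeff) for c in (32, 16, 24, 40)]
    depths = [calculate_depth(r, depth_coeff) for r in (1, 2, 2, 3)]
    print(name, widths, depths)
```

With a width coefficient of 1.0 the baseline channel counts pass through unchanged, so B0 uses exactly the numbers written in the stage definitions.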

自我介绍一下,小编13年上海交大毕业,曾经在小公司待过,也去过华为、OPPO等大厂,18年进入阿里一直到现在。

深知大多数Python工程师,想要提升技能,往往是自己摸索成长或者是报班学习,但对于培训机构动则几千的学费,着实压力不小。自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

因此收集整理了一份《2024年Python开发全套学习资料》,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友,同时减轻大家的负担。

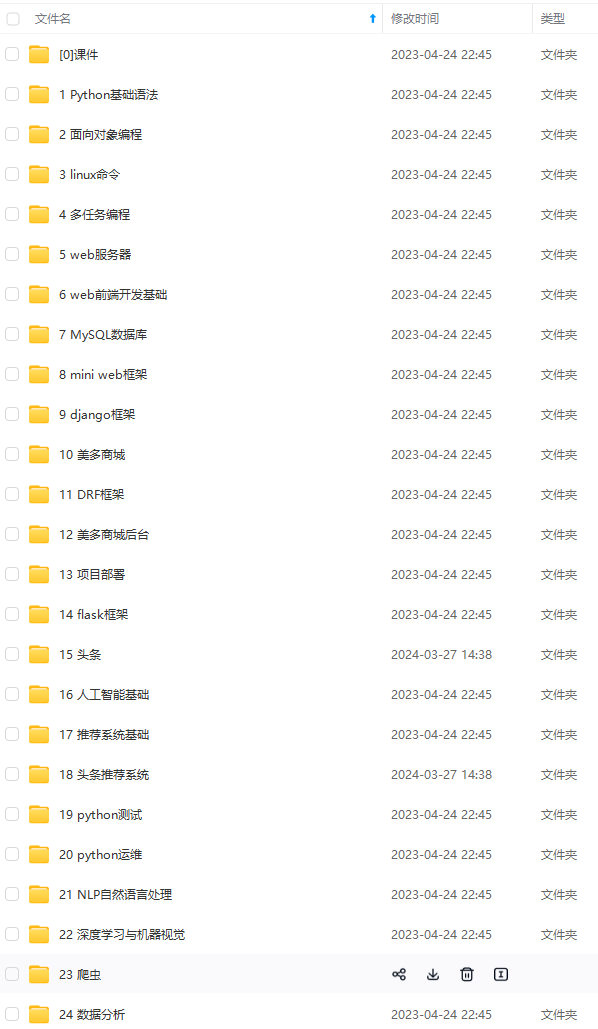

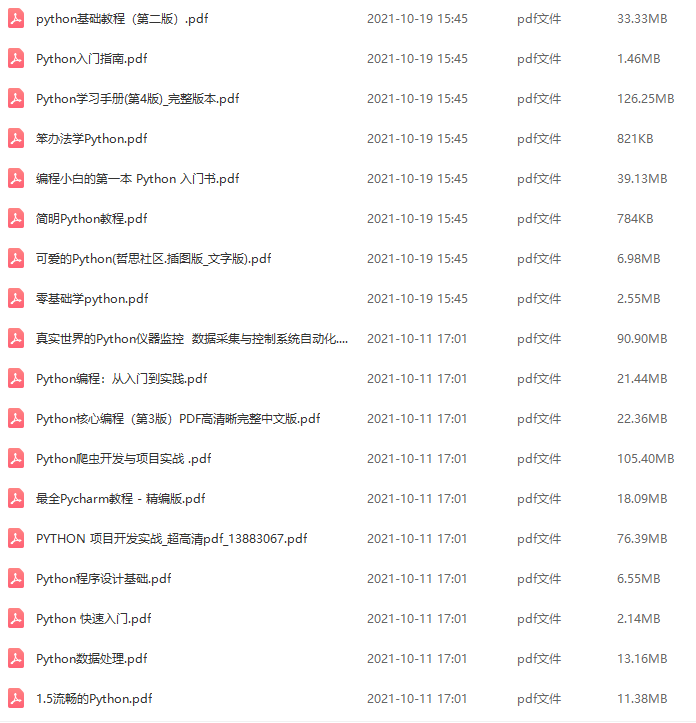

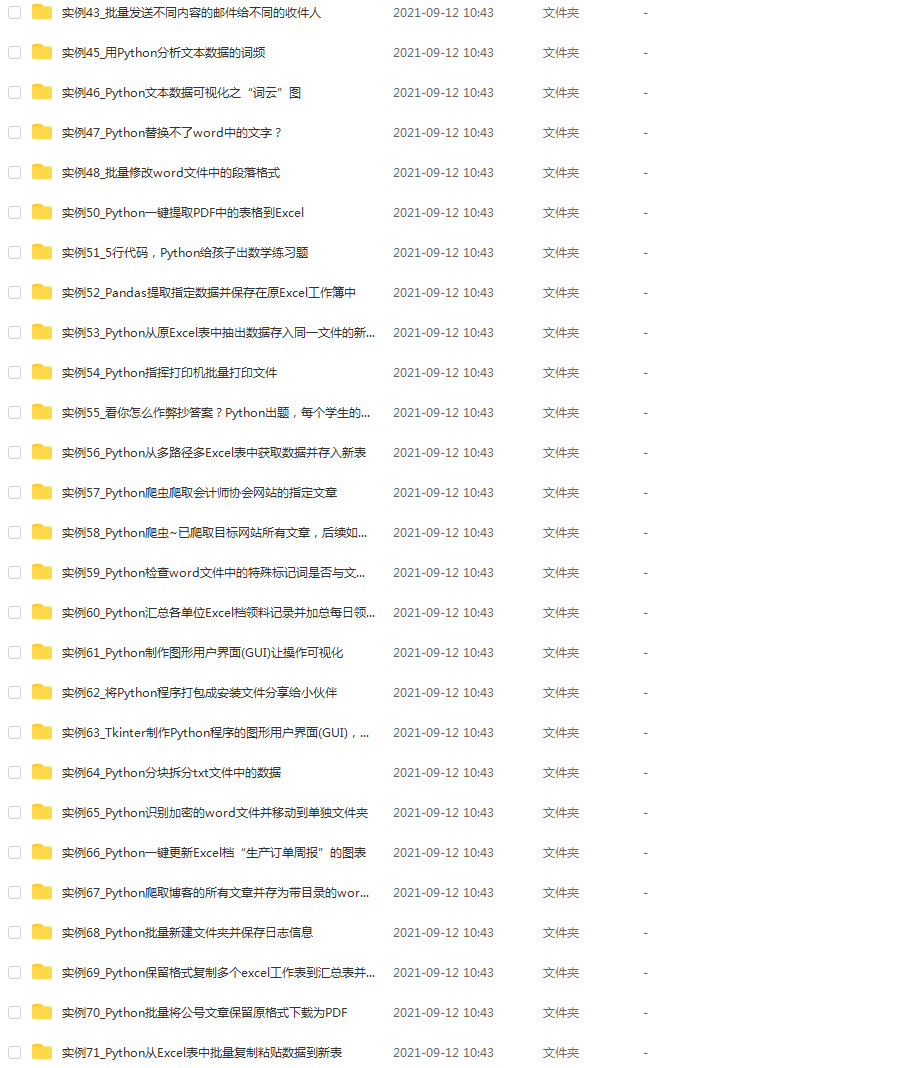

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,基本涵盖了95%以上Python开发知识点,真正体系化!

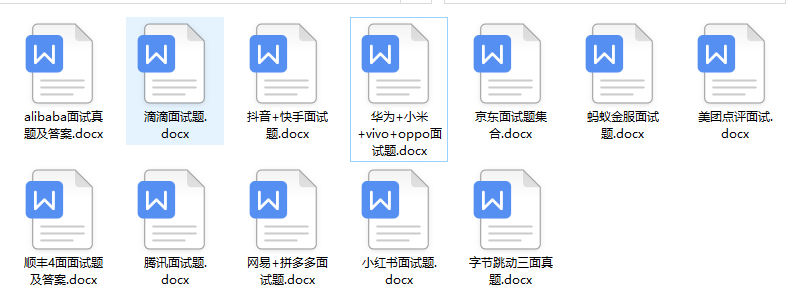

由于文件比较大,这里只是将部分目录大纲截图出来,每个节点里面都包含大厂面经、学习笔记、源码讲义、实战项目、讲解视频,并且后续会持续更新

如果你觉得这些内容对你有帮助,可以添加V获取:vip1024c (备注Python)

知识点,真正体系化!**

由于文件比较大,这里只是将部分目录大纲截图出来,每个节点里面都包含大厂面经、学习笔记、源码讲义、实战项目、讲解视频,并且后续会持续更新

如果你觉得这些内容对你有帮助,可以添加V获取:vip1024c (备注Python)

[外链图片转存中…(img-aJmjnXvd-1712714283518)]

3万+

3万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?