- 🍨 This post is a learning-record blog from the 🔗365-Day Deep Learning Training Camp

- 🍖 Original author: K同学啊

Code

import os, PIL, random, pathlib
import torch
import torch.nn as nn
import torchvision
from torchvision import transforms, datasets
from torchsummary import summary

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
device

data_dir = './data/p4-data/'

data_dir = pathlib.Path(data_dir)

data_paths = list(data_dir.glob('*'))

classeNames = [path.name for path in data_paths]  # folder name = class name; works with both "\\" and "/" separators

classeNames

['Monkeypox', 'Others']

total_datadir = './data/p4-data/'

# For more on transforms.Compose, see: https://blog.csdn.net/qq_38251616/article/details/124878863

train_transforms = transforms.Compose([
    transforms.Resize([224, 224]),  # resize every input image to the same size
    transforms.ToTensor(),          # convert a PIL Image or numpy.ndarray to a tensor scaled to [0, 1]
    transforms.Normalize(           # per-channel standardization, (x - mean) / std, which helps the model converge
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])  # these are the commonly used ImageNet channel statistics, not values computed from this dataset
])

total_data = datasets.ImageFolder(total_datadir,transform=train_transforms)

print(total_data)

print(total_data.class_to_idx)

Dataset ImageFolder

Number of datapoints: 2142

Root location: ./data/p4-data/

StandardTransform

Transform: Compose(

Resize(size=[224, 224], interpolation=bilinear, max_size=None, antialias=None)

ToTensor()

Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

)

{'Monkeypox': 0, 'Others': 1}

image_in_shape = total_data[0][0].shape

image_in_shape

train_size = int(0.8 * len(total_data))

test_size = len(total_data) - train_size

train_dataset, test_dataset = torch.utils.data.random_split(total_data, [train_size, test_size])

print(train_dataset, test_dataset)

print(train_size,test_size)

<torch.utils.data.dataset.Subset object at 0x000002D1F15DCD60> <torch.utils.data.dataset.Subset object at 0x000002D1F15DC1F0>

1713 429
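The two sizes printed above follow from plain integer arithmetic. A minimal sketch, assuming the 2142 images reported by `ImageFolder` and the `batch_size = 48` set later in this post; the helper name `split_sizes` is mine and does not appear in the original code:

```python
import math

def split_sizes(total, train_fraction=0.8):
    """Return (train_size, test_size) the same way the post computes them."""
    train_size = int(train_fraction * total)  # int() truncates 1713.6 down to 1713
    return train_size, total - train_size

train_size, test_size = split_sizes(2142)
print(train_size, test_size)

# Batches per epoch for batch_size = 48 (the last batch may be smaller)
batch_size = 48
print(math.ceil(train_size / batch_size), math.ceil(test_size / batch_size))
```

Note that `random_split` draws a new random partition on every run unless a seed is fixed, so test accuracies from separate runs are measured on different test sets.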

import torch.nn.functional as F

class Network_bn(nn.Module):
    def __init__(self):
        super(Network_bn, self).__init__()
        # nn.Conv2d arguments:
        #   in_channels:  number of input channels
        #   out_channels: number of output channels
        #   kernel_size:  size of the convolution kernel
        #   stride:       step size, default 1
        #   padding:      amount of padding, default 0
        self.conv11 = nn.Conv2d(in_channels=3, out_channels=12, kernel_size=5, stride=1, padding=0)
        self.bn11 = nn.BatchNorm2d(12)
        self.conv12 = nn.Conv2d(in_channels=12, out_channels=12, kernel_size=3, stride=1, padding=0)
        self.bn12 = nn.BatchNorm2d(12)
        self.conv2 = nn.Conv2d(in_channels=12, out_channels=12, kernel_size=3, stride=1, padding=0)
        self.bn2 = nn.BatchNorm2d(12)
        self.pool = nn.MaxPool2d(2, 2)
        self.conv4 = nn.Conv2d(in_channels=12, out_channels=24, kernel_size=5, stride=1, padding=0)
        self.bn4 = nn.BatchNorm2d(24)
        self.conv5 = nn.Conv2d(in_channels=24, out_channels=24, kernel_size=5, stride=1, padding=0)
        self.bn5 = nn.BatchNorm2d(24)
        self.fc1 = nn.Linear(24*50*50, len(classeNames))

    def forward(self, x):
        x = F.relu(self.bn11(self.conv11(x)))
        x = F.relu(self.bn12(self.conv12(x)))
        x = F.relu(self.bn2(self.conv2(x)))
        x = self.pool(x)
        x = F.relu(self.bn4(self.conv4(x)))
        x = F.relu(self.bn5(self.conv5(x)))
        x = self.pool(x)
        x = x.view(-1, 24*50*50)  # flatten to [N, 24*50*50] for the linear layer
        x = self.fc1(x)
        return x

device = "cuda" if torch.cuda.is_available() else "cpu"

print("Using {} device".format(device))

model = Network_bn().to(device)

print('Image in shape is: %s' %{image_in_shape})

print(summary(model, image_in_shape))

Output:

Using cuda device

Image in shape is: {torch.Size([3, 224, 224])}

==========================================================================================

Layer (type:depth-idx) Output Shape Param #

==========================================================================================

├─Conv2d: 1-1 [-1, 12, 220, 220] 912

├─BatchNorm2d: 1-2 [-1, 12, 220, 220] 24

├─Conv2d: 1-3 [-1, 12, 218, 218] 1,308

├─BatchNorm2d: 1-4 [-1, 12, 218, 218] 24

├─Conv2d: 1-5 [-1, 12, 216, 216] 1,308

├─BatchNorm2d: 1-6 [-1, 12, 216, 216] 24

├─MaxPool2d: 1-7 [-1, 12, 108, 108] --

├─Conv2d: 1-8 [-1, 24, 104, 104] 7,224

├─BatchNorm2d: 1-9 [-1, 24, 104, 104] 48

├─Conv2d: 1-10 [-1, 24, 100, 100] 14,424

├─BatchNorm2d: 1-11 [-1, 24, 100, 100] 48

├─MaxPool2d: 1-12 [-1, 24, 50, 50] --

├─Linear: 1-13 [-1, 2] 120,002

==========================================================================================

Total params: 145,346

Trainable params: 145,346

Non-trainable params: 0

Total mult-adds (M): 387.61

==========================================================================================

Input size (MB): 0.57

...

Forward/backward pass size (MB): 33.73

Params size (MB): 0.55

Estimated Total Size (MB): 34.86

==========================================================================================
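The output shapes and parameter counts in the summary can be checked by hand. The sketch below assumes the usual formulas for unpadded layers, output = (input + 2·padding − kernel) // stride + 1 and parameters = (k·k·C_in + 1)·C_out, and walks through the same layer sequence as `Network_bn`. The helper names are illustrative and not part of the original code.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output width/height of a convolution or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1

def conv_params(c_in, c_out, kernel):
    """Kernel weights plus one bias per output channel."""
    return (kernel * kernel * c_in + 1) * c_out

def trace_network(first_kernel=5, size=224, num_classes=2):
    """Follow the layer sequence of Network_bn; return (final size, total params)."""
    # (in_channels, out_channels, kernel) for conv11, conv12, conv2, conv4, conv5
    convs = [(3, 12, first_kernel), (12, 12, 3), (12, 12, 3), (12, 24, 5), (24, 24, 5)]
    total = 0
    for i, (c_in, c_out, k) in enumerate(convs):
        size = conv_out(size, k)
        total += conv_params(c_in, c_out, k) + 2 * c_out  # BatchNorm2d: scale + shift per channel
        if i in (2, 4):                                   # MaxPool2d(2, 2) after conv2 and conv5
            size = conv_out(size, 2, stride=2)
    total += (c_out * size * size + 1) * num_classes      # final Linear layer
    return size, total

print(trace_network(first_kernel=5))  # the 5x5 first layer used in the code above
print(trace_network(first_kernel=3))  # the 3x3 variant recorded in the training log
```

Both variants end at a 50x50 feature map, which is why `nn.Linear(24*50*50, ...)` works unchanged when the first kernel shrinks from 5 to 3.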

loss_fn = nn.CrossEntropyLoss()  # loss function

learn_rate = 7e-5  # learning rate

opt = torch.optim.Adam(model.parameters(),lr=learn_rate)

batch_size = 48

epochs = 50

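from torch.optim.lr_scheduler import LambdaLR
import numpy as np

# Multiplicative LR factor: 1.0 while epoch < 100, exponential decay afterwards.
# With epochs = 50 the decay branch is never reached, so the learning rate stays constant.
lr_lambda = lambda epoch: 1.0 if epoch < 100 else np.exp(0.1 * (10 - epoch))
scheduler = LambdaLR(opt, lr_lambda, last_epoch=-1)

# Training loop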

def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # size of the training set (1713 images here)
    num_batches = len(dataloader)   # number of batches (ceil(1713 / 48) = 36 here)

    train_loss, train_acc = 0, 0    # running loss and number of correct predictions

    for X, y in dataloader:         # fetch a batch of images and labels
        X, y = X.to(device), y.to(device)

        # Forward pass
        pred = model(X)             # network output (logits)
        loss = loss_fn(pred, y)     # cross-entropy between the output and the true labels y

        # Backward pass
        optimizer.zero_grad()       # reset the gradients
        loss.backward()             # backpropagate
        optimizer.step()            # update the parameters

        # Accumulate accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches

    return train_acc, train_loss

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # size of the test set (429 images here)
    num_batches = len(dataloader)   # number of batches (ceil(429 / 48) = 9 here)
    test_loss, test_acc = 0, 0

    # No training here, so disable gradient tracking to save memory and compute
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_loss += loss.item()
            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches

    return test_acc, test_loss

train_loss = []

train_acc = []

test_loss = []

test_acc = []

train_dl = torch.utils.data.DataLoader(train_dataset,
                                       batch_size=batch_size,
                                       shuffle=True,
                                       num_workers=1)

test_dl = torch.utils.data.DataLoader(test_dataset,
                                      batch_size=batch_size,
                                      shuffle=True,
                                      num_workers=1)

for X, y in test_dl:
    print("Shape of X [N, C, H, W]: ", X.shape)
    print("Shape of y: ", y.shape, y.dtype)
    break

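def scheduler_lr(optimizer, scheduler):
    """Record the learning rate per epoch; optimizer.step() must come before scheduler.step()."""
    lr_history = []
    for epoch in range(epochs):
        optimizer.step()  # no gradients exist yet, so this does not change the weights
        lr_history.append(optimizer.param_groups[0]['lr'])
        # print(optimizer.param_groups[0]['lr'])
        scheduler.step()  # adjust the learning rate
    return lr_history

# Note: this dry run advances the real scheduler by `epochs` steps before training starts.
# That is harmless here only because the LR factor is constant.
lr_history = scheduler_lr(opt, scheduler)
print(lr_history)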

if __name__ == '__main__':
    for epoch in range(epochs):
        model.train()
        epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, opt)

        model.eval()
        epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

        train_acc.append(epoch_train_acc)
        train_loss.append(epoch_train_loss)
        test_acc.append(epoch_test_acc)
        test_loss.append(epoch_test_loss)

        template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%,Test_loss:{:.3f}')
        print(
            template.format(epoch + 1, epoch_train_acc * 100, epoch_train_loss, epoch_test_acc * 100, epoch_test_loss))
    print('Done')

Output:

Shape of X [N, C, H, W]: torch.Size([48, 3, 224, 224])

Shape of y: torch.Size([48]) torch.int64

[7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05, 7e-05]
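The learning-rate history is flat because `LambdaLR` multiplies the base learning rate by `lr_lambda(epoch)`, and that factor is 1.0 for every epoch below 100. The sketch below re-evaluates the same rule in plain Python. The `decay_start` parameter is an assumption added for illustration, with 10 chosen because it is presumably what the `10 - epoch` term was written for.

```python
import math

def lr_factor(epoch, decay_start=100):
    """Same rule as lr_lambda in the post; decay_start is made a parameter here."""
    return 1.0 if epoch < decay_start else math.exp(0.1 * (10 - epoch))

base_lr = 7e-5
epochs = 50

# As written in the post: the decay branch is never reached within 50 epochs
as_written = [base_lr * lr_factor(e) for e in range(epochs)]
print(set(as_written))

# Hypothetical variant: constant for 10 epochs, then exponential decay
decayed = [base_lr * lr_factor(e, decay_start=10) for e in range(epochs)]
print(decayed[9], decayed[10], decayed[49])
```

With `decay_start=10` the factor is continuous at the switch point, since `exp(0.1 * (10 - 10))` equals 1.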

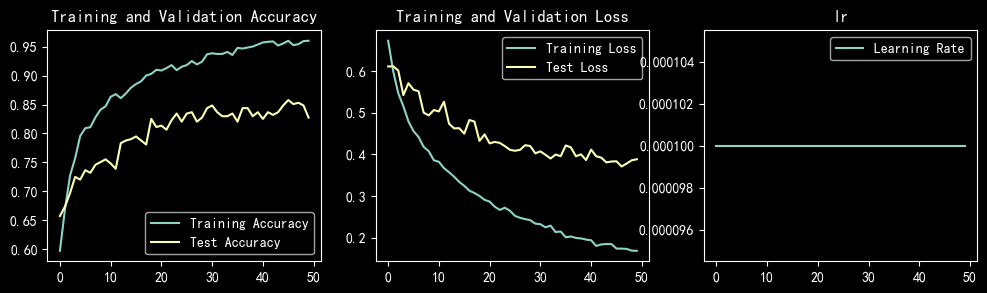

Epoch: 1, Train_acc:61.9%, Train_loss:0.704, Test_acc:76.5%,Test_loss:0.542

Epoch: 2, Train_acc:78.1%, Train_loss:0.463, Test_acc:83.2%,Test_loss:0.423

Epoch: 3, Train_acc:86.3%, Train_loss:0.361, Test_acc:79.3%,Test_loss:0.464

Epoch: 4, Train_acc:86.6%, Train_loss:0.327, Test_acc:86.0%,Test_loss:0.336

Epoch: 5, Train_acc:91.8%, Train_loss:0.244, Test_acc:87.4%,Test_loss:0.301

Epoch: 6, Train_acc:94.9%, Train_loss:0.191, Test_acc:87.4%,Test_loss:0.289

Epoch: 7, Train_acc:95.5%, Train_loss:0.168, Test_acc:90.0%,Test_loss:0.274

Epoch: 8, Train_acc:96.7%, Train_loss:0.142, Test_acc:90.2%,Test_loss:0.239

Epoch: 9, Train_acc:97.5%, Train_loss:0.123, Test_acc:90.4%,Test_loss:0.244

Epoch:10, Train_acc:98.3%, Train_loss:0.104, Test_acc:90.0%,Test_loss:0.235

Epoch:11, Train_acc:98.1%, Train_loss:0.103, Test_acc:86.9%,Test_loss:0.277

Epoch:12, Train_acc:98.4%, Train_loss:0.088, Test_acc:89.3%,Test_loss:0.252

Epoch:13, Train_acc:99.5%, Train_loss:0.064, Test_acc:90.9%,Test_loss:0.221

Epoch:14, Train_acc:99.4%, Train_loss:0.062, Test_acc:90.7%,Test_loss:0.220

Epoch:15, Train_acc:99.7%, Train_loss:0.055, Test_acc:90.4%,Test_loss:0.216

Epoch:16, Train_acc:99.9%, Train_loss:0.046, Test_acc:90.9%,Test_loss:0.239

Epoch:17, Train_acc:99.9%, Train_loss:0.039, Test_acc:91.6%,Test_loss:0.210

Epoch:18, Train_acc:99.9%, Train_loss:0.038, Test_acc:91.1%,Test_loss:0.216

Epoch:19, Train_acc:99.9%, Train_loss:0.039, Test_acc:87.4%,Test_loss:0.277

Epoch:20, Train_acc:100.0%, Train_loss:0.028, Test_acc:90.2%,Test_loss:0.217

Epoch:21, Train_acc:99.9%, Train_loss:0.029, Test_acc:90.2%,Test_loss:0.232

Epoch:22, Train_acc:99.9%, Train_loss:0.024, Test_acc:90.7%,Test_loss:0.208

Epoch:23, Train_acc:99.9%, Train_loss:0.022, Test_acc:92.1%,Test_loss:0.204

Epoch:24, Train_acc:100.0%, Train_loss:0.019, Test_acc:90.9%,Test_loss:0.215

Epoch:25, Train_acc:99.9%, Train_loss:0.021, Test_acc:90.9%,Test_loss:0.215

Epoch:26, Train_acc:99.9%, Train_loss:0.020, Test_acc:90.4%,Test_loss:0.253

Epoch:27, Train_acc:99.8%, Train_loss:0.020, Test_acc:88.8%,Test_loss:0.287

Epoch:28, Train_acc:100.0%, Train_loss:0.018, Test_acc:90.9%,Test_loss:0.215

Epoch:29, Train_acc:100.0%, Train_loss:0.016, Test_acc:90.9%,Test_loss:0.212

Epoch:30, Train_acc:100.0%, Train_loss:0.013, Test_acc:90.9%,Test_loss:0.232

Epoch:31, Train_acc:100.0%, Train_loss:0.012, Test_acc:91.1%,Test_loss:0.208

Epoch:32, Train_acc:100.0%, Train_loss:0.010, Test_acc:91.4%,Test_loss:0.213

Epoch:33, Train_acc:99.9%, Train_loss:0.014, Test_acc:91.1%,Test_loss:0.222

Epoch:34, Train_acc:100.0%, Train_loss:0.010, Test_acc:90.7%,Test_loss:0.226

Epoch:35, Train_acc:100.0%, Train_loss:0.010, Test_acc:90.0%,Test_loss:0.263

Epoch:36, Train_acc:100.0%, Train_loss:0.010, Test_acc:89.7%,Test_loss:0.231

Epoch:37, Train_acc:100.0%, Train_loss:0.010, Test_acc:90.9%,Test_loss:0.223

Epoch:38, Train_acc:100.0%, Train_loss:0.009, Test_acc:90.2%,Test_loss:0.221

Epoch:39, Train_acc:100.0%, Train_loss:0.008, Test_acc:91.1%,Test_loss:0.228

Epoch:40, Train_acc:100.0%, Train_loss:0.007, Test_acc:91.4%,Test_loss:0.224

Epoch:41, Train_acc:99.9%, Train_loss:0.009, Test_acc:90.9%,Test_loss:0.232

Epoch:42, Train_acc:100.0%, Train_loss:0.007, Test_acc:89.3%,Test_loss:0.244

Epoch:43, Train_acc:100.0%, Train_loss:0.006, Test_acc:91.4%,Test_loss:0.220

Epoch:44, Train_acc:100.0%, Train_loss:0.006, Test_acc:91.1%,Test_loss:0.220

Epoch:45, Train_acc:100.0%, Train_loss:0.005, Test_acc:90.7%,Test_loss:0.220

Epoch:46, Train_acc:100.0%, Train_loss:0.006, Test_acc:90.9%,Test_loss:0.214

Epoch:47, Train_acc:100.0%, Train_loss:0.006, Test_acc:91.1%,Test_loss:0.224

Epoch:48, Train_acc:100.0%, Train_loss:0.005, Test_acc:90.2%,Test_loss:0.237

Epoch:49, Train_acc:100.0%, Train_loss:0.005, Test_acc:90.7%,Test_loss:0.223

Epoch:50, Train_acc:100.0%, Train_loss:0.005, Test_acc:90.0%,Test_loss:0.229

Done
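In this log the training accuracy reaches 100% while the test accuracy keeps fluctuating between roughly 90% and 92%, so the last epoch is not the best one. A common remedy is to remember the epoch with the highest held-out accuracy. A minimal sketch, using test accuracies copied from epochs 21 to 25 of the log above; the function name `best_epoch` is hypothetical:

```python
def best_epoch(test_accs, first_epoch=1):
    """Return (epoch, accuracy) of the highest test accuracy; the earliest epoch wins ties."""
    best_idx = max(range(len(test_accs)), key=lambda i: (test_accs[i], -i))
    return first_epoch + best_idx, test_accs[best_idx]

# Test accuracies (%) copied from epochs 21-25 of the log above
accs_21_to_25 = [90.2, 90.7, 92.1, 90.9, 90.9]
print(best_epoch(accs_21_to_25, first_epoch=21))
```

In the training loop the same comparison could trigger `torch.save(model.state_dict(), PATH)` whenever a new best value appears. Strictly speaking, that selection should be made on a separate validation split rather than on the test set.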

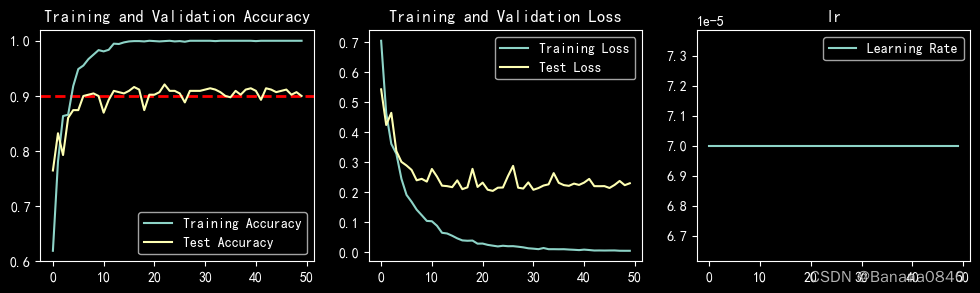

import matplotlib.pyplot as plt

import warnings

warnings.filterwarnings("ignore")  # suppress warnings

plt.rcParams['font.sans-serif'] = ['SimHei']  # font that can render Chinese labels

plt.rcParams['axes.unicode_minus'] = False  # render the minus sign correctly

plt.rcParams['figure.dpi'] = 100  # resolution

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))

plt.subplot(1, 3, 1)

plt.axhline(y=0.9, color='r', linestyle='--', linewidth=2)

plt.plot(epochs_range, train_acc, label='Training Accuracy')

plt.plot(epochs_range, test_acc, label='Test Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 3, 2)

plt.plot(epochs_range, train_loss, label='Training Loss')

plt.plot(epochs_range, test_loss, label='Test Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.subplot(1, 3, 3)

plt.plot(epochs_range, lr_history, label='Learning Rate')

plt.legend(loc='upper right')

plt.title('lr')

plt.show()

# Save the model
PATH = './p4_model.pth'  # file name for the saved parameters
torch.save(model.state_dict(), PATH)

# Load the parameters back into the model
model.load_state_dict(torch.load(PATH, map_location=device))

from PIL import Image

classes = list(total_data.class_to_idx)

def predict_one_image(image_path, model, transform, classes):
    test_img = Image.open(image_path).convert('RGB')
    # plt.imshow(test_img)  # show the image being predicted
    test_img = transform(test_img)
    img = test_img.to(device).unsqueeze(0)  # add the batch dimension

    model.eval()
    with torch.no_grad():  # inference only, no gradients needed
        output = model(img)

    _, pred = torch.max(output, 1)
    pred_class = classes[pred.item()]
    print(f'Predicted class: {pred_class}')

# Predict one image from the dataset
predict_one_image(image_path='./data/p4-data/Monkeypox/M01_01_00.jpg',
                  model=model,
                  transform=train_transforms,
                  classes=classes)

Predicted class: Monkeypox
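The model returns raw scores (logits), and the prediction is simply the index of the largest one. If a confidence value is wanted as well, a softmax turns the logits into probabilities. A minimal sketch in plain Python, where the logit values are hypothetical and not taken from the trained model:

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of raw scores."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

classes = ['Monkeypox', 'Others']
logits = [2.0, -1.0]  # hypothetical model output for one image
probs = softmax(logits)
pred = max(range(len(probs)), key=probs.__getitem__)
print(classes[pred], round(probs[pred], 3))
```

Inside `predict_one_image` the equivalent PyTorch call would be `torch.softmax(output, dim=1)`.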

Training log

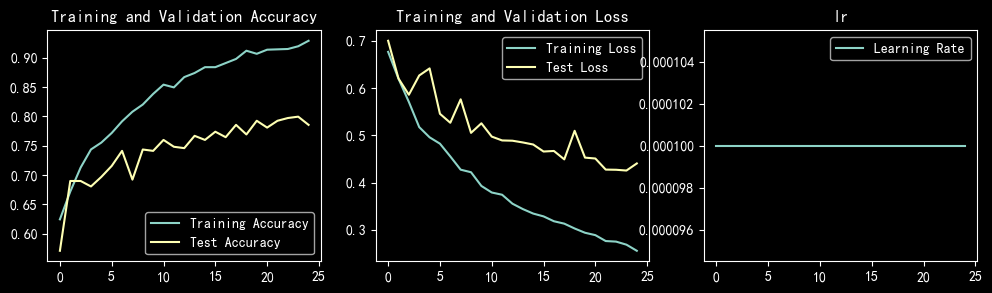

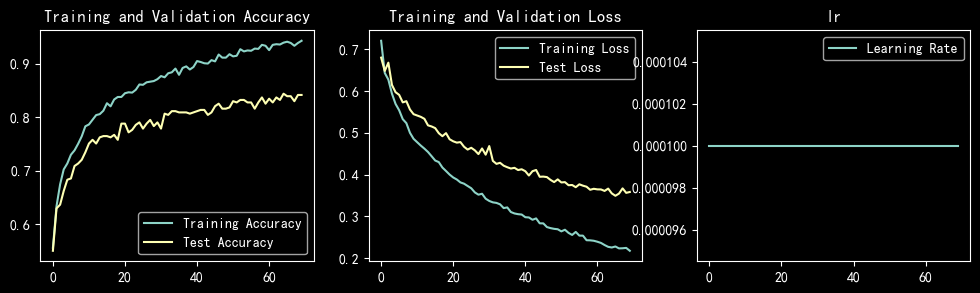

learn_rate = 1e-4, constant learning rate, epochs=25, batch_size = 32, SGD

learn_rate = 1e-4, constant learning rate, epochs=25, batch_size = 16, SGD

learn_rate = 1e-4, constant learning rate, epochs=50, batch_size = 16, SGD

learn_rate = 1e-4, constant learning rate, epochs=50, batch_size = 32, SGD

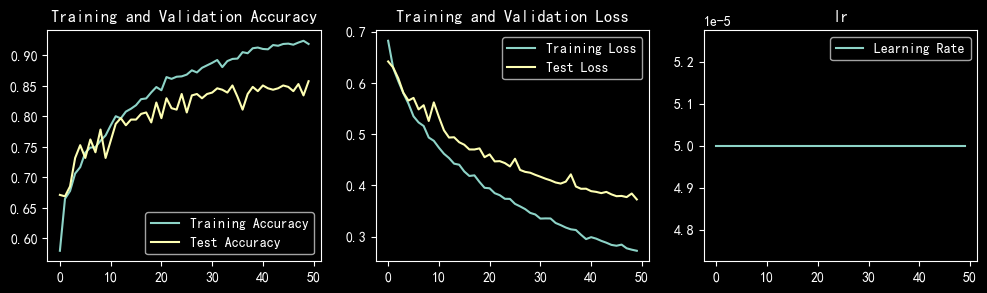

learn_rate = 5e-5, constant learning rate, epochs=50, batch_size = 32, SGD

learn_rate = 5e-5, constant learning rate, epochs=70, batch_size = 32, SGD

learn_rate = 5e-5, constant learning rate, epochs=50, batch_size = 64, SGD

learn_rate = 5e-5, constant learning rate, epochs=50, batch_size = 64, SGD

learn_rate = 5e-5, constant learning rate, epochs=50, batch_size = 64, SGD

After adjusting the network structure, test_accuracy reaches 85%.

conv1 5x5 -> conv11 3x3, conv12 3x3

Image in shape is: {torch.Size([3, 224, 224])}

==========================================================================================

Layer (type:depth-idx) Output Shape Param #

==========================================================================================

├─Conv2d: 1-1 [-1, 12, 222, 222] 336

├─BatchNorm2d: 1-2 [-1, 12, 222, 222] 24

├─Conv2d: 1-3 [-1, 12, 220, 220] 1,308

├─BatchNorm2d: 1-4 [-1, 12, 220, 220] 24

├─Conv2d: 1-5 [-1, 12, 218, 218] 1,308

├─BatchNorm2d: 1-6 [-1, 12, 218, 218] 24

├─MaxPool2d: 1-7 [-1, 12, 109, 109] --

├─Conv2d: 1-8 [-1, 24, 105, 105] 7,224

├─BatchNorm2d: 1-9 [-1, 24, 105, 105] 48

├─Conv2d: 1-10 [-1, 24, 101, 101] 14,424

├─BatchNorm2d: 1-11 [-1, 24, 101, 101] 48

├─MaxPool2d: 1-12 [-1, 24, 50, 50] --

├─Linear: 1-13 [-1, 2] 120,002

==========================================================================================

Total params: 144,770

Trainable params: 144,770

Non-trainable params: 0

Total mult-adds (M): 366.68

==========================================================================================

Input size (MB): 0.57

...

Forward/backward pass size (MB): 34.36

Params size (MB): 0.55

Estimated Total Size (MB): 35.49

==========================================================================================

learn_rate = 5e-5, constant learning rate, epochs=50, batch_size = 64, SGD

conv1 5x5 -> conv11 3x3, conv12 3x3

conv2 5x5 -> conv21 3x3, conv22 3x3

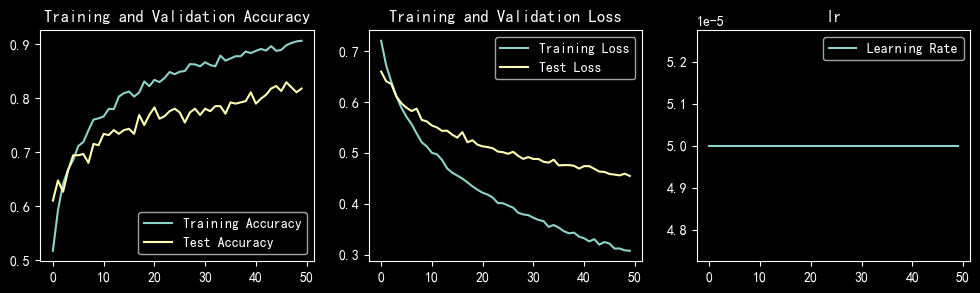

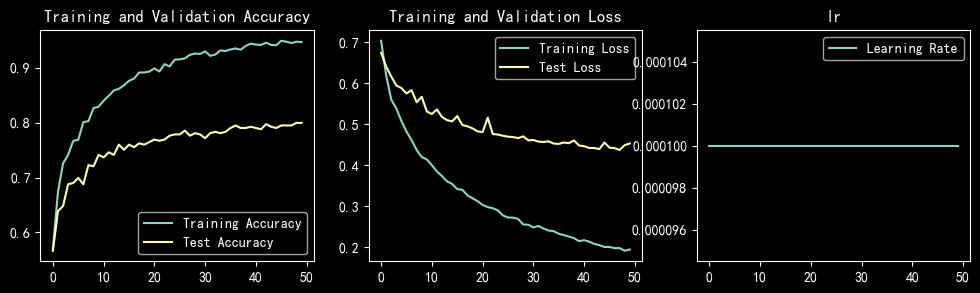

learn_rate = 1e-4, constant learning rate, epochs=50, batch_size = 64, SGD

conv1 5x5 -> conv11 3x3, conv12 3x3

conv2 5x5 -> conv21 3x3, conv22 3x3

Shape of X [N, C, H, W]: torch.Size([48, 3, 224, 224])

Shape of y: torch.Size([48]) torch.int64

[0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001, 0.0001]

Epoch: 1, Train_acc:59.0%, Train_loss:0.689, Test_acc:63.2%,Test_loss:0.641

Epoch: 2, Train_acc:65.1%, Train_loss:0.627, Test_acc:67.6%,Test_loss:0.619

Epoch: 3, Train_acc:70.1%, Train_loss:0.578, Test_acc:70.2%,Test_loss:0.575

Epoch: 4, Train_acc:71.8%, Train_loss:0.539, Test_acc:74.1%,Test_loss:0.557

Epoch: 5, Train_acc:73.5%, Train_loss:0.531, Test_acc:72.0%,Test_loss:0.564

Epoch: 6, Train_acc:76.6%, Train_loss:0.493, Test_acc:76.7%,Test_loss:0.522

Epoch: 7, Train_acc:78.8%, Train_loss:0.476, Test_acc:69.0%,Test_loss:0.604

Epoch: 8, Train_acc:79.7%, Train_loss:0.449, Test_acc:77.9%,Test_loss:0.494

Epoch: 9, Train_acc:82.0%, Train_loss:0.429, Test_acc:80.7%,Test_loss:0.477

Epoch:10, Train_acc:84.5%, Train_loss:0.409, Test_acc:81.4%,Test_loss:0.468

Epoch:11, Train_acc:83.6%, Train_loss:0.407, Test_acc:80.7%,Test_loss:0.456

Epoch:12, Train_acc:85.9%, Train_loss:0.386, Test_acc:81.6%,Test_loss:0.452

Epoch:13, Train_acc:86.7%, Train_loss:0.376, Test_acc:82.1%,Test_loss:0.442

Epoch:14, Train_acc:87.4%, Train_loss:0.361, Test_acc:80.9%,Test_loss:0.451

Epoch:15, Train_acc:88.0%, Train_loss:0.354, Test_acc:81.6%,Test_loss:0.430

Epoch:16, Train_acc:89.6%, Train_loss:0.341, Test_acc:83.7%,Test_loss:0.422

Epoch:17, Train_acc:89.8%, Train_loss:0.329, Test_acc:84.4%,Test_loss:0.413

Epoch:18, Train_acc:89.8%, Train_loss:0.325, Test_acc:83.4%,Test_loss:0.411

Epoch:19, Train_acc:89.1%, Train_loss:0.319, Test_acc:84.1%,Test_loss:0.405

Epoch:20, Train_acc:90.3%, Train_loss:0.311, Test_acc:84.6%,Test_loss:0.403

Epoch:21, Train_acc:90.1%, Train_loss:0.309, Test_acc:82.8%,Test_loss:0.412

Epoch:22, Train_acc:90.0%, Train_loss:0.297, Test_acc:85.8%,Test_loss:0.384

Epoch:23, Train_acc:92.2%, Train_loss:0.290, Test_acc:85.3%,Test_loss:0.387

Epoch:24, Train_acc:92.1%, Train_loss:0.283, Test_acc:85.8%,Test_loss:0.378

Epoch:25, Train_acc:91.9%, Train_loss:0.279, Test_acc:86.2%,Test_loss:0.374

Epoch:26, Train_acc:92.6%, Train_loss:0.269, Test_acc:86.9%,Test_loss:0.370

Epoch:27, Train_acc:92.4%, Train_loss:0.266, Test_acc:86.5%,Test_loss:0.369

Epoch:28, Train_acc:93.1%, Train_loss:0.257, Test_acc:86.2%,Test_loss:0.360

Epoch:29, Train_acc:92.5%, Train_loss:0.259, Test_acc:86.2%,Test_loss:0.357

Epoch:30, Train_acc:93.3%, Train_loss:0.247, Test_acc:86.0%,Test_loss:0.366

Epoch:31, Train_acc:93.6%, Train_loss:0.246, Test_acc:86.9%,Test_loss:0.361

Epoch:32, Train_acc:93.9%, Train_loss:0.242, Test_acc:86.5%,Test_loss:0.349

Epoch:33, Train_acc:93.4%, Train_loss:0.238, Test_acc:88.1%,Test_loss:0.352

Epoch:34, Train_acc:94.2%, Train_loss:0.231, Test_acc:87.9%,Test_loss:0.340

Epoch:35, Train_acc:93.8%, Train_loss:0.232, Test_acc:87.9%,Test_loss:0.340

Epoch:36, Train_acc:94.0%, Train_loss:0.228, Test_acc:86.9%,Test_loss:0.340

Epoch:37, Train_acc:94.2%, Train_loss:0.221, Test_acc:86.9%,Test_loss:0.335

Epoch:38, Train_acc:94.5%, Train_loss:0.217, Test_acc:88.8%,Test_loss:0.333

Epoch:39, Train_acc:95.0%, Train_loss:0.209, Test_acc:88.8%,Test_loss:0.326

Epoch:40, Train_acc:95.3%, Train_loss:0.206, Test_acc:90.0%,Test_loss:0.325

Epoch:41, Train_acc:94.3%, Train_loss:0.208, Test_acc:89.5%,Test_loss:0.329

Epoch:42, Train_acc:94.6%, Train_loss:0.204, Test_acc:88.3%,Test_loss:0.321

Epoch:43, Train_acc:95.5%, Train_loss:0.203, Test_acc:89.3%,Test_loss:0.324

Epoch:44, Train_acc:95.6%, Train_loss:0.197, Test_acc:88.1%,Test_loss:0.322

Epoch:45, Train_acc:94.9%, Train_loss:0.199, Test_acc:88.3%,Test_loss:0.314

Epoch:46, Train_acc:95.2%, Train_loss:0.198, Test_acc:88.8%,Test_loss:0.318

Epoch:47, Train_acc:95.2%, Train_loss:0.189, Test_acc:88.3%,Test_loss:0.310

Epoch:48, Train_acc:96.0%, Train_loss:0.186, Test_acc:88.6%,Test_loss:0.308

Epoch:49, Train_acc:95.7%, Train_loss:0.186, Test_acc:89.5%,Test_loss:0.313

Epoch:50, Train_acc:95.7%, Train_loss:0.184, Test_acc:88.6%,Test_loss:0.306

Done

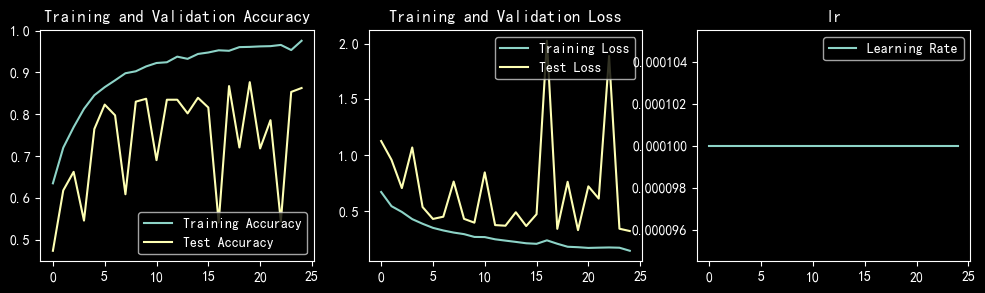

learn_rate = 1e-4, constant learning rate, epochs=50, batch_size = 32, SGD

conv1 5x5 -> conv11 3x3, conv12 3x3

conv2 5x5 -> conv21 3x3, conv22 3x3

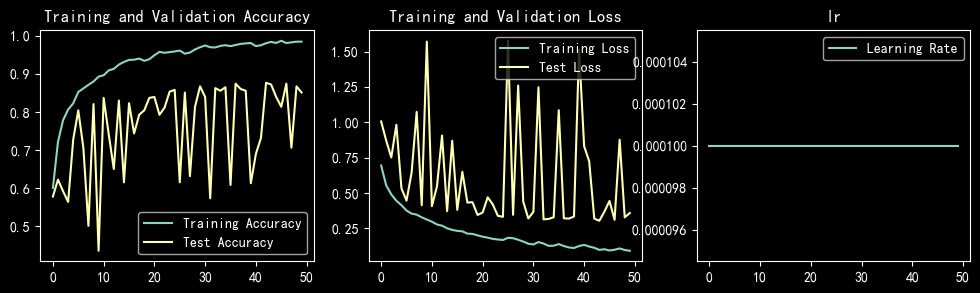

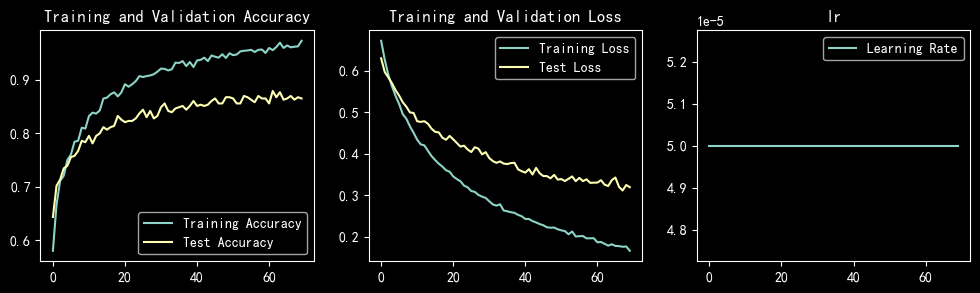

learn_rate = 1e-4, constant learning rate, epochs=70, batch_size = 64, SGD

conv1 5x5 -> conv11 3x3, conv12 3x3

conv2 5x5 -> conv21 3x3, conv22 3x3

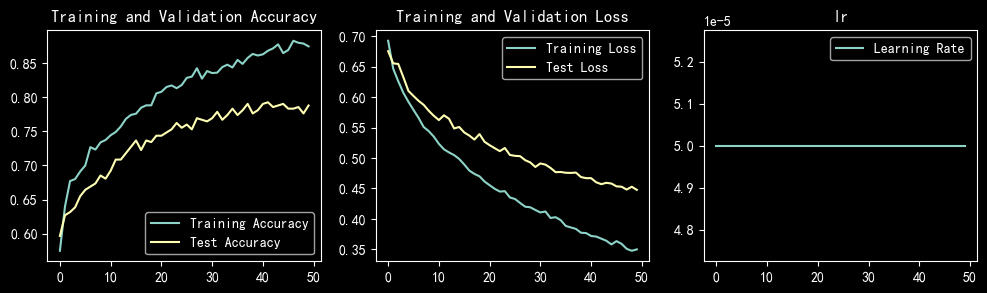

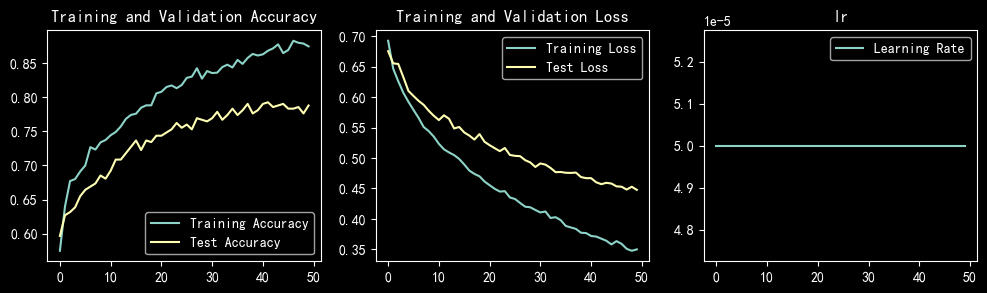

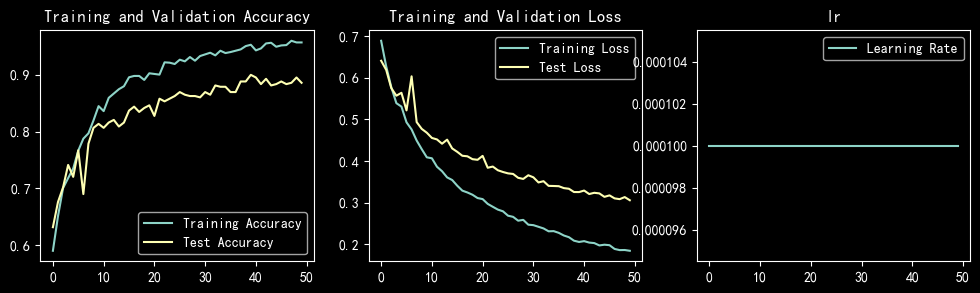

learn_rate = 1e-4, constant learning rate, epochs=50, batch_size = 48, SGD

conv1 5x5 -> conv11 3x3, conv12 3x3

conv2 5x5 -> conv21 3x3, conv22 3x3

conv4 5x5 -> conv41 3x3, conv42 3x3

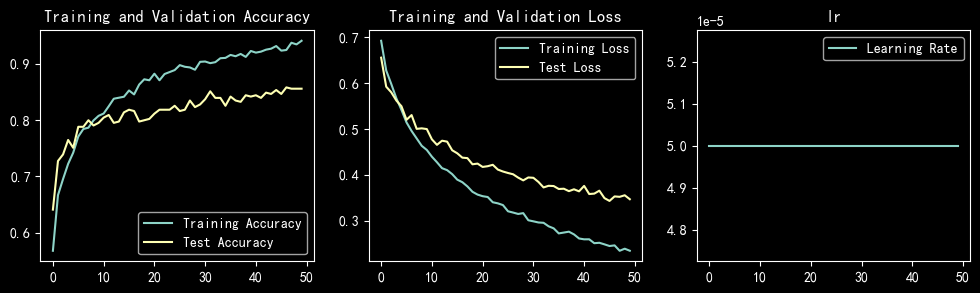

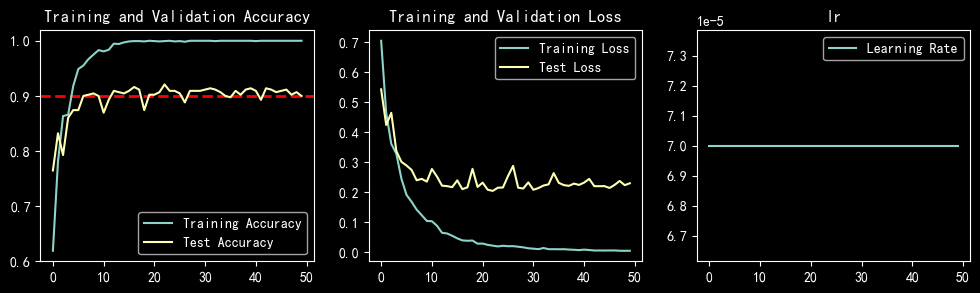

learn_rate = 7e-5, constant learning rate, epochs=50, batch_size = 48, Adam

conv1 5x5 -> conv11 3x3, conv12 3x3

The output of this run is the listing already shown in the Code section above (best test accuracy 92.1% at epoch 23, 90.0% at epoch 50).

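Notes

BatchNorm2d

`nn.BatchNorm2d` performs batch normalization. In a CNN it is usually placed after a convolutional layer (or before a fully connected layer) to normalize the activations and speed up training.

Batch normalization is a widely used technique that helps mitigate vanishing and exploding gradients in deep networks and improves convergence speed and stability. It normalizes the features of each mini-batch so that their mean is close to 0 and their variance is close to 1. This keeps the distribution of each layer's inputs more stable, which eases the gradient problems and often improves generalization.

BatchNorm2d applies this to 2-D data such as images, that is, to 4-D tensors of shape [N, C, H, W]. It normalizes each channel separately and then rescales the result with learnable parameters (one scale and one shift per channel). Concretely, for each channel it computes the mean and variance over all samples and all spatial positions in the batch and uses these statistics to normalize that channel. This keeps the feature distribution consistent across samples.

Example: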

import torch
import torch.nn as nn

batchnorm = nn.BatchNorm2d(2)  # 2 channels

# Shape [N=2, C=2, H=2, W=2]
input_data = torch.Tensor([
    [[[1, 2], [3, 4]], [[100, 102], [4, 3]]],
    [[[2, 3], [4, 5]], [[101, 103], [5, 3]]]
])
print(input_data)

output = batchnorm(input_data)
print(output)

tensor([[[[ 1., 2.],

[ 3., 4.]],

[[100., 102.],

[ 4., 3.]]],

[[[ 2., 3.],

[ 4., 5.]],

[[101., 103.],

[ 5., 3.]]]])

tensor([[[[-1.6330, -0.8165],

[ 0.0000, 0.8165]],

[[ 0.9691, 1.0100],

[-0.9947, -1.0151]]],

[[[-0.8165, 0.0000],

[ 0.8165, 1.6330]],

[[ 0.9896, 1.0305],

[-0.9742, -1.0151]]]], grad_fn=<NativeBatchNormBackward0>)
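The numbers in the second tensor can be reproduced by hand. The sketch below assumes the defaults of a freshly created `nn.BatchNorm2d` in training mode: scale 1, shift 0, `eps = 1e-5`, and the biased variance (dividing by the number of values). The helper name `batchnorm_channel` is mine.

```python
import math

def batchnorm_channel(values, eps=1e-5):
    """Normalize all values of one channel (pooled over batch, height and width)."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)  # biased variance
    return [(v - mean) / math.sqrt(var + eps) for v in values]

# Channel 0 of both samples, flattened: sample 0 first, then sample 1
channel0 = [1, 2, 3, 4, 2, 3, 4, 5]
# Channel 1 of both samples
channel1 = [100, 102, 4, 3, 101, 103, 5, 3]

print([round(v, 4) for v in batchnorm_channel(channel0)])
print([round(v, 4) for v in batchnorm_channel(channel1)])
```

Channel 0 has mean 3 and variance 1.5, so the value 1 maps to (1 - 3) / sqrt(1.5) ≈ -1.6330, matching the first entry of the output above. Channel 1 mixes values near 100 with values near 4, which is why its normalized entries cluster around +1 and -1.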

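Thoughts on convolution kernels

I keep coming back to this question: we only set the size and channel counts of a kernel, but we never set the actual values inside it.

We know that different kernels detect different features, yet during CNN training we never see how the kernel values change.

I have not found a really good explanation so far and fell back on asking GPT.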
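One way to look at it: kernel values start out random and are then adjusted by gradient descent, exactly like any other weight. PyTorch does this for `nn.Conv2d` through `loss.backward()` and `optimizer.step()`. The toy below is a plain-Python illustration under my own assumptions (a 1-D kernel of length 2, a hypothetical target "edge detector", a mean-squared-error loss), not the network from this post.

```python
import random

def conv1d(signal, kernel):
    """Valid 1-D cross-correlation, the sliding dot product a conv layer computes."""
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(len(signal) - k + 1)]

random.seed(0)
target_kernel = [-1.0, 1.0]                             # hypothetical "edge detector" to be discovered
kernel = [random.uniform(-0.5, 0.5) for _ in range(2)]  # random initial values
initial_kernel = list(kernel)
signals = [[random.uniform(-1, 1) for _ in range(8)] for _ in range(20)]

lr = 0.05
for _ in range(200):                                    # epochs
    for s in signals:
        pred, true = conv1d(s, kernel), conv1d(s, target_kernel)
        n = len(pred)
        # Gradient of the mean squared error with respect to each kernel weight
        grads = [sum(2 * (pred[i] - true[i]) * s[i + j] for i in range(n)) / n
                 for j in range(len(kernel))]
        kernel = [w - lr * g for w, g in zip(kernel, grads)]

print(initial_kernel, [round(w, 3) for w in kernel])
```

Nobody writes the values -1 and 1 into the kernel; they emerge because they minimize the loss. In a real CNN the loss is the classification loss, so the kernels drift toward whatever patterns help separate the classes.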

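Conclusions

- What effect does batch_size have on test_accuracy?

  The smaller the batch_size, the larger the oscillation. This is easy to understand: a small batch is more likely to be an unrepresentative sample of the data, so moderately increasing the batch size works better.

- How to configure the learning rate in stages

  Next time, for sure.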
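The batch-size observation can be illustrated with a toy simulation: the average over a mini-batch is a noisy estimate of the average over the whole dataset, and its spread shrinks roughly as 1/sqrt(batch_size). The sketch below uses synthetic per-sample values as a stand-in for gradients, which is an assumption for illustration only and not a measurement from the actual training runs.

```python
import random
import statistics

random.seed(0)
# Hypothetical per-sample "gradient" values: true mean 1.0 with heavy noise
population = [random.gauss(1.0, 2.0) for _ in range(10_000)]

def batch_mean_spread(batch_size, trials=2000):
    """Standard deviation of the mini-batch mean over many random batches."""
    means = [statistics.fmean(random.sample(population, batch_size))
             for _ in range(trials)]
    return statistics.stdev(means)

for bs in (16, 32, 64):
    print(bs, round(batch_mean_spread(bs), 3))
```

Noisier updates with small batches are consistent with the stronger oscillation seen in the curves for batch_size = 16 compared with 32 or 64.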

References:

https://blog.csdn.net/weixin_44943389/article/details/131281942

一文弄懂BatchNorm1d和BatchNorm2d (Understanding BatchNorm1d and BatchNorm2d in one article)

https://blog.csdn.net/bigkaimyc/article/details/136648815
