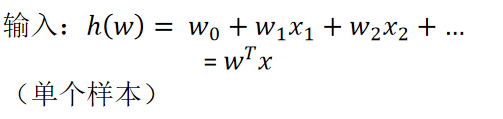

Definition of Logistic Regression

Logistic regression is a powerful tool for binary classification problems.

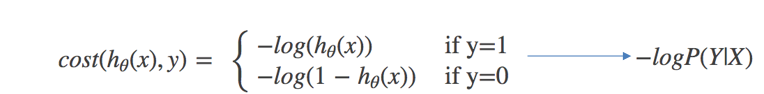
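As a minimal sketch of the idea, assuming the standard formulation in which a linear score is passed through the sigmoid function and thresholded at 0.5. The names `sigmoid`, `weights`, and `bias` and all numeric values are illustrative assumptions, not taken from the original:

```python
import numpy as np

def sigmoid(z):
    """Map any real-valued score to the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical weights and bias, chosen only for illustration.
weights = np.array([0.8, -0.5])
bias = 0.1

samples = np.array([[2.0, 1.0],
                    [-1.0, 3.0]])

scores = samples @ weights + bias             # linear-regression-style output
probabilities = sigmoid(scores)               # probability of the positive class
labels = (probabilities >= 0.5).astype(int)   # threshold at 0.5 to get a class

print(probabilities)
print(labels)
```

The 0.5 threshold is the usual default; a different cut-off can be used when the two kinds of error have different costs.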

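Loss Function of Logistic Regression

The underlying principle is the same as in linear regression, but because this is a classification problem the loss function is different. It has no closed-form solution, so it has to be minimized iteratively, typically with gradient descent.

Log-likelihood loss function: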

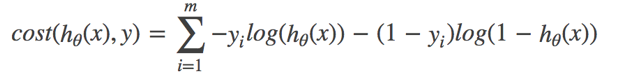

Complete loss function:
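Assuming the standard binary cross-entropy form, where $h_\theta(x_i)$ is the predicted probability for sample $i$, $y_i \in \{0, 1\}$ is its true label, and $m$ is the number of samples (this notation is an assumption, not taken from the original), the complete loss can be written as:

$$
\mathrm{cost}(h_\theta(x), y) = \sum_{i=1}^{m} \left[ -y_i \log h_\theta(x_i) - (1 - y_i) \log\bigl(1 - h_\theta(x_i)\bigr) \right]
$$

When $y_i = 1$ only the first term is active, and it shrinks as $h_\theta(x_i)$ approaches 1. When $y_i = 0$ only the second term is active, and it shrinks as $h_\theta(x_i)$ approaches 0.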

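The smaller the cost, the more closely the predicted probabilities match the true classes, and so the more accurate the predicted classes tend to be.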
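The way gradient descent drives this cost down can be sketched in a few lines of NumPy. This is a minimal sketch on a tiny made-up data set, not a production implementation: the helper names (`sigmoid`, `mean_log_loss`, `fit_logistic_gd`), the learning rate, and the iteration count are all illustrative assumptions, and the loss is averaged over the samples rather than summed.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def mean_log_loss(X, y, w, b):
    """Average log-likelihood loss over all samples."""
    p = sigmoid(X @ w + b)
    eps = 1e-12  # keeps log() away from exactly zero
    return -np.mean(y * np.log(p + eps) + (1 - y) * np.log(1 - p + eps))

def fit_logistic_gd(X, y, learning_rate=0.1, n_iters=500):
    """Batch gradient descent on the mean log loss."""
    n_samples, n_features = X.shape
    w = np.zeros(n_features)
    b = 0.0
    for _ in range(n_iters):
        error = sigmoid(X @ w + b) - y            # predicted probability minus label
        w -= learning_rate * (X.T @ error) / n_samples
        b -= learning_rate * error.mean()
    return w, b

# Tiny synthetic data set: one feature, larger values belong to class 1.
X = np.array([[0.0], [1.0], [2.0], [3.0]])
y = np.array([0, 0, 1, 1])

initial_loss = mean_log_loss(X, y, np.zeros(1), 0.0)
w, b = fit_logistic_gd(X, y)
final_loss = mean_log_loss(X, y, w, b)

print("loss before training:", initial_loss)
print("loss after training: ", final_loss)
```

With all-zero parameters every sample gets probability 0.5, so the starting loss equals log 2; training should push it below that value.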

sklearn Logistic Regression API

sklearn.linear_model.LogisticRegression(penalty='l2', C=1.0)

Logistic regression classifier

coef_: regression coefficients
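A short sketch of how `C` and `coef_` interact, assuming synthetic data from `make_classification` (the data set, the two `C` values, and the variable names are illustrative assumptions). In scikit-learn, `C` is the inverse of the regularization strength, so a smaller `C` applies a stronger L2 penalty and shrinks the coefficients:

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Synthetic binary classification data, used only for illustration.
X, y = make_classification(n_samples=200, n_features=4, n_informative=2,
                           n_redundant=0, random_state=0)

# Smaller C = stronger regularization; larger C = weaker regularization.
strong = LogisticRegression(C=0.01, max_iter=1000).fit(X, y)
weak = LogisticRegression(C=100.0, max_iter=1000).fit(X, y)

# For a binary problem coef_ has one row with one weight per feature.
print(strong.coef_.shape)
print("coefficient norm, C=0.01:", np.linalg.norm(strong.coef_))
print("coefficient norm, C=100: ", np.linalg.norm(weak.coef_))
```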

Example:

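    import numpy as np
    import pandas as pd
    from sklearn.linear_model import LogisticRegression
    from sklearn.metrics import classification_report
    from sklearn.model_selection import train_test_split
    from sklearn.preprocessing import StandardScaler


    def logistic():
        """
        Binary classification with logistic regression: predict whether a
        breast tumor is benign or malignant from cell attribute features.

        :return: None
        """
        # Column names (the raw data file has no header row)
        column = ['Sample code number', 'Clump Thickness', 'Uniformity of Cell Size',
                  'Uniformity of Cell Shape', 'Marginal Adhesion',
                  'Single Epithelial Cell Size', 'Bare Nuclei', 'Bland Chromatin',
                  'Normal Nucleoli', 'Mitoses', 'Class']

        # Read the data
        data = pd.read_csv("https://archive.ics.uci.edu/ml/machine-learning-databases/breast-cancer-wisconsin/breast-cancer-wisconsin.data", names=column)
        print(data)

        # Handle missing values: the file marks them with '?'
        data = data.replace(to_replace='?', value=np.nan)  # replace '?' with NaN
        data = data.dropna()  # drop rows that contain missing values

        # Columns 1-9 are the features, column 10 is the target (2 = benign, 4 = malignant).
        # 'Bare Nuclei' is read as text because of the '?' markers, so cast to float.
        features = data[column[1:10]].astype(float)
        target = data[column[10]]

        # Split into training and test sets
        x_train, x_test, y_train, y_test = train_test_split(features, target, test_size=0.25)

        # Standardize: fit the scaler on the training set only, then reuse it on the test set
        std = StandardScaler()
        x_train = std.fit_transform(x_train)
        x_test = std.transform(x_test)

        # Fit logistic regression and predict
        lg = LogisticRegression(C=1.0)
        lg.fit(x_train, y_train)
        print(lg.coef_)  # regression coefficients

        y_predict = lg.predict(x_test)
        print("Accuracy:", lg.score(x_test, y_test))

        # The report lists precision, recall, and F1 for each class
        print("Classification report:")
        print(classification_report(y_test, y_predict, labels=[2, 4], target_names=["benign", "malignant"]))

        return None


    if __name__ == "__main__":
        logistic()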

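LogisticRegression (use cases)

Applications: ad click-through rate prediction, e-commerce product bundle recommendation

Advantages: well suited to scenarios that need a class probability rather than only a class label

Disadvantages: when the feature space is very large, logistic regression does not perform well (how well it copes depends on the available hardware)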
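The class probability mentioned under advantages is available through `predict_proba`. The following is a minimal sketch on a tiny made-up data set (the data and variable names are illustrative assumptions); each row of the result holds one probability per class, in the order given by `classes_`:

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

# Tiny synthetic data set: one feature, larger values belong to class 1.
X = np.array([[0.0], [1.0], [2.0], [3.0]])
y = np.array([0, 0, 1, 1])

model = LogisticRegression().fit(X, y)

new_samples = np.array([[0.5], [2.5]])
proba = model.predict_proba(new_samples)  # shape: (n_samples, n_classes)

print(model.classes_)  # column order of the probabilities
print(proba)           # each row sums to 1
```

In a click-through setting, the second column would play the role of the estimated click probability, which can then be ranked or thresholded as needed.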
