Logistic Regression

Soft Binary Classification

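Logistic regression is in fact a form of soft binary classification. Compared with hard binary classification, the training data are the same (labels are still ±1), but the target function is different. Soft binary classification aims to output the probability that a sample is positive (and therefore the probability that it is negative). For example, when predicting whether to issue a credit card, the output is no longer 0/1 but the probability that the card should be issued.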

The target function is:

$$
f(\mathbf{x}) = P(+1 \mid \mathbf{x}) \in [0, 1]
$$

Logistic Hypothesis

Unlike hard binary classification (the perceptron), we no longer use the sign function. Instead, the logistic function $\theta(s)$ is applied to the score $\mathbf{w}^T\mathbf{x}$ to estimate the probability, so the logistic hypothesis is:

$$
h(\mathbf{x}) = \theta\left(\mathbf{w}^T\mathbf{x}\right)
$$

where $\theta(s)$ is defined as:

$$
\theta(s) = \frac{e^{s}}{1 + e^{s}} = \frac{1}{1 + e^{-s}}
$$

From this it follows that $1 - \theta(s) = \theta(-s)$, since $1 - \frac{1}{1+e^{-s}} = \frac{e^{-s}}{1+e^{-s}} = \frac{1}{1+e^{s}} = \theta(-s)$.
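The definition of $\theta(s)$ and the identity $1 - \theta(s) = \theta(-s)$ are easy to check numerically. Below is a minimal Python sketch that uses only the standard library. The name `theta` is chosen to mirror the notation. Branching on the sign of `s` is a common overflow-avoidance trick added here; it is not part of the original text.

```python
import math


def theta(s: float) -> float:
    """Logistic (sigmoid) function: theta(s) = 1 / (1 + e^(-s)).

    Branching on the sign of s keeps the argument of math.exp non-positive,
    so very large |s| never raises OverflowError.
    """
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)  # s < 0, so 0 < e < 1
    return e / (1.0 + e)


# Numerically check the identity 1 - theta(s) = theta(-s) at a few points.
for s in (-4.0, -1.0, 0.0, 1.0, 4.0):
    print(f"s={s:+.1f}  theta(s)={theta(s):.4f}  "
          f"1-theta(s)={1.0 - theta(s):.4f}  theta(-s)={theta(-s):.4f}")
```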

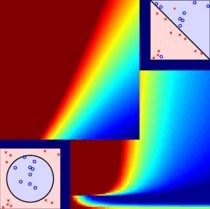

绘制出曲线如下:

As the plot shows, $\theta(s)$ is a smooth, monotonic, S-shaped (sigmoid) function.

The final form of the logistic hypothesis is:

$$
h(\mathbf{x}) = \frac{1}{1 + \exp\left(-\mathbf{w}^T\mathbf{x}\right)}
$$

It therefore inherits the property of $\theta(s)$: $1 - h(\mathbf{x}) = h(-\mathbf{x})$.

As described above, the target function gives the probability that a sample is positive or negative:

$$
P(y \mid \mathbf{x}) =
\begin{cases}
f(\mathbf{x}) & \text{for } y = +1 \\
1 - f(\mathbf{x}) & \text{for } y = -1
\end{cases}
$$
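Approximate $f$ by $h$ and combine the case formula with $1 - h(\mathbf{x}) = h(-\mathbf{x})$. This gives the compact form $P(y \mid \mathbf{x}) = h(y\mathbf{x}) = \theta(y\mathbf{w}^T\mathbf{x})$, which the cross-entropy derivation relies on. The small sketch below checks this numerically. The weights `w`, the input `x`, and the helper names `dot`, `h`, and `prob_label` are hypothetical. `x[0] = 1` is assumed to act as a bias coordinate.

```python
import math


def theta(s: float) -> float:
    """Numerically stable logistic function theta(s) = 1 / (1 + e^(-s))."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def dot(w, x):
    """Inner product w^T x of two equal-length sequences."""
    return sum(wi * xi for wi, xi in zip(w, x))


def h(w, x):
    """Logistic hypothesis h(x) = theta(w^T x)."""
    return theta(dot(w, x))


def prob_label(w, x, y):
    """P(y | x) under h, using the case formula for y in {+1, -1}."""
    return h(w, x) if y == +1 else 1.0 - h(w, x)


# Hypothetical weights and input; x[0] = 1 plays the role of a bias term.
w = [0.5, -1.0, 2.0]
x = [1.0, 0.3, 0.8]
for y in (+1, -1):
    # The compact form theta(y * w^T x) should match the case formula.
    print(y, round(prob_label(w, x, y), 6), round(theta(y * dot(w, x)), 6))
```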

Cross-Entropy Error

Now that we have the logistic hypothesis, how should $E_{\text{in}}(\mathbf{w})$ be designed? We use the probability that hypothesis $h$ generates the observed labels of all samples, called the likelihood, $\text{likelihood}(h)$:

Consider $\mathcal{D} = \left\{(\mathbf{x}_1, +1), (\mathbf{x}_2, -1), \ldots, (\mathbf{x}_N, -1)\right\}$:

$$
\begin{aligned}
\text{likelihood}(h) &= P(\mathbf{x}_1)P(+1 \mid \mathbf{x}_1) \times P(\mathbf{x}_2)P(-1 \mid \mathbf{x}_2) \times \cdots \times P(\mathbf{x}_N)P(-1 \mid \mathbf{x}_N) \\
&= P(\mathbf{x}_1)h(\mathbf{x}_1) \times P(\mathbf{x}_2)\left(1 - h(\mathbf{x}_2)\right) \times \cdots \times P(\mathbf{x}_N)\left(1 - h(\mathbf{x}_N)\right) \\
&= P(\mathbf{x}_1)h(\mathbf{x}_1) \times P(\mathbf{x}_2)h(-\mathbf{x}_2) \times \cdots \times P(\mathbf{x}_N)h(-\mathbf{x}_N) \\
&= P(\mathbf{x}_1)h(y_1\mathbf{x}_1) \times P(\mathbf{x}_2)h(y_2\mathbf{x}_2) \times \cdots \times P(\mathbf{x}_N)h(y_N\mathbf{x}_N) \\
&\propto \prod_{n=1}^{N} h(y_n\mathbf{x}_n)
\end{aligned}
$$

Each line follows from the one before it:

- The second line replaces the unknown $P(y \mid \mathbf{x})$ with the hypothesis $h$, using the case formula above.
- The third line uses $1 - h(\mathbf{x}) = h(-\mathbf{x})$.
- The last step drops the factors $P(\mathbf{x}_n)$, which do not depend on $h$.

The best hypothesis $g$ is then the one that maximizes the likelihood:

$$
g = \underset{h}{\operatorname{argmax}}\ \text{likelihood}(h)
$$

For convenience, substitute $h(\mathbf{x}) = \theta(\mathbf{w}^T\mathbf{x})$, so that the likelihood becomes a function of $\mathbf{w}$:

$$
\text{likelihood}(\mathbf{w}) \propto \prod_{n=1}^{N} \theta\left(y_n\mathbf{w}^T\mathbf{x}_n\right)
$$

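This can now be rewritten as an equivalent task. Taking the logarithm (which is monotonic) turns the product into a sum. Negating and averaging then turns the maximization into a minimization: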

$$
\begin{aligned}
\max_{\mathbf{w}} \text{likelihood}(\mathbf{w}) &\Rightarrow \max_{\mathbf{w}} \ln \text{likelihood}(\mathbf{w}) \\
&\Rightarrow \max_{\mathbf{w}} \sum_{n=1}^{N} \ln \theta\left(y_n\mathbf{w}^T\mathbf{x}_n\right) \\
&\Rightarrow \min_{\mathbf{w}} \frac{1}{N}\sum_{n=1}^{N} -\ln \theta\left(y_n\mathbf{w}^T\mathbf{x}_n\right) \\
&\Rightarrow \min_{\mathbf{w}} \frac{1}{N}\sum_{n=1}^{N} -\ln\left(\frac{1}{1 + \exp\left(-y_n\mathbf{w}^T\mathbf{x}_n\right)}\right) \\
&\Rightarrow \min_{\mathbf{w}} \frac{1}{N}\sum_{n=1}^{N} \ln\left(1 + \exp\left(-y_n\mathbf{w}^T\mathbf{x}_n\right)\right) \\
&\Rightarrow \min_{\mathbf{w}} \underbrace{\frac{1}{N}\sum_{n=1}^{N} \text{err}\left(\mathbf{w}, \mathbf{x}_n, y_n\right)}_{E_{\text{in}}(\mathbf{w})}
\end{aligned}
$$

where $\text{err}(\mathbf{w}, \mathbf{x}_n, y_n) = \ln\left(1 + \exp\left(-y_n\mathbf{w}^T\mathbf{x}_n\right)\right)$ is called the cross-entropy error.
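As a sanity check of the derivation, the average cross-entropy error should equal $-\frac{1}{N}\ln$ of the likelihood $\prod_n \theta(y_n\mathbf{w}^T\mathbf{x}_n)$. The sketch below computes both on a tiny made-up dataset; `X`, `Y`, and `w` are hypothetical. It evaluates $\ln(1 + e^{z})$ through the identity $\max(z, 0) + \ln(1 + e^{-|z|})$, which is added here for numerical stability.

```python
import math


def theta(s):
    """Numerically stable logistic function theta(s) = 1 / (1 + e^(-s))."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def dot(w, x):
    """Inner product w^T x of two equal-length sequences."""
    return sum(wi * xi for wi, xi in zip(w, x))


def err_ce(w, x, y):
    """Cross-entropy error ln(1 + exp(-y * w^T x)) for a single example."""
    z = -y * dot(w, x)
    # Stable softplus: ln(1 + e^z) = max(z, 0) + ln(1 + e^(-|z|)).
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def e_in(w, X, Y):
    """In-sample error E_in(w): average cross-entropy error over the data."""
    return sum(err_ce(w, x, y) for x, y in zip(X, Y)) / len(X)


# Tiny hypothetical dataset; the first coordinate of each x is a constant 1.
X = [[1.0, 2.0], [1.0, -1.0], [1.0, 0.5]]
Y = [+1, -1, -1]
w = [0.1, 0.7]

# Likelihood up to the P(x_n) factors: product of theta(y_n w^T x_n).
likelihood = 1.0
for x, y in zip(X, Y):
    likelihood *= theta(y * dot(w, x))

print(e_in(w, X, Y))                   # cross-entropy E_in
print(-math.log(likelihood) / len(X))  # -(1/N) ln likelihood, same value
```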

Gradient Descent

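The resulting $E_{\text{in}}$ is again a continuous, differentiable, twice-differentiable, and convex function of $\mathbf{w}$. For a differentiable convex function, any point where the gradient is zero is a global minimum, so the optimal hypothesis is obtained by solving $\nabla E_{\text{in}}(\mathbf{w}) = \mathbf{0}$.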

Let us now derive the gradient $\nabla E_{\text{in}}(\mathbf{w})$.

For readability, two sub-expressions are marked with a circle and a square:

$$
E_{\text{in}}(\mathbf{w}) = \frac{1}{N}\sum_{n=1}^{N} \ln\Big(\underbrace{1 + \exp\big(\overbrace{-y_n\mathbf{w}^T\mathbf{x}_n}^{\bigcirc}\big)}_{\square}\Big)
$$

By the chain rule, the partial derivative of $E_{\text{in}}$ with respect to the component $w_i$ is:

$$
\begin{aligned}
\frac{\partial E_{\text{in}}(\mathbf{w})}{\partial w_i} &= \frac{1}{N}\sum_{n=1}^{N} \left(\frac{\partial \ln(\square)}{\partial \square}\right)\left(\frac{\partial\left(1 + \exp(\bigcirc)\right)}{\partial \bigcirc}\right)\left(\frac{\partial\left(-y_n\mathbf{w}^T\mathbf{x}_n\right)}{\partial w_i}\right) \\
&= \frac{1}{N}\sum_{n=1}^{N} \left(\frac{1}{\square}\right)\left(\exp(\bigcirc)\right)\left(-y_n x_{n,i}\right) \\
&= \frac{1}{N}\sum_{n=1}^{N} \left(\frac{\exp(\bigcirc)}{1 + \exp(\bigcirc)}\right)\left(-y_n x_{n,i}\right) \\
&= \frac{1}{N}\sum_{n=1}^{N} \theta(\bigcirc)\left(-y_n x_{n,i}\right)
\end{aligned}
$$

Stacking all components $i$ and substituting $\bigcirc = -y_n\mathbf{w}^T\mathbf{x}_n$ gives:

$$
\nabla E_{\text{in}}(\mathbf{w}) = \frac{1}{N}\sum_{n=1}^{N} \theta\left(-y_n\mathbf{w}^T\mathbf{x}_n\right)\left(-y_n\mathbf{x}_n\right)
$$
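The analytic gradient can be verified against central finite differences of $E_{\text{in}}$. This is a hedged sketch on hypothetical data. `grad_e_in` and `numerical_grad` are illustrative names, and `eps = 1e-6` is an arbitrary choice.

```python
import math


def theta(s):
    """Numerically stable logistic function theta(s) = 1 / (1 + e^(-s))."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def e_in(w, X, Y):
    """Average cross-entropy error (1/N) * sum_n ln(1 + exp(-y_n w^T x_n))."""
    total = 0.0
    for x, y in zip(X, Y):
        z = -y * dot(w, x)
        total += max(z, 0.0) + math.log1p(math.exp(-abs(z)))
    return total / len(X)


def grad_e_in(w, X, Y):
    """Analytic gradient (1/N) * sum_n theta(-y_n w^T x_n) * (-y_n x_n)."""
    N = len(X)
    g = [0.0] * len(w)
    for x, y in zip(X, Y):
        coef = theta(-y * dot(w, x)) * (-y) / N
        for i, xi in enumerate(x):
            g[i] += coef * xi
    return g


def numerical_grad(w, X, Y, eps=1e-6):
    """Central finite differences of E_in, one coordinate w_i at a time."""
    g = []
    for i in range(len(w)):
        w_plus, w_minus = list(w), list(w)
        w_plus[i] += eps
        w_minus[i] -= eps
        g.append((e_in(w_plus, X, Y) - e_in(w_minus, X, Y)) / (2 * eps))
    return g


# Hypothetical data; the first coordinate of each x is a constant bias input.
X = [[1.0, 2.0], [1.0, -1.0], [1.0, 0.5]]
Y = [+1, -1, -1]
w = [0.1, 0.7]

print("analytic: ", grad_e_in(w, X, Y))
print("numerical:", numerical_grad(w, X, Y))
```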

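How do we make the gradient zero? Unlike linear regression, $\nabla E_{\text{in}}(\mathbf{w}) = \mathbf{0}$ generally has no closed-form solution.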

Borrowing from PLA, we use an iterative optimization approach:

For $t = 0, 1, \ldots$

$$
\mathbf{w}_{t+1} \leftarrow \mathbf{w}_t + \eta\mathbf{v}
$$

When the iteration stops, return the last $\mathbf{w}$ as $g$.

Here $\mathbf{v}$ is a unit direction vector, i.e. $\|\mathbf{v}\| = 1$, and $\eta$ is the step size, a positive real number.

The optimization goal then becomes:

$$
\min_{\|\mathbf{v}\| = 1} E_{\text{in}}\left(\mathbf{w}_t + \eta\mathbf{v}\right)
$$

Direction Vector $\mathbf{v}$

Set the step size aside for now and assume it is very small. A first-order Taylor expansion then gives:

$$
E_{\text{in}}\left(\mathbf{w}_t + \eta\mathbf{v}\right) \approx E_{\text{in}}\left(\mathbf{w}_t\right) + \eta\mathbf{v}^T\nabla E_{\text{in}}\left(\mathbf{w}_t\right)
$$

Substituting this approximation, the problem becomes:

$$
\min_{\|\mathbf{v}\| = 1} \underbrace{E_{\text{in}}\left(\mathbf{w}_t\right)}_{\text{known}} + \underbrace{\eta}_{\text{given positive}} \mathbf{v}^T \underbrace{\nabla E_{\text{in}}\left(\mathbf{w}_t\right)}_{\text{known}}
$$
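The sketch below shows how good the first-order approximation is. It compares $E_{\text{in}}(\mathbf{w}_t + \eta\mathbf{v})$ with $E_{\text{in}}(\mathbf{w}_t) + \eta\mathbf{v}^T\nabla E_{\text{in}}(\mathbf{w}_t)$ for shrinking $\eta$ along a fixed unit vector `v`. The data, `w_t`, and `v` are hypothetical. Because $E_{\text{in}}$ is convex, the gap is never negative, and it shrinks roughly like $\eta^2$.

```python
import math


def theta(s):
    """Numerically stable logistic function theta(s) = 1 / (1 + e^(-s))."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def e_in(w, X, Y):
    """Average cross-entropy error (1/N) * sum_n ln(1 + exp(-y_n w^T x_n))."""
    total = 0.0
    for x, y in zip(X, Y):
        z = -y * dot(w, x)
        total += max(z, 0.0) + math.log1p(math.exp(-abs(z)))
    return total / len(X)


def grad_e_in(w, X, Y):
    """Gradient (1/N) * sum_n theta(-y_n w^T x_n) * (-y_n x_n)."""
    g = [0.0] * len(w)
    for x, y in zip(X, Y):
        coef = theta(-y * dot(w, x)) * (-y) / len(X)
        for i, xi in enumerate(x):
            g[i] += coef * xi
    return g


X = [[1.0, 2.0], [1.0, -1.0], [1.0, 0.5]]  # hypothetical data
Y = [+1, -1, -1]
w_t = [0.1, 0.7]
v = [0.6, 0.8]  # hypothetical unit direction: 0.6^2 + 0.8^2 = 1

E0 = e_in(w_t, X, Y)
g = grad_e_in(w_t, X, Y)
gaps = []
for eta in (0.1, 0.01, 0.001):
    exact = e_in([wi + eta * vi for wi, vi in zip(w_t, v)], X, Y)
    approx = E0 + eta * dot(v, g)  # first-order Taylor approximation
    gaps.append(exact - approx)
    print(f"eta={eta:<6} exact={exact:.10f} approx={approx:.10f} gap={exact - approx:.3e}")
```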

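As the figure illustrates, to move from the starting point toward the optimum we should search along the negative gradient direction.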

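More formally, ignoring the step size, $\mathbf{v}^T\nabla E_{\text{in}}(\mathbf{w}_t)$ is smallest when the two vectors point in opposite directions. By the Cauchy-Schwarz inequality it is bounded below by $-\|\nabla E_{\text{in}}(\mathbf{w}_t)\|$. The direction vector should therefore be the unit vector exactly opposite to the gradient: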

$$
\mathbf{v} = -\frac{\nabla E_{\text{in}}\left(\mathbf{w}_t\right)}{\left\|\nabla E_{\text{in}}\left(\mathbf{w}_t\right)\right\|}
$$
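The claim that $-\nabla E_{\text{in}}/\|\nabla E_{\text{in}}\|$ minimizes $\mathbf{v}^T\nabla E_{\text{in}}(\mathbf{w}_t)$ over unit vectors can be checked by brute force in 2-D. The gradient value `grad = [3, -4]` is a made-up example. Random unit vectors are sampled with a fixed seed, and no sampled direction should beat $-\|\nabla E_{\text{in}}\| = -5$.

```python
import math
import random


def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def norm(u):
    """Euclidean norm ||u||."""
    return math.sqrt(dot(u, u))


grad = [3.0, -4.0]  # hypothetical gradient value at w_t, ||grad|| = 5
v_star = [-gi / norm(grad) for gi in grad]  # v = -grad / ||grad||

rng = random.Random(0)
samples = []
for _ in range(10_000):
    phi = rng.uniform(0.0, 2.0 * math.pi)
    samples.append([math.cos(phi), math.sin(phi)])  # random unit vector

best_random = min(dot(v, grad) for v in samples)
print("v_star . grad =", dot(v_star, grad))  # about -5, i.e. -||grad||
print("best random   =", best_random)        # not below -5 (Cauchy-Schwarz)
```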

The update rule (with small $\eta$) is then:

$$
\mathbf{w}_{t+1} \leftarrow \mathbf{w}_t - \eta\frac{\nabla E_{\text{in}}\left(\mathbf{w}_t\right)}{\left\|\nabla E_{\text{in}}\left(\mathbf{w}_t\right)\right\|}
$$

Step Size $\eta$

Consider the following three curves:

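They show that the step size should be adjusted according to the gradient magnitude. A step that is too small makes progress slowly, and one that is too large is unstable. The search on the third curve is the best. In other words, the step size $\eta$ should be monotonically related to $\left\|\nabla E_{\text{in}}\left(\mathbf{w}_t\right)\right\|$.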

Now let the step size scale with the gradient norm. That is, replace $\eta$ with $\eta \cdot \left\|\nabla E_{\text{in}}\left(\mathbf{w}_t\right)\right\|$, where the new $\eta$ is a fixed learning rate. The update rule then simplifies to:

$$
\mathbf{w}_{t+1} \leftarrow \mathbf{w}_t - \eta\nabla E_{\text{in}}\left(\mathbf{w}_t\right)
$$

This is gradient descent, a simple and popular optimization tool.

Algorithm

initialize $\mathbf{w}_0$

For $t = 0, 1, \ldots$

- compute $\nabla E_{\text{in}}(\mathbf{w}_t) = \frac{1}{N}\sum_{n=1}^{N} \theta\left(-y_n\mathbf{w}_t^T\mathbf{x}_n\right)\left(-y_n\mathbf{x}_n\right)$
- update by $\mathbf{w}_{t+1} \leftarrow \mathbf{w}_t - \eta\nabla E_{\text{in}}\left(\mathbf{w}_t\right)$

… until $\nabla E_{\text{in}}(\mathbf{w}_{t+1}) \approx \mathbf{0}$ or enough iterations

return $\mathbf{w}_{t+1}$ as $g$
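Here is a minimal Python sketch of the algorithm above. It assumes a fixed learning rate `eta = 0.1`, zero initialization, and a stopping rule of $\|\nabla E_{\text{in}}\| < 10^{-6}$ or 10,000 iterations. All of these are illustrative choices, not values from the text. The dataset is hypothetical: one feature plus a constant bias coordinate, with labels chosen so that no line separates them, which keeps the minimizer of $E_{\text{in}}$ finite here. The function `predict` classifies by $\operatorname{sign}(\mathbf{w}^T\mathbf{x})$, which is also an assumption of this sketch.

```python
import math


def theta(s):
    """Numerically stable logistic function theta(s) = 1 / (1 + e^(-s))."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def grad_e_in(w, X, Y):
    """Gradient (1/N) * sum_n theta(-y_n w^T x_n) * (-y_n x_n)."""
    g = [0.0] * len(w)
    for x, y in zip(X, Y):
        coef = theta(-y * dot(w, x)) * (-y) / len(X)
        for i, xi in enumerate(x):
            g[i] += coef * xi
    return g


def logistic_regression_gd(X, Y, eta=0.1, max_iters=10_000, tol=1e-6):
    """Fixed-learning-rate gradient descent on the cross-entropy E_in."""
    w = [0.0] * len(X[0])  # initialize w_0 (all zeros is an arbitrary choice)
    for _ in range(max_iters):
        g = grad_e_in(w, X, Y)          # full gradient: touches all N examples
        if math.sqrt(dot(g, g)) < tol:  # gradient approximately zero: stop
            break
        w = [wi - eta * gi for wi, gi in zip(w, g)]  # w_{t+1} <- w_t - eta * grad
    return w


def predict(w, x):
    """Classify as +1 when theta(w^T x) >= 0.5, i.e. when w^T x >= 0."""
    return +1 if dot(w, x) >= 0 else -1


# Hypothetical 1-D data with a constant bias coordinate (not linearly separable).
X = [[1.0, -2.0], [1.0, -1.0], [1.0, -0.5], [1.0, 0.5], [1.0, 1.0], [1.0, 2.0]]
Y = [-1, -1, +1, -1, +1, +1]

g_w = logistic_regression_gd(X, Y)
acc = sum(predict(g_w, x) == y for x, y in zip(X, Y)) / len(X)
print("w =", g_w)
print("training accuracy =", acc)
```

On this data the learned `w` should have a positive slope and, because the points are symmetric, a bias near zero. Two of the six points lie on the wrong side of any threshold, so the training accuracy is 4/6.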

Logistic Regression → Classification

When studying linear regression, we discussed whether it can be used for classification. The same analysis applies here.

Let $s = \mathbf{w}^T\mathbf{x}$. For binary classification $y \in \{-1, +1\}$, so $y^2 = 1$ and each error function can be written in terms of $ys$:

$$
\begin{aligned}
\text{err}_{0/1}(s, y) &= [\operatorname{sign}(s) \neq y] = [\operatorname{sign}(ys) \neq 1] \\
\text{err}_{\text{SQR}}(s, y) &= (y - s)^2 = (ys - 1)^2 \\
\text{err}_{\text{CE}}(s, y) &= \ln\left(1 + \exp(-ys)\right) \\
\text{err}_{\text{SCE}}(s, y) &= \log_2\left(1 + \exp(-ys)\right)
\end{aligned}
$$

The scaled cross-entropy $\text{err}_{\text{SCE}}$ is obtained by multiplying $\text{err}_{\text{CE}}$ by $1/\ln 2$.

The error functions are plotted below; $\text{err}_{\text{CE}}$ is similar to $\text{err}_{\text{SCE}}$ and is not drawn.

The figure shows that:

$$
\text{err}_{0/1}(s, y) \le \text{err}_{\text{SCE}}(s, y) = \frac{1}{\ln 2}\text{err}_{\text{CE}}(s, y)
$$
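The pointwise bound can be checked on a grid of scores. The sketch below codes the four error functions as functions of `s` and `y`. It treats $ys = 0$ as a 0/1 mistake, a conservative convention assumed here. It then counts grid points where $\text{err}_{0/1}$ exceeds $\text{err}_{\text{SCE}}$ or $\text{err}_{\text{SQR}}$. The count should be zero, with equality $\text{err}_{0/1} = \text{err}_{\text{SCE}} = 1$ at $ys = 0$.

```python
import math


def err_01(s, y):
    """0/1 error; y*s == 0 is counted as a mistake (a conservative convention)."""
    return 1.0 if y * s <= 0 else 0.0


def err_sqr(s, y):
    """Squared error written as (ys - 1)^2, valid because y^2 = 1."""
    return (y * s - 1.0) ** 2


def err_ce(s, y):
    """Cross-entropy ln(1 + exp(-ys)); |ys| <= 5 on this grid, so no overflow."""
    return math.log(1.0 + math.exp(-y * s))


def err_sce(s, y):
    """Scaled cross-entropy err_CE / ln 2 = log2(1 + exp(-ys))."""
    return err_ce(s, y) / math.log(2.0)


# Count grid points in s in [-5, 5] where err_01 exceeds err_SCE or err_SQR.
violations = 0
for k in range(-500, 501):
    s = k / 100.0
    for y in (-1, +1):
        if err_01(s, y) > err_sce(s, y) + 1e-12 or err_01(s, y) > err_sqr(s, y) + 1e-12:
            violations += 1

print("violations:", violations)
print("at ys = 0: err_01 =", err_01(0.0, 1), " err_SCE =", err_sce(0.0, 1))
```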

Averaging the pointwise bound over the data (or over the input distribution) gives:

$$
\begin{aligned}
E_{\text{in}}^{0/1}(\mathbf{w}) &\le E_{\text{in}}^{\text{SCE}}(\mathbf{w}) = \frac{1}{\ln 2}E_{\text{in}}^{\text{CE}}(\mathbf{w}) \\
E_{\text{out}}^{0/1}(\mathbf{w}) &\le E_{\text{out}}^{\text{SCE}}(\mathbf{w}) = \frac{1}{\ln 2}E_{\text{out}}^{\text{CE}}(\mathbf{w})
\end{aligned}
$$

Hence, with $\Omega^{0/1}$ denoting the generalization penalty from the VC bound:

$$
\begin{aligned}
E_{\text{out}}^{0/1}(\mathbf{w}) &\le E_{\text{in}}^{0/1}(\mathbf{w}) + \Omega^{0/1} \\
&\le \frac{1}{\ln 2}E_{\text{in}}^{\text{CE}}(\mathbf{w}) + \Omega^{0/1}
\end{aligned}
$$

Alternatively, applying the VC bound to the cross-entropy error directly:

$$
\begin{aligned}
E_{\text{out}}^{0/1}(\mathbf{w}) &\le \frac{1}{\ln 2}E_{\text{out}}^{\text{CE}}(\mathbf{w}) \\
&\le \frac{1}{\ln 2}E_{\text{in}}^{\text{CE}}(\mathbf{w}) + \frac{1}{\ln 2}\Omega^{\text{CE}}
\end{aligned}
$$

Therefore:

$$
\text{small } E_{\text{in}}^{\text{CE}}(\mathbf{w}) \Rightarrow \text{small } E_{\text{out}}^{0/1}(\mathbf{w})
$$

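So logistic regression can be used for binary classification: minimizing the cross-entropy error also keeps the out-of-sample 0/1 error small.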

In practice:

- Linear regression is sometimes used to set the initial weight vector $\mathbf{w}_0$ for PLA, pocket, or logistic regression.
- Logistic regression is often preferred over the pocket algorithm.

Stochastic Gradient Descent

The idea behind stochastic gradient descent is:

$$
\text{stochastic gradient} = \text{true gradient} + \text{zero-mean ‘noise’ directions}
$$

That is, after enough iterations:

$$
\text{average true gradient} \approx \text{average stochastic gradient}
$$

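At each step a single index $n$ is picked uniformly at random from $\{1, \ldots, N\}$, and the update rule is: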

$$
\mathbf{w}_{t+1} \leftarrow \mathbf{w}_t + \eta\underbrace{\theta\left(-y_n\mathbf{w}_t^T\mathbf{x}_n\right)\left(y_n\mathbf{x}_n\right)}_{-\nabla \text{err}\left(\mathbf{w}_t, \mathbf{x}_n, y_n\right)}
$$

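SGD replaces the gradient averaged over all $N$ samples with the gradient of a single sample's error. This reduces the cost of each update from $O(N)$ to $O(1)$ in the number of samples.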
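Here is a minimal SGD sketch for logistic regression. It assumes the index $n$ is drawn uniformly at random at each step, a fixed `eta = 0.1`, and 20,000 updates; these are illustrative choices, not values from the text. The data are hypothetical. Each update touches only one example, which is where the $O(1)$ per-update cost (in $N$) comes from.

```python
import math
import random


def theta(s):
    """Numerically stable logistic function theta(s) = 1 / (1 + e^(-s))."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def e_in(w, X, Y):
    """Average cross-entropy error (1/N) * sum_n ln(1 + exp(-y_n w^T x_n))."""
    total = 0.0
    for x, y in zip(X, Y):
        z = -y * dot(w, x)
        total += max(z, 0.0) + math.log1p(math.exp(-abs(z)))
    return total / len(X)


def logistic_regression_sgd(X, Y, eta=0.1, n_updates=20_000, seed=0):
    """SGD with a fixed learning rate: one randomly chosen example per update."""
    rng = random.Random(seed)
    w = [0.0] * len(X[0])
    for _ in range(n_updates):
        n = rng.randrange(len(X))  # index n drawn uniformly from {0, ..., N-1}
        x, y = X[n], Y[n]
        # -grad err(w, x_n, y_n) = theta(-y_n w^T x_n) * (y_n x_n); independent of N
        step = eta * theta(-y * dot(w, x)) * y
        w = [wi + step * xi for wi, xi in zip(w, x)]
    return w


# Hypothetical 1-D data with a constant bias coordinate (not linearly separable).
X = [[1.0, -2.0], [1.0, -1.0], [1.0, -0.5], [1.0, 0.5], [1.0, 1.0], [1.0, 2.0]]
Y = [-1, -1, +1, -1, +1, +1]

w_sgd = logistic_regression_sgd(X, Y)
print("w =", w_sgd)
print("E_in(w) =", e_in(w_sgd, X, Y), " vs E_in(0) = ln 2 =", math.log(2.0))
```

With a fixed learning rate the iterates keep fluctuating around the minimizer instead of settling exactly. The final `E_in` is therefore close to, but usually slightly above, the full-gradient optimum, while still well below $E_{\text{in}}(\mathbf{0}) = \ln 2$.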
