Paper: Densely Connected Convolutional Networks (CVPR 2017, Best Paper Award)

Paper link: https://arxiv.org/pdf/1608.06993.pdf

Source code: https://github.com/liuzhuang13/DenseNet

Caffe source code: https://github.com/liuzhuang13/DenseNetCaffe

This post reproduces DenseNet (Caffe version) on Windows and applies it to my own dataset.

I. Download the source code

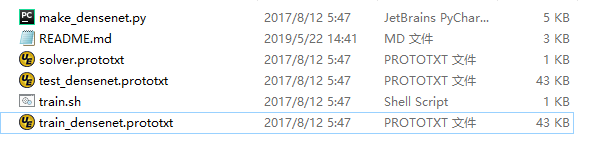

The source code contains six files. The ones used in this post are explained below.

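1. make_densenet.py

make_densenet.py generates three files: train_densenet.prototxt, test_densenet.prototxt and solver.prototxt. Open it and run it, and the three files are written out. Editing the parameters inside make_densenet.py (for example, the data paths) changes the corresponding settings in all three files.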
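To see how the generator's parameters shape the network, here is a small sketch that computes the channel width after every Concat layer. It assumes the defaults in make_densenet.py are depth=40, first_output=16 and growth_rate=12 (check the densenet() signature in your copy), three dense blocks of (depth - 4) / 3 layers, and transition layers that keep the channel count (no compression). dense_channels is a hypothetical helper, not part of the repository.

```python
def dense_channels(depth=40, first_output=16, growth_rate=12):
    """Channel count after every Concat layer, grouped by dense block.

    Assumes 3 dense blocks of (depth - 4) // 3 layers, each layer adding
    `growth_rate` feature maps, and transition layers that keep the
    channel count unchanged (no compression).
    """
    if depth < 7 or (depth - 4) % 3 != 0:
        raise ValueError("depth must be 3 * N + 4 with N >= 1")
    layers_per_block = (depth - 4) // 3
    channels = first_output  # output width of the first 3x3 convolution
    blocks = []
    for _ in range(3):
        widths = []
        for _ in range(layers_per_block):
            channels += growth_rate  # Concat appends growth_rate new maps
            widths.append(channels)
        blocks.append(widths)
    return blocks


blocks = dense_channels()
print(blocks[0][:3])   # [28, 40, 52] -> Concat1, Concat2, Concat3
print(blocks[-1][-1])  # 448 channels reach the classifier
```

The first values match the deploy listing later in this post: Concat1 joins Convolution1 (16 maps) with Dropout1 (12 maps), giving 28.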

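2. train_densenet.prototxt

train_densenet.prototxt is the network definition used for training.

3. test_densenet.prototxt

test_densenet.prototxt is the network definition used for validation during training.

4. solver.prototxt

solver.prototxt is the standard Caffe solver file. It holds the optimization settings, such as the learning-rate schedule, the number of iterations and how often to test.

5. train.sh

train.sh is the training script for Ubuntu. On Windows, a train.bat file plays the same role.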

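Note:

solver.prototxt contains two important parameters, train_net: "train_densenet.prototxt" and test_net: "test_densenet.prototxt". They name the networks used for training and for validation respectively. The usual setup has a single parameter instead, net: "train_val.prototxt", so this is a significant difference from the networks we normally use.

(train_densenet.prototxt and test_densenet.prototxt could, however, be merged into one file, namely the familiar train_val.prototxt.)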

train_net: "train_densenet.prototxt"

test_net: "test_densenet.prototxt"

test_iter: 200

test_interval: 800

base_lr: 0.1

display: 1

max_iter: 230000

lr_policy: "multistep"

gamma: 0.1

momentum: 0.9

weight_decay: 0.0001

solver_mode: GPU

random_seed: 831486

stepvalue: 115000

stepvalue: 172500

type: "Nesterov"
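In this listing, the two stepvalue entries sit at 50% and 75% of max_iter (115000 and 172500 out of 230000), and random_seed 831486 is simply 0xCAFFE in hexadecimal. If you change max_iter for your own dataset, the learning-rate drops should move with it. The following is a minimal sketch that regenerates the file text while keeping that 50%/75% schedule. make_solver_text is a hypothetical helper, not part of make_densenet.py, and the schedule is only an assumption read off the values above.

```python
def make_solver_text(max_iter=230000, base_lr=0.1, test_iter=200,
                     test_interval=800, seed=0xCAFFE):
    """Build solver.prototxt text in the same layout as the listing.

    The two learning-rate drops are placed at 50% and 75% of max_iter,
    which reproduces stepvalue 115000 and 172500 for max_iter 230000.
    """
    lines = [
        'train_net: "train_densenet.prototxt"',
        'test_net: "test_densenet.prototxt"',
        f"test_iter: {test_iter}",
        f"test_interval: {test_interval}",
        f"base_lr: {base_lr}",
        "display: 1",
        f"max_iter: {max_iter}",
        'lr_policy: "multistep"',
        "gamma: 0.1",
        "momentum: 0.9",
        "weight_decay: 0.0001",
        "solver_mode: GPU",
        f"random_seed: {seed}",            # 0xCAFFE == 831486
        f"stepvalue: {int(0.5 * max_iter)}",
        f"stepvalue: {int(0.75 * max_iter)}",
        'type: "Nesterov"',
    ]
    return "\n".join(lines) + "\n"
```

To use it, write the result to solver.prototxt with open("solver.prototxt", "w") and f.write(make_solver_text(max_iter=...)).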

II. Modify the network training files

The files to modify are mainly train_densenet.prototxt, test_densenet.prototxt and solver.prototxt.

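1. solver.prototxt

Adjust this file to suit your dataset and your own experience, in particular test_iter, test_interval, max_iter, base_lr and the stepvalue entries.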
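The default values appear to be sized for CIFAR-10. With a test batch of 50, test_iter 200 covers the 10,000 test images exactly. With a training batch of 64, one epoch of 50,000 images is about 782 iterations, which is close to test_interval 800, and 230000 iterations is roughly 294 epochs. (The batch sizes 64 and 50 are my assumption about the generated train/test nets.) The sketch below applies the same arithmetic to your own dataset. solver_iterations is a hypothetical helper.

```python
import math


def solver_iterations(num_train, num_val, train_batch, val_batch, epochs):
    """Derive test_iter, test_interval and max_iter from dataset sizes.

    test_iter covers the whole validation set once per test pass;
    test_interval runs one test pass per training epoch.
    """
    iters_per_epoch = math.ceil(num_train / train_batch)
    return {
        "test_iter": math.ceil(num_val / val_batch),
        "test_interval": iters_per_epoch,
        "max_iter": iters_per_epoch * epochs,
    }


# CIFAR-10 style numbers: 50000 train / 10000 test images,
# batch 64 for training and 50 for testing (assumed values).
print(solver_iterations(50000, 10000, 64, 50, 300))
# {'test_iter': 200, 'test_interval': 782, 'max_iter': 234600}
```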

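2. train_densenet.prototxt

Mainly change the mean_file path, the source path, and num_output of the final fully connected layer. num_output depends on your task: for binary classification, for example, set it to 2.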

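3. test_densenet.prototxt

The changes mirror those for train_densenet.prototxt: the mean_file path, the source path (pointing at your validation data), and num_output of the final fully connected layer (2 for binary classification).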
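The edits above are mechanical, so they can be scripted. The following is a minimal sketch that rewrites the source, mean_file and (optionally) batch_size entries, and changes only the last num_output in the file. It assumes the final InnerProduct layer comes last in the generated nets. adapt_prototxt is a hypothetical helper. Backslashes start escape sequences in prototxt strings, so forward slashes (or doubled backslashes) are the safer choice for Windows paths.

```python
import re


def adapt_prototxt(text, source, mean_file, num_classes, batch_size=None):
    """Point the data layer at your own data and resize the classifier.

    Only the LAST num_output in the file is changed: in the generated
    train/test nets that is the final InnerProduct layer, while earlier
    num_output values belong to convolutions and must stay as they are.
    """
    # Function replacements are inserted literally, so backslashes in
    # Windows paths are not interpreted as regex escapes.
    text = re.sub(r'source:\s*"[^"]*"', lambda m: f'source: "{source}"', text)
    text = re.sub(r'mean_file:\s*"[^"]*"',
                  lambda m: f'mean_file: "{mean_file}"', text)
    if batch_size is not None:
        text = re.sub(r"batch_size:\s*\d+",
                      lambda m: f"batch_size: {batch_size}", text)
    matches = list(re.finditer(r"num_output:\s*\d+", text))
    if not matches:
        raise ValueError("no num_output found in the prototxt")
    last = matches[-1]
    return text[:last.start()] + f"num_output: {num_classes}" + text[last.end():]
```

For example, read train_densenet.prototxt, call adapt_prototxt(text, "D:/data/train_lmdb", "D:/data/mean.binaryproto", 2) with your own (here hypothetical) paths, and write the result back. Then repeat for test_densenet.prototxt with the validation LMDB.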

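Notes:

1. Be sure to set num_output according to your own task.

2. Adjust batch_size to your computer's hardware (mainly GPU memory).

3. train_densenet.prototxt and test_densenet.prototxt could in fact be merged into one file, the familiar train_val.prototxt (I have not done so here).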
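If you do merge the two files into a single train_val.prototxt, the TRAIN and TEST data layers are separated inside it with include { phase: TRAIN } and include { phase: TEST }. solver.prototxt then has to go back to a single net entry. The following is a minimal sketch of that solver change. merge_solver_nets and the file name train_val.prototxt are hypothetical choices.

```python
import re


def merge_solver_nets(solver_text, merged_net="train_val.prototxt"):
    """Replace the train_net/test_net entries with a single `net` entry.

    The merged prototxt must contain both the TRAIN and TEST data layers,
    selected with include { phase: TRAIN } / include { phase: TEST }.
    """
    kept = []
    inserted = False
    for line in solver_text.splitlines():
        if re.match(r"\s*(train_net|test_net)\s*:", line):
            if not inserted:  # put `net` where the first entry was
                kept.append(f'net: "{merged_net}"')
                inserted = True
            continue  # drop the old train_net/test_net line
        kept.append(line)
    return "\n".join(kept) + "\n"
```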

III. Start training

Edit train.bat, then double-click it to start training.

SET GLOG_logtostderr=1

[caffe.exe directory]\caffe.exe train --solver [solver.prototxt directory]\solver.prototxt

pause
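If you keep several experiments, you may prefer to generate train.bat rather than edit it by hand. The following is a small sketch that produces the same three lines, quotes the paths so that folders containing spaces still work, and optionally passes Caffe's --gpu flag. make_train_bat and the example paths are hypothetical.

```python
def make_train_bat(caffe_exe, solver_path, gpu=None):
    """Return the text of a train.bat equivalent to the one above.

    Paths are quoted so that folders containing spaces still work.
    """
    cmd = f'"{caffe_exe}" train --solver "{solver_path}"'
    if gpu is not None:
        cmd += f" --gpu {gpu}"  # caffe's --gpu flag selects the device id(s)
    lines = ["SET GLOG_logtostderr=1", cmd, "pause"]
    return "\r\n".join(lines) + "\r\n"  # CRLF, conventional for .bat files
```

Write it with open("train.bat", "w", newline="") so that Python on Windows does not turn the "\r\n" endings into "\r\r\n".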

Training may run out of memory. In that case, reduce batch_size and start training again.
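Reducing batch_size on its own also shrinks the batch that each weight update averages over. Caffe's solver has an iter_size parameter that accumulates gradients over iter_size forward/backward passes before each update, so memory follows batch_size while the update still covers batch_size × iter_size images. One caveat: BatchNorm still computes its statistics over each batch_size-sized pass, so the result is not identical to a true large batch. If you shrink the test batch, raise test_iter accordingly. The following is a minimal sketch for picking the pair. split_batch is a hypothetical helper.

```python
def split_batch(effective_batch, max_batch_that_fits):
    """Pick (batch_size, iter_size) with batch_size * iter_size == effective_batch.

    Caffe accumulates gradients over iter_size passes before each update,
    so memory use follows batch_size while the update still averages over
    batch_size * iter_size images.
    """
    if effective_batch <= 0 or max_batch_that_fits <= 0:
        raise ValueError("both arguments must be positive")
    # Largest divisor of effective_batch that still fits in memory
    # (1 always divides, so the loop always returns).
    for batch_size in range(min(effective_batch, max_batch_that_fits), 0, -1):
        if effective_batch % batch_size == 0:
            return batch_size, effective_batch // batch_size
```

For example, if only 20 images fit but you want to keep an effective batch of 64, split_batch(64, 20) gives batch_size 16 and iter_size 4.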

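IV. Testing

1. Generate the deploy.prototxt file.

I created the DenseNet deploy.prototxt myself from test_densenet.prototxt. For the method, see my other post: https://blog.csdn.net/GL3_24/article/details/90109237 . Only the resulting deploy.prototxt is listed here. It is very long and is meant only as a reference. Despite the name DENSENET_121, it follows the network generated by make_densenet.py (a first 3×3 convolution with 16 outputs, then dense layers that each add 12 feature maps through BatchNorm, Scale, ReLU, a 3×3 convolution and dropout 0.2), not the ImageNet DenseNet-121 architecture.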
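The deploy file declares one input blob, Data1, with shape 1 × 3 × 224 × 224 (N × C × H × W). The following is a minimal NumPy sketch of turning a single image into that shape. It makes three assumptions: the image has already been resized to 224 × 224; it was loaded in BGR order, as OpenCV does and as Caffe's convert_imageset tool uses; and a per-channel mean stands in for the mean_file used in training. The mean values in the example are placeholders, and to_deploy_blob is a hypothetical helper. If your transform_param also sets scale or crop_size, apply the same steps here.

```python
import numpy as np


def to_deploy_blob(image_hwc_bgr, mean_bgr):
    """Turn one H x W x 3 BGR image into the 1 x 3 x H x W blob Data1 expects.

    Caffe blobs are N x C x H x W, so the channel axis moves to the front,
    the training mean is subtracted per channel, and a batch axis is added.
    """
    img = np.asarray(image_hwc_bgr, dtype=np.float32)
    if img.ndim != 3 or img.shape[2] != 3:
        raise ValueError("expected an H x W x 3 image")
    img = img - np.asarray(mean_bgr, dtype=np.float32)  # broadcast over H, W
    chw = img.transpose(2, 0, 1)                        # H,W,C -> C,H,W
    return chw[np.newaxis, ...]                         # add batch axis N=1
```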

name: "DENSENET_121"

input: "Data1"

input_dim: 1

input_dim: 3

input_dim: 224

input_dim: 224

layer {

name: "Convolution1"

type: "Convolution"

bottom: "Data1"

top: "Convolution1"

convolution_param {

num_output: 16

bias_term: false

pad: 1

kernel_size: 3

stride: 1

weight_filler {

type: "msra"

}

bias_filler {

type: "constant"

}

}

}

layer {

name: "BatchNorm1"

type: "BatchNorm"

bottom: "Convolution1"

top: "BatchNorm1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

}

layer {

name: "Scale1"

type: "Scale"

bottom: "BatchNorm1"

top: "BatchNorm1"

scale_param {

filler {

value: 1

}

bias_term: true

bias_filler {

value: 0

}

}

}

layer {

name: "ReLU1"

type: "ReLU"

bottom: "BatchNorm1"

top: "BatchNorm1"

}

layer {

name: "Convolution2"

type: "Convolution"

bottom: "BatchNorm1"

top: "Convolution2"

convolution_param {

num_output: 12

bias_term: false

pad: 1

kernel_size: 3

stride: 1

weight_filler {

type: "msra"

}

bias_filler {

type: "constant"

}

}

}

layer {

name: "Dropout1"

type: "Dropout"

bottom: "Convolution2"

top: "Dropout1"

dropout_param {

dropout_ratio: 0.2

}

}

layer {

name: "Concat1"

type: "Concat"

bottom: "Convolution1"

bottom: "Dropout1"

top: "Concat1"

concat_param {

axis: 1

}

}

layer {

name: "BatchNorm2"

type: "BatchNorm"

bottom: "Concat1"

top: "BatchNorm2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

}

layer {

name: "Scale2"

type: "Scale"

bottom: "BatchNorm2"

top: "BatchNorm2"

scale_param {

filler {

value: 1

}

bias_term: true

bias_filler {

value: 0

}

}

}

layer {

name: "ReLU2"

type: "ReLU"

bottom: "BatchNorm2"

top: "BatchNorm2"

}

layer {

name: "Convolution3"

type: "Convolution"

bottom: "BatchNorm2"

top: "Convolution3"

convolution_param {

num_output: 12

bias_term: false

pad: 1

kernel_size: 3

stride: 1

weight_filler {

type: "msra"

}

bias_filler {

type: "constant"

}

}

}

layer {

name: "Dropout2"

type: "Dropout"

bottom: "Convolution3"

top: "Dropout2"

dropout_param {

dropout_ratio: 0.2

}

}

layer {

name: "Concat2"

type: "Concat"

bottom: "Concat1"

bottom: "Dropout2"

top: "Concat2"

concat_param {

axis: 1

}

}

layer {

name: "BatchNorm3"

type: "BatchNorm"

bottom: "Concat2"

top: "BatchNorm3"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

}

layer {

name: "Scale3"

type: "Scale"

bottom: "BatchNorm3"

top: "BatchNorm3"

scale_param {

filler {

value: 1

}

bias_term: true

bias_filler {

value: 0

}

}

}

layer {

name: "ReLU3"

type: "ReLU"

bottom: "BatchNorm3"

top: "BatchNorm3"

}

layer {

name: "Convolution4"

type: "Convolution"

bottom: "BatchNorm3"

top: "Convolution4"

convolution_param {

num_output: 12

bias_term: false

pad: 1

kernel_size: 3

stride: 1

weight_filler {

type: "msra"

}

bias_filler {

type: "constant"

}

}

}

layer {

name: "Dropout3"

type: "Dropout"

bottom: "Convolution4"

top: "Dropout3"

dropout_param {

dropout_ratio: 0.2

}

}

layer {

name: "Concat3"

type: "Concat"

bottom: "Concat2"

bottom: "Dropout3"

top: "Concat3"

concat_param {

axis: 1

}

}

layer {

name: "BatchNorm4"

type: "BatchNorm"

bottom: "Concat3"

top: "BatchNorm4"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

}

layer {

name: "Scale4"

type: "Scale"

bottom: "BatchNorm4"

top: "BatchNorm4"

scale_param {

filler {

value: 1

}

bias_term: true

bias_filler {

value: 0

}

}

}

layer {

name: "ReLU4"

type: "ReLU"

bottom: "BatchNorm4"

top: "BatchNorm4"

}

layer {

name: "Convolution5"

type: "Convolution"

bottom: "BatchNorm4"

top: "Convolution5"

convolution_param {

num_output: 12

bias_term: false

pad: 1

kernel_size: 3

stride: 1

weight_filler {

type: "msra"

}

bias_filler {

type: "constant"

}

}

}

layer {

name: "Dropout4"

type: "Dropout"

bottom: "Convolution5"

top: "Dropout4"

dropout_param {

dropout_ratio: 0.2

}

}

layer {

name: "Concat4"

type: "Concat"

bottom: "Concat3"

bottom: "Dropout4"

top: "Concat4"

concat_param {

axis: 1

}

}

layer {

name: "BatchNorm5"

type: "BatchNorm"

bottom: "Concat4"

top: "BatchNorm5"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

}

layer {

name: "Scale5"

type: "Scale"

bottom: "BatchNorm5"

top: "BatchNorm5"

scale_param {

filler {

value: 1

}

bias_term: true

bias_filler {

value: 0

}

}

}

layer {

name: "ReLU5"

type: "ReLU"

bottom: "BatchNorm5"

top: "BatchNorm5"

}

layer {

name: "Convolution6"

type: "Convolution"

bottom: "BatchNorm5"

top: "Convolution6"

convolution_param {

num_output: 12

bias_term: false

pad: 1

kernel_size: 3

stride: 1

weight_filler {

type: "msra"

}

bias_filler {

type: "constant"

}

}

}

layer {

name: "Dropout5"

type: "Dropout"

bottom: "Convolution6"

top: "Dropout5"

dropout_param {

dropout_ratio: 0.2

}

}

layer {

name: "Concat5"

type: "Concat"

bottom: "Concat4"

bottom: "Dropout5"

top: "Concat5"

concat_param {

axis: 1

}

}

layer {

name: "BatchNorm6"

type: "BatchNorm"

bottom: "Concat5"

top: "BatchNorm6"

param {

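This article describes how to implement DenseNet with Caffe on Windows: downloading the source code, modifying the network files, training, testing, and fine-tuning a pretrained model. It covers the configuration of key files such as train_densenet.prototxt and solver.prototxt, and points out what to watch for in memory management and parameter tuning.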
