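I. LCEL features

The LangChain Expression Language (LCEL) is a declarative way to compose chains. LCEL was designed from day one to take prototypes to production without code changes, from the simplest "prompt + LLM" chain to the most complex ones (people have successfully run LCEL chains with hundreds of steps in production). Here are a few reasons you might want to use LCEL:

Streaming support: When you build a chain with LCEL, you get the best possible time-to-first-token (the time elapsed until the first chunk of output appears). For some chains this means tokens are streamed straight from the LLM into a streaming output parser, so you receive parsed, incremental output chunks at the same rate the LLM provider emits raw tokens.

Async support: Any chain built with LCEL can be called with the synchronous API (for example, while prototyping in a Jupyter notebook) as well as with the asynchronous API (for example, in a LangServe server). The same code can therefore be used for prototypes and in production, with good performance and the ability to handle many concurrent requests on the same server.

Optimized parallel execution: Whenever your LCEL chain has steps that can run in parallel (for example, fetching documents from multiple retrievers), we run them in parallel automatically, in both the sync and async interfaces, to keep latency as low as possible.

Retries and fallbacks: You can configure retries and fallbacks for any part of your LCEL chain. This is a good way to make your chains more reliable at scale. We are currently working on adding streaming support for retries/fallbacks, so that you get the added reliability without any latency cost.

Access to intermediate results: For more complex chains it is often very useful to access the results of intermediate steps even before the final output is produced. This can be used to let end users know that something is happening, or simply to debug your chain. You can stream intermediate results, and this is available on every LangServe server.

Input and output schemas: Every LCEL chain gets Pydantic and JSONSchema schemas inferred from the structure of the chain. These can be used to validate inputs and outputs, and they are an integral part of LangServe.

Seamless LangSmith tracing integration: As your chains get more complex, it becomes increasingly important to understand exactly what happens at every step. With LCEL, all steps are automatically logged to LangSmith for maximum observability and debuggability.

Seamless LangServe deployment integration: Any chain created with LCEL can be deployed using LangServe.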

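II. Getting started

LCEL makes it easy to build complex chains from basic components, and it supports out-of-the-box features such as streaming, parallelism, and logging.

Basic example: Prompt + Model + Output parser

The most basic and most common use case is chaining a prompt template and a model together. To see how this works, let's create a chain that takes a topic and generates a joke.

Install the basic packages:

pip install --upgrade --quiet langchain-core langchain-community langchain-openai

Example code: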

from langchain_core.output_parsers import StrOutputParser

from langchain_core.prompts import ChatPromptTemplate

from langchain_openai import ChatOpenAI

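# Prompt
prompt = ChatPromptTemplate.from_template("tell me a short joke about {topic}")

# Model (large language model)
model = ChatOpenAI(model="gpt-4")

# Output parser
output_parser = StrOutputParser()

# Compose the chain
chain = prompt | model | output_parser

# Run it
response = chain.invoke({"topic": "ice cream"})

# Print the result
print(response)

(Note: the ChatOpenAI model used here needs openai_api_key to be configured, and if you go through a proxy you also need to configure openai_api_base. If this is unfamiliar, read the introductory article first.)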

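Output:

"Why don't ice creams ever get invited to parties?\n\nBecause they always drip when things heat up!"

Note this line of the code, where we use LCEL to piece the different components together into a single chain:

chain = prompt | model | output_parser

The | symbol is similar to the Unix pipe operator: it chains the components together, feeding the output of one component as the input to the next.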

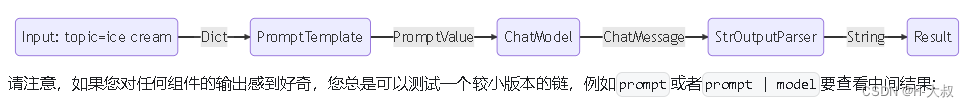
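The piping behaviour described above can be imitated in plain Python by overloading the `|` operator. The following is only a toy sketch under stated assumptions: `Step`, `toy_prompt`, `toy_model`, and `toy_parser` are hypothetical names invented for illustration, the "model" just upper-cases its input, and none of this is LangChain's actual implementation.

```python
from typing import Any, Callable


class Step:
    """Toy stand-in for a chain component (hypothetical, not LangChain's Runnable)."""

    def __init__(self, func: Callable[[Any], Any]) -> None:
        self.func = func

    def invoke(self, value: Any) -> Any:
        return self.func(value)

    def __or__(self, other: "Step") -> "Step":
        # `a | b` builds a new step that runs a, then feeds its output to b.
        return Step(lambda value: other.invoke(self.invoke(value)))


# Three placeholder stages mirroring prompt -> model -> output parser.
toy_prompt = Step(lambda d: f"tell me a short joke about {d['topic']}")
toy_model = Step(lambda text: {"content": text.upper()})  # fake "model"
toy_parser = Step(lambda message: message["content"])

toy_chain = toy_prompt | toy_model | toy_parser
print(toy_chain.invoke({"topic": "ice cream"}))
```

Because `|` returns another `Step`, the composed object can be piped again, which is the property that lets chains of any length be built from the same operator.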

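In this chain, the user input is passed to the prompt template, the prompt template output is passed to the model, and the model output is passed to the output parser. Let's look at each component individually to really understand what is going on.

1. Prompt

prompt is a BasePromptTemplate, which means it takes a dictionary of template variables and produces a PromptValue. A PromptValue is a wrapper around a completed prompt that can be passed either to an LLM (which takes a string as input) or to a ChatModel (which takes a sequence of messages as input). It works with either language model type because it defines the logic both for producing BaseMessages and for producing a string.

prompt_value = prompt.invoke({"topic": "ice cream"})

prompt_value

ChatPromptValue(messages=[HumanMessage(content='tell me a short joke about ice cream')])

prompt_value.to_messages()

[HumanMessage(content='tell me a short joke about ice cream')]

prompt_value.to_string()

'Human: tell me a short joke about ice cream'

2. Model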

The PromptValue is then passed to model. In this case our model is a ChatModel, which means it outputs a BaseMessage.

message = model.invoke(prompt_value)

message

AIMessage(content="Why don't ice creams ever get invited to parties?\n\nBecause they always bring a melt down!")

If our model were an LLM, it would output a string.

from langchain_openai.llms import OpenAI

llm = OpenAI(model="gpt-3.5-turbo-instruct")

llm.invoke(prompt_value)

'\n\nRobot: Why did the ice cream truck break down? Because it had a meltdown!'

3. Output parser

Finally, we pass our model output to the output_parser, which is a BaseOutputParser, meaning it takes either a string or a BaseMessage as input. StrOutputParser specifically simply converts any input into a string.

output_parser.invoke(message)

"Why did the ice cream go to therapy? \n\nBecause it had too many toppings and couldn't find its cone-fidence!"

4. Entire pipeline

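Following the steps:

- We pass in the user's input for the desired topic as {"topic": "ice cream"}.
- The prompt component takes the user input and uses topic to construct a prompt, producing a PromptValue.
- The model component takes the generated prompt and passes it to the OpenAI LLM model for evaluation. The output generated by the model is a ChatMessage object.
- Finally, the output_parser component takes in a ChatMessage and converts it into a Python string, which is returned from the invoke method.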

input = {"topic": "ice cream"}

prompt.invoke(input)
# > ChatPromptValue(messages=[HumanMessage(content='tell me a short joke about ice cream')])

(prompt | model).invoke(input)
# > AIMessage(content="Why did the ice cream go to therapy?\nBecause it had too many toppings and couldn't cone-trol itself!")

III. RAG query example

For our next example, we want to run a retrieval-augmented generation (RAG) chain, which adds some context when answering a question.

# Requires:

# pip install langchain docarray tiktoken

from langchain_community.vectorstores import DocArrayInMemorySearch

from langchain_core.output_parsers import StrOutputParser

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.runnables import RunnableParallel, RunnablePassthrough

from langchain_openai.chat_models import ChatOpenAI

from langchain_openai.embeddings import OpenAIEmbeddings

# 初始化一个向量存储

vectorstore = DocArrayInMemorySearch.from_texts(

["harrison worked at kensho", "bears like to eat honey"],

embedding=OpenAIEmbeddings(),

)

# 生成检索索引器

retriever = vectorstore.as_retriever()

# 一个对话模板,内含2个变量context和question

template = """Answer the question based only on the following context:

{context}

Question: {question}

"""

# 基于模板生成提示

prompt = ChatPromptTemplate.from_template(template)

# 基于对话openai生成模型

model = ChatOpenAI()

# 生成输出解析器

output_parser = StrOutputParser()

# 将检索索引器和输入内容(问题)生成检索

setup_and_retrieval = RunnableParallel(

{"context": retriever, "question": RunnablePassthrough()}

)

# 建立增强链

chain = setup_and_retrieval | prompt | model | output_parser

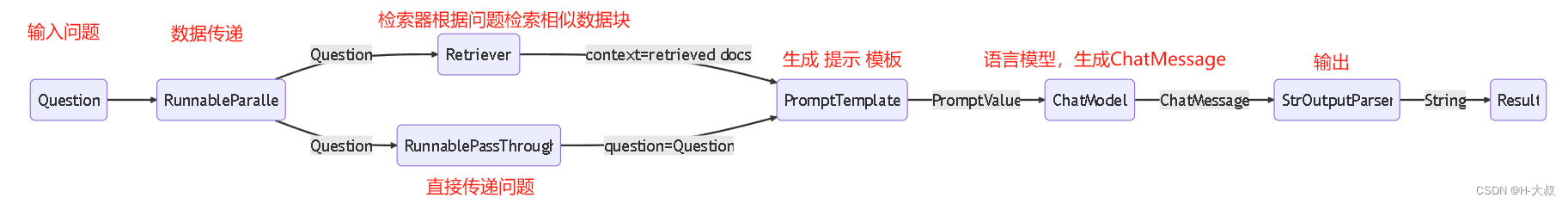

chain.invoke("where did harrison work?")在这种情况下,合成链为:

chain = setup_and_retrieval | prompt | model | output_parser

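To explain this, first note that the prompt template above takes context and question as values to be substituted into the prompt. Before building the prompt, we want to retrieve documents relevant to the search and include them as part of the context.

As a first step, we set up a retriever backed by an in-memory store, which can retrieve documents based on a query. It is a runnable component too, so it can be chained with other components, but you can also try running it on its own:

retriever.invoke("where did harrison work?")

We then use RunnableParallel to prepare the inputs the prompt expects: the retriever performs the document search, and RunnablePassthrough passes the user's question along unchanged, which gives us one entry for the retrieved documents and one for the original question:

setup_and_retrieval = RunnableParallel(
    {"context": retriever, "question": RunnablePassthrough()}
)

To recap, the complete chain is:

setup_and_retrieval = RunnableParallel(
    {"context": retriever, "question": RunnablePassthrough()}
)
chain = setup_and_retrieval | prompt | model | output_parser

The flow is as follows:

- The first step creates a RunnableParallel object with two entries. The first entry, context, will contain the documents fetched by the retriever. The second entry, question, will contain the user's original question. To pass the question through, we use RunnablePassthrough to copy this entry.
- The dictionary from the step above is fed to the prompt component, which takes the user input (question) and the retrieved documents (context), constructs a prompt, and outputs a PromptValue.
- The model component takes the generated prompt and passes it to the OpenAI LLM model for evaluation. The output generated by the model is a ChatMessage object.
- Finally, the output_parser component takes in a ChatMessage and converts it into a Python string, which is returned from the invoke method.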
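The first step of the flow above, fanning one input out to several branches and collecting the results in a dictionary, can be sketched without any LangChain dependency. Everything here is an assumption made for illustration: `run_parallel_map`, `fake_retriever`, and `passthrough` are hypothetical helpers, the retriever does naive word matching instead of embedding search, and the branches run one after another rather than concurrently.

```python
from typing import Any, Callable, Dict, List


def run_parallel_map(steps: Dict[str, Callable[[Any], Any]], value: Any) -> Dict[str, Any]:
    """Apply every step to the same input and collect the results by key."""
    return {key: step(value) for key, step in steps.items()}


def fake_retriever(query: str) -> List[str]:
    # Hypothetical retriever: returns stored texts that share a word with the query.
    corpus = ["harrison worked at kensho", "bears like to eat honey"]
    words = set(query.lower().replace("?", "").split())
    return [text for text in corpus if words & set(text.split())]


def passthrough(value: Any) -> Any:
    # Returns its input unchanged, like the role RunnablePassthrough plays above.
    return value


prompt_inputs = run_parallel_map(
    {"context": fake_retriever, "question": passthrough},
    "where did harrison work?",
)
print(prompt_inputs)
```

The resulting dictionary has exactly the two keys the prompt template expects, which is why it can be handed straight to the next stage.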

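IV. Why use LCEL

LCEL makes it easy to build complex chains from basic components. It does this by providing a unified interface and composition primitives.

1. A unified interface: every LCEL object implements the Runnable interface, which defines a common set of invocation methods (invoke, batch, stream, ainvoke, ...). This makes it possible for chains of LCEL objects to support these calls automatically as well. In other words, every chain of LCEL objects is itself an LCEL object.

2. Composition primitives: LCEL provides a number of primitives that make it easy to compose chains, parallelize components, add fallbacks, dynamically configure chain internals, and more.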
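To make the "a chain of LCEL objects is itself an LCEL object" point concrete, here is a minimal sketch of a shared interface in plain Python. `ToyRunnable` is a hypothetical class written for this illustration; its `batch` and `stream` are naive defaults built on `invoke`, and this is not how LangChain implements them.

```python
from typing import Any, Callable, Iterator, List


class ToyRunnable:
    """Hypothetical minimal interface: callers only supply `func`."""

    def __init__(self, func: Callable[[Any], Any]) -> None:
        self.func = func

    def invoke(self, value: Any) -> Any:
        return self.func(value)

    def batch(self, values: List[Any]) -> List[Any]:
        # Default batch: call invoke on each input (sequentially in this sketch).
        return [self.invoke(v) for v in values]

    def stream(self, value: Any) -> Iterator[Any]:
        # Default stream: yield the whole result as a single chunk.
        yield self.invoke(value)

    def __or__(self, other: "ToyRunnable") -> "ToyRunnable":
        # The composed object is itself a ToyRunnable, so it gets
        # invoke/batch/stream without any extra code.
        return ToyRunnable(lambda v: other.invoke(self.invoke(v)))


shout = ToyRunnable(str.upper)
exclaim = ToyRunnable(lambda s: s + "!")
pipeline = shout | exclaim

print(pipeline.invoke("hi"))
print(pipeline.batch(["a", "b"]))
print(list(pipeline.stream("ok")))
```

The design choice worth noticing is that only `invoke` has to be written per component; every other calling style is derived once, in the base class.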

To better appreciate the value of LCEL, it helps to see it in action and to think about how we would recreate similar functionality without it. In this walkthrough we do exactly that with the basic example from the getting-started section: we take the simple prompt + model chain, which already provides a lot of functionality under the hood, and see what it would take to recreate all of it.

%pip install --upgrade --quiet langchain-core langchain-openai langchain-anthropic

Invoke

In the simplest case, we just want to pass in a topic string and get back a joke string:

Without LCEL

from typing import List

import openai

prompt_template = "Tell me a short joke about {topic}"
client = openai.OpenAI()

def call_chat_model(messages: List[dict]) -> str:
    response = client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=messages,
    )
    return response.choices[0].message.content

def invoke_chain(topic: str) -> str:
    prompt_value = prompt_template.format(topic=topic)
    messages = [{"role": "user", "content": prompt_value}]
    return call_chat_model(messages)

invoke_chain("ice cream")

LCEL

from langchain_openai import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.output_parsers import StrOutputParser

from langchain_core.runnables import RunnablePassthrough

prompt = ChatPromptTemplate.from_template(

"Tell me a short joke about {topic}"

)

output_parser = StrOutputParser()

model = ChatOpenAI(model="gpt-3.5-turbo")

chain = (

{"topic": RunnablePassthrough()}

| prompt

| model

| output_parser

)

chain.invoke("ice cream")

Stream

If we want to stream the results instead, we need to change our function:

Without LCEL

from typing import Iterator

def stream_chat_model(messages: List[dict]) -> Iterator[str]:
    stream = client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=messages,
        stream=True,
    )
    for response in stream:
        content = response.choices[0].delta.content
        if content is not None:
            yield content

def stream_chain(topic: str) -> Iterator[str]:
    # Format the plain-string template defined in the Invoke example.
    prompt_value = prompt_template.format(topic=topic)
    return stream_chat_model([{"role": "user", "content": prompt_value}])

for chunk in stream_chain("ice cream"):
    print(chunk, end="", flush=True)

LCEL

for chunk in chain.stream("ice cream"):
    print(chunk, end="", flush=True)
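The reason a streamed chain can keep a low time-to-first-token is that each stage can work on chunks as they arrive. The sketch below imitates that with chained Python generators. It rests on assumptions: `fake_token_stream` and `streaming_parser` are hypothetical stand-ins (a real model and a real output parser are not involved), and upper-casing is just a visible placeholder for "parsing".

```python
from typing import Iterable, Iterator


def fake_token_stream(text: str) -> Iterator[str]:
    # Hypothetical stand-in for a model that emits one token at a time.
    for token in text.split(" "):
        yield token + " "


def streaming_parser(chunks: Iterable[str]) -> Iterator[str]:
    # Transforms each chunk as it arrives instead of waiting for the full text.
    for chunk in chunks:
        yield chunk.upper()


received = []
for piece in streaming_parser(fake_token_stream("why did the cone melt")):
    received.append(piece)
    print(piece, end="", flush=True)
print()
```

Since both stages are generators, the first parsed piece is available as soon as the first token is produced, rather than after the whole response has been generated.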

Batch

If we want to run a batch of inputs in parallel, we again need a new function:

Without LCEL

from concurrent.futures import ThreadPoolExecutor

def batch_chain(topics: list) -> list:
    with ThreadPoolExecutor(max_workers=5) as executor:
        return list(executor.map(invoke_chain, topics))

batch_chain(["ice cream", "spaghetti", "dumplings"])

LCEL

chain.batch(["ice cream", "spaghetti", "dumplings"])

Async

If we need an asynchronous version:

Without LCEL

async_client = openai.AsyncOpenAI()

async def acall_chat_model(messages: List[dict]) -> str:
    response = await async_client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=messages,
    )
    return response.choices[0].message.content

async def ainvoke_chain(topic: str) -> str:
    prompt_value = prompt_template.format(topic=topic)
    messages = [{"role": "user", "content": prompt_value}]
    return await acall_chat_model(messages)

await ainvoke_chain("ice cream")

LCEL

await chain.ainvoke("ice cream")

LLM instead of chat model

If we want to use a completions endpoint instead of a chat endpoint:

Without LCEL

def call_llm(prompt_value: str) -> str:
    response = client.completions.create(
        model="gpt-3.5-turbo-instruct",
        prompt=prompt_value,
    )
    return response.choices[0].text

def invoke_llm_chain(topic: str) -> str:
    prompt_value = prompt_template.format(topic=topic)
    return call_llm(prompt_value)

invoke_llm_chain("ice cream")

LCEL

from langchain_openai import OpenAI

llm = OpenAI(model="gpt-3.5-turbo-instruct")

llm_chain = (

{"topic": RunnablePassthrough()}

| prompt

| llm

| output_parser

)

llm_chain.invoke("ice cream")

Different model providers

If we want to use Anthropic instead of OpenAI:

Without LCEL

import anthropic

anthropic_template = f"Human:\n\n{prompt_template}\n\nAssistant:"
anthropic_client = anthropic.Anthropic()

def call_anthropic(prompt_value: str) -> str:
    response = anthropic_client.completions.create(
        model="claude-2",
        prompt=prompt_value,
        max_tokens_to_sample=256,
    )
    return response.completion

def invoke_anthropic_chain(topic: str) -> str:
    prompt_value = anthropic_template.format(topic=topic)
    return call_anthropic(prompt_value)

invoke_anthropic_chain("ice cream")

LCEL

from langchain_anthropic import ChatAnthropic

anthropic = ChatAnthropic(model="claude-2")

anthropic_chain = (

{"topic": RunnablePassthrough()}

| prompt

| anthropic

| output_parser

)

anthropic_chain.invoke("ice cream")

Runtime configurability

If we want to make the choice of chat model or LLM configurable at runtime:

Without LCEL

def invoke_configurable_chain(
    topic: str,
    *,
    model: str = "chat_openai"
) -> str:
    if model == "chat_openai":
        return invoke_chain(topic)
    elif model == "openai":
        return invoke_llm_chain(topic)
    elif model == "anthropic":
        return invoke_anthropic_chain(topic)
    else:
        raise ValueError(
            f"Received invalid model '{model}'."
            " Expected one of chat_openai, openai, anthropic"
        )

def stream_configurable_chain(
    topic: str,
    *,
    model: str = "chat_openai"
) -> Iterator[str]:
    if model == "chat_openai":
        return stream_chain(topic)
    elif model == "openai":
        # Note we haven't implemented this yet.
        return stream_llm_chain(topic)
    elif model == "anthropic":
        # Note we haven't implemented this yet
        return stream_anthropic_chain(topic)
    else:
        raise ValueError(
            f"Received invalid model '{model}'."
            " Expected one of chat_openai, openai, anthropic"
        )

def batch_configurable_chain(
    topics: List[str],
    *,
    model: str = "chat_openai"
) -> List[str]:
    # You get the idea
    ...

async def abatch_configurable_chain(
    topics: List[str],
    *,
    model: str = "chat_openai"
) -> List[str]:
    ...

invoke_configurable_chain("ice cream", model="openai")

stream = stream_configurable_chain(
    "ice cream",
    model="anthropic"
)
for chunk in stream:
    print(chunk, end="", flush=True)

# batch_configurable_chain(["ice cream", "spaghetti", "dumplings"])
# await ainvoke_configurable_chain("ice cream")

LCEL

from langchain_core.runnables import ConfigurableField

configurable_model = model.configurable_alternatives(
    ConfigurableField(id="model"),
    default_key="chat_openai",
    openai=llm,
    anthropic=anthropic,
)
configurable_chain = (
    {"topic": RunnablePassthrough()}
    | prompt
    | configurable_model
    | output_parser
)

# Alternatives are selected through the "configurable" key of the config.
configurable_chain.invoke(
    "ice cream",
    config={"configurable": {"model": "openai"}}
)

stream = configurable_chain.stream(
    "ice cream",
    config={"configurable": {"model": "anthropic"}}
)
for chunk in stream:
    print(chunk, end="", flush=True)

configurable_chain.batch(["ice cream", "spaghetti", "dumplings"])

# await configurable_chain.ainvoke("ice cream")

Logging

If we want to log our intermediate results:

Without LCEL

We'll print the intermediate steps for illustrative purposes.

def invoke_anthropic_chain_with_logging(topic: str) -> str:
    print(f"Input: {topic}")
    prompt_value = anthropic_template.format(topic=topic)
    print(f"Formatted prompt: {prompt_value}")
    output = call_anthropic(prompt_value)
    print(f"Output: {output}")
    return output

invoke_anthropic_chain_with_logging("ice cream")

LCEL

Every component has a built-in integration with LangSmith. If we set the following two environment variables, all chain traces are logged to LangSmith.

import os

os.environ["LANGCHAIN_API_KEY"] = "..."
os.environ["LANGCHAIN_TRACING_V2"] = "true"

anthropic_chain.invoke("ice cream")

Here is what our LangSmith trace looks like: https://smith.langchain.com/public/e4de52f8-bcd9-4732-b950-deee4b04e313/r

Fallbacks

Without LCEL

def invoke_chain_with_fallback(topic: str) -> str:
    try:
        return invoke_chain(topic)
    except Exception:
        return invoke_anthropic_chain(topic)

async def ainvoke_chain_with_fallback(topic: str) -> str:
    try:
        return await ainvoke_chain(topic)
    except Exception:
        # Note: we haven't actually implemented this.
        return await ainvoke_anthropic_chain(topic)

def batch_chain_with_fallback(topics: List[str]) -> List[str]:
    try:
        return batch_chain(topics)
    except Exception:
        # Note: we haven't actually implemented this.
        return batch_anthropic_chain(topics)

invoke_chain_with_fallback("ice cream")
# await ainvoke_chain_with_fallback("ice cream")
batch_chain_with_fallback(["ice cream", "spaghetti", "dumplings"])

LCEL

fallback_chain = chain.with_fallbacks([anthropic_chain])

fallback_chain.invoke("ice cream")
# await fallback_chain.ainvoke("ice cream")
fallback_chain.batch(["ice cream", "spaghetti", "dumplings"])
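The hand-written fallback functions above all repeat the same try/except shape, which suggests factoring it into one reusable wrapper. The sketch below shows that idea in plain Python. It is an illustration under assumptions, not LangChain's implementation: `try_in_order`, `flaky_model`, and `backup_model` are hypothetical names, and catching every `Exception` is a simplification.

```python
from typing import Any, Callable, Optional, Sequence


def try_in_order(
    primary: Callable[[Any], Any],
    fallbacks: Sequence[Callable[[Any], Any]],
) -> Callable[[Any], Any]:
    """Return a callable that tries `primary`, then each fallback in order."""

    def run(value: Any) -> Any:
        last_error: Optional[Exception] = None
        for candidate in (primary, *fallbacks):
            try:
                return candidate(value)
            except Exception as error:  # broad on purpose for this sketch
                last_error = error
        # Every candidate failed: surface the most recent error.
        raise last_error

    return run


def flaky_model(topic: str) -> str:
    # Hypothetical primary that always fails, to exercise the fallback path.
    raise RuntimeError("primary model unavailable")


def backup_model(topic: str) -> str:
    return f"backup joke about {topic}"


safe_chain = try_in_order(flaky_model, [backup_model])
print(safe_chain("ice cream"))
```

Writing the wrapper once means the same fallback policy applies wherever the wrapped callable is used, instead of being re-coded per calling style.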

Full code comparison

Even in this simple case, our LCEL chain succinctly packs in a lot of functionality. As chains become more complex, this becomes especially valuable.

Without LCEL

from concurrent.futures import ThreadPoolExecutor
from typing import Iterator, List, Tuple

import anthropic
import openai

prompt_template = "Tell me a short joke about {topic}"
anthropic_template = f"Human:\n\n{prompt_template}\n\nAssistant:"
client = openai.OpenAI()
async_client = openai.AsyncOpenAI()
anthropic_client = anthropic.Anthropic()

def call_chat_model(messages: List[dict]) -> str:
    response = client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=messages,
    )
    return response.choices[0].message.content

def invoke_chain(topic: str) -> str:
    print(f"Input: {topic}")
    prompt_value = prompt_template.format(topic=topic)
    print(f"Formatted prompt: {prompt_value}")
    messages = [{"role": "user", "content": prompt_value}]
    output = call_chat_model(messages)
    print(f"Output: {output}")
    return output

def stream_chat_model(messages: List[dict]) -> Iterator[str]:
    stream = client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=messages,
        stream=True,
    )
    for response in stream:
        content = response.choices[0].delta.content
        if content is not None:
            yield content

def stream_chain(topic: str) -> Iterator[str]:
    print(f"Input: {topic}")
    prompt_value = prompt_template.format(topic=topic)
    print(f"Formatted prompt: {prompt_value}")
    stream = stream_chat_model([{"role": "user", "content": prompt_value}])
    for chunk in stream:
        print(f"Token: {chunk}", end="")
        yield chunk

def batch_chain(topics: list) -> list:
    with ThreadPoolExecutor(max_workers=5) as executor:
        return list(executor.map(invoke_chain, topics))

def call_llm(prompt_value: str) -> str:
    response = client.completions.create(
        model="gpt-3.5-turbo-instruct",
        prompt=prompt_value,
    )
    return response.choices[0].text

def invoke_llm_chain(topic: str) -> str:
    print(f"Input: {topic}")
    prompt_value = prompt_template.format(topic=topic)
    print(f"Formatted prompt: {prompt_value}")
    output = call_llm(prompt_value)
    print(f"Output: {output}")
    return output

def call_anthropic(prompt_value: str) -> str:
    response = anthropic_client.completions.create(
        model="claude-2",
        prompt=prompt_value,
        max_tokens_to_sample=256,
    )
    return response.completion

def invoke_anthropic_chain(topic: str) -> str:
    print(f"Input: {topic}")
    prompt_value = anthropic_template.format(topic=topic)
    print(f"Formatted prompt: {prompt_value}")
    output = call_anthropic(prompt_value)
    print(f"Output: {output}")
    return output

async def ainvoke_anthropic_chain(topic: str) -> str:
    ...

def stream_anthropic_chain(topic: str) -> Iterator[str]:
    ...

def batch_anthropic_chain(topics: List[str]) -> List[str]:
    ...

def invoke_configurable_chain(
    topic: str,
    *,
    model: str = "chat_openai"
) -> str:
    if model == "chat_openai":
        return invoke_chain(topic)
    elif model == "openai":
        return invoke_llm_chain(topic)
    elif model == "anthropic":
        return invoke_anthropic_chain(topic)
    else:
        raise ValueError(
            f"Received invalid model '{model}'."
            " Expected one of chat_openai, openai, anthropic"
        )

def stream_configurable_chain(
    topic: str,
    *,
    model: str = "chat_openai"
) -> Iterator[str]:
    if model == "chat_openai":
        return stream_chain(topic)
    elif model == "openai":
        # Note we haven't implemented this yet.
        return stream_llm_chain(topic)
    elif model == "anthropic":
        # Note we haven't implemented this yet
        return stream_anthropic_chain(topic)
    else:
        raise ValueError(
            f"Received invalid model '{model}'."
            " Expected one of chat_openai, openai, anthropic"
        )

def batch_configurable_chain(
    topics: List[str],
    *,
    model: str = "chat_openai"
) -> List[str]:
    ...

async def abatch_configurable_chain(
    topics: List[str],
    *,
    model: str = "chat_openai"
) -> List[str]:
    ...

def invoke_chain_with_fallback(topic: str) -> str:
    try:
        return invoke_chain(topic)
    except Exception:
        return invoke_anthropic_chain(topic)

async def ainvoke_chain_with_fallback(topic: str) -> str:
    try:
        return await ainvoke_chain(topic)
    except Exception:
        return await ainvoke_anthropic_chain(topic)

def batch_chain_with_fallback(topics: List[str]) -> List[str]:
    try:
        return batch_chain(topics)
    except Exception:
        return batch_anthropic_chain(topics)

LCEL

import os

from langchain_anthropic import ChatAnthropic

from langchain_openai import ChatOpenAI

from langchain_openai import OpenAI

from langchain_core.output_parsers import StrOutputParser

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.runnables import RunnablePassthrough, ConfigurableField

os.environ["LANGCHAIN_API_KEY"] = "..."

os.environ["LANGCHAIN_TRACING_V2"] = "true"

prompt = ChatPromptTemplate.from_template(

"Tell me a short joke about {topic}"

)

chat_openai = ChatOpenAI(model="gpt-3.5-turbo")

openai = OpenAI(model="gpt-3.5-turbo-instruct")

anthropic = ChatAnthropic(model="claude-2")

model = (

chat_openai

.with_fallbacks([anthropic])

.configurable_alternatives(

ConfigurableField(id="model"),

default_key="chat_openai",

openai=openai,

anthropic=anthropic,

)

)

chain = (

{"topic": RunnablePassthrough()}

| prompt

| model

| StrOutputParser()

)

V. Interface

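To make it as easy as possible to create custom chains, we have implemented a "Runnable" protocol. Most components implement the Runnable protocol. It is a standard interface that makes it easy to define custom chains and to invoke them in a standard way. The standard interface includes:

- stream: stream back chunks of the response
- invoke: call the chain on an input
- batch: call the chain on a list of inputs

These also have corresponding async methods:

- astream: stream back chunks of the response asynchronously
- ainvoke: call the chain on an input asynchronously
- abatch: call the chain on a list of inputs asynchronously
- astream_log: stream back intermediate steps as they happen, in addition to the final response
- astream_events: beta; streams events as they happen in the chain (introduced in langchain-core 0.1.14)

The input type and output type vary by component: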

| Component | Input type | Output type |
|---|---|---|
| Prompt | Dictionary | PromptValue |
| ChatModel | Single string, list of chat messages, or a PromptValue | ChatMessage |
| LLM | Single string, list of chat messages, or a PromptValue | String |
| OutputParser | The output of an LLM or ChatModel | Depends on the parser |
| Retriever | Single string | List of Documents |
| Tool | Single string or dictionary, depending on the tool | Depends on the tool |

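All runnables expose input and output schemas so that their inputs and outputs can be inspected:

- input_schema: an input Pydantic model auto-generated from the structure of the Runnable
- output_schema: an output Pydantic model auto-generated from the structure of the Runnable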
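To give a feel for what "auto-generated from the structure" can mean for a prompt, the sketch below derives a JSON-Schema-like dictionary from the placeholders in a template string. This is an assumption-laden illustration using only the standard library: `infer_input_schema` is a hypothetical helper, every variable is assumed to be a string, and LangChain's real schemas are produced by Pydantic rather than by this function.

```python
from string import Formatter
from typing import Any, Dict


def infer_input_schema(template: str, title: str = "PromptInput") -> Dict[str, Any]:
    """Build a JSON-Schema-like dict from the {placeholders} in a template."""
    # Formatter().parse yields (literal_text, field_name, format_spec, conversion).
    names = [field for _, field, _, _ in Formatter().parse(template) if field]
    return {
        "title": title,
        "type": "object",
        "properties": {
            name: {"title": name.capitalize(), "type": "string"} for name in names
        },
    }


schema = infer_input_schema("tell me a joke about {topic}")
print(schema)
```

A schema like this can be used to check that a caller supplied every variable the template needs before any model call is made.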

Let's take a look at these methods. To do so, we'll create a super simple PromptTemplate + ChatModel chain.

pip install --upgrade --quiet langchain-core langchain-community langchain-openai

from langchain_core.prompts import ChatPromptTemplate
from langchain_openai import ChatOpenAI

model = ChatOpenAI()
prompt = ChatPromptTemplate.from_template("tell me a joke about {topic}")
chain = prompt | model

Input schema

A description of the inputs accepted by a Runnable. This is a Pydantic model dynamically generated from the structure of any Runnable. You can call .schema() on it to obtain a JSONSchema representation.

# The input schema of the chain is the input schema of its first part, the prompt.

chain.input_schema.schema()

{'title': 'PromptInput',

'type': 'object',

'properties': {'topic': {'title': 'Topic', 'type': 'string'}}}

prompt.input_schema.schema()

{'title': 'PromptInput',

'type': 'object',

'properties': {'topic': {'title': 'Topic', 'type': 'string'}}}

model.input_schema.schema()

{'title': 'ChatOpenAIInput',

'anyOf': [{'type': 'string'},

{'$ref': '#/definitions/StringPromptValue'},

{'$ref': '#/definitions/ChatPromptValueConcrete'},

{'type': 'array',

'items': {'anyOf': [{'$ref': '#/definitions/AIMessage'},

{'$ref': '#/definitions/HumanMessage'},

{'$ref': '#/definitions/ChatMessage'},

{'$ref': '#/definitions/SystemMessage'},

{'$ref': '#/definitions/FunctionMessage'},

{'$ref': '#/definitions/ToolMessage'}]}}],

'definitions': {'StringPromptValue': {'title': 'StringPromptValue',

'description': 'String prompt value.',

'type': 'object',

'properties': {'text': {'title': 'Text', 'type': 'string'},

'type': {'title': 'Type',

'default': 'StringPromptValue',

'enum': ['StringPromptValue'],

'type': 'string'}},

'required': ['text']},

'AIMessage': {'title': 'AIMessage',

'description': 'A Message from an AI.',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'ai',

'enum': ['ai'],

'type': 'string'},

'example': {'title': 'Example', 'default': False, 'type': 'boolean'}},

'required': ['content']},

'HumanMessage': {'title': 'HumanMessage',

'description': 'A Message from a human.',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'human',

'enum': ['human'],

'type': 'string'},

'example': {'title': 'Example', 'default': False, 'type': 'boolean'}},

'required': ['content']},

'ChatMessage': {'title': 'ChatMessage',

'description': 'A Message that can be assigned an arbitrary speaker (i.e. role).',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'chat',

'enum': ['chat'],

'type': 'string'},

'role': {'title': 'Role', 'type': 'string'}},

'required': ['content', 'role']},

'SystemMessage': {'title': 'SystemMessage',

'description': 'A Message for priming AI behavior, usually passed in as the first of a sequence\nof input messages.',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'system',

'enum': ['system'],

'type': 'string'}},

'required': ['content']},

'FunctionMessage': {'title': 'FunctionMessage',

'description': 'A Message for passing the result of executing a function back to a model.',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'function',

'enum': ['function'],

'type': 'string'},

'name': {'title': 'Name', 'type': 'string'}},

'required': ['content', 'name']},

'ToolMessage': {'title': 'ToolMessage',

'description': 'A Message for passing the result of executing a tool back to a model.',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'tool',

'enum': ['tool'],

'type': 'string'},

'tool_call_id': {'title': 'Tool Call Id', 'type': 'string'}},

'required': ['content', 'tool_call_id']},

'ChatPromptValueConcrete': {'title': 'ChatPromptValueConcrete',

'description': 'Chat prompt value which explicitly lists out the message types it accepts.\nFor use in external schemas.',

'type': 'object',

'properties': {'messages': {'title': 'Messages',

'type': 'array',

'items': {'anyOf': [{'$ref': '#/definitions/AIMessage'},

{'$ref': '#/definitions/HumanMessage'},

{'$ref': '#/definitions/ChatMessage'},

{'$ref': '#/definitions/SystemMessage'},

{'$ref': '#/definitions/FunctionMessage'},

{'$ref': '#/definitions/ToolMessage'}]}},

'type': {'title': 'Type',

'default': 'ChatPromptValueConcrete',

'enum': ['ChatPromptValueConcrete'],

'type': 'string'}},

'required': ['messages']}}}

Output schema

A description of the outputs produced by a Runnable. This is a Pydantic model dynamically generated from the structure of any Runnable. You can call .schema() on it to obtain a JSONSchema representation.

# The output schema of the chain is the output schema of its last part, in this case a ChatModel, which outputs a ChatMessage

chain.output_schema.schema()

{'title': 'ChatOpenAIOutput',

'anyOf': [{'$ref': '#/definitions/AIMessage'},

{'$ref': '#/definitions/HumanMessage'},

{'$ref': '#/definitions/ChatMessage'},

{'$ref': '#/definitions/SystemMessage'},

{'$ref': '#/definitions/FunctionMessage'},

{'$ref': '#/definitions/ToolMessage'}],

'definitions': {'AIMessage': {'title': 'AIMessage',

'description': 'A Message from an AI.',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'ai',

'enum': ['ai'],

'type': 'string'},

'example': {'title': 'Example', 'default': False, 'type': 'boolean'}},

'required': ['content']},

'HumanMessage': {'title': 'HumanMessage',

'description': 'A Message from a human.',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'human',

'enum': ['human'],

'type': 'string'},

'example': {'title': 'Example', 'default': False, 'type': 'boolean'}},

'required': ['content']},

'ChatMessage': {'title': 'ChatMessage',

'description': 'A Message that can be assigned an arbitrary speaker (i.e. role).',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'chat',

'enum': ['chat'],

'type': 'string'},

'role': {'title': 'Role', 'type': 'string'}},

'required': ['content', 'role']},

'SystemMessage': {'title': 'SystemMessage',

'description': 'A Message for priming AI behavior, usually passed in as the first of a sequence\nof input messages.',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'system',

'enum': ['system'],

'type': 'string'}},

'required': ['content']},

'FunctionMessage': {'title': 'FunctionMessage',

'description': 'A Message for passing the result of executing a function back to a model.',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'function',

'enum': ['function'],

'type': 'string'},

'name': {'title': 'Name', 'type': 'string'}},

'required': ['content', 'name']},

'ToolMessage': {'title': 'ToolMessage',

'description': 'A Message for passing the result of executing a tool back to a model.',

'type': 'object',

'properties': {'content': {'title': 'Content',

'anyOf': [{'type': 'string'},

{'type': 'array',

'items': {'anyOf': [{'type': 'string'}, {'type': 'object'}]}}]},

'additional_kwargs': {'title': 'Additional Kwargs', 'type': 'object'},

'type': {'title': 'Type',

'default': 'tool',

'enum': ['tool'],

'type': 'string'},

'tool_call_id': {'title': 'Tool Call Id', 'type': 'string'}},

'required': ['content', 'tool_call_id']}}}

Stream

for s in chain.stream({"topic": "bears"}):
    print(s.content, end="", flush=True)

Sure, here's a bear-themed joke for you:

Why don't bears wear shoes?

Because they already have bear feet!

Invoke

chain.invoke({"topic": "bears"})

AIMessage(content="Why don't bears wear shoes? \n\nBecause they have bear feet!")

Batch

chain.batch([{"topic": "bears"}, {"topic": "cats"}])

[AIMessage(content="Sure, here's a bear joke for you:\n\nWhy don't bears wear shoes?\n\nBecause they already have bear feet!"),
 AIMessage(content="Why don't cats play poker in the wild?\n\nToo many cheetahs!")]

You can set the number of concurrent requests with the max_concurrency parameter:

chain.batch([{"topic": "bears"}, {"topic": "cats"}], config={"max_concurrency": 5})

[AIMessage(content="Why don't bears wear shoes?\n\nBecause they have bear feet!"),
 AIMessage(content="Why don't cats play poker in the wild? Too many cheetahs!")]

Async Stream

async for s in chain.astream({"topic": "bears"}):
    print(s.content, end="", flush=True)

Why don't bears wear shoes?

Because they have bear feet!

Async Invoke

await chain.ainvoke({"topic": "bears"})

AIMessage(content="Why don't bears ever wear shoes?\n\nBecause they already have bear feet!")

Async Stream Events (beta)

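Event streaming is a beta API and may change somewhat based on feedback.

Note: introduced in langchain-core 0.2.0.

For now, when using the astream_events API, please do the following so that everything works properly:

- Use async throughout your code (including async tools, etc.).
- Propagate callbacks if you define custom functions or runnables.
- Whenever you use runnables without LCEL, make sure to call .astream() on LLMs rather than .ainvoke, to force the LLM to stream tokens.

Event reference

Below is a reference table showing some of the events that the various Runnable objects may emit. Definitions for some of the Runnables are included after the table.

⚠️ When streaming, the inputs to a runnable are not available until the input stream has been fully consumed. This means the inputs appear on the corresponding end hook rather than on the start event.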

| event | name | chunk | input | output |
|---|---|---|---|---|
| on_chat_model_start | [model name] | | {"messages": [[SystemMessage, HumanMessage]]} | |
| on_chat_model_stream | [model name] | AIMessageChunk(content="hello") | | |
| on_chat_model_end | [model name] | | {"messages": [[SystemMessage, HumanMessage]]} | {"generations": […], "llm_output": None, …} |
| on_llm_start | [model name] | | {'input': 'hello'} | |
| on_llm_stream | [model name] | 'Hello' | | |
| on_llm_end | [model name] | | | 'Hello human!' |
| on_chain_start | format_docs | | | |
| on_chain_stream | format_docs | "hello world!, goodbye world!" | | |
| on_chain_end | format_docs | | [Document(…)] | "hello world!, goodbye world!" |
| on_tool_start | some_tool | | {"x": 1, "y": "2"} | |
| on_tool_stream | some_tool | {"x": 1, "y": "2"} | | |
| on_tool_end | some_tool | | | {"x": 1, "y": "2"} |
| on_retriever_start | [retriever name] | | {"query": "hello"} | |
| on_retriever_chunk | [retriever name] | {documents: […]} | | |
| on_retriever_end | [retriever name] | | {"query": "hello"} | {documents: […]} |
| on_prompt_start | [template_name] | | {"question": "hello"} | |
| on_prompt_end | [template_name] | | {"question": "hello"} | ChatPromptValue(messages: [SystemMessage, …]) |
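As a rough illustration of how a consumer might use these fields, the sketch below walks a hand-written list of event dictionaries shaped like the table rows. The sample data and the summarize helper are assumptions made for this example, and the chunks are plain strings rather than real AIMessageChunk objects.

```python
from collections import defaultdict

# Hand-written sample events, shaped like rows of the table (simplified payloads).
events = [
    {"event": "on_retriever_start", "name": "Docs", "data": {"input": {"query": "hello"}}},
    {"event": "on_retriever_end", "name": "Docs",
     "data": {"input": {"query": "hello"}, "output": {"documents": ["doc-1"]}}},
    {"event": "on_chat_model_start", "name": "my_llm", "data": {"input": {"messages": [["hi"]]}}},
    {"event": "on_chat_model_stream", "name": "my_llm", "data": {"chunk": "Hel"}},
    {"event": "on_chat_model_stream", "name": "my_llm", "data": {"chunk": "lo"}},
    {"event": "on_chat_model_end", "name": "my_llm", "data": {"output": "Hello"}},
]


def summarize(events):
    """Join streamed chunks per runnable name and collect the output of each end event."""
    streamed = defaultdict(str)
    outputs = {}
    for event in events:
        kind, name, data = event["event"], event["name"], event["data"]
        if kind.endswith("_stream"):
            streamed[name] += data["chunk"]
        elif kind.endswith("_end"):
            outputs[name] = data.get("output")
    return dict(streamed), outputs


streamed, outputs = summarize(events)
print(streamed)
print(outputs)
```

Dispatching on the event field and reading chunk, input, or output from data is the same pattern the astream_events loop later in this section follows.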

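The declarations associated with the events above are as follows.

format_docs:

from typing import List

from langchain_core.documents import Document
from langchain_core.runnables import RunnableLambda

def format_docs(docs: List[Document]) -> str:
    '''Format the docs.'''
    return ", ".join([doc.page_content for doc in docs])

format_docs = RunnableLambda(format_docs)

some_tool:

from langchain_core.tools import tool

@tool
def some_tool(x: int, y: str) -> dict:
    '''Some_tool.'''
    return {"x": x, "y": y}

prompt:

template = ChatPromptTemplate.from_messages(
    [("system", "You are Cat Agent 007"), ("human", "{question}")]
).with_config({"run_name": "my_template", "tags": ["my_template"]})

Let's define a new chain that makes the astream_events interface (and, later, the astream_log interface) more interesting to demonstrate.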

from langchain_community.vectorstores import FAISS
from langchain_core.output_parsers import StrOutputParser
from langchain_core.runnables import RunnablePassthrough
from langchain_openai import OpenAIEmbeddings

# ChatPromptTemplate and model are the ones imported and created earlier in this article.
template = """Answer the question based only on the following context:
{context}

Question: {question}
"""
prompt = ChatPromptTemplate.from_template(template)

vectorstore = FAISS.from_texts(
    ["harrison worked at kensho"], embedding=OpenAIEmbeddings()
)
retriever = vectorstore.as_retriever()

retrieval_chain = (
    {
        "context": retriever.with_config(run_name="Docs"),
        "question": RunnablePassthrough(),
    }
    | prompt
    | model.with_config(run_name="my_llm")
    | StrOutputParser()
)

Now let's use astream_events to get events from the retriever and the LLM.

async for event in retrieval_chain.astream_events(
    "where did harrison work?", version="v1", include_names=["Docs", "my_llm"]
):
    kind = event["event"]
    if kind == "on_chat_model_stream":
        print(event["data"]["chunk"].content, end="|")
    elif kind in {"on_chat_model_start"}:
        print()
        print("Streaming LLM:")
    elif kind in {"on_chat_model_end"}:
        print()
        print("Done streaming LLM.")
    elif kind == "on_retriever_end":
        print("--")
        print("Retrieved the following documents:")
        print(event["data"]["output"]["documents"])
    elif kind == "on_tool_end":
        print(f"Ended tool: {event['name']}")
    else:
        pass

Output:

/home/eugene/src/langchain/libs/core/langchain_core/_api/beta_decorator.py:86: LangChainBetaWarning: This API is in beta and may change in the future.
  warn_beta(
--
Retrieved the following documents:
[Document(page_content='harrison worked at kensho')]

Streaming LLM:
|H|arrison| worked| at| Kens|ho|.||
Done streaming LLM.

Parallelism

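Let's look at how LangChain Expression Language supports parallel requests. For example, when you use a RunnableParallel (often written as a dictionary), it executes each of its elements in parallel.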
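The fan-out idea can be modelled with the standard library alone. The sketch below is an assumption-laden illustration rather than LangChain's code: run_parallel, fake_joke, and fake_poem are hypothetical, and time.sleep stands in for model latency. Every branch receives the same input, and the results come back as a dictionary keyed by branch name.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_parallel(branches, value):
    """Call every branch with the same input concurrently; return a dict of results."""
    with ThreadPoolExecutor(max_workers=len(branches)) as pool:
        futures = {key: pool.submit(fn, value) for key, fn in branches.items()}
        return {key: future.result() for key, future in futures.items()}


# Hypothetical stand-ins for two chains; sleep simulates the model's response time.
def fake_joke(inputs):
    time.sleep(0.3)
    return f"joke about {inputs['topic']}"


def fake_poem(inputs):
    time.sleep(0.3)
    return f"poem about {inputs['topic']}"


start = time.perf_counter()
result = run_parallel({"joke": fake_joke, "poem": fake_poem}, {"topic": "bears"})
elapsed = time.perf_counter() - start
print(result, f"{elapsed:.2f}s")
```

Because the two branches wait at the same time, the total wall time is close to that of the slowest branch rather than the sum of both.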

from langchain_core.runnables import RunnableParallel

chain1 = ChatPromptTemplate.from_template("tell me a joke about {topic}") | model
chain2 = (
    ChatPromptTemplate.from_template("write a short (2 line) poem about {topic}")
    | model
)
combined = RunnableParallel(joke=chain1, poem=chain2)

%%time
chain1.invoke({"topic": "bears"})

Output:

CPU times: user 18 ms, sys: 1.27 ms, total: 19.3 ms
Wall time: 692 ms
AIMessage(content="Why don't bears wear shoes?\n\nBecause they already have bear feet!")

%%time
chain2.invoke({"topic": "bears"})

Output:

CPU times: user 10.5 ms, sys: 166 µs, total: 10.7 ms
Wall time: 579 ms
AIMessage(content="In forest's embrace,\nMajestic bears pace.")

%%time
combined.invoke({"topic": "bears"})

Output:

CPU times: user 32 ms, sys: 2.59 ms, total: 34.6 ms
Wall time: 816 ms
{'joke': AIMessage(content="Sure, here's a bear-related joke for you:\n\nWhy did the bear bring a ladder to the bar?\n\nBecause he heard the drinks were on the house!"),
 'poem': AIMessage(content="In wilderness they roam,\nMajestic strength, nature's throne.")}

The combined call takes 816 ms of wall time, well under the 1271 ms that the two separate calls add up to, which is consistent with the two branches running in parallel.

Parallelism on batches

Parallelism can be combined with other runnables. Let's try using it with batches.

%%time
chain1.batch([{"topic": "bears"}, {"topic": "cats"}])

Output:

CPU times: user 17.3 ms, sys: 4.84 ms, total: 22.2 ms
Wall time: 628 ms
[AIMessage(content="Why don't bears wear shoes?\n\nBecause they have bear feet!"),
 AIMessage(content="Why don't cats play poker in the wild?\n\nToo many cheetahs!")]

%%time
chain2.batch([{"topic": "bears"}, {"topic": "cats"}])

Output:

CPU times: user 15.8 ms, sys: 3.83 ms, total: 19.7 ms
Wall time: 718 ms
[AIMessage(content='In the wild, bears roam,\nMajestic guardians of ancient home.'),
 AIMessage(content='Whiskers grace, eyes gleam,\nCats dance through the moonbeam.')]

%%time
combined.batch([{"topic": "bears"}, {"topic": "cats"}])

Output:

CPU times: user 44.8 ms, sys: 3.17 ms, total: 48 ms
Wall time: 721 ms
[{'joke': AIMessage(content="Sure, here's a bear joke for you:\n\nWhy don't bears wear shoes?\n\nBecause they have bear feet!"),
  'poem': AIMessage(content="Majestic bears roam,\nNature's strength, beauty shown.")},
 {'joke': AIMessage(content="Why don't cats play poker in the wild?\n\nToo many cheetahs!"),
  'poem': AIMessage(content="Whiskers dance, eyes aglow,\nCats embrace the night's gentle flow.")}]

Here too, the combined batch (721 ms) takes about as long as the slower of the two separate batches (718 ms), even though it produces both a joke and a poem for every topic.
